EfficientViT: Memory Efficient Vision Transformer with Cascaded Group Attention, CVPR 2023

论文:https://arxiv.org/abs/2305.07027

代码:https://github.com/microsoft/Cream/tree/main/EfficientViT

解读:【CVPR2023】EfficientViT:具级联组注意力的访存高效ViT - 知乎 (zhihu.com)

EfficientViT:用于高分辨率计算机视觉的内存高效视觉转换器 - Unite.AI

摘要

Vision Transformer由于其高度的建模能力而取得了巨大的成功。 然而,它们的卓越性能伴随着沉重的计算代价,不适合于实时应用。 本文提出了一个高速ViT,即EfficientViT。 论文发现,现有的Transformer模型的速度通常受到访存效率低的操作的限制,尤其是在MHSA中的张量重塑和逐元素函数。 因此,论文设计了一种新的三明治布局的构建块,即在有效的FFN层之间使用单个内存受限的MHSA,在增强通道通信的同时提高了访存效率。 此外,注意力图在头部之间有很高的相似性,导致计算冗余。 为解决这一问题,论文提出了一个级联的分组注意力模块,给注意力头提供全特征的不同划分,节省了计算开销,提高了注意力的多样性。 实验证明EfficientViT优于现有的高效模型,在速度和精度之间取得良好的平衡。

如图所示,EfficientViT 框架在准确性和速度方面均优于当前最先进的 CNN 和 ViT 模型。

EfficientViT:提高视觉变压器的效率

EfficientViT模型旨在从三个角度提高现有视觉变压器模型的效率,

- 计算冗余。

- 内存访问。

- 参数使用。

该模型旨在找出上述参数如何影响视觉Transformer模型的效率,以及如何解决它们以更好的效率获得更好的结果。

本文通过分析DeiT和Swin两个Transformer架构得出如下结论:

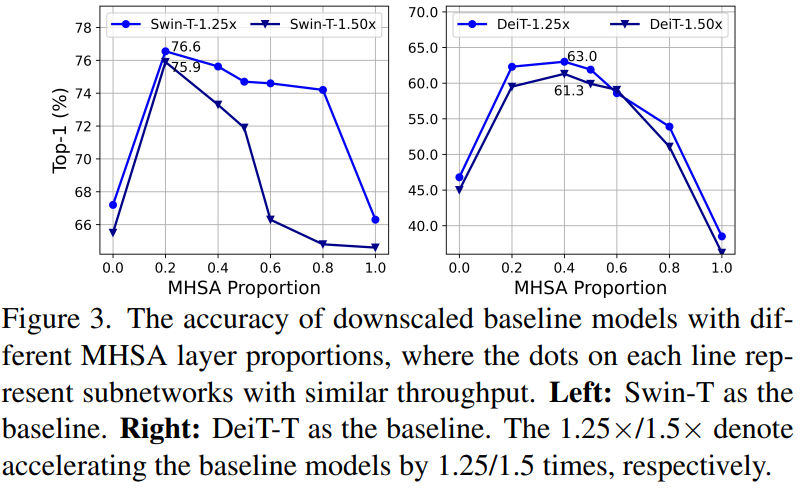

- 适当降低MHSA层利用率可以在提高模型性能的同时提高访存效率(如图3所示)

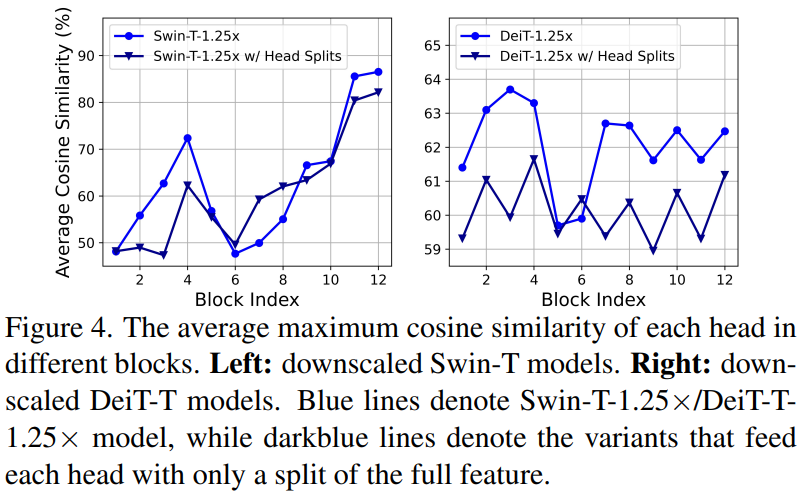

- 在不同的头部使用不同的通道划分特征,而不是像MHSA那样对所有头部使用相同的全特征,可以有效地减少注意力计算冗余(如图4所示)

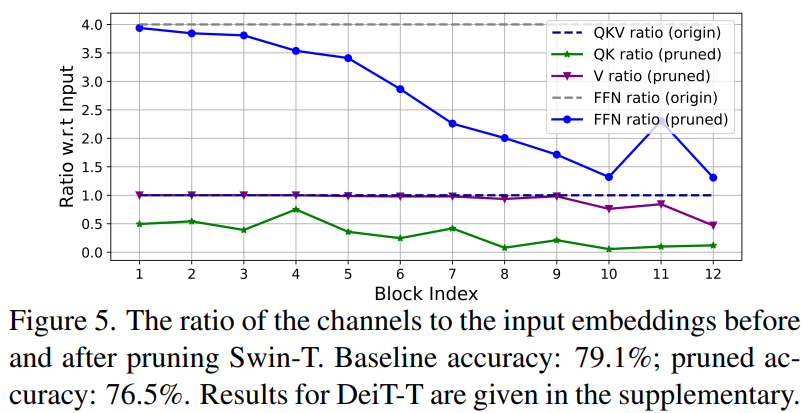

- 典型的通道配置,即在每个阶段之后将通道数加倍或对所有块使用等效通道,可能在最后几个块中产生大量冗余(如图5所示)

- 在维度相同的情况下,Q、K的冗余度比V大得多(如图5所示)

内存访问和效率

影响模型速度的重要因素之一是 内存访问开销 。 如下图所示,本文首先分析了DeiT和Swin两个架构的运行时间分析,发现Transformer架构的速度通常受限于访存。Transformer 中的多个运算符(包括逐元素加法、归一化和频繁整形)都是内存效率低下的操作,因为它们需要访问不同的内存单元,这是一个耗时的过程。针对这一问题本文提出了一种三明治架构,即2N个FFN中间中间夹一个MHSA结构的级联分组注意力。同时本文发现现有的多头划分方法导致每个头的注意力图高度相似,这造成了资源的浪费,本文提出了一种新的多头划分策略来缓解这一问题。

尽管有一些现有的方法可以简化标准的 softmax 自注意力计算,例如低秩近似和稀疏注意力,但它们通常提供有限的加速,并降低准确性。

另一方面,EfficientViT 框架旨在通过减少框架中内存低效层的数量来降低内存访问成本。 该模型将 DeiT-T 和 Swin-T 缩小为具有较高干扰吞吐量 1.25 倍和 1.5 倍的小型子网,并将这些子网的性能与 MHSA 层的比例进行比较。 如下图所示,实施后,该方法可将 MHSA 层的准确性提高约 20% 至 40%。

计算效率

MHSA 层倾向于将输入序列嵌入到多个子空间或头中,并单独计算注意力图,这是一种已知可以提高性能的方法。 然而,注意力图的计算成本并不便宜,为了探索计算成本,EfficientViT 模型探索了如何减少较小 ViT 模型中的冗余注意力。 该模型通过以 1.25 倍推理加速训练宽度缩小的 DeiT-T 和 Swim-T 模型,测量每个块内每个头和其余头的最大余弦相似度。 如下图所示,注意力头之间存在大量相似性,这表明模型会产生计算冗余,因为许多头倾向于学习精确完整特征的相似投影。

为了鼓励头部学习不同的模式,该模型明确应用了一种直观的解决方案,其中每个头部仅接收完整特征的一部分,这是一种类似于组卷积思想的技术。 该模型训练具有修改的 MHSA 层的缩小模型的不同方面。

参数效率

平均 ViT 模型继承了它们的设计策略,例如使用等效宽度进行投影、将 FFN 中的扩展比设置为 4,以及增加 NLP 转换器的阶段头数。 这些组件的配置需要针对轻量级模块仔细重新设计。 EfficientViT模型部署泰勒结构剪枝以自动找到Swim-T和DeiT-T层中的基本组件,并进一步探索底层参数分配原则。 在一定的资源限制下,剪枝方法会删除不重要的通道,并保留关键的通道,以确保尽可能高的准确性。 下图比较了 Swin-T 框架上剪枝前后的通道与输入嵌入的比率。 观察结果: 基线准确度:79.1%; 剪枝准确率:76.5%。

该图表明框架的前两个阶段保留了更多的维度,而后两个阶段保留了更少的维度。 这可能意味着在每个阶段之后将通道加倍或对所有块使用等效通道的典型通道配置可能会导致最后几个块中存在大量冗余。

EfficientViT构建块

EfficientViT的新构建块:

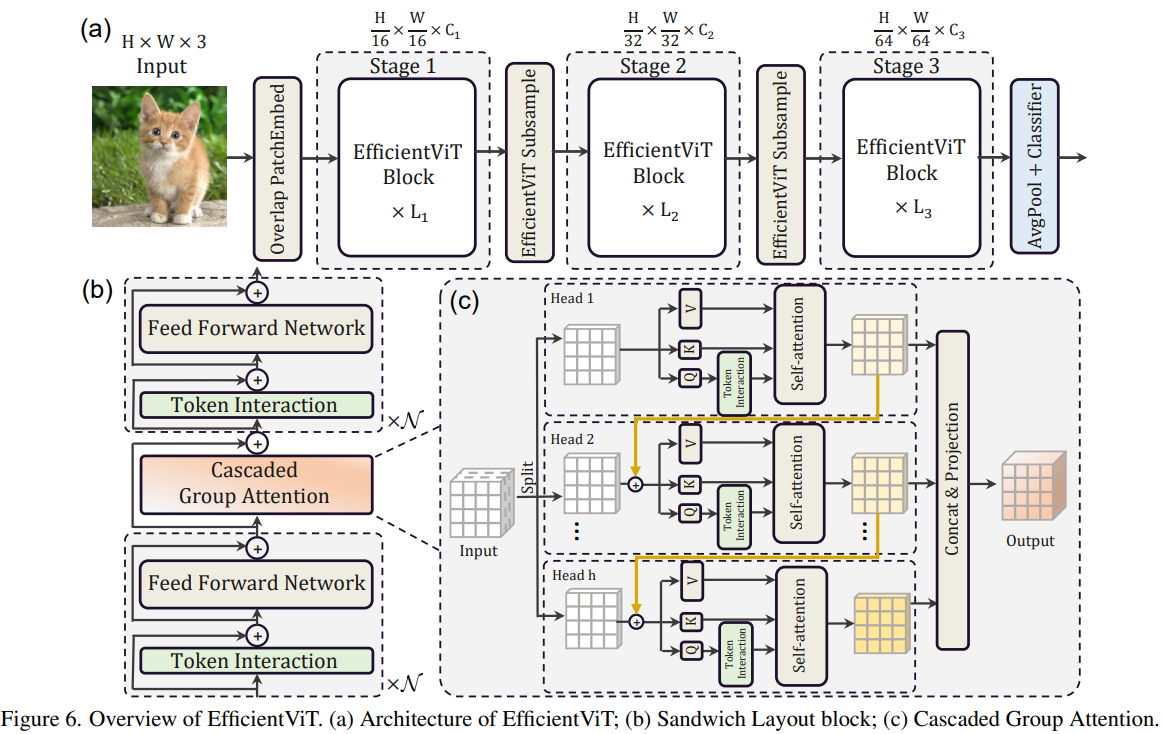

该框架由级联组注意力模块、内存高效的三明治布局和参数重新分配策略组成,分别专注于提高模型在计算、内存和参数方面的效率。

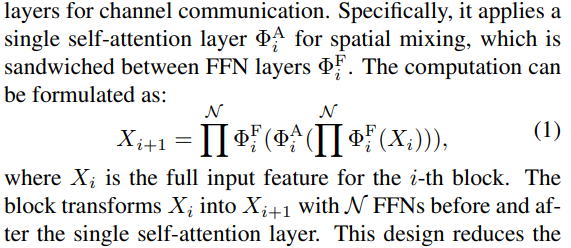

三明治布局

该模型使用新的三明治布局为框架构建更有效和高效的内存块。 三明治布局使用较少内存限制的自注意力层,并利用内存效率更高的前馈网络进行通道通信。 更具体地说,该模型应用夹在 FFN 层之间的单个自注意力层进行空间混合。

该设计不仅有助于减少由于自注意力层而导致的内存时间消耗,而且由于使用 FFN 层,还允许网络内不同通道之间的有效通信。 该模型还使用 DWConv 在每个前馈网络层之前应用额外的交互标记层,并通过引入局部结构信息的归纳偏差来增强模型容量。

级联分组注意力

MHSA 层的主要问题之一是注意力头的冗余,这使得计算效率更低。 为了解决这个问题,该模型提出了用于视觉变换器的 CGA(Cascaded Group Attention),这是一种新的注意力模块,其灵感来自高效 CNN 中的组卷积。 在这种方法中,模型向各个头部提供完整特征的分割,因此将注意力计算明确地分解到各个头部。 分割特征而不是向每个头提供完整特征可以节省计算量,并使过程更加高效,并且模型通过鼓励各层学习具有更丰富信息的特征的投影,继续致力于提高准确性和容量。

参数重新分配

为了提高参数的效率,模型通过扩大关键模块的通道宽度,同时缩小不那么重要的模块的通道宽度来重新分配网络中的参数。 基于泰勒分析,模型要么在每个阶段为每个头中的投影设置小通道尺寸,要么模型允许投影具有与输入相同的尺寸。 前馈网络的扩展比也从 2 降至 4,以帮助其参数冗余。 EfficientViT 框架实现的建议重新分配策略为重要模块分配更多通道,使它们能够更好地学习高维空间中的表示,从而最大限度地减少特征信息的丢失。 此外,为了加快干扰过程并进一步提高模型效率,模型会自动删除不重要模块中的冗余参数。

EfficientViT网络架构

网络架构

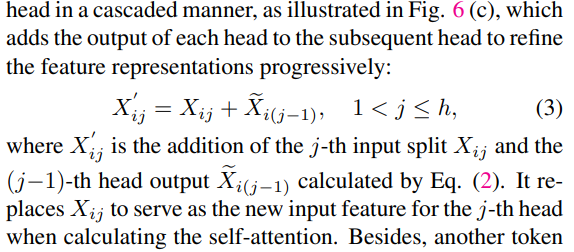

本文的整体框架如图所示,包含三个阶段,每个阶段包含若干个三明治结构,三明治结构由2N个DWConv(空间局部通信)和FFN(信道通信)以及级联分组注意力构成。级联分组注意力相对于之前的MHSA不同之处在于先划分头部然后再生成Q、K、V。同时为了学习更丰富的特征映射来提高模型容量,本文将每个头的输出与下一个头的输入相加。最后将多个头输出Concat起来,使用一个线性层进行映射得到最终的输出。

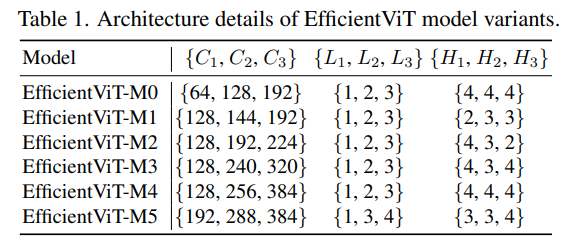

EfficientViT 模型变体

EfficientViT 模型系列由 6 个具有不同深度和宽度比例的模型组成,并且为每个阶段分配了一定数量的头。 下表提供了 EfficientViT 模型系列的架构细节,其中 C、L 和 H 指的是特定阶段的宽度、深度和头数量。

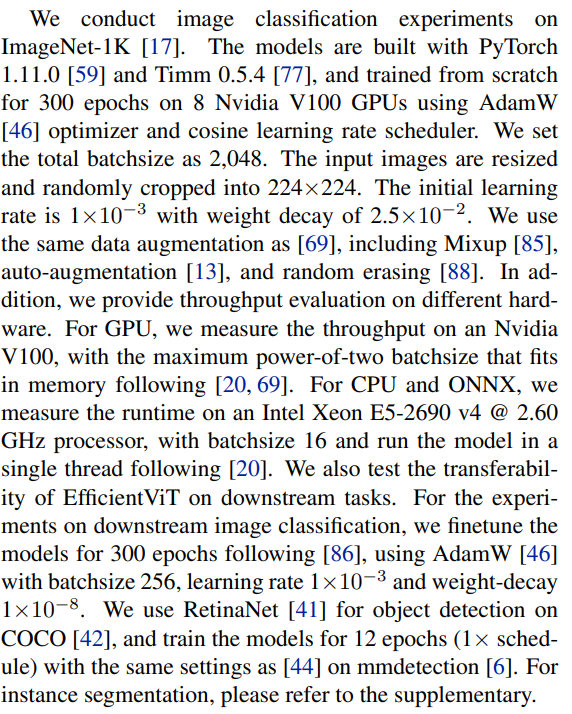

实验

实验细节

ImageNet结果

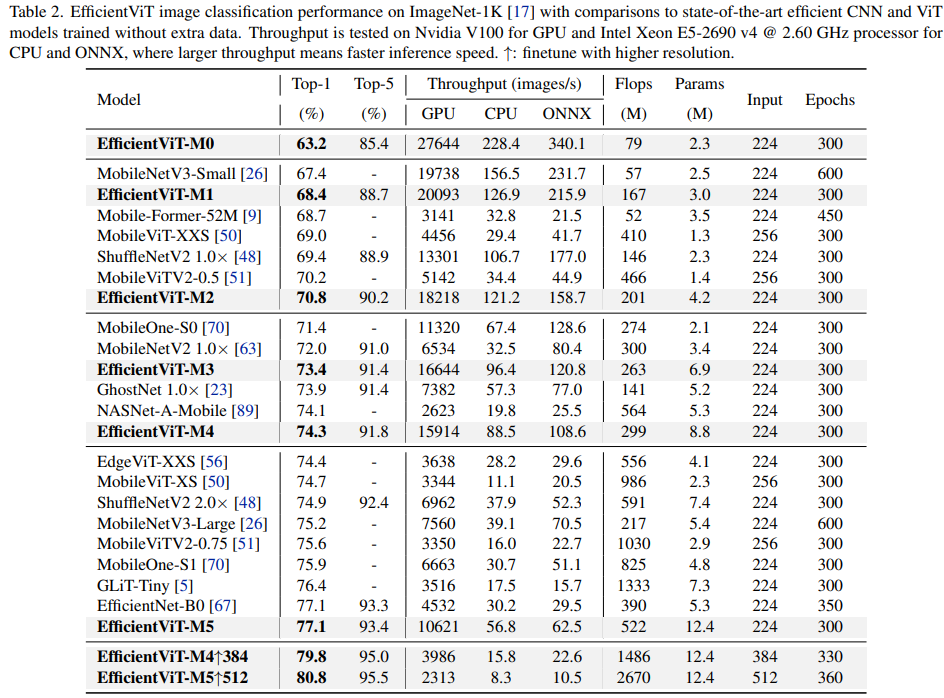

与 ImageNet 数据集上的当前 ViT 和 CNN 模型进行比较。 EfficientViT模型族在大多数情况下都优于当前的框架,并成功地在速度和准确性之间实现了理想的平衡。

与高效 CNN 和高效 ViT 的比较

该模型首先将其性能与 EfficientNet 等 Efficient CNN 和 MobileNets 等普通 CNN 框架进行比较。 与 MobileNet 框架相比,EfficientViT 模型获得了更好的 top-1 准确度分数,同时在 Intel CPU 和 V3.0 GPU 上的运行速度分别快了 2.5 倍和 100 倍。

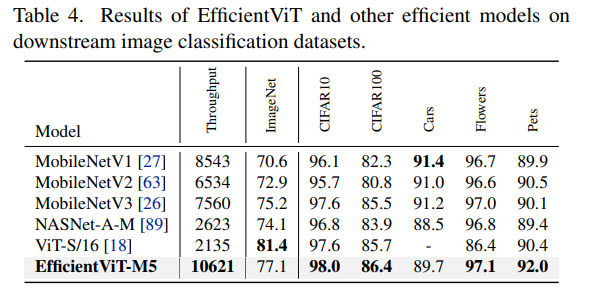

下游图像分类

将EfficientViT模型应用于各种下游任务来研究模型的迁移学习能力。

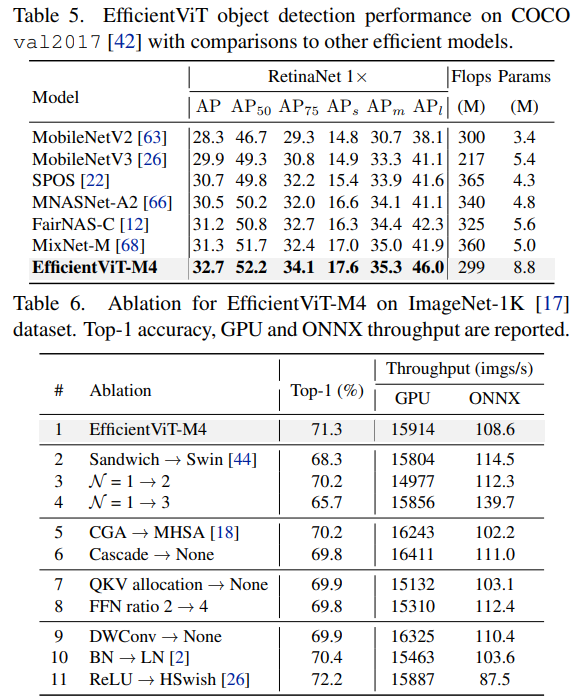

目标检测

在 COCO 目标检测数据集上的检测比较。

关键代码

EfficientBlock

import torch

import itertools

from timm.models.vision_transformer import trunc_normal_

from timm.models.layers import SqueezeExcite

class Conv2d_BN(torch.nn.Sequential):

def __init__(self, a, b, ks=1, stride=1, pad=0, dilation=1,

groups=1, bn_weight_init=1, resolution=-10000):

super().__init__()

self.add_module('c', torch.nn.Conv2d(

a, b, ks, stride, pad, dilation, groups, bias=False))

self.add_module('bn', torch.nn.BatchNorm2d(b))

torch.nn.init.constant_(self.bn.weight, bn_weight_init)

torch.nn.init.constant_(self.bn.bias, 0)

@torch.no_grad()

def fuse(self):

c, bn = self._modules.values()

w = bn.weight / (bn.running_var + bn.eps)**0.5

w = c.weight * w[:, None, None, None]

b = bn.bias - bn.running_mean * bn.weight / \

(bn.running_var + bn.eps)**0.5

m = torch.nn.Conv2d(w.size(1) * self.c.groups, w.size(

0), w.shape[2:], stride=self.c.stride, padding=self.c.padding, dilation=self.c.dilation, groups=self.c.groups)

m.weight.data.copy_(w)

m.bias.data.copy_(b)

return m

class BN_Linear(torch.nn.Sequential):

def __init__(self, a, b, bias=True, std=0.02):

super().__init__()

self.add_module('bn', torch.nn.BatchNorm1d(a))

self.add_module('l', torch.nn.Linear(a, b, bias=bias))

trunc_normal_(self.l.weight, std=std)

if bias:

torch.nn.init.constant_(self.l.bias, 0)

@torch.no_grad()

def fuse(self):

bn, l = self._modules.values()

w = bn.weight / (bn.running_var + bn.eps)**0.5

b = bn.bias - self.bn.running_mean * \

self.bn.weight / (bn.running_var + bn.eps)**0.5

w = l.weight * w[None, :]

if l.bias is None:

b = b @ self.l.weight.T

else:

b = (l.weight @ b[:, None]).view(-1) + self.l.bias

m = torch.nn.Linear(w.size(1), w.size(0))

m.weight.data.copy_(w)

m.bias.data.copy_(b)

return m

class PatchMerging(torch.nn.Module):

def __init__(self, dim, out_dim, input_resolution):

super().__init__()

hid_dim = int(dim * 4)

self.conv1 = Conv2d_BN(dim, hid_dim, 1, 1, 0, resolution=input_resolution)

self.act = torch.nn.ReLU()

self.conv2 = Conv2d_BN(hid_dim, hid_dim, 3, 2, 1, groups=hid_dim, resolution=input_resolution)

self.se = SqueezeExcite(hid_dim, .25)

self.conv3 = Conv2d_BN(hid_dim, out_dim, 1, 1, 0, resolution=input_resolution // 2)

def forward(self, x):

x = self.conv3(self.se(self.act(self.conv2(self.act(self.conv1(x))))))

return x

class Residual(torch.nn.Module):

def __init__(self, m, drop=0.):

super().__init__()

self.m = m

self.drop = drop

def forward(self, x):

if self.training and self.drop > 0:

return x + self.m(x) * torch.rand(x.size(0), 1, 1, 1,

device=x.device).ge_(self.drop).div(1 - self.drop).detach()

else:

return x + self.m(x)

class FFN(torch.nn.Module):

def __init__(self, ed, h, resolution):

super().__init__()

self.pw1 = Conv2d_BN(ed, h, resolution=resolution)

self.act = torch.nn.ReLU()

self.pw2 = Conv2d_BN(h, ed, bn_weight_init=0, resolution=resolution)

def forward(self, x):

x = self.pw2(self.act(self.pw1(x)))

return x

class CascadedGroupAttention(torch.nn.Module):

r""" Cascaded Group Attention.

Args:

dim (int): Number of input channels.

key_dim (int): The dimension for query and key.

num_heads (int): Number of attention heads.

attn_ratio (int): Multiplier for the query dim for value dimension.

resolution (int): Input resolution, correspond to the window size.

kernels (List[int]): The kernel size of the dw conv on query.

"""

def __init__(self, dim, key_dim, num_heads=8,

attn_ratio=4,

resolution=14,

kernels=[5, 5, 5, 5],):

super().__init__()

self.num_heads = num_heads

self.scale = key_dim ** -0.5

self.key_dim = key_dim

self.d = int(attn_ratio * key_dim)

self.attn_ratio = attn_ratio

qkvs = []

dws = []

for i in range(num_heads):

qkvs.append(Conv2d_BN(dim // (num_heads), self.key_dim * 2 + self.d, resolution=resolution))

dws.append(Conv2d_BN(self.key_dim, self.key_dim, kernels[i], 1, kernels[i]//2, groups=self.key_dim, resolution=resolution))

self.qkvs = torch.nn.ModuleList(qkvs)

self.dws = torch.nn.ModuleList(dws)

self.proj = torch.nn.Sequential(torch.nn.ReLU(), Conv2d_BN(

self.d * num_heads, dim, bn_weight_init=0, resolution=resolution))

points = list(itertools.product(range(resolution), range(resolution)))

N = len(points)

attention_offsets = {}

idxs = []

for p1 in points:

for p2 in points:

offset = (abs(p1[0] - p2[0]), abs(p1[1] - p2[1]))

if offset not in attention_offsets:

attention_offsets[offset] = len(attention_offsets)

idxs.append(attention_offsets[offset])

self.attention_biases = torch.nn.Parameter(

torch.zeros(num_heads, len(attention_offsets)))

self.register_buffer('attention_bias_idxs',

torch.LongTensor(idxs).view(N, N))

@torch.no_grad()

def train(self, mode=True):

super().train(mode)

if mode and hasattr(self, 'ab'):

del self.ab

else:

self.ab = self.attention_biases[:, self.attention_bias_idxs]

def forward(self, x): # x (B,C,H,W)

B, C, H, W = x.shape

trainingab = self.attention_biases[:, self.attention_bias_idxs]

feats_in = x.chunk(len(self.qkvs), dim=1)

feats_out = []

feat = feats_in[0]

for i, qkv in enumerate(self.qkvs):

if i > 0: # add the previous output to the input

feat = feat + feats_in[i]

feat = qkv(feat)

q, k, v = feat.view(B, -1, H, W).split([self.key_dim, self.key_dim, self.d], dim=1) # B, C/h, H, W

q = self.dws[i](q)

q, k, v = q.flatten(2), k.flatten(2), v.flatten(2) # B, C/h, N

attn = (

(q.transpose(-2, -1) @ k) * self.scale

+

(trainingab[i] if self.training else self.ab[i])

)

attn = attn.softmax(dim=-1) # BNN

feat = (v @ attn.transpose(-2, -1)).view(B, self.d, H, W) # BCHW

feats_out.append(feat)

x = self.proj(torch.cat(feats_out, 1))

return x

class LocalWindowAttention(torch.nn.Module):

r""" Local Window Attention.

Args:

dim (int): Number of input channels.

key_dim (int): The dimension for query and key.

num_heads (int): Number of attention heads.

attn_ratio (int): Multiplier for the query dim for value dimension.

resolution (int): Input resolution.

window_resolution (int): Local window resolution.

kernels (List[int]): The kernel size of the dw conv on query.

"""

def __init__(self, dim, key_dim, num_heads=8,

attn_ratio=4,

resolution=14,

window_resolution=7,

kernels=[5, 5, 5, 5],):

super().__init__()

self.dim = dim

self.num_heads = num_heads

self.resolution = resolution

assert window_resolution > 0, 'window_size must be greater than 0'

self.window_resolution = window_resolution

window_resolution = min(window_resolution, resolution)

self.attn = CascadedGroupAttention(dim, key_dim, num_heads,

attn_ratio=attn_ratio,

resolution=window_resolution,

kernels=kernels,)

def forward(self, x):

H = W = self.resolution

B, C, H_, W_ = x.shape

# Only check this for classifcation models

assert H == H_ and W == W_, 'input feature has wrong size, expect {}, got {}'.format((H, W), (H_, W_))

if H <= self.window_resolution and W <= self.window_resolution:

x = self.attn(x)

else:

x = x.permute(0, 2, 3, 1)

pad_b = (self.window_resolution - H %

self.window_resolution) % self.window_resolution

pad_r = (self.window_resolution - W %

self.window_resolution) % self.window_resolution

padding = pad_b > 0 or pad_r > 0

if padding:

x = torch.nn.functional.pad(x, (0, 0, 0, pad_r, 0, pad_b))

pH, pW = H + pad_b, W + pad_r

nH = pH // self.window_resolution

nW = pW // self.window_resolution

# window partition, BHWC -> B(nHh)(nWw)C -> BnHnWhwC -> (BnHnW)hwC -> (BnHnW)Chw

x = x.view(B, nH, self.window_resolution, nW, self.window_resolution, C).transpose(2, 3).reshape(

B * nH * nW, self.window_resolution, self.window_resolution, C

).permute(0, 3, 1, 2)

x = self.attn(x)

# window reverse, (BnHnW)Chw -> (BnHnW)hwC -> BnHnWhwC -> B(nHh)(nWw)C -> BHWC

x = x.permute(0, 2, 3, 1).view(B, nH, nW, self.window_resolution, self.window_resolution,

C).transpose(2, 3).reshape(B, pH, pW, C)

if padding:

x = x[:, :H, :W].contiguous()

x = x.permute(0, 3, 1, 2)

return x

class EfficientViTBlock(torch.nn.Module):

""" A basic EfficientViT building block.

Args:

type (str): Type for token mixer. Default: 's' for self-attention.

ed (int): Number of input channels.

kd (int): Dimension for query and key in the token mixer.

nh (int): Number of attention heads.

ar (int): Multiplier for the query dim for value dimension.

resolution (int): Input resolution.

window_resolution (int): Local window resolution.

kernels (List[int]): The kernel size of the dw conv on query.

"""

def __init__(self, type,

ed, kd, nh=8,

ar=4,

resolution=14,

window_resolution=7,

kernels=[5, 5, 5, 5],):

super().__init__()

self.dw0 = Residual(Conv2d_BN(ed, ed, 3, 1, 1, groups=ed, bn_weight_init=0., resolution=resolution))

self.ffn0 = Residual(FFN(ed, int(ed * 2), resolution))

if type == 's':

self.mixer = Residual(LocalWindowAttention(ed, kd, nh, attn_ratio=ar, \

resolution=resolution, window_resolution=window_resolution, kernels=kernels))

self.dw1 = Residual(Conv2d_BN(ed, ed, 3, 1, 1, groups=ed, bn_weight_init=0., resolution=resolution))

self.ffn1 = Residual(FFN(ed, int(ed * 2), resolution))

def forward(self, x):

return self.ffn1(self.dw1(self.mixer(self.ffn0(self.dw0(x)))))EfficientViT

# https://github.com/microsoft/Cream/blob/main/EfficientViT/classification/model/efficientvit.py

# --------------------------------------------------------

# EfficientViT Model Architecture

# Copyright (c) 2022 Microsoft

# Build the EfficientViT Model

# Written by: Xinyu Liu

# --------------------------------------------------------

import torch

import itertools

from timm.models.vision_transformer import trunc_normal_

from timm.models.layers import SqueezeExcite

class Conv2d_BN(torch.nn.Sequential):

def __init__(self, a, b, ks=1, stride=1, pad=0, dilation=1,

groups=1, bn_weight_init=1, resolution=-10000):

super().__init__()

self.add_module('c', torch.nn.Conv2d(

a, b, ks, stride, pad, dilation, groups, bias=False))

self.add_module('bn', torch.nn.BatchNorm2d(b))

torch.nn.init.constant_(self.bn.weight, bn_weight_init)

torch.nn.init.constant_(self.bn.bias, 0)

@torch.no_grad()

def fuse(self):

c, bn = self._modules.values()

w = bn.weight / (bn.running_var + bn.eps)**0.5

w = c.weight * w[:, None, None, None]

b = bn.bias - bn.running_mean * bn.weight / \

(bn.running_var + bn.eps)**0.5

m = torch.nn.Conv2d(w.size(1) * self.c.groups, w.size(

0), w.shape[2:], stride=self.c.stride, padding=self.c.padding, dilation=self.c.dilation, groups=self.c.groups)

m.weight.data.copy_(w)

m.bias.data.copy_(b)

return m

class BN_Linear(torch.nn.Sequential):

def __init__(self, a, b, bias=True, std=0.02):

super().__init__()

self.add_module('bn', torch.nn.BatchNorm1d(a))

self.add_module('l', torch.nn.Linear(a, b, bias=bias))

trunc_normal_(self.l.weight, std=std)

if bias:

torch.nn.init.constant_(self.l.bias, 0)

@torch.no_grad()

def fuse(self):

bn, l = self._modules.values()

w = bn.weight / (bn.running_var + bn.eps)**0.5

b = bn.bias - self.bn.running_mean * \

self.bn.weight / (bn.running_var + bn.eps)**0.5

w = l.weight * w[None, :]

if l.bias is None:

b = b @ self.l.weight.T

else:

b = (l.weight @ b[:, None]).view(-1) + self.l.bias

m = torch.nn.Linear(w.size(1), w.size(0))

m.weight.data.copy_(w)

m.bias.data.copy_(b)

return m

class PatchMerging(torch.nn.Module):

def __init__(self, dim, out_dim, input_resolution):

super().__init__()

hid_dim = int(dim * 4)

self.conv1 = Conv2d_BN(dim, hid_dim, 1, 1, 0, resolution=input_resolution)

self.act = torch.nn.ReLU()

self.conv2 = Conv2d_BN(hid_dim, hid_dim, 3, 2, 1, groups=hid_dim, resolution=input_resolution)

self.se = SqueezeExcite(hid_dim, .25)

self.conv3 = Conv2d_BN(hid_dim, out_dim, 1, 1, 0, resolution=input_resolution // 2)

def forward(self, x):

x = self.conv3(self.se(self.act(self.conv2(self.act(self.conv1(x))))))

return x

class Residual(torch.nn.Module):

def __init__(self, m, drop=0.):

super().__init__()

self.m = m

self.drop = drop

def forward(self, x):

if self.training and self.drop > 0:

return x + self.m(x) * torch.rand(x.size(0), 1, 1, 1,

device=x.device).ge_(self.drop).div(1 - self.drop).detach()

else:

return x + self.m(x)

class FFN(torch.nn.Module):

def __init__(self, ed, h, resolution):

super().__init__()

self.pw1 = Conv2d_BN(ed, h, resolution=resolution)

self.act = torch.nn.ReLU()

self.pw2 = Conv2d_BN(h, ed, bn_weight_init=0, resolution=resolution)

def forward(self, x):

x = self.pw2(self.act(self.pw1(x)))

return x

class CascadedGroupAttention(torch.nn.Module):

r""" Cascaded Group Attention.

Args:

dim (int): Number of input channels.

key_dim (int): The dimension for query and key.

num_heads (int): Number of attention heads.

attn_ratio (int): Multiplier for the query dim for value dimension.

resolution (int): Input resolution, correspond to the window size.

kernels (List[int]): The kernel size of the dw conv on query.

"""

def __init__(self, dim, key_dim, num_heads=8,

attn_ratio=4,

resolution=14,

kernels=[5, 5, 5, 5],):

super().__init__()

self.num_heads = num_heads

self.scale = key_dim ** -0.5

self.key_dim = key_dim

self.d = int(attn_ratio * key_dim)

self.attn_ratio = attn_ratio

qkvs = []

dws = []

for i in range(num_heads):

qkvs.append(Conv2d_BN(dim // (num_heads), self.key_dim * 2 + self.d, resolution=resolution))

dws.append(Conv2d_BN(self.key_dim, self.key_dim, kernels[i], 1, kernels[i]//2, groups=self.key_dim, resolution=resolution))

self.qkvs = torch.nn.ModuleList(qkvs)

self.dws = torch.nn.ModuleList(dws)

self.proj = torch.nn.Sequential(torch.nn.ReLU(), Conv2d_BN(

self.d * num_heads, dim, bn_weight_init=0, resolution=resolution))

points = list(itertools.product(range(resolution), range(resolution)))

N = len(points)

attention_offsets = {}

idxs = []

for p1 in points:

for p2 in points:

offset = (abs(p1[0] - p2[0]), abs(p1[1] - p2[1]))

if offset not in attention_offsets:

attention_offsets[offset] = len(attention_offsets)

idxs.append(attention_offsets[offset])

self.attention_biases = torch.nn.Parameter(

torch.zeros(num_heads, len(attention_offsets)))

self.register_buffer('attention_bias_idxs',

torch.LongTensor(idxs).view(N, N))

@torch.no_grad()

def train(self, mode=True):

super().train(mode)

if mode and hasattr(self, 'ab'):

del self.ab

else:

self.ab = self.attention_biases[:, self.attention_bias_idxs]

def forward(self, x): # x (B,C,H,W)

B, C, H, W = x.shape

trainingab = self.attention_biases[:, self.attention_bias_idxs]

feats_in = x.chunk(len(self.qkvs), dim=1)

feats_out = []

feat = feats_in[0]

for i, qkv in enumerate(self.qkvs):

if i > 0: # add the previous output to the input

feat = feat + feats_in[i]

feat = qkv(feat)

q, k, v = feat.view(B, -1, H, W).split([self.key_dim, self.key_dim, self.d], dim=1) # B, C/h, H, W

q = self.dws[i](q)

q, k, v = q.flatten(2), k.flatten(2), v.flatten(2) # B, C/h, N

attn = (

(q.transpose(-2, -1) @ k) * self.scale

+

(trainingab[i] if self.training else self.ab[i])

)

attn = attn.softmax(dim=-1) # BNN

feat = (v @ attn.transpose(-2, -1)).view(B, self.d, H, W) # BCHW

feats_out.append(feat)

x = self.proj(torch.cat(feats_out, 1))

return x

class LocalWindowAttention(torch.nn.Module):

r""" Local Window Attention.

Args:

dim (int): Number of input channels.

key_dim (int): The dimension for query and key.

num_heads (int): Number of attention heads.

attn_ratio (int): Multiplier for the query dim for value dimension.

resolution (int): Input resolution.

window_resolution (int): Local window resolution.

kernels (List[int]): The kernel size of the dw conv on query.

"""

def __init__(self, dim, key_dim, num_heads=8,

attn_ratio=4,

resolution=14,

window_resolution=7,

kernels=[5, 5, 5, 5],):

super().__init__()

self.dim = dim

self.num_heads = num_heads

self.resolution = resolution

assert window_resolution > 0, 'window_size must be greater than 0'

self.window_resolution = window_resolution

window_resolution = min(window_resolution, resolution)

self.attn = CascadedGroupAttention(dim, key_dim, num_heads,

attn_ratio=attn_ratio,

resolution=window_resolution,

kernels=kernels,)

def forward(self, x):

H = W = self.resolution

B, C, H_, W_ = x.shape

# Only check this for classifcation models

assert H == H_ and W == W_, 'input feature has wrong size, expect {}, got {}'.format((H, W), (H_, W_))

if H <= self.window_resolution and W <= self.window_resolution:

x = self.attn(x)

else:

x = x.permute(0, 2, 3, 1)

pad_b = (self.window_resolution - H %

self.window_resolution) % self.window_resolution

pad_r = (self.window_resolution - W %

self.window_resolution) % self.window_resolution

padding = pad_b > 0 or pad_r > 0

if padding:

x = torch.nn.functional.pad(x, (0, 0, 0, pad_r, 0, pad_b))

pH, pW = H + pad_b, W + pad_r

nH = pH // self.window_resolution

nW = pW // self.window_resolution

# window partition, BHWC -> B(nHh)(nWw)C -> BnHnWhwC -> (BnHnW)hwC -> (BnHnW)Chw

x = x.view(B, nH, self.window_resolution, nW, self.window_resolution, C).transpose(2, 3).reshape(

B * nH * nW, self.window_resolution, self.window_resolution, C

).permute(0, 3, 1, 2)

x = self.attn(x)

# window reverse, (BnHnW)Chw -> (BnHnW)hwC -> BnHnWhwC -> B(nHh)(nWw)C -> BHWC

x = x.permute(0, 2, 3, 1).view(B, nH, nW, self.window_resolution, self.window_resolution,

C).transpose(2, 3).reshape(B, pH, pW, C)

if padding:

x = x[:, :H, :W].contiguous()

x = x.permute(0, 3, 1, 2)

return x

class EfficientViTBlock(torch.nn.Module):

""" A basic EfficientViT building block.

Args:

type (str): Type for token mixer. Default: 's' for self-attention.

ed (int): Number of input channels.

kd (int): Dimension for query and key in the token mixer.

nh (int): Number of attention heads.

ar (int): Multiplier for the query dim for value dimension.

resolution (int): Input resolution.

window_resolution (int): Local window resolution.

kernels (List[int]): The kernel size of the dw conv on query.

"""

def __init__(self, type,

ed, kd, nh=8,

ar=4,

resolution=14,

window_resolution=7,

kernels=[5, 5, 5, 5],):

super().__init__()

self.dw0 = Residual(Conv2d_BN(ed, ed, 3, 1, 1, groups=ed, bn_weight_init=0., resolution=resolution))

self.ffn0 = Residual(FFN(ed, int(ed * 2), resolution))

if type == 's':

self.mixer = Residual(LocalWindowAttention(ed, kd, nh, attn_ratio=ar, \

resolution=resolution, window_resolution=window_resolution, kernels=kernels))

self.dw1 = Residual(Conv2d_BN(ed, ed, 3, 1, 1, groups=ed, bn_weight_init=0., resolution=resolution))

self.ffn1 = Residual(FFN(ed, int(ed * 2), resolution))

def forward(self, x):

return self.ffn1(self.dw1(self.mixer(self.ffn0(self.dw0(x)))))

class EfficientViT(torch.nn.Module):

def __init__(self, img_size=224,

patch_size=16,

in_chans=3,

num_classes=1000,

stages=['s', 's', 's'],

embed_dim=[64, 128, 192],

key_dim=[16, 16, 16],

depth=[1, 2, 3],

num_heads=[4, 4, 4],

window_size=[7, 7, 7],

kernels=[5, 5, 5, 5],

down_ops=[['subsample', 2], ['subsample', 2], ['']],

distillation=False,):

super().__init__()

resolution = img_size

# Patch embedding

self.patch_embed = torch.nn.Sequential(Conv2d_BN(in_chans, embed_dim[0] // 8, 3, 2, 1, resolution=resolution), torch.nn.ReLU(),

Conv2d_BN(embed_dim[0] // 8, embed_dim[0] // 4, 3, 2, 1, resolution=resolution // 2), torch.nn.ReLU(),

Conv2d_BN(embed_dim[0] // 4, embed_dim[0] // 2, 3, 2, 1, resolution=resolution // 4), torch.nn.ReLU(),

Conv2d_BN(embed_dim[0] // 2, embed_dim[0], 3, 2, 1, resolution=resolution // 8))

resolution = img_size // patch_size

attn_ratio = [embed_dim[i] / (key_dim[i] * num_heads[i]) for i in range(len(embed_dim))]

self.blocks1 = []

self.blocks2 = []

self.blocks3 = []

# Build EfficientViT blocks

for i, (stg, ed, kd, dpth, nh, ar, wd, do) in enumerate(

zip(stages, embed_dim, key_dim, depth, num_heads, attn_ratio, window_size, down_ops)):

for d in range(dpth):

eval('self.blocks' + str(i+1)).append(EfficientViTBlock(stg, ed, kd, nh, ar, resolution, wd, kernels))

if do[0] == 'subsample':

# Build EfficientViT downsample block

#('Subsample' stride)

blk = eval('self.blocks' + str(i+2))

resolution_ = (resolution - 1) // do[1] + 1

blk.append(torch.nn.Sequential(Residual(Conv2d_BN(embed_dim[i], embed_dim[i], 3, 1, 1, groups=embed_dim[i], resolution=resolution)),

Residual(FFN(embed_dim[i], int(embed_dim[i] * 2), resolution)),))

blk.append(PatchMerging(*embed_dim[i:i + 2], resolution))

resolution = resolution_

blk.append(torch.nn.Sequential(Residual(Conv2d_BN(embed_dim[i + 1], embed_dim[i + 1], 3, 1, 1, groups=embed_dim[i + 1], resolution=resolution)),

Residual(FFN(embed_dim[i + 1], int(embed_dim[i + 1] * 2), resolution)),))

self.blocks1 = torch.nn.Sequential(*self.blocks1)

self.blocks2 = torch.nn.Sequential(*self.blocks2)

self.blocks3 = torch.nn.Sequential(*self.blocks3)

# Classification head

self.head = BN_Linear(embed_dim[-1], num_classes) if num_classes > 0 else torch.nn.Identity()

self.distillation = distillation

if distillation:

self.head_dist = BN_Linear(embed_dim[-1], num_classes) if num_classes > 0 else torch.nn.Identity()

@torch.jit.ignore

def no_weight_decay(self):

return {x for x in self.state_dict().keys() if 'attention_biases' in x}

def forward(self, x):

x = self.patch_embed(x)

x = self.blocks1(x)

x = self.blocks2(x)

x = self.blocks3(x)

x = torch.nn.functional.adaptive_avg_pool2d(x, 1).flatten(1)

if self.distillation:

x = self.head(x), self.head_dist(x)

if not self.training:

x = (x[0] + x[1]) / 2

else:

x = self.head(x)

return x