Update rule for parameter w with gradient g when momentum is 0:

新参数 = 旧参数 - 学习率*梯度

w = w - learning_rate * g

Update rule when momentum is larger than 0:

velocity = momentum * velocity - learning_rate * g

w = w + velocity

When nesterov=True, this rule becomes:

velocity = momentum * velocity - learning_rate * g

w = w + momentum * velocity - learning_rate * g

Args |

|

|---|---|

learning_rate |

A Tensor, floating point value, or a schedule that is a keras.optimizers.schedules.LearningRateSchedule, or a callable that takes no arguments and returns the actual value to use. The learning rate. Defaults to 0.001. |

momentum |

float hyperparameter >= 0 that accelerates gradient descent in the relevant direction and dampens oscillations. Defaults to 0, i.e., vanilla gradient descent. |

nesterov |

boolean. Whether to apply Nesterov momentum. Defaults to False. |

name |

String. The name to use for momentum accumulator weights created by the optimizer. |

weight_decay |

Float, defaults to None. If set, weight decay is applied. |

clipnorm |

Float. If set, the gradient of each weight is individually clipped so that its norm is no higher than this value. |

clipvalue |

Float. If set, the gradient of each weight is clipped to be no higher than this value. |

global_clipnorm |

Float. If set, the gradient of all weights is clipped so that their global norm is no higher than this value. |

use_ema |

Boolean, defaults to False. If True, exponential moving average (EMA) is applied. EMA consists of computing an exponential moving average of the weights of the model (as the weight values change after each training batch), and periodically overwriting the weights with their moving average. |

ema_momentum |

Float, defaults to 0.99. Only used if use_ema=True. This is the momentum to use when computing the EMA of the model's weights: new_average = ema_momentum * old_average + (1 - ema_momentum) * current_variable_value. |

ema_overwrite_frequency |

Int or None, defaults to None. Only used if use_ema=True. Every ema_overwrite_frequency steps of iterations, we overwrite the model variable by its moving average. If None, the optimizer does not overwrite model variables in the middle of training, and you need to explicitly overwrite the variables at the end of training by calling optimizer.finalize_variable_values() (which updates the model variables in-place). When using the built-in fit() training loop, this happens automatically after the last epoch, and you don't need to do anything. |

jit_compile |

Boolean, defaults to True. If True, the optimizer will use XLA compilation. If no GPU device is found, this flag will be ignored. |

mesh |

optional tf.experimental.dtensor.Mesh instance. When provided, the optimizer will be run in DTensor mode, e.g. state tracking variable will be a DVariable, and aggregation/reduction will happen in the global DTensor context. |

**kwargs |

keyword arguments only used for backward compatibility. |

Usage:

opt = tf.keras.optimizers.SGD(learning_rate=0.1)var = tf.Variable(1.0)loss = lambda: (var ** 2)/2.0 # d(loss)/d(var1) = var1opt.minimize(loss, [var])# Step is `- learning_rate * grad`var.numpy()

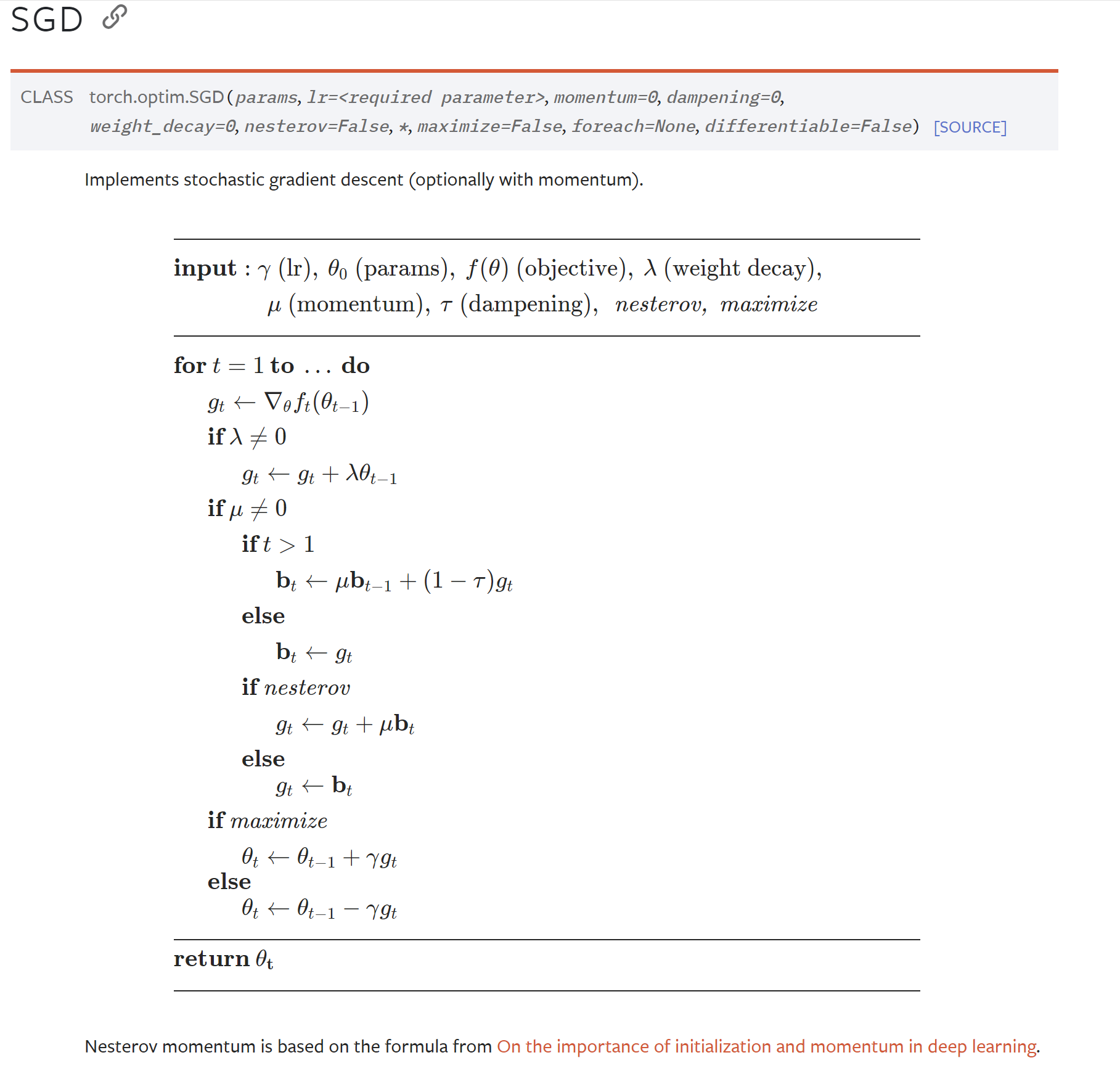

Pytorch

更新参数:使用计算得到的梯度来更新模型的参数。SGD的更新规则如下:

新参数 = 旧参数 - 学习率 * 梯度w= w - lr * g