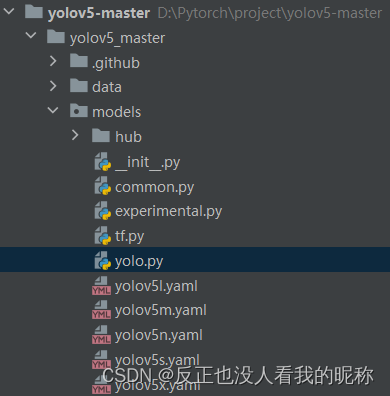

This directory contains yolo.py, which holds the code that builds the network.

The file really only defines two classes: Detect and Model.

Detect

    import torch
    import torch.nn as nn


    class Detect(nn.Module):
        stride = None  # strides computed during build
        onnx_dynamic = False  # ONNX export parameter

        def __init__(self, nc=80, anchors=(), ch=(), inplace=True):  # detection layer
            super().__init__()
            self.nc = nc  # number of classes
            self.no = nc + 5  # number of outputs per anchor
            self.nl = len(anchors)  # number of detection layers
            self.na = len(anchors[0]) // 2  # number of anchors
            self.grid = [torch.zeros(1)] * self.nl  # init grid
            self.anchor_grid = [torch.zeros(1)] * self.nl  # init anchor grid
            self.register_buffer('anchors', torch.tensor(anchors).float().view(self.nl, -1, 2))  # shape(nl,na,2)
            self.m = nn.ModuleList(nn.Conv2d(x, self.no * self.na, 1) for x in ch)  # output conv
            self.inplace = inplace  # use in-place ops (e.g. slice assignment)

        def forward(self, x):
            z = []  # inference output
            for i in range(self.nl):
                x[i] = self.m[i](x[i])  # conv
                bs, _, ny, nx = x[i].shape  # x(bs,255,20,20) to x(bs,3,20,20,85)
                x[i] = x[i].view(bs, self.na, self.no, ny, nx).permute(0, 1, 3, 4, 2).contiguous()

                if not self.training:  # inference
                    if self.grid[i].shape[2:4] != x[i].shape[2:4] or self.onnx_dynamic:
                        self.grid[i], self.anchor_grid[i] = self._make_grid(nx, ny, i)

                    y = x[i].sigmoid()
                    if self.inplace:
                        y[..., 0:2] = (y[..., 0:2] * 2. - 0.5 + self.grid[i]) * self.stride[i]  # xy
                        y[..., 2:4] = (y[..., 2:4] * 2) ** 2 * self.anchor_grid[i]  # wh
                    else:  # for YOLOv5 on AWS Inferentia https://github.com/ultralytics/yolov5/pull/2953
                        xy = (y[..., 0:2] * 2. - 0.5 + self.grid[i]) * self.stride[i]  # xy
                        wh = (y[..., 2:4] * 2) ** 2 * self.anchor_grid[i]  # wh
                        y = torch.cat((xy, wh, y[..., 4:]), -1)
                    z.append(y.view(bs, -1, self.no))

            return x if self.training else (torch.cat(z, 1), x)

        def _make_grid(self, nx=20, ny=20, i=0):
            d = self.anchors[i].device
            yv, xv = torch.meshgrid([torch.arange(ny).to(d), torch.arange(nx).to(d)])
            grid = torch.stack((xv, yv), 2).expand((1, self.na, ny, nx, 2)).float()
            anchor_grid = (self.anchors[i].clone() * self.stride[i]) \
                .view((1, self.na, 1, 1, 2)).expand((1, self.na, ny, nx, 2)).float()
            return grid, anchor_grid

Start with the __init__ method. It accepts four arguments: nc=80, anchors=(), ch=(), inplace=True

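nc: the number of classes. The default of 80 corresponds to the COCO dataset.

anchors: the prior (anchor) box sizes for each feature map. Each detection layer stores three boxes as flattened (width, height) pairs, in the form [[10,13,16,30,33,23],[30,61,62,45,59,119],[116,90,156,198,373,326]]

ch: the channel counts of the three feature maps, e.g. [128,256,512]

inplace: normally True, which decodes the boxes with in-place slice assignment. The False path is for AWS Inferentia, which is not used by default.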
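To make the roles of these arguments concrete, here is a small pure-Python sketch of the sizes that __init__ derives from them. It mirrors the constructor's arithmetic with plain lists instead of tensors. The helper name `detect_head_sizes` is made up for illustration, and the 640x640 input with strides 8, 16 and 32 is an assumption (the source only says that strides are computed during build).

```python
def detect_head_sizes(nc, anchors, ch):
    """Mirror the size arithmetic of Detect.__init__ (hypothetical helper, no tensors)."""
    no = nc + 5                # outputs per anchor: x, y, w, h, objectness, then nc class scores
    nl = len(anchors)          # number of detection layers
    na = len(anchors[0]) // 2  # anchors per layer; each anchor is a (w, h) pair
    # Same regrouping as .view(nl, -1, 2): flat [w0, h0, w1, h1, ...] -> [(w0, h0), (w1, h1), ...]
    pairs = [[(a[j], a[j + 1]) for j in range(0, len(a), 2)] for a in anchors]
    # Each 1x1 output conv maps ch[i] input channels to no * na output channels
    conv_shapes = [(c, no * na) for c in ch]
    return no, nl, na, pairs, conv_shapes


anchors = [[10, 13, 16, 30, 33, 23], [30, 61, 62, 45, 59, 119], [116, 90, 156, 198, 373, 326]]
no, nl, na, pairs, conv_shapes = detect_head_sizes(80, anchors, [128, 256, 512])

# Assumption: 640x640 input and strides 8/16/32, giving 80x80, 40x40 and 20x20 grids
strides = [8, 16, 32]
grids = [640 // s for s in strides]
total_predictions = sum(na * g * g for g in grids)

print(no, nl, na)          # 85 3 3
print(conv_shapes)         # [(128, 255), (256, 255), (512, 255)]
print(total_predictions)   # 25200
```

Under those assumptions, the concatenated inference output would hold 25200 candidate boxes per image, each with 85 values.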

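    self.nc = nc  # number of classes
    self.no = nc + 5  # outputs per anchor: box [x, y, w, h], objectness score, then nc class scores
    self.nl = len(anchors)  # number of detection layers, usually 3
    self.na = len(anchors[0]) // 2  # number of anchors per layer, usually also 3
    self.grid = [torch.zeros(1)] * self.nl  # placeholder grids (zeros), rebuilt in forward at inference
    self.anchor_grid = [torch.zeros(1)] * self.nl  # placeholder anchor grids
    # A model stores two kinds of tensors: parameters, which the optimizer updates after backpropagation,
    # and buffers, which the optimizer does not update.
    # For a tensor that must not be optimized, create it and register it with register_buffer.
    # Buffers are returned by model.buffers() and are saved automatically in the state_dict (an OrderedDict).
    # Note that a buffer only changes when code assigns to it (typically in forward);
    # optim.step only updates nn.Parameter tensors.
    self.register_buffer('anchors', torch.tensor(anchors).float().view(self.nl, -1, 2))  # shape(nl,na,2)
    self.m = nn.ModuleList(nn.Conv2d(x, self.no * self.na, 1) for x in ch)  # output conv, 1x1
    # Normally True; the False path is for AWS Inferentia, which is not used by default
    self.inplace = inplace  # use in-place ops (e.g. slice assignment)

Next comes the forward method.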

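    def forward(self, x):
        # Start with an empty list for the inference output
        z = []  # inference output
        # Iterate over each detection layer
        for i in range(self.nl):
            # Apply a 1x1 conv so every layer has the same channel count, which makes concatenation easy
            # e.g. [bs, 128, 80, 80] -> [bs, 255, 80, 80] with nc=80 (na * no = 3 * 85 = 255)
            x[i] = self.m[i](x[i])  # conv
            # Read the shape of x[i]
            bs, _, ny, nx = x[i].shape  # x(bs,255,20,20) to x(bs,3,20,20,85)
            # Split the channels into (na, no) and move no to the last dimension
            x[i] = x[i].view(bs, self.na, self.no, ny, nx).permute(0, 1, 3, 4, 2).contiguous()

            # The decoding below is skipped in training mode.
            # At inference the network output is an offset relative to a grid cell, so the cell
            # position has to be added to obtain the final coordinates, which are then passed to NMS.
            # The grid built here records the coordinates of every cell for that purpose.
            if not self.training:  # inference
                if self.grid[i].shape[2:4] != x[i].shape[2:4] or self.onnx_dynamic:
                    self.grid[i], self.anchor_grid[i] = self._make_grid(nx, ny, i)

                y = x[i].sigmoid()
                # The in-place branch is the default (AWS Inferentia is not used).
                # This decoding formula differs from the one in YOLOv3 and YOLOv4; it is the
                # YOLOv5 author's own and is said to work better.
                if self.inplace:
                    y[..., 0:2] = (y[..., 0:2] * 2. - 0.5 + self.grid[i]) * self.stride[i]  # xy
                    y[..., 2:4] = (y[..., 2:4] * 2) ** 2 * self.anchor_grid[i]  # wh
                else:
                    xy = (y[..., 0:2] * 2. - 0.5 + self.grid[i]) * self.stride[i]  # xy
                    wh = (y[..., 2:4] * 2) ** 2 * self.anchor_grid[i]  # wh
                    y = torch.cat((xy, wh, y[..., 4:]), -1)
                z.append(y.view(bs, -1, self.no))

        # In training mode return x as is; otherwise also return the concatenated
        # predictions (box coordinates, objectness, class scores)
        return x if self.training else (torch.cat(z, 1), x)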
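The box decoding in the inference branch of forward can be checked by hand on a single grid cell. The sketch below applies the same xy and wh formulas to one raw prediction in plain Python. The function names are made up for illustration, and `anchor_wh` is assumed to be the anchor size in pixels, i.e. the value that anchor_grid holds after _make_grid multiplies the anchors by the stride.

```python
import math


def sigmoid(v):
    return 1.0 / (1.0 + math.exp(-v))


def decode_cell(raw, cx, cy, anchor_wh, stride):
    """Decode one raw (tx, ty, tw, th) prediction for the cell at column cx, row cy.

    Hypothetical helper: the same arithmetic as the xy/wh lines in Detect.forward,
    applied to scalars instead of tensors.
    """
    sx, sy, sw, sh = (sigmoid(v) for v in raw)
    x = (sx * 2.0 - 0.5 + cx) * stride   # centre x in pixels
    y = (sy * 2.0 - 0.5 + cy) * stride   # centre y in pixels
    w = (sw * 2.0) ** 2 * anchor_wh[0]   # width in pixels
    h = (sh * 2.0) ** 2 * anchor_wh[1]   # height in pixels
    return x, y, w, h


# A raw output of all zeros gives sigmoid = 0.5, so the box sits at the cell centre
# and has exactly the anchor's size.
box = decode_cell((0.0, 0.0, 0.0, 0.0), cx=3, cy=2, anchor_wh=(116, 90), stride=32)
print(box)  # (112.0, 80.0, 116.0, 90.0)
```

Because the sigmoid output lies between 0 and 1, the centre can move from 0.5 cells before the cell origin to 1.5 cells past it, and the width and height stay below 4 times the anchor size.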