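This section is a hand-written implementation of ORB feature extraction and matching. The overall flow is outlined by the comments in the main function below: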

int main(int argc, char **argv) {

// 1. Read the two images as grayscale (flag 0)

cv::Mat first_image=cv::imread(first_file,0);

cv::Mat second_image=cv::imread(second_file,0);

assert(first_image.data !=nullptr && second_image.data!=nullptr);

cv::Ptr<cv::DescriptorMatcher> matcher = cv::DescriptorMatcher::create("BruteForce-Hamming(2)");

// 2. FAST corner detection

// 2.1 Define vector containers to store keypoints1 and keypoints2

vector<cv::KeyPoint>keypoints1;

vector<cv::KeyPoint>keypoints2;

// 2.2 Detect FAST corners (threshold 40) and store them in the containers above

cv::FAST(first_image,keypoints1,40);

cv::FAST(second_image,keypoints2,40);

// 3. Compute descriptors

// 3.1 Define vector containers to store descriptor1 and descriptor2

vector <DescType>descriptor1;

vector <DescType>descriptor2;

// 3.2 Call ComputeORB to compute the descriptors

ComputeORB(first_image, keypoints1, descriptor1);

ComputeORB(second_image, keypoints2, descriptor2);

// 4. Match descriptors

// 4.1 Define a vector container to store the cv::DMatch results

vector<cv::DMatch> matches;

// 4.2 Call BfMatch to match the two descriptor sets

BfMatch(descriptor1,descriptor2,matches);

// 5. Draw and display the matches

cv::Mat image_show;

cv::drawMatches(first_image, keypoints1, second_image, keypoints2, matches, image_show);

cv::imshow("matches", image_show);

cv::imwrite("matches.png", image_show);

cv::waitKey(0);

cout << "done." << endl;

return 0;

}
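cv::drawMatches looks up each match's queryIdx and trainIdx in keypoints1 and keypoints2, so the descriptor lists have to stay index-aligned with the keypoint lists even when some keypoints get no descriptor. The sketch below illustrates the idea with plain standard-library types only; `describeAll` and the `valid` flags are hypothetical names introduced for illustration, not part of the original code.

```cpp
#include <cassert>
#include <cstdint>
#include <vector>

// Same layout as DescType in the post: 8 words of 32 bits.
using DescType = std::vector<std::uint32_t>;

// Hypothetical helper: returns exactly one descriptor slot per keypoint.
// valid[i] == false models a keypoint too close to the image border;
// it receives an empty placeholder instead of being dropped.
std::vector<DescType> describeAll(const std::vector<bool> &valid) {
    std::vector<DescType> descriptors;
    descriptors.reserve(valid.size());
    for (bool ok : valid) {
        if (!ok) {
            descriptors.emplace_back();  // empty placeholder keeps indices aligned
            continue;
        }
        descriptors.emplace_back(8, 0u);  // a real descriptor would be filled in here
    }
    assert(descriptors.size() == valid.size());
    return descriptors;
}
```

With the flags {true, false, true}, the result has three entries and the middle one is empty, so index 2 in the descriptor list still refers to keypoint 2. A matcher can then skip empty entries and report indices that are valid for the keypoint list.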

Below is the complete annotated code (the ORB_pattern array is omitted here because it takes too many lines).

#include <opencv2/opencv.hpp>

#include <string>

#include <nmmintrin.h>

#include <chrono>

using namespace std;

// global variables: paths of the two input images

string first_file = "./1.png";

string second_file = "./2.png";

// each descriptor is 8 x 32-bit unsigned ints, 8 x 32 = 256 bits

typedef vector<uint32_t> DescType; // Descriptor type

/**

* compute descriptor of orb keypoints

* @param img input image

* @param keypoints detected FAST keypoints

* @param descriptors output descriptors

*

* NOTE: if a keypoint lies within half_boundary (16 pixels) of the image border, its descriptor is not

* computed and is left empty

*/

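// Declaration: the custom ComputeORB function computes ORB descriptors and steers the sampling pattern by each keypoint's orientation, which gives rotation invariance (no image pyramid is built here, so there is no scale invariance)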

void ComputeORB(const cv::Mat &img, vector<cv::KeyPoint> &keypoints, vector<DescType> &descriptors);

/**

* brute-force match two sets of descriptors

* @param desc1 descriptors of the first image

* @param desc2 descriptors of the second image

* @param matches resulting matches between the two images

*/

// Declaration: the custom BfMatch function performs brute-force matching

void BfMatch(const vector<DescType> &desc1, const vector<DescType> &desc2, vector<cv::DMatch> &matches);

// main function

int main(int argc, char **argv) {

// load images

cv::Mat first_image = cv::imread(first_file, 0);

cv::Mat second_image = cv::imread(second_file, 0);

assert(first_image.data != nullptr && second_image.data != nullptr); // make sure both images were loaded

// detect FAST keypoints1 using threshold=40

chrono::steady_clock::time_point t1 = chrono::steady_clock::now();

vector<cv::KeyPoint> keypoints1;

cv::FAST(first_image, keypoints1, 40); // detect FAST keypoints in image 1

vector<DescType> descriptor1; // descriptors of image 1

ComputeORB(first_image, keypoints1, descriptor1); // compute ORB descriptors for the FAST keypoints of image 1

// same for the second image

vector<cv::KeyPoint> keypoints2;

vector<DescType> descriptor2;

cv::FAST(second_image, keypoints2, 40);

ComputeORB(second_image, keypoints2, descriptor2);

chrono::steady_clock::time_point t2 = chrono::steady_clock::now();

chrono::duration<double> time_used = chrono::duration_cast<chrono::duration<double>>(t2 - t1);

cout << "extract ORB cost = " << time_used.count() << " seconds. " << endl;

// find matches between the two descriptor sets

vector<cv::DMatch> matches;

t1 = chrono::steady_clock::now();

BfMatch(descriptor1, descriptor2, matches);

t2 = chrono::steady_clock::now();

time_used = chrono::duration_cast<chrono::duration<double>>(t2 - t1);

cout << "match ORB cost = " << time_used.count() << " seconds. " << endl;

cout << "matches: " << matches.size() << endl;

// plot the matches and show them in a window

cv::Mat image_show;

cv::drawMatches(first_image, keypoints1, second_image, keypoints2, matches, image_show);

cv::imshow("matches", image_show);

cv::imwrite("matches.png", image_show);

cv::waitKey(0);

cout << "done." << endl;

return 0;

}

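// compute the descriptor

// Steps:

// 1. Skip every keypoint closer than half_boundary (16 pixels) to the image border: the orientation uses a 16x16 patch and the rotated BRIEF samples use a 32x32 block, so pixel reads near the border could fall outside the image;

// 2. Compute the intensity centroid of the 16x16 neighbourhood around the keypoint, and from it the cos and sin of the keypoint angle;

// 3. Following the fixed pattern, compare the intensities of point pairs around the keypoint: if the first rotated pixel pp is darker than the second one qq, set the corresponding descriptor bit to 1, otherwise 0;

// 4. Append the keypoint's descriptor to the descriptor list.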

void ComputeORB(const cv::Mat &img, vector<cv::KeyPoint> &keypoints, vector<DescType> &descriptors) {

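const int half_patch_size = 8; // half side length of the patch used for the Oriented FAST intensity centroid, i.e. a 16x16 pixel region. Although 16 x 16 = 256, this is unrelated to the descriptor length

const int half_boundary = 16; // half side length of the image block used for the BRIEF descriptor, i.e. a 32x32 pixel block

// count the bad points

int bad_points = 0;

// a keypoint whose image block extends beyond the image is counted as a bad point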

for (auto &kp: keypoints) {

if (kp.pt.x < half_boundary || kp.pt.y < half_boundary ||

kp.pt.x >= img.cols - half_boundary || kp.pt.y >= img.rows - half_boundary) {

// outside

bad_points++;

descriptors.push_back({}); // empty placeholder keeps descriptors index-aligned with keypoints

continue;

}

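// compute the intensity centroid

float m01 = 0, m10 = 0;

// left to right over the patch centred on the keypoint (half side 8, 256 pixels in total)

for (int dx = -half_patch_size; dx < half_patch_size; ++dx) {

// top to bottom over the same patch

for (int dy = -half_patch_size; dy < half_patch_size; ++dy) {

// pixel position inside the patch: keypoint coordinates (x, y) plus offset (dx, dy)

// img.at takes (row, column), i.e. (y, x), not (x, y)

uchar pixel = img.at<uchar>(kp.pt.y + dy, kp.pt.x + dx);

// accumulate the first-order moment in x; note that the offset dx is used, not the absolute x coordinate

m10 += dx * pixel;

// accumulate the first-order moment in y; note that the offset dy is used, not the absolute y coordinate

m01 += dy * pixel;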

}

}

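// the keypoint angle is arctan(m01 / m10)

// m_sqrt is the length of the vector (m10, m01), the hypotenuse of the right triangle formed by the keypoint and the centroid direction; dividing by it gives sin and cos directly, so the centroid position itself (moments divided by total intensity) is never needed

float m_sqrt = sqrt(m01 * m01 + m10 * m10) + 1e-18; // avoid divide by zero

float sin_theta = m01 / m_sqrt;

float cos_theta = m10 / m_sqrt;

// compute the descriptor of this keypoint

// 8 words of 32 bits each, initialised to 0; every descriptor is a 256-bit binary string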

DescType desc(8, 0);

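// process each 32-bit word

for (int i = 0; i < 8; i++) {

uint32_t d = 0;

// process each bit

for (int k = 0; k < 32; k++) {

// pick row idx_pq of the 256x4 pattern table as p(idx1, idx2), q(idx3, idx4); i and k simply count up, so every keypoint uses the same pairs in the same order, which is what makes descriptors comparable

int idx_pq = i * 32 + k;

// ORB_pattern holds the pre-selected point pairs; the p and q read here are not yet rotated

// this amounts to choosing the pairs p, q within [-13, 12] around the keypoint

cv::Point2f p(ORB_pattern[idx_pq * 4], ORB_pattern[idx_pq * 4 + 1]);

cv::Point2f q(ORB_pattern[idx_pq * 4 + 2], ORB_pattern[idx_pq * 4 + 3]);

// rotate with theta

// pp and qq are p and q rotated by the keypoint orientation and shifted to the keypoint; this is what gives ORB its rotation invariance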

cv::Point2f pp = cv::Point2f(cos_theta * p.x - sin_theta * p.y, sin_theta * p.x + cos_theta * p.y)

+ kp.pt;

cv::Point2f qq = cv::Point2f(cos_theta * q.x - sin_theta * q.y, sin_theta * q.x + cos_theta * q.y)

+ kp.pt;

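// compare the intensities at pp and qq to decide whether the bit is 1 or 0

if (img.at<uchar>(pp.y, pp.x) < img.at<uchar>(qq.y, qq.x)) {

d |= 1u << k; // unsigned literal, so shifting into bit 31 is well defined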

}

}

desc[i] = d;

}

descriptors.push_back(desc);

}

cout << "bad/total: " << bad_points << "/" << keypoints.size() << endl;

}
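The orientation and steering steps of ComputeORB can be tried in isolation. The following is a minimal sketch that assumes a plain row-major grayscale buffer instead of cv::Mat; `GrayImage`, `Direction`, `Offset`, `patchDirection` and `steer` are hypothetical names introduced for illustration only.

```cpp
#include <cassert>
#include <cmath>
#include <cstdint>
#include <vector>

// Hypothetical row-major grayscale image, standing in for cv::Mat.
struct GrayImage {
    int rows, cols;
    std::vector<std::uint8_t> data;
    std::uint8_t at(int y, int x) const { return data[y * cols + x]; }
};

struct Direction { float cos_theta, sin_theta; };
struct Offset { float x, y; };

// Intensity-centroid direction of the (2*half) x (2*half) patch centred on (cx, cy).
// The caller must make sure the patch lies inside the image.
Direction patchDirection(const GrayImage &img, int cx, int cy, int half) {
    assert(cx - half >= 0 && cy - half >= 0);
    assert(cx + half <= img.cols && cy + half <= img.rows);
    float m10 = 0.f, m01 = 0.f;
    for (int dx = -half; dx < half; ++dx) {
        for (int dy = -half; dy < half; ++dy) {
            float pixel = img.at(cy + dy, cx + dx);  // (row, column) order
            m10 += dx * pixel;  // first-order moment in x, using the offset
            m01 += dy * pixel;  // first-order moment in y, using the offset
        }
    }
    float norm = std::sqrt(m10 * m10 + m01 * m01) + 1e-18f;  // avoid divide by zero
    return {m10 / norm, m01 / norm};
}

// Rotate a pattern offset (px, py) by the patch direction.
Offset steer(const Direction &d, float px, float py) {
    return {d.cos_theta * px - d.sin_theta * py,
            d.sin_theta * px + d.cos_theta * py};
}
```

For a 32x32 image that is dark on the left half and bright on the right half, `patchDirection(img, 16, 16, 8)` points mostly along +x, and `steer(d, 1, 0)` returns (cos_theta, sin_theta). Because the loops run from -half to half - 1, the patch is not perfectly symmetric, so a small y component remains even for this input.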

// brute-force matching

// match the features of the two descriptor sets

void BfMatch(const vector<DescType> &desc1, const vector<DescType> &desc2, vector<cv::DMatch> &matches) {

const int d_max = 40;

// for each i1, find the i2 with the smallest distance

for (size_t i1 = 0; i1 < desc1.size(); ++i1) {

if (desc1[i1].empty()) continue;

// cv::DMatch holds queryIdx (index of feature 1), trainIdx (index of the matching feature 2) and distance (distance between the two)

cv::DMatch m{static_cast<int>(i1), 0, 256};

for (size_t i2 = 0; i2 < desc2.size(); ++i2) {

if (desc2[i2].empty()) continue;

int distance = 0;

for (int k = 0; k < 8; k++) {

// count the bits that differ between the two descriptors

distance += _mm_popcnt_u32(desc1[i1][k] ^ desc2[i2][k]);

}

// keep the smallest distance and the corresponding index in desc2

if (distance < d_max && distance < m.distance) {

m.distance = distance;

m.trainIdx = i2;

}

}

if (m.distance < d_max) {

matches.push_back(m);

}

}

}
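BfMatch relies on `_mm_popcnt_u32`, which needs a CPU and compiler flags with POPCNT support (with GCC this typically means building with something like `-msse4.2`). As a portable alternative, the sketch below computes the same Hamming distance with `std::bitset::count` and searches for the nearest descriptor under a threshold. `hammingDistance` and `nearestIndex` are hypothetical helper names, not part of the original code, and this is only one possible way to write it.

```cpp
#include <bitset>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

using DescType = std::vector<std::uint32_t>;  // 8 x 32 bits, as in the post

// Number of differing bits between two descriptors of equal length.
int hammingDistance(const DescType &a, const DescType &b) {
    assert(a.size() == b.size());
    int distance = 0;
    for (std::size_t k = 0; k < a.size(); ++k) {
        // XOR leaves a 1 wherever the two words differ; count() sums those bits.
        distance += static_cast<int>(std::bitset<32>(a[k] ^ b[k]).count());
    }
    return distance;
}

// Index of the candidate closest to query, or -1 if none is closer than d_max.
// Empty candidates (placeholders for skipped keypoints) are ignored.
int nearestIndex(const DescType &query, const std::vector<DescType> &candidates, int d_max) {
    int best = -1;
    int best_distance = d_max;
    for (std::size_t i = 0; i < candidates.size(); ++i) {
        if (candidates[i].empty()) continue;
        int d = hammingDistance(query, candidates[i]);
        if (d < best_distance) {
            best_distance = d;
            best = static_cast<int>(i);
        }
    }
    return best;
}
```

An all-zero descriptor compared with one whose first word is 0x7 gives a distance of 3, and compared with an all-ones descriptor gives 256. With d_max = 40, as in BfMatch, only the first of these would be accepted as a match.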

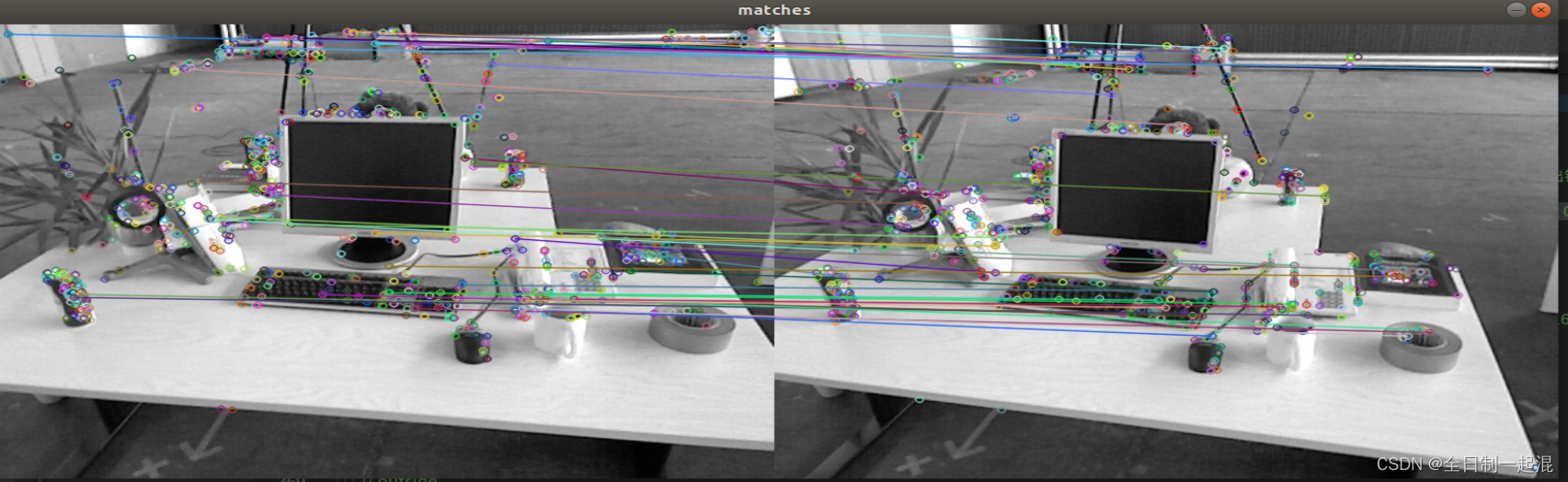

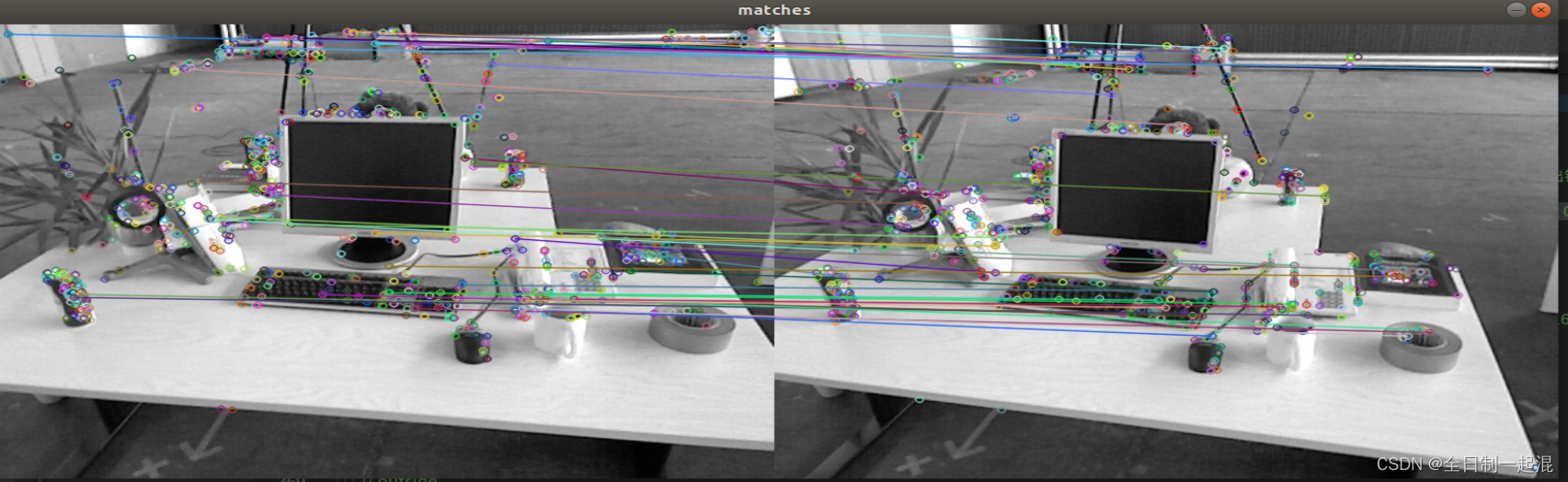

Result: