Introduction to ConcurrentHashMap

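ConcurrentHashMap is a high-performance, thread-safe counterpart of HashMap. In JDK 1.8 its underlying data structure is an array plus linked lists plus red-black trees, the same layout HashMap uses.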

How does ConcurrentHashMap differ between JDK 1.7 and 1.8?

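| Aspect | 1.7 | 1.8 | Notes |
| --- | --- | --- | --- |
| Thread safety | Yes | Yes | |
| Data structure | Array + linked list | Array + linked list / red-black tree | |
| Concurrency implementation | ReentrantLock + Segment + HashEntry | CAS (with spin retries) + synchronized on the bin's head node + Node + red-black tree | 1.8 no longer uses segment locks |
| Locking | Segment locks; the default concurrency level is 16, and the Segment array size is fixed after initialization and cannot grow | CAS + synchronized guarantee thread safety | |
| Lock release | unlock() must be called | No explicit unlock is needed | |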
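To make the "thread safety" and "no explicit unlock" rows concrete, here is a minimal sketch, assuming a JDK 8 or later runtime. The class name `ConcurrentCounterSketch`, the key `"hits"`, and the workload are hypothetical and exist only for illustration. Several threads increment one key through `merge`, and no caller-side locking is involved.

```java
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.TimeUnit;

// Hypothetical demo class; names and workload are illustrative only.
class ConcurrentCounterSketch {

    // Starts `threads` workers that each increment the same key `perThread`
    // times, then returns the final count stored in the map.
    static int countConcurrently(int threads, int perThread) {
        ConcurrentHashMap<String, Integer> map = new ConcurrentHashMap<>();
        ExecutorService pool = Executors.newFixedThreadPool(threads);
        for (int t = 0; t < threads; t++) {
            pool.execute(() -> {
                for (int i = 0; i < perThread; i++) {
                    // merge is atomic per key; the caller never locks or unlocks
                    map.merge("hits", 1, Integer::sum);
                }
            });
        }
        pool.shutdown();
        try {
            if (!pool.awaitTermination(30, TimeUnit.SECONDS)) {
                throw new IllegalStateException("workers did not finish in time");
            }
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException(e);
        }
        return map.getOrDefault("hits", 0);
    }

    public static void main(String[] args) {
        // 8 threads * 10_000 increments each
        System.out.println(countConcurrently(8, 10_000));
    }
}
```

Because every increment goes through a single atomic `merge` call, the final count equals threads times increments. A plain `get` followed by `put` would not give that guarantee, even on a ConcurrentHashMap.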

Why do we need ConcurrentHashMap when Hashtable already exists?

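ConcurrentHashMap was introduced mainly to replace the much-maligned Hashtable. Hashtable is thread-safe, but its methods are declared synchronized, so every operation locks the entire table instance. Under high concurrency this performs very poorly, and according to the author it has caused serious incidents in some production systems. ConcurrentHashMap was designed for scenarios that need high concurrency, high throughput, and thread safety at the same time.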
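The whole-table locking of Hashtable can be observed directly. The sketch below is a hedged illustration, not a benchmark: the class name `CoarseLockSketch`, the key `"k"`, and the one-second timeout are assumptions made for the demo. The calling thread holds the map's own monitor (which is what a thread inside any synchronized Hashtable method does) and checks whether a reader can still complete a `get`.

```java
import java.util.Hashtable;
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;

// Hypothetical demo class; the timeout is an arbitrary, generous choice.
class CoarseLockSketch {

    // Returns true if a reader thread completes map.get(...) while the
    // calling thread is holding the map object's monitor.
    static boolean readerFinishesWhileMonitorHeld(Map<String, Integer> map) {
        map.put("k", 1);
        Thread reader = new Thread(() -> map.get("k"));
        reader.setDaemon(true);
        synchronized (map) {
            reader.start();
            try {
                reader.join(1000); // wait up to one second for the reader
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
            }
            // Still inside the synchronized block, so the monitor is held here
            return !reader.isAlive();
        }
    }

    public static void main(String[] args) {
        System.out.println("Hashtable reader finished: "
                + readerFinishesWhileMonitorHeld(new Hashtable<>()));
        System.out.println("ConcurrentHashMap reader finished: "
                + readerFinishesWhileMonitorHeld(new ConcurrentHashMap<>()));
    }
}
```

With Hashtable the reader stays blocked because `get` is a synchronized method on the same instance. With ConcurrentHashMap the reader is expected to finish well within the timeout, since `get` takes no lock at all.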

Basic usage of ConcurrentHashMap

```java
import java.util.concurrent.ConcurrentHashMap;

public class ConcurrentHashMapStudy {

    public static void main(String[] args) {
        ConcurrentHashMap<String, Integer> map = new ConcurrentHashMap<>(32);
        map.put("a", 1);
        map.put("b", 2);
        map.put("c", 3);
        System.out.println(map.toString());

        // Not inserted: the key already exists, so the existing value is returned
        Object a = map.putIfAbsent("a", 2);
        System.out.println(a);

        // Inserted: returns null
        Object d = map.putIfAbsent("d", 5);
        System.out.println(d);

        System.out.println(map.toString());
    }
}
```

Result

```
{a=1, b=2, c=3}
1
null
{a=1, b=2, c=3, d=5}
```

For the remaining APIs, please look them up yourself.
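As a starting point for exploring the other APIs, here is a short sketch of a few atomic compound operations that ConcurrentHashMap provides in JDK 8 and later. The class name `CompoundOpsSketch` and the keys and values are hypothetical. Each call runs atomically for its key.

```java
import java.util.concurrent.ConcurrentHashMap;

// Hypothetical demo class; keys and values are arbitrary.
class CompoundOpsSketch {

    // Applies a sequence of atomic per-key operations and returns the map.
    static ConcurrentHashMap<String, Integer> demo() {
        ConcurrentHashMap<String, Integer> map = new ConcurrentHashMap<>();
        map.put("a", 1);
        map.computeIfAbsent("b", k -> 2);          // "b" absent: inserts b=2
        map.computeIfAbsent("a", k -> 99);         // "a" present: no change
        map.merge("a", 10, Integer::sum);          // a = 1 + 10 = 11
        map.computeIfPresent("b", (k, v) -> null); // null result removes "b"
        map.replace("a", 11, 12);                  // value matches 11: a = 12
        map.remove("a", 0);                        // value is not 0: no change
        return map;
    }

    // Null keys and null values are rejected with NullPointerException.
    static boolean rejectsNullValue() {
        try {
            new ConcurrentHashMap<String, Integer>().put("x", null);
            return false;
        } catch (NullPointerException expected) {
            return true;
        }
    }

    public static void main(String[] args) {
        ConcurrentHashMap<String, Integer> map = demo();
        System.out.println(map);                          // {a=12}
        System.out.println(map.getOrDefault("zzz", -1));  // -1
        System.out.println(rejectsNullValue());           // true
    }
}
```

These methods matter because a hand-written check-then-act sequence such as `containsKey` followed by `put` is not atomic, even though each individual call is thread-safe.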

Source code walkthrough

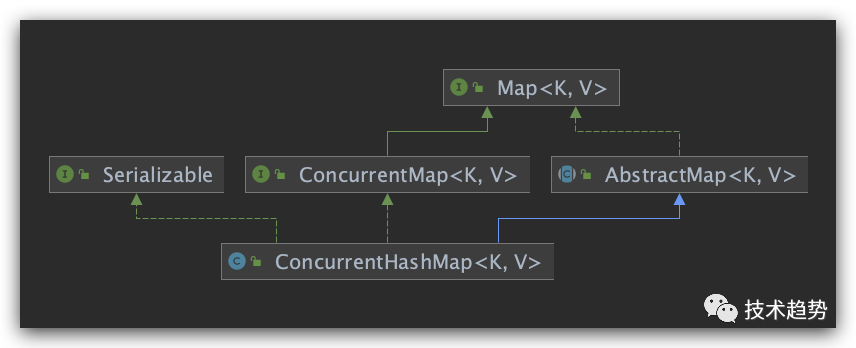

ConcurrentHashMap implements the ConcurrentMap and Serializable interfaces and extends AbstractMap.

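| Field | Purpose | Notes |
| --- | --- | --- |
| MAXIMUM_CAPACITY | Maximum capacity of the hash table array | Used for bounds checks (1 << 30) |
| DEFAULT_CAPACITY | Default table capacity (a power of two) | Initialized to 16 |
| MAX_ARRAY_SIZE | Largest possible array size (not a power of two); needed by toArray and related methods | Initialized to Integer.MAX_VALUE - 8 |
| LOAD_FACTOR | Load factor | 0.75 by default |
| TREEIFY_THRESHOLD | Treeify threshold (a bin that reaches 8 nodes becomes a red-black tree) | Converts a linked list into a red-black tree |
| UNTREEIFY_THRESHOLD | Threshold for converting a tree back into a linked list | 6 nodes or fewer converts back to a list |
| MIN_TREEIFY_CAPACITY | Minimum table capacity at which bins may be treeified | 64; smaller tables are resized instead |
| MIN_TRANSFER_STRIDE | Minimum number of bins a thread migrates per transfer step | 16 by default |
| RESIZE_STAMP_BITS | Number of bits used for the resize stamp in sizeCtl | Fixed at 16; used during resizing |
| MAX_RESIZERS | Maximum number of threads that can help with a resize | (1 << (32 - RESIZE_STAMP_BITS)) - 1 |
| RESIZE_STAMP_SHIFT | Bit shift for recording the resize stamp in sizeCtl | 32 - RESIZE_STAMP_BITS; used in resize checks and calculations |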
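The following sketch works through how some of these constants interact. It copies the small static helpers (`tableSizeFor`, `spread`, `resizeStamp`) and the sizing expression of the single-argument constructor from the JDK 8 source this article walks through. The wrapper class `TableSizingSketch` and the helper names `initialTableSize`, `resizeThreshold`, and `binIndex` are hypothetical, added only so the arithmetic can be run in isolation.

```java
// Hypothetical stand-alone class; the helper bodies mirror the JDK 8 source.
class TableSizingSketch {
    static final int MAXIMUM_CAPACITY = 1 << 30;
    static final int HASH_BITS = 0x7fffffff;
    static final int RESIZE_STAMP_BITS = 16;
    static final int RESIZE_STAMP_SHIFT = 32 - RESIZE_STAMP_BITS;

    // Smallest power of two >= c, capped at MAXIMUM_CAPACITY.
    static int tableSizeFor(int c) {
        int n = c - 1;
        n |= n >>> 1;
        n |= n >>> 2;
        n |= n >>> 4;
        n |= n >>> 8;
        n |= n >>> 16;
        return (n < 0) ? 1 : (n >= MAXIMUM_CAPACITY) ? MAXIMUM_CAPACITY : n + 1;
    }

    // Mixes the high 16 bits into the low 16 bits and clears the sign bit.
    static int spread(int h) {
        return (h ^ (h >>> 16)) & HASH_BITS;
    }

    // Table size chosen by new ConcurrentHashMap(initialCapacity) in JDK 8.
    static int initialTableSize(int initialCapacity) {
        return (initialCapacity >= (MAXIMUM_CAPACITY >>> 1))
                ? MAXIMUM_CAPACITY
                : tableSizeFor(initialCapacity + (initialCapacity >>> 1) + 1);
    }

    // Element count at which a table of length n is resized (0.75 * n).
    static int resizeThreshold(int n) {
        return n - (n >>> 2);
    }

    // Index of the bin a key falls into for a table of length n.
    static int binIndex(Object key, int n) {
        return (n - 1) & spread(key.hashCode());
    }

    // Stamp identifying a resize of a table of length n.
    static int resizeStamp(int n) {
        return Integer.numberOfLeadingZeros(n) | (1 << (RESIZE_STAMP_BITS - 1));
    }

    public static void main(String[] args) {
        System.out.println(initialTableSize(32)); // 64: 32 + 16 + 1 = 49, rounded up
        System.out.println(resizeThreshold(64));  // 48
        System.out.println(binIndex("a", 64));    // 33: "a".hashCode() is 97
        System.out.println(resizeStamp(16));      // 32795: 27 | (1 << 15)
        // Shifting the stamp into the high bits makes sizeCtl negative while resizing
        System.out.println((resizeStamp(16) << RESIZE_STAMP_SHIFT) + 2 < 0); // true
    }
}
```

Note that `new ConcurrentHashMap(32)` in the usage example therefore allocates a 64-slot table rather than a 32-slot one, which differs from HashMap's sizing.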

Detailed source implementation:

// Thread-safe Map implementation; extends AbstractMap (as HashMap does)

public class ConcurrentHashMap<K,V> extends AbstractMap<K,V>

implements ConcurrentMap<K,V>, Serializable {

private static final long serialVersionUID = 7249069246763182397L;

/* ---------------- Constants -------------- */

// Maximum capacity of the hash table array

private static final int MAXIMUM_CAPACITY = 1 << 30;

// Default table capacity (must be a power of two)

private static final int DEFAULT_CAPACITY = 16;

// Largest possible array size (not a power of two); needed by toArray and related methods

static final int MAX_ARRAY_SIZE = Integer.MAX_VALUE - 8;

// Default concurrency level; unused here, kept for compatibility with earlier versions (a JDK 1.7 leftover)

private static final int DEFAULT_CONCURRENCY_LEVEL = 16;

// Load factor

private static final float LOAD_FACTOR = 0.75f;

// Treeify threshold: a bin's linked list is converted to a red-black tree once it reaches 8 nodes

static final int TREEIFY_THRESHOLD = 8;

// Untreeify threshold: a tree bin with 6 or fewer nodes is converted back to a linked list

static final int UNTREEIFY_THRESHOLD = 6;

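// Minimum table capacity for treeification: bins are only treeified once the table has at least 64 slots; smaller tables are resized instead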

static final int MIN_TREEIFY_CAPACITY = 64;

// Minimum transfer stride: the minimum number of bins each thread migrates per transfer step

private static final int MIN_TRANSFER_STRIDE = 16;

// Number of bits used for the resize stamp stored in sizeCtl (fixed at 16)

private static int RESIZE_STAMP_BITS = 16;

// Maximum number of threads that can help with a resize: (1 << (32 - 16)) - 1

private static final int MAX_RESIZERS = (1 << (32 - RESIZE_STAMP_BITS)) - 1;

// Bit shift for recording the resize stamp in sizeCtl

private static final int RESIZE_STAMP_SHIFT = 32 - RESIZE_STAMP_BITS;

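static final int MOVED = -1; // hash of a forwarding node (the bin is being transferred)

static final int TREEBIN = -2; // hash of the root of a tree bin

static final int RESERVED = -3; // hash of a transient reservation node

static final int HASH_BITS = 0x7fffffff; // usable bits of a normal node hash (clears the sign bit, so list nodes have hash >= 0)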

// Number of CPUs

static final int NCPU = Runtime.getRuntime().availableProcessors();

// For serialization compatibility

private static final ObjectStreamField[] serialPersistentFields = {

new ObjectStreamField("segments", Segment[].class),

new ObjectStreamField("segmentMask", Integer.TYPE),

new ObjectStreamField("segmentShift", Integer.TYPE)

};

/* ---------------- Nodes -------------- */

// Node: a key-value entry

static class Node<K,V> implements Map.Entry<K,V> {

// Hash value (immutable)

final int hash;

// Key (immutable)

final K key;

// Value; volatile so updates are visible across threads

volatile V val;

// Next node in the bin

volatile Node<K,V> next;

// Constructor

Node(int hash, K key, V val, Node<K,V> next) {

this.hash = hash;

this.key = key;

this.val = val;

this.next = next;

}

// Returns the key

public final K getKey() { return key; }

// Returns the value

public final V getValue() { return val; }

// Returns the entry's hash code (key hash XOR value hash)

public final int hashCode() { return key.hashCode() ^ val.hashCode(); }

// String form: key=value

public final String toString(){ return key + "=" + val; }

// Setting the value through the entry is not supported; always throws

public final V setValue(V value) {

throw new UnsupportedOperationException();

}

// Equality check

public final boolean equals(Object o) {

Object k, v, u; Map.Entry<?,?> e;

// Equal only if o is a Map.Entry with an equal key and an equal value

return ((o instanceof Map.Entry) &&

(k = (e = (Map.Entry<?,?>)o).getKey()) != null &&

(v = e.getValue()) != null &&

(k == key || k.equals(key)) &&

(v == (u = val) || v.equals(u)));

}

// Looks up a node in this bin; h is the spread hash of k

Node<K,V> find(int h, Object k) {

// Start from the current node

Node<K,V> e = this;

// If k is null, skip the search and return null

if (k != null) {

// Walk the list from this node to the tail

do {

K ek;

if (e.hash == h &&

((ek = e.key) == k || (ek != null && k.equals(ek))))

return e;

} while ((e = e.next) != null);

}

return null;

}

}

/* ---------------- Static utilities -------------- */

// Spreads the higher bits of the hash into the lower bits and clears the sign bit

static final int spread(int h) {

return (h ^ (h >>> 16)) & HASH_BITS;

}

// Returns the smallest power of two >= c (capped at MAXIMUM_CAPACITY); used when sizing the table

private static final int tableSizeFor(int c) {

int n = c - 1;

n |= n >>> 1;

n |= n >>> 2;

n |= n >>> 4;

n |= n >>> 8;

n |= n >>> 16;

return (n < 0) ? 1 : (n >= MAXIMUM_CAPACITY) ? MAXIMUM_CAPACITY : n + 1;

}

// Returns x's Class if x is of the form "class C implements Comparable<C>", otherwise null

static Class<?> comparableClassFor(Object x) {

// Only Comparable instances qualify

if (x instanceof Comparable) {

Class<?> c; Type[] ts, as; Type t; ParameterizedType p;

if ((c = x.getClass()) == String.class) // bypass checks

return c;

if ((ts = c.getGenericInterfaces()) != null) {

for (int i = 0; i < ts.length; ++i) {

if (((t = ts[i]) instanceof ParameterizedType) &&

((p = (ParameterizedType)t).getRawType() ==

Comparable.class) &&

(as = p.getActualTypeArguments()) != null &&

as.length == 1 && as[0] == c) // type arg is c

return c;

}

}

}

return null;

}

// Returns k.compareTo(x) if x is of k's comparable class kc, otherwise 0

@SuppressWarnings({"rawtypes","unchecked"}) // for cast to Comparable

static int compareComparables(Class<?> kc, Object k, Object x) {

return (x == null || x.getClass() != kc ? 0 :

((Comparable)k).compareTo(x));

}

// Volatile read of the element at index i via Unsafe

@SuppressWarnings("unchecked")

static final <K,V> Node<K,V> tabAt(Node<K,V>[] tab, int i) {

return (Node<K,V>)U.getObjectVolatile(tab, ((long)i << ASHIFT) + ABASE);

}

// CAS on the element at index i: stores v only if the slot still holds c

static final <K,V> boolean casTabAt(Node<K,V>[] tab, int i,

Node<K,V> c, Node<K,V> v) {

return U.compareAndSwapObject(tab, ((long)i << ASHIFT) + ABASE, c, v);

}

// Volatile write of v to index i

static final <K,V> void setTabAt(Node<K,V>[] tab, int i, Node<K,V> v) {

U.putObjectVolatile(tab, ((long)i << ASHIFT) + ABASE, v);

}

// The array of bins that stores the data; lazily initialized

transient volatile Node<K,V>[] table;

// The next table to use; non-null only while resizing

private transient volatile Node<K,V>[] nextTable;

// Base counter value for the element count, used mainly when there is no contention

private transient volatile long baseCount;

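// Table initialization and resizing control: -1 while the table is being initialized, another negative value while resizing; before initialization it holds the initial table size (0 means default); after initialization it holds the next resize threshold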

private transient volatile int sizeCtl;

/**

* The next table index (plus one) to split while resizing

*/

private transient volatile int transferIndex;

/**

* Spinlock (acquired via CAS) used when resizing and/or creating CounterCells for size counting

*/

private transient volatile int cellsBusy;

// Table of counter cells used to compute size under contention

private transient volatile CounterCell[] counterCells;

// Cached key set view

private transient KeySetView<K,V> keySet;

// Cached values view

private transient ValuesView<K,V> values;

// Cached entry set view

private transient EntrySetView<K,V> entrySet;

// No-argument constructor: creates an empty map with the default initial table size (16)

public ConcurrentHashMap() {

}

// Constructor with an initial capacity

public ConcurrentHashMap(int initialCapacity) {

// A negative capacity throws

if (initialCapacity < 0)

throw new IllegalArgumentException();

int cap = ((initialCapacity >= (MAXIMUM_CAPACITY >>> 1)) ?

MAXIMUM_CAPACITY :

tableSizeFor(initialCapacity + (initialCapacity >>> 1) + 1));

this.sizeCtl = cap;

}

// Constructor that copies the mappings of the given map

public ConcurrentHashMap(Map<? extends K, ? extends V> m) {

this.sizeCtl = DEFAULT_CAPACITY;

putAll(m);

}

// Constructor

// initialCapacity: the initial capacity

// loadFactor: the load factor

public ConcurrentHashMap(int initialCapacity, float loadFactor) {

this(initialCapacity, loadFactor, 1);

}

// Constructor

// initialCapacity: the initial capacity

// loadFactor: the load factor

// concurrencyLevel: the estimated number of concurrently updating threads

public ConcurrentHashMap(int initialCapacity,

float loadFactor, int concurrencyLevel) {

// Throws if loadFactor is not positive, initialCapacity is negative, or concurrencyLevel is not positive

if (!(loadFactor > 0.0f) || initialCapacity < 0 || concurrencyLevel <= 0)

throw new IllegalArgumentException();

// If the initial capacity is below the concurrency level, raise it to the concurrency level

if (initialCapacity < concurrencyLevel) // Use at least as many bins

initialCapacity = concurrencyLevel; // as estimated threads

// size = 1 + initialCapacity / loadFactor

long size = (long)(1.0 + (long)initialCapacity / loadFactor);

// Use MAXIMUM_CAPACITY if size reaches it; otherwise round size up to a power of two

int cap = (size >= (long)MAXIMUM_CAPACITY) ?

MAXIMUM_CAPACITY : tableSizeFor((int)size);

// Store the computed capacity in sizeCtl

this.sizeCtl = cap;

}

// Returns the number of mappings (clamped to Integer.MAX_VALUE)

public int size() {

long n = sumCount();

return ((n < 0L) ? 0 :

(n > (long)Integer.MAX_VALUE) ? Integer.MAX_VALUE :

(int)n);

}

// Returns true if the map contains no mappings

public boolean isEmpty() {

return sumCount() <= 0L; // ignore transient negative values

}

// Returns the value mapped to the key, or null

public V get(Object key) {

Node<K,V>[] tab; Node<K,V> e, p; int n, eh; K ek;

// Compute the spread hash of the key

int h = spread(key.hashCode());

// The table is non-null and non-empty, and the bin at the computed index is non-empty

if ((tab = table) != null && (n = tab.length) > 0 &&

(e = tabAt(tab, (n - 1) & h)) != null) {

// The head node's hash equals the computed hash

if ((eh = e.hash) == h) {

// Same key: return its value

if ((ek = e.key) == key || (ek != null && key.equals(ek)))

return e.val;

}

// Negative hash: a special node (a forwarding node during a resize, a tree bin, or a reservation node)

else if (eh < 0)

// Delegate to the node's find method; return the value if a node is found, otherwise null

return (p = e.find(h, key)) != null ? p.val : null;

// Otherwise walk the rest of the list and return the value of the matching key

while ((e = e.next) != null) {

if (e.hash == h &&

((ek = e.key) == key || (ek != null && key.equals(ek))))

return e.val;

}

}

// Nothing matched: return null

return null;

}

// Tests whether the map contains the key

public boolean containsKey(Object key) {

return get(key) != null;

}

// Tests whether any key maps to the given value

public boolean containsValue(Object value) {

// A null value throws

if (value == null)

throw new NullPointerException();

// Local reference to the table

Node<K,V>[] t;

// If the table is non-null

if ((t = table) != null) {

// Create a Traverser over the table

Traverser<K,V> it = new Traverser<K,V>(t, t.length, 0, t.length);

// Iterate; return true on the first equal value

for (Node<K,V> p; (p = it.advance()) != null; ) {

V v;

if ((v = p.val) == value || (v != null && value.equals(v)))

return true;

}

}

// Not found: return false

return false;

}

// Adds a mapping

public V put(K key, V value) {

return putVal(key, value, false);

}

// The actual implementation behind put and putIfAbsent

final V putVal(K key, V value, boolean onlyIfAbsent) {

// A null key or a null value throws

if (key == null || value == null) throw new NullPointerException();

// Compute the hash

int hash = spread(key.hashCode());

// Number of nodes seen in the bin (used to decide on treeification)

int binCount = 0;

for (Node<K,V>[] tab = table;;) {

Node<K,V> f; int n, i, fh;

// The table is null or empty: initialize it

if (tab == null || (n = tab.length) == 0)

tab = initTable();

// The target bin is empty

else if ((f = tabAt(tab, i = (n - 1) & hash)) == null) {

// Insert the new node with CAS

if (casTabAt(tab, i, null,

new Node<K,V>(hash, key, value, null)))

break; // no lock when adding to empty bin

}

// The bin has been moved, so a resize is in progress: go and help with it

else if ((fh = f.hash) == MOVED)

// Help with the transfer and continue with the new table

tab = helpTransfer(tab, f);

else {

// Previous value, initially null

V oldVal = null;

// Lock the bin's head node

synchronized (f) {

// Re-read the head and check that it has not changed

if (tabAt(tab, i) == f) {

// Non-negative hash: the bin is a linked list, not a red-black tree (tree bins have hash -2)

if (fh >= 0) {

binCount = 1;

// Walk the list: if the key is found, update its value (unless onlyIfAbsent); otherwise append a new node at the tail

for (Node<K,V> e = f;; ++binCount) {

K ek;

if (e.hash == hash &&

((ek = e.key) == key ||

(ek != null && key.equals(ek)))) {

oldVal = e.val;

if (!onlyIfAbsent)

e.val = value;

break;

}

Node<K,V> pred = e;

if ((e = e.next) == null) {

pred.next = new Node<K,V>(hash, key,

value, null);

break;

}

}

}

// The bin is a red-black tree

else if (f instanceof TreeBin) {

Node<K,V> p;

binCount = 2;

// Insert into the red-black tree

if ((p = ((TreeBin<K,V>)f).putTreeVal(hash, key,

value)) != null) {

oldVal = p.val;

if (!onlyIfAbsent)

p.val = value;

}

}

}

}

if (binCount != 0) {

// Treeify the bin once it holds 8 or more nodes

if (binCount >= TREEIFY_THRESHOLD)

treeifyBin(tab, i);

if (oldVal != null)

return oldVal;

break;

}

}

}

// Increment the element count (this may also trigger a resize)

addCount(1L, binCount);

// No previous mapping: return null

return null;

}

// Copies all mappings from the given map into this one

public void putAll(Map<? extends K, ? extends V> m) {

// Try to presize the table

tryPresize(m.size());

// Insert each entry

for (Map.Entry<? extends K, ? extends V> e : m.entrySet())

putVal(e.getKey(), e.getValue(), false);

}

// Removes the key and its value from the map

public V remove(Object key) {

return replaceNode(key, null, null);

}

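// Shared implementation for remove and replace: sets the node's value to value (or removes the node when value is null), provided cv is null or equals the current value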

final V replaceNode(Object key, V value, Object cv) {

// Compute the hash

int hash = spread(key.hashCode());

// Loop to find the node

for (Node<K,V>[] tab = table;;) {

Node<K,V> f; int n, i, fh;

// The table or the target bin is empty: nothing to do

if (tab == null || (n = tab.length) == 0 ||

(f = tabAt(tab, i = (n - 1) & hash)) == null)

break;

// A resize is in progress and this bin has already been moved

else if ((fh = f.hash) == MOVED)

// Help with the transfer and continue with the new table

tab = helpTransfer(tab, f);

else {

// Previous value

V oldVal = null;

// Whether the bin was actually examined under the lock

boolean validated = false;

// Lock the bin's head node (an object monitor)

synchronized (f) {

// Re-read the head and check it is unchanged (it may have been moved or replaced)

if (tabAt(tab, i) == f) {

// Non-negative hash: not a red-black tree

if (fh >= 0) {

// Mark as validated

validated = true;

// Walk the list

for (Node<K,V> e = f, pred = null;;) {

K ek;

// Hash and key both match

if (e.hash == hash &&

((ek = e.key) == key ||

(ek != null && key.equals(ek)))) {

// Current value of e

V ev = e.val;

// Proceed if cv is null or cv equals ev

if (cv == null || cv == ev ||

(ev != null && cv.equals(ev))) {

// Record the old value

oldVal = ev;

if (value != null)

// Replace the value

e.val = value;

else if (pred != null)

// Removal: unlink e from its predecessor

pred.next = e.next;

else

// Removal of the head: make its successor the new head of the bin

setTabAt(tab, i, e.next);

}

// Done

break;

}

// Remember the predecessor before advancing

pred = e;

// End of the list: stop

if ((e = e.next) == null)

break;

}

}

// The bin is a red-black tree

else if (f instanceof TreeBin) {

// Mark as validated

validated = true;

// Cast to TreeBin

TreeBin<K,V> t = (TreeBin<K,V>)f;

TreeNode<K,V> r, p;

// The root is non-null and the node is found in the tree

if ((r = t.root) != null &&

(p = r.findTreeNode(hash, key, null)) != null) {

// Current value of p

V pv = p.val;

// Proceed if cv is null or cv equals pv

if (cv == null || cv == pv ||

(pv != null && cv.equals(pv))) {

// Record the old value

oldVal = pv;

// Non-null value: replace

if (value != null)

p.val = value;

// Null value: remove the node; removeTreeNode returns true if the tree has become too small

else if (t.removeTreeNode(p))

// In that case, convert the bin back to a linked list

setTabAt(tab, i, untreeify(t.first));

}

}

}

}

}

// If the bin was validated

if (validated) {

// And an old value was found

if (oldVal != null) {

// A null value means removal: decrement the count by 1

if (value == null)

addCount(-1L, -1);

// Return the old value

return oldVal;

}

// Done

break;

}

}

}

// Nothing replaced or removed: return null

return null;

}

// Removes all mappings

public void clear() {

long delta = 0L; // negative number of deletions

int i = 0;

// Current table

Node<K,V>[] tab = table;

// Clear bin by bin

while (tab != null && i < tab.length) {

int fh;

Node<K,V> f = tabAt(tab, i);

if (f == null)

++i;

else if ((fh = f.hash) == MOVED) {

tab = helpTransfer(tab, f);

i = 0; // restart

}

else {

synchronized (f) {

if (tabAt(tab, i) == f) {

Node<K,V> p = (fh >= 0 ? f :

(f instanceof TreeBin) ?

((TreeBin<K,V>)f).first : null);

while (p != null) {

--delta;

p = p.next;

}

setTabAt(tab, i++, null);

}

}

}

}

// Apply the accumulated (negative) delta to the count

if (delta != 0L)

addCount(delta, -1);

}

// Returns a Set view of the keys

public KeySetView<K,V> keySet() {

KeySetView<K,V> ks;

return (ks = keySet) != null ? ks : (keySet = new KeySetView<K,V>(this, null));

}

// Returns a Collection view of the values

public Collection<V> values() {

ValuesView<K,V> vs;

return (vs = values) != null ? vs : (values = new ValuesView<K,V>(this));

}

// Returns a Set view of the mappings

public Set<Map.Entry<K,V>> entrySet() {

EntrySetView<K,V> es;

return (es = entrySet) != null ? es : (entrySet = new EntrySetView<K,V>(this));

}

// Returns the hash code: the sum of key.hashCode() ^ value.hashCode() over all entries

public int hashCode() {

int h = 0;

Node<K,V>[] t;

if ((t = table) != null) {

Traverser<K,V> it = new Traverser<K,V>(t, t.length, 0, t.length);

for (Node<K,V> p; (p = it.advance()) != null; )

h += p.key.hashCode() ^ p.val.hashCode();

}

return h;

}

// Returns a string representation of the map

public String toString() {

Node<K,V>[] t;

int f = (t = table) == null ? 0 : t.length;

Traverser<K,V> it = new Traverser<K,V>(t, f, 0, f);

StringBuilder sb = new StringBuilder();

sb.append('{');

Node<K,V> p;

if ((p = it.advance()) != null) {

for (;;) {

K k = p.key;

V v = p.val;

sb.append(k == this ? "(this Map)" : k);

sb.append('=');

sb.append(v == this ? "(this Map)" : v);

if ((p = it.advance()) == null)

break;

sb.append(',').append(' ');

}

}

return sb.append('}').toString();

}

// Compares the given object with this map for equality

public boolean equals(Object o) {

// o is not this same instance

if (o != this) {

// o is not a Map: return false

if (!(o instanceof Map))

return false;

// Cast to Map

Map<?,?> m = (Map<?,?>) o;

Node<K,V>[] t;

// Table length

int f = (t = table) == null ? 0 : t.length;

// Create a traverser

Traverser<K,V> it = new Traverser<K,V>(t, f, 0, f);

// Every entry of this map must have an equal value in m

for (Node<K,V> p; (p = it.advance()) != null; ) {

V val = p.val;

Object v = m.get(p.key);

// If v is null or v is not equal to val, return false

if (v == null || (v != val && !v.equals(val)))

return false;

}

// And every entry of m must have an equal value in this map

for (Map.Entry<?,?> e : m.entrySet()) {

Object mk, mv, v;

if ((mk = e.getKey()) == null ||

(mv = e.getValue()) == null ||

(v = get(mk)) == null ||

(mv != v && !mv.equals(v)))

return false;

}

}

// No mismatch found: the maps are equal

return true;

}

// Stripped-down version of the helper class from earlier versions; kept for serialization compatibility

static class Segment<K,V> extends ReentrantLock implements Serializable {

private static final long serialVersionUID = 2249069246763182397L;

final float loadFactor;

Segment(float lf) { this.loadFactor = lf; }

}

// Serialization: writes the map to a stream; any IOException is propagated

private void writeObject(java.io.ObjectOutputStream s)

throws java.io.IOException {

// For serialization compatibility

// Emulate segment calculation from previous version of this class

int sshift = 0;

int ssize = 1;

while (ssize < DEFAULT_CONCURRENCY_LEVEL) {

++sshift;

ssize <<= 1;

}

int segmentShift = 32 - sshift;

int segmentMask = ssize - 1;

@SuppressWarnings("unchecked")

Segment<K,V>[] segments = (Segment<K,V>[])

new Segment<?,?>[DEFAULT_CONCURRENCY_LEVEL];

for (int i = 0; i < segments.length; ++i)

segments[i] = new Segment<K,V>(LOAD_FACTOR);

s.putFields().put("segments", segments);

s.putFields().put("segmentShift", segmentShift);

s.putFields().put("segmentMask", segmentMask);

s.writeFields();

Node<K,V>[] t;

if ((t = table) != null) {

Traverser<K,V> it = new Traverser<K,V>(t, t.length, 0, t.length);

for (Node<K,V> p; (p = it.advance()) != null; ) {

s.writeObject(p.key);

s.writeObject(p.val);

}

}

s.writeObject(null);

s.writeObject(null);

segments = null; // throw away

}

// Deserialization: rebuilds the map from a stream; any exception is propagated

private void readObject(java.io.ObjectInputStream s)

throws java.io.IOException, ClassNotFoundException {

/*

* To improve performance in typical cases, we create nodes

* while reading, then place in table once size is known.

* However, we must also validate uniqueness and deal with

* overpopulated bins while doing so, which requires

* specialized versions of putVal mechanics.

*/

sizeCtl = -1; // force exclusion for table construction

s.defaultReadObject();

long size = 0L;

Node<K,V> p = null;

for (;;) {

@SuppressWarnings("unchecked")

K k = (K) s.readObject();

@SuppressWarnings("unchecked")

V v = (V) s.readObject();

if (k != null && v != null) {

p = new Node<K,V>(spread(k.hashCode()), k, v, p);

++size;

}

else

break;

}

if (size == 0L)

sizeCtl = 0;

else {

int n;

if (size >= (long)(MAXIMUM_CAPACITY >>> 1))

n = MAXIMUM_CAPACITY;

else {

int sz = (int)size;

n = tableSizeFor(sz + (sz >>> 1) + 1);

}

@SuppressWarnings("unchecked")

Node<K,V>[] tab = (Node<K,V>[])new Node<?,?>[n];

int mask = n - 1;

long added = 0L;

while (p != null) {

boolean insertAtFront;

Node<K,V> next = p.next, first;

int h = p.hash, j = h & mask;

if ((first = tabAt(tab, j)) == null)

insertAtFront = true;

else {

K k = p.key;

if (first.hash < 0) {

TreeBin<K,V> t = (TreeBin<K,V>)first;

if (t.putTreeVal(h, k, p.val) == null)

++added;

insertAtFront = false;

}

else {

int binCount = 0;

insertAtFront = true;

Node<K,V> q; K qk;

for (q = first; q != null; q = q.next) {

if (q.hash == h &&

((qk = q.key) == k ||

(qk != null && k.equals(qk)))) {

insertAtFront = false;

break;

}

++binCount;

}

if (insertAtFront && binCount >= TREEIFY_THRESHOLD) {

insertAtFront = false;

++added;

p.next = first;

TreeNode<K,V> hd = null, tl = null;

for (q = p; q != null; q = q.next) {

TreeNode<K,V> t = new TreeNode<K,V>

(q.hash, q.key, q.val, null, null);

if ((t.prev = tl) == null)

hd = t;

else

tl.next = t;

tl = t;

}

setTabAt(tab, j, new TreeBin<K,V>(hd));

}

}

}

if (insertAtFront) {

++added;

p.next = first;

setTabAt(tab, j, p);

}

p = next;

}

table = tab;

sizeCtl = n - (n >>> 2);

baseCount = added;

}

}

// Inserts only if the key is absent: returns null on success, or the existing value if the key is already present

public V putIfAbsent(K key, V value) {

return putVal(key, value, true);

}

// Removes the entry for the key only if it is currently mapped to the given value; a null key throws NullPointerException

public boolean remove(Object key, Object value) {

if (key == null)

throw new NullPointerException();

return value != null && replaceNode(key, null, value) != null;

}

// Replaces the value for the key only if it is currently mapped to oldValue

public boolean replace(K key, V oldValue, V newValue) {

// A null key, oldValue, or newValue throws NullPointerException

if (key == null || oldValue == null || newValue == null)

throw new NullPointerException();

return replaceNode(key, newValue, oldValue) != null;

}

// Replaces the value for the key only if the key is currently mapped to some value

public V replace(K key, V value) {

// A null key or value throws

if (key == null || value == null)

throw new NullPointerException();

return replaceNode(key, value, null);

}

// Returns the value for the key, or the caller-supplied default value if there is no mapping

public V getOrDefault(Object key, V defaultValue) {

V v;

return (v = get(key)) == null ? defaultValue : v;

}

// Performs the given action for each entry

public void forEach(BiConsumer<? super K, ? super V> action) {

// A null action throws NullPointerException

if (action == null) throw new NullPointerException();

Node<K,V>[] t;

if ((t = table) != null) {

// Implemented with a Traverser

Traverser<K,V> it = new Traverser<K,V>(t, t.length, 0, t.length);

for (Node<K,V> p; (p = it.advance()) != null; ) {

action.accept(p.key, p.val);

}

}

}

// Replaces each entry's value with the result of applying the function to that entry

public void replaceAll(BiFunction<? super K, ? super V, ? extends V> function) {

// A null function throws

if (function == null) throw new NullPointerException();

Node<K,V>[] t;

if ((t = table) != null) {

Traverser<K,V> it = new Traverser<K,V>(t, t.length, 0, t.length);

for (Node<K,V> p; (p = it.advance()) != null; ) {

V oldValue = p.val;

for (K key = p.key;;) {

V newValue = function.apply(key, oldValue);

// A null new value throws NullPointerException

if (newValue == null)

throw new NullPointerException();

// Replace the value; retry until it succeeds or the key has been removed

if (replaceNode(key, newValue, oldValue) != null ||

(oldValue = get(key)) == null)

break;

}

}

}

}

// If the key is absent, uses the result of mappingFunction as its value (nothing is inserted when the result is null)

public V computeIfAbsent(K key, Function<? super K, ? extends V> mappingFunction) {

if (key == null || mappingFunction == null)

throw new NullPointerException();

int h = spread(key.hashCode());

V val = null;

int binCount = 0;

for (Node<K,V>[] tab = table;;) {

Node<K,V> f; int n, i, fh;

// Initialize the table

if (tab == null || (n = tab.length) == 0)

tab = initTable();

else if ((f = tabAt(tab, i = (n - 1) & h)) == null) {

Node<K,V> r = new ReservationNode<K,V>();

// Lock the reservation node before publishing it

synchronized (r) {

// CAS the reservation node into the empty bin

if (casTabAt(tab, i, null, r)) {

binCount = 1;

Node<K,V> node = null;

try {

// If the function returns a non-null value, create a new node

if ((val = mappingFunction.apply(key)) != null)

node = new Node<K,V>(h, key, val, null);

} finally {

// Finally store the new node (or null) in the bin, replacing the reservation

setTabAt(tab, i, node);

}

}

}

if (binCount != 0)

break;

}

// A transfer is in progress: help with it

else if ((fh = f.hash) == MOVED)

tab = helpTransfer(tab, f);

else {

boolean added = false;

// Lock the bin's head node

synchronized (f) {

// The head node is unchanged

if (tabAt(tab, i) == f) {

if (fh >= 0) {

binCount = 1;

for (Node<K,V> e = f;; ++binCount) {

K ek; V ev;

// The key is already present: keep its value and stop

if (e.hash == h &&

((ek = e.key) == key ||

(ek != null && key.equals(ek)))) {

val = e.val;

break;

}

Node<K,V> pred = e;

// Reached the tail without finding the key

if ((e = e.next) == null) {

// Use the result of mappingFunction as the value

if ((val = mappingFunction.apply(key)) != null) {

added = true;

pred.next = new Node<K,V>(h, key, val, null);

}

break;

}

}

}

// The bin is a red-black tree

else if (f instanceof TreeBin) {

binCount = 2;

TreeBin<K,V> t = (TreeBin<K,V>)f;

TreeNode<K,V> r, p;

if ((r = t.root) != null &&

(p = r.findTreeNode(h, key, null)) != null)

val = p.val;

else if ((val = mappingFunction.apply(key)) != null) {

added = true;

// Insert into the red-black tree

t.putTreeVal(h, key, val);

}

}

}

}

// Check whether the bin needs to be treeified

if (binCount != 0) {

if (binCount >= TREEIFY_THRESHOLD)

treeifyBin(tab, i);

if (!added)

return val;

break;

}

}

}

// A value was added: increment the count by 1

if (val != null)

addCount(1L, binCount);

return val;

}

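// Similar to the above: if the key is present, computes a new value from the key and its current value; a null result removes the entry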

public V computeIfPresent(K key, BiFunction<? super K, ? super V, ? extends V> remappingFunction) {

if (key == null || remappingFunction == null)

throw new NullPointerException();

int h = spread(key.hashCode());

V val = null;

int delta = 0;

int binCount = 0;

for (Node<K,V>[] tab = table;;) {

Node<K,V> f; int n, i, fh;

if (tab == null || (n = tab.length) == 0)

tab = initTable();

else if ((f = tabAt(tab, i = (n - 1) & h)) == null)

break;

else if ((fh = f.hash) == MOVED)

tab = helpTransfer(tab, f);

else {

synchronized (f) {

if (tabAt(tab, i) == f) {

if (fh >= 0) {

binCount = 1;

for (Node<K,V> e = f, pred = null;; ++binCount) {

K ek;

if (e.hash == h &&

((ek = e.key) == key ||

(ek != null && key.equals(ek)))) {

val = remappingFunction.apply(key, e.val);

if (val != null)

e.val = val;

else {

delta = -1;

Node<K,V> en = e.next;

if (pred != null)

pred.next = en;

else

setTabAt(tab, i, en);

}

break;

}

pred = e;

if ((e = e.next) == null)

break;

}

}

else if (f instanceof TreeBin) {

binCount = 2;

TreeBin<K,V> t = (TreeBin<K,V>)f;

TreeNode<K,V> r, p;

if ((r = t.root) != null &&

(p = r.findTreeNode(h, key, null)) != null) {

val = remappingFunction.apply(key, p.val);

if (val != null)

p.val = val;

else {

delta = -1;

if (t.removeTreeNode(p))

setTabAt(tab, i, untreeify(t.first));

}

}

}

}

}

if (binCount != 0)

break;

}

}

if (delta != 0)

addCount((long)delta, binCount);

return val;

}

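// Similar to the above: computes a new value for the key from its current value (null if absent); a null result removes the entry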

public V compute(K key,

BiFunction<? super K, ? super V, ? extends V> remappingFunction) {

if (key == null || remappingFunction == null)

throw new NullPointerException();

// Compute the hash

int h = spread(key.hashCode());

V val = null;

int delta = 0;

int binCount = 0;

// Retry loop

for (Node<K,V>[] tab = table;;) {

Node<K,V> f; int n, i, fh;

// Initialize the table

if (tab == null || (n = tab.length) == 0)

tab = initTable();

// Locate the bin; it is empty

else if ((f = tabAt(tab, i = (n - 1) & h)) == null) {

Node<K,V> r = new ReservationNode<K,V>();

synchronized (r) {

if (casTabAt(tab, i, null, r)) {

binCount = 1;

Node<K,V> node = null;

try {

if ((val = remappingFunction.apply(key, null)) != null) {

delta = 1;

node = new Node<K,V>(h, key, val, null);

}

} finally {

setTabAt(tab, i, node);

}

}

}

if (binCount != 0)

break;

}

// A transfer is in progress

else if ((fh = f.hash) == MOVED)

tab = helpTransfer(tab, f);

else {

synchronized (f) {

if (tabAt(tab, i) == f) {

if (fh >= 0) {

binCount = 1;

for (Node<K,V> e = f, pred = null;; ++binCount) {

K ek;

if (e.hash == h &&

((ek = e.key) == key ||

(ek != null && key.equals(ek)))) {

val = remappingFunction.apply(key, e.val);

if (val != null)

e.val = val;

else {

delta = -1;

Node<K,V> en = e.next;

if (pred != null)

pred.next = en;

else

setTabAt(tab, i, en);

}

break;

}

pred = e;

if ((e = e.next) == null) {

val = remappingFunction.apply(key, null);

if (val != null) {

delta = 1;

pred.next =

new Node<K,V>(h, key, val, null);

}

break;

}

}

}

else if (f instanceof TreeBin) {

binCount = 1;

TreeBin<K,V> t = (TreeBin<K,V>)f;

TreeNode<K,V> r, p;

if ((r = t.root) != null)

p = r.findTreeNode(h, key, null);

else

p = null;

V pv = (p == null) ? null : p.val;

val = remappingFunction.apply(key, pv);

if (val != null) {

if (p != null)

p.val = val;

else {

delta = 1;

t.putTreeVal(h, key, val);

}

}

else if (p != null) {

delta = -1;

if (t.removeTreeNode(p))

setTabAt(tab, i, untreeify(t.first));

}

}

}

}

if (binCount != 0) {

if (binCount >= TREEIFY_THRESHOLD)

treeifyBin(tab, i);

break;

}

}

}

// Apply the count change (+1 or -1)

if (delta != 0)

addCount((long)delta, binCount);

return val;

}

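// Merge: if the key is absent, associates it with value; otherwise stores remappingFunction(oldValue, value), removing the entry when the result is null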

public V merge(K key, V value, BiFunction<? super V, ? super V, ? extends V> remappingFunction) {

if (key == null || value == null || remappingFunction == null)

throw new NullPointerException();

// Compute the hash

int h = spread(key.hashCode());

V val = null;

int delta = 0;

int binCount = 0;

// Retry loop

for (Node<K,V>[] tab = table;;) {

Node<K,V> f; int n, i, fh;

// Initialize the table

if (tab == null || (n = tab.length) == 0)

tab = initTable();

// Empty bin: insert the new node with CAS

else if ((f = tabAt(tab, i = (n - 1) & h)) == null) {

if (casTabAt(tab, i, null, new Node<K,V>(h, key, value, null))) {

delta = 1;

val = value;

break;

}

}

// Help with the resize

else if ((fh = f.hash) == MOVED)

tab = helpTransfer(tab, f);

else {

// Update under the bin lock

synchronized (f) {

if (tabAt(tab, i) == f) {

if (fh >= 0) {

binCount = 1;

for (Node<K,V> e = f, pred = null;; ++binCount) {

K ek;

if (e.hash == h &&

((ek = e.key) == key ||

(ek != null && key.equals(ek)))) {

// Compute the merged value

val = remappingFunction.apply(e.val, value);

if (val != null)

e.val = val;

else {

delta = -1;

Node<K,V> en = e.next;

if (pred != null)

pred.next = en;

else

setTabAt(tab, i, en);

}

break;

}

pred = e;

// Reached the tail: create a new node with the given value and append it

if ((e = e.next) == null) {

delta = 1;

val = value;

pred.next =

new Node<K,V>(h, key, val, null);

break;

}

}

}

// The bin is a red-black tree: same logic on the tree

else if (f instanceof TreeBin) {

binCount = 2;

TreeBin<K,V> t = (TreeBin<K,V>)f;

TreeNode<K,V> r = t.root;

TreeNode<K,V> p = (r == null) ? null :

r.findTreeNode(h, key, null);

val = (p == null) ? value :

remappingFunction.apply(p.val, value);

if (val != null) {

if (p != null)

p.val = val;

else {

delta = 1;

t.putTreeVal(h, key, val);

}

}

else if (p != null) {

delta = -1;

if (t.removeTreeNode(p))

setTabAt(tab, i, untreeify(t.first));

}

}

}

}

// Treeify the bin if it has grown too long

if (binCount != 0) {

if (binCount >= TREEIFY_THRESHOLD)

treeifyBin(tab, i);

break;

}

}

}

// Apply the count change

if (delta != 0)

addCount((long)delta, binCount);

return val;

}

// Hashtable legacy methods

// Tests whether some key maps to the given value (same as containsValue)

public boolean contains(Object value) {

return containsValue(value);

}

// Returns an enumeration of the keys

public Enumeration<K> keys() {

Node<K,V>[] t;

int f = (t = table) == null ? 0 : t.length;

return new KeyIterator<K,V>(t, f, 0, f, this);

}

// Returns an enumeration of the values

public Enumeration<V> elements() {

Node<K,V>[] t;

int f = (t = table) == null ? 0 : t.length;

return new ValueIterator<K,V>(t, f, 0, f, this);

}

// Returns the number of mappings as a long

public long mappingCount() {

long n = sumCount();

return (n < 0L) ? 0L : n; // ignore transient negative values

}

// Creates a new Set backed by a ConcurrentHashMap whose values are all Boolean.TRUE

public static <K> KeySetView<K,Boolean> newKeySet() {

return new KeySetView<K,Boolean>

(new ConcurrentHashMap<K,Boolean>(), Boolean.TRUE);

}

// Same, with an initial capacity

public static <K> KeySetView<K,Boolean> newKeySet(int initialCapacity) {

return new KeySetView<K,Boolean>

(new ConcurrentHashMap<K,Boolean>(initialCapacity), Boolean.TRUE);

}

// Returns a Set view of the keys that uses mappedValue as the value for any additions

public KeySetView<K,V> keySet(V mappedValue) {

if (mappedValue == null)

throw new NullPointerException();

return new KeySetView<K,V>(this, mappedValue);

}

/* ---------------- Special Nodes -------------- */

// A node inserted at the head of a bin during a transfer operation

static final class ForwardingNode<K,V> extends Node<K,V> {

final Node<K,V>[] nextTable;

ForwardingNode(Node<K,V>[] tab) {

super(MOVED, null, null, null);

this.nextTable = tab;

}

// Lookup: searches the next table

Node<K,V> find(int h, Object k) {

// Loop to avoid arbitrarily deep recursion on forwarding nodes

outer: for (Node<K,V>[] tab = nextTable;;) {

Node<K,V> e; int n;

if (k == null || tab == null || (n = tab.length) == 0 ||

(e = tabAt(tab, (n - 1) & h)) == null)

return null;

for (;;) {

int eh; K ek;

if ((eh = e.hash) == h &&

((ek = e.key) == k || (ek != null && k.equals(ek))))

return e;

if (eh < 0) {

if (e instanceof ForwardingNode) {

tab = ((ForwardingNode<K,V>)e).nextTable;

continue outer;

}

else

return e.find(h, k);

}

if ((e = e.next) == null)

return null;

}

}

}

}

// ReservationNode: a placeholder node used in computeIfAbsent and compute

static final class ReservationNode<K,V> extends Node<K,V> {

ReservationNode() {

super(RESERVED, null, null, null);

}

Node<K,V> find(int h, Object k) {

return null;

}

}

/* ---------------- Table Initialization and Resizing -------------- */

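// Returns the stamp bits for resizing a table of size n; the stamp is negative once shifted left by RESIZE_STAMP_SHIFT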

static final int resizeStamp(int n) {

return Integer.numberOfLeadingZeros(n) | (1 << (RESIZE_STAMP_BITS - 1));

}

// Initializes the table, using the size recorded in sizeCtl

private final Node<K,V>[] initTable() {

Node<K,V>[] tab; int sc;

while ((tab = table) == null || tab.length == 0) {

if ((sc = sizeCtl) < 0)

Thread.yield(); // lost initialization race; just spin

else if (U.compareAndSwapInt(this, SIZECTL, sc, -1)) {

try {

if ((tab = table) == null || tab.length == 0) {

int n = (sc > 0) ? sc : DEFAULT_CAPACITY;

@SuppressWarnings("unchecked")

Node<K,V>[] nt = (Node<K,V>[])new Node<?,?>[n];

table = tab = nt;

sc = n - (n >>> 2);

}

} finally {

sizeCtl = sc;

}

break;

}

}

return tab;

}

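// Adds x to the element count; if check >= 0 and the count has reached the sizeCtl threshold, starts or helps a resize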

private final void addCount(long x, int check) {

CounterCell[] as; long b, s;

if ((as = counterCells) != null ||

!U.compareAndSwapLong(this, BASECOUNT, b = baseCount, s = b + x)) {

CounterCell a; long v; int m;

boolean uncontended = true;

if (as == null || (m = as.length - 1) < 0 ||

(a = as[ThreadLocalRandom.getProbe() & m]) == null ||

!(uncontended =

U.compareAndSwapLong(a, CELLVALUE, v = a.value, v + x))) {

fullAddCount(x, uncontended);

return;

}

if (check <= 1)

return;

s = sumCount();

}

// Check for a resize only when check >= 0

if (check >= 0) {

Node<K,V>[] tab, nt; int n, sc;

while (s >= (long)(sc = sizeCtl) && (tab = table) != null &&

(n = tab.length) < MAXIMUM_CAPACITY) {

int rs = resizeStamp(n);

if (sc < 0) {

if ((sc >>> RESIZE_STAMP_SHIFT) != rs || sc == rs + 1 ||

sc == rs + MAX_RESIZERS || (nt = nextTable) == null ||

transferIndex <= 0)

break;

if (U.compareAndSwapInt(this, SIZECTL, sc, sc + 1))

transfer(tab, nt);

}

else if (U.compareAndSwapInt(this, SIZECTL, sc,

(rs << RESIZE_STAMP_SHIFT) + 2))

transfer(tab, null);

s = sumCount();

}

}

}

// Helps transfer bins if a resize is in progress

final Node<K,V>[] helpTransfer(Node<K,V>[] tab, Node<K,V> f) {

Node<K,V>[] nextTab; int sc;

// Proceed only if tab is non-null, f is a ForwardingNode, and f's nextTable is non-null

if (tab != null && (f instanceof ForwardingNode) &&

(nextTab = ((ForwardingNode<K,V>)f).nextTable) != null) {

// Stamp that identifies a resize of a table of this length (it is not a new length)

int rs = resizeStamp(tab.length);

// Keep trying to join the transfer while the same resize is still in progress (sizeCtl < 0)

while (nextTab == nextTable && table == tab &&

(sc = sizeCtl) < 0) {

if ((sc >>> RESIZE_STAMP_SHIFT) != rs || sc == rs + 1 ||

sc == rs + MAX_RESIZERS || transferIndex <= 0)

break;

if (U.compareAndSwapInt(this, SIZECTL, sc, sc + 1)) {

transfer(tab, nextTab);

break;

}

}

return nextTab;

}

return table;

}

// Tries to presize the table to accommodate the given number of elements

private final void tryPresize(int size) {

int c = (size >= (MAXIMUM_CAPACITY >>> 1)) ? MAXIMUM_CAPACITY :

tableSizeFor(size + (size >>> 1) + 1);

int sc;

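// sizeCtl >= 0: either the table is not initialized yet (sc holds the initial size, or 0 for the default) or sc is the next resize threshold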

while ((sc = sizeCtl) >= 0) {

Node<K,V>[] tab = table; int n;

if (tab == null || (n = tab.length) == 0) {

n = (sc > c) ? sc : c;

if (U.compareAndSwapInt(this, SIZECTL, sc, -1)) {

try {

if (table == tab) {

@SuppressWarnings("unchecked")

Node<K,V>[] nt = (Node<K,V>[])new Node<?,?>[n];

table = nt;

sc = n - (n >>> 2);

}

} finally {

sizeCtl = sc;

}

}

}

// Stop if the target capacity does not exceed the current threshold or the table is already at maximum capacity

else if (c <= sc || n >= MAXIMUM_CAPACITY)

break;

// Otherwise start a resize (or help one in progress) and transfer the bins to the new table

else if (tab == table) {

int rs = resizeStamp(n);

if (sc < 0) {

Node<K,V>[] nt;

if ((sc >>> RESIZE_STAMP_SHIFT) != rs || sc == rs + 1 ||

sc == rs + MAX_RESIZERS || (nt = nextTable) == null ||

transferIndex <= 0)

break;

if (U.compareAndSwapInt(this, SIZECTL, sc, sc + 1))

transfer(tab, nt);

}

else if (U.compareAndSwapInt(this, SIZECTL, sc,

(rs << RESIZE_STAMP_SHIFT) + 2))

transfer(tab, null);

}

}

}

// Moves and/or copies the nodes in each bin to the new table

private final void transfer(Node<K,V>[] tab, Node<K,V>[] nextTab) {

// Length of the current table

int n = tab.length, stride;

// Stride per thread: (n / 8) / NCPU with more than one CPU, otherwise n; never less than MIN_TRANSFER_STRIDE

if ((stride = (NCPU > 1) ? (n >>> 3) / NCPU : n) < MIN_TRANSFER_STRIDE)

// Fall back to the minimum stride

stride = MIN_TRANSFER_STRIDE; // subdivide range

// If nextTab is null, this thread initiates the resize: allocate a new table of twice the size

if (nextTab == null) { // initiating

try {

@SuppressWarnings("unchecked")

Node<K,V>[] nt = (Node<K,V>[])new Node<?,?>[n << 1];

nextTab = nt;

} catch (Throwable ex) { // try to cope with OOME

sizeCtl = Integer.MAX_VALUE;

return;

}

nextTable = nextTab;

transferIndex = n;

}

// Length of the new table

int nextn = nextTab.length;

// Forwarding node that marks a bin as already moved and points readers to nextTab

ForwardingNode<K,V> fwd = new ForwardingNode<K,V>(nextTab);

// Whether to advance to the next bin

boolean advance = true;

// Set once all bins have been moved; triggers a final sweep before nextTab is committed

boolean finishing = false; // to ensure sweep before committing nextTab

// Main loop: claim a range of bins and move them to the new table

for (int i = 0, bound = 0;;) {

Node<K,V> f; int fh;

while (advance) {

int nextIndex, nextBound;

if (--i >= bound || finishing)

advance = false;

else if ((nextIndex = transferIndex) <= 0) {

i = -1;

advance = false;

}

else if (U.compareAndSwapInt

(this, TRANSFERINDEX, nextIndex,

nextBound = (nextIndex > stride ?

nextIndex - stride : 0))) {

bound = nextBound;

i = nextIndex - 1;

advance = false;

}

}

if (i < 0 || i >= n || i + n >= nextn) {

int sc;

// The transfer is finished: commit the new table

if (finishing) {

nextTable = null;

table = nextTab;

// New threshold: 0.75 * (2n), computed as 2n - n/2

sizeCtl = (n << 1) - (n >>> 1);

return;

}

if (U.compareAndSwapInt(this, SIZECTL, sc = sizeCtl, sc - 1)) {

if ((sc - 2) != resizeStamp(n) << RESIZE_STAMP_SHIFT)

return;

finishing = advance = true;

i = n; // recheck before commit

}

}

else if ((f = tabAt(tab, i)) == null)

advance = casTabAt(tab, i, null, fwd);

else if ((fh = f.hash) == MOVED)

advance = true; // already processed

else {

synchronized (f) {

if (tabAt(tab, i) == f) {

Node<K,V> ln, hn;

if (fh >= 0) {

int runBit = fh & n;

Node<K,V> lastRun = f;

for (Node<K,V> p = f.next; p != null; p = p.next) {

int b = p.hash & n;

if (b != runBit) {

runBit = b;

lastRun = p;

}

}

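// All nodes from lastRun onward go to the same bin, so they are reused as-is; runBit selects the low or the high list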

if (runBit == 0) {

ln = lastRun;

hn = null;

}

else {

hn = lastRun;

ln = null;

}

for (Node<K,V> p = f; p != lastRun; p = p.next) {

int ph = p.hash; K pk = p.key; V pv = p.val;

if ((ph & n) == 0)

ln = new Node<K,V>(ph, pk, pv, ln);

else

hn = new Node<K,V>(ph, pk, pv, hn);

}

setTabAt(nextTab, i, ln);

setTabAt(nextTab, i + n, hn);

setTabAt(tab, i, fwd);

advance = true;

}

else if (f instanceof TreeBin) {

TreeBin<K,V> t = (TreeBin<K,V>)f;

TreeNode<K,V> lo = null, loTail = null;

TreeNode<K,V> hi = null, hiTail = null;

int lc = 0, hc = 0;

for (Node<K,V> e = t.first; e != null; e = e.next) {

int h = e.hash;

TreeNode<K,V> p = new TreeNode<K,V>

(h, e.key, e.val, null, null);

if ((h & n) == 0) {

if ((p.prev = loTail) == null)

lo = p;

else

loTail.next = p;

loTail = p;

++lc;

}

else {

if ((p.prev = hiTail) == null)

hi = p;

else

hiTail.next = p;

hiTail = p;

++hc;

}

}

// A half with at most UNTREEIFY_THRESHOLD (6) nodes reverts to a linked list

ln = (lc <= UNTREEIFY_THRESHOLD) ? untreeify(lo) :

(hc != 0) ? new TreeBin<K,V>(lo) : t;

hn = (hc <= UNTREEIFY_THRESHOLD) ? untreeify(hi) :

(lc != 0) ? new TreeBin<K,V>(hi) : t;

setTabAt(nextTab, i, ln);

setTabAt(nextTab, i + n, hn);

setTabAt(tab, i, fwd);

advance = true;

}

}

}

}

}

}
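The low/high split in `transfer` relies on the table length being a power of two: after doubling, a node either stays at index `i` or moves to `i + n`, decided by the single bit `hash & n`. The following minimal sketch checks that rule against a direct recomputation of the index; `SplitDemo` and its methods are hypothetical names used only for illustration.

```java
public class SplitDemo {
    // Bin index of a hash in a table of length n (n must be a power of two).
    static int indexFor(int hash, int n) {
        return hash & (n - 1);
    }

    // Index after the table doubles, derived the way transfer() splits a bin:
    // bit n clear -> the node stays at i ("low" list); bit n set -> it moves to i + n ("high" list).
    static int indexAfterDoubling(int hash, int n) {
        int i = indexFor(hash, n);
        return (hash & n) == 0 ? i : i + n;
    }

    public static void main(String[] args) {
        int n = 16;
        for (int hash : new int[] {5, 21, 37, 53}) {
            System.out.println(hash + ": " + indexFor(hash, n)
                    + " -> " + indexAfterDoubling(hash, n)
                    + " (direct: " + indexFor(hash, 2 * n) + ")");
        }
    }
}
```

Since no node can land anywhere else, each old bin can be migrated independently under its own lock, which is what allows several threads to transfer different ranges of bins at the same time.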

// A padded cell for distributing counts (the same design as LongAdder and Striped64)

@sun.misc.Contended static final class CounterCell {

volatile long value;

CounterCell(long x) { value = x; }

}

// Sums baseCount and all counter cells

final long sumCount() {

CounterCell[] as = counterCells; CounterCell a;

long sum = baseCount;

if (as != null) {

for (int i = 0; i < as.length; ++i) {

if ((a = as[i]) != null)

sum += a.value;

}

}

return sum;

}
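`CounterCell` and `sumCount` apply the striped-counter idea also found in `java.util.concurrent.atomic.LongAdder`: contended updates are spread over several cells and summed on demand. The sketch below is an illustration under that assumption rather than the map's internal code path; it counts with a `LongAdder` from several threads and, separately, reads `mappingCount()` after concurrent inserts. `StripedCountDemo` and its methods are hypothetical names.

```java
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.atomic.LongAdder;

public class StripedCountDemo {
    // Starts the given number of threads, each running body(threadId), and waits for all of them.
    static void runAll(int threads, java.util.function.IntConsumer body) {
        Thread[] ts = new Thread[threads];
        for (int i = 0; i < threads; i++) {
            final int id = i;
            ts[i] = new Thread(() -> body.accept(id));
            ts[i].start();
        }
        try {
            for (Thread t : ts) t.join();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException(e);
        }
    }

    // Each thread increments a shared LongAdder perThread times.
    static long countWithAdder(int threads, int perThread) {
        LongAdder adder = new LongAdder();
        runAll(threads, id -> {
            for (int j = 0; j < perThread; j++) adder.increment();
        });
        return adder.sum(); // base plus all cells, like sumCount()
    }

    // Each thread inserts perThread distinct keys; the count is read once all threads are done.
    static long countWithMap(int threads, int perThread) {
        ConcurrentHashMap<Integer, Integer> map = new ConcurrentHashMap<>();
        runAll(threads, id -> {
            for (int j = 0; j < perThread; j++) map.put(id * perThread + j, j);
        });
        return map.mappingCount();
    }

    public static void main(String[] args) {
        System.out.println(countWithAdder(4, 1000));
        System.out.println(countWithMap(4, 1000));
    }
}
```

While updates are still in flight the sum is only an estimate; it is exact here because both methods read it after every writer thread has been joined.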

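// Slow path of addCount: initializes or expands the counter cells, or retries under contention (see LongAdder)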

private final void fullAddCount(long x, boolean wasUncontended) {

int h;

// Read the thread's probe value; if it is 0, force initialization

if ((h = ThreadLocalRandom.getProbe()) == 0) {

ThreadLocalRandom.localInit(); // force initialization

h = ThreadLocalRandom.getProbe();

wasUncontended = true;

}

boolean collide = false; // True if last slot nonempty

// Retry until the count has been recorded

for (;;) {

CounterCell[] as; CounterCell a; int n; long v;

if ((as = counterCells) != null && (n = as.length) > 0) {

if ((a = as[(n - 1) & h]) == null) {

// The slot is empty: try to attach a new cell

if (cellsBusy == 0) { // Try to attach new Cell

// Create the cell optimistically, before taking the lock

CounterCell r = new CounterCell(x); // Optimistic create

// Acquire the cellsBusy spinlock with CAS

if (cellsBusy == 0 &&

U.compareAndSwapInt(this, CELLSBUSY, 0, 1)) {

boolean created = false;

try { // Recheck under lock

CounterCell[] rs; int m, j;

if ((rs = counterCells) != null &&

(m = rs.length) > 0 &&

rs[j = (m - 1) & h] == null) {

rs[j] = r;

created = true;

}

} finally {

cellsBusy = 0;

}

if (created)

break;

continue; // Slot is now non-empty

}

}

collide = false;

}

// The caller's CAS on this cell is already known to have failed

else if (!wasUncontended) // CAS already known to fail

// Reset the flag and retry after rehashing the probe

wasUncontended = true; // Continue after rehash

// CAS the increment into the cell; leave the loop on success

else if (U.compareAndSwapLong(a, CELLVALUE, v = a.value, v + x))

break;

// The cell array is stale or already has at least NCPU cells: do not expand it

else if (counterCells != as || n >= NCPU)

collide = false; // At max size or stale

else if (!collide)

collide = true;

else if (cellsBusy == 0 &&

U.compareAndSwapInt(this, CELLSBUSY, 0, 1)) {

try {

if (counterCells == as) {// Expand table unless stale

CounterCell[] rs = new CounterCell[n << 1];

for (int i = 0; i < n; ++i)

rs[i] = as[i];

counterCells = rs;

}

} finally {

cellsBusy = 0;

}

collide = false;

continue; // Retry with expanded table

}

h = ThreadLocalRandom.advanceProbe(h);

}

else if (cellsBusy == 0 && counterCells == as &&

U.compareAndSwapInt(this, CELLSBUSY, 0, 1)) {

boolean init = false;

try { // Initialize table

if (counterCells == as) {

CounterCell[] rs = new CounterCell[2];

rs[h & 1] = new CounterCell(x);

counterCells = rs;

init = true;

}

} finally {

cellsBusy = 0;

}

if (init)

break;

}

// The cells are unavailable (another thread is initializing them): fall back to a CAS on baseCount

else if (U.compareAndSwapLong(this, BASECOUNT, v = baseCount, v + x))

break; // Fall back on using base

}

}

/* ---------------- Conversion from/to TreeBins -------------- */

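// Replaces all linked nodes in the bin at index with a TreeBin, unless the table is too small, in which case it resizes instead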

private final void treeifyBin(Node<K,V>[] tab, int index) {

Node<K,V> b; int n, sc;

if (tab != null) {

// If the table is smaller than MIN_TREEIFY_CAPACITY (64), resize instead of treeifying

if ((n = tab.length) < MIN_TREEIFY_CAPACITY)

tryPresize(n << 1);

// The bin is non-empty and its head is an ordinary node (hash >= 0)

else if ((b = tabAt(tab, index)) != null && b.hash >= 0) {

// Lock the bin head

synchronized (b) {

// Convert the linked list into a list of TreeNodes

if (tabAt(tab, index) == b) {

TreeNode<K,V> hd = null, tl = null;

for (Node<K,V> e = b; e != null; e = e.next) {

TreeNode<K,V> p =

new TreeNode<K,V>(e.hash, e.key, e.val,

null, null);

if ((p.prev = tl) == null)

hd = p;

else

tl.next = p;

tl = p;

}

// Install the TreeBin built from those nodes at the bin index

setTabAt(tab, index, new TreeBin<K,V>(hd));

}

}

}

}

}

/**

* Returns a list of non-TreeNodes replacing those in the given list.

*/

static <K,V> Node<K,V> untreeify(Node<K,V> b) {

Node<K,V> hd = null, tl = null;

for (Node<K,V> q = b; q != null; q = q.next) {

Node<K,V> p = new Node<K,V>(q.hash, q.key, q.val, null);

if (tl == null)

hd = p;

else

tl.next = p;

tl = p;

}

return hd;

}

/* ---------------- TreeNodes -------------- */

// Nodes for use in TreeBins

static final class TreeNode<K,V> extends Node<K,V> {

TreeNode<K,V> parent; // parent node

TreeNode<K,V> left; // left child

TreeNode<K,V> right; // right child

TreeNode<K,V> prev; // previous node; needed to unlink next upon deletion

boolean red;

// Constructor

TreeNode(int hash, K key, V val, Node<K,V> next,

TreeNode<K,V> parent) {

super(hash, key, val, next);

this.parent = parent;

}

// Finds the node for the given key

Node<K,V> find(int h, Object k) {

return findTreeNode(h, k, null);

}

// Returns the TreeNode for the given key, searching from this node, or null if none is found

final TreeNode<K,V> findTreeNode(int h, Object k, Class<?> kc) {

if (k != null) {

TreeNode<K,V> p = this;

do {

int ph, dir; K pk; TreeNode<K,V> q;

TreeNode<K,V> pl = p.left, pr = p.right;

if ((ph = p.hash) > h)

p = pl;

else if (ph < h)

p = pr;

else if ((pk = p.key) == k || (pk != null && k.equals(pk)))

return p;

else if (pl == null)

p = pr;

else if (pr == null)

p = pl;

else if ((kc != null ||

(kc = comparableClassFor(k)) != null) &&

(dir = compareComparables(kc, k, pk)) != 0)

p = (dir < 0) ? pl : pr;

else if ((q = pr.findTreeNode(h, k, kc)) != null)

return q;

else

p = pl;

} while (p != null);

}

return null;

}

}

// TreeBin: holds the root of a red-black tree at the head of a bin and maintains a simple read-write lock

static final class TreeBin<K,V> extends Node<K,V> {

// Root of the tree

TreeNode<K,V> root;

// Head of the node list

volatile TreeNode<K,V> first;

// Thread waiting for the write lock

volatile Thread waiter;

// Lock state; the write lock is exclusive

volatile int lockState;

// values for lockState

// The write lock is held

static final int WRITER = 1; // set while holding write lock

// A thread is waiting for the write lock

static final int WAITER = 2; // set when waiting for write lock

// Increment added for each reader holding the read lock

static final int READER = 4; // increment value for setting read lock

/**

* Tie-breaking utility for ordering insertions when equal

* hashCodes and non-comparable. We don't require a total

* order, just a consistent insertion rule to maintain

* equivalence across rebalancings. Tie-breaking further than

* necessary simplifies testing a bit.

*/

static int tieBreakOrder(Object a, Object b) {

int d;

if (a == null || b == null ||

(d = a.getClass().getName().

compareTo(b.getClass().getName())) == 0)

d = (System.identityHashCode(a) <= System.identityHashCode(b) ?

-1 : 1);

return d;

}

// Creates a bin from the initial list of nodes headed by b and builds the red-black tree

TreeBin(TreeNode<K,V> b) {

super(TREEBIN, null, null, null);

this.first = b;

TreeNode<K,V> r = null;

for (TreeNode<K,V> x = b, next; x != null; x = next) {

next = (TreeNode<K,V>)x.next;

x.left = x.right = null;

if (r == null) {

x.parent = null;

x.red = false;

r = x;

}

else {

K k = x.key;

int h = x.hash;

Class<?> kc = null;

for (TreeNode<K,V> p = r;;) {

int dir, ph;

K pk = p.key;

if ((ph = p.hash) > h)

dir = -1;

else if (ph < h)

dir = 1;

else if ((kc == null &&

(kc = comparableClassFor(k)) == null) ||

(dir = compareComparables(kc, k, pk)) == 0)

dir = tieBreakOrder(k, pk);

TreeNode<K,V> xp = p;

if ((p = (dir <= 0) ? p.left : p.right) == null) {

x.parent = xp;

if (dir <= 0)

xp.left = x;

else

xp.right = x;

r = balanceInsertion(r, x);

break;

}

}

}

}

this.root = r;

assert checkInvariants(root);

}

// Acquires the write lock for tree restructuring

private final void lockRoot() {

if (!U.compareAndSwapInt(this, LOCKSTATE, 0, WRITER))

contendedLock(); // offload to separate method

}

// Releases the write lock

private final void unlockRoot() {

lockState = 0;

}

// Possibly blocks while waiting for the write lock

private final void contendedLock() {

boolean waiting = false;

for (int s;;) {

if (((s = lockState) & ~WAITER) == 0) {

if (U.compareAndSwapInt(this, LOCKSTATE, s, WRITER)) {

if (waiting)

waiter = null;

return;

}

}

else if ((s & WAITER) == 0) {

if (U.compareAndSwapInt(this, LOCKSTATE, s, s | WAITER)) {

waiting = true;

waiter = Thread.currentThread();

}

}

else if (waiting)

LockSupport.park(this);

}

}

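// Returns the matching node or null; searches the tree from the root, falling back to a linear scan while the lock is unavailable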

final Node<K,V> find(int h, Object k) {

if (k != null) {

// Walk the node list linearly; switch to the tree search as soon as the read lock can be taken

for (Node<K,V> e = first; e != null; ) {

int s; K ek;

if (((s = lockState) & (WAITER|WRITER)) != 0) {

if (e.hash == h &&

((ek = e.key) == k || (ek != null && k.equals(ek))))

return e;

e = e.next;

}

else if (U.compareAndSwapInt(this, LOCKSTATE, s,

s + READER)) {

TreeNode<K,V> r, p;

try {

p = ((r = root) == null ? null :

r.findTreeNode(h, k, null));

} finally {

Thread w;

if (U.getAndAddInt(this, LOCKSTATE, -READER) ==

(READER|WAITER) && (w = waiter) != null)

LockSupport.unpark(w);

}

return p;

}

}

}

return null;

}

// Finds or adds a tree node; returns null if the node was added

final TreeNode<K,V> putTreeVal(int h, K k, V v) {

Class<?> kc = null;

boolean searched = false;

for (TreeNode<K,V> p = root;;) {

int dir, ph; K pk;

if (p == null) {

first = root = new TreeNode<K,V>(h, k, v, null, null);

break;

}

else if ((ph = p.hash) > h)

dir = -1;

else if (ph < h)

dir = 1;

else if ((pk = p.key) == k || (pk != null && k.equals(pk)))

return p;

else if ((kc == null &&

(kc = comparableClassFor(k)) == null) ||

(dir = compareComparables(kc, k, pk)) == 0) {

if (!searched) {

TreeNode<K,V> q, ch;

searched = true;

if (((ch = p.left) != null &&

(q = ch.findTreeNode(h, k, kc)) != null) ||

((ch = p.right) != null &&

(q = ch.findTreeNode(h, k, kc)) != null))

return q;

}

dir = tieBreakOrder(k, pk);

}

TreeNode<K,V> xp = p;

if ((p = (dir <= 0) ? p.left : p.right) == null) {

TreeNode<K,V> x, f = first;

first = x = new TreeNode<K,V>(h, k, v, f, xp);

if (f != null)

f.prev = x;

if (dir <= 0)

xp.left = x;

else

xp.right = x;

if (!xp.red)

x.red = true;

else {

lockRoot();

try {

root = balanceInsertion(root, x);

} finally {

unlockRoot();

}

}

break;

}

}

assert checkInvariants(root);

return null;

}

// Removes the given node; returns true if the bin is now too small and should be untreeified

final boolean removeTreeNode(TreeNode<K,V> p) {

TreeNode<K,V> next = (TreeNode<K,V>)p.next;

TreeNode<K,V> pred = p.prev; // unlink traversal pointers

TreeNode<K,V> r, rl;

if (pred == null)

first = next;

else

pred.next = next;

if (next != null)

next.prev = pred;

if (first == null) {

root = null;

return true;

}

if ((r = root) == null || r.right == null || // too small

(rl = r.left) == null || rl.left == null)

return true;

lockRoot();

try {

TreeNode<K,V> replacement;

TreeNode<K,V> pl = p.left;

TreeNode<K,V> pr = p.right;

if (pl != null && pr != null) {

TreeNode<K,V> s = pr, sl;

while ((sl = s.left) != null) // find successor

s = sl;

boolean c = s.red; s.red = p.red; p.red = c; // swap colors

TreeNode<K,V> sr = s.right;

TreeNode<K,V> pp = p.parent;

if (s == pr) { // p was s's direct parent

p.parent = s;

s.right = p;

}

else {

TreeNode<K,V> sp = s.parent;

if ((p.parent = sp) != null) {

if (s == sp.left)

sp.left = p;

else

sp.right = p;

}

if ((s.right = pr) != null)

pr.parent = s;

}

p.left = null;

if ((p.right = sr) != null)

sr.parent = p;

if ((s.left = pl) != null)

pl.parent = s;

if ((s.parent = pp) == null)

r = s;

else if (p == pp.left)

pp.left = s;

else

pp.right = s;

if (sr != null)

replacement = sr;

else

replacement = p;

}

else if (pl != null)

replacement = pl;

else if (pr != null)

replacement = pr;

else

replacement = p;

if (replacement != p) {

TreeNode<K,V> pp = replacement.parent = p.parent;

if (pp == null)

r = replacement;

else if (p == pp.left)

pp.left = replacement;

else

pp.right = replacement;

p.left = p.right = p.parent = null;

}

root = (p.red) ? r : balanceDeletion(r, replacement);

if (p == replacement) { // detach pointers

TreeNode<K,V> pp;

if ((pp = p.parent) != null) {

if (p == pp.left)

pp.left = null;

else if (p == pp.right)

pp.right = null;

p.parent = null;

}

}

} finally {

unlockRoot();

}

assert checkInvariants(root);

return false;

}

// Red-black tree left rotation

static <K,V> TreeNode<K,V> rotateLeft(TreeNode<K,V> root,

TreeNode<K,V> p) {

TreeNode<K,V> r, pp, rl;

// p is non-null and has a right child r

if (p != null && (r = p.right) != null) {

// r's left subtree becomes p's right subtree; if it is non-null, its parent is now p

if ((rl = p.right = r.left) != null)

rl.parent = p;

// r takes over p's parent; if that parent is null, r becomes the root

if ((pp = r.parent = p.parent) == null)

// The root is always black

(root = r).red = false;

// p was the left child of pp

else if (pp.left == p)

// r replaces p as the left child of pp

pp.left = r;

else

// r replaces p as the right child of pp

pp.right = r;

// p becomes the left child of r

r.left = p;

// r becomes the parent of p

p.parent = r;

}

return root;

}

// Right rotation (mirror image of rotateLeft)

static <K,V> TreeNode<K,V> rotateRight(TreeNode<K,V> root,

TreeNode<K,V> p) {

TreeNode<K,V> l, pp, lr;

if (p != null && (l = p.left) != null) {

if ((lr = p.left = l.right) != null)

lr.parent = p;

if ((pp = l.parent = p.parent) == null)

(root = l).red = false;

else if (pp.right == p)

pp.right = l;

else

pp.left = l;

l.right = p;

p.parent = l;

}

return root;

}

// Rebalances the red-black tree after an insertion

static <K,V> TreeNode<K,V> balanceInsertion(TreeNode<K,V> root,

TreeNode<K,V> x) {

// A newly inserted node is colored red

x.red = true;

// If x has no parent it is the root: color it black and return it

for (TreeNode<K,V> xp, xpp, xppl, xppr;;) {

if ((xp = x.parent) == null) {

x.red = false;

return x;

}

// If the parent is black, or the parent is the root, the tree is already balanced: return the root

else if (!xp.red || (xpp = xp.parent) == null)

return root;

// The parent is red and is the left child of the grandparent: recolor or rotate

if (xp == (xppl = xpp.left)) {

if ((xppr = xpp.right) != null && xppr.red) {

xppr.red = false;

xp.red = false;

xpp.red = true;

x = xpp;

}

else {

if (x == xp.right) {

root = rotateLeft(root, x = xp);

xpp = (xp = x.parent) == null ? null : xp.parent;

}

if (xp != null) {

xp.red = false;

if (xpp != null) {

xpp.red = true;

root = rotateRight(root, xpp);

}

}

}

}

// Symmetric case: the parent is the right child of the grandparent

else {

if (xppl != null && xppl.red) {

xppl.red = false;

xp.red = false;

xpp.red = true;

x = xpp;

}

else {

if (x == xp.left) {

root = rotateRight(root, x = xp);

xpp = (xp = x.parent) == null ? null : xp.parent;

}

if (xp != null) {

xp.red = false;

if (xpp != null) {

xpp.red = true;

root = rotateLeft(root, xpp);

}

}

}

}

}

}

// Rebalances the red-black tree after a deletion

static <K,V> TreeNode<K,V> balanceDeletion(TreeNode<K,V> root,

TreeNode<K,V> x) {

for (TreeNode<K,V> xp, xpl, xpr;;) {

if (x == null || x == root)

return root;

else if ((xp = x.parent) == null) {

x.red = false;

return x;

}

else if (x.red) {

x.red = false;

return root;

}

else if ((xpl = xp.left) == x) {

if ((xpr = xp.right) != null && xpr.red) {

xpr.red = false;

xp.red = true;

root = rotateLeft(root, xp);

xpr = (xp = x.parent) == null ? null : xp.right;

}

if (xpr == null)

x = xp;

else {

TreeNode<K,V> sl = xpr.left, sr = xpr.right;

if ((sr == null || !sr.red) &&

(sl == null || !sl.red)) {

xpr.red = true;

x = xp;

}

else {

if (sr == null || !sr.red) {

if (sl != null)

sl.red = false;

xpr.red = true;

root = rotateRight(root, xpr);

xpr = (xp = x.parent) == null ?

null : xp.right;

}

if (xpr != null) {

xpr.red = (xp == null) ? false : xp.red;

if ((sr = xpr.right) != null)

sr.red = false;

}

if (xp != null) {

xp.red = false;

root = rotateLeft(root, xp);

}

x = root;

}

}

}

else { // symmetric

if (xpl != null && xpl.red) {

xpl.red = false;

xp.red = true;

root = rotateRight(root, xp);

xpl = (xp = x.parent) == null ? null : xp.left;

}

if (xpl == null)

x = xp;

else {

TreeNode<K,V> sl = xpl.left, sr = xpl.right;

if ((sl == null || !sl.red) &&

(sr == null || !sr.red)) {

xpl.red = true;

x = xp;

}

else {

if (sl == null || !sl.red) {

if (sr != null)

sr.red = false;

xpl.red = true;

root = rotateLeft(root, xpl);

xpl = (xp = x.parent) == null ?

null : xp.left;

}

if (xpl != null) {

xpl.red = (xp == null) ? false : xp.red;

if ((sl = xpl.left) != null)

sl.red = false;

}

if (xp != null) {

xp.red = false;

root = rotateRight(root, xp);

}

x = root;

}

}

}

}

}

// Recursive invariant check

static <K,V> boolean checkInvariants(TreeNode<K,V> t) {

TreeNode<K,V> tp = t.parent, tl = t.left, tr = t.right,

tb = t.prev, tn = (TreeNode<K,V>)t.next;

if (tb != null && tb.next != t)

return false;

if (tn != null && tn.prev != t)

return false;

if (tp != null && t != tp.left && t != tp.right)

return false;

if (tl != null && (tl.parent != t || tl.hash > t.hash))

return false;

if (tr != null && (tr.parent != t || tr.hash < t.hash))

return false;

if (t.red && tl != null && tl.red && tr != null && tr.red)

return false;

if (tl != null && !checkInvariants(tl))

return false;

if (tr != null && !checkInvariants(tr))

return false;

return true;

}

// Unsafe instance used for the CAS operations

private static final sun.misc.Unsafe U;

// Memory offset of the lockState field

private static final long LOCKSTATE;

// Static initializer: looks up the field offset

static {

try {

U = sun.misc.Unsafe.getUnsafe();

Class<?> k = TreeBin.class;

LOCKSTATE = U.objectFieldOffset

(k.getDeclaredField("lockState"));

} catch (Exception e) {

throw new Error(e);

}

}

}

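// Records the table, its length, and the current index for a Traverser that must process a forwarded table before resuming the current one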

static final class TableStack<K,V> {

int length;

int index;

Node<K,V>[] tab;

TableStack<K,V> next;

}

// Holds the traversal state (current table and indices) shared by the iterators and spliterators

static class Traverser<K,V> {

// Current table

Node<K,V>[] tab; // current table; updated if resized

// Next entry to use

Node<K,V> next; // the next entry to use

// Top of the saved-state stack, plus a spare frame kept for reuse

TableStack<K,V> stack, spare; // to save/restore on ForwardingNodes

// Index of the bin to read next

int index; // index of bin to use next

// Current index within the initial table

int baseIndex; // current index of initial table

// Index bound for the initial table

int baseLimit; // index bound for initial table

// Size of the initial table

final int baseSize; // initial table size

// Constructor

Traverser(Node<K,V>[] tab, int size, int index, int limit) {

this.tab = tab;

this.baseSize = size;

this.baseIndex = this.index = index;

this.baseLimit = limit;

this.next = null;

}

// Advances if possible, returning the next valid node, or null if there is none

final Node<K,V> advance() {

Node<K,V> e;

if ((e = next) != null)

e = e.next;

for (;;) {

Node<K,V>[] t; int i, n; // must use locals in checks

if (e != null)

return next = e;

if (baseIndex >= baseLimit || (t = tab) == null ||

(n = t.length) <= (i = index) || i < 0)

return next = null;

if ((e = tabAt(t, i)) != null && e.hash < 0) {

if (e instanceof ForwardingNode) {

tab = ((ForwardingNode<K,V>)e).nextTable;

e = null;

pushState(t, i, n);

continue;

}

else if (e instanceof TreeBin)

e = ((TreeBin<K,V>)e).first;

else

e = null;

}

if (stack != null)

recoverState(n);

else if ((index = i + baseSize) >= n)

index = ++baseIndex; // visit upper slots if present

}

}

// Saves the traversal state upon encountering a forwarding node

private void pushState(Node<K,V>[] t, int i, int n) {

TableStack<K,V> s = spare; // reuse if possible

if (s != null)

spare = s.next;

else

s = new TableStack<K,V>();

s.tab = t;

s.length = n;

s.index = i;

s.next = stack;

stack = s;

}

// Possibly pops the traversal state saved by pushState

private void recoverState(int n) {

TableStack<K,V> s; int len;

while ((s = stack) != null && (index += (len = s.length)) >= n) {

n = len;

index = s.index;

tab = s.tab;

s.tab = null;

TableStack<K,V> next = s.next;

s.next = spare; // save for reuse

stack = next;

spare = s;

}

if (s == null && (index += baseSize) >= n)

index = ++baseIndex;

}

}

// Base class of the key, value, and entry iterators

static class BaseIterator<K,V> extends Traverser<K,V> {

final ConcurrentHashMap<K,V> map;

Node<K,V> lastReturned;

BaseIterator(Node<K,V>[] tab, int size, int index, int limit,

ConcurrentHashMap<K,V> map) {

super(tab, size, index, limit);

this.map = map;

advance();

}

// Whether there is a next node

public final boolean hasNext() { return next != null; }

// Enumeration counterpart of hasNext

public final boolean hasMoreElements() { return next != null; }

// Removes the last returned entry from the map

public final void remove() {

Node<K,V> p;

if ((p = lastReturned) == null)

throw new IllegalStateException();

lastReturned = null;

map.replaceNode(p.key, null, null);

}

}
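A practical consequence of `Traverser` and `BaseIterator` is that iteration is weakly consistent: the map may be modified while an iterator is active without a `ConcurrentModificationException`, and `Iterator.remove()` deletes the last returned entry by key. Below is a minimal single-threaded sketch; `IteratorDemo` is a hypothetical name and the key 100 is an arbitrary choice for the illustration.

```java
import java.util.Iterator;
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;

public class IteratorDemo {
    // Removes entries with even values while iterating, and inserts a key mid-iteration.
    static ConcurrentHashMap<Integer, Integer> removeEvenValues() {
        ConcurrentHashMap<Integer, Integer> map = new ConcurrentHashMap<>();
        for (int i = 0; i < 10; i++) map.put(i, i);

        Iterator<Map.Entry<Integer, Integer>> it = map.entrySet().iterator();
        while (it.hasNext()) {
            Map.Entry<Integer, Integer> e = it.next();
            map.putIfAbsent(100, 1);                   // structural change during iteration is allowed
            if (e.getValue() % 2 == 0) it.remove();    // delegates to a removal by key on the map
        }
        return map;
    }

    public static void main(String[] args) {
        System.out.println(removeEvenValues().keySet());
    }
}
```

Whether the iterator visits the key inserted during the loop is unspecified, which is why the sketch gives it an odd value: the final contents are the same either way.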

// Key iterator

static final class KeyIterator<K,V> extends BaseIterator<K,V>

implements Iterator<K>, Enumeration<K> {

KeyIterator(Node<K,V>[] tab, int index, int size, int limit,

ConcurrentHashMap<K,V> map) {

super(tab, index, size, limit, map);

}

// Returns the next key

public final K next() {

Node<K,V> p;

if ((p = next) == null)

throw new NoSuchElementException();

K k = p.key;

lastReturned = p;

advance();

return k;

}

public final K nextElement() { return next(); }

}

// Value iterator

static final class ValueIterator<K,V> extends BaseIterator<K,V>

implements Iterator<V>, Enumeration<V> {

ValueIterator(Node<K,V>[] tab, int index, int size, int limit,

ConcurrentHashMap<K,V> map) {

super(tab, index, size, limit, map);

}

public final V next() {

Node<K,V> p;

if ((p = next) == null)

throw new NoSuchElementException();

V v = p.val;

lastReturned = p;

advance();

return v;

}

public final V nextElement() { return next(); }

}

// Entry iterator

static final class EntryIterator<K,V> extends BaseIterator<K,V>

implements Iterator<Map.Entry<K,V>> {

EntryIterator(Node<K,V>[] tab, int index, int size, int limit,

ConcurrentHashMap<K,V> map) {

super(tab, index, size, limit, map);

}

public final Map.Entry<K,V> next() {

Node<K,V> p;

if ((p = next) == null)

throw new NoSuchElementException();

K k = p.key;

V v = p.val;

lastReturned = p;

advance();

return new MapEntry<K,V>(k, v, map);

}

}

// Entry exported by EntryIterator; setValue writes through to the map

static final class MapEntry<K,V> implements Map.Entry<K,V> {

final K key; // non-null

V val; // non-null

final ConcurrentHashMap<K,V> map;

MapEntry(K key, V val, ConcurrentHashMap<K,V> map) {

this.key = key;

this.val = val;

this.map = map;

}

public K getKey() { return key; }

public V getValue() { return val; }

public int hashCode() { return key.hashCode() ^ val.hashCode(); }

public String toString() { return key + "=" + val; }

public boolean equals(Object o) {

Object k, v; Map.Entry<?,?> e;

return ((o instanceof Map.Entry) &&

(k = (e = (Map.Entry<?,?>)o).getKey()) != null &&

(v = e.getValue()) != null &&

(k == key || k.equals(key)) &&

(v == val || v.equals(val)));

}

/**

* Sets our entry's value and writes through to the map. The

* value to return is somewhat arbitrary here. Since we do not

* necessarily track asynchronous changes, the most recent

* "previous" value could be different from what we return (or

* could even have been removed, in which case the put will

* re-establish). We do not and cannot guarantee more.

*/

public V setValue(V value) {

if (value == null) throw new NullPointerException();

V v = val;

val = value;

map.put(key, value);

return v;

}

}
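Because `MapEntry.setValue` calls `map.put(key, value)`, updating an entry obtained from `entrySet()` also updates the map. A minimal sketch of that write-through behavior follows; `EntryWriteThroughDemo` and `doubleAll` are hypothetical names.

```java
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;

public class EntryWriteThroughDemo {
    // Doubles every value by calling setValue on the iterated entries.
    static ConcurrentHashMap<String, Integer> doubleAll() {
        ConcurrentHashMap<String, Integer> map = new ConcurrentHashMap<>();
        map.put("a", 1);
        map.put("b", 2);
        for (Map.Entry<String, Integer> e : map.entrySet()) {
            e.setValue(e.getValue() * 2); // writes through to the map via put
        }
        return map;
    }

    public static void main(String[] args) {
        System.out.println(doubleAll());
    }
}
```

The value returned by `setValue` is the one the entry held when it was created, so under concurrent updates it may differ from the value most recently stored in the map.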

// Spliterator over the keys

static final class KeySpliterator<K,V> extends Traverser<K,V>

implements Spliterator<K> {

long est; // size estimate

KeySpliterator(Node<K,V>[] tab, int size, int index, int limit,

long est) {

super(tab, size, index, limit);

this.est = est;

}

public Spliterator<K> trySplit() {

int i, f, h;

return (h = ((i = baseIndex) + (f = baseLimit)) >>> 1) <= i ? null :

new KeySpliterator<K,V>(tab, baseSize, baseLimit = h,

f, est >>>= 1);

}

public void forEachRemaining(Consumer<? super K> action) {

if (action == null) throw new NullPointerException();

for (Node<K,V> p; (p = advance()) != null;)

action.accept(p.key);

}

public boolean tryAdvance(Consumer<? super K> action) {

if (action == null) throw new NullPointerException();

Node<K,V> p;

if ((p = advance()) == null)

return false;

action.accept(p.key);

return true;

}

public long estimateSize() { return est; }

public int characteristics() {

return Spliterator.DISTINCT | Spliterator.CONCURRENT |

Spliterator.NONNULL;

}

}

// Spliterator over the values

static final class ValueSpliterator<K,V> extends Traverser<K,V>

implements Spliterator<V> {

long est; // size estimate

ValueSpliterator(Node<K,V>[] tab, int size, int index, int limit,

long est) {

super(tab, size, index, limit);

this.est = est;

}

public Spliterator<V> trySplit() {

int i, f, h;

return (h = ((i = baseIndex) + (f = baseLimit)) >>> 1) <= i ? null :

new ValueSpliterator<K,V>(tab, baseSize, baseLimit = h,

f, est >>>= 1);

}

public void forEachRemaining(Consumer<? super V> action) {

if (action == null) throw new NullPointerException();

for (Node<K,V> p; (p = advance()) != null;)

action.accept(p.val);

}

public boolean tryAdvance(Consumer<? super V> action) {

if (action == null) throw new NullPointerException();

Node<K,V> p;

if ((p = advance()) == null)

return false;

action.accept(p.val);

return true;

}

public long estimateSize() { return est; }

public int characteristics() {

return Spliterator.CONCURRENT | Spliterator.NONNULL;

}

}

// Spliterator over the entries

static final class EntrySpliterator<K,V> extends Traverser<K,V>

implements Spliterator<Map.Entry<K,V>> {

final ConcurrentHashMap<K,V> map; // To export MapEntry

long est; // size estimate

EntrySpliterator(Node<K,V>[] tab, int size, int index, int limit,

long est, ConcurrentHashMap<K,V> map) {

super(tab, size, index, limit);

this.map = map;

this.est = est;

}

public Spliterator<Map.Entry<K,V>> trySplit() {

int i, f, h;

return (h = ((i = baseIndex) + (f = baseLimit)) >>> 1) <= i ? null :

new EntrySpliterator<K,V>(tab, baseSize, baseLimit = h,

f, est >>>= 1, map);

}

public void forEachRemaining(Consumer<? super Map.Entry<K,V>> action) {

if (action == null) throw new NullPointerException();

for (Node<K,V> p; (p = advance()) != null; )

action.accept(new MapEntry<K,V>(p.key, p.val, map));

}

public boolean tryAdvance(Consumer<? super Map.Entry<K,V>> action) {

if (action == null) throw new NullPointerException();

Node<K,V> p;

if ((p = advance()) == null)

return false;

action.accept(new MapEntry<K,V>(p.key, p.val, map));

return true;

}

public long estimateSize() { return est; }

public int characteristics() {

return Spliterator.DISTINCT | Spliterator.CONCURRENT |

Spliterator.NONNULL;

}

}

// Computes the initial batch value for bulk tasks

final int batchFor(long b) {

long n;

if (b == Long.MAX_VALUE || (n = sumCount()) <= 1L || n < b)

return 0;

int sp = ForkJoinPool.getCommonPoolParallelism() << 2; // slack of 4

return (b <= 0L || (n /= b) >= sp) ? sp : (int)n;

}

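// Performs the given action for each (key, value), in parallel once the map holds at least parallelismThreshold elements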

public void forEach(long parallelismThreshold,

BiConsumer<? super K,? super V> action) {

if (action == null) throw new NullPointerException();

new ForEachMappingTask<K,V>

(null, batchFor(parallelismThreshold), 0, 0, table,

action).invoke();

}

// Performs the given action for each non-null transformation of each (key, value)

public <U> void forEach(long parallelismThreshold,

BiFunction<? super K, ? super V, ? extends U> transformer,

Consumer<? super U> action) {

if (transformer == null || action == null)

throw new NullPointerException();

new ForEachTransformedMappingTask<K,V,U>

(null, batchFor(parallelismThreshold), 0, 0, table,

transformer, action).invoke();

}

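// Returns a non-null result of applying the search function to each (key, value), or null if there is none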

public <U> U search(long parallelismThreshold,

BiFunction<? super K, ? super V, ? extends U> searchFunction) {

if (searchFunction == null) throw new NullPointerException();

return new SearchMappingsTask<K,V,U>

(null, batchFor(parallelismThreshold), 0, 0, table,

searchFunction, new AtomicReference<U>()).invoke();

}

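// Returns the result of combining the transformations of all (key, value) pairs with the reducer, or null if there are none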

public <U> U reduce(long parallelismThreshold,

BiFunction<? super K, ? super V, ? extends U> transformer,

BiFunction<? super U, ? super U, ? extends U> reducer) {

if (transformer == null || reducer == null)

throw new NullPointerException();

return new MapReduceMappingsTask<K,V,U>

(null, batchFor(parallelismThreshold), 0, 0, table,

null, transformer, reducer).invoke();

}
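The bulk operations above take a `parallelismThreshold`: per the JDK documentation, a threshold of `Long.MAX_VALUE` suppresses parallelism and a threshold of 1 allows maximal parallelism. The sketch below exercises `reduce` and `search` on a small map; `BulkOpsDemo` and its helper methods are hypothetical names chosen for this illustration.

```java
import java.util.concurrent.ConcurrentHashMap;

public class BulkOpsDemo {
    static ConcurrentHashMap<String, Integer> sample() {
        ConcurrentHashMap<String, Integer> map = new ConcurrentHashMap<>();
        map.put("a", 1);
        map.put("b", 2);
        map.put("c", 3);
        return map;
    }

    // Sums all values; reduce returns null for an empty map, so that case maps to 0.
    static int sumValues(ConcurrentHashMap<String, Integer> map) {
        Integer sum = map.reduce(1L, (k, v) -> v, Integer::sum);
        return sum == null ? 0 : sum;
    }

    // Returns some key whose value exceeds limit, or null if no entry matches.
    static String keyWithValueAbove(ConcurrentHashMap<String, Integer> map, int limit) {
        return map.search(1L, (k, v) -> v > limit ? k : null);
    }

    public static void main(String[] args) {
        ConcurrentHashMap<String, Integer> map = sample();
        System.out.println(sumValues(map));
        System.out.println(keyWithValueAbove(map, 2));
        System.out.println(keyWithValueAbove(map, 10));
    }
}
```

When several entries match, `search` may return any of them, so the sketch uses a limit that only one entry satisfies.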

// Same as reduce, with a transformer that yields a double and the given basis as the identity value

public double reduceToDouble(long parallelismThreshold,

ToDoubleBiFunction<? super K, ? super V> transformer,

double basis,

DoubleBinaryOperator reducer) {

if (transformer == null || reducer == null)

throw new NullPointerException();