目录

- imuPreintegration.cpp

-

- 1. mapOptimization 类

-

- 1.1. laserCloudInfoHandler

- 2. loopClosureThread() :回环检测线程

- 3. visualizeGlobalMapThread(): 全局可视化线程

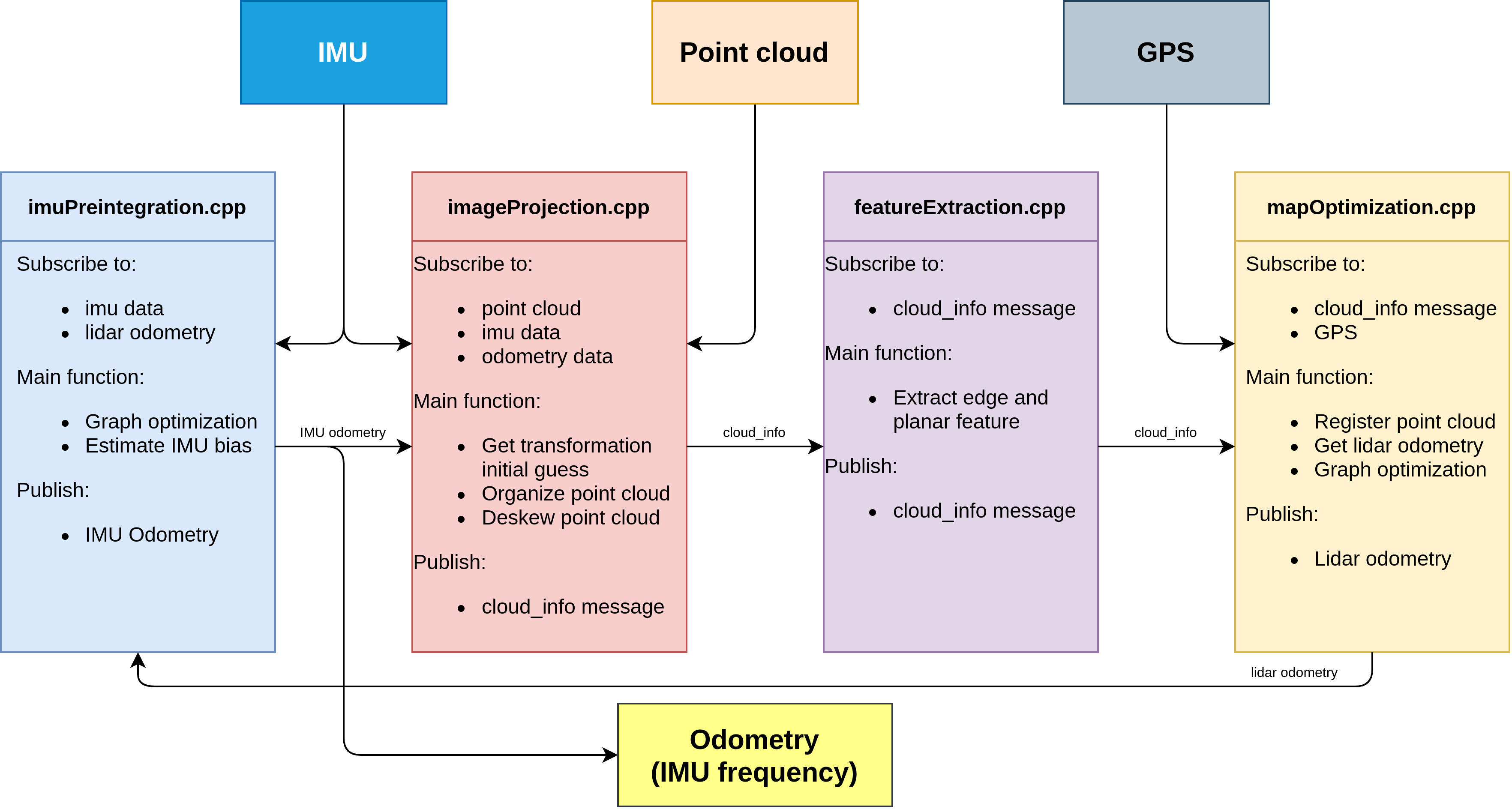

传感器输入: IMU,Point Cloud, GPS(可选)

传感器输入: IMU,Point Cloud, GPS(可选)

输出 : IMU 频率的odometry

imageProjection.cpp: 接受IMU,PointCloud以及IMU预积分输出的IMU odometry(系统刚初始化时没有IMU odometry)。

- 主要功能:

- 基于IMU odometry得到系统的初始位姿

- 将点云投影到cv::mat中,做相应的预处理

- 对原始点云数据做运动补偿(点云的去畸变补偿在代码中只应用于旋转部分,注释掉了平移部分)

featureExtraction.cpp :

- 主要功能:

- 提取点云边缘特征和面特征

mapOptimization.cpp:

- 主要功能:

- 将提取到点云特征与地图中的边缘特征和面特征进行配准

- 配准后得到当前帧在地图中的位姿

- 图优化: 将lidar的帧间约束,回环的约束,(GPS因子)添加到因子图中

imuPreintegration.cpp:一开始并没有工作,只有收到lidar odometry后才会工作

- 主要功能:

- 图优化:lidar odometry和IMU的帧间约束添加到因子图中

- 估计IMU零偏

imuPreintegration.cpp

主函数主要存在1个类mapOptimization和两个线程。

// 回环检测线程

std::thread loopthread(&mapOptimization::loopClosureThread, &MO);

// 可视化线程

std::thread visualizeMapThread(&mapOptimization::visualizeGlobalMapThread, &MO);

1. mapOptimization 类

构造函数写了一堆内容,大部分都是用于可视化的,真正关键的是 laserCloudInfoHandler(),lidar帧的回调函数。

mapOptimization()

{

ISAM2Params parameters;

parameters.relinearizeThreshold = 0.1;

parameters.relinearizeSkip = 1;

isam = new ISAM2(parameters);

pubKeyPoses = nh.advertise<sensor_msgs::PointCloud2>("lio_sam/mapping/trajectory", 1);

pubLaserCloudSurround = nh.advertise<sensor_msgs::PointCloud2>("lio_sam/mapping/map_global", 1);

pubLaserOdometryGlobal = nh.advertise<nav_msgs::Odometry> ("lio_sam/mapping/odometry", 1);

pubLaserOdometryIncremental = nh.advertise<nav_msgs::Odometry> ("lio_sam/mapping/odometry_incremental", 1);

pubPath = nh.advertise<nav_msgs::Path>("lio_sam/mapping/path", 1);

subCloud = nh.subscribe<lio_sam::cloud_info>("lio_sam/feature/cloud_info", 1, &mapOptimization::laserCloudInfoHandler, this, ros::TransportHints().tcpNoDelay());

subGPS = nh.subscribe<nav_msgs::Odometry> (gpsTopic, 200, &mapOptimization::gpsHandler, this, ros::TransportHints().tcpNoDelay());

// 除了系统的回环检测外,还有人为指定的回环

subLoop = nh.subscribe<std_msgs::Float64MultiArray>("lio_loop/loop_closure_detection", 1, &mapOptimization::loopInfoHandler, this, ros::TransportHints().tcpNoDelay());

// 订阅一个保存地图功能的服务

srvSaveMap = nh.advertiseService("lio_sam/save_map", &mapOptimization::saveMapService, this);

pubHistoryKeyFrames = nh.advertise<sensor_msgs::PointCloud2>("lio_sam/mapping/icp_loop_closure_history_cloud", 1);

pubIcpKeyFrames = nh.advertise<sensor_msgs::PointCloud2>("lio_sam/mapping/icp_loop_closure_corrected_cloud", 1);

pubLoopConstraintEdge = nh.advertise<visualization_msgs::MarkerArray>("/lio_sam/mapping/loop_closure_constraints", 1);

pubRecentKeyFrames = nh.advertise<sensor_msgs::PointCloud2>("lio_sam/mapping/map_local", 1);

pubRecentKeyFrame = nh.advertise<sensor_msgs::PointCloud2>("lio_sam/mapping/cloud_registered", 1);

pubCloudRegisteredRaw = nh.advertise<sensor_msgs::PointCloud2>("lio_sam/mapping/cloud_registered_raw", 1);

// 体素滤波设置栅格大小

downSizeFilterCorner.setLeafSize(mappingCornerLeafSize, mappingCornerLeafSize, mappingCornerLeafSize);

downSizeFilterSurf.setLeafSize(mappingSurfLeafSize, mappingSurfLeafSize, mappingSurfLeafSize);

downSizeFilterICP.setLeafSize(mappingSurfLeafSize, mappingSurfLeafSize, mappingSurfLeafSize);

downSizeFilterSurroundingKeyPoses.setLeafSize(surroundingKeyframeDensity, surroundingKeyframeDensity, surroundingKeyframeDensity); // for surrounding key poses of scan-to-map optimization

allocateMemory();

}

1.1. laserCloudInfoHandler

void laserCloudInfoHandler(const lio_sam::cloud_infoConstPtr& msgIn)

{

// 提取当前时间戳

timeLaserInfoStamp = msgIn->header.stamp;

// toSec() : 时间戳转换为秒

timeLaserInfoCur = msgIn->header.stamp.toSec();

// extract info and feature cloud

// 提取cloudinfo中的角点和面点

cloudInfo = *msgIn;

pcl::fromROSMsg(msgIn->cloud_corner, *laserCloudCornerLast);

pcl::fromROSMsg(msgIn->cloud_surface, *laserCloudSurfLast);

std::lock_guard<std::mutex> lock(mtx);

static double timeLastProcessing = -1;

// 控制后端频率,两帧处理一帧

if (timeLaserInfoCur - timeLastProcessing >= mappingProcessInterval)

{

timeLastProcessing = timeLaserInfoCur;

// 更新当前匹配结果的初始位姿

updateInitialGuess();

// 提取当前帧相关的关键帧并且构建点云局部地图

extractSurroundingKeyFrames();

// 对当前帧进行下采样

downsampleCurrentScan();

// 对点云配准进行优化问题构建求解

scan2MapOptimization();

// 根据配准结果确定是否是关键帧

saveKeyFramesAndFactor();

// 调整全局轨迹

correctPoses();

// 将lidar里程计信息发送出去

publishOdometry();

// 发送可视化点云信息

publishFrames();

}

}

1.1.1. updateInitialGuess() : 更新当前匹配结果的初始位姿

我们回忆一下imageProjection.cpp,它提供了lidar帧点云,同时也包含了IMU里程计和IMU旋转角。一般情况下, 直接把它提供的里程计给到位姿的初始估计就可以了。

但是对于第一帧而言,这就是一个先有鸡还是先有蛋的问题了。这里cloudInfo来源于imageProjection.cpp,而imageProjection的里程计来源于 IMUPreintegration.cpp,IMUPreintegration要想提供高频的里程计,就需要mapOptimization.cpp先提供低频的里程计,而这里就是mapOptimization,绕了一圈回来了。

所以来自imageProjection的第一帧lidar点云数据是没有里程计数据的,这个函数得区分第一帧还是后续帧这两种情况。很多解析都把里程计理解成两个,IMU里程计和lidar里程计,我觉得这样命名是容易歧义的,代码里这两个里程计都是lidar->world的表示,唯二的区别是在不同的地方生成的和频率不一样。

这个函数的核心思想是分了当前是第一帧,第二帧,>=第三帧这三种情况的:

当前是第一帧:输入数据只有IMU旋转变换,没有里程计输入,所以第一帧位姿初始化为IMU的旋转数据;

当前是第二帧:此时输入数据开始有里程计了,但是没有上一个里程计,所以这两帧的位姿变换得通过IMU数据获得,然后变换矩阵作用到上一帧位姿上得到当前帧位姿初始值。

>=第三帧:上一帧输入数据就有里程计了,那么这两帧之间的来自输入里程计的位姿变换就能求出来,然后变换矩阵作用到上一帧位姿上得到当前帧位姿初始值。

-

- 如果是第一帧,肯定没有里程计数据,但是有IMU数据,所以把开始时的旋转角记录下来作为第一帧位姿的初始估计

if (cloudKeyPoses3D->points.empty())

{

// 当前帧位姿的旋转部分,用激光帧信息中的RPY(来自imu原始数据)初始化

transformTobeMapped[0] = cloudInfo.imuRollInit;

transformTobeMapped[1] = cloudInfo.imuPitchInit;

transformTobeMapped[2] = cloudInfo.imuYawInit;

// 无论VIO还是LIO, 系统的不可观都是4自由度, 平移+yaw角,这里虽然有磁力计将yaw对齐,但是也可以考虑不使用yaw

if (!useImuHeadingInitialization)

transformTobeMapped[2] = 0;

// 保存磁力计得到的位姿,平移置为0

lastImuTransformation = pcl::getTransformation(0, 0, 0, cloudInfo.imuRollInit, cloudInfo.imuPitchInit, cloudInfo.imuYawInit); // save imu before return;

return;

}

-

- 如果是第二帧lidar,首先把里程计记录下来

static bool lastImuPreTransAvailable = false;

static Eigen::Affine3f lastImuPreTransformation;

// 如果有预积分节点提供的里程计

if (cloudInfo.odomAvailable == true)

{

// 将当前帧的初始估计位姿(来自imu里程计)转化为eigen的数据结构保存下来,后面用来计算增量位姿变换

Eigen::Affine3f transBack = pcl::getTransformation(cloudInfo.initialGuessX, cloudInfo.initialGuessY, cloudInfo.initialGuessZ,

cloudInfo.initialGuessRoll, cloudInfo.initialGuessPitch, cloudInfo.initialGuessYaw);

// 这个标志位表示是否收到过第一帧预积分里程计信息

if (lastImuPreTransAvailable == false)

{

// 将当前里程计结果记录下来

lastImuPreTransformation = transBack;

// 收到第一个里程计数据以后这个标志位就是true

lastImuPreTransAvailable = true;

}

我们跳过后面的else{},直接看后面的内容:

这个时候就忽略掉平移了,只看旋转。上一帧IMU旋转知道,当前IMU旋转知道,那么旋转的变化量就能知道,作用到上一帧位姿后就能得到当前位姿估计了。

if (cloudInfo.imuAvailable == true)

{

// 初值计算方式和上面相同,只不过注意平移置0

// 当前帧的姿态角(来自原始imu数据) Twli+1

Eigen::Affine3f transBack = pcl::getTransformation(0, 0, 0, cloudInfo.imuRollInit, cloudInfo.imuPitchInit, cloudInfo.imuYawInit);

// 当前帧相对于前一帧的姿态变换 Tlili+1 = Twli.inv * Twli+1

Eigen::Affine3f transIncre = lastImuTransformation.inverse() * transBack;

// 前一帧的位姿(来自map)Twli

Eigen::Affine3f transTobe = trans2Affine3f(transformTobeMapped);

// 当前帧的位姿 Twli+1 = Twli * Tlili+1

Eigen::Affine3f transFinal = transTobe * transIncre;

// 更新当前帧位姿transformTobeMapped Twri+1

pcl::getTranslationAndEulerAngles(transFinal, transformTobeMapped[3], transformTobeMapped[4], transformTobeMapped[5],

transformTobeMapped[0], transformTobeMapped[1], transformTobeMapped[2]);

lastImuTransformation = pcl::getTransformation(0, 0, 0, cloudInfo.imuRollInit, cloudInfo.imuPitchInit, cloudInfo.imuYawInit); // save imu before return;

return;

}

- 3.如果是第三帧以后,就可以根据输入里程计的变化量作用到上一帧位姿上获得当前帧位姿的估计了

else {

// 如果是第三帧以后,就可以根据输入里程计的变化量作用到上一帧位姿上,获得当前帧位姿的估计。

// 如果不是第一次收到,就计算上一个里程计的结果与当前里程计结果之间的delta pose

// 由IMU里程计得到 : Tlili+1 = Tl0li.inv * Tl0li+1

Eigen::Affine3f transIncre = lastImuPreTransformation.inverse() * transBack;

// 前一帧的最佳位姿 Twli

Eigen::Affine3f transTobe = trans2Affine3f(transformTobeMapped);

// 将这个增量加到上一帧最佳位姿上去,就是当前帧位姿的一个先验估计位姿

// Twli+1 = Twli * Tlili+1

Eigen::Affine3f transFinal = transTobe * transIncre;

// 将eigen变量转化为欧拉角和平移的形式

pcl::getTranslationAndEulerAngles(transFinal, transformTobeMapped[3], transformTobeMapped[4], transformTobeMapped[5],

transformTobeMapped[0], transformTobeMapped[1], transformTobeMapped[2]);

// 同理,把当前帧的值保存下来

lastImuPreTransformation = transBack;

// 虽然有里程计信息,仍然需要把IMU磁力计得到的旋转记录下来

lastImuTransformation = pcl::getTransformation(0, 0, 0, cloudInfo.imuRollInit, cloudInfo.imuPitchInit, cloudInfo.imuYawInit); // save imu before return;

return;

}

1.1.2. extractSurroundingKeyFrames() :提取当前帧相关的关键帧并且构建点云局部地图

进入函数,发现跳到了extractNearby(),你会发现这和基于位姿信息的回环检测比较像:把时间和最新帧近的地图都找出来,这些地图肯定都很近;然后再把时间久远但是距离近的帧对应的地图找过来,由于是lidar,你不用考虑朝向,只考虑距离就好了。

{

// 如果当前没有关键帧,就return

if (cloudKeyPoses3D->points.empty() == true)

return;

// 提取当前帧相关的关键帧并且构建点云局部地图

extractNearby();

}

1.1.2.1. extractNearby()

- 找出与当前帧距离相近的帧:

kdtreeSurroundingKeyPoses->setInputCloud(cloudKeyPoses3D); // create kd-tree

// 根据最后一个kf的位置,提取一定距离内的关键帧(最近邻搜索,以上一个关键帧的3d位置为起点)

kdtreeSurroundingKeyPoses->radiusSearch(cloudKeyPoses3D->back(), (double)surroundingKeyframeSearchRadius, pointSearchInd, pointSearchSqDis);

// 根据查询的结果,把这些点的位置存进一个点云结构中

for (int i = 0; i < (int)pointSearchInd.size(); ++i)

{

int id = pointSearchInd[i];

surroundingKeyPoses->push_back(cloudKeyPoses3D->points[id]);

}

// 避免关键帧过多,因此做一个下采样

downSizeFilterSurroundingKeyPoses.setInputCloud(surroundingKeyPoses);

downSizeFilterSurroundingKeyPoses.filter(*surroundingKeyPosesDS);

// 确认每个下采样后的点的索引,就使用一个最近邻搜索,其索引赋值给这个点的intensity数据位

for(auto& pt : surroundingKeyPosesDS->points)

{

kdtreeSurroundingKeyPoses->nearestKSearch(pt, 1, pointSearchInd, pointSearchSqDis);

pt.intensity = cloudKeyPoses3D->points[pointSearchInd[0]].intensity;

}

// also extract some latest key frames in case the robot rotates in one position

int numPoses = cloudKeyPoses3D->size();

- 找出与当前帧时间相近的帧:

for (int i = numPoses-1; i >= 0; --i)

{

// 最近10秒的关键帧也保存下来

if (timeLaserInfoCur - cloudKeyPoses6D->points[i].time < 10.0)

surroundingKeyPosesDS->push_back(cloudKeyPoses3D->points[i]);

else

break;

}

- 根据筛选出来的关键帧进行局部地图构建

extractCloud(surroundingKeyPosesDS);

1.1.2.1.1. extractCloud(): 构建用于scan-to-map匹配的局部点云地图

// 根据筛选出来的关键帧进行局部地图构建

void extractCloud(pcl::PointCloud<PointType>::Ptr cloudToExtract)

{

// fuse the map

// 分别存储角点和面点相关的局部地图

laserCloudCornerFromMap->clear();

laserCloudSurfFromMap->clear();

for (int i = 0; i < (int)cloudToExtract->size(); ++i)

{

// 简单校验一下关键帧距离不能太远,这个实际上太会触发

if (pointDistance(cloudToExtract->points[i], cloudKeyPoses3D->back()) > surroundingKeyframeSearchRadius)

continue;

// 取出提取出来的关键帧的索引

int thisKeyInd = (int)cloudToExtract->points[i].intensity;

// 如果这个关键帧对应的点云信息已经存储在一个地图容器中

if (laserCloudMapContainer.find(thisKeyInd) != laserCloudMapContainer.end())

{

// transformed cloud available

// 直接从容器中取出来加到局部地图中

*laserCloudCornerFromMap += laserCloudMapContainer[thisKeyInd].first;

*laserCloudSurfFromMap += laserCloudMapContainer[thisKeyInd].second;

} else {

// transformed cloud not available

// 如果这个点云没有实现存取,那么就通过该帧对应的位姿,把该帧点云从当前帧的位姿转移到世界坐标系下

pcl::PointCloud<PointType> laserCloudCornerTemp = *transformPointCloud(cornerCloudKeyFrames[thisKeyInd], &cloudKeyPoses6D->points[thisKeyInd]);

pcl::PointCloud<PointType> laserCloudSurfTemp = *transformPointCloud(surfCloudKeyFrames[thisKeyInd], &cloudKeyPoses6D->points[thisKeyInd]);

// 点云转换之后加到局部地图中

*laserCloudCornerFromMap += laserCloudCornerTemp;

*laserCloudSurfFromMap += laserCloudSurfTemp;

// 把转换后的面点和角点存进这个容器中,方便后续直接加入点云地图,避免点云转换的操作,节约时间

laserCloudMapContainer[thisKeyInd] = make_pair(laserCloudCornerTemp, laserCloudSurfTemp);

}

}

// Downsample the surrounding corner key frames (or map)

// 把提取的关键帧点云转到世界坐标系下后,避免点云过度密集,因此对面点和角点的局部地图做一个下采样的过程

downSizeFilterCorner.setInputCloud(laserCloudCornerFromMap);

downSizeFilterCorner.filter(*laserCloudCornerFromMapDS);

laserCloudCornerFromMapDSNum = laserCloudCornerFromMapDS->size();

// Downsample the surrounding surf key frames (or map)

downSizeFilterSurf.setInputCloud(laserCloudSurfFromMap);

downSizeFilterSurf.filter(*laserCloudSurfFromMapDS);

laserCloudSurfFromMapDSNum = laserCloudSurfFromMapDS->size();

// clear map cache if too large

// 如果这个局部地图太大,就clear一下,避免占用内存过大

if (laserCloudMapContainer.size() > 1000)

laserCloudMapContainer.clear();

}

1.1.3. scan2MapOptimization()⭐ :对点云配准进行优化问题构建求解

该部分代码的核心思想是两拨点云匹配,求出相对位姿变换,然后作用到当前位姿上,实现位姿矫正。最简单的办法就直接用pcl的icp就好了,实际上代码里回环检测的部分就是用pcl的icp的。那为什么这里作者要自己实现呢?

因为传统的icp匹配,是点和点之间的匹配,对初始位姿(差的不能太大)和点云质量(完整度/噪声)要求很高。但是这里,用的是特征的匹配:点与面之间的匹配,点与线之间的匹配,会带来更多的鲁棒性。

// 对点云配准进行优化问题构建求解

void scan2MapOptimization()

{

// 如果没有关键帧,那也没办法做当前帧到局部地图的匹配

if (cloudKeyPoses3D->points.empty())

return;

// 判断当前帧的角点和面点数是否足够

if (laserCloudCornerLastDSNum > edgeFeatureMinValidNum && laserCloudSurfLastDSNum > surfFeatureMinValidNum)

{

// 分别把角点面点局部地图构建kdtree

kdtreeCornerFromMap->setInputCloud(laserCloudCornerFromMapDS);

kdtreeSurfFromMap->setInputCloud(laserCloudSurfFromMapDS);

// 迭代求解

for (int iterCount = 0; iterCount < 30; iterCount++)

{

// 每次迭代清空特征点集合

laserCloudOri->clear();

coeffSel->clear();

// 边缘点的优化

cornerOptimization();

// 面点的优化

surfOptimization();

// 结合残差和雅克比

combineOptimizationCoeffs();

// 高斯牛顿下降法

if (LMOptimization(iterCount) == true)

break;

}

// 优化问题结束

transformUpdate();

} else {

ROS_WARN("Not enough features! Only %d edge and %d planar features available.", laserCloudCornerLastDSNum, laserCloudSurfLastDSNum);

}

}

1.1.3.1. void cornerOptimization() : 边缘点的优化

这部分内容相当于点-线icp,过程为遍历当前帧地图点,转到world系下,找到局部地图里和它最近的5个点

void cornerOptimization()

{

updatePointAssociateToMap();

// 使用openmp并行加速(适用于独立的循环)

#pragma omp parallel for num_threads(numberOfCores)

// 遍历当前帧的角点

for (int i = 0; i < laserCloudCornerLastDSNum; i++)

{

PointType pointOri, pointSel, coeff;

std::vector<int> pointSearchInd;

std::vector<float> pointSearchSqDis;

pointOri = laserCloudCornerLastDS->points[i];

// 将该点从当前帧通过初始的位姿转换到地图坐标系下去

pointAssociateToMap(&pointOri, &pointSel);

// 在角点地图里寻找距离当前点比较近的五个点

kdtreeCornerFromMap->nearestKSearch(pointSel, 5, pointSearchInd, pointSearchSqDis);

构建协方差矩阵并分解:

// 定义协方差矩阵,特征值,特征向量的容器

cv::Mat matA1(3, 3, CV_32F, cv::Scalar::all(0));

cv::Mat matD1(1, 3, CV_32F, cv::Scalar::all(0));

cv::Mat matV1(3, 3, CV_32F, cv::Scalar::all(0));

// 计算找到的点中距离当前点最远的点,如果距离太大那说明这个约束不可信,就跳过

if (pointSearchSqDis[4] < 1.0) {

float cx = 0, cy = 0, cz = 0;

/// 计算协方差矩阵

// 首先计算均值

for (int j = 0; j < 5; j++) {

cx += laserCloudCornerFromMapDS->points[pointSearchInd[j]].x;

cy += laserCloudCornerFromMapDS->points[pointSearchInd[j]].y;

cz += laserCloudCornerFromMapDS->points[pointSearchInd[j]].z;

}

cx /= 5; cy /= 5; cz /= 5;

float a11 = 0, a12 = 0, a13 = 0, a22 = 0, a23 = 0, a33 = 0;

for (int j = 0; j < 5; j++) {

float ax = laserCloudCornerFromMapDS->points[pointSearchInd[j]].x - cx;

float ay = laserCloudCornerFromMapDS->points[pointSearchInd[j]].y - cy;

float az = laserCloudCornerFromMapDS->points[pointSearchInd[j]].z - cz;

a11 += ax * ax; a12 += ax * ay; a13 += ax * az;

a22 += ay * ay; a23 += ay * az;

a33 += az * az;

}

a11 /= 5; a12 /= 5; a13 /= 5; a22 /= 5; a23 /= 5; a33 /= 5;

matA1.at<float>(0, 0) = a11; matA1.at<float>(0, 1) = a12; matA1.at<float>(0, 2) = a13;

matA1.at<float>(1, 0) = a12; matA1.at<float>(1, 1) = a22; matA1.at<float>(1, 2) = a23;

matA1.at<float>(2, 0) = a13; matA1.at<float>(2, 1) = a23; matA1.at<float>(2, 2) = a33;

// 特征值分解

cv::eigen(matA1, matD1, matV1);

- 判断局部地图里的这5个点能否组成一条直线:

// 这是线性特征,要求最大特征值大于3倍的次大特征值

if (matD1.at<float>(0, 0) > 3 * matD1.at<float>(0, 1)) {

// 当前帧角点坐标(map坐标系)

float x0 = pointSel.x;

float y0 = pointSel.y;

float z0 = pointSel.z;

// 特征向量对应的就是直线的方向向量

// 通过点的均值往两边拓展,构成一条线的两个端点

float x1 = cx + 0.1 * matV1.at<float>(0, 0);

float y1 = cy + 0.1 * matV1.at<float>(0, 1);

float z1 = cz + 0.1 * matV1.at<float>(0, 2);

float x2 = cx - 0.1 * matV1.at<float>(0, 0);

float y2 = cy - 0.1 * matV1.at<float>(0, 1);

float z2 = cz - 0.1 * matV1.at<float>(0, 2);

- 计算当前帧的当前点与这条直线之间的距离:

lio-sam中对角点特征点残差方程定义为:

式中 P i + 1 , k e P^e_{i+1,k} Pi+1,ke表示在第i+1时刻第k个特征点通过估计的位姿转换到世界坐标系下的特征点坐标, P i , u e P^e_{i,u} Pi,ue, P i . v e P^e_{i.v} Pi.ve表示第k个角点特征点在地图中对应的边线特征的上的两个坐标点。该公式的原理可用下图说明。

式中 P i + 1 , k e P^e_{i+1,k} Pi+1,ke表示在第i+1时刻第k个特征点通过估计的位姿转换到世界坐标系下的特征点坐标, P i , u e P^e_{i,u} Pi,ue, P i . v e P^e_{i.v} Pi.ve表示第k个角点特征点在地图中对应的边线特征的上的两个坐标点。该公式的原理可用下图说明。

∣ A O ⃗ × B O ⃗ ∣ = ∣ i ⃗ j ⃗ k ⃗ x 0 − x 1 y 0 − y 1 z 0 − z 1 x 0 − x 2 y 0 − y 2 z 0 − z 2 ∣ |\vec{AO} \times \vec{BO} |=\begin{vmatrix} \vec i&\vec j&\vec k\\ x_0-x_1&y_0-y_1&z_0-z_1\\ x_0-x_2&y_0-y_2&z_0-z_2\\ \end{vmatrix} ∣AO×BO∣=

ix0−x1x0−x2jy0−y1y0−y2kz0−z1z0−z2

= [ ( y 0 − y 1 ) ( z 0 − z 2 ) − ( y 0 − y 2 ) ( z 0 − z 1 ) ] i ⃗ + [ ( x 0 − x 2 ) ( z 0 − z 1 ) − ( x 0 − x 1 ) ( z 0 − z 2 ) ] j ⃗ + [ ( x 0 − x 1 ) ( y 0 − y 2 ) − ( x 0 − x 2 ) ( y 0 − y 1 ) ] ) k ⃗ = [(y_0-y_1)(z_0-z_2)-(y_0-y_2)(z_0-z_1)]\vec i + [(x_0-x_2)(z_0-z_1)-(x_0-x_1)(z_0-z_2)]\vec j+[(x_0-x_1)(y_0-y_2)-(x_0-x_2)(y_0-y_1)])\vec k =[(y0−y1)(z0−z2)−(y0−y2)(z0−z1)]i+[(x0−x2)(z0−z1)−(x0−x1)(z0−z2)]j+[(x0−x1)(y0−y2)−(x0−x2)(y0−y1)])k

代码中a012即相当于 A O ⃗ × B O ⃗ \vec{AO} \times \vec{BO} AO×BO的模长

float a012 = sqrt(((x0 - x1)*(y0 - y2) - (x0 - x2)*(y0 - y1)) * ((x0 - x1)*(y0 - y2) - (x0 - x2)*(y0 - y1))

+ ((x0 - x1)*(z0 - z2) - (x0 - x2)*(z0 - z1)) * ((x0 - x1)*(z0 - z2) - (x0 - x2)*(z0 - z1))

+ ((y0 - y1)*(z0 - z2) - (y0 - y2)*(z0 - z1)) * ((y0 - y1)*(z0 - z2) - (y0 - y2)*(z0 - z1)));

代码中l12即相当于 A B ⃗ \vec{AB} AB 的模长

float l12 = sqrt((x1 - x2)*(x1 - x2) + (y1 - y2)*(y1 - y2) + (z1 - z2)*(z1 - z2));

A O ⃗ × B O ⃗ \vec{AO} \times \vec{BO} AO×BO相当于上图中的 O M ⃗ \vec{OM} OM,根据右手法则,其方向为垂直向下。则 B A ⃗ × O M ⃗ \vec{BA} \times \vec{OM} BA×OM相当于 C O ⃗ \vec{CO} CO,对 C O ⃗ \vec{CO} CO求导相当于求解点到直线距离的梯度下降方向。

∣ B A ⃗ × O M ⃗ ∣ = ∣ i ⃗ j ⃗ k ⃗ x 1 − x 2 y 1 − y 2 z 1 − z 2 ( y 0 − y 1 ) ( z 0 − z 2 ) − ( y 0 − y 2 ) ( z 0 − z 1 ) ( x 0 − x 2 ) ( z 0 − z 1 ) − ( x 0 − x 1 ) ( z 0 − z 2 ) ( x 0 − x 1 ) ( y 0 − y 2 ) − ( x 0 − x 2 ) ( y 0 − y 1 ) ∣ |\vec{BA} \times \vec{OM} |=\begin{vmatrix} \vec i&\vec j&\vec k\\ x_1-x_2&y_1-y_2&z_1-z_2\\ (y_0-y_1)(z_0-z_2)-(y_0-y_2)(z_0-z_1)&(x_0-x_2)(z_0-z_1)-(x_0-x_1)(z_0-z_2)&(x_0-x_1)(y_0-y_2)-(x_0-x_2)(y_0-y_1)\\ \end{vmatrix} ∣BA×OM∣=

ix1−x2(y0−y1)(z0−z2)−(y0−y2)(z0−z1)jy1−y2(x0−x2)(z0−z1)−(x0−x1)(z0−z2)kz1−z2(x0−x1)(y0−y2)−(x0−x2)(y0−y1)

同代码la,lb,lc,代码除以/ a012 / l12即得到梯度下降的单位方向向量

float la = ((y1 - y2)*((x0 - x1)*(y0 - y2) - (x0 - x2)*(y0 - y1))

+ (z1 - z2)*((x0 - x1)*(z0 - z2) - (x0 - x2)*(z0 - z1))) / a012 / l12;

float lb = -((x1 - x2)*((x0 - x1)*(y0 - y2) - (x0 - x2)*(y0 - y1))

- (z1 - z2)*((y0 - y1)*(z0 - z2) - (y0 - y2)*(z0 - z1))) / a012 / l12;

float lc = -((x1 - x2)*((x0 - x1)*(z0 - z2) - (x0 - x2)*(z0 - z1))

+ (y1 - y2)*((y0 - y1)*(z0 - z2) - (y0 - y2)*(z0 - z1))) / a012 / l12;

计算该点在优化中占比的权重,和它与直线的距离有关:

// 一个简单的核函数,残差越大权重降低

float s = 1 - 0.9 * fabs(ld2);

把这个权重乘到法向量和距离上,并保存下来,用于一会进行的优化

coeff.x = s * la;

coeff.y = s * lb;

coeff.z = s * lc;

coeff.intensity = s * ld2;

// 如果残差小于10cm,就认为是一个有效的约束

if (s > 0.1) {

laserCloudOriCornerVec[i] = pointOri;

coeffSelCornerVec[i] = coeff;

laserCloudOriCornerFlag[i] = true;

}

1.1.3.2. surfOptimization(): 面点的优化

这部分内容相当于点-面icp,过程为遍历当前帧地图点,转到world系下,找到局部地图里和它最近的5个点

// 遍历当前帧平面点集合

#pragma omp parallel for num_threads(numberOfCores)

for (int i = 0; i < laserCloudSurfLastDSNum; i++)

{

PointType pointOri, pointSel, coeff;

std::vector<int> pointSearchInd;

std::vector<float> pointSearchSqDis;

// 平面点(坐标还是lidar系)

pointOri = laserCloudSurfLastDS->points[i];

// 根据当前帧位姿,变换到世界坐标系(map系)下

pointAssociateToMap(&pointOri, &pointSel);

// 在局部平面点map中查找当前平面点相邻的5个平面点

kdtreeSurfFromMap->nearestKSearch(pointSel, 5, pointSearchInd, pointSearchSqDis);

判断局部地图这5个点能否构成一个平面:

Eigen::Matrix<float, 5, 3> matA0;

Eigen::Matrix<float, 5, 1> matB0;

Eigen::Vector3f matX0;

// 平面方程Ax+By+Cz+1 = 0

matA0.setZero();

matB0.fill(-1);

matX0.setZero();

// 同样距离不能超过1m

if (pointSearchSqDis[4] < 1.0) {

for (int j = 0; j < 5; j++) {

matA0(j, 0) = laserCloudSurfFromMapDS->points[pointSearchInd[j]].x;

matA0(j, 1) = laserCloudSurfFromMapDS->points[pointSearchInd[j]].y;

matA0(j, 2) = laserCloudSurfFromMapDS->points[pointSearchInd[j]].z;

}

// 求解Ax=b这个超定方程

matX0 = matA0.colPivHouseholderQr().solve(matB0);

// 求出来x就是这个平面的法向量

float pa = matX0(0, 0);

float pb = matX0(1, 0);

float pc = matX0(2, 0);

float pd = 1;

float ps = sqrt(pa * pa + pb * pb + pc * pc);

// 归一化,将法向量模长统一为1

pa /= ps; pb /= ps; pc /= ps; pd /= ps;

bool planeValid = true;

for (int j = 0; j < 5; j++) {

// 每个点代入平面方程,计算点到面的距离,如果距离大于0.2m,认为这个平面曲率偏大,就是无效的平面

if (fabs(pa * laserCloudSurfFromMapDS->points[pointSearchInd[j]].x +

pb * laserCloudSurfFromMapDS->points[pointSearchInd[j]].y +

pc * laserCloudSurfFromMapDS->points[pointSearchInd[j]].z + pd) > 0.2) {

planeValid = false;

break;

}

}

判断局部地图这5个点能否构成一个平面:

// 如果通过了平面的检验

if (planeValid) {

// 计算当前点到平面的距离

float pd2 = pa * pointSel.x + pb * pointSel.y + pc * pointSel.z + pd;

// 为了让面点的权重更大?

float s = 1 - 0.9 * fabs(pd2) / sqrt(sqrt(pointSel.x * pointSel.x

+ pointSel.y * pointSel.y + pointSel.z * pointSel.z));

coeff.x = s * pa;

coeff.y = s * pb;

coeff.z = s * pc;

coeff.intensity = s * pd2;

if (s > 0.1) {

laserCloudOriSurfVec[i] = pointOri;

coeffSelSurfVec[i] = coeff;

laserCloudOriSurfFlag[i] = true;

}

}

1.1.3.3. combineOptimizationCoeffs() : 将角点和面点的约束统一到一起

void combineOptimizationCoeffs()

{

// combine corner coeffs

for (int i = 0; i < laserCloudCornerLastDSNum; ++i){

// 只有标志位为true的时候才是有效的约束

if (laserCloudOriCornerFlag[i] == true){

laserCloudOri->push_back(laserCloudOriCornerVec[i]);

coeffSel->push_back(coeffSelCornerVec[i]);

}

}

// combine surf coeffs

for (int i = 0; i < laserCloudSurfLastDSNum; ++i){

if (laserCloudOriSurfFlag[i] == true){

laserCloudOri->push_back(laserCloudOriSurfVec[i]);

coeffSel->push_back(coeffSelSurfVec[i]);

}

}

// reset flag for next iteration

// 标志位清零

std::fill(laserCloudOriCornerFlag.begin(), laserCloudOriCornerFlag.end(), false);

std::fill(laserCloudOriSurfFlag.begin(), laserCloudOriSurfFlag.end(), false);

}

1.1.3.4. LMOptimization() : 优化

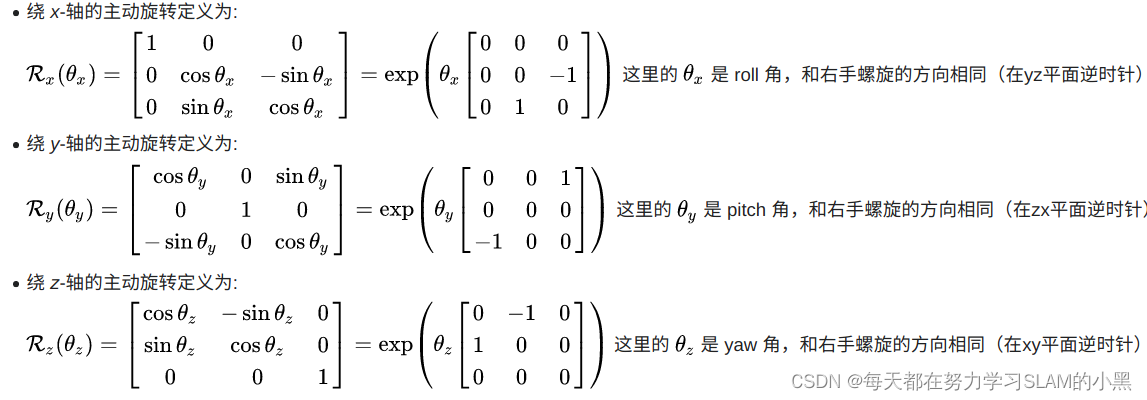

原始的loam代码为了适用于kitti数据集的评测,将lidar坐标系转到相机坐标系。这里lio-sam把原先loam中的代码拷贝了过来,但是为了坐标系的统一,就先转到相机系优化,然后结果转回lidar系

由于lidar坐标系的roll,pitch,yaw相对与相机坐标系的yaw,roll,pitch。lidar的Z-Y-X相对于相机系的Y-X-Z

bool LMOptimization(int iterCount)

{

// This optimization is from the original loam_velodyne by Ji Zhang, need to cope with coordinate transformation

// lidar <- camera --- camera <- lidar

// x = z --- x = y

// y = x --- y = z

// z = y --- z = x

// roll = yaw --- roll = pitch

// pitch = roll --- pitch = yaw

// yaw = pitch --- yaw = roll

// 将lidar坐标系转到相机坐标系

float srx = sin(transformTobeMapped[1]);

float crx = cos(transformTobeMapped[1]);

float sry = sin(transformTobeMapped[2]);

float cry = cos(transformTobeMapped[2]);

float srz = sin(transformTobeMapped[0]);

float crz = cos(transformTobeMapped[0]);

刚刚点线icp和点面icp一共的匹配点对数得超过一定的数量才行,越多越准确:

int laserCloudSelNum = laserCloudOri->size();

if (laserCloudSelNum < 50) {

return false;

}

- 求解高斯牛顿的增量方程求出位姿的增量

由于lidar坐标系的roll,pitch,yaw相对与相机坐标系的yaw,roll,pitch

lidar的Z-Y-X相对于相机系的Y-X-Z

所以会导致旋转矩阵的性质不同,根据不同欧拉角轴旋转顺序生成的旋转矩阵如下图所示:

注意上图数字与顺序对应

∂ e ∂ t = ∂ e W P ⋅ ∂ W P ∂ t \frac{\partial e}{\partial t} = \frac{\partial e}{^W{}P}\cdot \frac{\partial ^W{}P}{\partial t} ∂t∂e=WP∂e⋅∂t∂WP

其中 ∂ e W P \frac{\partial e}{^W{}P} WP∂e =coeff , W P = R ⋅ L P + t ^WP=R\cdot ^L{}P+t WP=R⋅LP+t

此处的R为Y-X-Z的顺序生成的,所以结构为 Y 1 X 2 Z 3 Y_1X_2Z_3 Y1X2Z3

R = [ c o s y ⋅ c o s z + s i n y ⋅ s i n x ⋅ s i n z c o s z ⋅ s i n y ⋅ s i n x c o s x ⋅ s i n y c o s x ⋅ s i n z c o s x ⋅ c o s z − s i n x c o s y ⋅ s i n x ⋅ s i n z − c o s z ⋅ s i n y c o s y ⋅ c o s z ⋅ s i n x + s i n y ⋅ s i n z c o s y ⋅ c o s x ] R=\begin{bmatrix} cosy\cdot cosz+siny \cdot sinx \cdot sinz& cosz\cdot siny\cdot sinx& cosx \cdot siny\\ cosx \cdot sinz& cosx \cdot cosz& -sinx\\ cosy \cdot sinx \cdot sinz - cosz \cdot siny& cosy \cdot cosz \cdot sinx + siny \cdot sinz& cosy \cdot cosx\\ \end{bmatrix} R=

cosy⋅cosz+siny⋅sinx⋅sinzcosx⋅sinzcosy⋅sinx⋅sinz−cosz⋅sinycosz⋅siny⋅sinxcosx⋅coszcosy⋅cosz⋅sinx+siny⋅sinzcosx⋅siny−sinxcosy⋅cosx

L P = [ L P x L P y L P z ] ^L{}P=\begin{bmatrix} ^L{}P_x\\ ^L{}P_y\\ ^L{}P_z\\ \end{bmatrix} LP=

LPxLPyLPz

∂ W P ∂ r o l l = [ ∂ R o o ∂ r o l l L P x + ∂ R o 1 ∂ r o l l L P y + ∂ R o 2 ∂ r o l l L P z ∂ R 1 o ∂ p i t c h L P x + ∂ R 11 ∂ p i t c h L P y + ∂ R 12 ∂ p i t c h L P z ∂ R 2 o ∂ y a w L P x + ∂ R 21 ∂ y a w L P y + ∂ R 22 ∂ y a w L P z ] \frac{\partial^WP}{\partial roll} = \begin{bmatrix} \frac{\partial R_{oo}}{\partial roll}^LP_x+\frac{\partial R_{o1}}{\partial roll}^LP_y+\frac{\partial R_{o2}}{\partial roll}^LP_z\\ \frac{\partial R_{1o}}{\partial pitch}^LP_x+\frac{\partial R_{11}}{\partial pitch}^LP_y+\frac{\partial R_{12}}{\partial pitch}^LP_z\\ \frac{\partial R_{2o}}{\partial yaw}^LP_x+\frac{\partial R_{21}}{\partial yaw}^LP_y+\frac{\partial R_{22}}{\partial yaw}^LP_z\\ \end{bmatrix} ∂roll∂WP=

∂roll∂RooLPx+∂roll∂Ro1LPy+∂roll∂Ro2LPz∂pitch∂R1oLPx+∂pitch∂R11LPy+∂pitch∂R12LPz∂yaw∂R2oLPx+∂yaw∂R21LPy+∂yaw∂R22LPz

= [ s i n y ⋅ c o s x ⋅ s i n z ⋅ L P x + c o s z ⋅ s i n y ⋅ c o s x ⋅ L P y + − s i n x ⋅ s i n y ⋅ L P z − s i n x ⋅ s i n z ⋅ L P x + − s i n x ⋅ c o s z ⋅ L P y + − c o s x ⋅ L P z c o s y ⋅ c o s x ⋅ s i n z ⋅ L P x + c o s y ⋅ c o s z ⋅ c o s x ⋅ L P y + c o s y ⋅ − s i n x ⋅ L P z ] =\begin{bmatrix} siny \cdot cosx \cdot sinz\cdot ^LP_x +cosz\cdot siny\cdot cosx\cdot ^LP_y + -sinx \cdot siny\cdot ^LP_z\\ -sinx \cdot sinz\cdot ^LP_x + -sinx \cdot cosz\cdot ^LP_y + -cosx\cdot ^LP_z\\ cosy \cdot cosx \cdot sinz \cdot ^LP_x + cosy \cdot cosz \cdot cosx \cdot ^LP_y + cosy \cdot -sinx\cdot ^LP_z\\ \end{bmatrix} =

siny⋅cosx⋅sinz⋅LPx+cosz⋅siny⋅cosx⋅LPy+−sinx⋅siny⋅LPz−sinx⋅sinz⋅LPx+−sinx⋅cosz⋅LPy+−cosx⋅LPzcosy⋅cosx⋅sinz⋅LPx+cosy⋅cosz⋅cosx⋅LPy+cosy⋅−sinx⋅LPz

同代码arx

float arx = (crx*sry*srz*pointOri.x + crx*crz*sry*pointOri.y - srx*sry*pointOri.z) * coeff.x

+ (-srx*srz*pointOri.x - crz*srx*pointOri.y - crx*pointOri.z) * coeff.y

+ (crx*cry*srz*pointOri.x + crx*cry*crz*pointOri.y - cry*srx*pointOri.z) * coeff.z;

cv::Mat matA(laserCloudSelNum, 6, CV_32F, cv::Scalar::all(0));

cv::Mat matAt(6, laserCloudSelNum, CV_32F, cv::Scalar::all(0));

cv::Mat matAtA(6, 6, CV_32F, cv::Scalar::all(0));

cv::Mat matB(laserCloudSelNum, 1, CV_32F, cv::Scalar::all(0));

cv::Mat matAtB(6, 1, CV_32F, cv::Scalar::all(0));

cv::Mat matX(6, 1, CV_32F, cv::Scalar::all(0));

PointType pointOri, coeff;

for (int i = 0; i < laserCloudSelNum; i++) {

// 首先将当前点以及点到线(面)的单位向量转到相机系

// lidar -> camera

pointOri.x = laserCloudOri->points[i].y;

pointOri.y = laserCloudOri->points[i].z;

pointOri.z = laserCloudOri->points[i].x;

// lidar -> camera

coeff.x = coeffSel->points[i].y;

coeff.y = coeffSel->points[i].z;

coeff.z = coeffSel->points[i].x;

coeff.intensity = coeffSel->points[i].intensity;

// in camera

// 相机系下的旋转顺序是Y-X-Z对应lidar系下Z-Y-X

// (对roll的导数)

float arx = (crx*sry*srz*pointOri.x + crx*crz*sry*pointOri.y - srx*sry*pointOri.z) * coeff.x

+ (-srx*srz*pointOri.x - crz*srx*pointOri.y - crx*pointOri.z) * coeff.y

+ (crx*cry*srz*pointOri.x + crx*cry*crz*pointOri.y - cry*srx*pointOri.z) * coeff.z;

float ary = ((cry*srx*srz - crz*sry)*pointOri.x

+ (sry*srz + cry*crz*srx)*pointOri.y + crx*cry*pointOri.z) * coeff.x

+ ((-cry*crz - srx*sry*srz)*pointOri.x

+ (cry*srz - crz*srx*sry)*pointOri.y - crx*sry*pointOri.z) * coeff.z;

float arz = ((crz*srx*sry - cry*srz)*pointOri.x + (-cry*crz-srx*sry*srz)*pointOri.y)*coeff.x

+ (crx*crz*pointOri.x - crx*srz*pointOri.y) * coeff.y

+ ((sry*srz + cry*crz*srx)*pointOri.x + (crz*sry-cry*srx*srz)*pointOri.y)*coeff.z;

// lidar -> camera

// 这里就把camera转到lidar了

matA.at<float>(i, 0) = arz;

matA.at<float>(i, 1) = arx;

matA.at<float>(i, 2) = ary;

matA.at<float>(i, 3) = coeff.z;

matA.at<float>(i, 4) = coeff.x;

matA.at<float>(i, 5) = coeff.y;

matB.at<float>(i, 0) = -coeff.intensity;

}

// 构造JTJ以及-JTe矩阵

cv::transpose(matA, matAt);

matAtA = matAt * matA;

matAtB = matAt * matB;

// 求解增量

cv::solve(matAtA, matAtB, matX, cv::DECOMP_QR);

接着是对JTJ矩阵的退化检查,测试发现matE第一行这六个数是依次变小的,如果匹配的好的话这几个数都会很大,甚至比100都大很多,如果跑到后面匹配的越来越差的时候,就会变小了,退化就会经常出现了。

if (iterCount == 0) {

// 检查一下是否有退化的情况

cv::Mat matE(1, 6, CV_32F, cv::Scalar::all(0));

cv::Mat matV(6, 6, CV_32F, cv::Scalar::all(0));

cv::Mat matV2(6, 6, CV_32F, cv::Scalar::all(0));

// 对JTJ进行特征分解

cv::eigen(matAtA, matE, matV);

matV.copyTo(matV2);

isDegenerate = false;

float eignThre[6] = {

100, 100, 100, 100, 100, 100};

for (int i = 5; i >= 0; i--) {

// 特征值由小到大进行遍历,如果小于阈值就认为退化,特征值可能存在0空间,无法得到最优估计(例如在走廊中,无法估计前进的方向,会存在0空间)

if (matE.at<float>(0, i) < eignThre[i]) {

// 对应的特征向量全部置0

for (int j = 0; j < 6; j++) {

matV2.at<float>(i, j) = 0;

}

isDegenerate = true;

} else {

break;

}

}

matP = matV.inv() * matV2;

}

// 如果发生退化,就对增量进行修改,退化方向不更新

if (isDegenerate)

{

cv::Mat matX2(6, 1, CV_32F, cv::Scalar::all(0));

matX.copyTo(matX2);

matX = matP * matX2;

}

把求得的位姿增量加到当前的位姿上,实现当前轮次对位姿的修正:

// 增量更新 x = x + delta_x

transformTobeMapped[0] += matX.at<float>(0, 0);

transformTobeMapped[1] += matX.at<float>(1, 0);

transformTobeMapped[2] += matX.at<float>(2, 0);

transformTobeMapped[3] += matX.at<float>(3, 0);

transformTobeMapped[4] += matX.at<float>(4, 0);

transformTobeMapped[5] += matX.at<float>(5, 0);

计算俩阈值,判断是否满足停止循环的条件:

float deltaR = sqrt(

pow(pcl::rad2deg(matX.at<float>(0, 0)), 2) +

pow(pcl::rad2deg(matX.at<float>(1, 0)), 2) +

pow(pcl::rad2deg(matX.at<float>(2, 0)), 2));

// 计算更新的旋转和平移大小

float deltaT = sqrt(

pow(matX.at<float>(3, 0) * 100, 2) +

pow(matX.at<float>(4, 0) * 100, 2) +

pow(matX.at<float>(5, 0) * 100, 2));

// 旋转和平移增量足够小,认为优化问题收敛

if (deltaR < 0.05 && deltaT < 0.05) {

return true; // converged

}

return false; // keep optimizing

1.1.3.5. transformUpdate() :把结果和imu进行一些加权融合

void transformUpdate()

{

// 可以获取九轴IMU的世界系下的姿态

if (cloudInfo.imuAvailable == true)

{

// 因为roll和pitch原则上全程可观,因此这里吧lidar推算出来的姿态和磁力计结果做一个加权平均

// 首先判断车翻了没有.. 当然手持设备可以pitch很大,这里主要表面插值产生的奇异性

if (std::abs(cloudInfo.imuPitchInit) < 1.4)

{

double imuWeight = imuRPYWeight; // 陀螺仪角度权重

tf::Quaternion imuQuaternion;

tf::Quaternion transformQuaternion;

double rollMid, pitchMid, yawMid;

// slerp roll

// lidar匹配获得roll角转成四元数

transformQuaternion.setRPY(transformTobeMapped[0], 0, 0);

// imu获得的roll角

imuQuaternion.setRPY(cloudInfo.imuRollInit, 0, 0);

// 使用四元数球面插值

tf::Matrix3x3(transformQuaternion.slerp(imuQuaternion, imuWeight)).getRPY(rollMid, pitchMid, yawMid);

// 插值结果作为roll的最终结果

transformTobeMapped[0] = rollMid;

// slerp pitch

transformQuaternion.setRPY(0, transformTobeMapped[1], 0);

imuQuaternion.setRPY(0, cloudInfo.imuPitchInit, 0);

tf::Matrix3x3(transformQuaternion.slerp(imuQuaternion, imuWeight)).getRPY(rollMid, pitchMid, yawMid);

transformTobeMapped[1] = pitchMid;

}

}

// 对roll pitch和z进行一些约束,主要针对室内2d场景下,已知2d先验可以加上这些约束

transformTobeMapped[0] = constraintTransformation(transformTobeMapped[0], rotation_tollerance);

transformTobeMapped[1] = constraintTransformation(transformTobeMapped[1], rotation_tollerance);

transformTobeMapped[5] = constraintTransformation(transformTobeMapped[5], z_tollerance);

// 最终结果也可以转成eigen的结构

incrementalOdometryAffineBack = trans2Affine3f(transformTobeMapped);

}

1.1.4. saveKeyFramesAndFactor() : 根据配准结果确定是否是关键帧,添加gtsam的因子

- 首先加入lidar里程计的因子

对于第一帧,他把yaw角和平移分量的方差设的非常大,看来是要固定住这几个数。

对于后续的帧,方差设的就很小了。往因子图里加的都是当前帧和上一帧的序号和两帧之间的位姿变换, 同时再把当前帧的位姿加到initialEstimate里。

void addOdomFactor()

{

// 如果是第一帧关键帧

if (cloudKeyPoses3D->points.empty())

{

// 置信度就设置差一点,尤其是不可观的平移和yaw角

noiseModel::Diagonal::shared_ptr priorNoise = noiseModel::Diagonal::Variances((Vector(6) << 1e-2, 1e-2, M_PI*M_PI, 1e8, 1e8, 1e8).finished()); // rad*rad, meter*meter

// 增加先验约束,对第0个节点增加约束

gtSAMgraph.add(PriorFactor<Pose3>(0, trans2gtsamPose(transformTobeMapped), priorNoise));

// 加入节点信息

initialEstimate.insert(0, trans2gtsamPose(transformTobeMapped));

}else{

// 如果不是第一帧,就增加帧间约束

// 这时 帧间约束置信度就设置高一点

noiseModel::Diagonal::shared_ptr odometryNoise = noiseModel::Diagonal::Variances((Vector(6) << 1e-6, 1e-6, 1e-6, 1e-4, 1e-4, 1e-4).finished());

// 转成gtsam的格式

gtsam::Pose3 poseFrom = pclPointTogtsamPose3(cloudKeyPoses6D->points.back());

gtsam::Pose3 poseTo = trans2gtsamPose(transformTobeMapped);

// gtsam::BetweenFactor : 状态量间的约束,约束相邻两状态量之间的差值不会距离该约束过远

// 这是一个帧间约束,分别输入两个节点的id,帧间约束大小以及置信度

gtSAMgraph.add(BetweenFactor<Pose3>(cloudKeyPoses3D->size()-1, cloudKeyPoses3D->size(), poseFrom.between(poseTo), odometryNoise));

// 加入节点信息

initialEstimate.insert(cloudKeyPoses3D->size(), poseTo);

}

}

- 添加GPS因子:

void addGPSFactor()

{

// 如果没有gps信息就算了

if (gpsQueue.empty())

return;

// wait for system initialized and settles down

// 如果有gps信息,但是没有关键帧信息也算了

if (cloudKeyPoses3D->points.empty())

return;

else

{

// 第一个关键帧和最后一个关键帧相差很近也算了,要么刚起步,要么会触发回环

if (pointDistance(cloudKeyPoses3D->front(), cloudKeyPoses3D->back()) < 5.0)

return;

}

// pose covariance small, no need to correct

// gtsam反馈的当前x,y的置信度,如果置信度比较高也不需要gps来打扰

if (poseCovariance(3,3) < poseCovThreshold && poseCovariance(4,4) < poseCovThreshold)

return;

// last gps position

static PointType lastGPSPoint;

while (!gpsQueue.empty())

{

// 把距离当前帧比较早的帧都抛弃

if (gpsQueue.front().header.stamp.toSec() < timeLaserInfoCur - 0.2)

{

// message too old

gpsQueue.pop_front();

}

// 比较晚就索性再等等lidar计算

else if (gpsQueue.front().header.stamp.toSec() > timeLaserInfoCur + 0.2)

{

// message too new

break;

}

else

{

// 说明这个gps时间距离当前帧已经比较近了,那么就把这个数据取出来

nav_msgs::Odometry thisGPS = gpsQueue.front();

gpsQueue.pop_front();

// GPS too noisy, skip

float noise_x = thisGPS.pose.covariance[0];

float noise_y = thisGPS.pose.covariance[7];

float noise_z = thisGPS.pose.covariance[14];

// 如果gps的置信度不高,也就没必要使用了

if (noise_x > gpsCovThreshold || noise_y > gpsCovThreshold)

continue;

// 取出gps的位置

float gps_x = thisGPS.pose.pose.position.x;

float gps_y = thisGPS.pose.pose.position.y;

float gps_z = thisGPS.pose.pose.position.z;

// 通常gps的z没有x,y准,因此这里可以不使用z值

if (!useGpsElevation)

{

gps_z = transformTobeMapped[5];

noise_z = 0.01;

}

// GPS not properly initialized (0,0,0)

// gps的x或者y太小说明还没有初始化好

if (abs(gps_x) < 1e-6 && abs(gps_y) < 1e-6)

continue;

// Add GPS every a few meters

PointType curGPSPoint;

curGPSPoint.x = gps_x;

curGPSPoint.y = gps_y;

curGPSPoint.z = gps_z;

// 加入gps观测不宜太频繁,相邻不超过5m

if (pointDistance(curGPSPoint, lastGPSPoint) < 5.0)

continue;

else

lastGPSPoint = curGPSPoint;

gtsam::Vector Vector3(3);

// gps的置信度,标准差设置成最小1m,也就是不会特别信任gps信号

Vector3 << max(noise_x, 1.0f), max(noise_y, 1.0f), max(noise_z, 1.0f);

noiseModel::Diagonal::shared_ptr gps_noise = noiseModel::Diagonal::Variances(Vector3);

// 调用gtsam中集成的gps约束

gtsam::GPSFactor gps_factor(cloudKeyPoses3D->size(), gtsam::Point3(gps_x, gps_y, gps_z), gps_noise);

gtSAMgraph.add(gps_factor);

// 加入gps之后等同于回环,需要触发较多的isam update

aLoopIsClosed = true;

break;

}

}

}

- 添加回环因子:

void addLoopFactor()

{

// 有一个专门的回环检测线程会检测回环,检测到就会给这个队列塞入回环结果

if (loopIndexQueue.empty())

return;

// 把队列里所有的回环约束都添加进来

for (int i = 0; i < (int)loopIndexQueue.size(); ++i)

{

int indexFrom = loopIndexQueue[i].first;

int indexTo = loopIndexQueue[i].second;

// 闭环边的位姿变换,作为一个帧间约束

gtsam::Pose3 poseBetween = loopPoseQueue[i];

// 回环的置信度就是icp的得分

gtsam::noiseModel::Diagonal::shared_ptr noiseBetween = loopNoiseQueue[i];

// gtsam::BetweenFactor : 状态量间的约束,约束相邻两状态量之间的差值不会距离该约束过远

// 加入约束

gtSAMgraph.add(BetweenFactor<Pose3>(indexFrom, indexTo, poseBetween, noiseBetween));

}

// 清空回环相关队列

loopIndexQueue.clear();

loopPoseQueue.clear();

loopNoiseQueue.clear();

aLoopIsClosed = true;

}

- 所有因子加完了,就调用isam接口更新图模型

isam->update(gtSAMgraph, initialEstimate);

isam->update();

// 这里加入了gps的约束或者回环约束,isam需要进行更多次的优化

if (aLoopIsClosed == true)

{

isam->update();

isam->update();

isam->update();

isam->update();

isam->update();

}

- 将约束和节点信息清空,他们已经被加入到isam中了,因此这里清空不会影响整个优化:

gtSAMgraph.resize(0);

initialEstimate.clear();

- 得到优化后的位姿和累计的方差:

// 优化结果

isamCurrentEstimate = isam->calculateEstimate();

// 取出优化后的最新关键帧位姿

latestEstimate = isamCurrentEstimate.at<Pose3>(isamCurrentEstimate.size()-1);

// 平移信息取出来保存进cloudKeyPoses3D这个结构中,其中索引作为intensity值

thisPose3D.x = latestEstimate.translation().x();

thisPose3D.y = latestEstimate.translation().y();

thisPose3D.z = latestEstimate.translation().z();

thisPose3D.intensity = cloudKeyPoses3D->size(); // this can be used as index

cloudKeyPoses3D->push_back(thisPose3D);

// 保存6d姿态

thisPose6D.x = thisPose3D.x;

thisPose6D.y = thisPose3D.y;

thisPose6D.z = thisPose3D.z;

thisPose6D.intensity = thisPose3D.intensity ; // this can be used as index

thisPose6D.roll = latestEstimate.rotation().roll();

thisPose6D.pitch = latestEstimate.rotation().pitch();

thisPose6D.yaw = latestEstimate.rotation().yaw();

thisPose6D.time = timeLaserInfoCur;

cloudKeyPoses6D->push_back(thisPose6D);

// 保存当前位姿的置信度

poseCovariance = isam->marginalCovariance(isamCurrentEstimate.size()-1);

// save updated transform

// 将优化后的位姿更新到transformTobeMapped数组中,作为当前最佳估计值

transformTobeMapped[0] = latestEstimate.rotation().roll();

transformTobeMapped[1] = latestEstimate.rotation().pitch();

transformTobeMapped[2] = latestEstimate.rotation().yaw();

transformTobeMapped[3] = latestEstimate.translation().x();

transformTobeMapped[4] = latestEstimate.translation().y();

transformTobeMapped[5] = latestEstimate.translation().z();

- 把当前帧点云加到全局地图里,注意它的坐标系是当前时刻的lidar坐标系下,相比保存在world系下的好处就是当位姿被优化之后不用再调整点云坐标了

pcl::PointCloud<PointType>::Ptr thisCornerKeyFrame(new pcl::PointCloud<PointType>());

pcl::PointCloud<PointType>::Ptr thisSurfKeyFrame(new pcl::PointCloud<PointType>());

// 当前帧的点云的角点和面点分别拷贝一下

pcl::copyPointCloud(*laserCloudCornerLastDS, *thisCornerKeyFrame);

pcl::copyPointCloud(*laserCloudSurfLastDS, *thisSurfKeyFrame);

// save key frame cloud

// 关键帧点云保存下来

cornerCloudKeyFrames.push_back(thisCornerKeyFrame);

surfCloudKeyFrames.push_back(thisSurfKeyFrame);

// 根据当前最新位姿更新rviz可视化

updatePath(thisPose6D);

1.1.4.1. updatePath():根据当前最新位姿更新rviz可视化

void updatePath(const PointTypePose& pose_in)

{

geometry_msgs::PoseStamped pose_stamped;

pose_stamped.header.stamp = ros::Time().fromSec(pose_in.time);

pose_stamped.header.frame_id = odometryFrame;

pose_stamped.pose.position.x = pose_in.x;

pose_stamped.pose.position.y = pose_in.y;

pose_stamped.pose.position.z = pose_in.z;

tf::Quaternion q = tf::createQuaternionFromRPY(pose_in.roll, pose_in.pitch, pose_in.yaw);

pose_stamped.pose.orientation.x = q.x();

pose_stamped.pose.orientation.y = q.y();

pose_stamped.pose.orientation.z = q.z();

pose_stamped.pose.orientation.w = q.w();

globalPath.poses.push_back(pose_stamped);

}

1.1.5. correctPoses() :调整全局轨迹

void correctPoses()

{

// 没有关键帧,自然也没有意义

if (cloudKeyPoses3D->points.empty())

return;

// 只有回环以及gps信息这些会触发全局调整信息, 才会触发

if (aLoopIsClosed == true)

{

// clear map cache

// 很多位姿会变化,因子之前的容器内转到世界坐标系下的很多点云就需要调整,因此这里索性清空

laserCloudMapContainer.clear();

// clear path

// 清空里程计轨迹

globalPath.poses.clear();

// update key poses

// 更新因子图中所有变量节点的位姿,也就是所有历史关键帧的位姿

int numPoses = isamCurrentEstimate.size();

for (int i = 0; i < numPoses; ++i)

{

// 更新所有关键帧的位姿

cloudKeyPoses3D->points[i].x = isamCurrentEstimate.at<Pose3>(i).translation().x();

cloudKeyPoses3D->points[i].y = isamCurrentEstimate.at<Pose3>(i).translation().y();

cloudKeyPoses3D->points[i].z = isamCurrentEstimate.at<Pose3>(i).translation().z();

cloudKeyPoses6D->points[i].x = cloudKeyPoses3D->points[i].x;

cloudKeyPoses6D->points[i].y = cloudKeyPoses3D->points[i].y;

cloudKeyPoses6D->points[i].z = cloudKeyPoses3D->points[i].z;

cloudKeyPoses6D->points[i].roll = isamCurrentEstimate.at<Pose3>(i).rotation().roll();

cloudKeyPoses6D->points[i].pitch = isamCurrentEstimate.at<Pose3>(i).rotation().pitch();

cloudKeyPoses6D->points[i].yaw = isamCurrentEstimate.at<Pose3>(i).rotation().yaw();

// 更新里程计轨迹

updatePath(cloudKeyPoses6D->points[i]);

}

// 标志位清空

aLoopIsClosed = false;

}

}

1.1.6. publishOdometry() :发布里程计数据

pubLaserOdometryGlobal发布单纯由mapOptimization经过GTSAM优化后的位姿,它的话题是"lio_sam/mapping/odometry",这个是由transformFusion订阅的,用于与IMU里程计进行融合。

nav_msgs::Odometry laserOdometryROS;

laserOdometryROS.header.stamp = timeLaserInfoStamp;

laserOdometryROS.header.frame_id = odometryFrame;

laserOdometryROS.child_frame_id = "odom_mapping";

laserOdometryROS.pose.pose.position.x = transformTobeMapped[3];

laserOdometryROS.pose.pose.position.y = transformTobeMapped[4];

laserOdometryROS.pose.pose.position.z = transformTobeMapped[5];

laserOdometryROS.pose.pose.orientation = tf::createQuaternionMsgFromRollPitchYaw(transformTobeMapped[0], transformTobeMapped[1], transformTobeMapped[2]);

pubLaserOdometryGlobal.publish(laserOdometryROS);

pubLaserOdometryIncremental的话题是"lio_sam/mapping/odometry_incremental",它发布的laserOdomIncremental位姿是经过IMU数据加权融合的,这个是由IMUPreintegration订阅的,用于制作高频的IMU里程计;

两个位姿(pubLaserOdometryGlobal, pubLaserOdometryIncremental)虽然数值可能区别不大,但是用途有着巨大的区别。注意laserOdomIncremental会有一个是否退化isDegenerate的判断,这个判断是在scan2map的LMOptimization那里计算匹配的J矩阵时获得的,如果发生退化,那么标志位covariance[0] = 1,在IMUPreintegration那里的图优化会给当前位姿的计算加一个大的方差。

if (lastIncreOdomPubFlag == false)

{

lastIncreOdomPubFlag = true;

// 记录当前位姿

laserOdomIncremental = laserOdometryROS;

increOdomAffine = trans2Affine3f(transformTobeMapped);

} else {

// 上一帧的最佳位姿和当前帧的最佳位姿(scan matching之后,而不是根据回环或者gos调整之后的位姿)之间的位姿增量

Eigen::Affine3f affineIncre = incrementalOdometryAffineFront.inverse() * incrementalOdometryAffineBack;

// 位姿增量叠加到上一帧位姿上

increOdomAffine = increOdomAffine * affineIncre;

float x, y, z, roll, pitch, yaw;

// 分解成欧拉角+平移向量

pcl::getTranslationAndEulerAngles (increOdomAffine, x, y, z, roll, pitch, yaw);

// 如果没有IMU信息,同样对roll和pitch做插值

if (cloudInfo.imuAvailable == true)

{

if (std::abs(cloudInfo.imuPitchInit) < 1.4)

{

double imuWeight = 0.1;

tf::Quaternion imuQuaternion;

tf::Quaternion transformQuaternion;

double rollMid, pitchMid, yawMid;

// slerp roll

transformQuaternion.setRPY(roll, 0, 0);

imuQuaternion.setRPY(cloudInfo.imuRollInit, 0, 0);

tf::Matrix3x3(transformQuaternion.slerp(imuQuaternion, imuWeight)).getRPY(rollMid, pitchMid, yawMid);

roll = rollMid;

// slerp pitch

transformQuaternion.setRPY(0, pitch, 0);

imuQuaternion.setRPY(0, cloudInfo.imuPitchInit, 0);

tf::Matrix3x3(transformQuaternion.slerp(imuQuaternion, imuWeight)).getRPY(rollMid, pitchMid, yawMid);

pitch = pitchMid;

}

}

laserOdomIncremental.header.stamp = timeLaserInfoStamp;

laserOdomIncremental.header.frame_id = odometryFrame;

laserOdomIncremental.child_frame_id = "odom_mapping";

laserOdomIncremental.pose.pose.position.x = x;

laserOdomIncremental.pose.pose.position.y = y;

laserOdomIncremental.pose.pose.position.z = z;

laserOdomIncremental.pose.pose.orientation = tf::createQuaternionMsgFromRollPitchYaw(roll, pitch, yaw);

// 协方差这一位作为是否退化的标志

if (isDegenerate)

laserOdomIncremental.pose.covariance[0] = 1;

else

laserOdomIncremental.pose.covariance[0] = 0;

}

pubLaserOdometryIncremental.publish(laserOdomIncremental);

lio-sam的处理方式与VINS有着较大的区别。非常有意思的是它的图优化分散在了多个类里分别进行,优化后的高频和低频的位姿又相互依赖:高频的IMU里程计是基于低频的lidar里程计的(IMUPreintegration订阅mapOptimization),但是下一帧低频的lidar里程计的初值又是由高频的IMU里程计提供的(imageProjection订阅IMUPreintegration),这样做的一个重要优点就是位姿的变换非常平滑。

2. loopClosureThread() :回环检测线程

在main()里,还开了一个回环检测线程,不同于V-SLAM可以计算特征和描述子来构建词袋,lidar-SLAM回环检测只能依赖位置关系进行回环判断:

void loopClosureThread()

{

// 如果不需要进行回环检测,那么就退出这个线程

if (loopClosureEnableFlag == false)

return;

// 设置回环检测的频率

ros::Rate rate(loopClosureFrequency);

while (ros::ok())

{

// 执行完一次就必须sleep一段时间,否则该线程的cpu占用会非常高

rate.sleep();

// 执行回环检测

performLoopClosure();

visualizeLoopClosure();

}

}

2.1. performLoopClosure(): 执行回环检测

- 基于位姿远近和时间差大小判断回环关系

void performLoopClosure()

{

// 如果没有关键帧,就没法进行回环检测了

if (cloudKeyPoses3D->points.empty() == true)

return;

mtx.lock();

// 把存储关键帧的位姿的点云copy出来,避免线程冲突

*copy_cloudKeyPoses3D = *cloudKeyPoses3D;

*copy_cloudKeyPoses6D = *cloudKeyPoses6D;

mtx.unlock();

// find keys

int loopKeyCur;

int loopKeyPre;

// 检测是否有外部通知的线程消息

if (detectLoopClosureExternal(&loopKeyCur, &loopKeyPre) == false)

// 在历史关键帧中查找与当前关键帧距离最近的关键帧集合,选择时间相隔较远的一帧作为候选闭环帧

// 基于位姿远近和时间差大小判断回环关系

if (detectLoopClosureDistance(&loopKeyCur, &loopKeyPre) == false)

return;

2.1.1. detectLoopClosureDistance(): 基于位姿远近和时间差大小判断回环关系

bool detectLoopClosureDistance(int *latestID, int *closestID)

{

// 检测最新帧是否和其他帧形成回环,所以后面一帧的id就是最后一个关键帧

int loopKeyCur = copy_cloudKeyPoses3D->size() - 1;

int loopKeyPre = -1;

// check loop constraint added before

// 检查一下较晚帧是否和别的形成了回环,如果有就算了

auto it = loopIndexContainer.find(loopKeyCur);

if (it != loopIndexContainer.end())

return false;

// find the closest history key frame

// 在历史关键帧中查找与当前关键帧距离最近的关键帧集合

std::vector<int> pointSearchIndLoop;

std::vector<float> pointSearchSqDisLoop;

// 把只包含关键帧位移信息的点云填充kdtree

kdtreeHistoryKeyPoses->setInputCloud(copy_cloudKeyPoses3D);

// 根据最后一个关键帧的平移信息,寻找离他一定距离(15m)内的其他关键帧

kdtreeHistoryKeyPoses->radiusSearch(copy_cloudKeyPoses3D->back(), historyKeyframeSearchRadius, pointSearchIndLoop, pointSearchSqDisLoop, 0);

// 在候选关键帧集合中,找到与当前帧时间相隔较远的帧,设为候选匹配帧

for (int i = 0; i < (int)pointSearchIndLoop.size(); ++i)

{

int id = pointSearchIndLoop[i];

// 必须满足时间上超过一定阈值,才认为是一个有效的回环

if (abs(copy_cloudKeyPoses6D->points[id].time - timeLaserInfoCur) > historyKeyframeSearchTimeDiff)

{

// 此时就退出了

loopKeyPre = id;

break;

}

}

// 如果没有找到回环或者回环找到自己身上去了,就认为是此次回环寻找失败

if (loopKeyPre == -1 || loopKeyCur == loopKeyPre)

return false;

*latestID = loopKeyCur;

*closestID = loopKeyPre;

return true;

}

- 当找到两个回环帧分别的id之后,那么就可以把分别的点云找出来

pcl::PointCloud<PointType>::Ptr cureKeyframeCloud(new pcl::PointCloud<PointType>());

pcl::PointCloud<PointType>::Ptr prevKeyframeCloud(new pcl::PointCloud<PointType>());

{

// 稍晚的帧就把自己取了出来

loopFindNearKeyframes(cureKeyframeCloud, loopKeyCur, 0);

// 稍早一点的就把自己和周围一些点云取出来,也就是构成一个帧到局部地图的一个匹配问题

loopFindNearKeyframes(prevKeyframeCloud, loopKeyPre, historyKeyframeSearchNum);

// 如果点云数量太少就算了

if (cureKeyframeCloud->size() < 300 || prevKeyframeCloud->size() < 1000)

return;

if (pubHistoryKeyFrames.getNumSubscribers() != 0)

// 把局部地图发布出去供RVIZ可视化使用

publishCloud(&pubHistoryKeyFrames, prevKeyframeCloud, timeLaserInfoStamp, odometryFrame);

}

当然了,不能分别只找一帧的点云,因为可能视角不够,点云数不够,导致包含的特征不够丰富,从而共视特征不足,这会导致匹配失败,所以就分别多找几帧相邻帧的点云来丰富点云,在loopFindNearKeyframes()中实现:

2.1.1.1. loopFindNearKeyframes(): 寻找邻近关键帧共同提取点云

void loopFindNearKeyframes(pcl::PointCloud<PointType>::Ptr& nearKeyframes, const int& key, const int& searchNum)

{

// extract near keyframes

nearKeyframes->clear();

int cloudSize = copy_cloudKeyPoses6D->size();

// searchNum是搜索范围

for (int i = -searchNum; i <= searchNum; ++i)

{

// 找到这个idx

int keyNear = key + i;

// 如果超出范围就算了

if (keyNear < 0 || keyNear >= cloudSize )

continue;

// 否则把对应角点和面点的点云转到世界坐标系下

*nearKeyframes += *transformPointCloud(cornerCloudKeyFrames[keyNear], ©_cloudKeyPoses6D->points[keyNear]);

*nearKeyframes += *transformPointCloud(surfCloudKeyFrames[keyNear], ©_cloudKeyPoses6D->points[keyNear]);

}

// 如果没有有效点云就算了

if (nearKeyframes->empty())

return;

// downsample near keyframes

// 把点云下采样

pcl::PointCloud<PointType>::Ptr cloud_temp(new pcl::PointCloud<PointType>());

downSizeFilterICP.setInputCloud(nearKeyframes);

downSizeFilterICP.filter(*cloud_temp);

*nearKeyframes = *cloud_temp;

}

- 回环帧分别的点云找出来之后,就可以做icp匹配了,这里作者匹配的方式和scan2map不一样了,他直接使用了pcl原生的点点icp匹配的方式:

// 使用简单的icp来进行帧到局部地图的配准

static pcl::IterativeClosestPoint<PointType, PointType> icp;

icp.setMaxCorrespondenceDistance(historyKeyframeSearchRadius*2);

icp.setMaximumIterations(100);

icp.setTransformationEpsilon(1e-6);

icp.setEuclideanFitnessEpsilon(1e-6);

icp.setRANSACIterations(0);

// Align clouds

// 设置两个点云

icp.setInputSource(cureKeyframeCloud);

icp.setInputTarget(prevKeyframeCloud);

pcl::PointCloud<PointType>::Ptr unused_result(new pcl::PointCloud<PointType>());

// 执行点云配准

icp.align(*unused_result);

// 检查icp是否收敛且得分是否满足要求

if (icp.hasConverged() == false || icp.getFitnessScore() > historyKeyframeFitnessScore)

return;

// publish corrected cloud

// 把修正后的当前点云发布供可视化使用

if (pubIcpKeyFrames.getNumSubscribers() != 0)

{

pcl::PointCloud<PointType>::Ptr closed_cloud(new pcl::PointCloud<PointType>());

pcl::transformPointCloud(*cureKeyframeCloud, *closed_cloud, icp.getFinalTransformation());

publishCloud(&pubIcpKeyFrames, closed_cloud, timeLaserInfoStamp, odometryFrame);

}

- 如果icp匹配成功了话,那么就更新位姿,并记录误差:

// 闭环优化得到的当前关键帧与闭环关键帧之间的位姿变换

float x, y, z, roll, pitch, yaw;

Eigen::Affine3f correctionLidarFrame;

// 获得两个点云的变换矩阵结果

correctionLidarFrame = icp.getFinalTransformation();

// transform from world origin to wrong pose

// 闭环优化前当前帧位姿

Eigen::Affine3f tWrong = pclPointToAffine3f(copy_cloudKeyPoses6D->points[loopKeyCur]);

// transform from world origin to corrected pose

// 将icp的结果补偿过去,就是闭环优化后当前帧位姿

Eigen::Affine3f tCorrect = correctionLidarFrame * tWrong;// pre-multiplying -> successive rotation about a fixed frame

// 将优化后当前帧的位姿转成平移+欧拉角

pcl::getTranslationAndEulerAngles (tCorrect, x, y, z, roll, pitch, yaw);

gtsam::Pose3 poseFrom = Pose3(Rot3::RzRyRx(roll, pitch, yaw), Point3(x, y, z));

// 闭环匹配帧的位姿

gtsam::Pose3 poseTo = pclPointTogtsamPose3(copy_cloudKeyPoses6D->points[loopKeyPre]);

gtsam::Vector Vector6(6);

// 使用icp的得分作为他们的约束的噪声项

float noiseScore = icp.getFitnessScore();

Vector6 << noiseScore, noiseScore, noiseScore, noiseScore, noiseScore, noiseScore;

noiseModel::Diagonal::shared_ptr constraintNoise = noiseModel::Diagonal::Variances(Vector6);

- 把回环帧id和位姿加到buffer里,下一轮图优化的时候加进入,同时把回环帧id加到字典里去:

// 添加闭环因子需要的数据

mtx.lock();

// 将两帧索引,两帧相对应位姿和噪声作为回环约束送入队列

loopIndexQueue.push_back(make_pair(loopKeyCur, loopKeyPre));

loopPoseQueue.push_back(poseFrom.between(poseTo));

loopNoiseQueue.push_back(constraintNoise);

mtx.unlock();

// add loop constriant

// 保存已经存在的约束对

loopIndexContainer[loopKeyCur] = loopKeyPre;

2.2.visualizeLoopClosure() :rviz展示闭环边

略。就是把回环帧位姿的地方画上几个大点,回环帧之间用线连起来给rviz看。

3. visualizeGlobalMapThread(): 全局可视化线程

干了两个事,一个是向某个topic发点云,另一个是把点云地图保存成文件

void visualizeGlobalMapThread()

{

// 更新频率设置为0.2hz

ros::Rate rate(0.2);

while (ros::ok()){

rate.sleep();

publishGlobalMap();

}

// 当ros被杀死,执行保存地图功能

if (savePCD == false)

return;

lio_sam::save_mapRequest req;

lio_sam::save_mapResponse res;

if(!saveMapService(req, res)){

cout << "Fail to save map" << endl;

}

}

3.1. publishGlobalMap():发布可视化全局地图

- 找出最新帧附近的点云:

void publishGlobalMap()

{

// 如果没有订阅者就不发布,节省系统负载

if (pubLaserCloudSurround.getNumSubscribers() == 0)

return;

// 没有自然帧自然也没有全局地图了

if (cloudKeyPoses3D->points.empty() == true)

return;

pcl::KdTreeFLANN<PointType>::Ptr kdtreeGlobalMap(new pcl::KdTreeFLANN<PointType>());;

pcl::PointCloud<PointType>::Ptr globalMapKeyPoses(new pcl::PointCloud<PointType>());

pcl::PointCloud<PointType>::Ptr globalMapKeyPosesDS(new pcl::PointCloud<PointType>());

pcl::PointCloud<PointType>::Ptr globalMapKeyFrames(new pcl::PointCloud<PointType>());

pcl::PointCloud<PointType>::Ptr globalMapKeyFramesDS(new pcl::PointCloud<PointType>());

// kd-tree to find near key frames to visualize

std::vector<int> pointSearchIndGlobalMap;

std::vector<float> pointSearchSqDisGlobalMap;

// search near key frames to visualize

mtx.lock();

// 把所有关键帧送入kdtree

kdtreeGlobalMap->setInputCloud(cloudKeyPoses3D);

// 寻找具体最新关键帧一定范围内的其他关键帧

kdtreeGlobalMap->radiusSearch(cloudKeyPoses3D->back(), globalMapVisualizationSearchRadius, pointSearchIndGlobalMap, pointSearchSqDisGlobalMap, 0);

mtx.unlock();

// 把这些找到的关键帧的位姿保存起来

for (int i = 0; i < (int)pointSearchIndGlobalMap.size(); ++i)

globalMapKeyPoses->push_back(cloudKeyPoses3D->points[pointSearchIndGlobalMap[i]]);

// downsample near selected key frames

// 简单的下采样

pcl::VoxelGrid<PointType> downSizeFilterGlobalMapKeyPoses; // for global map visualization

downSizeFilterGlobalMapKeyPoses.setLeafSize(globalMapVisualizationPoseDensity, globalMapVisualizationPoseDensity, globalMapVisualizationPoseDensity); // for global map visualization

downSizeFilterGlobalMapKeyPoses.setInputCloud(globalMapKeyPoses);

downSizeFilterGlobalMapKeyPoses.filter(*globalMapKeyPosesDS);

- 由于这些点云都是保存在各自的lidar坐标系下, 所以得根据各自的位姿转换到world系下:

// 提取局部相邻关键帧对应的特征点云

for (int i = 0; i < (int)globalMapKeyPosesDS->size(); ++i){

// 距离过大

if (pointDistance(globalMapKeyPosesDS->points[i], cloudKeyPoses3D->back()) > globalMapVisualizationSearchRadius)

continue;

int thisKeyInd = (int)globalMapKeyPosesDS->points[i].intensity;

// 将每一帧的点云通过位姿转到世界坐标系下

*globalMapKeyFrames += *transformPointCloud(cornerCloudKeyFrames[thisKeyInd], &cloudKeyPoses6D->points[thisKeyInd]);

*globalMapKeyFrames += *transformPointCloud(surfCloudKeyFrames[thisKeyInd], &cloudKeyPoses6D->points[thisKeyInd]);

}

- 转换后的点云进行下采样并发布

pcl::VoxelGrid<PointType> downSizeFilterGlobalMapKeyFrames; // for global map visualization

downSizeFilterGlobalMapKeyFrames.setLeafSize(globalMapVisualizationLeafSize, globalMapVisualizationLeafSize, globalMapVisualizationLeafSize); // for global map visualization

downSizeFilterGlobalMapKeyFrames.setInputCloud(globalMapKeyFrames);

downSizeFilterGlobalMapKeyFrames.filter(*globalMapKeyFramesDS);

publishCloud(&pubLaserCloudSurround, globalMapKeyFramesDS, timeLaserInfoStamp, odometryFrame);

3.2. saveMapService():地图的保存

- 创建路径

if(req.destination.empty()) saveMapDirectory = std::getenv("HOME") + savePCDDirectory;

else saveMapDirectory = std::getenv("HOME") + req.destination;

cout << "Save destination: " << saveMapDirectory << endl;

// create directory and remove old files;

int unused = system((std::string("exec rm -r ") + saveMapDirectory).c_str());

unused = system((std::string("mkdir -p ") + saveMapDirectory).c_str());

- 保存path

pcl::io::savePCDFileBinary(saveMapDirectory + "/trajectory.pcd", *cloudKeyPoses3D);

pcl::io::savePCDFileBinary(saveMapDirectory + "/transformations.pcd", *cloudKeyPoses6D);

- 全局地图从各自的lidar坐标系下转换到world系下

pcl::PointCloud<PointType>::Ptr globalCornerCloud(new pcl::PointCloud<PointType>());

pcl::PointCloud<PointType>::Ptr globalCornerCloudDS(new pcl::PointCloud<PointType>());

pcl::PointCloud<PointType>::Ptr globalSurfCloud(new pcl::PointCloud<PointType>());

pcl::PointCloud<PointType>::Ptr globalSurfCloudDS(new pcl::PointCloud<PointType>());

pcl::PointCloud<PointType>::Ptr globalMapCloud(new pcl::PointCloud<PointType>());

for (int i = 0; i < (int)cloudKeyPoses3D->size(); i++) {

*globalCornerCloud += *transformPointCloud(cornerCloudKeyFrames[i], &cloudKeyPoses6D->points[i]);

*globalSurfCloud += *transformPointCloud(surfCloudKeyFrames[i], &cloudKeyPoses6D->points[i]);

cout << "\r" << std::flush << "Processing feature cloud " << i << " of " << cloudKeyPoses6D->size() << " ...";

}

- 分别保存角点点云全局地图和平面点点云全局地图:

if(req.resolution != 0)

{

cout << "\n\nSave resolution: " << req.resolution << endl;

// down-sample and save corner cloud

downSizeFilterCorner.setInputCloud(globalCornerCloud);

downSizeFilterCorner.setLeafSize(req.resolution, req.resolution, req.resolution);

downSizeFilterCorner.filter(*globalCornerCloudDS);

pcl::io::savePCDFileBinary(saveMapDirectory + "/CornerMap.pcd", *globalCornerCloudDS);

// down-sample and save surf cloud

downSizeFilterSurf.setInputCloud(globalSurfCloud);

downSizeFilterSurf.setLeafSize(req.resolution, req.resolution, req.resolution);

downSizeFilterSurf.filter(*globalSurfCloudDS);

pcl::io::savePCDFileBinary(saveMapDirectory + "/SurfMap.pcd", *globalSurfCloudDS);

}

else

{

// save corner cloud

pcl::io::savePCDFileBinary(saveMapDirectory + "/CornerMap.pcd", *globalCornerCloud);

// save surf cloud

pcl::io::savePCDFileBinary(saveMapDirectory + "/SurfMap.pcd", *globalSurfCloud);

}

- 保存总的全局地图:

*globalMapCloud += *globalCornerCloud;

*globalMapCloud += *globalSurfCloud;

int ret = pcl::io::savePCDFileBinary(saveMapDirectory + "/GlobalMap.pcd", *globalMapCloud);

res.success = ret == 0;

downSizeFilterCorner.setLeafSize(mappingCornerLeafSize, mappingCornerLeafSize, mappingCornerLeafSize);

downSizeFilterSurf.setLeafSize(mappingSurfLeafSize, mappingSurfLeafSize, mappingSurfLeafSize);

cout << "****************************************************" << endl;

cout << "Saving map to pcd files completed\n" << endl;

return true;

}