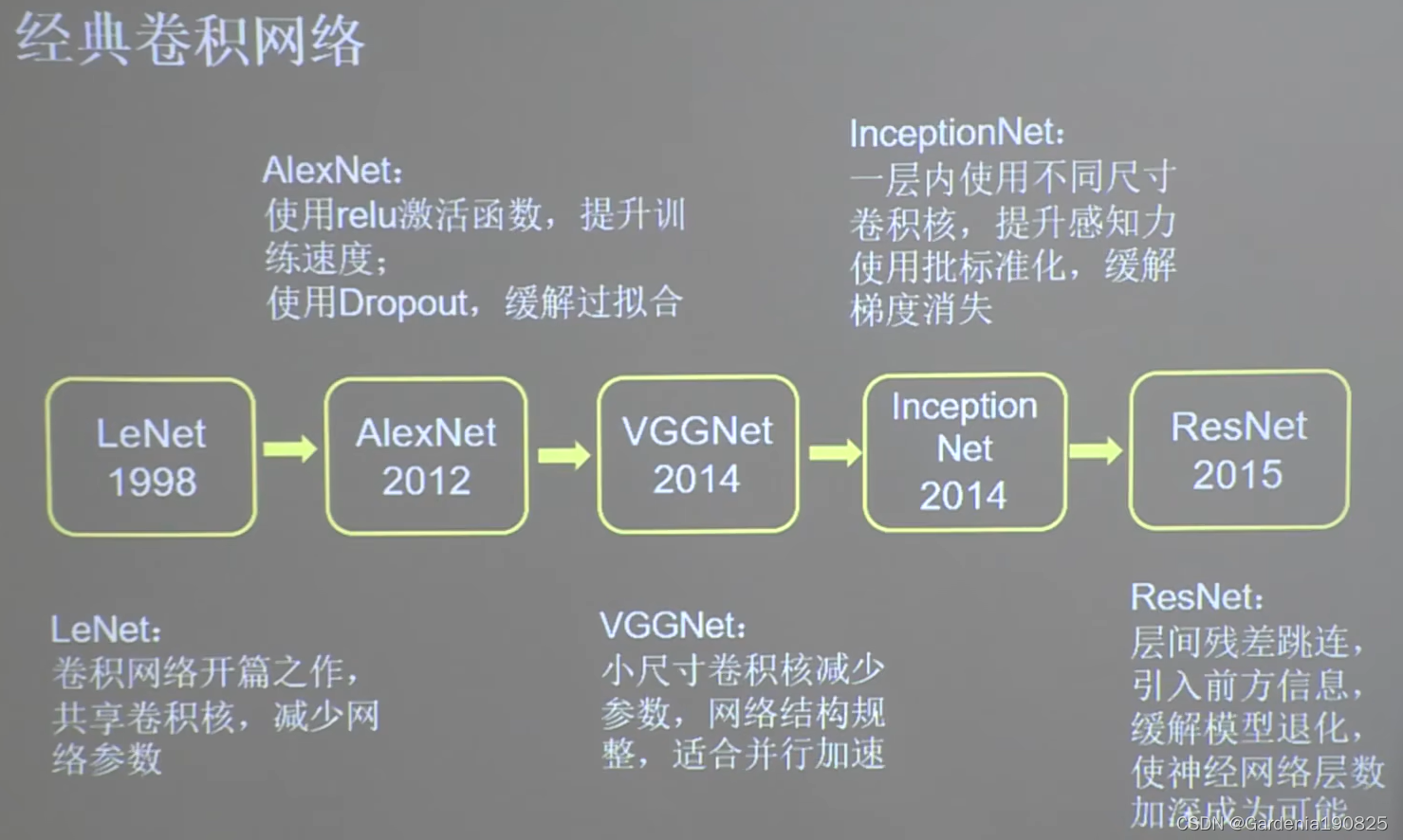

[TensorFlow] Classic Convolutional Neural Networks (InceptionNet, ResNet)

1. InceptionNet

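InceptionNet debuted in 2014 and won that year's ImageNet competition, cutting the Top-5 error rate to 6.67%. It introduced the Inception block, which applies convolution kernels of different sizes within the same layer so that the model can perceive features at several scales. Later versions of the network also add batch normalization to mitigate vanishing gradients.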

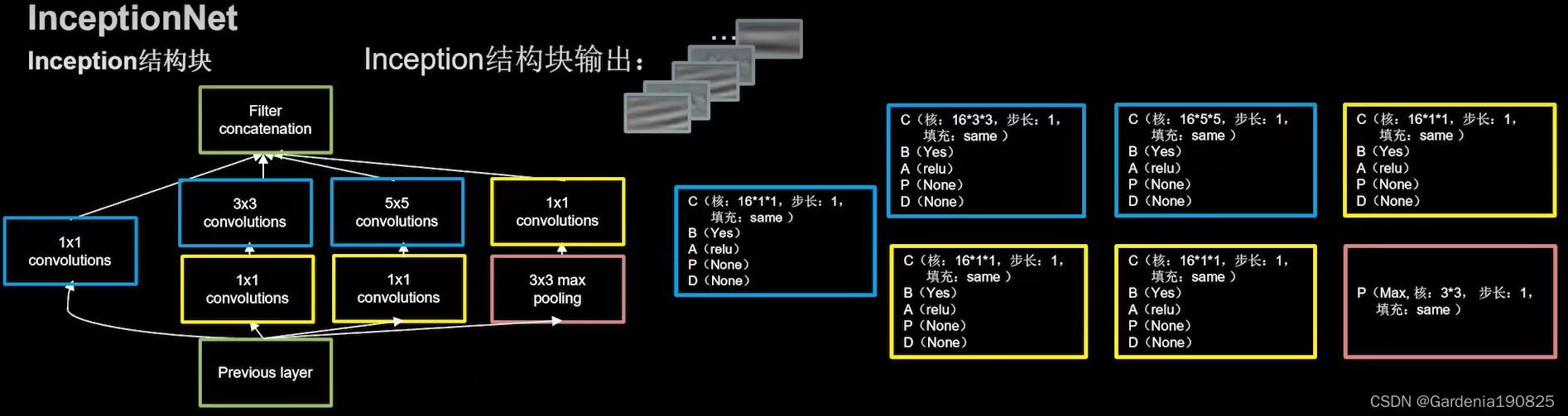

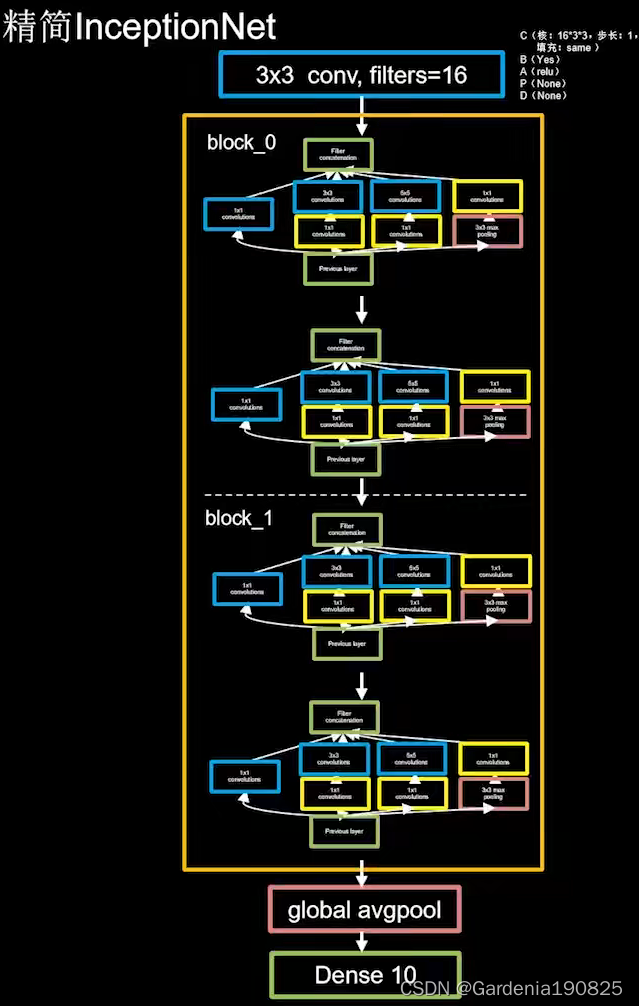

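The Inception block diagram shows the input passing through four parallel branches of different convolution operations, each producing feature maps of the same height and width. These are sent to the filter concatenation step, which joins the four outputs along the depth (channel) axis to form the output of the Inception block.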
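As a minimal sketch of what the filter concatenation step does, the pure-Python snippet below joins four feature maps along the channel axis. The helper names `concat_depth` and `constant_map` and the tiny 2x2 maps are illustrative assumptions; the code in this post does the same thing with `tf.concat(..., axis=3)`.

```python
def concat_depth(feature_maps):
    """Concatenate feature maps shaped [H][W][C_i] along the channel axis."""
    height = len(feature_maps[0])
    width = len(feature_maps[0][0])
    for fm in feature_maps:
        if len(fm) != height or any(len(row) != width for row in fm):
            raise ValueError("all branches must share the same height and width")
    # For every pixel, chain the channel vectors of all branches in order.
    return [
        [[value for fm in feature_maps for value in fm[i][j]] for j in range(width)]
        for i in range(height)
    ]


def constant_map(height, width, channels, value):
    """Hypothetical helper: a feature map filled with a single value."""
    return [[[value] * channels for _ in range(width)] for _ in range(height)]


# Four branches, each 2x2 with 3 channels, filled with 0.0, 1.0, 2.0, 3.0.
branches = [constant_map(2, 2, 3, float(k)) for k in range(4)]
out = concat_depth(branches)
shape = (len(out), len(out[0]), len(out[0][0]))
print(shape)  # (2, 2, 12)
```

Height and width are unchanged and only the depth grows, which is why every branch must first be brought to the same spatial size.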

Code idea:

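- Every convolution inside an Inception block is followed by the CBA operations (Convolution, BatchNormalization, Activation), so these three operations are wrapped in a new class, `ConvBNRelu`. Every two Inception blocks form one block. The first Inception block in each block uses a stride of 2, which halves the size of the output feature map, so the output depth is doubled from one block to the next to keep the amount of information carried through feature extraction roughly constant.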
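The effect of the stride and depth settings can be traced with plain arithmetic. The sketch below assumes a 32x32 input (an assumption, e.g. a CIFAR-10-sized image), `'same'` padding so that the output size is `ceil(input / stride)`, and four branches of `ch` channels per Inception block. The function name `trace_inception10` is hypothetical.

```python
import math


def trace_inception10(input_hw=32, num_blocks=2, init_ch=16):
    """Return (layer name, spatial size, channels) after each stage."""
    size = input_hw
    shapes = [("c1", size, init_ch)]  # first ConvBNRelu: stride 1, init_ch kernels
    out_channels = init_ch
    for block_id in range(num_blocks):
        for layer_id in range(2):
            strides = 2 if layer_id == 0 else 1
            size = math.ceil(size / strides)  # 'same' padding
            # Four branches of out_channels each are concatenated.
            shapes.append((f"block{block_id}_layer{layer_id}", size, 4 * out_channels))
        out_channels *= 2  # deepen the next block
    return shapes


for name, size, channels in trace_inception10():
    print(name, size, channels)
# c1 32 16
# block0_layer0 16 64
# block0_layer1 16 64
# block1_layer0 8 128
# block1_layer1 8 128
```

Each time the spatial size halves, the channel count doubles, which matches the intent stated above.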

The code is as follows:

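    import tensorflow as tf
    from tensorflow.keras import Model
    from tensorflow.keras.layers import (Activation, BatchNormalization, Conv2D,
                                         Dense, GlobalAveragePooling2D, MaxPool2D)

    class ConvBNRelu(Model):  # CBA wrapped in one class
        # ch is the output depth, i.e. the number of kernels. Defaults: 3x3 (square)
        # kernel, stride 1, zero padding ('same').
        def __init__(self, ch, kernelsz=3, strides=1, padding='same'):
            super(ConvBNRelu, self).__init__()
            self.model = tf.keras.models.Sequential([
                Conv2D(ch, kernelsz, strides=strides, padding=padding),  # kernelsz: kernel side length
                BatchNormalization(),
                Activation('relu')
            ])

        def call(self, x, training=None):
            # training=True: BN normalizes with the current batch's mean and variance.
            # training=False: BN uses the moving averages accumulated over training,
            # which works better for inference. The flag is forwarded to the layers.
            x = self.model(x, training=training)
            return x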

    class InceptionBlk(Model):
        def __init__(self, ch, strides=1):
            super(InceptionBlk, self).__init__()
            self.ch = ch
            self.strides = strides
            # branch 1: 1x1 convolution
            self.c1 = ConvBNRelu(ch, kernelsz=1, strides=strides)
            # branch 2: 1x1 convolution, then 3x3 convolution
            self.c2_1 = ConvBNRelu(ch, kernelsz=1, strides=strides)
            self.c2_2 = ConvBNRelu(ch, kernelsz=3, strides=1)
            # branch 3: 1x1 convolution, then 5x5 convolution
            self.c3_1 = ConvBNRelu(ch, kernelsz=1, strides=strides)
            self.c3_2 = ConvBNRelu(ch, kernelsz=5, strides=1)
            # branch 4: 3x3 max pooling, then 1x1 convolution
            self.p4_1 = MaxPool2D(3, strides=1, padding='same')
            self.c4_2 = ConvBNRelu(ch, kernelsz=1, strides=strides)

        def call(self, x):
            x1 = self.c1(x)
            x2_1 = self.c2_1(x)
            x2_2 = self.c2_2(x2_1)
            x3_1 = self.c3_1(x)
            x3_2 = self.c3_2(x3_1)
            x4_1 = self.p4_1(x)
            x4_2 = self.c4_2(x4_1)
            # concat along axis=channel
            x = tf.concat([x1, x2_2, x3_2, x4_2], axis=3)
            return x

    class Inception10(Model):
        def __init__(self, num_blocks, num_classes, init_ch=16, **kwargs):  # init_ch: initial output depth, 16 by default
            super(Inception10, self).__init__(**kwargs)
            self.in_channels = init_ch
            self.out_channels = init_ch
            self.num_blocks = num_blocks
            self.init_ch = init_ch
            self.c1 = ConvBNRelu(init_ch)
            self.blocks = tf.keras.models.Sequential()
            for block_id in range(num_blocks):  # number of blocks; 2 in this example
                for layer_id in range(2):
                    if layer_id == 0:  # first Inception block of the block: stride 2
                        block = InceptionBlk(self.out_channels, strides=2)
                    else:  # second Inception block of the block: stride 1
                        block = InceptionBlk(self.out_channels, strides=1)
                    self.blocks.add(block)
                # enlarge out_channels per block: deepen the output feature maps so the
                # amount of information carried through feature extraction stays roughly constant
                self.out_channels *= 2
            self.p1 = GlobalAveragePooling2D()
            self.f1 = Dense(num_classes, activation='softmax')

        def call(self, x):
            x = self.c1(x)
            x = self.blocks(x)
            x = self.p1(x)
            y = self.f1(x)
            return y

    model = Inception10(num_blocks=2, num_classes=10)
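The `training` flag mentioned in the `ConvBNRelu` comment switches batch normalization between two modes. The pure-Python sketch below illustrates them for a single scalar feature. The class name `TinyBatchNorm` is hypothetical, the learnable scale and offset are omitted for brevity, and the `momentum` and `epsilon` values are assumptions chosen to mirror the Keras `BatchNormalization` defaults.

```python
import math


class TinyBatchNorm:
    """Scalar batch normalization without the learnable gamma/beta (illustrative only)."""

    def __init__(self, momentum=0.99, epsilon=1e-3):
        self.momentum = momentum
        self.epsilon = epsilon
        self.moving_mean = 0.0
        self.moving_var = 1.0

    def __call__(self, batch, training):
        if training:
            # Training: normalize with the statistics of the current batch...
            mean = sum(batch) / len(batch)
            var = sum((v - mean) ** 2 for v in batch) / len(batch)
            # ...and fold them into the moving averages used at inference time.
            self.moving_mean = self.momentum * self.moving_mean + (1 - self.momentum) * mean
            self.moving_var = self.momentum * self.moving_var + (1 - self.momentum) * var
        else:
            # Inference: normalize with the accumulated moving averages.
            mean, var = self.moving_mean, self.moving_var
        return [(v - mean) / math.sqrt(var + self.epsilon) for v in batch]


bn = TinyBatchNorm()
batch = [10.0, 12.0, 14.0]
train_out = bn(batch, training=True)   # centered on the batch mean
infer_out = bn(batch, training=False)  # uses moving statistics instead
print(train_out)
print(infer_out)
```

After a single training step the moving averages are still close to their initial values, so the inference output differs noticeably from the training output. They converge only after many batches.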

2. ResNet

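ResNet debuted in 2015 and won that year's ImageNet competition with a Top-5 error rate of 3.57%. ResNet proposed residual skip connections between layers, which carry earlier information forward and mitigate vanishing gradients, making it possible to increase the number of layers in a neural network.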

| Model | Number of layers |
|---|---|
| LeNet | 5 |
| AlexNet | 8 |
| VGGNet | 16 / 19 |
| InceptionNet | 22 |
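One way to see why a skip connection helps against vanishing gradients is to differentiate the sum computed by a single block before its final activation. The symbols $H$, $F$, and $L$ (the loss) are notation introduced for this illustration, and the identity shortcut is assumed:

$$
H(x) = F(x) + x, \qquad \frac{\partial L}{\partial x} = \frac{\partial L}{\partial H}\left(\frac{\partial F}{\partial x} + I\right)
$$

Even when $\partial F / \partial x$ is small, the identity term $I$ gives the gradient a direct path back to earlier layers.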

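ResNet consists of 8 ResNet blocks, whose output passes through an average pooling layer and then a fully connected layer. In the ResNet diagram, dashed lines connect ResNet blocks whose dimensions differ (the stacked convolutions change the dimensions), and solid lines connect ResNet blocks whose dimensions are the same (the stacked convolutions preserve the dimensions). Each ResNet block consists of two convolutional layers, so the 8 blocks contribute 16 layers. Together with the initial convolution and the fully connected layer, that makes an 18-layer network (the average pooling layer is not counted separately).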
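The layer count can be checked with a few lines of arithmetic. This sketch assumes the counting rule of one initial convolution, two convolutions per ResNet block, and one fully connected layer. The 1x1 shortcut convolutions and the pooling layer are not counted. The function name `count_weighted_layers` is hypothetical.

```python
def count_weighted_layers(block_list):
    """Count layers under the rule: 1 initial conv + 2 convs per ResNet block + 1 dense."""
    num_resnet_blocks = sum(block_list)
    return 1 + 2 * num_resnet_blocks + 1


print(sum([2, 2, 2, 2]))                    # 8 ResNet blocks
print(count_weighted_layers([2, 2, 2, 2]))  # 18
```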

Code idea:

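If the dimensions before and after the stacked convolutional layers differ, `residual_path` is set to `True` and the `if self.residual_path:` branch in `call` runs: a 1x1 convolution adjusts the size or depth of the input feature map `inputs` to give `residual`, which is then added to the stacked-convolution output `y`, passed through the activation, and output. If the dimensions before and after the stacked convolutional layers are the same, the stacked-convolution output `y` is added directly to the input feature map `inputs`, passed through the activation, and output.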
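The reason the 1x1 convolution is needed can be shown with shapes alone. The sketch below assumes `'same'` padding (output size `ceil(input / stride)`) and channels-last shapes. The helper names `conv_same_shape` and `resnet_block_shapes` are hypothetical and only mimic the shape bookkeeping of the block, not its computation.

```python
import math


def conv_same_shape(shape, filters, strides):
    """Output shape (H, W, C) of a 'same'-padded convolution."""
    height, width, _ = shape
    return (math.ceil(height / strides), math.ceil(width / strides), filters)


def resnet_block_shapes(input_shape, filters, strides=1, residual_path=False):
    """Return the block's output shape, or raise if y and residual cannot be added."""
    y = conv_same_shape(input_shape, filters, strides)  # first 3x3 conv
    y = conv_same_shape(y, filters, 1)                   # second 3x3 conv
    if residual_path:
        # Dashed line: 1x1 conv with the same stride reshapes the shortcut.
        residual = conv_same_shape(input_shape, filters, strides)
    else:
        # Solid line: the input is used as is.
        residual = input_shape
    if y != residual:
        raise ValueError(f"cannot add y {y} and residual {residual}")
    return y


print(resnet_block_shapes((32, 32, 64), 64))                                  # (32, 32, 64)
print(resnet_block_shapes((32, 32, 64), 128, strides=2, residual_path=True))  # (16, 16, 128)
```

Calling `resnet_block_shapes((32, 32, 64), 128, strides=2)` without `residual_path=True` raises a `ValueError`, which mirrors the shape mismatch the addition would hit without the 1x1 convolution.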

The code is as follows:

    import tensorflow as tf
    from tensorflow.keras import Model
    from tensorflow.keras.layers import Activation, BatchNormalization, Conv2D

    class ResnetBlock(Model):
        def __init__(self, filters, strides=1, residual_path=False):
            super(ResnetBlock, self).__init__()
            self.filters = filters
            self.strides = strides
            self.residual_path = residual_path
            self.c1 = Conv2D(filters, (3, 3), strides=strides, padding='same', use_bias=False)
            self.b1 = BatchNormalization()
            self.a1 = Activation('relu')
            self.c2 = Conv2D(filters, (3, 3), strides=1, padding='same', use_bias=False)
            self.b2 = BatchNormalization()
            # When residual_path is True, downsample the input with a 1x1 convolution so that
            # x has the same dimensions as F(x) and the two can be added.
            # True is the dashed line, False is the solid line.
            if residual_path:
                self.down_c1 = Conv2D(filters, (1, 1), strides=strides, padding='same', use_bias=False)
                self.down_b1 = BatchNormalization()
            self.a2 = Activation('relu')

        def call(self, inputs):
            residual = inputs  # residual is the input itself, i.e. residual = x
            # Pass the input through convolution, BN, and activation layers to compute F(x)
            x = self.c1(inputs)
            x = self.b1(x)
            x = self.a1(x)
            x = self.c2(x)
            y = self.b2(x)
            if self.residual_path:
                residual = self.down_c1(inputs)
                residual = self.down_b1(residual)
            # The output is the sum of the two parts, F(x)+x or F(x)+Wx, passed through the activation
            out = self.a2(y + residual)
            return out

    class ResNet18(Model):
        def __init__(self, block_list, initial_filters=64):  # block_list: number of ResnetBlocks in each block
            super(ResNet18, self).__init__()
            self.num_blocks = len(block_list)  # total number of blocks
            self.block_list = block_list
            self.out_filters = initial_filters
            self.c1 = Conv2D(self.out_filters, (3, 3), strides=1, padding='same', use_bias=False)
            self.b1 = BatchNormalization()
            self.a1 = Activation('relu')
            self.blocks = tf.keras.models.Sequential()
            # Build the ResNet structure
            for block_id in range(len(block_list)):  # index of the block
                for layer_id in range(block_list[block_id]):  # index of the ResnetBlock within the block
                    if block_id != 0 and layer_id == 0:  # downsample the input of every block except the first
                        block = ResnetBlock(self.out_filters, strides=2, residual_path=True)
                    else:
                        block = ResnetBlock(self.out_filters, residual_path=False)
                    self.blocks.add(block)  # add the finished ResnetBlock to the network
                self.out_filters *= 2  # the next block uses twice as many kernels as the previous one
            self.p1 = tf.keras.layers.GlobalAveragePooling2D()
            self.f1 = tf.keras.layers.Dense(10, activation='softmax', kernel_regularizer=tf.keras.regularizers.l2())

        def call(self, inputs):
            x = self.c1(inputs)
            x = self.b1(x)
            x = self.a1(x)
            x = self.blocks(x)
            x = self.p1(x)
            y = self.f1(x)
            return y

    model = ResNet18([2, 2, 2, 2])
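To see which ResNet blocks the construction loop produces for `[2, 2, 2, 2]`, the sketch below replays the same loop in plain Python and records the arguments each `ResnetBlock` would receive. The function name `resnet_schedule` is hypothetical.

```python
def resnet_schedule(block_list, initial_filters=64):
    """Replay the ResNet18 construction loop; return (filters, strides, residual_path) per ResnetBlock."""
    schedule = []
    out_filters = initial_filters
    for block_id in range(len(block_list)):
        for layer_id in range(block_list[block_id]):
            if block_id != 0 and layer_id == 0:
                schedule.append((out_filters, 2, True))   # dashed line: downsample
            else:
                schedule.append((out_filters, 1, False))  # solid line: identity shortcut
        out_filters *= 2
    return schedule


for entry in resnet_schedule([2, 2, 2, 2]):
    print(entry)
# (64, 1, False)
# (64, 1, False)
# (128, 2, True)
# (128, 1, False)
# (256, 2, True)
# (256, 1, False)
# (512, 2, True)
# (512, 1, False)
```

Three of the eight ResNet blocks use the 1x1 shortcut, one at the start of each block after the first.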

Summary

This figure comes from lesson 41 of the [Peking University] TensorFlow 2.0 course on Bilibili.

Keep learning, keep going!