torch.nn.RReLU

Prototype

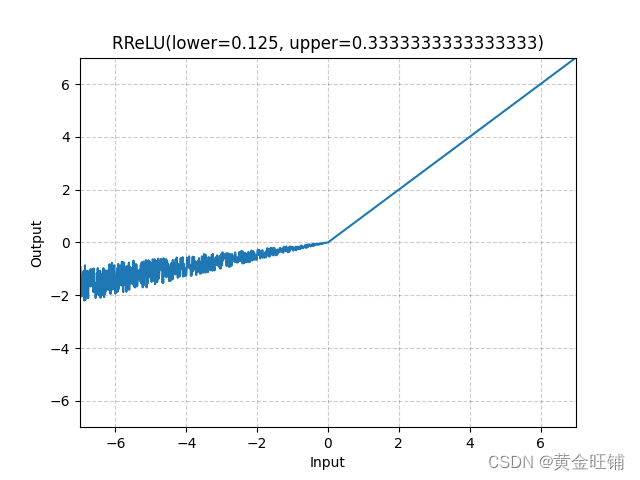

CLASS torch.nn.RReLU(lower=0.125, upper=0.3333333333333333, inplace=False)

Parameters

- lower (float) – lower bound of the uniform distribution. Default: $\frac{1}{8}$

- upper (float) – upper bound of the uniform distribution. Default: $\frac{1}{3}$

- inplace (bool) – can optionally do the operation in-place. Default: False
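The effect of `inplace` can be checked with a small sketch like the one below. The tensor values are arbitrary and chosen only for illustration. With `inplace=True` the input tensor is overwritten, and the returned tensor shares its storage.

```python
import torch
import torch.nn as nn

x = torch.tensor([1.0, -1.0, -4.0])
original = x.clone()  # keep a copy, because x is about to be overwritten

# A freshly constructed module is in training mode, so slopes are sampled randomly.
m = nn.RReLU(lower=0.125, upper=1 / 3, inplace=True)
y = m(x)

print(original)                      # the untouched copy
print(x)                             # negative entries have been scaled in place
print(y.data_ptr() == x.data_ptr())  # the output shares memory with the input
```

In-place operation saves one allocation, but the original values are lost, so it should only be used when the input is not needed afterwards.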

Definition

$$
\text{RReLU}(x) = \begin{cases} x, & \text{if } x \geq 0 \\ ax, & \text{otherwise} \end{cases}
$$

where $a$ is sampled uniformly at random from $\mathcal{U}(\text{lower}, \text{upper})$.
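The definition can be written out by hand as a rough sketch. The function name `rrelu_reference` and its `training` flag are hypothetical helpers introduced here for illustration and are not part of PyTorch. The sketch assumes one slope is drawn per element during training, and that in evaluation mode the slope is fixed to the midpoint $(\text{lower} + \text{upper}) / 2$, which is how `torch.nn.functional.rrelu` behaves with `training=False`.

```python
import torch
import torch.nn.functional as F


def rrelu_reference(x, lower=1 / 8, upper=1 / 3, training=True):
    """Hypothetical reference implementation of the RReLU formula."""
    if training:
        # One random slope per element, drawn uniformly from [lower, upper).
        a = torch.empty_like(x).uniform_(lower, upper)
    else:
        # Evaluation mode: deterministic slope at the midpoint of the range.
        a = torch.full_like(x, (lower + upper) / 2)
    # Non-negative inputs pass through unchanged; negative inputs are scaled by a.
    return torch.where(x >= 0, x, a * x)


x = torch.tensor([1.5, -2.0, 0.0, -0.5])
y_train = rrelu_reference(x, training=True)
y_eval = rrelu_reference(x, training=False)

print(y_train)  # negative entries change from run to run
print(y_eval)
print(torch.allclose(y_eval, F.rrelu(x, training=False)))  # compare with the built-in
```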

Plot

Code

import torch

import torch.nn as nn

m = nn.RReLU(0.1, 0.3)

input = torch.randn(4)

output = m(input)

print("input: ", input) # e.g. input: tensor([ 0.8879, -0.4108, -0.8519, -0.2371])

print("output: ", output) # e.g. output: tensor([ 0.8879, -0.1141, -0.1299, -0.0599]); negative entries vary from run to run
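The example above runs in training mode, where the slope is random. As a complementary sketch (input values chosen arbitrarily), switching the module to evaluation mode with `m.eval()` makes the output deterministic, because the slope is then fixed to $(\text{lower} + \text{upper}) / 2$:

```python
import torch
import torch.nn as nn

m = nn.RReLU(0.1, 0.3)
x = torch.tensor([0.5, -1.0, -2.0])

m.train()
out_a = m(x)
out_b = m(x)  # negative entries generally differ between the two calls

m.eval()
out_eval = m(x)  # slope is fixed to (0.1 + 0.3) / 2 = 0.2
print(out_eval)  # tensor([ 0.5000, -0.2000, -0.4000])
```

This mirrors how dropout behaves: randomness acts as a regularizer during training and is replaced by its expected value at inference time.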

References

RReLU — PyTorch 1.13 documentation

Empirical Evaluation of Rectified Activations in Convolutional Network.