torch.nn.SELU

Prototype

CLASS torch.nn.SELU(inplace=False)

Parameters

- inplace (bool, optional) – if True, performs the operation in place, overwriting the input tensor. Default: False
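The effect of `inplace` can be checked with a minimal sketch like the one below. The tensor values and the variable names (`x`, `z`, `x_unchanged`, `same_storage`) are illustrative assumptions, not part of the original note.

```python
import torch
import torch.nn as nn

x = torch.tensor([-1.0, 0.0, 2.0])

# Default (inplace=False): a new tensor is returned and x is left unchanged.
y = nn.SELU()(x)
x_unchanged = torch.equal(x, torch.tensor([-1.0, 0.0, 2.0]))

# inplace=True: the result is written into the input tensor's own storage.
z = x.clone()
out = nn.SELU(inplace=True)(z)
same_storage = out.data_ptr() == z.data_ptr()

print("x unchanged:", x_unchanged)
print("output shares storage with input:", same_storage)
```

In-place mode saves one allocation, but it overwrites the input, so it is usually best avoided when the original values are still needed elsewhere.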

Definition

Applied element-wise, as:

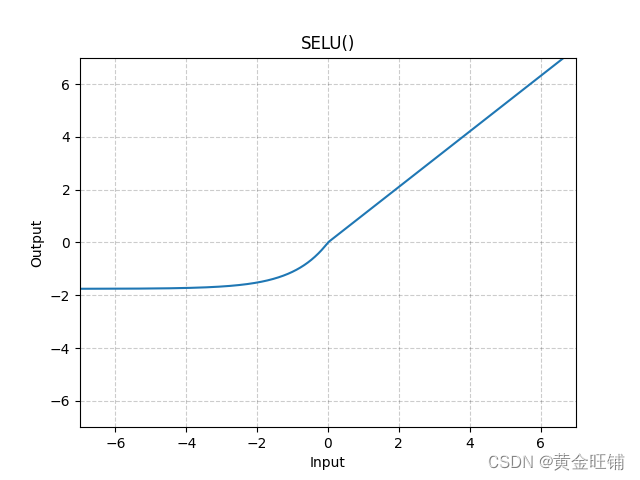

$$\text{SELU}(x) = \text{scale} \cdot \left(\max(0, x) + \min\left(0, \alpha \cdot (\exp(x) - 1)\right)\right)$$
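As a sanity check, the formula can be written out by hand and compared with the built-in module. This is only a sketch: the helper name `selu_manual` is hypothetical, and the values of α and scale are not given in this note; they are the constants listed in the PyTorch documentation for SELU.

```python
import torch
import torch.nn as nn

# Assumption: constants taken from the PyTorch documentation for SELU.
ALPHA = 1.6732632423543772848170429916717
SCALE = 1.0507009873554804934193349852946


def selu_manual(x: torch.Tensor) -> torch.Tensor:
    """Hand-written SELU following the element-wise formula (hypothetical helper)."""
    positive_part = torch.clamp(x, min=0)                           # max(0, x)
    negative_part = torch.clamp(ALPHA * (torch.exp(x) - 1), max=0)  # min(0, alpha * (exp(x) - 1))
    return SCALE * (positive_part + negative_part)


x = torch.tensor([-0.5830, -1.3986, 0.2560, -2.6909])
manual = selu_manual(x)
builtin = nn.SELU()(x)

print("manual: ", manual)
print("builtin:", builtin)
print("match:  ", torch.allclose(manual, builtin, atol=1e-6))
```

For positive inputs the function is simply `scale * x`; for negative inputs it saturates toward `-scale * α` (roughly -1.758).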

Figure

Code

import torch

import torch.nn as nn

m = nn.SELU()

input = torch.randn(4)

output = m(input)

print("input: ", input)  # e.g. input: tensor([-0.5830, -1.3986, 0.2560, -2.6909])

print("output: ", output)  # e.g. output: tensor([-0.7767, -1.3240, 0.2689, -1.6389])