torch.nn.SiLU

Prototype

CLASS torch.nn.SiLU(inplace=False)

Definition

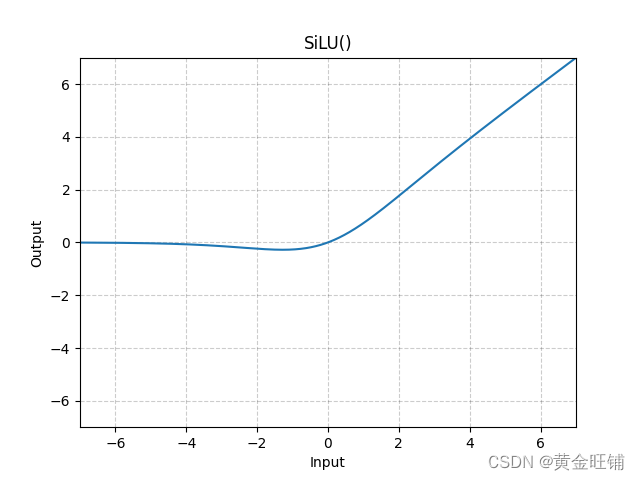

$$\text{silu}(x) = x \cdot \sigma(x), \quad \text{where } \sigma(x) \text{ is the logistic sigmoid}$$
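To make the definition concrete, here is a minimal pure-Python sketch of the same formula for a single float, using the standard form of the logistic sigmoid, σ(x) = 1 / (1 + e^(-x)). The helper names `sigmoid` and `silu` are illustrative only and are not part of the PyTorch API; the branch on the sign of `x` is an added assumption to avoid overflow in `math.exp`, not something the definition requires.

```python
import math


def sigmoid(x: float) -> float:
    """Logistic sigmoid, written so exp() never gets a large positive argument."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)  # x < 0 here, so z lies in [0, 1)
    return z / (1.0 + z)


def silu(x: float) -> float:
    """SiLU for a single float: x * sigmoid(x). Hypothetical helper, not torch API."""
    return x * sigmoid(x)


# Tabulate a few points to get a feel for the shape of the curve.
for x in (-4.0, -1.0, 0.0, 1.0, 4.0):
    print(f"silu({x:+.1f}) = {silu(x):+.4f}")
```

Calling `silu` on the sample inputs printed in the code section should reproduce the sample outputs there to about four decimal places, which is a quick way to check that the module matches the formula.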

Plot

Code

import torch

import torch.nn as nn

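m = nn.SiLU() # SiLU activation module (inplace=False by default)

input = torch.randn(4) # 4 random samples from a standard normal distribution

output = m(input) # applies x * sigmoid(x) element-wise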

print("input: ", input) # e.g. input: tensor([ 0.3852, -0.5519, 0.8902, 2.1292]); values differ on every run

print("output: ", output) # e.g. output: tensor([ 0.2292, -0.2017, 0.6311, 1.9029])