# Dataset: MNIST (28x28 grayscale handwritten digits, 10 classes)

# Imports
import torch
import torch.nn as nn  # All neural network modules: nn.Linear, nn.Conv2d, BatchNorm, loss functions
import torch.optim as optim  # All optimization algorithms: SGD, Adam, etc.
import torch.nn.functional as F  # Functions with no learnable parameters of their own, e.g. F.relu
from torch.utils.data import DataLoader  # Easier dataset management; creates mini-batches
import torchvision.datasets as datasets  # Standard datasets (such as MNIST) that can be downloaded directly
import torchvision.transforms as transforms  # Transformations we can apply to the dataset

# TODO:Create simple CNN

class CNN(nn.Module):

def __init__(self,in_channels=1,num_classes=10):

super(CNN, self).__init__()

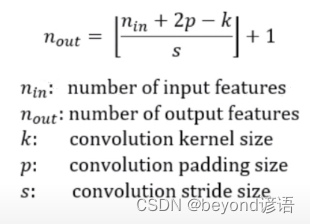

self.conv1 = nn.Conv2d(in_channels=1,out_channels=8,kernel_size=(3,3),stride=(1,1),padding=(1,1))

self.pool = nn.MaxPool2d(kernel_size=(2,2),stride=(2,2))

self.conv2 = nn.Conv2d(in_channels=8,out_channels=16,kernel_size=(3,3),stride=(1,1),padding=(1,1))

self.fc1 = nn.Linear(16*7*7,num_classes)

def forward(self,x):

x = F.relu(self.conv1(x))

x = self.pool(x)

x = F.relu(self.conv2(x))

x = self.pool(x)

x = x.reshape(x.shape[0],-1)

x = self.fc1(x)

return x

# Quick shape check:
# model = CNN()
# x = torch.randn(64, 1, 28, 28)
# print(model(x).shape)  # torch.Size([64, 10])
# exit()
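
# Where 16 * 7 * 7 comes from: a minimal sketch (the helper names below are
# hypothetical, not part of PyTorch) that applies the Conv2d/MaxPool2d
# output-size formula for dilation 1,
#     out = floor((in + 2 * padding - kernel) / stride) + 1,
# to one spatial side of a 28x28 MNIST image, and counts the learnable
# parameters (weights + biases) of each layer in CNN.
def conv_output_size(size, kernel, stride, padding):
    """Spatial output size of a Conv2d or MaxPool2d layer (dilation 1, floor mode)."""
    return (size + 2 * padding - kernel) // stride + 1


def trace_cnn_shapes(size=28):
    """Follow one spatial dimension through conv1 -> pool -> conv2 -> pool."""
    size = conv_output_size(size, kernel=3, stride=1, padding=1)  # conv1: 28 -> 28
    size = conv_output_size(size, kernel=2, stride=2, padding=0)  # pool:  28 -> 14
    size = conv_output_size(size, kernel=3, stride=1, padding=1)  # conv2: 14 -> 14
    size = conv_output_size(size, kernel=2, stride=2, padding=0)  # pool:  14 -> 7
    return size


def count_cnn_parameters(in_channels=1, num_classes=10):
    """Weights + biases of conv1, conv2 and fc1 as configured in CNN."""
    conv1 = 8 * in_channels * 3 * 3 + 8
    conv2 = 16 * 8 * 3 * 3 + 16
    fc1 = 16 * 7 * 7 * num_classes + num_classes
    return conv1 + conv2 + fc1

# trace_cnn_shapes() returns 7, so the flattened feature vector has 16 * 7 * 7 = 784
# entries. count_cnn_parameters() returns 9098 (80 + 1168 + 7850), which should
# equal sum(p.numel() for p in CNN().parameters()).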

# Set device
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

# Hyperparameters
input_size = 1  # number of input channels (MNIST images are grayscale)
num_classes = 10
learning_rate = 1e-3
batch_size = 64
num_epochs = 5

# Load data (downloaded under "../" on the first run)
train_dataset = datasets.MNIST("../", train=True, transform=transforms.ToTensor(), download=True)
test_dataset = datasets.MNIST("../", train=False, transform=transforms.ToTensor(), download=True)
train_loader = DataLoader(dataset=train_dataset, batch_size=batch_size, shuffle=True)
test_loader = DataLoader(dataset=test_dataset, batch_size=batch_size, shuffle=False)  # order does not matter for evaluation
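
# How many iterations one epoch takes: a small sketch (num_batches is a
# hypothetical helper) of how DataLoader splits a dataset into mini-batches.
# With the default drop_last=False the final, smaller batch is kept, so the
# count is ceil(len(dataset) / batch_size). MNIST has 60,000 training and
# 10,000 test images, so with batch_size = 64, len(train_loader) should be 938
# (the last batch holds 60000 - 937 * 64 = 32 images) and len(test_loader) 157.
import math


def num_batches(num_samples, batch_size, drop_last=False):
    """Number of mini-batches a DataLoader yields for a map-style dataset."""
    if drop_last:
        return num_samples // batch_size
    return math.ceil(num_samples / batch_size)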

# Initialize network
model = CNN(in_channels=input_size, num_classes=num_classes).to(device=device)

# Loss and optimizer
criterion = nn.CrossEntropyLoss()
optimizer = optim.Adam(params=model.parameters(), lr=learning_rate)
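
# Why forward() returns raw scores without a softmax: nn.CrossEntropyLoss
# applies log_softmax and the negative log-likelihood loss internally, so it
# expects unnormalized logits and integer class labels. A minimal sketch that
# checks this equivalence on a made-up batch (illustrative values, not MNIST data):
import torch
import torch.nn as nn
import torch.nn.functional as F


def cross_entropy_matches_nll():
    logits = torch.tensor([[2.0, 0.5, -1.0], [0.1, 0.2, 3.0]])  # 2 samples, 3 classes
    labels = torch.tensor([0, 2])  # class indices, like the MNIST targets
    ce = nn.CrossEntropyLoss()(logits, labels)
    nll = F.nll_loss(F.log_softmax(logits, dim=1), labels)
    return torch.allclose(ce, nll)

# cross_entropy_matches_nll() returns True. Adding a softmax at the end of
# forward() would therefore normalize the scores twice.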

# Train network
for epoch in range(num_epochs):
    for batch_idx, (data, targets) in enumerate(train_loader):
        # Get data to CUDA if possible
        data = data.to(device)
        targets = targets.to(device)
        # print(data.shape)

        # Forward
        scores = model(data)
        loss = criterion(scores, targets)

        # Backward
        optimizer.zero_grad()
        loss.backward()

        # Gradient descent or Adam step
        optimizer.step()
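
# Why optimizer.zero_grad() is called on every iteration: backward() adds the
# new gradients to whatever is already stored in .grad instead of overwriting
# it. A minimal sketch on a single made-up parameter w with loss = 3 * w
# (gradient_after_two_backward_calls is a hypothetical helper):
import torch


def gradient_after_two_backward_calls(zero_between):
    w = torch.tensor(1.0, requires_grad=True)
    opt = torch.optim.SGD([w], lr=0.1)
    for _ in range(2):
        if zero_between:
            opt.zero_grad()  # clear gradients left over from the previous step
        loss = 3 * w  # d(loss)/dw = 3
        loss.backward()
    return w.grad.item()

# With zero_between=True the result is 3.0; with False the two gradients
# accumulate to 6.0, which is what the training loop would see without zero_grad().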

# Check accuracy on training & test data to see how good our model is
def check_accuracy(loader, model):
    if loader.dataset.train:
        print("Checking accuracy on training data")
    else:
        print("Checking accuracy on test data")

    num_correct = 0
    num_samples = 0
    model.eval()  # switch to evaluation mode

    with torch.no_grad():  # gradients are not needed for evaluation
        for x, y in loader:
            x = x.to(device)
            y = y.to(device)

            scores = model(x)
            _, predictions = scores.max(1)
            num_correct += (predictions == y).sum().item()
            num_samples += predictions.size(0)

    print(f"Got {num_correct}/{num_samples} with accuracy {num_correct / num_samples * 100:.2f}%")
    model.train()  # switch back to training mode
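
# How check_accuracy turns scores into predictions: scores.max(1) returns a
# (values, indices) pair along the class dimension, and the indices are the
# predicted labels, the same as scores.argmax(dim=1). A minimal sketch on a
# made-up batch of 4 samples and 3 classes (batch_accuracy and the example
# tensors are hypothetical, for illustration only):
import torch


def batch_accuracy(scores, labels):
    _, predictions = scores.max(1)  # index of the highest score in each row
    assert torch.equal(predictions, scores.argmax(dim=1))
    num_correct = (predictions == labels).sum().item()
    return num_correct / labels.size(0)


example_scores = torch.tensor([
    [0.9, 0.05, 0.05],
    [0.1, 0.7, 0.2],
    [0.3, 0.3, 0.4],
    [0.8, 0.1, 0.1],
])
example_labels = torch.tensor([0, 1, 2, 1])
# batch_accuracy(example_scores, example_labels) is 0.75: the last sample is
# predicted as class 0 but labeled 1.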

check_accuracy(train_loader,model)

check_accuracy(test_loader,model)