大多数情况下,mxnet都使用python接口进行机器学习程序的编写,方便快捷,但是有的时候,需要把机器学习训练和识别的程序部署到生产版的程序中去,比如游戏或者云服务,此时采用C++等高级语言去编写才能提高性能,本文介绍了如何在windows系统下从源码编译mxnet,安装python版的包,并使用C++原生接口创建示例程序。

目标

- 编译出libmxnet.lib和libmxnet.dll的gpu版本

- 从源码安装mxnet python包

- 构建mxnet C++示例程序

环境

- windows10

- vs2015

- cmake3.7.2

- Miniconda2(python2.7.14)

- CUDA8.0

- mxnet1.2

- opencv3.4.1

- OpenBLAS-v0.2.19-Win64-int32

- cudnn-8.0-windows10-x64-v7.1(如果编译cpu版本的mxnet,则此项不需要)

步骤

下载源码

最好用git下载,递归地下载所有依赖的子repo,源码的根目录为mxnet

git clone --recursive https://github.com/dmlc/mxnet

依赖库

在此之前确保cmake和python已经正常安装,并且添加到环境变量,然后再下载第三方依赖库

- 下载安装cuda,确保机器是英伟达显卡,且支持cuda,地址:https://developer.nvidia.com/cuda-toolkit

- 下载安装opencv预编译版,地址:https://sourceforge.net/projects/opencvlibrary/files/opencv-win/3.4.1/opencv-3.4.1-vc14_vc15.exe/download

- 下载openblas预编译版,地址:https://sourceforge.net/projects/openblas/files/v0.2.19/

- 下载cudnn预编译版,注意与cuda版本对应,地址:https://developer.nvidia.com/compute/machine-learning/cudnn/secure/v7.0.5/prod/8.0_20171129/cudnn-8.0-windows10-x64-v7

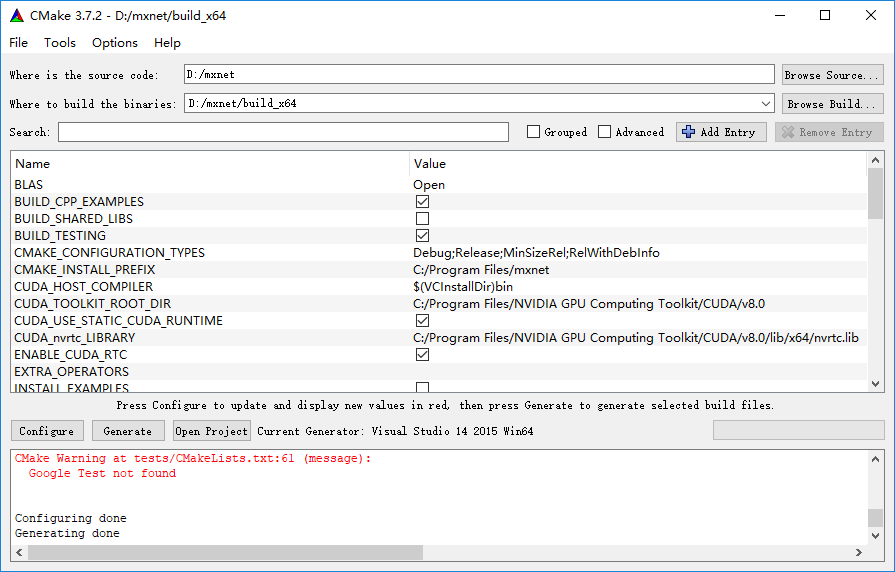

cmake配置

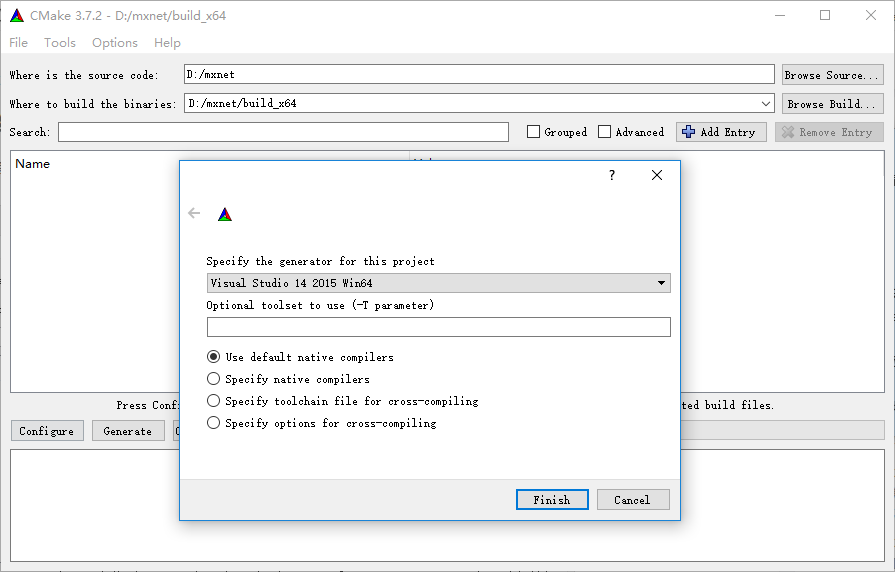

打开cmake-gui,配置源码目录和生成目录,编译器选择vs2015 win64

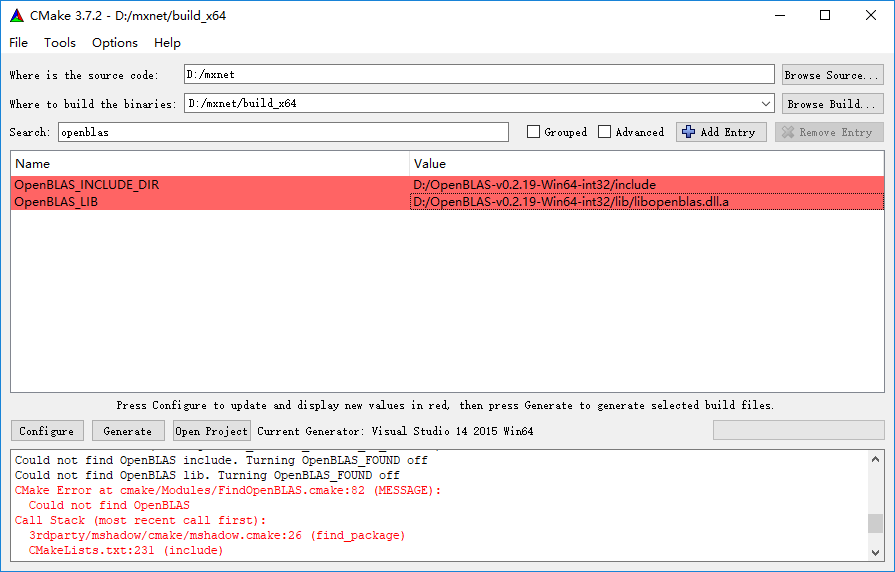

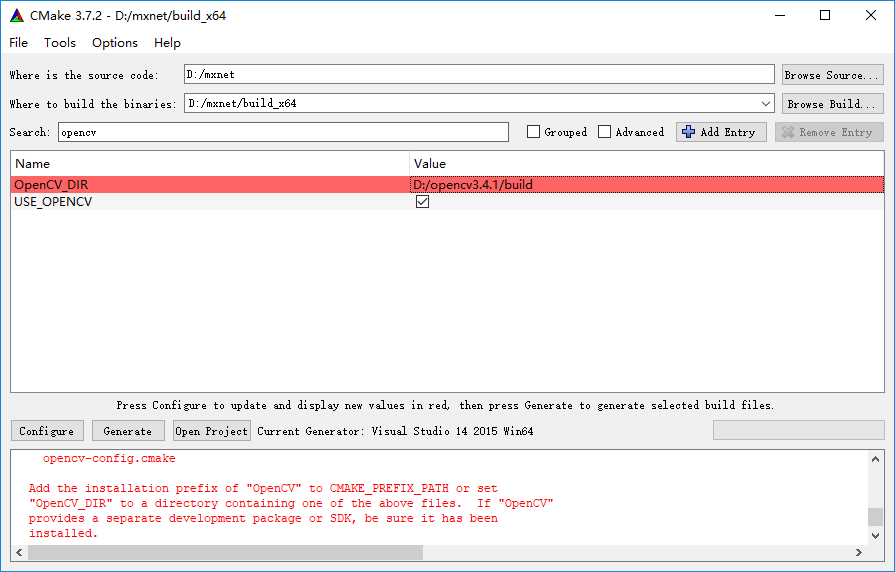

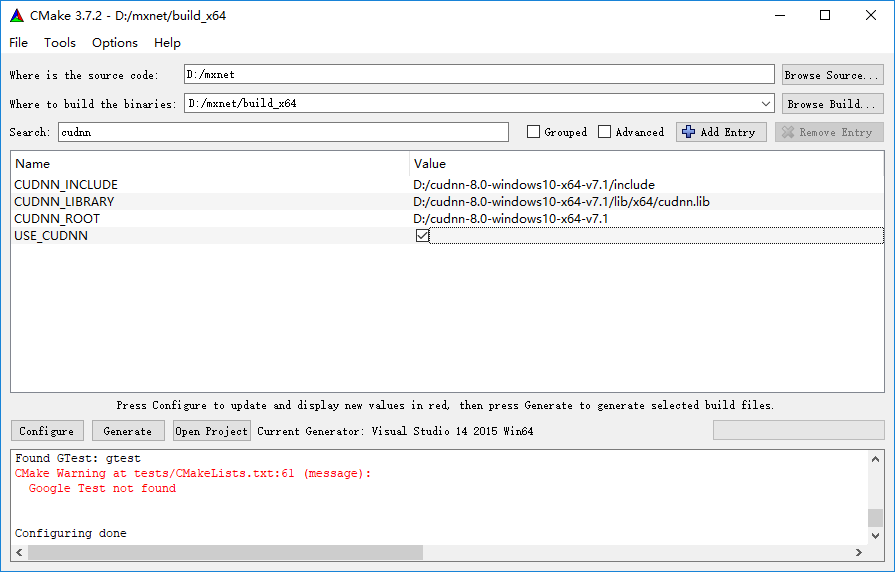

配置第三方依赖库

configure和generate

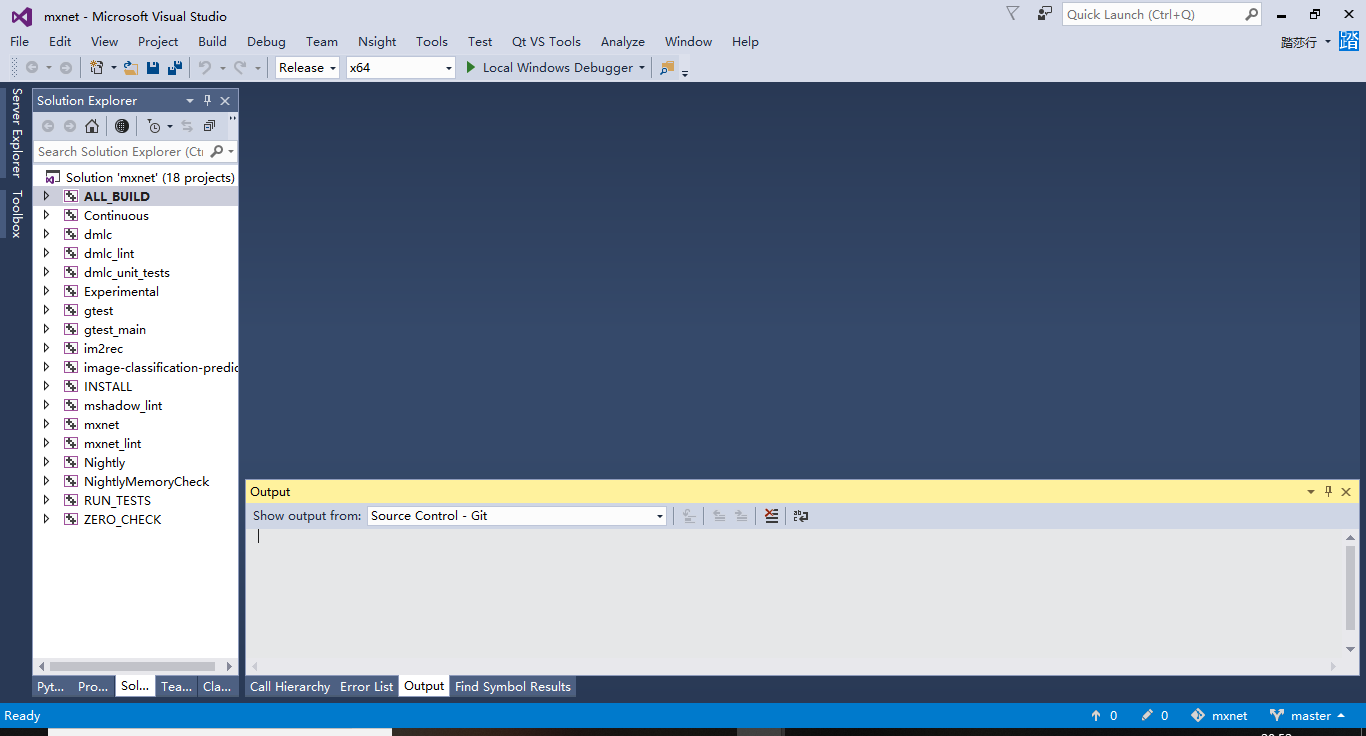

编译vs工程

打开mxnet.sln,配置成release x64模式,编译整个solution

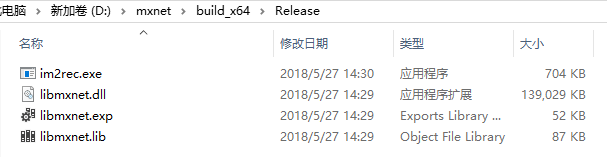

编译完成后会在对应文件夹生成mxnet的lib和dll

此时整个过程成功了一半

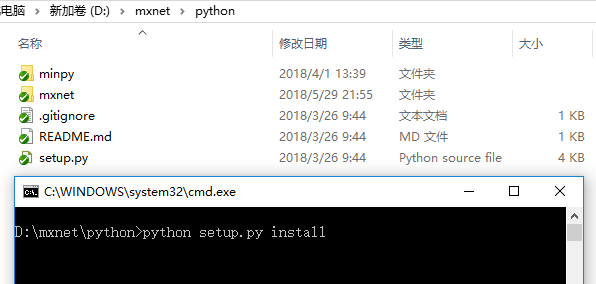

安装mxnet的python包

有了libmxnet.dll就可以同源码安装python版的mxnet包了

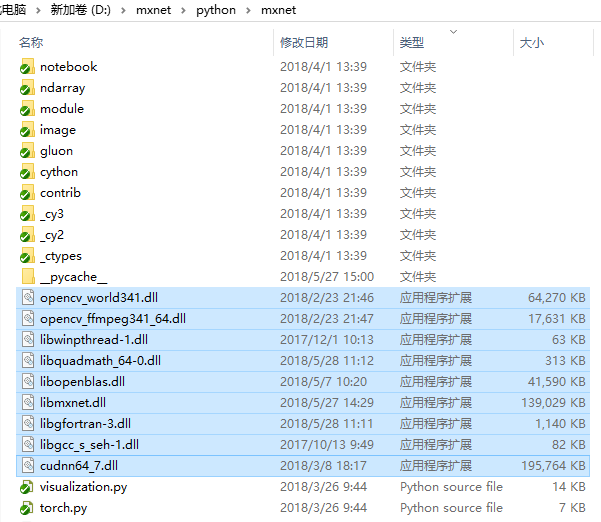

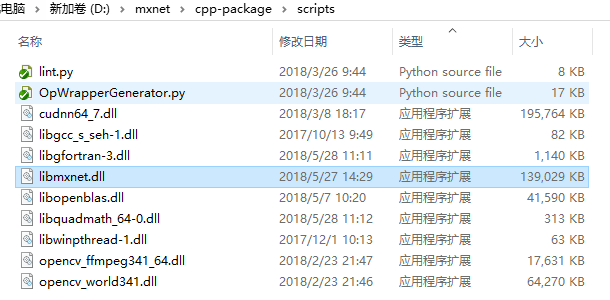

不过,前提是需要集齐所有依赖到的其他dll,如图所示,将这些dll全部拷贝到mxnet/python/mxnet目录下

tip: 关于dll的来源

- opencv,openblas,cudnn相关dll都是从这几个库的目录里拷过来的

- libgcc_s_seh-1.dll和libwinpthread-1.dll是从mingw相关的库目录里拷过来的,git,qt等这些目录都有

- libgfortran-3.dll和libquadmath_64-0.dll是从adda(https://github.com/adda-team/adda/releases)这个库里拷过来的,注意改名

然后,在mxnet/python目录下使用命令行安装mxnet的python包

python setup.py install

安装过程中,python会自动把对应的dll考到安装目录,正常安装完成后,在python中就可以 import mxnet 了

生成C++依赖头文件

为了能够使用C++原生接口,这一步是很关键的一步,目的是生成mxnet C++程序依赖的op.h文件

在mxnet/cpp-package/scripts目录,将所有依赖到的dll拷贝进来

在此目录运行命令行

python OpWrapperGenerator.py libmxnet.dll

正常情况下就可以在mxnet/cpp-package/include/mxnet-cpp目录下生成op.h了

如果这个过程中出现一些error,多半是dll文件缺失或者版本不对,很好解决

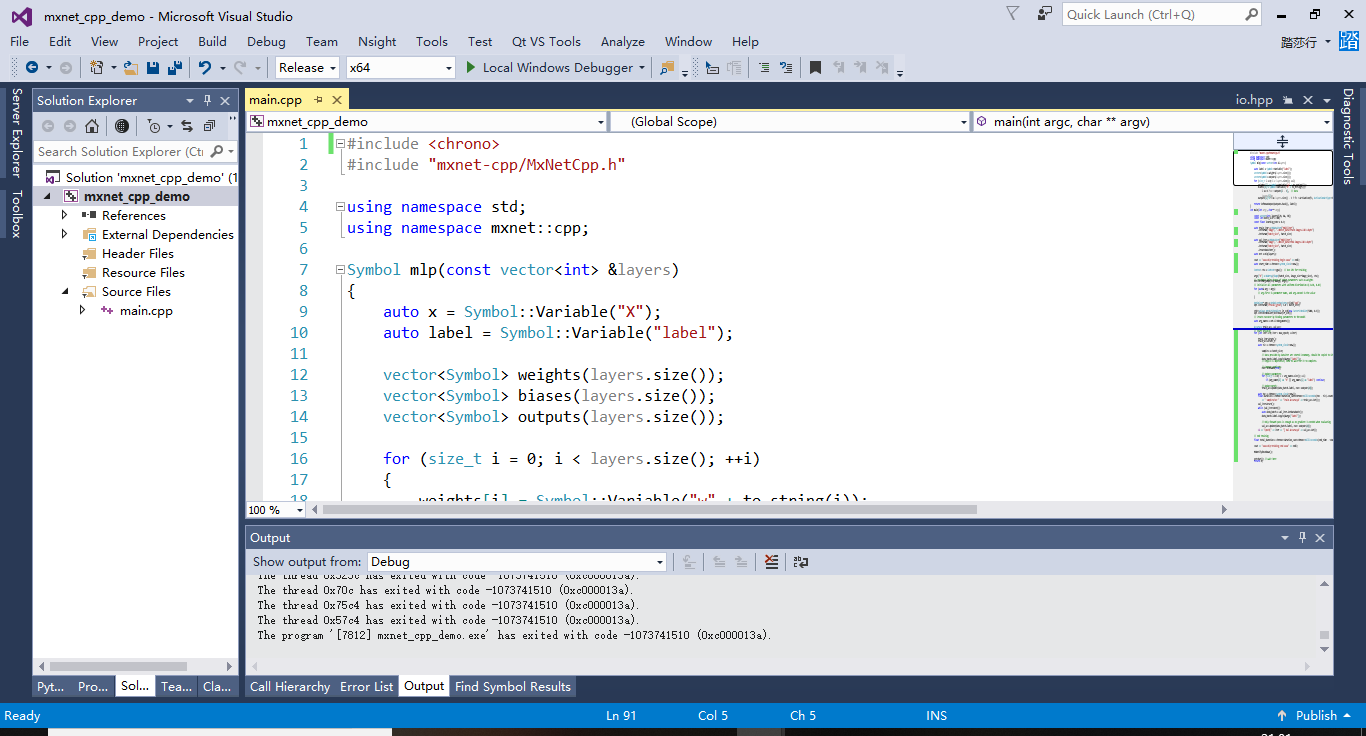

构建C++示例程序

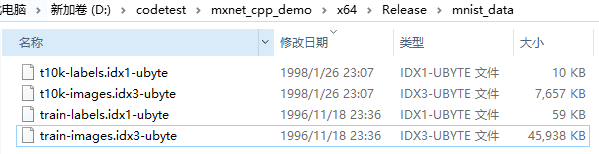

建立cpp工程,这里使用经典的mnist手写数字识别示例(请提前下载好mnist数据,地址:mnist)

选择release x64模式

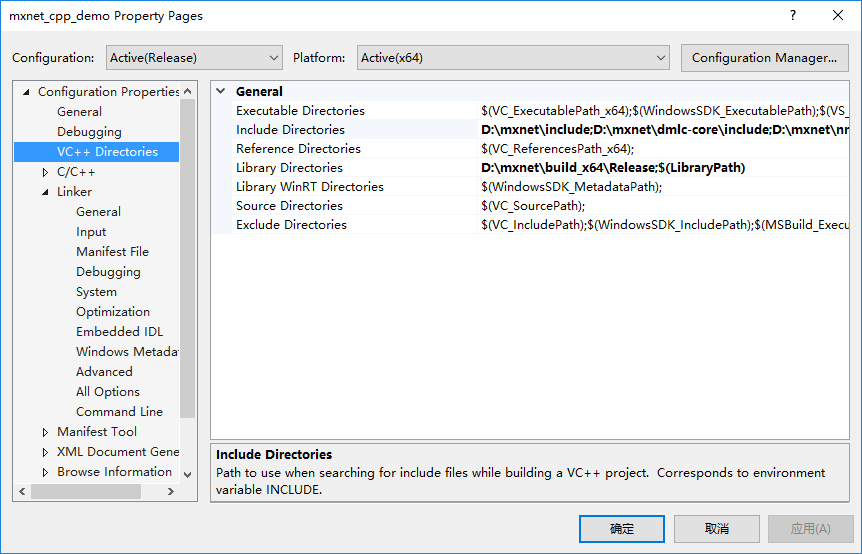

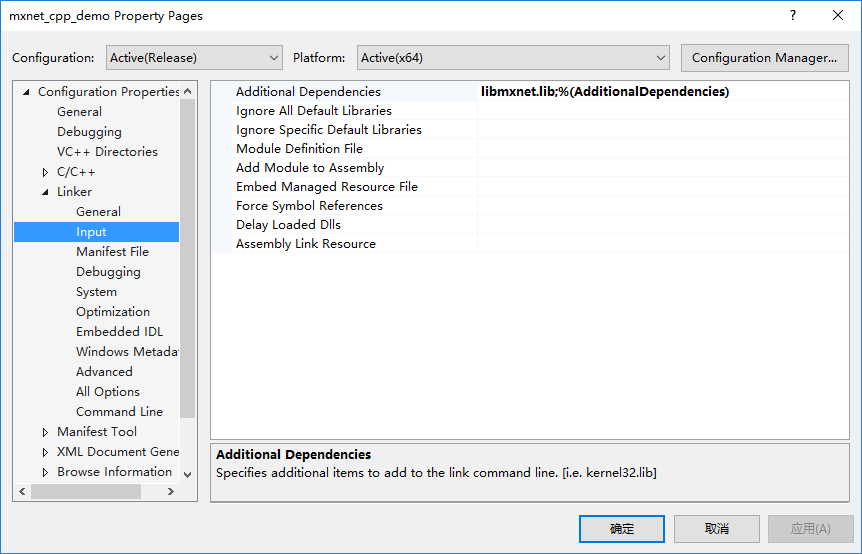

配置include和lib目录以及附加依赖项

include目录包括:

- D:\mxnet\include

- D:\mxnet\dmlc-core\include

- D:\mxnet\nnvm\include

- D:\mxnet\cpp-package\include

lib目录:

- D:\mxnet\build_x64\Release

附加依赖项:

- libmxnet.lib

代码 main.cpp

#include <chrono>

#include "mxnet-cpp/MxNetCpp.h"

using namespace std;

using namespace mxnet::cpp;

Symbol mlp(const vector<int> &layers)

{

auto x = Symbol::Variable("X");

auto label = Symbol::Variable("label");

vector<Symbol> weights(layers.size());

vector<Symbol> biases(layers.size());

vector<Symbol> outputs(layers.size());

for (size_t i = 0; i < layers.size(); ++i)

{

weights[i] = Symbol::Variable("w" + to_string(i));

biases[i] = Symbol::Variable("b" + to_string(i));

Symbol fc = FullyConnected(

i == 0 ? x : outputs[i - 1], // data

weights[i],

biases[i],

layers[i]);

outputs[i] = i == layers.size() - 1 ? fc : Activation(fc, ActivationActType::kRelu);

}

return SoftmaxOutput(outputs.back(), label);

}

int main(int argc, char** argv)

{

const int image_size = 28;

const vector<int> layers{128, 64, 10};

const int batch_size = 100;

const int max_epoch = 10;

const float learning_rate = 0.1;

const float weight_decay = 1e-2;

auto train_iter = MXDataIter("MNISTIter")

.SetParam("image", "./mnist_data/train-images.idx3-ubyte")

.SetParam("label", "./mnist_data/train-labels.idx1-ubyte")

.SetParam("batch_size", batch_size)

.SetParam("flat", 1)

.CreateDataIter();

auto val_iter = MXDataIter("MNISTIter")

.SetParam("image", "./mnist_data/t10k-images.idx3-ubyte")

.SetParam("label", "./mnist_data/t10k-labels.idx1-ubyte")

.SetParam("batch_size", batch_size)

.SetParam("flat", 1)

.CreateDataIter();

auto net = mlp(layers);

// start traning

cout << "==== mlp training begin ====" << endl;

auto start_time = chrono::system_clock::now();

//Context ctx = Context::cpu();

Context ctx = Context::gpu(); // Use GPU for training

std::map<string, NDArray> args;

args["X"] = NDArray(Shape(batch_size, image_size*image_size), ctx);

args["label"] = NDArray(Shape(batch_size), ctx);

// Let MXNet infer shapes of other parameters such as weights

net.InferArgsMap(ctx, &args, args);

// Initialize all parameters with uniform distribution U(-0.01, 0.01)

auto initializer = Uniform(0.01);

for (auto& arg : args)

{

// arg.first is parameter name, and arg.second is the value

initializer(arg.first, &arg.second);

}

// Create sgd optimizer

Optimizer* opt = OptimizerRegistry::Find("sgd");

opt->SetParam("rescale_grad", 1.0 / batch_size)

->SetParam("lr", learning_rate)

->SetParam("wd", weight_decay);

std::unique_ptr<LRScheduler> lr_sch(new FactorScheduler(5000, 0.1));

opt->SetLRScheduler(std::move(lr_sch));

// Create executor by binding parameters to the model

auto *exec = net.SimpleBind(ctx, args);

auto arg_names = net.ListArguments();

// Create metrics

Accuracy train_acc, val_acc;

// Start training

for (int iter = 0; iter < max_epoch; ++iter)

{

int samples = 0;

train_iter.Reset();

train_acc.Reset();

auto tic = chrono::system_clock::now();

while (train_iter.Next())

{

samples += batch_size;

auto data_batch = train_iter.GetDataBatch();

// Data provided by DataIter are stored in memory, should be copied to GPU first.

data_batch.data.CopyTo(&args["X"]);

data_batch.label.CopyTo(&args["label"]);

// CopyTo is imperative, need to wait for it to complete.

NDArray::WaitAll();

// Compute gradients

exec->Forward(true);

exec->Backward();

// Update parameters

for (size_t i = 0; i < arg_names.size(); ++i)

{

if (arg_names[i] == "X" || arg_names[i] == "label") continue;

opt->Update(i, exec->arg_arrays[i], exec->grad_arrays[i]);

}

// Update metric

train_acc.Update(data_batch.label, exec->outputs[0]);

}

// one epoch of training is finished

auto toc = chrono::system_clock::now();

float duration = chrono::duration_cast<chrono::milliseconds>(toc - tic).count() / 1000.0;

LG << "Epoch[" << iter << "] " << samples / duration \

<< " samples/sec " << "Train-Accuracy=" << train_acc.Get();;

val_iter.Reset();

val_acc.Reset();

while (val_iter.Next())

{

auto data_batch = val_iter.GetDataBatch();

data_batch.data.CopyTo(&args["X"]);

data_batch.label.CopyTo(&args["label"]);

NDArray::WaitAll();

// Only forward pass is enough as no gradient is needed when evaluating

exec->Forward(false);

val_acc.Update(data_batch.label, exec->outputs[0]);

}

LG << "Epoch[" << iter << "] Val-Accuracy=" << val_acc.Get();

}

// end training

auto end_time = chrono::system_clock::now();

float total_duration = chrono::duration_cast<chrono::milliseconds>(end_time - start_time).count() / 1000.0;

cout << "total duration: " << total_duration << " s" << endl;

cout << "==== mlp training end ====" << endl;

//delete exec;

MXNotifyShutdown();

getchar(); // wait here

return 0;

}

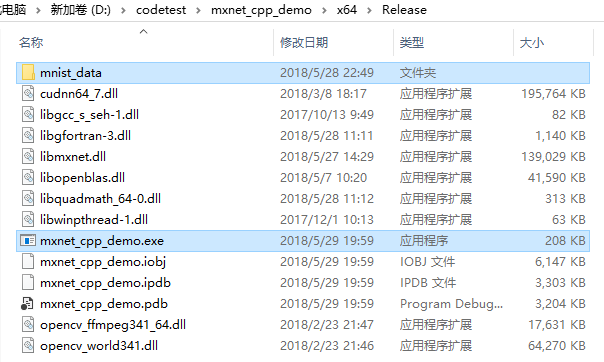

编译生成目录

- 预先把mnist数据拷进去,维持相对目录结构

- 在执行目录也要把所有依赖的dll拷贝进来

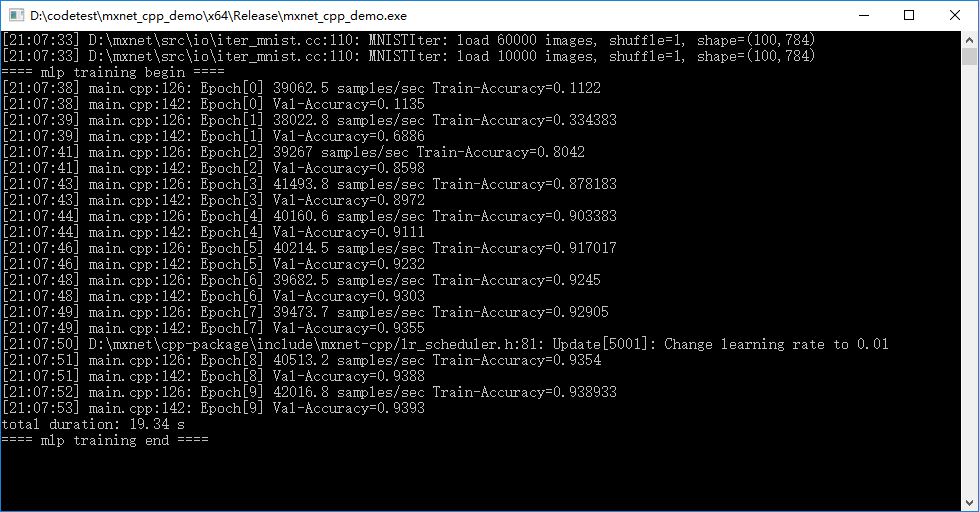

运行结果

可以看出,在数据量小的情况下,gpu版本并不明显比cpu版本消耗的训练时间少

至此,大功告成