# Real-Time Data Warehouse Project: Data Collection and the ODS Layer

Configuring Canal to capture MySQL data in real time

1. Enable the binlog in MySQL

- Edit the MySQL configuration file (Linux: /etc/my.cnf, Windows: \my.ini)

log-bin=mysql-bin # enable the binlog

binlog-format=ROW # use ROW mode

binlog-do-db=dwshow # dwshow is the name of the database to log

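binlog-format can be set to STATEMENT, ROW, or MIXED. They differ as follows:

| Mode | Characteristics |
|---|---|
| statement | Logs the SQL statement of each write. Saves space, but can leave the data inconsistent |
| row | Logs the change to every affected row after each operation. Takes more space |
| mixed | Logs statements by default and switches to row format for statements such as those using UUID() or UDFs. Inconsistencies remain possible in edge cases, and the mixed format is inconvenient for binlog monitoring |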

- Restart MySQL and create the canal user

systemctl restart mysqld

# After MySQL restarts, check the directory below for mysql-bin.***** files

cd /var/lib/mysql

GRANT SELECT, REPLICATION SLAVE, REPLICATION CLIENT ON *.* TO 'canal'@'%' IDENTIFIED BY 'canal' ;

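2. Install and configure Canal to send data to Kafka

- Download the Canal package and extract it into the installation directory

Download: https://github.com/alibaba/canal/releases

Copy the downloaded canal.deployer-1.1.4.tar.gz to the Linux host and extract it (adjust the paths as needed)

[root@linux123 ~]# mkdir -p /opt/modules/canal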

[root@linux123 mysql]# tar -zxf canal.deployer-1.1.4.tar.gz -C /opt/modules/canal

- Edit conf/canal.properties to set the ZooKeeper and Kafka addresses

# ZooKeeper addresses

canal.zkServers = linux121:2181,linux123:2181

# tcp, kafka, RocketMQ

canal.serverMode = kafka

# Kafka broker addresses

canal.mq.servers = linux121:9092,linux123:9092

- Edit conf/example/instance.properties

Set the MySQL host, the database username and password, and the Kafka topic that Canal publishes to

# Host and port of the MySQL instance

canal.instance.master.address = linux123:3306

# username/password: the database user and password

canal.instance.dbUsername = canal

canal.instance.dbPassword = canal

# mq config: the target Kafka topic

canal.mq.topic = test

- Start Canal

sh bin/startup.sh

- Stop Canal

sh bin/stop.sh

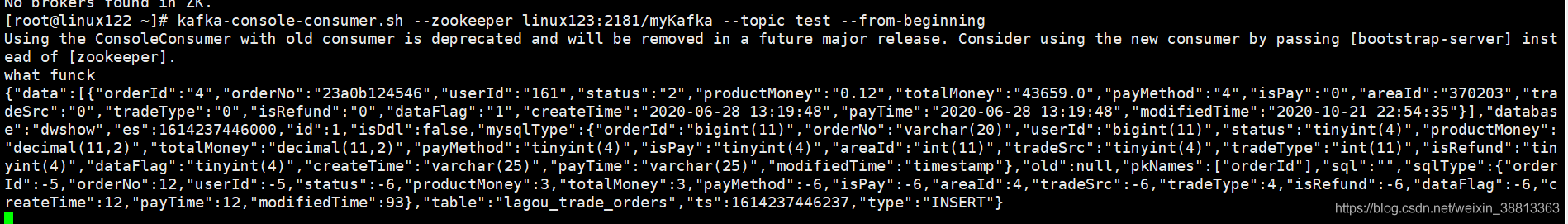

3. Start a Kafka consumer to verify the data
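One way to run this check, sketched here under assumptions, is a small Scala consumer built on the plain `kafka-clients` API that prints every message Canal publishes to the `test` topic. The broker addresses and topic come from the configuration above; the object name `VerifyCanalTopic`, the group ID `canal-verify`, and starting from `earliest` are choices made only for this illustration. The console consumer bundled with Kafka serves the same purpose.

```scala
import java.time.Duration
import java.util.{Collections, Properties}

import org.apache.kafka.clients.consumer.KafkaConsumer

object VerifyCanalTopic {

  // Consumer settings; the broker list mirrors canal.mq.servers (assumed to be reachable).
  def consumerProps(groupId: String): Properties = {
    val props = new Properties()
    props.setProperty("bootstrap.servers", "linux121:9092,linux123:9092")
    props.setProperty("group.id", groupId)
    props.setProperty("key.deserializer", "org.apache.kafka.common.serialization.StringDeserializer")
    props.setProperty("value.deserializer", "org.apache.kafka.common.serialization.StringDeserializer")
    // Read from the beginning so messages published before startup are shown as well.
    props.setProperty("auto.offset.reset", "earliest")
    props
  }

  def main(args: Array[String]): Unit = {
    val consumer = new KafkaConsumer[String, String](consumerProps("canal-verify"))
    try {
      consumer.subscribe(Collections.singletonList("test"))
      // Poll until the process is interrupted, printing each raw JSON message.
      while (true) {
        val records = consumer.poll(Duration.ofSeconds(1))
        val it = records.iterator()
        while (it.hasNext) println(it.next().value())
      }
    } finally {
      consumer.close()
    }
  }
}
```

With the consumer running, a change made in the dwshow database should appear as a JSON message whose `database`, `table`, `type`, and `data` fields are the ones the Flink job reads later.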

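Processing ODS-layer data and loading it into HBase

1. Consume Kafka data with Flink

- Write a utility class that returns a Kafka consumer to use as the Flink source. It needs the broker addresses, the key and value deserializers, the consumer group ID, and the offset to start consuming from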

package myUtils

import java.util.Properties

import org.apache.flink.api.common.serialization.SimpleStringSchema

import org.apache.flink.streaming.connectors.kafka.FlinkKafkaConsumer

class SourceKafka {

def getKafkaSource(topicName:String): FlinkKafkaConsumer[String] ={

val props = new Properties()

props.setProperty("bootstrap.servers","linux121:9092,linux122:9092,linux123:9092");

props.setProperty("group.id","consumer-group")

props.setProperty("key.deserializer","org.apache.kafka.common.serialization.StringDeserializer");

props.setProperty("value.deserializer","org.apache.kafka.common.serialization.StringDeserializer");

props.setProperty("auto.offset.reset","latest")

new FlinkKafkaConsumer[String](topicName,new SimpleStringSchema(),props);

}

}

- Read data from Kafka and write it to HBase. The Kafka messages are JSON and are parsed with Alibaba's fastjson

package ods

import java.util

import com.alibaba.fastjson.{JSON, JSONObject}

import models.TableObject

import myUtils.SourceKafka

import org.apache.flink.streaming.api.scala.{DataStream, StreamExecutionEnvironment}

import org.apache.flink.streaming.connectors.kafka.FlinkKafkaConsumer

import org.apache.flink.api.scala._

object KafkaToHbase {

def main(args: Array[String]): Unit = {

val env: StreamExecutionEnvironment = StreamExecutionEnvironment.getExecutionEnvironment

val kafkaConsumer: FlinkKafkaConsumer[String] = new SourceKafka().getKafkaSource("test")

kafkaConsumer.setStartFromLatest()

// addSource needs the implicit TypeInformation brought in by org.apache.flink.api.scala._

val sourceStream: DataStream[String] = env.addSource(kafkaConsumer)

// Flatten each Canal message into one TableObject per changed row

val mappedStream: DataStream[util.ArrayList[TableObject]] = sourceStream.map(x => {

val jsonObj: JSONObject = JSON.parseObject(x)

val database: String = jsonObj.getString("database")

val table: String = jsonObj.getString("table")

val typeInfo: String = jsonObj.getString("type")

val objects = new util.ArrayList[TableObject]()

// "data" can be null (for example on DDL events), so guard before iterating

val rows = jsonObj.getJSONArray("data")

if (rows != null) {

rows.forEach(row => {

println(database + "...." + table + ".." + typeInfo + "..." + row.toString)

objects.add(TableObject(database, table, typeInfo, row.toString))

})

}

objects

})

mappedStream.addSink(new SinkHbase)

env.execute()

}

}
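The job above imports `models.TableObject`, and the sink later in this post also uses `models.DataInfo` and `models.AreaInfo`, none of which are shown. The following is a sketch of what they might look like, inferred only from how their fields are used in the code. Treating every field as a `String` and using `@BeanProperty` vars (so that a bean-style JSON mapper such as fastjson can find a no-arg constructor and setters) are assumptions, not the original definitions.

```scala
import scala.beans.BeanProperty

// In the project these would live in the `models` package.

// One changed row of a Canal message, as built in KafkaToHbase.
case class TableObject(dataBase: String, tableName: String, typeInfo: String, dataInfo: String)

// One row of lagou_trade_orders; field names follow the sink's usage.
class DataInfo {
  @BeanProperty var orderId: String = _
  @BeanProperty var modifiedTime: String = _
  @BeanProperty var orderNo: String = _
  @BeanProperty var isPay: String = _
  @BeanProperty var tradeSrc: String = _
  @BeanProperty var payTime: String = _
  @BeanProperty var productMoney: String = _
  @BeanProperty var totalMoney: String = _
  @BeanProperty var dataFlag: String = _
  @BeanProperty var userId: String = _
  @BeanProperty var areaId: String = _
  @BeanProperty var createTime: String = _
  @BeanProperty var payMethod: String = _
  @BeanProperty var isRefund: String = _
  @BeanProperty var tradeType: String = _
  @BeanProperty var status: String = _
}

// One row of lagou_area; Lng and Lat keep the capitalization used in the sink.
class AreaInfo {
  @BeanProperty var id: String = _
  @BeanProperty var name: String = _
  @BeanProperty var pid: String = _
  @BeanProperty var sname: String = _
  @BeanProperty var level: String = _
  @BeanProperty var citycode: String = _
  @BeanProperty var yzcode: String = _
  @BeanProperty var mername: String = _
  @BeanProperty var Lng: String = _
  @BeanProperty var Lat: String = _
  @BeanProperty var pinyin: String = _
}
```

Whether the JSON keys map onto these fields without extra annotations depends on the column names in MySQL and on the fastjson version, so it is worth checking with one sample message.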

- Write the Flink HBase sink

Write the HBase connection helper

package myUtils

import org.apache.hadoop.conf.Configuration

import org.apache.hadoop.hbase.{HBaseConfiguration, HConstants}

import org.apache.hadoop.hbase.client.{Connection, ConnectionFactory}

class ConnHBase {

def connToHabse:Connection={

val conf: Configuration = HBaseConfiguration.create()

conf.set("hbase.zookeeper.quorum","linux121,linux122,linux123")

conf.set("hbase.zookeeper.property.clientPort","2181")

conf.setInt(HConstants.HBASE_CLIENT_OPERATION_TIMEOUT,30000)

conf.setInt(HConstants.HBASE_CLIENT_SCANNER_TIMEOUT_PERIOD,30000)

val connection: Connection = ConnectionFactory.createConnection(conf)

connection

}

}

Extend RichSinkFunction to write the Flink-to-HBase sink

package ods

import models.{AreaInfo, DataInfo, TableObject}

import org.apache.flink.streaming.api.functions.sink.{RichSinkFunction, SinkFunction}

import java.util

import com.alibaba.fastjson.JSON

import myUtils.ConnHBase

import org.apache.flink.configuration.Configuration

import org.apache.hadoop.hbase.TableName

import org.apache.hadoop.hbase.client.{Connection, Delete, Put, Table}

class SinkHbase extends RichSinkFunction[util.ArrayList[TableObject]]{

var connection:Connection= _

var hbtable:Table =_

override def invoke(value: util.ArrayList[TableObject], context: SinkFunction.Context[_]): Unit = {

// Each element is one changed row, so handle it directly instead of looping over the whole list again

value.forEach(x => {

println(x.toString)

val database: String = x.dataBase

val tableName: String = x.tableName

val typeInfo: String = x.typeInfo

val isUpsert = typeInfo.equalsIgnoreCase("insert") || typeInfo.equalsIgnoreCase("update")

val isDelete = typeInfo.equalsIgnoreCase("delete")

val isOrders = database.equalsIgnoreCase("dwshow") && tableName.equalsIgnoreCase("lagou_trade_orders")

val isArea = database.equalsIgnoreCase("dwshow") && tableName.equalsIgnoreCase("lagou_area")

if (isOrders || isArea) {

// Open the table only for rows this sink handles, and close it afterwards so handles do not leak

val table: Table = connection.getTable(TableName.valueOf(tableName))

try {

if (isOrders) {

val info: DataInfo = JSON.parseObject(x.dataInfo, classOf[DataInfo])

// A Put writes the latest column values, so INSERT and UPDATE share one code path

if (isUpsert) insertTradeOrders(table, info) else if (isDelete) deleteTradeOrders(table, info)

} else {

val info: AreaInfo = JSON.parseObject(x.dataInfo, classOf[AreaInfo])

if (isUpsert) insertArea(table, info) else if (isDelete) deleteArea(table, info)

}

} finally {

table.close()

}

}

})

}

override def open(parameters: Configuration): Unit = {

connection = new ConnHBase().connToHabse

}

override def close(): Unit = {

if(hbtable!=null){

hbtable.close()

}

if(connection!=null){

connection.close()

}

}

def insertTradeOrders(hbTable:Table,dataInfo:DataInfo)={

val put = new Put(dataInfo.orderId.getBytes)

put.addColumn("f1".getBytes,"modifiedTime".getBytes,dataInfo.modifiedTime.getBytes())

put.addColumn("f1".getBytes,"orderNo".getBytes,dataInfo.orderNo.getBytes())

put.addColumn("f1".getBytes,"isPay".getBytes,dataInfo.isPay.getBytes())

put.addColumn("f1".getBytes,"tradeSrc".getBytes,dataInfo.tradeSrc.getBytes())

put.addColumn("f1".getBytes,"payTime".getBytes,dataInfo.payTime.getBytes())

put.addColumn("f1".getBytes,"productMoney".getBytes,dataInfo.productMoney.getBytes())

put.addColumn("f1".getBytes,"totalMoney".getBytes,dataInfo.totalMoney.getBytes())

put.addColumn("f1".getBytes,"dataFlag".getBytes,dataInfo.dataFlag.getBytes())

put.addColumn("f1".getBytes,"userId".getBytes,dataInfo.userId.getBytes())

put.addColumn("f1".getBytes,"areaId".getBytes,dataInfo.areaId.getBytes())

put.addColumn("f1".getBytes,"createTime".getBytes,dataInfo.createTime.getBytes())

put.addColumn("f1".getBytes,"payMethod".getBytes,dataInfo.payMethod.getBytes())

put.addColumn("f1".getBytes,"isRefund".getBytes,dataInfo.isRefund.getBytes())

put.addColumn("f1".getBytes,"tradeType".getBytes,dataInfo.tradeType.getBytes())

put.addColumn("f1".getBytes,"status".getBytes,dataInfo.status.getBytes())

hbTable.put(put)

}

def deleteTradeOrders(hbtable:Table,dataInfo: DataInfo)={

val delete = new Delete(dataInfo.orderId.getBytes());

hbtable.delete(delete)

}

def insertArea(hbTable:Table,areaInfo: AreaInfo)={

val put = new Put(areaInfo.id.getBytes())

put.addColumn("f1".getBytes(),"name".getBytes(),areaInfo.name.getBytes())

put.addColumn("f1".getBytes(),"pid".getBytes(),areaInfo.pid.getBytes())

put.addColumn("f1".getBytes(),"sname".getBytes(),areaInfo.sname.getBytes())

put.addColumn("f1".getBytes(),"level".getBytes(),areaInfo.level.getBytes())

put.addColumn("f1".getBytes(),"citycode".getBytes(),areaInfo.citycode.getBytes())

put.addColumn("f1".getBytes(),"yzcode".getBytes(),areaInfo.yzcode.getBytes())

put.addColumn("f1".getBytes(),"mername".getBytes(),areaInfo.mername.getBytes())

put.addColumn("f1".getBytes(),"Lng".getBytes(),areaInfo.Lng.getBytes())

put.addColumn("f1".getBytes(),"Lat".getBytes(),areaInfo.Lat.getBytes())

put.addColumn("f1".getBytes(),"pinyin".getBytes(),areaInfo.pinyin.getBytes())

hbTable.put(put)

}

def deleteArea(hbTable:Table,areaInfo: AreaInfo)={

val delete = new Delete(areaInfo.id.getBytes())

hbTable.delete(delete)

}

}
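The `getBytes` calls in the sink use the platform default charset and throw a NullPointerException when a field is null. A small helper, sketched below as one possible approach, makes both points explicit. The name `HBaseBytes.utf8` and the choice to store an empty value for a null field are assumptions made for illustration; skipping the column entirely may suit some tables better.

```scala
import java.nio.charset.StandardCharsets

object HBaseBytes {

  // Encodes a possibly-null value as UTF-8; null becomes an empty byte array.
  def utf8(value: String): Array[Byte] =
    if (value == null) Array.emptyByteArray
    else value.getBytes(StandardCharsets.UTF_8)
}
```

With it, a call in `insertTradeOrders` would read `put.addColumn(utf8("f1"), utf8("payTime"), utf8(dataInfo.payTime))` after `import HBaseBytes.utf8`. HBase's own `org.apache.hadoop.hbase.util.Bytes.toBytes(String)` also encodes as UTF-8.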