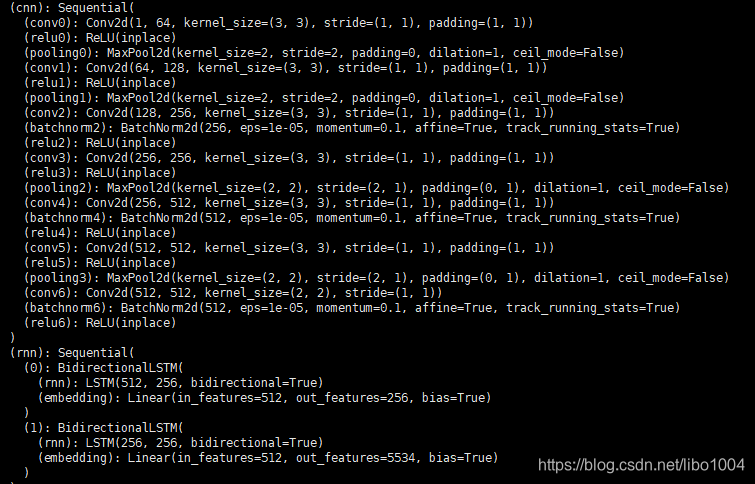

一、网络模型

二、不同层设置不同学习率

以优化器 Adam 为例:

# 不同层设置不同的学习率

train_params = list(map(id,crnn.rnn.parameters()))

rest_params = filter(lambda x:id(x) not in train_params, crnn.parameters())

# loss averager

loss_avg = utils.averager() # 对loss取平均对象

# setup optimizer

if opt.adam:

# 对不同层设置不同学习率

# optimizer = optim.Adam(crnn.parameters(), lr=opt.lr, betas=(opt.beta1, 0.999))

# optimizer = torch.nn.DataParallel(optimizer, device_ids=range(opt.ngpu))

### weight_decay 防止过拟合的参数

optimizer = optim.Adam([

{

'params':crnn.rnn[0].rnn.parameters(), 'lr':0.0000001, 'betas':(0.5,0.999)},

{

'params':crnn.rnn[0].embedding.parameters(), 'lr':0.0000001, 'betas':(0.5,0.999)},

{

'params':crnn.rnn[1].rnn.parameters(), 'lr':0.0000001, 'betas':(0.5,0.999)},

{

'params':crnn.rnn[1].embedding.parameters(), 'lr':opt.lr, 'betas':(0.5,0.999)},

{

'params':rest_params, 'lr':opt.lr, 'betas':(0.5,0.999)}

])

三、训练过程更新学习率

上述我们只对 rnn 网络进行训练,并对最后一层进行 lr 的更新。

def adjust_learning_rate(optimizer, epoch):

"""Sets the learning rate to the initial LR decayed by 10 every 5 epochs"""

lr = opt.lr * (0.1 ** (epoch // 5))

# for param_group in optimizer.param_groups: # 每一层的学习率都会下降

optimizer.param_groups[3]['lr'] = lr

for epoch in range(opt.nepoch):

# 每 5 个 epoch 修改一次学习率(只修改最后一个全连接层)

adjust_learning_rate(optimizer, epoch)

四、CNN层冻结

for p in crnn.named_parameters():

p[1].requires_grad = True

# 训练 rnn 层

if 'rnn' in p[0]: # 训练最后一层 #rnn rnn.1.embedding

p[1].requires_grad = True

else:

p[1].requires_grad = False # 冻结模型层

crnn.train()