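Naive Bayes is a classification method based on Bayes' theorem and the assumption that the features are conditionally independent. It is simple to implement, efficient in both learning and prediction, and widely used. See also the earlier post *Mathematical Foundations of AI: Probability Theory* (人工智能数学基础之概率论).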

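Let the input space $\mathcal{X} \subseteq R^n$ be a set of $n$-dimensional vectors, and let the output space be the set of class labels $\mathcal{Y} = \{c_1,c_2,\cdots,c_K\}$. The input is a feature vector $x \in \mathcal{X}$ and the output is a class label $y \in \mathcal{Y}$. $X$ is a random vector defined on the input space $\mathcal X$, $Y$ is a random variable defined on the output space $\mathcal Y$, and $P(X,Y)$ is the joint probability distribution of $X$ and $Y$.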

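The training data set $T=\{(x_1,y_1),(x_2,y_2),\cdots,(x_N,y_N)\}$ is generated independently and identically distributed (i.i.d.) according to $P(X,Y)$.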

Naive Bayes learns the joint distribution $P(X,Y)$ from the training data. Specifically, it learns a prior distribution and a conditional distribution. The prior distribution is

$$P(Y = c_k) ,\ k = 1,2,\cdots, K \tag {4.1}$$

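The prior probability is the probability of an event judged from experience, before the features are observed. It can usually be obtained directly. Here, for example, it can be estimated as the number of samples in a class divided by the total number of samples.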

The conditional distribution is

$$P(X=x|Y=c_k) = P(X^{(1)} = x^{(1)},\cdots, X^{(n)}=x^{(n)} | Y=c_k), \ k = 1,2, \cdots, K \tag{4.2}$$

Together these determine the joint distribution $P(X,Y)$.

Let us briefly review the conditional probability formula. Let $A,B$ be two events with $P(A)>0$. Then

$$P(B|A) = \frac{P(AB)}{P(A)}$$

is the conditional probability that $B$ occurs given that $A$ has occurred. Here $P(AB)$ is the probability that $A$ and $B$ occur together. This joint probability of $A,B$ can also be written as $P(A,B)$ or $P(A\bigcap B)$. Events $A,B$ are independent if $P(AB)=P(A)P(B)$.
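Both definitions can be checked numerically. The following is a minimal sketch. The die-roll events are a made-up illustration and do not come from the book, and `prob`, `is_even`, `greater_than_3` and `both` are hypothetical helper names.

```python
from fractions import Fraction

OMEGA = range(1, 7)  # outcomes of one roll of a fair six-sided die


def prob(event):
    """P(event) under the uniform distribution on OMEGA; `event` is a predicate."""
    return Fraction(sum(1 for w in OMEGA if event(w)), len(OMEGA))


def is_even(w):  # event A
    return w % 2 == 0


def greater_than_3(w):  # event B
    return w > 3


def both(w):  # event AB: A and B occur together
    return is_even(w) and greater_than_3(w)


p_b_given_a = prob(both) / prob(is_even)  # P(B|A) = P(AB) / P(A)
independent = prob(both) == prob(is_even) * prob(greater_than_3)  # P(AB) == P(A)P(B)?

print(p_b_given_a)  # 2/3
print(independent)  # False, since 1/3 != 1/2 * 1/2
```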

Applying the definition of conditional probability, the joint distribution is

$$P(X=x,Y=c_k) = P(X=x|Y=c_k) \cdot P(Y = c_k)$$

Naive Bayes makes a conditional independence assumption about the conditional distribution, and this is what "naive" refers to. Specifically, the conditional independence assumption is

$$P(X=x|Y=c_k) = P(X^{(1)} = x^{(1)},\cdots, X^{(n)}=x^{(n)} | Y=c_k) = \prod_{j=1}^n P(X^{(j)} = x^{(j)} | Y = c_k) \tag {4.3}$$

In other words, "naive" means assuming that the events $X^{(1)} = x^{(1)},\cdots, X^{(n)}=x^{(n)}$ are mutually independent once the class $Y=c_k$ is fixed. Their joint conditional probability therefore factors into a product.

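Naive Bayes in effect learns the mechanism that generates the data, so it is a generative model. The conditional independence assumption says that the features used for classification are conditionally independent given the class. This assumption makes naive Bayes simple, but it can sometimes cost some classification accuracy.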
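A rough count shows what "simple" means in practice. Let $S_j$ be the number of values feature $j$ can take, as introduced for formula (4.9) below.

- Without the assumption, $P(X=x|Y=c_k)$ needs one value per class and per combination of feature values, which is $K\prod_j S_j$ values.
- With the assumption, it needs only $K\sum_j S_j$ per-feature values.

This sketch only counts table entries and ignores that each distribution must sum to 1. `table_sizes` is a hypothetical helper.

```python
from math import prod


def table_sizes(num_classes, feature_sizes):
    """Number of probability values to store for P(X=x | Y=c_k).

    feature_sizes[j] is S_j, the number of values feature j can take.
    Returns (full joint table, factorized table under conditional independence).
    """
    full = num_classes * prod(feature_sizes)  # one entry per (c_k, x^(1), ..., x^(n))
    factorized = num_classes * sum(feature_sizes)  # one entry per (c_k, j, a_jl)
    return full, factorized


print(table_sizes(2, [3, 3]))  # (18, 12): two classes, two 3-valued features
print(table_sizes(2, [2] * 20))  # (2097152, 80): two classes, 20 binary features
```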

To classify a given input $x$, naive Bayes uses the learned model to compute the posterior distribution $P(Y=c_k|X=x)$. It then outputs the class with the largest posterior probability as the class of $x$. The posterior is computed with Bayes' theorem:

$$P(Y=c_k|X=x) = \frac{P(X=x|Y=c_k)P(Y=c_k)}{\sum_{k} P(X=x|Y=c_k)P(Y=c_k)} \tag{4.4}$$

The denominator uses the law of total probability. Suppose the events $A_1,A_2,\cdots,A_n$ form a partition of the sample space: they are mutually exclusive, their union is the whole space, and each $P(A_i)>0$. Then

$$P(B) = P(B|A_1)P(A_1) + P(B|A_2)P(A_2) + \cdots + P(B|A_n)P(A_n) = \sum_{i=1}^nP(A_i)P(B|A_i)$$
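Here is a small numerical illustration of the law of total probability, using made-up values for a three-part partition. In (4.4) the partition is $\{Y=c_1\},\dots,\{Y=c_K\}$ and $B$ is the event $X=x$.

```python
from fractions import Fraction as F

# Hypothetical partition A_1, A_2, A_3 of the sample space and P(B | A_i) for each part
p_a = [F(1, 2), F(3, 10), F(1, 5)]  # P(A_i)
p_b_given_a = [F(1, 100), F(2, 100), F(5, 100)]  # P(B | A_i)

assert sum(p_a) == 1  # the A_i must form a partition

p_b = sum(pa * pb for pa, pb in zip(p_a, p_b_given_a))  # P(B) = sum_i P(A_i) P(B | A_i)
print(p_b)  # 21/1000
```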

The three kinds of probability in formula (4.4) are:

- $P(Y = c_k),\ k = 1,2,\cdots, K$ is the prior probability: $P(Y = c_k)$ is known before any input is observed.
- $P(Y=c_k|X=x)$ is the posterior probability of class $c_k$ after the input $x$ has been observed.
- $P(X=x|Y=c_k)$ is the likelihood of observing $x$ when the class is $c_k$.

Substituting (4.3) into (4.4) gives

$$P(Y=c_k|X=x) = \frac{P(Y=c_k)\prod_{j=1}^n P(X^{(j)} = x^{(j)} | Y = c_k)}{\sum_{k}P(Y=c_k) \prod_{j=1}^n P(X^{(j)} = x^{(j)} | Y = c_k)} \tag{4.5}$$
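Formula (4.5) translates almost line by line into code. The following is a minimal sketch using exact fractions. The function name `posterior` and the dictionary layout are assumptions for illustration. The numbers plugged in are the maximum likelihood estimates for $x=(2,S)^T$ that Example 4.1 below computes.

```python
from fractions import Fraction as F
from math import prod


def posterior(prior, cond):
    """Posterior P(Y=c_k | X=x) by formula (4.5).

    prior: {c_k: P(Y=c_k)}
    cond:  {c_k: [P(X^(1)=x^(1) | Y=c_k), ..., P(X^(n)=x^(n) | Y=c_k)]}
    """
    numer = {c: prior[c] * prod(cond[c]) for c in prior}  # numerator of (4.5) per class
    evidence = sum(numer.values())  # denominator: P(X=x) by total probability
    return {c: v / evidence for c, v in numer.items()}


prior = {1: F(9, 15), -1: F(6, 15)}
cond = {1: [F(3, 9), F(1, 9)], -1: [F(2, 6), F(3, 6)]}
post = posterior(prior, cond)
print(post[1], post[-1])  # 1/4 3/4
```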

Formula (4.5) is the basic formula of naive Bayes classification. It gives the posterior probability of each class given $X=x$. The classifier outputs, for input $x$, the class with the largest posterior, so the naive Bayes classifier can be written as

$$y = f(x) = \arg\,\max_{c_k}\frac{P(Y=c_k)\prod_{j=1}^n P(X^{(j)} = x^{(j)} | Y = c_k)}{\sum_{k}P(Y=c_k) \prod_{j=1}^n P(X^{(j)} = x^{(j)} | Y = c_k)} \tag{4.6}$$

In formula (4.6) the denominator is the same for every $c_k$, since it depends only on $X$. It therefore does not affect the maximization and can be dropped:

$$y = f(x) = \arg\,\max_{c_k}P(Y=c_k)\prod_{j=1}^n P(X^{(j)} = x^{(j)} | Y = c_k) \tag{4.7}$$
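This sketch checks that dropping the common denominator does not change the decision. `classify`, the class names and the probabilities are all hypothetical. Note that the winner here has the smallest prior, because its likelihood is large enough to compensate.

```python
from fractions import Fraction as F
from math import prod


def classify(prior, cond):
    """Formula (4.7): argmax_k P(Y=c_k) * prod_j P(X^(j)=x^(j) | Y=c_k), no normalization."""
    scores = {c: prior[c] * prod(cond[c]) for c in prior}
    return max(scores, key=scores.get), scores


prior = {"c1": F(1, 2), "c2": F(1, 3), "c3": F(1, 6)}
cond = {"c1": [F(1, 10), F(1, 5)], "c2": [F(1, 2), F(1, 4)], "c3": [F(9, 10), F(1, 2)]}

label, scores = classify(prior, cond)
evidence = sum(scores.values())
normalized = {c: s / evidence for c, s in scores.items()}  # the full formula (4.6)

# dividing every score by the same positive evidence does not change the winner
assert label == max(normalized, key=normalized.get)
print(label)  # c3
```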

Naive Bayes assigns an instance to the class with the largest posterior probability, which is equivalent to minimizing the expected risk. To see this, choose the 0-1 loss function:

$$L(Y,f(X)) = \begin{cases} 1 & \text{if } Y \neq f(X) \\ 0 & \text{if } Y = f(X) \end{cases}$$

The expected value of the loss, called the expected risk, is

$$R_{exp}(f) = E_p[L(Y,f(X))] = \int_{\mathcal X \times \mathcal Y} L(y,f(x))P(x,y)\,dx\,dy$$

Here $f(X)$ is the classification decision function. Writing the joint distribution as $P(x,y) = P(y|x)p(x)$, the expected risk becomes

$$\begin{aligned} R_{exp}(f) &= E[L(Y,f(X))] \\ &= \int_{\mathcal X \times \mathcal Y} L(y,f(x))P(x,y)\,dx\,dy \\ &= \int_{\mathcal X \times \mathcal Y}L(y,f(x))P(y|x)p(x)\,dx\,dy \\ &= \int_{\mathcal X} \int_{\mathcal Y} L(y,f(x))P(y|x)p(x)\,dy\,dx \\ &= \int_{\mathcal X} \left( \int_{\mathcal Y} L(y,f(x))P(y|x)\,dy \right) p(x)\, dx \end{aligned}$$

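To minimize the expected risk, it suffices to minimize the expression in parentheses (the conditional risk) separately for every $X=x$. This works because its weight $p(x)$, that is $P(X=x)$, is non-negative.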

For a discrete random variable $Y$, the expression in parentheses becomes:

$$\sum_{k=1}^K L(c_k,f(x))P(c_k|x)$$

So to minimize the expected risk, we only need to minimize this sum pointwise for each $X=x$. This gives

$$f(x) = \arg \, \min_{y \in \mathcal Y} \sum_{k=1}^K L(c_k,y)P(c_k|X=x)$$

Under the 0-1 loss, the term with $y=c_k$ contributes a loss of $0$ at $x$, and every term with $y \neq c_k$ contributes a loss of $1$. Hence

$$\begin{aligned} f(x) &= \arg \, \min_{y \in \mathcal Y} \sum_{k=1}^K L(c_k,y)P(c_k|X=x) \\ &= \arg \, \min_{y \in \mathcal Y} \sum_{k:\, c_k \neq y} P(c_k | X=x) \\ &= \arg \, \min_{y \in \mathcal Y} \left(1 -P(y|X=x)\right) \\ &= \arg \, \max_{y \in \mathcal Y} P(y|X=x) \end{aligned}$$

Therefore the expected-risk-minimization criterion yields the maximum a posteriori criterion:

$$f(x) = \arg \, \max_{c_k} P(c_k|X=x)$$

This is exactly the principle behind naive Bayes.
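The argument can be checked numerically for one fixed $x$. With the 0-1 loss, the conditional risk of predicting $y$ equals $1 - P(y|X=x)$, so the risk-minimizing prediction is the class with the largest posterior. The following is a sketch with a hypothetical three-class posterior. `zero_one_loss` and `conditional_risk` are illustrative names.

```python
from fractions import Fraction as F


def zero_one_loss(c, y):
    return 0 if c == y else 1


def conditional_risk(y, post):
    """sum_k L(c_k, y) P(Y=c_k | X=x) under the 0-1 loss; equals 1 - P(Y=y | X=x)."""
    return sum(zero_one_loss(c, y) * p for c, p in post.items())


post = {"c1": F(1, 2), "c2": F(3, 10), "c3": F(1, 5)}  # hypothetical posterior at some x
risks = {y: conditional_risk(y, post) for y in post}
print(risks["c1"], risks["c2"], risks["c3"])  # 1/2 7/10 4/5

best = min(risks, key=risks.get)
assert best == max(post, key=post.get)  # minimum risk <=> maximum posterior
assert all(risks[y] == 1 - post[y] for y in post)
```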

In naive Bayes, learning means estimating $P(Y=c_k)$ and $P(X^{(j)} = x^{(j)} | Y=c_k)$. Both can be estimated by maximum likelihood.

The maximum likelihood estimate of the prior probability $P(Y=c_k)$ is

$$P(Y=c_k) = \frac{\sum_{i=1}^N I(y_i=c_k)}{N} , \ k = 1,2,\cdots, K \tag{4.8}$$

That is, $P(Y=c_k)$ is estimated as the number of samples of class $c_k$ divided by the total number of samples $N$.
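Formula (4.8) is just counting. Below is a minimal sketch with `collections.Counter`. The function name `prior_mle` is hypothetical. It is applied to the class labels of the 15 training samples of Example 4.1, which also appear in the code later in this post.

```python
from collections import Counter
from fractions import Fraction


def prior_mle(y):
    """Formula (4.8): P(Y=c_k) = (number of samples with y_i = c_k) / N."""
    counts = Counter(y)
    n = len(y)
    return {c: Fraction(k, n) for c, k in counts.items()}


y = [-1, -1, 1, 1, -1, -1, -1, 1, 1, 1, 1, 1, 1, 1, -1]
priors = prior_mle(y)
print(priors[1], priors[-1])  # 3/5 2/5, i.e. 9/15 and 6/15
```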

Let the set of possible values of the $j$-th feature $x^{(j)}$ be $\{a_{j1} ,a_{j2},\cdots,a_{jS_j}\}$. The maximum likelihood estimate of the conditional probability $P(X^{(j)} = a_{jl} | Y =c_k)$ is

$$P(X^{(j)} = a_{jl} | Y =c_k) = \frac{\sum_{i=1}^N I(x^{(j)}_i = a_{jl},y_i=c_k)}{\sum_{i=1}^N I(y_i = c_k)} \tag{4.9}$$

$$j=1,2,\cdots, n;\ l =1,2,\cdots,S_j;\ k= 1,2,\cdots,K$$

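The symbols are:

- $x^{(j)}_i$ is the $j$-th feature of the $i$-th sample.
- $a_{jl}$ is the $l$-th possible value of the $j$-th feature.
- $I$ is the indicator function.

In words: given class $c_k$, the probability that the $j$-th feature takes the value $a_{jl}$ is estimated as follows. Count the samples of class $c_k$ whose $j$-th feature equals $a_{jl}$, then divide by the number of samples of class $c_k$.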
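Formula (4.9) is also counting, this time over (feature, value, class) triples. The sketch below follows the same approach and makes these assumptions:

- `conditional_mle` is a hypothetical name.
- The feature index `j` is 0-based.
- The Example 4.1 data are written as Python tuples.

```python
from collections import Counter
from fractions import Fraction


def conditional_mle(X, y):
    """Formula (4.9): P(X^(j)=a_jl | Y=c_k) = count(x_i^(j)=a_jl, y_i=c_k) / count(y_i=c_k).

    Returns {(j, a_jl, c_k): probability} with 0-based j. Pairs that never
    occur are absent from the dict, i.e. their estimate is 0.
    """
    class_counts = Counter(y)
    joint_counts = Counter((j, v, c) for x, c in zip(X, y) for j, v in enumerate(x))
    return {(j, v, c): Fraction(k, class_counts[c]) for (j, v, c), k in joint_counts.items()}


X = [(1, "S"), (1, "M"), (1, "M"), (1, "S"), (1, "S"),
     (2, "S"), (2, "M"), (2, "M"), (2, "L"), (2, "L"),
     (3, "L"), (3, "M"), (3, "M"), (3, "L"), (3, "L")]
y = [-1, -1, 1, 1, -1, -1, -1, 1, 1, 1, 1, 1, 1, 1, -1]

cond = conditional_mle(X, y)
print(cond[(0, 2, 1)], cond[(1, "S", -1)])  # 1/3 1/2
```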

The learning and classification algorithm of naive Bayes is as follows.

Input: training data $T$ and an instance $x$.

Output: the class of instance $x$.

First, compute the prior and conditional probabilities:

$$P(Y=c_k) = \frac{\sum_{i=1}^N I(y_i = c_k)}{N}, \ k = 1,2,\cdots, K$$

$$P(X^{(j)} = a_{jl} | Y =c_k) = \frac{\sum_{i=1}^N I(x^{(j)}_i = a_{jl},y_i=c_k)}{\sum_{i=1}^N I(y_i = c_k)}$$

$$j=1,2,\cdots, n;\ l =1,2,\cdots,S_j;\ k= 1,2,\cdots,K$$

Next, for the given instance $x = (x^{(1)},x^{(2)},\cdots, x^{(n)})^T$, compute

$$P(Y=c_k) \prod _{j=1}^n P(X^{(j)} = x^{(j)} | Y= c_k), \ k=1,2,\cdots, K$$

Finally, determine the class of instance $x$:

$$y = \arg \, \max_{c_k} P(Y=c_k) \prod_{j=1}^n P(X^{(j)} = x^{(j)} | Y = c_k)$$

This is exactly formula (4.7).
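The three steps can be sketched end to end in plain Python. This is a hedged illustration, not the implementation given later in the post. It makes these assumptions:

- `train` and `predict` are hypothetical names.
- Probabilities are kept as exact fractions.
- A (feature value, class) pair that never occurs gets probability 0, as the maximum likelihood estimate implies.

On the Example 4.1 data it should reproduce the result worked out below.

```python
from collections import Counter
from fractions import Fraction


def train(X, y):
    """Compute the priors (4.8) and the conditional probabilities (4.9) by counting."""
    n = len(y)
    class_counts = Counter(y)
    prior = {c: Fraction(k, n) for c, k in class_counts.items()}
    joint = Counter((j, v, c) for x, c in zip(X, y) for j, v in enumerate(x))
    cond = {(j, v, c): Fraction(k, class_counts[c]) for (j, v, c), k in joint.items()}
    return prior, cond


def predict(prior, cond, x):
    """Score each class by P(Y=c_k) * prod_j P(X^(j)=x^(j) | Y=c_k) and return the argmax (4.7)."""
    scores = {}
    for c, p in prior.items():
        for j, v in enumerate(x):
            p *= cond.get((j, v, c), 0)  # pair never seen with class c: its MLE is 0
        scores[c] = p
    return max(scores, key=scores.get), scores


X = [(1, "S"), (1, "M"), (1, "M"), (1, "S"), (1, "S"),
     (2, "S"), (2, "M"), (2, "M"), (2, "L"), (2, "L"),
     (3, "L"), (3, "M"), (3, "M"), (3, "L"), (3, "L")]
y = [-1, -1, 1, 1, -1, -1, -1, 1, 1, 1, 1, 1, 1, 1, -1]

prior, cond = train(X, y)
label, scores = predict(prior, cond, (2, "S"))
print(label, scores[-1], scores[1])  # -1 1/15 1/45
```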

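Let's apply the algorithm to an example. The greatest strength of this book is its many examples, each worked out in detail. Going through them makes the formulas above much easier to understand.

Example 4.1: learn a naive Bayes classifier from the training data below and determine the class of $x=(2,S)^T$. The features $X^{(1)}$ and $X^{(2)}$ take values in $\{1,2,3\}$ and $\{S,M,L\}$, and the class label is $Y\in\{1,-1\}$. The same 15 samples are encoded in the code later in this post.

| | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | 10 | 11 | 12 | 13 | 14 | 15 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| $X^{(1)}$ | 1 | 1 | 1 | 1 | 1 | 2 | 2 | 2 | 2 | 2 | 3 | 3 | 3 | 3 | 3 |
| $X^{(2)}$ | S | M | M | S | S | S | M | M | L | L | L | M | M | L | L |
| $Y$ | -1 | -1 | 1 | 1 | -1 | -1 | -1 | 1 | 1 | 1 | 1 | 1 | 1 | 1 | -1 |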

First compute the prior probabilities of $Y$:

$$P(Y=1) = \frac{9}{15},\quad P(Y=-1) = \frac{6}{15}$$

There are 15 samples in total: 9 of class $1$ and 6 of class $-1$.

Next come the conditional probabilities. To keep things short, only the ones actually needed are computed.

We want the class of $X=(2,S)^T$. By (4.7) we need

$$P(Y=-1)P(X^{(1)}=2,X^{(2)}=S|Y=-1)$$

which under conditional independence equals

$$P(Y=-1)P(X^{(1)}=2|Y=-1)P(X^{(2)}=S|Y=-1)$$

and we compare it with

$$P(Y=1)P(X^{(1)}=2|Y=1)P(X^{(2)}=S|Y=1)$$

Now compute the conditional probabilities needed above, one at a time:

$$P(X^{(1)}=2|Y=1) = \frac{3}{9}$$

Among the 9 samples with $Y=1$, 3 have $X^{(1)}=2$, which gives $\frac{3}{9}$. The others are obtained the same way:

$$P(X^{(2)}=S|Y=1) = \frac{1}{9}$$

$$P(X^{(1)}=2|Y=-1) =\frac{2}{6}$$

$$P(X^{(2)}=S|Y=-1) =\frac{3}{6}$$

Now compare

$$P(Y=-1)P(X^{(1)}=2|Y=-1)P(X^{(2)}=S|Y=-1) = \frac{6}{15} \times \frac{2}{6} \times \frac{3}{6} = \frac{1}{15}$$

with

$$P(Y=1)P(X^{(1)}=2|Y=1)P(X^{(2)}=S|Y=1) =\frac{9}{15} \times \frac{3}{9} \times \frac{1}{9} = \frac{1}{45}$$

The former is clearly larger, so $y = -1$.

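Maximum likelihood estimation can produce probability estimates equal to 0. Suppose, for instance, that when computing $P(Y=-1)P(X^{(1)}=2|Y=-1)P(X^{(2)}=S|Y=-1)$ above we had $P(X^{(2)}=S|Y=-1)=0$. The whole product would then be $0$ regardless of the other factors, which distorts the posterior and biases the classification. This usually happens because some value of $X$ never appears together with a certain class in the training data. The remedy is Bayesian estimation. The Bayesian estimate of the conditional probability is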

$$P_{\lambda}(X^{(j)}=a_{jl}|Y=c_k) = \frac{\sum_{i=1}^N I(x_i^{(j)} = a_{jl},y_i=c_k) +\lambda}{\sum_{i=1}^N I(y_i=c_k) +S_j\lambda} \tag{4.10}$$

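Here $\lambda \geq 0$. This is equivalent to adding a positive count $\lambda$ to the frequency of each possible value of $X$.

- When $\lambda = 0$ the estimate reduces to the maximum likelihood estimate.
- A common choice is $\lambda=1$, called Laplace smoothing.

The denominator adds $S_j\lambda$, one $\lambda$ for each of the $S_j$ possible values. This ensures that the estimated probabilities over all values still sum to $1$.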

Likewise, the Bayesian estimate of the prior probability is

$$P_\lambda(Y=c_k) = \frac{\sum_{i=1}^N I(y_i = c_k) + \lambda}{N+ K\lambda} \tag{4.11}$$
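Formulas (4.10) and (4.11) can be written as a sketch with these assumptions:

- `smoothed_estimates` is a hypothetical helper.
- `lam` must be an integer or a `Fraction`, because the estimates are built as exact fractions.

The tiny made-up data set contains a (value, class) pair that never occurs, so it shows both effects discussed above:

- With `lam=0` the estimate for that pair is 0.
- With `lam=1` it becomes positive.
- In both cases the estimates for each class and feature still sum to 1.

```python
from collections import Counter
from fractions import Fraction


def smoothed_estimates(X, y, lam=1):
    """Bayesian estimates (4.10) and (4.11); lam=0 is the MLE, lam=1 is Laplace smoothing."""
    n = len(y)
    classes = set(y)
    class_counts = Counter(y)
    values = [set(col) for col in zip(*X)]  # observed values a_j1, ..., a_jS_j of each feature
    joint = Counter((j, v, c) for x, c in zip(X, y) for j, v in enumerate(x))

    # (4.11): (count of c_k + lam) / (N + K * lam)
    prior = {c: Fraction(class_counts[c] + lam, n + len(classes) * lam) for c in classes}
    # (4.10): (count of (a_jl, c_k) + lam) / (count of c_k + S_j * lam); a missing pair counts 0
    cond = {(j, v, c): Fraction(joint[(j, v, c)] + lam, class_counts[c] + len(vals) * lam)
            for j, vals in enumerate(values) for v in vals for c in classes}
    return prior, cond


# Made-up data: one feature with values "a" or "b"; class 1 never has "b"
X = [("a",), ("a",), ("b",)]
y = [1, 1, 2]

_, mle = smoothed_estimates(X, y, lam=0)
prior, laplace = smoothed_estimates(X, y, lam=1)
print(mle[(0, "b", 1)], laplace[(0, "b", 1)])  # 0 1/4
print(prior[1], prior[2])  # 3/5 2/5
```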

Example 4.2: same problem as Example 4.1, but estimate the probabilities with Laplace smoothing, i.e. take $\lambda = 1$.

First list all possible values: the feature values $A_1 = \{1,2, 3\}$ and $A_2 = \{S,M,L\}$, and the class labels $C = \{1 , -1\}$. So $K=2$ and $S_1=S_2=3$.

$$P(Y=-1) = \frac{6+1}{15+2} = \frac{7}{17}$$

$$P(Y=1) = \frac{9+1}{15+2} = \frac{10}{17}$$

$$P(X^{(1)}=2|Y=1) = \frac{3+1}{9+3} = \frac{4}{12}$$

$$P(X^{(2)}=S|Y=1) = \frac{1+1}{9+3} = \frac{2}{12}$$

$$P(X^{(1)}=2|Y=-1) = \frac{2+1}{6+3} = \frac{3}{9}$$

$$P(X^{(2)}=S|Y=-1) = \frac{3+1}{6+3} = \frac{4}{9}$$

Compare

$$P(Y=-1)P(X^{(1)}=2|Y=-1)P(X^{(2)}=S|Y=-1) = \frac{7}{17} \times \frac{3}{9} \times \frac{4}{9} = \frac{28}{459} \approx 0.0610$$

with

$$P(Y=1)P(X^{(1)}=2|Y=1)P(X^{(2)}=S|Y=1) =\frac{10}{17} \times \frac{4}{12} \times \frac{2}{12} = \frac{5}{153} \approx 0.0327$$

The former is larger, so again $y=-1$.
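The arithmetic of Example 4.2 can be double-checked with exact fractions. This minimal sketch just multiplies the smoothed estimates listed above.

```python
from fractions import Fraction as F

score_neg = F(7, 17) * F(3, 9) * F(4, 9)  # class -1
score_pos = F(10, 17) * F(4, 12) * F(2, 12)  # class 1

print(score_neg, round(float(score_neg), 4))  # 28/459 0.061
print(score_pos, round(float(score_pos), 4))  # 5/153 0.0327
print(-1 if score_neg > score_pos else 1)  # -1
```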

```python
import numpy as np


class NaiveBayes:
    """Categorical naive Bayes; labda is the smoothing parameter lambda of (4.10)/(4.11)."""

    def __init__(self, labda=1):
        self.py = None      # {label: P(Y=label)}
        self.pxy = None     # {(feature index, value, label): P(X[i]=value | Y=label)}
        self.labda = labda  # labda=0 gives the maximum likelihood estimate

    def fit(self, X_train, y_train):
        labels = set(y_train)
        size_plus = len(y_train) + len(labels) * self.labda  # N + K * lambda
        py = {}
        for label in labels:
            py[label] = (np.sum(y_train == label) + self.labda) / size_plus

        # S_j: number of distinct values of each feature, stored as plain ints
        feature_nums = [len(set(X_train[:, i])) for i in range(X_train.shape[1])]

        pxy = {}
        for label in labels:
            X_label = X_train[y_train == label]  # samples of this class
            n_label = np.sum(y_train == label)
            for i in range(X_train.shape[1]):
                # distinct values of feature i, in order of first appearance
                for feature in dict.fromkeys(X_train[:, i]):
                    num = np.sum(X_label[:, i] == feature) + self.labda
                    den = n_label + feature_nums[i] * self.labda
                    pxy[(i, feature, label)] = num / den
                    print('P(X[%s]=%s|Y=%s)=%d/%d' % (i + 1, feature, label, num, den))
        self.py = py
        self.pxy = pxy

    def predict(self, X_test):
        return np.array([self._predict(x) for x in X_test])

    def _predict(self, x):
        pck = []
        for label in self.py:
            p = self.py[label]
            for i in range(x.shape[0]):
                p *= self.pxy[(i, x[i], label)]  # KeyError if x[i] never appeared in training
            pck.append((p, label))
        # class maximizing P(Y=c_k) * prod_j P(X^(j)=x^(j) | Y=c_k), formula (4.7)
        return max(pck, key=lambda t: t[0])[1]

    def score(self, X_test, y_test):
        # accuracy: fraction of test samples classified correctly
        return np.sum(self.predict(X_test) == y_test) / len(y_test)
```

Let's test it with the data of Example 4.2:

```python
X = np.array([
    ['1', 'S'], ['1', 'M'], ['1', 'M'], ['1', 'S'], ['1', 'S'],
    ['2', 'S'], ['2', 'M'], ['2', 'M'], ['2', 'L'], ['2', 'L'],
    ['3', 'L'], ['3', 'M'], ['3', 'M'], ['3', 'L'], ['3', 'L'],
])
y = np.array([-1, -1, 1, 1, -1, -1, -1, 1, 1, 1, 1, 1, 1, 1, -1])

nb = NaiveBayes()
nb.fit(X, y)

X_test = np.array(['2', 'S']).reshape(1, -1)
print(nb.predict(X_test))
```

Output:

```
P(X[1]=1|Y=1)=3/12
P(X[1]=2|Y=1)=4/12
P(X[1]=3|Y=1)=5/12
P(X[2]=S|Y=1)=2/12
P(X[2]=M|Y=1)=5/12
P(X[2]=L|Y=1)=5/12
P(X[1]=1|Y=-1)=4/9
P(X[1]=2|Y=-1)=3/9
P(X[1]=3|Y=-1)=2/9
P(X[2]=S|Y=-1)=4/9
P(X[2]=M|Y=-1)=3/9
P(X[2]=L|Y=-1)=2/9
[-1]
```

The predicted class printed at the end is $-1$, which agrees with the hand calculation.

Next, let's try the iris data set.

```python
import numpy as np
import pandas as pd
from sklearn.datasets import load_iris
from sklearn.model_selection import train_test_split


def create_data():
    iris = load_iris()
    df = pd.DataFrame(iris.data, columns=iris.feature_names)
    df['label'] = iris.target
    df.columns = ['sepal length', 'sepal width', 'petal length', 'petal width', 'label']
    # the first 100 samples are the two classes setosa (0) and versicolor (1)
    data = np.array(df.iloc[:100, :])
    return data[:, :-1], data[:, -1]


X, y = create_data()
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.3)
```

The code also needs some improvements:

```python
import numpy as np


class NaiveBayes:
    def __init__(self, labda=1):
        self.py = None      # {label: log P(Y=label)}
        self.pxy = None     # {(feature index, value, label): log P(X[i]=value | Y=label)}
        self.labda = labda  # smoothing parameter lambda

    def fit(self, X_train, y_train):
        labels = set(y_train)
        size_plus = len(y_train) + len(labels) * self.labda  # N + K * lambda
        # store log-probabilities instead of probabilities
        py = {label: np.log((np.sum(y_train == label) + self.labda) / size_plus)
              for label in labels}

        # S_j: number of distinct values of each feature, as plain ints
        feature_nums = [len(set(X_train[:, i])) for i in range(X_train.shape[1])]

        pxy = {}
        for label in labels:
            X_label = X_train[y_train == label]
            n_label = np.sum(y_train == label)
            for i in range(X_train.shape[1]):
                for feature in set(X_train[:, i]):
                    pxy[(i, feature, label)] = np.log(
                        (np.sum(X_label[:, i] == feature) + self.labda)
                        / (n_label + feature_nums[i] * self.labda))
        self.py = py
        self.pxy = pxy

    def predict(self, X_test):
        return np.array([self._predict(x) for x in X_test])

    def _predict(self, x):
        pck = []
        for label in self.py:
            p = self.py[label]
            for i in range(x.shape[0]):
                # a value never seen for feature i in training is skipped for every label,
                # so it adds nothing to any class score
                if (i, x[i], label) in self.pxy:
                    p += self.pxy[(i, x[i], label)]  # the log turns the product into a sum
            pck.append((p, label))
        return max(pck, key=lambda t: t[0])[1]

    def score(self, X_test, y_test):
        # accuracy: fraction of test samples classified correctly
        return np.sum(self.predict(X_test) == y_test) / len(y_test)
```

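The main change is taking logarithms, so that the product of probabilities becomes a sum of log-probabilities. This prevents the product of many small probabilities from underflowing. In addition, a feature value that never appeared in training is now skipped in `_predict` instead of raising a `KeyError`. This matters for the continuous iris measurements.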
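A small sketch with made-up numbers shows why the logarithm matters. Suppose there are 400 features with conditional probabilities around 0.01. The product falls below the smallest positive float64 and becomes exactly 0.0 for both classes, so the classes can no longer be compared. The sums of logarithms stay finite and still rank the classes correctly.

```python
import math

# Hypothetical per-feature conditional probabilities for two classes, 400 features each
class_a = [0.02] * 400
class_b = [0.01] * 400

# Direct products underflow: 0.02**400 and 0.01**400 are far below ~5e-324
prod_a, prod_b = math.prod(class_a), math.prod(class_b)

# Sums of logs stay finite and preserve the ordering of the true products
log_a = sum(math.log(p) for p in class_a)
log_b = sum(math.log(p) for p in class_b)

print(prod_a, prod_b)  # 0.0 0.0
print(log_a > log_b)  # True
```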

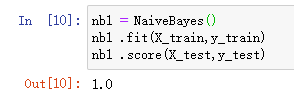

```python
nb1 = NaiveBayes()
nb1.fit(X_train, y_train)
nb1.score(X_test, y_test)
```

References:

- Li Hang, 《统计学习方法》 (Statistical Learning Methods)
- https://blog.csdn.net/weixin_41575207/article/details/81742874
- https://blog.csdn.net/rea_utopia/article/details/78881415