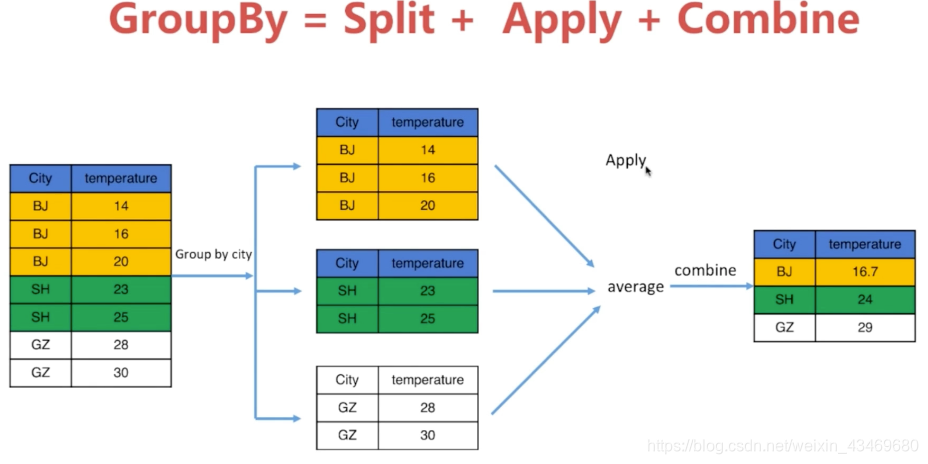

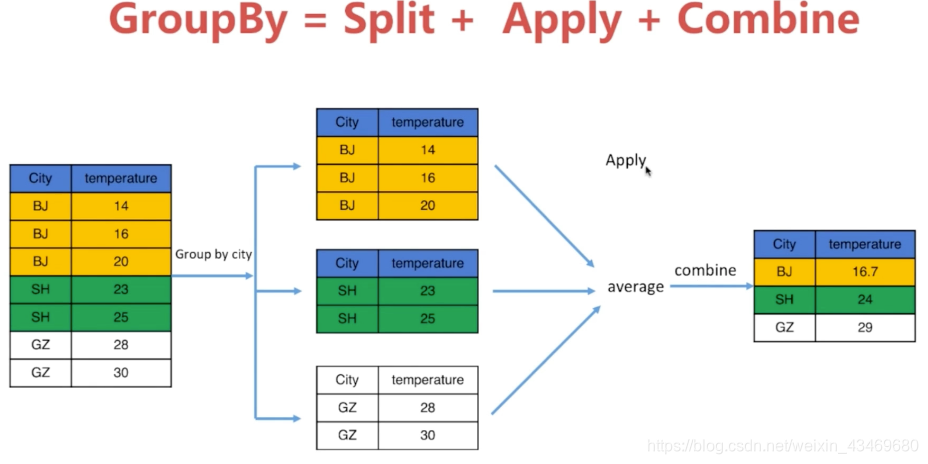

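Understanding GroupBy

GroupBy in pandas works much like GROUP BY in a database: rows are split into groups by a key, and each group is then aggregated.

This post applies GroupBy to a city weather dataset.

Taking the mean of a single group (one city's DataFrame) returns a Series.

Taking the mean of the whole GroupBy object returns a DataFrame, with one row per group.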
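To make the database analogy concrete, here is a minimal sketch that computes a per-city average twice: once with `groupby`, and once by hand with a split, apply, combine loop. The five rows are a made-up sample in the same shape as the weather data, and the table name `weather` in the SQL comment is only an assumption for illustration.

```python
import pandas as pd

# Hypothetical sample rows shaped like the city weather data.
df = pd.DataFrame({
    'city': ['BJ', 'BJ', 'SH', 'SH', 'GZ'],
    'temperature': [8, 12, -4, 19, 10],
})

# Roughly: SELECT city, AVG(temperature) FROM weather GROUP BY city;
by_groupby = df.groupby('city')['temperature'].mean()

# The same result by hand: split rows by key, apply mean, combine.
by_hand = pd.Series({
    city: df.loc[df['city'] == city, 'temperature'].mean()
    for city in sorted(df['city'].unique())
})

print(by_groupby)
print(by_hand)
```

Both Series hold one average per city; `groupby` simply does the split and combine steps for you.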

import numpy as np

import pandas as pd

from pandas import Series,DataFrame

df = pd.read_csv('/Users/bennyrhys/Desktop/数据分析可视化-数据集/homework/city_weather.csv')

df

|    | date | city | temperature | wind |
|----|------|------|-------------|------|
| 0 | 03/01/2016 | BJ | 8 | 5 |
| 1 | 17/01/2016 | BJ | 12 | 2 |
| 2 | 31/01/2016 | BJ | 19 | 2 |
| 3 | 14/02/2016 | BJ | -3 | 3 |
| 4 | 28/02/2016 | BJ | 19 | 2 |
| 5 | 13/03/2016 | BJ | 5 | 3 |
| 6 | 27/03/2016 | SH | -4 | 4 |
| 7 | 10/04/2016 | SH | 19 | 3 |
| 8 | 24/04/2016 | SH | 20 | 3 |
| 9 | 08/05/2016 | SH | 17 | 3 |
| 10 | 22/05/2016 | SH | 4 | 2 |
| 11 | 05/06/2016 | SH | -10 | 4 |
| 12 | 19/06/2016 | SH | 0 | 5 |
| 13 | 03/07/2016 | SH | -9 | 5 |
| 14 | 17/07/2016 | GZ | 10 | 2 |
| 15 | 31/07/2016 | GZ | -1 | 5 |
| 16 | 14/08/2016 | GZ | 1 | 5 |
| 17 | 28/08/2016 | GZ | 25 | 4 |
| 18 | 11/09/2016 | SZ | 20 | 1 |
| 19 | 25/09/2016 | SZ | -10 | 4 |

g = df.groupby(df['city'])

g

<pandas.core.groupby.generic.DataFrameGroupBy object at 0x11a393610>

g.groups

{'BJ': Int64Index([0, 1, 2, 3, 4, 5], dtype='int64'),

'GZ': Int64Index([14, 15, 16, 17], dtype='int64'),

'SH': Int64Index([6, 7, 8, 9, 10, 11, 12, 13], dtype='int64'),

'SZ': Int64Index([18, 19], dtype='int64')}
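The `groups` attribute maps each group key to the row labels that belong to it. As a small sketch on made-up rows (not the CSV above), the snippet below shows that grouping by the column name gives the same mapping as grouping by the Series `df['city']`, and it also looks at `ngroups` and `size()` for a quick summary.

```python
import pandas as pd

# Hypothetical sample rows; the cities are deliberately not contiguous.
df = pd.DataFrame({
    'city': ['BJ', 'BJ', 'SH', 'GZ', 'SH'],
    'temperature': [8, 12, -4, 10, 19],
})

g_by_series = df.groupby(df['city'])  # the form used in this post
g_by_name = df.groupby('city')        # shorter form using the column name

# Convert each Index of row labels to a plain list for easy comparison.
groups_a = {key: labels.tolist() for key, labels in g_by_series.groups.items()}
groups_b = {key: labels.tolist() for key, labels in g_by_name.groups.items()}
print(groups_a)

# Number of groups and number of rows in each group.
print(g_by_name.ngroups)
sizes = g_by_name.size()
print(sizes)
```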

df_bj = g.get_group('BJ')

df_bj

|    | date | city | temperature | wind |
|----|------|------|-------------|------|
| 0 | 03/01/2016 | BJ | 8 | 5 |
| 1 | 17/01/2016 | BJ | 12 | 2 |
| 2 | 31/01/2016 | BJ | 19 | 2 |
| 3 | 14/02/2016 | BJ | -3 | 3 |
| 4 | 28/02/2016 | BJ | 19 | 2 |
| 5 | 13/03/2016 | BJ | 5 | 3 |

df_bj.mean(numeric_only=True)  # skip the string columns (date, city); pandas 2.x raises TypeError without this

temperature 10.000000

wind 2.833333

dtype: float64

type(df_bj.mean(numeric_only=True))

pandas.core.series.Series

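g.mean(numeric_only=True)  # average only the numeric columns; pandas 2.x raises TypeError on the date strings otherwise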

| city | temperature | wind |
|------|-------------|------|
| BJ | 10.000 | 2.833333 |
| GZ | 8.750 | 4.000000 |
| SH | 4.625 | 3.625000 |
| SZ | 5.000 | 2.500000 |
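The return types seen so far can be checked directly. This is a small sketch on a four-row made-up sample, selecting the numeric columns explicitly so that it does not depend on how a given pandas version treats string columns in `mean()`.

```python
import pandas as pd

# Hypothetical sample rows shaped like the city weather data.
df = pd.DataFrame({
    'date': ['03/01/2016', '17/01/2016', '27/03/2016', '10/04/2016'],
    'city': ['BJ', 'BJ', 'SH', 'SH'],
    'temperature': [8, 12, -4, 19],
    'wind': [5, 2, 4, 3],
})
g = df.groupby('city')

# One group, several columns: one value per column, so a Series.
one_group_mean = g.get_group('BJ')[['temperature', 'wind']].mean()

# One column, all groups: one value per group, so again a Series.
one_column_mean = g['temperature'].mean()

# Several columns, all groups: a DataFrame indexed by city.
all_mean = g[['temperature', 'wind']].mean()

print(type(one_group_mean).__name__)
print(type(one_column_mean).__name__)
print(type(all_mean).__name__)
```

A handy rule of thumb: if the result varies along only one axis (columns or groups), you get a Series; if it varies along both, you get a DataFrame.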

g.max()

| city | date | temperature | wind |
|------|------|-------------|------|
| BJ | 31/01/2016 | 19 | 5 |
| GZ | 31/07/2016 | 25 | 5 |
| SH | 27/03/2016 | 20 | 5 |
| SZ | 25/09/2016 | 20 | 4 |

g.min()

| city | date | temperature | wind |
|------|------|-------------|------|
| BJ | 03/01/2016 | -3 | 2 |
| GZ | 14/08/2016 | -1 | 2 |
| SH | 03/07/2016 | -10 | 2 |
| SZ | 11/09/2016 | -10 | 1 |
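One thing to watch in the `max()` and `min()` results: the `date` column holds strings, so the values are compared as text, character by character, not as calendar dates. That is why BJ's maximum date is 31/01/2016 even though the BJ data runs into March. A possible fix, sketched here on the first four BJ dates, is to parse the column with `pd.to_datetime` first. The column name `date_parsed` is made up for this example, and the day/month/year format is an assumption based on how the values look.

```python
import pandas as pd

# The first four BJ dates from the table above.
df = pd.DataFrame({
    'date': ['03/01/2016', '17/01/2016', '31/01/2016', '14/02/2016'],
    'city': ['BJ', 'BJ', 'BJ', 'BJ'],
})

# As text, '31/...' sorts after '14/...' because '3' > '1'.
max_as_text = df.groupby('city')['date'].max()

# Parsed as day/month/year, max() returns the latest calendar date.
# 'date_parsed' is a hypothetical column name for this sketch.
df['date_parsed'] = pd.to_datetime(df['date'], format='%d/%m/%Y')
max_as_date = df.groupby('city')['date_parsed'].max()

print(max_as_text)
print(max_as_date)
```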

g

<pandas.core.groupby.generic.DataFrameGroupBy object at 0x11a393610>

list(g)

[('BJ', date city temperature wind

0 03/01/2016 BJ 8 5

1 17/01/2016 BJ 12 2

2 31/01/2016 BJ 19 2

3 14/02/2016 BJ -3 3

4 28/02/2016 BJ 19 2

5 13/03/2016 BJ 5 3),

('GZ', date city temperature wind

14 17/07/2016 GZ 10 2

15 31/07/2016 GZ -1 5

16 14/08/2016 GZ 1 5

17 28/08/2016 GZ 25 4),

('SH', date city temperature wind

6 27/03/2016 SH -4 4

7 10/04/2016 SH 19 3

8 24/04/2016 SH 20 3

9 08/05/2016 SH 17 3

10 22/05/2016 SH 4 2

11 05/06/2016 SH -10 4

12 19/06/2016 SH 0 5

13 03/07/2016 SH -9 5),

('SZ', date city temperature wind

18 11/09/2016 SZ 20 1

19 25/09/2016 SZ -10 4)]

dict(list(g))

{'BJ': date city temperature wind

0 03/01/2016 BJ 8 5

1 17/01/2016 BJ 12 2

2 31/01/2016 BJ 19 2

3 14/02/2016 BJ -3 3

4 28/02/2016 BJ 19 2

5 13/03/2016 BJ 5 3,

'GZ': date city temperature wind

14 17/07/2016 GZ 10 2

15 31/07/2016 GZ -1 5

16 14/08/2016 GZ 1 5

17 28/08/2016 GZ 25 4,

'SH': date city temperature wind

6 27/03/2016 SH -4 4

7 10/04/2016 SH 19 3

8 24/04/2016 SH 20 3

9 08/05/2016 SH 17 3

10 22/05/2016 SH 4 2

11 05/06/2016 SH -10 4

12 19/06/2016 SH 0 5

13 03/07/2016 SH -9 5,

'SZ': date city temperature wind

18 11/09/2016 SZ 20 1

19 25/09/2016 SZ -10 4}

dict(list(g))['BJ']

|    | date | city | temperature | wind |
|----|------|------|-------------|------|
| 0 | 03/01/2016 | BJ | 8 | 5 |
| 1 | 17/01/2016 | BJ | 12 | 2 |
| 2 | 31/01/2016 | BJ | 19 | 2 |
| 3 | 14/02/2016 | BJ | -3 | 3 |
| 4 | 28/02/2016 | BJ | 19 | 2 |
| 5 | 13/03/2016 | BJ | 5 | 3 |
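Looking up a key in `dict(list(g))` and calling `g.get_group()` should give the same sub-DataFrame. A quick sketch on made-up rows to confirm that:

```python
import pandas as pd

# Hypothetical sample rows; BJ rows are at labels 0 and 2.
df = pd.DataFrame({
    'city': ['BJ', 'SH', 'BJ', 'GZ'],
    'temperature': [8, -4, 12, 10],
})
g = df.groupby('city')

# Materialize every group into a dict of {key: DataFrame}.
groups_dict = dict(list(g))
bj_from_dict = groups_dict['BJ']

# Fetch just one group without building the whole dict.
bj_from_get_group = g.get_group('BJ')

print(bj_from_dict.equals(bj_from_get_group))
```

If you only need one group, `get_group` avoids building every sub-DataFrame; the dict form is convenient when you plan to look up several groups.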

# Iterating over a GroupBy yields (group key, sub-DataFrame) pairs
for name, group_df in g:
    print(name)
    print(group_df)

BJ

date city temperature wind

0 03/01/2016 BJ 8 5

1 17/01/2016 BJ 12 2

2 31/01/2016 BJ 19 2

3 14/02/2016 BJ -3 3

4 28/02/2016 BJ 19 2

5 13/03/2016 BJ 5 3

GZ

date city temperature wind

14 17/07/2016 GZ 10 2

15 31/07/2016 GZ -1 5

16 14/08/2016 GZ 1 5

17 28/08/2016 GZ 25 4

SH

date city temperature wind

6 27/03/2016 SH -4 4

7 10/04/2016 SH 19 3

8 24/04/2016 SH 20 3

9 08/05/2016 SH 17 3

10 22/05/2016 SH 4 2

11 05/06/2016 SH -10 4

12 19/06/2016 SH 0 5

13 03/07/2016 SH -9 5

SZ

date city temperature wind

18 11/09/2016 SZ 20 1

19 25/09/2016 SZ -10 4
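Iterating like this is useful when each group needs custom logic. As a sketch on made-up rows, the loop below computes the temperature range (max minus min) per city, then does the same with `agg` and a lambda for comparison. The names `ranges` and `vectorized` are just for this example.

```python
import pandas as pd

# Hypothetical sample rows shaped like the city weather data.
df = pd.DataFrame({
    'city': ['BJ', 'BJ', 'SH', 'SH', 'GZ'],
    'temperature': [8, 12, -4, 19, 10],
})
g = df.groupby('city')

# Loop version: handle one (key, sub-DataFrame) pair at a time.
ranges = {}
for name, group_df in g:
    temps = group_df['temperature']
    ranges[name] = int(temps.max() - temps.min())

# Same computation pushed into agg with a custom function.
vectorized = g['temperature'].agg(lambda s: s.max() - s.min())

print(ranges)
print(vectorized)
```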