A Docker overlay network needs a key-value store to hold network state, including Network, Endpoint, and IP information. Consul, Etcd, and ZooKeeper are all key-value stores that Docker supports; this walkthrough uses Consul.

I. Prepare the lab environment

The lab uses the following three hosts:

| Hostname | OS | Kernel version | IP |
|---|---|---|---|
| master | CentOS7 | kernel-5.2.11 | 10.1.1.17 |
| node1 | CentOS7 | kernel-5.2.11 | 10.1.1.13 |
| node2 | CentOS7 | kernel-5.2.11 | 10.1.1.14 |

If the kernel is older than kernel-3.18, the experiment will very likely fail, so upgrading the Linux kernel is recommended. The steps are as follows:

Import the key:

rpm --import https://www.elrepo.org/RPM-GPG-KEY-elrepo.org

If you have set gpgcheck=0 in the repo file, importing the key can be skipped.

Install the ELRepo yum repository:

rpm -Uvh http://www.elrepo.org/elrepo-release-7.0-2.el7.elrepo.noarch.rpm

Install the kernel:

The ELRepo yum repository provides the mainline kernel (3.18.3 in the output shown in this section).

yum --enablerepo=elrepo-kernel install kernel-ml-devel kernel-ml -y

The command enables the newly installed elrepo-kernel repository for this installation. Other repositories do not offer the 3.18 kernel.

After the update, check the kernel version:

[root@ip-10-10-17-4 tmp]# uname -r

3.10.0-123.el7.x86_64

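Important: the running kernel is still the default version. If you reboot right after this step, the system will again boot the default 3.10 kernel rather than the new one. To change the boot order, continue with the next step.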

List the boot entries in their default order:

awk -F\' '$1=="menuentry " {print $2}' /etc/grub2.cfg

CentOS Linux (3.18.3-1.el7.elrepo.x86_64) 7 (Core)

CentOS Linux, with Linux 3.10.0-123.el7.x86_64

CentOS Linux, with Linux 0-rescue-893b160e363b4ec7834719a7f06e67cf

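Boot entries are numbered from 0, and the new kernel is inserted at the top of the list (it now sits at position 0, while 3.10 is at position 1), so entry 0 has to be selected. To make the new kernel the default, run: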

grub2-set-default 0
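
To make the index passed to grub2-set-default explicit, the awk command above can be extended to print each entry together with its position. This is only a small sketch: the function name list_grub_entries is an illustrative helper, and it assumes the same quoting of menuentry titles that the awk command above relies on.

```shell
#!/usr/bin/env bash
# list_grub_entries: hypothetical helper, prints "index : title" for every
# top-level menuentry in a grub2 configuration file.
#   $1 = path to the configuration file (defaults to /etc/grub2.cfg)
list_grub_entries() {
    local cfg="${1:-/etc/grub2.cfg}"
    # Split each line on single quotes; the entry title is the second field.
    awk -F\' '$1 == "menuentry " { printf "%d : %s\n", n++, $2 }' "$cfg"
}
```

Running `list_grub_entries` before `grub2-set-default` shows which number belongs to which kernel, which helps when the new kernel does not end up at position 0.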

Then reboot so that the system starts with the new kernel. The kernel version after the reboot:

[root@ip-10-10-17-4 tmp]# uname -r

Kernel 5.22
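
Whether the running kernel meets the 3.18 minimum mentioned earlier can also be checked in a script instead of by eye. The sketch below assumes GNU sort with the -V (version sort) option is available, which is the case on CentOS 7; kernel_at_least is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# kernel_at_least: hypothetical helper; succeeds when version $1 is greater
# than or equal to version $2.
kernel_at_least() {
    local current="$1" minimum="$2"
    # sort -V orders version strings. If the minimum sorts first (or both are
    # equal), the current version is new enough.
    [ "$(printf '%s\n%s\n' "$minimum" "$current" | sort -V | head -n 1)" = "$minimum" ]
}
```

A typical call would be `kernel_at_least "$(uname -r)" 3.18 && echo "kernel is new enough"`.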

II. Configure the Consul environment

First deploy the supporting component, Consul, on a Docker host. The simplest approach is to run Consul as a container:

[root@localhost ~]# docker run -d -p 8500:8500 -h consul --name consul progrium/consul -server -bootstrap

Once the Consul container is up, Consul can be reached at 10.1.1.17:8500.

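Next, modify the Docker daemon configuration. On CentOS7 the daemon unit file is /usr/lib/systemd/system/docker.service. After the file is changed, the Docker service has to be restarted for the change to take effect.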

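Edit the file on master first and set the ExecStart line as follows. The address in --cluster-store must be the host that runs Consul, so replace 192.168.1.4 with your own Consul address (10.1.1.17 in the environment table above):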

ExecStart=/usr/bin/dockerd -H fd:// --containerd=/run/containerd/containerd.sock --cluster-store=consul://192.168.1.4:8500 --cluster-advertise=ens33:2376

Edit the file on node1 and set the same line:

ExecStart=/usr/bin/dockerd -H fd:// --containerd=/run/containerd/containerd.sock --cluster-store=consul://192.168.1.4:8500 --cluster-advertise=ens33:2376

Edit the file on node2 and set the same line:

ExecStart=/usr/bin/dockerd -H fd:// --containerd=/run/containerd/containerd.sock --cluster-store=consul://192.168.1.4:8500 --cluster-advertise=ens33:2376
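
Because all three hosts use the same line, generating it from two variables helps avoid a mismatch between the Consul address and the advertised interface. The sketch below only prints the line; build_execstart is a hypothetical helper, and the values passed to it are assumptions to be replaced with your own. Note that --cluster-store and --cluster-advertise belong to the legacy external key-value store mode used in this article, which newer Docker Engine releases no longer provide, so this applies only to versions that still accept these flags.

```shell
#!/usr/bin/env bash
# build_execstart: hypothetical helper that prints the ExecStart line used in
# this article for a given Consul address and network interface.
#   $1 = Consul address as host:port
#   $2 = interface whose address dockerd advertises to the cluster
build_execstart() {
    local consul_addr="$1" iface="$2"
    printf 'ExecStart=/usr/bin/dockerd -H fd:// --containerd=/run/containerd/containerd.sock --cluster-store=consul://%s --cluster-advertise=%s:2376\n' \
        "$consul_addr" "$iface"
}
```

For example, `build_execstart 10.1.1.17:8500 ens33` prints the line with the master address from the environment table filled in.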

Then run the following commands on master, node1, and node2:

[root@localhost ~]# systemctl daemon-reload

[root@localhost ~]# systemctl restart docker

Restarting the Docker service above also stops the Consul container on master, so remember to start the consul container on the master node again:

docker start consul
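
Since the Docker daemons depend on Consul being reachable, it can help to wait until Consul answers before continuing. The sketch below polls Consul's HTTP status endpoint /v1/status/leader with curl; wait_for_consul is a hypothetical helper, and the retry count and delay are arbitrary defaults chosen for illustration.

```shell
#!/usr/bin/env bash
# wait_for_consul: hypothetical helper; polls Consul's status endpoint until
# it reports an elected leader or the retry budget is exhausted.
#   $1 = base URL, $2 = max attempts (default 10), $3 = delay in seconds (default 1)
wait_for_consul() {
    local base_url="$1" attempts="${2:-10}" delay="${3:-1}"
    local leader i=0
    while [ "$i" -lt "$attempts" ]; do
        leader=$(curl -fsS "$base_url/v1/status/leader" 2>/dev/null)
        # An elected leader is returned as a non-empty quoted address;
        # an empty JSON string ("") means no leader yet.
        if [ -n "$leader" ] && [ "$leader" != '""' ]; then
            return 0
        fi
        i=$((i + 1))
        sleep "$delay"
    done
    return 1
}
```

A call such as `wait_for_consul http://10.1.1.17:8500 && echo "consul is ready"` fits right after `docker start consul`. Alternatively, starting the Consul container with the `--restart=always` policy of `docker run` would let Docker bring it back automatically after a daemon restart.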

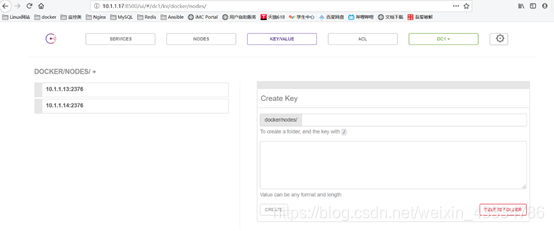

Open http://10.1.1.17:8500/ui/#/dc1/kv/docker/nodes/ to confirm that node1 and node2 have registered with Consul.

III. Create the overlay network

Create the network on master:

[root@localhost ~]# docker network create --driver overlay overlay_net1

406055e7a5b56511a6b7e021fef688a69d262fa3112094d43b4bdbea2eceda09

[root@localhost ~]# docker network ls

NETWORK ID NAME DRIVER SCOPE

afe6548e7c82 bridge bridge local

2ca6a9334230 host host local

467bdef50c36 none null local

406055e7a5b5 overlay_net1 overlay global

List the existing networks on node1:

[root@localhost ~]# docker network ls

NETWORK ID NAME DRIVER SCOPE

2ff0c772489f bridge bridge local

5a657b6beb24 host host local

e69a4839e6c6 none null local

406055e7a5b5 overlay_net1 overlay global

List the existing networks on node2:

[root@localhost ~]# docker network ls

NETWORK ID NAME DRIVER SCOPE

1b6bc781bff0 bridge bridge local

0d9c3303b3d9 host host local

c828711c060a none null local

406055e7a5b5 overlay_net1 overlay global

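The result shows that overlay_net1 is also visible on node1 and node2. When overlay_net1 was created, the creating host stored the network information in Consul, and the other hosts read the new network data from Consul. Any later change to overlay_net1 is synchronized to every host in the same way.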
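
The same check can be scripted so that it runs identically on every host. The sketch below reads "name driver scope" lines on standard input, which `docker network ls --format '{{.Name}} {{.Driver}} {{.Scope}}'` produces; has_global_network is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# has_global_network: hypothetical helper; reads "name driver scope" lines on
# stdin and succeeds if the named network is an overlay with global scope.
#   $1 = network name to look for
has_global_network() {
    local name="$1"
    awk -v n="$name" '
        $1 == n && $2 == "overlay" && $3 == "global" { found = 1 }
        END { exit (found ? 0 : 1) }'
}
```

On each host, `docker network ls --format '{{.Name}} {{.Driver}} {{.Scope}}' | has_global_network overlay_net1 && echo "overlay_net1 is visible"` reports whether the network has been picked up from the key-value store.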

Use docker network inspect overlay_net1 to view the details of the overlay network that was created:

[root@localhost ~]# docker network inspect overlay_net1

[

{

"Name": "overlay_net1",

"Id": "406055e7a5b56511a6b7e021fef688a69d262fa3112094d43b4bdbea2eceda09",

"Created": "2019-09-01T20:58:55.359158994+08:00",

"Scope": "global",

"Driver": "overlay",

"EnableIPv6": false,

"IPAM": {

"Driver": "default",

"Options": {},

"Config": [

{

"Subnet": "10.0.0.0/24",

"Gateway": "10.0.0.1"

}

]

},

"Internal": false,

"Attachable": false,

"Containers": {},

"Options": {},

"Labels": {}

}

]
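
When only the subnet is of interest, it can be pulled out of the inspect output rather than read from the full JSON. The sketch below is a plain text filter (extract_subnets is a hypothetical helper) and assumes the one-key-per-line layout that docker network inspect prints, as shown above.

```shell
#!/usr/bin/env bash
# extract_subnets: hypothetical helper; prints every "Subnet" value found in
# docker network inspect JSON supplied on stdin (one key per line).
extract_subnets() {
    sed -n 's/.*"Subnet": *"\([^"]*\)".*/\1/p'
}
```

Usage would be `docker network inspect overlay_net1 | extract_subnets`. Docker's own Go template option gives the same value without text parsing: `docker network inspect -f '{{range .IPAM.Config}}{{.Subnet}}{{end}}' overlay_net1`.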

IV. Create a container on the overlay network

Create a container on master:

[root@localhost ~]# docker run -itd --name test1 --network=overlay_net1 busybox

Check the network interfaces inside the container:

root@ab8002497b00:/# ifconfig

eth0: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1450

inet 10.0.0.2 netmask 255.255.255.0 broadcast 0.0.0.0

inet6 fe80::42:aff:fe00:2 prefixlen 64 scopeid 0x20<link>

ether 02:42:0a:00:00:02 txqueuelen 0 (Ethernet)

RX packets 13 bytes 1046 (1.0 KB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 8 bytes 656 (656.0 B)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

eth1: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500

inet 172.18.0.2 netmask 255.255.0.0 broadcast 0.0.0.0

inet6 fe80::42:acff:fe12:2 prefixlen 64 scopeid 0x20<link>

ether 02:42:ac:12:00:02 txqueuelen 0 (Ethernet)

RX packets 3308 bytes 17464056 (17.4 MB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 2944 bytes 164029 (164.0 KB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

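The container has two network interfaces, eth0 and eth1. eth0 has the IP 10.0.0.2 and is attached to the overlay network. eth1 has the IP 172.18.0.2 and carries the container's default route. Docker creates a bridge network named "docker_gwbridge" that gives every container attached to an overlay network access to external networks: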

[root@localhost ~]# docker network ls

NETWORK ID NAME DRIVER SCOPE

afe6548e7c82 bridge bridge local

3bf7bf927f91 docker_gwbridge bridge local

2ca6a9334230 host host local

467bdef50c36 none null local

406055e7a5b5 overlay_net1 overlay global

Use docker network inspect docker_gwbridge to view its details:

[

{

"Name": "docker_gwbridge",

"Id": "3bf7bf927f917efb87786861e1344c5fb192fbb73cbae676b418da896b4f132c",

"Created": "2019-09-01T21:15:51.434098175+08:00",

"Scope": "local",

"Driver": "bridge",

"EnableIPv6": false,

"IPAM": {

"Driver": "default",

"Options": null,

"Config": [

{

"Subnet": "172.18.0.0/16",

"Gateway": "172.18.0.1"

}

]

},

"Internal": false,

"Attachable": false,

"Containers": {

"ab8002497b00c650bcfe683f8b2d355245d93a10a415ca2c51ea67c9c4ba5016": {

"Name": "gateway_ab8002497b00",

"EndpointID": "7c22d5f91feba38a05feac65dd2ac0a7e1d11d9f9e11b9e7c6d2a74d856f9baa",

"MacAddress": "02:42:ac:12:00:02",

"IPv4Address": "172.18.0.2/16",

"IPv6Address": ""

}

},

"Options": {

"com.docker.network.bridge.enable_icc": "false",

"com.docker.network.bridge.enable_ip_masquerade": "true",

"com.docker.network.bridge.name": "docker_gwbridge"

},

"Labels": {}

}

]

This lets the container reach external networks through docker_gwbridge:

root@ab8002497b00:/# ping -c 4 www.baidu.com

PING www.a.shifen.com (39.156.66.14): 56 data bytes

64 bytes from 39.156.66.14: icmp_seq=0 ttl=127 time=12.543 ms

64 bytes from 39.156.66.14: icmp_seq=1 ttl=127 time=12.203 ms

64 bytes from 39.156.66.14: icmp_seq=2 ttl=127 time=12.978 ms

64 bytes from 39.156.66.14: icmp_seq=3 ttl=127 time=12.589 ms

--- www.a.shifen.com ping statistics ---

4 packets transmitted, 4 packets received, 0% packet loss

round-trip min/avg/max/stddev = 12.203/12.578/12.978/0.275 ms

root@ab8002497b00:/#
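
The statement that the default route goes through eth1 can be verified from the container's routing table. The sketch below parses `ip route` output on standard input; default_route_iface is a hypothetical helper, and it assumes the container image ships an `ip` command (the busybox image does).

```shell
#!/usr/bin/env bash
# default_route_iface: hypothetical helper; reads `ip route` output on stdin
# and prints the interface that carries the default route.
default_route_iface() {
    awk '$1 == "default" {
        # The interface name follows the "dev" keyword.
        for (i = 1; i < NF; i++) if ($i == "dev") { print $(i + 1); exit }
    }'
}
```

Running `docker exec test1 ip route | default_route_iface` should print eth1 if the routing matches the description above.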

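V. Cross-host communication over the overlay network

The test1 container is already running on the master node. Now run the test2 and test3 containers on node1 and node2 respectively: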

node1:

[root@localhost ~]# docker run -itd --name test2 --network=overlay_net1 busybox

root@7a00c1b68c8c:/# ifconfig

eth0: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1450

inet 10.0.0.3 netmask 255.255.255.0 broadcast 0.0.0.0

inet6 fe80::42:aff:fe00:3 prefixlen 64 scopeid 0x20<link>

ether 02:42:0a:00:00:03 txqueuelen 0 (Ethernet)

RX packets 13 bytes 1046 (1.0 KB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 8 bytes 656 (656.0 B)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

eth1: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500

inet 172.18.0.2 netmask 255.255.0.0 broadcast 0.0.0.0

inet6 fe80::42:acff:fe12:2 prefixlen 64 scopeid 0x20<link>

ether 02:42:ac:12:00:02 txqueuelen 0 (Ethernet)

RX packets 3920 bytes 17497056 (17.4 MB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 3714 bytes 205561 (205.5 KB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

node2:

[root@localhost ~]# docker run -itd --name test3 --network=overlay_net1 busybox

eth0: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1450

inet 10.0.0.4 netmask 255.255.255.0 broadcast 0.0.0.0

inet6 fe80::42:aff:fe00:4 prefixlen 64 scopeid 0x20<link>

ether 02:42:0a:00:00:04 txqueuelen 0 (Ethernet)

RX packets 13 bytes 1046 (1.0 KB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 8 bytes 656 (656.0 B)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

As shown above, test1 has the IP 10.0.0.2, test2 has 10.0.0.3, and test3 has 10.0.0.4.

Try pinging the containers from one another:

master:

root@ea32f3a8ab1c:/# ping -c 1 10.0.0.3

PING 10.0.0.3 (10.0.0.3): 56 data bytes

64 bytes from 10.0.0.3: icmp_seq=0 ttl=64 time=1.380 ms

--- 10.0.0.3 ping statistics ---

1 packets transmitted, 1 packets received, 0% packet loss

round-trip min/avg/max/stddev = 1.380/1.380/1.380/0.000 ms

root@ea32f3a8ab1c:/# ping -c 1 10.0.0.4

PING 10.0.0.4 (10.0.0.4): 56 data bytes

64 bytes from 10.0.0.4: icmp_seq=0 ttl=64 time=2.301 ms

--- 10.0.0.4 ping statistics ---

1 packets transmitted, 1 packets received, 0% packet loss

round-trip min/avg/max/stddev = 2.301/2.301/2.301/0.000 ms

The container on master can ping the containers on node1 and node2.

node1:

root@1ab0f0f1a7da:/# ping -c 1 10.0.0.2

PING 10.0.0.2 (10.0.0.2): 56 data bytes

64 bytes from 10.0.0.2: icmp_seq=0 ttl=64 time=1.699 ms

--- 10.0.0.2 ping statistics ---

1 packets transmitted, 1 packets received, 0% packet loss

round-trip min/avg/max/stddev = 1.699/1.699/1.699/0.000 ms

root@1ab0f0f1a7da:/# ping -c 1 10.0.0.4

PING 10.0.0.4 (10.0.0.4): 56 data bytes

64 bytes from 10.0.0.4: icmp_seq=0 ttl=64 time=1.738 ms

--- 10.0.0.4 ping statistics ---

1 packets transmitted, 1 packets received, 0% packet loss

round-trip min/avg/max/stddev = 1.738/1.738/1.738/0.000 ms

root@1ab0f0f1a7da:/#

The container on node1 can ping the containers on master and node2.

node2:

root@5269c3ad014d:/# ping -c 1 10.0.0.2

PING 10.0.0.2 (10.0.0.2): 56 data bytes

64 bytes from 10.0.0.2: icmp_seq=0 ttl=64 time=2.568 ms

--- 10.0.0.2 ping statistics ---

1 packets transmitted, 1 packets received, 0% packet loss

round-trip min/avg/max/stddev = 2.568/2.568/2.568/0.000 ms

root@5269c3ad014d:/# ping -c 1 10.0.0.3

PING 10.0.0.3 (10.0.0.3): 56 data bytes

64 bytes from 10.0.0.3: icmp_seq=0 ttl=64 time=1.683 ms

--- 10.0.0.3 ping statistics ---

1 packets transmitted, 1 packets received, 0% packet loss

round-trip min/avg/max/stddev = 1.683/1.683/1.683/0.000 ms

The container on node2 can ping the containers on master and node1.
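
The pairwise ping tests above can be condensed into one loop per host. The sketch below runs a single ping from inside a named container against each address given; check_peers is a hypothetical helper, and the container names and IPs in the usage line are the ones from this walkthrough.

```shell
#!/usr/bin/env bash
# check_peers: hypothetical helper; pings each address once from inside the
# given container and prints one result line per address.
#   $1 = container name, remaining arguments = overlay IPs to test
# Returns non-zero if any address could not be reached.
check_peers() {
    local container="$1" ip failed=0
    shift
    for ip in "$@"; do
        if docker exec "$container" ping -c 1 "$ip" >/dev/null 2>&1; then
            echo "$ip reachable"
        else
            echo "$ip unreachable"
            failed=1
        fi
    done
    return "$failed"
}
```

On master this would be called as `check_peers test1 10.0.0.3 10.0.0.4`, and correspondingly with test2 and test3 on the other two hosts.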

VI. Overlay network isolation

In the steps above, container IPs were assigned automatically. What if a fixed, static IP is needed?

The following configuration is required:

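When creating the overlay network, specify the IP range, gateway, and subnet. If the overlay_net1 network created earlier still exists, remove it first or choose a different name, because the name is already taken: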

[root@node1 ~]# docker network create -d overlay --ip-range=192.168.2.0/24 --gateway=192.168.2.1 --subnet=192.168.2.0/24 overlay_net1

When starting the container, specify the IP manually:

[root@node1 ~]# docker run -d --name host1 --network=overlay_net1 --ip=192.168.2.2 hanxt/centos:7
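
A static address only makes sense if it lies inside the subnet given to docker network create. The sketch below checks this with shell integer arithmetic before the container is started; ip_to_int and ip_in_subnet are hypothetical helpers, handle IPv4 only, and assume octets without leading zeros.

```shell
#!/usr/bin/env bash
# ip_to_int: hypothetical helper; converts a dotted-quad IPv4 address into a
# 32-bit integer.
ip_to_int() {
    local IFS=.
    # Split the address on "." into the positional parameters.
    set -- $1
    echo $(( ($1 << 24) | ($2 << 16) | ($3 << 8) | $4 ))
}

# ip_in_subnet: hypothetical helper; succeeds when IPv4 address $1 lies
# inside the CIDR block $2 (for example 192.168.2.0/24).
ip_in_subnet() {
    local ip="$1" net="${2%/*}" bits="${2#*/}"
    # Build the netmask from the prefix length, limited to 32 bits.
    local mask=$(( (0xFFFFFFFF << (32 - bits)) & 0xFFFFFFFF ))
    [ $(( $(ip_to_int "$ip") & mask )) -eq $(( $(ip_to_int "$net") & mask )) ]
}
```

For the values used above, `ip_in_subnet 192.168.2.2 192.168.2.0/24` succeeds, so the address is a valid choice for --ip on this network.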