
1. Plugin Installation

1.1 Preparing the Base Environment

1.2 Configuring Ant

- Upload hadoop2x-eclipse-plugin.zip and apache-ant-1.10.7-bin.zip to /root

- Unzip both archives

```shell
[root@master ~]# unzip -o -d /root hadoop2x-eclipse-plugin.zip
[root@master ~]# unzip -o -d /root apache-ant-1.10.7-bin.zip
```

- Configure the environment variables

```shell
[root@master ~]# vi .bash_profile
```

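Add the following lines, then save and exit. Run `source .bash_profile` so that they take effect. The `PATH` line assumes `JAVA_HOME` was already set while preparing the base environment.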

```shell
export ANT_HOME=/root/apache-ant-1.10.7
export PATH=$JAVA_HOME/bin:$ANT_HOME/bin:$PATH
```

- Verify Ant, for example with `ant -version`
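You can also check from Java that the shell will find `ant`. The sketch below walks each `PATH` entry and looks for an executable file named `ant`, which is roughly what the shell does when resolving a command. The class name `AntOnPath` is hypothetical, and the sketch assumes a Unix-like system.

```java
import java.io.File;
import java.util.Optional;

/** Hypothetical helper: reports where (or whether) "ant" is found on PATH. */
public class AntOnPath {

    // Returns the first executable file named exeName in a PATH-style string, if any.
    static Optional<File> findOnPath(String pathVar, String exeName) {
        if (pathVar == null) {
            return Optional.empty();
        }
        for (String dir : pathVar.split(File.pathSeparator)) {
            if (dir.isEmpty()) {
                continue; // skip empty entries such as "::"
            }
            File candidate = new File(dir, exeName);
            if (candidate.isFile() && candidate.canExecute()) {
                return Optional.of(candidate);
            }
        }
        return Optional.empty();
    }

    public static void main(String[] args) {
        System.out.println("ANT_HOME = " + System.getenv("ANT_HOME"));
        Optional<File> ant = findOnPath(System.getenv("PATH"), "ant");
        System.out.println(ant.map(f -> "ant found at " + f).orElse("ant not found on PATH"));
    }
}
```

If this prints "ant not found on PATH" after `source .bash_profile`, check that `$ANT_HOME/bin` really contains the `ant` script.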

1.3 Building hadoop-eclipse-plugin-2.6.5.jar

Change to the following directory:

```shell
[root@master ~]# cd hadoop2x-eclipse-plugin/src/contrib/eclipse-plugin/
```

Run the following command to build the plugin:

```shell
[root@master eclipse-plugin]# ant jar -Dversion=2.6.5 -Dhadoop.version=2.6.5 -Declipse.home=/root/eclipse -Dhadoop.home=/root/hadoop-2.6.5
```

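Notes:

- `-Dversion` is the version string of the plugin being built; it appears in the jar name (hadoop-eclipse-plugin-2.6.5.jar)
- `-Dhadoop.version` is the Hadoop version
- `-Declipse.home` is the Eclipse installation path
- `-Dhadoop.home` is the Hadoop installation path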
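Each `-D` flag above becomes an Ant user property. Properties set on the command line take precedence over values defined in build.xml. If you drive the build from Java, a sketch like the following shows that mapping. The class `AntCommand` is hypothetical, and the `ProcessBuilder` part is left commented out so the sketch runs even where Ant is not installed.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

/** Hypothetical helper: builds the "ant <target> -Dk=v ..." argument list. */
public class AntCommand {

    static List<String> buildCommand(String target, Map<String, String> props) {
        List<String> cmd = new ArrayList<>();
        cmd.add("ant");
        cmd.add(target);
        for (Map.Entry<String, String> e : props.entrySet()) {
            cmd.add("-D" + e.getKey() + "=" + e.getValue()); // one user property per flag
        }
        return cmd;
    }

    public static void main(String[] args) throws Exception {
        Map<String, String> props = new LinkedHashMap<>(); // keeps the flags in insertion order
        props.put("version", "2.6.5");
        props.put("hadoop.version", "2.6.5");
        props.put("eclipse.home", "/root/eclipse");
        props.put("hadoop.home", "/root/hadoop-2.6.5");

        List<String> cmd = buildCommand("jar", props);
        System.out.println(String.join(" ", cmd));

        // To actually run the build from the plugin source directory:
        // Process p = new ProcessBuilder(cmd)
        //         .directory(new java.io.File("/root/hadoop2x-eclipse-plugin/src/contrib/eclipse-plugin"))
        //         .inheritIO()
        //         .start();
        // System.exit(p.waitFor());
    }
}
```

Running `main` prints the same command line as the one above: `ant jar -Dversion=2.6.5 -Dhadoop.version=2.6.5 -Declipse.home=/root/eclipse -Dhadoop.home=/root/hadoop-2.6.5`.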

If the build stalls at `ivy-resolve-common:`, edit `build.xml` to remove its dependency on Ivy. In the `compile` target shown below, delete `depends="init, ivy-retrieve-common"`, then save and exit.

```xml
<target name="compile" depends="init, ivy-retrieve-common" unless="skip.contrib">
  <echo message="contrib: ${name}"/>
  <javac
   encoding="${build.encoding}"
   srcdir="${src.dir}"
   includes="**/*.java"
   destdir="${build.classes}"
   debug="${javac.debug}"
   deprecation="${javac.deprecation}">
    <classpath refid="classpath"/>
  </javac>
</target>
```
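To script this edit instead of making it in vi, here is a minimal sketch that uses the JDK's built-in DOM API. The class name `StripIvyDepends` is hypothetical. It prints the edited XML to standard output rather than overwriting build.xml. Re-serializing through a `Transformer` can change whitespace and add an XML declaration, so compare the result before replacing the file.

```java
import java.io.File;
import java.io.StringWriter;
import javax.xml.parsers.DocumentBuilderFactory;
import javax.xml.transform.Transformer;
import javax.xml.transform.TransformerFactory;
import javax.xml.transform.dom.DOMSource;
import javax.xml.transform.stream.StreamResult;
import org.w3c.dom.Document;
import org.w3c.dom.Element;
import org.w3c.dom.NodeList;
import org.xml.sax.InputSource;

/** Hypothetical helper: drops the depends attribute of a named Ant target. */
public class StripIvyDepends {

    // Removes depends="..." from every <target> whose name matches; returns how many changed.
    static int removeDepends(Document doc, String targetName) {
        NodeList targets = doc.getElementsByTagName("target");
        int changed = 0;
        for (int i = 0; i < targets.getLength(); i++) {
            Element target = (Element) targets.item(i);
            if (targetName.equals(target.getAttribute("name")) && target.hasAttribute("depends")) {
                target.removeAttribute("depends");
                changed++;
            }
        }
        return changed;
    }

    static Document parse(InputSource source) throws Exception {
        return DocumentBuilderFactory.newInstance().newDocumentBuilder().parse(source);
    }

    static String serialize(Document doc) throws Exception {
        Transformer transformer = TransformerFactory.newInstance().newTransformer();
        StringWriter out = new StringWriter();
        transformer.transform(new DOMSource(doc), new StreamResult(out));
        return out.toString();
    }

    public static void main(String[] args) throws Exception {
        // Reads build.xml (or the path given as the first argument) and prints the edited XML.
        File buildXml = new File(args.length > 0 ? args[0] : "build.xml");
        Document doc = parse(new InputSource(buildXml.toURI().toString()));
        System.err.println("targets changed: " + removeDepends(doc, "compile"));
        System.out.println(serialize(doc));
    }
}
```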

Run the build again. This time it stops with the warning `Warning: Could not find file /root/hadoop-2.6.5/share/hadoop/common/lib/commons-collections-3.2.1.jar to copy.`

As the warning suggests, go to `/root/hadoop-2.6.5/share/hadoop/common/lib/` and check which version of the jar is actually there:

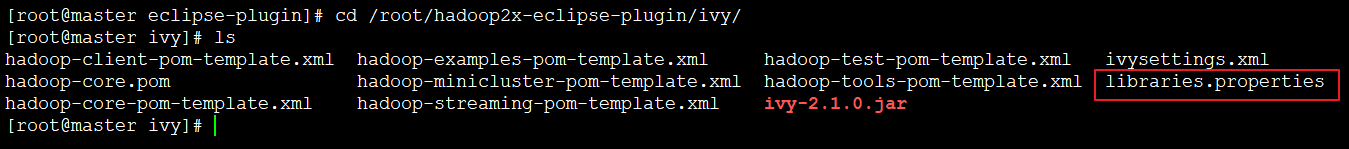
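The same check can be scripted. The sketch below lists the directory and extracts the version part of every `commons-collections-<version>.jar` file name. The class `FindJarVersion` is hypothetical, and the regex assumes Maven-style `artifact-version.jar` names.

```java
import java.io.File;
import java.util.ArrayList;
import java.util.List;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

/** Hypothetical helper: finds which versions of a jar are present in a directory. */
public class FindJarVersion {

    // For artifact "commons-collections", "commons-collections-3.2.2.jar" yields "3.2.2".
    static List<String> versionsOf(String artifact, String[] fileNames) {
        Pattern p = Pattern.compile(Pattern.quote(artifact) + "-(\\d[\\w.\\-]*)\\.jar");
        List<String> versions = new ArrayList<>();
        for (String name : fileNames) {
            Matcher m = p.matcher(name);
            if (m.matches()) {
                versions.add(m.group(1));
            }
        }
        return versions;
    }

    public static void main(String[] args) {
        File lib = new File("/root/hadoop-2.6.5/share/hadoop/common/lib");
        String[] names = lib.list(); // null if the directory does not exist
        if (names == null) {
            System.err.println("Directory not found: " + lib);
            System.exit(1);
        }
        System.out.println("commons-collections versions: " + versionsOf("commons-collections", names));
    }
}
```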

Go to `/root/hadoop2x-eclipse-plugin/ivy/` and edit `libraries.properties`:

Change `commons-collections.version=3.2.1` to `commons-collections.version=3.2.2` so that it matches the jar shipped in the Hadoop lib directory.
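The same one-line change can be scripted. This sketch rewrites only the matching `key=value` line and leaves comments and key order as they are. It avoids `java.util.Properties.store`, which would drop the file's comments and reorder the keys. The class name `BumpLibraryVersion` is hypothetical. The file path and new version come from the step above.

```java
import java.io.IOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.ArrayList;
import java.util.List;
import java.util.regex.Pattern;

/** Hypothetical helper: sets one key in a .properties file, line by line. */
public class BumpLibraryVersion {

    // Replaces "key=..." (also "key = ..." or "key:...") with "key=newValue"; other lines are kept.
    static List<String> setProperty(List<String> lines, String key, String newValue) {
        Pattern keyLine = Pattern.compile("^\\s*" + Pattern.quote(key) + "\\s*[=:].*$");
        List<String> out = new ArrayList<>(lines.size());
        for (String line : lines) {
            out.add(keyLine.matcher(line).matches() ? key + "=" + newValue : line);
        }
        return out;
    }

    public static void main(String[] args) throws IOException {
        Path file = Paths.get("/root/hadoop2x-eclipse-plugin/ivy/libraries.properties");
        // .properties files are traditionally ISO-8859-1 encoded
        List<String> lines = Files.readAllLines(file, StandardCharsets.ISO_8859_1);
        Files.write(file, setProperty(lines, "commons-collections.version", "3.2.2"),
                StandardCharsets.ISO_8859_1);
    }
}
```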

Return to `/root/hadoop2x-eclipse-plugin/src/contrib/eclipse-plugin` and run the build again. Output like the following means the build succeeded:

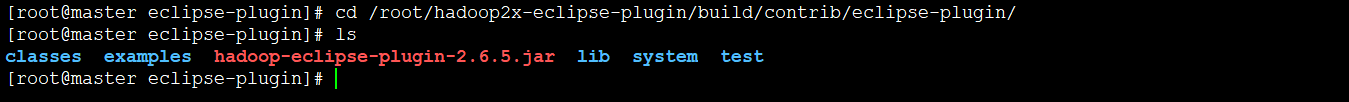

Change to `/root/hadoop2x-eclipse-plugin/build/contrib/eclipse-plugin/` to find the generated jar.

The plugin was built successfully!

2. Configuring Eclipse

2.1 Installing the Plugin

Copy the compiled hadoop-eclipse-plugin-2.6.5.jar into the Eclipse `plugins` directory:

```shell
[root@master eclipse-plugin]# cp hadoop-eclipse-plugin-2.6.5.jar /root/eclipse/plugins/
```
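The copy can also be done from Java with `java.nio.file`. The sketch below is a hypothetical `InstallPlugin` class that uses the paths from the steps above. It creates the plugins directory if needed and overwrites an older copy of the same jar. Eclipse only scans its plugins directory at startup, so it must be (re)started afterwards.

```java
import java.io.IOException;
import java.nio.file.Files;
import java.nio.file.NoSuchFileException;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.nio.file.StandardCopyOption;

/** Hypothetical helper: copies the built plugin jar into Eclipse's plugins directory. */
public class InstallPlugin {

    // Copies jar into pluginsDir (created if missing), replacing any file with the same name.
    static Path install(Path jar, Path pluginsDir) throws IOException {
        if (!Files.isRegularFile(jar)) {
            throw new NoSuchFileException(jar.toString());
        }
        Files.createDirectories(pluginsDir);
        Path target = pluginsDir.resolve(jar.getFileName());
        return Files.copy(jar, target, StandardCopyOption.REPLACE_EXISTING);
    }

    public static void main(String[] args) throws IOException {
        Path jar = Paths.get("/root/hadoop2x-eclipse-plugin/build/contrib/eclipse-plugin/hadoop-eclipse-plugin-2.6.5.jar");
        Path plugins = Paths.get("/root/eclipse/plugins");
        System.out.println("Installed " + install(jar, plugins));
    }
}
```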

2.2 Starting Eclipse

2.3 Setting the Hadoop Installation Path

Click Window -> Preferences -> Hadoop Map/Reduce and set the Hadoop installation directory (here `/root/hadoop-2.6.5`).

2.4 Showing the Hadoop Connection View

Click Window -> Open Perspective -> Other -> Map/Reduce.

2.5 Starting the Hadoop Cluster and Connecting to It

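After starting the Hadoop cluster, fill in the connection details for the new Hadoop location and click Finish. The DFS Master host and port must match the NameNode address used later in this article (`master:9000`).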

Once the HDFS directory tree can be browsed from Eclipse, Eclipse is configured correctly!
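If the connection fails, a useful first check is whether the NameNode RPC port is reachable at all from the machine running Eclipse. The sketch below attempts a plain TCP connect with a timeout. The class `CheckNameNode` is hypothetical; host `master` and port `9000` are taken from the job arguments in this article. A successful connect only shows that the port is open, not that HDFS is healthy.

```java
import java.io.IOException;
import java.net.InetSocketAddress;
import java.net.Socket;

/** Hypothetical helper: checks TCP reachability of host:port. */
public class CheckNameNode {

    // Returns true if a TCP connection to host:port succeeds within timeoutMs.
    // Unknown hosts and refused or timed-out connections all surface as IOException.
    static boolean isReachable(String host, int port, int timeoutMs) {
        try (Socket socket = new Socket()) {
            socket.connect(new InetSocketAddress(host, port), timeoutMs);
            return true;
        } catch (IOException e) {
            return false;
        }
    }

    public static void main(String[] args) {
        String host = "master";
        int port = 9000;
        boolean ok = isReachable(host, port, 3000);
        System.out.println(host + ":" + port + (ok ? " is reachable" : " is NOT reachable"));
    }
}
```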

3. Running a MapReduce Program

The example computes the maximum temperature for each year. The weather dataset can be downloaded from ftp://ftp.ncdc.noaa.gov/pub/data/noaa/1901/

3.1 Creating a MapReduce Project

3.2 Uploading the Data

3.3 Creating the Classes

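Create the classes MaxTemperatureWithCombiner, MaxTemperatureMapper, and MaxTemperatureReducer in the package `cust.test`. Paste in the source code listed at the end of this article and debug as needed.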

3.4 Running the Program

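Right-click MaxTemperatureWithCombiner and choose Run As -> Run Configurations. Select Java Application and click the New launch configuration icon in the upper-left corner. Then open the Arguments tab and enter the two program arguments, the input path followed by the output path: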

hdfs://master:9000/user/root/

hdfs://master:9000/user/output/

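Click Run and wait a moment, then refresh to see the output. The output directory must not exist before the job starts; if it does, the job fails immediately.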
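The job writes plain text with one `year<TAB>max` pair per line, since tab is TextOutputFormat's default separator. The NCDC air temperatures are stored in tenths of a degree Celsius. The sketch below, a hypothetical `ReadMaxTemperatureOutput` class, reads such lines from standard input and converts the values to degrees.

```java
import java.io.BufferedReader;
import java.io.IOException;
import java.io.InputStreamReader;
import java.nio.charset.StandardCharsets;

/** Hypothetical helper: turns "year<TAB>tenthsOfDegree" lines into readable Celsius values. */
public class ReadMaxTemperatureOutput {

    static String describe(String line) {
        String[] parts = line.split("\t");
        if (parts.length != 2) {
            throw new IllegalArgumentException("Unexpected line: " + line);
        }
        int tenths = Integer.parseInt(parts[1].trim());
        return parts[0] + ": " + (tenths / 10.0) + " \u00B0C"; // \u00B0 is the degree sign
    }

    public static void main(String[] args) throws IOException {
        BufferedReader in = new BufferedReader(new InputStreamReader(System.in, StandardCharsets.UTF_8));
        String line;
        while ((line = in.readLine()) != null) {
            if (!line.isEmpty()) {
                System.out.println(describe(line));
            }
        }
    }
}
```

For example, pipe the reducer output into it with `hdfs dfs -cat /user/output/part-r-00000 | java ReadMaxTemperatureOutput`.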

The source code is taken from "Hadoop: The Definitive Guide", with minor adjustments.

- MaxTemperatureWithCombiner.java

```java
package cust.test;

import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

public class MaxTemperatureWithCombiner {

  public static void main(String[] args) throws Exception {
    if (args.length != 2) {
      System.err.println("Usage: MaxTemperatureWithCombiner <input path> " +
          "<output path>");
      System.exit(-1);
    }

    // Job.getInstance() replaces the deprecated new Job() constructor
    Job job = Job.getInstance();
    job.setJarByClass(MaxTemperatureWithCombiner.class);
    job.setJobName("Max temperature");

    FileInputFormat.addInputPath(job, new Path(args[0]));
    FileOutputFormat.setOutputPath(job, new Path(args[1]));

    job.setMapperClass(MaxTemperatureMapper.class);
    // The reducer doubles as the combiner because max is commutative and associative
    job.setCombinerClass(MaxTemperatureReducer.class);
    job.setReducerClass(MaxTemperatureReducer.class);

    job.setOutputKeyClass(Text.class);
    job.setOutputValueClass(IntWritable.class);

    System.exit(job.waitForCompletion(true) ? 0 : 1);
  }
}
```
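Reusing the reducer as a combiner is safe here for a simple reason: the maximum of the per-map maxima equals the maximum of all values. The plain-Java sketch below checks this without Hadoop. The class `CombinerCheck` and the sample values are made up for illustration. The sketch also shows that a mean could not be combined this way.

```java
import java.util.Arrays;
import java.util.List;

/** Hypothetical demo: why max can be pre-aggregated by a combiner but mean cannot. */
public class CombinerCheck {

    static int max(List<Integer> values) {
        int m = Integer.MIN_VALUE;
        for (int v : values) {
            m = Math.max(m, v);
        }
        return m;
    }

    static double mean(List<? extends Number> values) {
        double sum = 0;
        for (Number v : values) {
            sum += v.doubleValue();
        }
        return sum / values.size();
    }

    public static void main(String[] args) {
        // Values for one year as seen by two different map tasks (made-up numbers)
        List<Integer> split1 = Arrays.asList(0, 20, 10);
        List<Integer> split2 = Arrays.asList(25, 15);
        List<Integer> all = Arrays.asList(0, 20, 10, 25, 15);

        int direct = max(all);
        int combined = max(Arrays.asList(max(split1), max(split2)));
        System.out.println("max: direct=" + direct + ", combined=" + combined);
        // prints "max: direct=25, combined=25"

        double directMean = mean(all);
        double combinedMean = mean(Arrays.asList(mean(split1), mean(split2)));
        System.out.println("mean: direct=" + directMean + ", combined=" + combinedMean);
        // prints "mean: direct=14.0, combined=15.0"
    }
}
```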

- MaxTemperatureMapper.java

```java
package cust.test;

import java.io.IOException;

import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Mapper;

public class MaxTemperatureMapper
    extends Mapper<LongWritable, Text, Text, IntWritable> {

  private static final int MISSING = 9999;

  @Override
  public void map(LongWritable key, Text value, Context context)
      throws IOException, InterruptedException {

    String line = value.toString();
    String year = line.substring(15, 19);
    int airTemperature;
    if (line.charAt(87) == '+') { // parseInt rejected a leading plus sign before Java 7
      airTemperature = Integer.parseInt(line.substring(88, 92));
    } else {
      airTemperature = Integer.parseInt(line.substring(87, 92));
    }
    String quality = line.substring(92, 93);
    // Keep only present readings whose quality code is 0, 1, 4, 5 or 9
    if (airTemperature != MISSING && quality.matches("[01459]")) {
      context.write(new Text(year), new IntWritable(airTemperature));
    }
  }
}
```
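The fixed-width parsing in `map()` can be tried out without a cluster. The sketch below copies the same offsets into a plain method. `NcdcRecordParser` and its `syntheticRecord` helper are hypothetical. The synthetic line fills only the fields the parser reads, whereas real NCDC records carry many more fields.

```java
import java.util.Arrays;

/** Hypothetical plain-Java copy of the mapper's record parsing, for local testing. */
public class NcdcRecordParser {

    private static final int MISSING = 9999;

    // Returns {year, temperature in tenths of a degree C}, or null if the reading is skipped.
    static int[] parse(String line) {
        int year = Integer.parseInt(line.substring(15, 19));
        int airTemperature = line.charAt(87) == '+'
                ? Integer.parseInt(line.substring(88, 92))
                : Integer.parseInt(line.substring(87, 92));
        String quality = line.substring(92, 93);
        if (airTemperature == MISSING || !quality.matches("[01459]")) {
            return null;
        }
        return new int[] {year, airTemperature};
    }

    // Builds a 93-character line with year at 15-18, signed temperature at 87-91, quality at 92.
    static String syntheticRecord(String year, String signedTemp, char quality) {
        char[] buf = new char[93];
        Arrays.fill(buf, '0');
        year.getChars(0, 4, buf, 15);
        signedTemp.getChars(0, 5, buf, 87);
        buf[92] = quality;
        return new String(buf);
    }

    public static void main(String[] args) {
        int[] r = parse(syntheticRecord("1901", "-0011", '1'));
        System.out.println(r == null ? "skipped" : r[0] + " -> " + r[1]); // prints "1901 -> -11"
    }
}
```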

- MaxTemperatureReducer.java

```java
package cust.test;

import java.io.IOException;

import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Reducer;

public class MaxTemperatureReducer
    extends Reducer<Text, IntWritable, Text, IntWritable> {

  @Override
  public void reduce(Text key, Iterable<IntWritable> values,
      Context context)
      throws IOException, InterruptedException {

    int maxValue = Integer.MIN_VALUE;
    for (IntWritable value : values) {
      maxValue = Math.max(maxValue, value.get());
    }
    context.write(key, new IntWritable(maxValue));
  }
}
```