This section introduces three standard image-recognition datasets: MNIST, Fashion-MNIST and SmallNORB.

目录

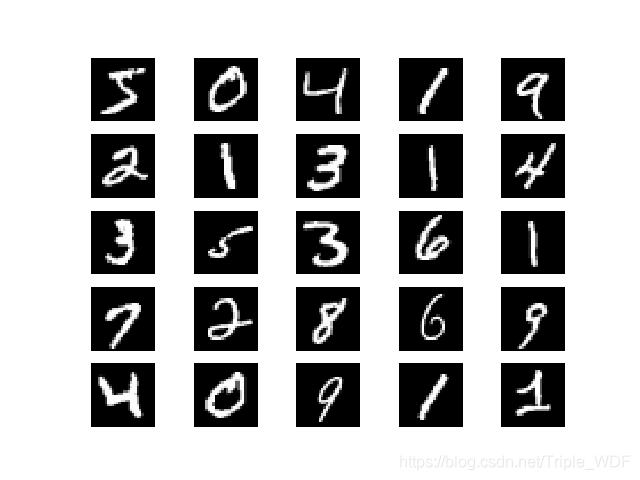

1. MNIST

Link: https://pan.baidu.com/s/1onS1F4jWgtbUURymJXFtAg

Extraction code: 5lfn

2. Fashion-MNIST

Link: https://pan.baidu.com/s/1NFqZ1SJ__EZJ8boWbx_Mng

Extraction code: je02

3. SmallNORB

Link: https://pan.baidu.com/s/1VapMXBTZwRP1vcCsznN7Lg

Extraction code: ziek

4. Parsing code

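code1 to code3 read the data of the three datasets, and code4 puts all three behind a single function. The parsed data has the shape [number of image samples, length of one image flattened into a 1-D vector]; for example, the parsed MNIST training set is [60000, 784]. Training labels are one-hot encoded, while test labels are kept as integer class indices.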
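The target layout can be sketched with NumPy on synthetic data. This is a minimal illustration, assuming images arrive as an array of shape (N, H, W) and labels as integer class indices; the helper names `flatten_images` and `one_hot` are hypothetical and are not part of code1 to code4.

```python
import numpy as np


def flatten_images(images):
    """Stretch each H x W image into one row: (N, H, W) -> (N, H*W). (hypothetical helper)"""
    return images.reshape(len(images), -1)


def one_hot(labels, num_classes):
    """Turn integer labels of shape (N,) into one-hot rows of shape (N, num_classes). (hypothetical helper)"""
    return np.eye(num_classes)[labels]


# Synthetic stand-in for MNIST: 60000 blank 28 x 28 images, labels cycling through 0-9
images = np.zeros((60000, 28, 28), dtype=np.uint8)
labels = np.arange(60000) % 10

x = flatten_images(images)
y = one_hot(labels, 10)
print(x.shape)  # (60000, 784)
print(y.shape)  # (60000, 10)
```

`np.eye(num_classes)[labels]` picks one row of the identity matrix per label, which is the same one-hot layout the loaders below build with explicit loops.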

code1: py for MNIST (`mnist_loader.py`, the module name imported by code4)

```python
# Python 2 used cPickle; in Python 3 the module is simply pickle
import gzip
import pickle

import numpy as np


def load_data():
    """Return (training_data, validation_data, test_data) from mnist.pkl.gz.

    Each element is a tuple (images, labels) with one 784-pixel image per row.
    """
    # Raw string so the Windows backslashes are never read as escape sequences
    with gzip.open(r'D:\dataset\mnist.pkl.gz', 'rb') as f:
        training_data, validation_data, test_data = pickle.load(f, encoding='bytes')
    return (training_data, validation_data, test_data)


def vectorized_result(j):
    """Return a (10, 1) one-hot column vector with 1.0 at index j."""
    e = np.zeros((10, 1))
    e[j] = 1.0
    return e


def load_data_wrapper():
    """Reshape every image to a (784, 1) column, one-hot encode the training
    and validation labels and keep the test labels as integers.

    Returns three zip iterators of (input, label) pairs.
    """
    tr_d, va_d, te_d = load_data()
    # training_data
    training_inputs = [np.reshape(x, (784, 1)) for x in tr_d[0]]
    training_results = [vectorized_result(y) for y in tr_d[1]]
    training_data = zip(training_inputs, training_results)
    # validation_data
    validation_inputs = [np.reshape(x, (784, 1)) for x in va_d[0]]
    validation_results = [vectorized_result(y) for y in va_d[1]]
    validation_data = zip(validation_inputs, validation_results)
    # test_data
    test_inputs = [np.reshape(x, (784, 1)) for x in te_d[0]]
    test_data = zip(test_inputs, te_d[1])
    return (training_data, validation_data, test_data)
```
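`load_data_wrapper` returns `zip` objects. In Python 3 `zip` is a one-shot iterator, which is why code4 wraps each result in `list(...)` before indexing it or calling `len()`. The short demonstration below uses a few made-up arrays in place of real MNIST samples.

```python
import numpy as np

inputs = [np.zeros((784, 1)) for _ in range(3)]  # stand-ins for flattened images
labels = [0, 1, 2]

pairs = zip(inputs, labels)
first_pass = list(pairs)   # consumes the iterator
second_pass = list(pairs)  # nothing is left to read
print(len(first_pass), len(second_pass))  # 3 0

# Materialise once; the list can then be reused and indexed freely
data = list(zip(inputs, labels))
print(len(data), data[0][0].shape)  # 3 (784, 1)
```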

code2: py for Fashion-MNIST (`fashionmnist_loader.py`, the module name imported by code4)

```python
import gzip
import os

import numpy as np

# import matplotlib.pyplot as plt

'''
Fashion-MNIST classes:
0: T-shirt/top
1: Trouser
2: Pullover
3: Dress
4: Coat
5: Sandal
6: Shirt
7: Sneaker
8: Bag
9: Ankle boot
'''


def vectorized_result(j):
    """Return a (10, 1) one-hot column vector with 1.0 at index j."""
    e = np.zeros((10, 1))
    e[j] = 1.0
    return e


def load_mnist(path, kind='train'):
    """Load one split ('train' or 't10k'): images as (N, 784) uint8, labels as (N,) uint8."""
    labels_path = os.path.join(path, '%s-labels-idx1-ubyte.gz' % kind)
    images_path = os.path.join(path, '%s-images-idx3-ubyte.gz' % kind)
    # Skip the IDX headers: 8 bytes in the label file, 16 bytes in the image file
    with gzip.open(labels_path, 'rb') as lbpath:
        labels = np.frombuffer(lbpath.read(), dtype=np.uint8, offset=8)
    with gzip.open(images_path, 'rb') as imgpath:
        images = np.frombuffer(imgpath.read(), dtype=np.uint8,
                               offset=16).reshape(len(labels), 784)
    return images, labels


def fashionmnist_loader():
    """Return x_train, y_train (one-hot), x_test, y_test (integer labels),
    with pixel values scaled to [0, 1]."""
    x_train, y_train = load_mnist(r'D:\dataset\fashionMnist')
    x_test, y_test = load_mnist(r'D:\dataset\fashionMnist', 't10k')
    y_tr = np.zeros((len(x_train), 10))
    for i in range(len(x_train)):
        y_tr[i][int(y_train[i])] = 1.0
    return x_train / 255.0, y_tr, x_test / 255.0, y_test
```
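The `offset=8` and `offset=16` arguments skip the IDX file headers: a label file starts with a 4-byte magic number and a 4-byte item count, and an image file adds 4-byte row and column counts, all stored as big-endian unsigned integers. The sketch below reads those headers explicitly instead of skipping them, so a truncated or mismatched download fails loudly. `read_idx_gz` and `write_idx_gz` are hypothetical helper names, and the demo builds tiny synthetic files rather than using the real dataset.

```python
import gzip
import os
import struct
import tempfile

import numpy as np


def read_idx_gz(path):
    """Read a gzip-compressed, unsigned-byte IDX file into a NumPy array."""
    with gzip.open(path, 'rb') as f:
        # Magic number: two zero bytes, a type code (0x08 = uint8), the number of dimensions
        zero, type_code, ndim = struct.unpack('>HBB', f.read(4))
        if zero != 0 or type_code != 0x08:
            raise ValueError('not an unsigned-byte IDX file: %s' % path)
        # One big-endian uint32 per dimension, e.g. (60000, 28, 28) for images
        shape = struct.unpack('>' + 'I' * ndim, f.read(4 * ndim))
        data = np.frombuffer(f.read(), dtype=np.uint8)
    expected = int(np.prod(shape))
    if data.size != expected:
        raise ValueError('expected %d values, found %d' % (expected, data.size))
    return data.reshape(shape)


def write_idx_gz(path, array):
    """Write a uint8 array as a gzip-compressed IDX file (only used to build demo data)."""
    with gzip.open(path, 'wb') as f:
        f.write(struct.pack('>HBB', 0, 0x08, array.ndim))
        f.write(struct.pack('>' + 'I' * array.ndim, *array.shape))
        f.write(array.astype(np.uint8).tobytes())


# Tiny synthetic files standing in for the *-images-idx3-ubyte.gz / *-labels-idx1-ubyte.gz pair
tmp_dir = tempfile.mkdtemp()
toy_images = (np.arange(2 * 28 * 28) % 256).astype(np.uint8).reshape(2, 28, 28)
toy_labels = np.array([9, 0], dtype=np.uint8)
write_idx_gz(os.path.join(tmp_dir, 'toy-images-idx3-ubyte.gz'), toy_images)
write_idx_gz(os.path.join(tmp_dir, 'toy-labels-idx1-ubyte.gz'), toy_labels)

images = read_idx_gz(os.path.join(tmp_dir, 'toy-images-idx3-ubyte.gz'))
labels = read_idx_gz(os.path.join(tmp_dir, 'toy-labels-idx1-ubyte.gz'))
print(images.shape, labels.shape)  # (2, 28, 28) (2,)
x = images.reshape(len(labels), 784)  # same flattening as load_mnist
```

The image header is 4 + 3 × 4 = 16 bytes and the label header 4 + 1 × 4 = 8 bytes, which is exactly what the fixed offsets in `load_mnist` assume.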

code3: py for SmallNORB (`smallNORBpkl_loader.py`, the module name imported by code4)

```python
import pickle

import numpy as np

# from sklearn.decomposition import PCA


def _load_pickle(path):
    """Unpickle one file and make sure it is closed afterwards."""
    with open(path, 'rb') as f:
        return pickle.load(f, encoding='bytes')


def smallnorb_loader():
    """Return x_train, y_train, x_test, y_test from the preprocessed SmallNORB pickles."""
    x_train = _load_pickle(r'D:\dataset\smallnorb_x_train_24300x2048.pkl')
    y_train = _load_pickle(r'D:\dataset\smallnorb_y_train.pkl')
    x_test = _load_pickle(r'D:\dataset\smallnorb_x_test_24300x2048.pkl')
    y_test = _load_pickle(r'D:\dataset\smallnorb_y_test.pkl')
    return x_train, y_train, x_test, y_test
```

code4: py for get_Dataset (`get_Dataset.py`)

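```python
import numpy as np

import fashionmnist_loader   # code2
import mnist_loader          # code1
import smallNORBpkl_loader   # code3


def get_Dataset(name='mnist'):
    """Return x_train, y_train, x_test, y_test for 'mnist', 'fashion' or 'smallnorb'.

    x_train / x_test hold one flattened image per row, y_train is one-hot,
    y_test holds integer class labels.
    """
    if name == 'mnist':
        t, v, tt = mnist_loader.load_data_wrapper()
        # zip objects can only be consumed once, so turn them into lists;
        # the validation samples are merged into the training set
        validation_data = list(v)
        training_data = list(t) + validation_data
        testing_data = list(tt)

        len_t = len(training_data)
        len_tdi = len(training_data[0][0])  # 784 pixels per image
        len_tl = len(training_data[0][1])   # 10 classes
        x_train = np.zeros((len_t, len_tdi))
        y_train = np.zeros((len_t, len_tl))
        for i in range(len_t):
            # (784, 1) column -> one row of the matrix
            x_train[i] = np.array(training_data[i][0]).ravel()
            y_train[i] = np.array(training_data[i][1]).ravel()

        len_tt = len(testing_data)
        x_test = np.zeros((len_tt, len_tdi))
        y_test = np.zeros(len_tt)
        for i in range(len_tt):
            x_test[i] = np.array(testing_data[i][0]).ravel()
            y_test[i] = testing_data[i][1]
        return x_train, y_train, x_test, y_test

    elif name == 'fashion':
        return fashionmnist_loader.fashionmnist_loader()

    elif name == 'smallnorb':
        x_train, y_tr, x_test, y_test = smallNORBpkl_loader.smallnorb_loader()
        # SmallNORB has 5 object categories: one-hot encode the training labels
        length = len(y_tr)
        y_train = np.zeros((length, 5))
        for i in range(length):
            y_train[i][int(y_tr[i])] = 1.0
        return x_train, y_train, x_test, y_test

    else:
        raise ValueError("unknown dataset %r; expected 'mnist', 'fashion' or 'smallnorb'" % name)
```

Usage: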

```python
import get_Dataset

x_train, y_train, x_test, y_test = get_Dataset.get_Dataset(name='mnist')
```
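Because `get_Dataset` returns one-hot `y_train` but integer `y_test` for MNIST and Fashion-MNIST (and for SmallNORB as well, assuming its test pickle stores integer labels), evaluation code has to compare class indices. The sketch below is a minimal illustration: `accuracy` is a hypothetical helper, and random scores stand in for a real model's outputs.

```python
import numpy as np


def accuracy(scores, y_true):
    """Fraction of rows whose highest score matches the label.

    y_true may be integer labels of shape (N,) or one-hot rows of shape (N, C).
    """
    y_true = np.asarray(y_true)
    if y_true.ndim == 2:               # one-hot rows -> class indices
        y_true = y_true.argmax(axis=1)
    predicted = np.asarray(scores).argmax(axis=1)
    return float(np.mean(predicted == y_true.astype(int)))


rng = np.random.default_rng(0)
y_test = rng.integers(0, 10, size=1000)  # integer labels, like get_Dataset's y_test
y_onehot = np.eye(10)[y_test]            # one-hot labels, like its y_train
# A stand-in "model" that always ranks the true class highest
perfect_scores = y_onehot + 0.01 * rng.random((1000, 10))

print(accuracy(perfect_scores, y_test))    # 1.0
print(accuracy(perfect_scores, y_onehot))  # 1.0
```

Accepting both label layouts in one helper avoids accidentally comparing a one-hot matrix with a vector of class indices, which NumPy would otherwise broadcast without complaint.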