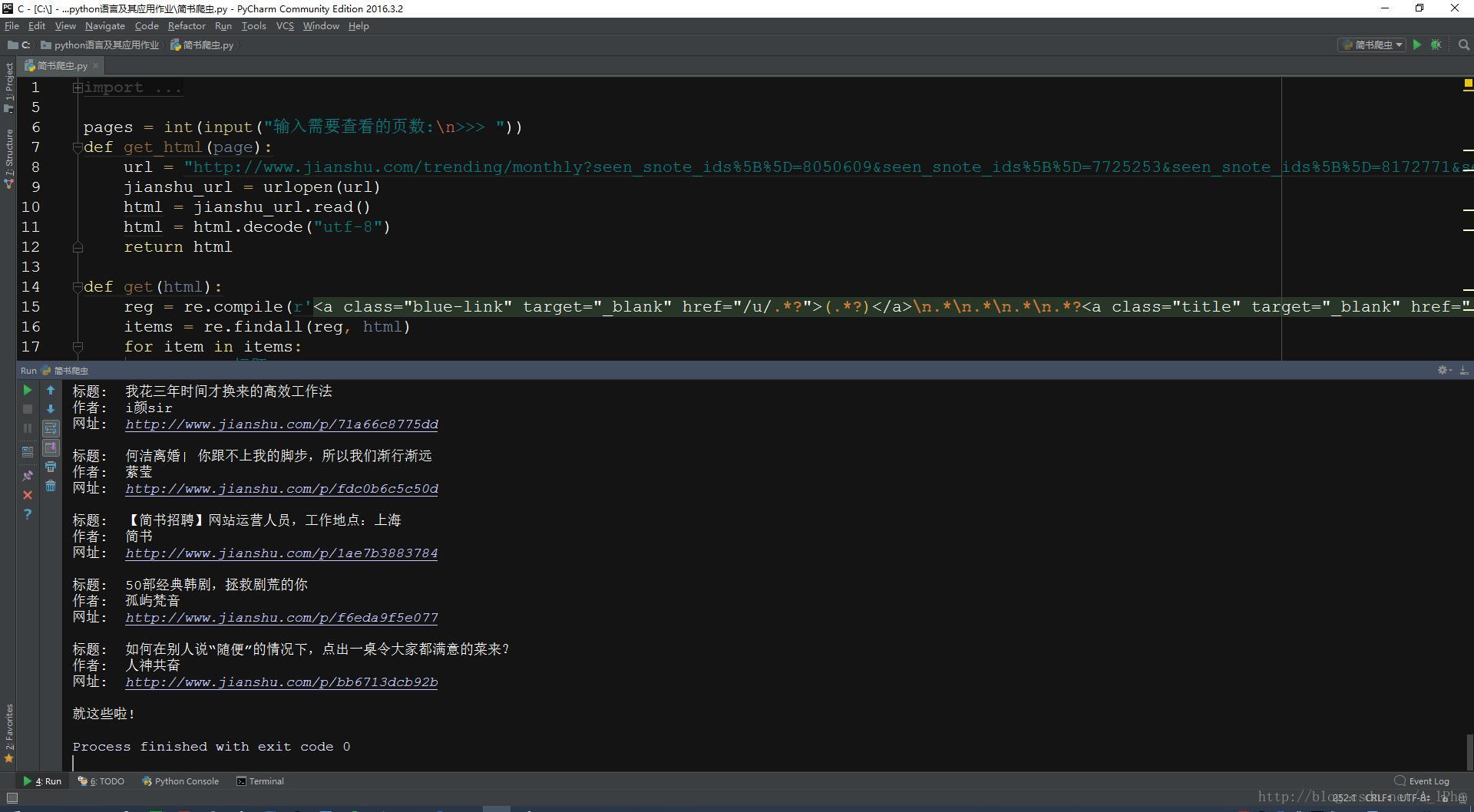

#Python 3.5

#By A_lPha

#http://blog.csdn.net/a_lpha

from urllib.request import urlopen

from bs4 import BeautifulSoup

import requests

import re

pages = int(input("输入需要查看的页数:\n>>> "))

def get_html(page):

url = "http://www.jianshu.com/trending/monthly?seen_snote_ids%5B%5D=8050609&seen_snote_ids%5B%5D=7725253&seen_snote_ids%5B%5D=8172771&seen_snote_ids%5B%5D=8305658&seen_snote_ids%5B%5D=8130459&seen_snote_ids%5B%5D=7786714&seen_snote_ids%5B%5D=8002283&seen_snote_ids%5B%5D=8106586&seen_snote_ids%5B%5D=7825805&seen_snote_ids%5B%5D=8145811&seen_snote_ids%5B%5D=8029843&seen_snote_ids%5B%5D=8141292&seen_snote_ids%5B%5D=8196034&seen_snote_ids%5B%5D=8029739&seen_snote_ids%5B%5D=8340220&seen_snote_ids%5B%5D=7756664&seen_snote_ids%5B%5D=1673306&seen_snote_ids%5B%5D=7677003&seen_snote_ids%5B%5D=7979784&seen_snote_ids%5B%5D=8238976&page={0}".format(page)

jianshu_url = urlopen(url)

html = jianshu_url.read()

html = html.decode("utf-8")

return html

def get(html):

reg = re.compile(r'<a class="blue-link" target="_blank" href="/u/.*?">(.*?)</a>\n.*\n.*\n.*\n.*?<a class="title" target="_blank" href="(.*?)">(.*?)</a>')

items = re.findall(reg, html)

for item in items:

print("标题: ", item[2])

print("作者: ", item[0])

print("网址: ", "http://www.jianshu.com" + item[1], "\n")

return items

print("简书30日排行:\n")

for i in range(1,pages + 1):

print("这是第%s页: "%i)

html = get_html(i)

get(html)

print("就这些啦!")

get_html(i)简书30日排行爬虫代码

猜你喜欢

转载自blog.csdn.net/A_lPha/article/details/54564549

今日推荐

周排行