
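1. Compiling Hadoop from source with Snappy compression support

1.1 Preparing the resources

1. Network access for CentOS

Configure CentOS so that it can reach the Internet: running ping www.baidu.com from the Linux VM should succeed.

Note: build as root to reduce folder-permission problems.

2. Packages to prepare (Hadoop source, JDK 8, Snappy, Maven, protobuf)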

(1)hadoop-2.7.2-src.tar.gz

(2)jdk-8u144-linux-x64.tar.gz

(3)snappy-1.1.3.tar.gz

(4)apache-maven-3.0.5-bin.tar.gz

(5)protobuf-2.5.0.tar.gz

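1.2 Installing the packages

Note: all of the following steps must be performed as root.

(1) Extract the JDK, set JAVA_HOME and PATH, and verify with java -version (verify each of the following steps in the same way).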

[root@hadoop101 software]# tar -zxf jdk-8u144-linux-x64.tar.gz -C /opt/module/

[root@hadoop101 software]# vi /etc/profile

#JAVA_HOME

export JAVA_HOME=/opt/module/jdk1.8.0_144

export PATH=$PATH:$JAVA_HOME/bin

[root@hadoop101 software]# source /etc/profile

Verification command: java -version

(2) Extract Maven and set MAVEN_HOME and PATH

[root@hadoop101 software]# tar -zxvf apache-maven-3.0.5-bin.tar.gz -C /opt/module/

[root@hadoop101 apache-maven-3.0.5]# vi /etc/profile

#MAVEN_HOME

export MAVEN_HOME=/opt/module/apache-maven-3.0.5

export PATH=$PATH:$MAVEN_HOME/bin

[root@hadoop101 software]# source /etc/profile

Verification command: mvn -version

1.3 Checking the Hadoop native libraries

[root@hadoop101 hadoop-2.7.2]# hadoop checknative

snappy: false

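The output shows that Snappy is not supported (false); the goal is to make it true.

a. First, copy the hadoop-2.7.2.tar.gz package (a build with Snappy support, such as the one produced in section 2) into the /opt/module directory.

Extract it: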

[root@hadoop101 module]# tar -zxvf hadoop-2.7.2.tar.gz

Right after extracting it, look at its contents:

[root@hadoop101 module]# cd hadoop-2.7.2/lib

[root@hadoop101 lib]# ll

total 0

drwxr-xr-x 2 root root 285 Sep 1 2017 native

[root@hadoop101 lib]# cd native/

[root@hadoop101 native]# ll

total 5188

-rw-r--r-- 1 root root 1210260 Sep 1 2017 libhadoop.a

-rw-r--r-- 1 root root 1487268 Sep 1 2017 libhadooppipes.a

lrwxrwxrwx 1 root root 18 Sep 1 2017 libhadoop.so -> libhadoop.so.1.0.0

-rwxr-xr-x 1 root root 716316 Sep 1 2017 libhadoop.so.1.0.0

-rw-r--r-- 1 root root 582048 Sep 1 2017 libhadooputils.a

-rw-r--r-- 1 root root 364860 Sep 1 2017 libhdfs.a

lrwxrwxrwx 1 root root 16 Sep 1 2017 libhdfs.so -> libhdfs.so.0.0.0

-rwxr-xr-x 1 root root 229113 Sep 1 2017 libhdfs.so.0.0.0

-rw-r--r-- 1 root root 472950 Sep 1 2017 libsnappy.a

-rwxr-xr-x 1 root root 955 Sep 1 2017 libsnappy.la

lrwxrwxrwx 1 root root 18 Sep 1 2017 libsnappy.so -> libsnappy.so.1.3.0

lrwxrwxrwx 1 root root 18 Sep 1 2017 libsnappy.so.1 -> libsnappy.so.1.3.0

-rwxr-xr-x 1 root root 228177 Sep 1 2017 libsnappy.so.1.3.0

[root@hadoop101 native]#

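This package supports Snappy, as shown in the figure:

Without Snappy support:

So all we need to do is copy every file from the Snappy-enabled native directory into the native directory of the installation that does not support Snappy: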


[root@hadoop101 native]# cp ./* /usr/local/hadoop/module/hadoop-2.7.2/lib/native/

When prompted to overwrite, answer y each time.

The files of the running installation have now been overwritten:

[root@hadoop101 native]# pwd

/usr/local/hadoop/module/hadoop-2.7.2/lib/native

[root@hadoop101 native]# ll

total 5636

-rw-r--r-- 1 root root 1210260 Jan 6 19:58 libhadoop.a

-rw-r--r-- 1 root root 1487268 Jan 6 20:04 libhadooppipes.a

lrwxrwxrwx 1 root root 18 May 22 2017 libhadoop.so -> libhadoop.so.1.0.0

-rwxr-xr-x 1 root root 716316 Jan 6 20:04 libhadoop.so.1.0.0

-rw-r--r-- 1 root root 582048 Jan 6 20:04 libhadooputils.a

-rw-r--r-- 1 root root 364860 Jan 6 20:04 libhdfs.a

lrwxrwxrwx 1 root root 16 May 22 2017 libhdfs.so -> libhdfs.so.0.0.0

-rwxr-xr-x 1 root root 229113 Jan 6 20:04 libhdfs.so.0.0.0

-rw-r--r-- 1 root root 472950 Jan 6 20:04 libsnappy.a

-rwxr-xr-x 1 root root 955 Jan 6 20:04 libsnappy.la

-rwxr-xr-x 1 root root 228177 Jan 6 20:04 libsnappy.so

-rwxr-xr-x 1 root root 228177 Jan 6 20:04 libsnappy.so.1

-rwxr-xr-x 1 root root 228177 Jan 6 20:04 libsnappy.so.1.3.0

[root@hadoop101 native]#

Now stop the cluster and start it again:

[root@hadoop101 native]# stop-all.sh

[root@hadoop101 native]# start-all.sh

Check the Hadoop native libraries again; snappy is now true:

[root@hadoop101 native]# hadoop checknative

snappy: true /usr/local/hadoop/module/hadoop-2.7.2/lib/native/libsnappy.so.1
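Checking this by eye is fine on one node; on several nodes it is easier to script. The Python sketch below parses text in the `name: true|false [detail]` layout visible in the `hadoop checknative` output above. The function name `parse_checknative` is hypothetical, the parser assumes only the line layout shown in this document, and capturing the command's output (for example with `subprocess.run`) is left to the caller.

```python
from __future__ import annotations


def parse_checknative(output: str) -> dict[str, tuple[bool, str | None]]:
    """Turn lines such as 'snappy: true /path/libsnappy.so.1' into
    {'snappy': (True, '/path/libsnappy.so.1')}.

    Lines without a true/false status after the first colon (banners,
    log lines) are skipped.
    """
    status: dict[str, tuple[bool, str | None]] = {}
    for line in output.splitlines():
        name, sep, rest = line.partition(":")
        if not sep:
            continue
        fields = rest.split(None, 1)
        if not fields or fields[0] not in ("true", "false"):
            continue
        detail = fields[1].strip() if len(fields) > 1 else None
        status[name.strip()] = (fields[0] == "true", detail)
    return status


before = "snappy: false"
after = "snappy: true /usr/local/hadoop/module/hadoop-2.7.2/lib/native/libsnappy.so.1"
print(parse_checknative(before))
print(parse_checknative(after))
```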

2. Compiling the source code

(1) Prepare the build environment

[root@hadoop101 software]# yum install svn

[root@hadoop101 software]# yum install autoconf automake libtool cmake

[root@hadoop101 software]# yum install ncurses-devel

[root@hadoop101 software]# yum install openssl-devel

[root@hadoop101 software]# yum install gcc*

(2) Build and install Snappy

[root@hadoop101 software]# tar -zxvf snappy-1.1.3.tar.gz -C /opt/module/

[root@hadoop101 module]# cd snappy-1.1.3/

[root@hadoop101 snappy-1.1.3]# ./configure

[root@hadoop101 snappy-1.1.3]# make

[root@hadoop101 snappy-1.1.3]# make install

# Check the Snappy library files

[root@hadoop101 snappy-1.1.3]# ls -lh /usr/local/lib |grep snappy

(3) Build and install protobuf

[root@hadoop101 software]# tar -zxvf protobuf-2.5.0.tar.gz -C /opt/module/

[root@hadoop101 module]# cd protobuf-2.5.0/

[root@hadoop101 protobuf-2.5.0]# ./configure

[root@hadoop101 protobuf-2.5.0]# make

[root@hadoop101 protobuf-2.5.0]# make install

# Check the protobuf version to confirm the installation succeeded

[root@hadoop101 protobuf-2.5.0]# protoc --version
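Before starting the long Maven build, it can help to confirm in one go that everything installed above is visible. This Python sketch is a hypothetical helper, not part of Hadoop; it assumes the layout used in this guide: java, mvn and protoc on PATH, JAVA_HOME and MAVEN_HOME exported from /etc/profile, and libsnappy installed under /usr/local/lib by make install.

```python
import glob
import os
import shutil

TOOLS = ("java", "mvn", "protoc")


def preflight(env=None, lib_dir="/usr/local/lib"):
    """Return what the build can see: tool paths, *_HOME variables, Snappy libs.

    A value of None (or an empty list) means the item was not found.
    """
    env = os.environ if env is None else env
    search_path = env.get("PATH", "")
    report = {tool: shutil.which(tool, path=search_path) for tool in TOOLS}
    report["JAVA_HOME"] = env.get("JAVA_HOME")
    report["MAVEN_HOME"] = env.get("MAVEN_HOME")
    report["libsnappy"] = sorted(glob.glob(os.path.join(lib_dir, "libsnappy.so*")))
    return report


for item, found in preflight().items():
    print(f"{item:10} {found if found else 'MISSING'}")
```

Anything reported as MISSING points back to the corresponding installation step above.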

(4) Build Hadoop with the native libraries

[root@hadoop101 software]# tar -zxvf hadoop-2.7.2-src.tar.gz

[root@hadoop101 software]# cd hadoop-2.7.2-src/

[root@hadoop101 hadoop-2.7.2-src]# mvn clean package -DskipTests -Pdist,native -Dtar -Dsnappy.lib=/usr/local/lib -Dbundle.snappy

When the build succeeds, /opt/software/hadoop-2.7.2-src/hadoop-dist/target/hadoop-2.7.2.tar.gz is the newly generated binary package with Snappy compression support.

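3. Hadoop compression configuration

3.1 Compression codecs supported by MapReduce

To support multiple compression/decompression algorithms, Hadoop provides codecs (coder/decoders), listed in the table below: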

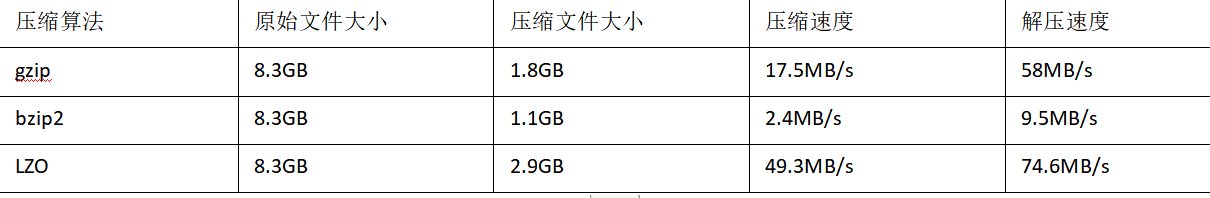
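The trade-off behind such a table (compression ratio versus speed) can be observed locally. Snappy has no binding in the Python standard library, so this sketch swaps in two algorithms that are available: zlib's DEFLATE, the algorithm behind DefaultCodec and GzipCodec, and bz2, the algorithm behind BZip2Codec. It only compares raw compressed sizes and compression time on a made-up, log-like sample; it does not reproduce Hadoop's codec framing, and results depend on the data and the machine.

```python
import bz2
import time
import zlib

# Stand-ins for Hadoop codecs: DEFLATE (DefaultCodec/GzipCodec), bzip2 (BZip2Codec).
CODECS = {
    "deflate": (zlib.compress, zlib.decompress),
    "bzip2": (bz2.compress, bz2.decompress),
}


def compare_codecs(data: bytes) -> dict:
    """Compress `data` with each codec and report ratio and compression time."""
    results = {}
    for name, (compress, decompress) in CODECS.items():
        start = time.perf_counter()
        packed = compress(data)
        elapsed = time.perf_counter() - start
        if decompress(packed) != data:  # both algorithms are lossless
            raise RuntimeError(f"{name} round trip failed")
        results[name] = {"ratio": len(data) / len(packed), "seconds": elapsed}
    return results


# Hypothetical log-like sample: many repeated URLs and session ids.
sample = b"".join(
    b"2020-01-06\thttp://example.com/page%d\tsession%d\n" % (i % 50, i % 7)
    for i in range(20000)
)
for name, stats in compare_codecs(sample).items():
    print(f"{name:8} ratio={stats['ratio']:.1f}x time={stats['seconds'] * 1000:.1f} ms")
```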

Compression performance comparison:

http://google.github.io/snappy/

On a single core of a Core i7 processor in 64-bit mode, Snappy compresses at about 250 MB/sec or more and decompresses at about 500 MB/sec or more.
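As a rough worked example, the quoted single-core rates convert directly into time estimates. The sketch treats 250 MB/s and 500 MB/s as the lower bounds stated above, so its results are upper bounds for one core; the 18.1 MB input is the size of the log_text table measured later in this document.

```python
SNAPPY_COMPRESS_MB_S = 250.0    # "about 250 MB/sec or more" on one Core i7 core
SNAPPY_DECOMPRESS_MB_S = 500.0  # "about 500 MB/sec or more" on one Core i7 core


def max_seconds(size_mb: float, rate_mb_s: float) -> float:
    """Upper bound on single-core time when the rate is at least rate_mb_s."""
    return size_mb / rate_mb_s


size_mb = 18.1  # log_text table size reported by `dfs -du -h`
print(f"compress:   <= {max_seconds(size_mb, SNAPPY_COMPRESS_MB_S):.4f} s")
print(f"decompress: <= {max_seconds(size_mb, SNAPPY_DECOMPRESS_MB_S):.4f} s")
```

That is at most about 0.07 s to compress and 0.04 s to decompress the whole table on one core.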

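3.2 Compression parameter configuration

To enable compression in Hadoop, configure the following parameters (in mapred-site.xml):

4. Enabling compression of map output

Compressing map output reduces the amount of data transferred between the map and reduce tasks of a job. The configuration is as follows: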
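To see why this helps, the toy model below partitions map output records among reducers and counts the bytes the reducers would fetch, with and without compression. It is an illustration under stated assumptions: records are serialized as tab-separated lines, zlib's DEFLATE stands in for whatever codec mapreduce.map.output.compress.codec names, and zlib.crc32 replaces Hadoop's HashPartitioner (Python's built-in hash() is randomized per process). The function `shuffle_bytes` and the sample records are hypothetical.

```python
import zlib
from collections import defaultdict


def shuffle_bytes(records, num_reducers: int, compress: bool) -> int:
    """Total bytes reducers would fetch for the given map output records.

    Records are (key, value) strings serialized as 'key<TAB>value<NEWLINE>';
    zlib.crc32 picks the reducer (a stand-in for Hadoop's HashPartitioner),
    and DEFLATE stands in for the configured map-output codec.
    """
    partitions = defaultdict(list)
    for key, value in records:
        partitions[zlib.crc32(key.encode()) % num_reducers].append(f"{key}\t{value}\n")
    total = 0
    for lines in partitions.values():
        payload = "".join(lines).encode()
        total += len(zlib.compress(payload)) if compress else len(payload)
    return total


# Hypothetical map output: employee records keyed by department.
records = [(f"dept{i % 4}", "ename,job,sal") for i in range(5000)]
print("uncompressed:", shuffle_bytes(records, 2, compress=False))
print("compressed:  ", shuffle_bytes(records, 2, compress=True))
```

Real map output is rarely this repetitive, so actual savings are smaller, but the direction is the same.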

Hands-on example:

(1) Enable compression of Hive intermediate data (start the Hive service and Beeline first)

[root@hadoop101 ~]# cd /usr/local/hadoop/module/hive-1.2.1/bin/

[root@hadoop101 bin]# ./beeline

Beeline version 1.2.1 by Apache Hive

beeline> !connect jdbc:hive2://localhost:10000

Connecting to jdbc:hive2://localhost:10000

Enter username for jdbc:hive2://localhost:10000: root

Enter password for jdbc:hive2://localhost:10000:

Connected to: Apache Hive (version 1.2.1)

Driver: Hive JDBC (version 1.2.1)

Transaction isolation: TRANSACTION_REPEATABLE_READ

0: jdbc:hive2://localhost:10000>


By default this is false, as shown below:

0: jdbc:hive2://localhost:10000> set hive.exec.compress.intermediate;

+----------------------------------------+--+

| set |

+----------------------------------------+--+

| hive.exec.compress.intermediate=false |

+----------------------------------------+--+

1 row selected (0.318 seconds)

0: jdbc:hive2://localhost:10000>

Turn it on:

0: jdbc:hive2://localhost:10000> set hive.exec.compress.intermediate=true;

No rows affected (0.024 seconds)

0: jdbc:hive2://localhost:10000>

Note: the codec must be specified as well; by default it is DefaultCodec:

0: jdbc:hive2://localhost:10000> set mapreduce.map.output.compress.codec;

+---------------------------------------------------------------------------------+--+

| set |

+---------------------------------------------------------------------------------+--+

| mapreduce.map.output.compress.codec=org.apache.hadoop.io.compress.DefaultCodec |

+---------------------------------------------------------------------------------+--+

1 row selected (0.02 seconds)

0: jdbc:hive2://localhost:10000>

Set the compression codec for MapReduce map output to Snappy:

0: jdbc:hive2://localhost:10000> set mapreduce.map.output.compress.codec=org.apache.hadoop.io.compress.SnappyCodec;

No rows affected (0.004 seconds)

0: jdbc:hive2://localhost:10000>

Next, run a query:

0: jdbc:hive2://localhost:10000> select count(ename) name from emp;

........

+-------+--+

| name |

+-------+--+

| 14 |

+-------+--+

1 row selected (39.854 seconds)

0: jdbc:hive2://localhost:10000>

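5. Enabling compression of reduce output

Compressing the reduce (final) output reduces the size of the files a job writes. In Hive this is controlled by hive.exec.compress.output, which defaults to false. The configuration is as follows:

Hands-on example:

(1) Enable compression of Hive intermediate data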

hive (default)> set hive.exec.compress.intermediate=true;

(2) Enable compression of Hive's final output

hive (default)> set hive.exec.compress.output=true;

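(3) Set the compression codec for the final MapReduce output

hive (default)> set mapreduce.output.fileoutputformat.compress.codec=org.apache.hadoop.io.compress.SnappyCodec;

(4) Set the compression type of the final MapReduce output to BLOCK (this setting applies to SequenceFile output)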

hive (default)> set mapreduce.output.fileoutputformat.compress.type=BLOCK;

(5) Test whether the output files are compressed

hive (default)> insert overwrite local directory '/usr/local/hadoop/module/datas/distribute-result' select * from emp distribute by deptno sort by empno desc;

Query ID = root_20200106214406_879398ac-d7a1-4912-b421-e09eca1962d5

Total jobs = 1

Launching Job 1 out of 1

Number of reduce tasks not specified. Estimated from input data size: 1

In order to change the average load for a reducer (in bytes):

set hive.exec.reducers.bytes.per.reducer=<number>

In order to limit the maximum number of reducers:

set hive.exec.reducers.max=<number>

In order to set a constant number of reducers:

set mapreduce.job.reduces=<number>

Starting Job = job_1578341511756_0003, Tracking URL = http://hadoop101:8088/proxy/application_1578341511756_0003/

Kill Command = /usr/local/hadoop/module/hadoop-2.7.2/bin/hadoop job -kill job_1578341511756_0003

Hadoop job information for Stage-1: number of mappers: 1; number of reducers: 1

2020-01-06 21:44:25,101 Stage-1 map = 0%, reduce = 0%

2020-01-06 21:44:38,272 Stage-1 map = 100%, reduce = 0%, Cumulative CPU 1.82 sec

2020-01-06 21:44:48,365 Stage-1 map = 100%, reduce = 100%, Cumulative CPU 3.61 sec

MapReduce Total cumulative CPU time: 3 seconds 610 msec

Ended Job = job_1578341511756_0003

Copying data to local directory /usr/local/hadoop/module/datas/distribute-result

Copying data to local directory /usr/local/hadoop/module/datas/distribute-result

MapReduce Jobs Launched:

Stage-Stage-1: Map: 1 Reduce: 1 Cumulative CPU: 3.61 sec HDFS Read: 8330 HDFS Write: 661 SUCCESS

Total MapReduce CPU Time Spent: 3 seconds 610 msec

OK

emp.empno emp.ename emp.job emp.mgr emp.hiredate emp.sal emp.comm emp.deptno

Time taken: 44.329 seconds

hive (default)>
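The log above does not list the output files, so step (5) still needs a look inside /usr/local/hadoop/module/datas/distribute-result. Hadoop's built-in codecs typically append a default extension to the files they write (.deflate for DefaultCodec, .gz for GzipCodec, .bz2 for BZip2Codec, .snappy for SnappyCodec, .lz4 for Lz4Codec), so a quick heuristic is to inspect extensions. The helper `guess_codecs` below is a hypothetical sketch of that check; an extension is only a hint, not proof of the file's contents.

```python
import os

# Default file extensions of Hadoop's built-in codecs.
CODEC_BY_EXTENSION = {
    ".deflate": "org.apache.hadoop.io.compress.DefaultCodec",
    ".gz": "org.apache.hadoop.io.compress.GzipCodec",
    ".bz2": "org.apache.hadoop.io.compress.BZip2Codec",
    ".snappy": "org.apache.hadoop.io.compress.SnappyCodec",
    ".lz4": "org.apache.hadoop.io.compress.Lz4Codec",
}


def guess_codecs(directory: str) -> dict:
    """Map each file name in `directory` to the codec its extension suggests.

    None means the extension matches no known codec (e.g. uncompressed text).
    """
    return {
        name: CODEC_BY_EXTENSION.get(os.path.splitext(name)[1])
        for name in sorted(os.listdir(directory))
    }
```

For example, `guess_codecs("/usr/local/hadoop/module/datas/distribute-result")` maps every output file to SnappyCodec if the settings above took effect, and to None if the output is plain text.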

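6. File storage formats

The main storage formats Hive supports are TEXTFILE, SEQUENCEFILE, ORC, and PARQUET.

6.1 Column-oriented and row-oriented storage

As shown in the figure, the left side is the logical table; on the right, the first layout is row-oriented storage and the second is column-oriented storage.

(1) Characteristics of row storage

When a query needs entire rows that satisfy a condition, column storage has to visit each separately stored column to collect that row's values, whereas row storage only needs to locate one value: the rest of the row is stored right next to it. Row storage is therefore faster for this kind of query.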
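A small Python sketch makes the difference concrete. It lays out the same three rows (hypothetical values in the style of the emp table used earlier) once row by row and once column by column in a flat list: reading a whole row is one contiguous slice in the row layout but one lookup per column in the column layout, while reading a single column is the reverse.

```python
COLUMNS = ("empno", "ename", "job", "sal")
# Hypothetical sample rows in the style of the emp table.
ROWS = [
    (7369, "SMITH", "CLERK", 800),
    (7499, "ALLEN", "SALESMAN", 1600),
    (7521, "WARD", "SALESMAN", 1250),
]
WIDTH, N = len(COLUMNS), len(ROWS)

# Row-oriented: the fields of each row sit next to each other.
row_store = [value for row in ROWS for value in row]
# Column-oriented: the values of each column sit next to each other.
col_store = [row[c] for c in range(WIDTH) for row in ROWS]


def row_from_row_store(r):
    return tuple(row_store[r * WIDTH:(r + 1) * WIDTH])  # one contiguous read


def row_from_col_store(r):
    return tuple(col_store[c * N + r] for c in range(WIDTH))  # one read per column


def column_from_row_store(name):
    c = COLUMNS.index(name)
    return row_store[c::WIDTH]  # strided: touches every row


def column_from_col_store(name):
    c = COLUMNS.index(name)
    return col_store[c * N:(c + 1) * N]  # one contiguous read


print(row_from_row_store(1), column_from_col_store("sal"))
```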

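(2) Characteristics of column storage

Because each column's data is stored together, a query that needs only a few columns reads far less data. And since all values in a column share the same data type, a columnar format can use compression algorithms designed specifically for that type.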
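The compression claim can be checked with a toy experiment. The sketch serializes the same hypothetical table (a unique id plus two low-cardinality columns) row by row and column by column, producing byte strings of identical length, and compresses both with zlib. Grouping a column's similar values together gives the compressor longer, closer repeats, so the column-oriented bytes compress smaller; real ORC and Parquet go further with per-column encodings such as dictionaries, which this sketch does not model.

```python
import zlib

CITIES = ("beijing", "shanghai", "shenzhen")

# Hypothetical table: unique id, city (3 values), status (2 values).
table = [
    (str(i), CITIES[i % 3], "ok" if i % 7 < 5 else "fail")
    for i in range(1000)
]

# Row-oriented: one line per row, fields separated by commas.
row_bytes = "\n".join(",".join(row) for row in table).encode()
# Column-oriented: one line per column, values separated by commas.
col_bytes = "\n".join(",".join(col) for col in zip(*table)).encode()


def compressed_size(data: bytes) -> int:
    return len(zlib.compress(data, 9))


print("row-oriented:   ", len(row_bytes), "->", compressed_size(row_bytes))
print("column-oriented:", len(col_bytes), "->", compressed_size(col_bytes))
```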

TEXTFILE and SEQUENCEFILE are row-oriented storage formats;

ORC and PARQUET are column-oriented.

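7. TextFile format

This is the default format. Data is not compressed, so both disk usage and parsing overhead are high. It can be combined with Gzip or Bzip2, but with Gzip Hive cannot split the data, so the data cannot be processed in parallel.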
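Why Gzip blocks parallelism can be shown in a few lines. For plain text, a reader handed an arbitrary byte range can skip to the next newline and start there, so the file can be cut into splits; a Gzip stream has no such resynchronization points, so decompression can only begin at the start of the file and one task must read it whole. The sketch uses a simplified version of the newline rule; the function names are hypothetical, and Hadoop's LineRecordReader differs in details.

```python
import gzip
import zlib


def read_split(data: bytes, start: int, end: int) -> list:
    """Lines whose first byte lies in [start, end) (simplified split rule).

    A split that begins mid-line skips to the next newline, because the
    previous split owns that line; the last line may run past `end`.
    """
    pos = start
    if start > 0 and data[start - 1:start] != b"\n":
        newline = data.find(b"\n", start)
        pos = len(data) if newline == -1 else newline + 1
    lines = []
    while pos < min(end, len(data)):
        newline = data.find(b"\n", pos)
        stop = len(data) if newline == -1 else newline + 1
        lines.append(data[pos:stop].rstrip(b"\n"))
        pos = stop
    return lines


def gzip_split_readable(blob: bytes, offset: int) -> bool:
    """Whether decompression can begin at `offset` inside a gzip file."""
    try:
        gzip.decompress(blob[offset:])
        return True
    except (OSError, EOFError, zlib.error):
        return False


text = b"".join(b"row%d\n" % i for i in range(100))
mid = len(text) // 2 + 3  # arbitrary split point, may fall mid-line
first, second = read_split(text, 0, mid), read_split(text, mid, len(text))
print(len(first), len(second), first + second == text.splitlines())

blob = gzip.compress(text)
print(gzip_split_readable(blob, 0), gzip_split_readable(blob, len(blob) // 2))
```

Bzip2 differs because its stream contains block markers that a reader can search for, which is why Hadoop can split .bz2 files.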

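ORC format

ORC (Optimized Row Columnar) is a storage format introduced in Hive 0.11.

As shown in Figure 6-11, each ORC file consists of one or more stripes of about 250 MB each (the stripe size is configurable, and its default differs between Hive versions). A stripe corresponds to the row group concept, except that its size grows from 4 MB to 250 MB, which should improve sequential-read throughput. Each stripe consists of three parts: Index Data, Row Data, and Stripe Footer:

1) Index Data: a lightweight index, with one entry every 10,000 rows by default. Each entry records where that group of rows starts within each column's streams in Row Data, together with statistics such as the minimum and maximum values of the group.

2) Row Data: stores the actual data. A batch of rows is taken and stored column by column; each column is encoded and split into several streams.

3) Stripe Footer: stores the type, length, and other information of each stream.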
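The value of these per-group statistics is that a reader can skip whole groups of rows. The sketch below is a simplified model, not ORC's actual encoding: it builds one min/max entry per 10,000 values of a single integer column and returns only the row groups whose range can overlap a filter such as `WHERE x BETWEEN low AND high`. The names `build_index` and `groups_to_read` are hypothetical.

```python
from dataclasses import dataclass

ROW_INDEX_STRIDE = 10_000  # one index entry per 10,000 rows, as described above


@dataclass
class RowGroupEntry:
    first_row: int
    minimum: int
    maximum: int


def build_index(values: list, stride: int = ROW_INDEX_STRIDE) -> list:
    """One min/max entry per `stride` consecutive values of one column."""
    index = []
    for first in range(0, len(values), stride):
        chunk = values[first:first + stride]
        index.append(RowGroupEntry(first, min(chunk), max(chunk)))
    return index


def groups_to_read(index: list, low: int, high: int) -> list:
    """First rows of the groups that may hold values in [low, high]."""
    return [e.first_row for e in index if e.maximum >= low and e.minimum <= high]


column = list(range(35_000))  # hypothetical sorted column, e.g. an id
index = build_index(column)
print(len(index), groups_to_read(index, 12_000, 15_000))
```

Skipping works best when the filtered column is sorted or clustered, as here; on randomly ordered data every group's min/max range tends to overlap the filter.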

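Each file has a File Footer, which stores the number of rows in each stripe, the data type of each column, and so on. The file ends with a PostScript, which records the compression type of the whole file and the length of the File Footer, among other things; the very last byte of the file holds the length of the PostScript. To read a file, a reader seeks to the end of the file and reads the PostScript, gets the File Footer length from it, then reads the File Footer to obtain the information about each stripe, and finally reads the stripes. In other words, the file is read from back to front.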
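The back-to-front read order can be sketched with a toy file format that mimics ORC's tail layout. Real ORC serializes the File Footer and PostScript with Protocol Buffers; this sketch substitutes JSON so it stays self-contained, and `write_toy`/`read_toy` are hypothetical names. What it keeps from ORC is the order of operations: last byte, then PostScript, then File Footer, then stripes.

```python
import json

# Toy layout mimicking ORC's tail (JSON here, Protocol Buffers in real ORC):
#   [stripe 0][stripe 1]...[file footer][postscript][1 byte: postscript length]


def write_toy(stripes: list) -> bytes:
    offsets, pos = [], 0
    for stripe in stripes:
        offsets.append([pos, len(stripe)])
        pos += len(stripe)
    footer = json.dumps({"stripes": offsets}).encode()
    postscript = json.dumps({"footerLength": len(footer), "compression": "NONE"}).encode()
    if len(postscript) > 255:
        raise ValueError("postscript length must fit in the final byte")
    return b"".join(stripes) + footer + postscript + bytes([len(postscript)])


def read_toy(data: bytes) -> list:
    ps_length = data[-1]                                  # 1. last byte
    ps_start = len(data) - 1 - ps_length
    postscript = json.loads(data[ps_start:-1])            # 2. PostScript
    footer_start = ps_start - postscript["footerLength"]
    footer = json.loads(data[footer_start:ps_start])      # 3. File Footer
    return [data[off:off + n] for off, n in footer["stripes"]]  # 4. stripes


blob = write_toy([b"stripe-0 rows", b"stripe-1 rows", b"stripe-2 rows"])
print(read_toy(blob))
```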

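8. Parquet format

Parquet is a column-oriented storage format for analytical workloads, developed jointly by Twitter and Cloudera. In May 2015 it graduated from the Apache Incubator and became an Apache top-level project.

Parquet files are stored in binary form, so they cannot be read directly as text. A file contains both its data and its metadata, so Parquet files are self-describing.

Usually, when writing Parquet data, the row group size is set according to the HDFS block size. Because the smallest unit of data a Mapper task processes is generally one block, each row group can then be handled by one Mapper task, which increases the parallelism of the job.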
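Under that sizing rule, the number of row groups, and therefore of Mapper tasks, follows from simple arithmetic. This sketch assumes a 128 MB HDFS block size (the Hadoop 2.x default for dfs.blocksize) and row groups sized to exactly one block; real writers only approximate their target row group size.

```python
BLOCK_SIZE = 128 * 1024 * 1024  # assumed dfs.blocksize: 128 MB


def row_groups(file_bytes: int, row_group_bytes: int = BLOCK_SIZE) -> int:
    """Row groups (= Mapper tasks, one per group) for a file of file_bytes."""
    return (file_bytes + row_group_bytes - 1) // row_group_bytes  # integer ceiling


for size_mb in (18, 128, 129, 1024):
    print(f"{size_mb:5} MB -> {row_groups(size_mb * 1024 * 1024)} row group(s)")
```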

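The Parquet file format is shown in the figure:

The figure above shows the contents of a Parquet file. A file can store multiple row groups. Both the beginning and the end of the file hold the Magic Code, which is used to verify that the file is a Parquet file. Footer length records the size of the file metadata; together with the file length it gives the offset of the metadata. The file metadata contains the metadata of every row group and the Schema of the data stored in the file. Besides the per-row-group metadata, each page starts with its own metadata. Parquet has three types of pages: data pages, dictionary pages, and index pages. A data page stores the values of a column in the current row group; a dictionary page stores the encoding dictionary for that column's values, and each column chunk contains at most one dictionary page; an index page is meant to store the index of that column within the current row group, but Parquet did not yet support index pages at the time of writing.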
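The offset computation just described fits in a few lines. In a Parquet file the last 8 bytes are the 4-byte little-endian length of the file metadata followed by the magic "PAR1", so the metadata starts at file_length - 8 - footer_length. The sketch below, with the hypothetical name `metadata_location`, locates the metadata but does not decode it (real Parquet metadata is Thrift-encoded); the file it builds is fake.

```python
from __future__ import annotations

import struct

MAGIC = b"PAR1"


def metadata_location(file_bytes: bytes) -> tuple[int, int]:
    """Return (offset, length) of the file metadata in a Parquet file's bytes."""
    if len(file_bytes) < 12 or file_bytes[:4] != MAGIC or file_bytes[-4:] != MAGIC:
        raise ValueError("not a Parquet file: magic code missing")
    (footer_length,) = struct.unpack("<I", file_bytes[-8:-4])
    return len(file_bytes) - 8 - footer_length, footer_length


# A fake file: magic, row-group bytes, metadata, metadata length, magic.
fake = MAGIC + b"rowgroups" + b"META" + struct.pack("<I", 4) + MAGIC
offset, length = metadata_location(fake)
print(offset, length, fake[offset:offset + length])
```

For a real file on disk, reading only the tail (for example `f.seek(-8, os.SEEK_END)` on a file opened in binary mode) is enough to obtain the length before reading the metadata itself.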

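9. Comparison experiment on mainstream storage formats

The formats are compared on two aspects: the compression ratio of the stored files and query speed.

Compression ratio test:

Steps

(1) Upload the log.data file to the /usr/local/hadoop/module/datas directory.

(2) Create a table stored as TEXTFILE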

2: jdbc:hive2://localhost:10000> create table log_text (

2: jdbc:hive2://localhost:10000> track_time string,

2: jdbc:hive2://localhost:10000> url string,

2: jdbc:hive2://localhost:10000> session_id string,

2: jdbc:hive2://localhost:10000> referer string,

2: jdbc:hive2://localhost:10000> ip string,

2: jdbc:hive2://localhost:10000> end_user_id string,

2: jdbc:hive2://localhost:10000> city_id string

2: jdbc:hive2://localhost:10000> )

2: jdbc:hive2://localhost:10000> row format delimited fields terminated by '\t'

2: jdbc:hive2://localhost:10000> stored as textfile ;

No rows affected (0.507 seconds)

2: jdbc:hive2://localhost:10000>

Note: in this environment the tables must be created as the root user; otherwise an error occurs.

(3) Load data into the table

2: jdbc:hive2://localhost:10000> load data local inpath '/usr/local/hadoop/module/datas/log.data'

2: jdbc:hive2://localhost:10000> into table log_text ;

INFO : Loading data to table default.log_text from file:/usr/local/hadoop/module/datas/log.data

INFO : Table default.log_text stats: [numFiles=1, totalSize=19014996]

No rows affected (3.312 seconds)

2: jdbc:hive2://localhost:10000>

Checking in the HDFS file system shows that the data has been loaded.

(4) Check the size of the table data

2: jdbc:hive2://localhost:10000> dfs -du -h /user/hive/warehouse/log_text;

+-------------------------------------------------+--+

| DFS Output |

+-------------------------------------------------+--+

| 18.1 M /user/hive/warehouse/log_text/log.data |

+-------------------------------------------------+--+

1 row selected (0.109 seconds)

2: jdbc:hive2://localhost:10000>

9.1 ORC

(1) Create a table stored as ORC

2: jdbc:hive2://localhost:10000> create table log_orc(

2: jdbc:hive2://localhost:10000> track_time string,

2: jdbc:hive2://localhost:10000> url string,

2: jdbc:hive2://localhost:10000> session_id string,

2: jdbc:hive2://localhost:10000> referer string,

2: jdbc:hive2://localhost:10000> ip string,

2: jdbc:hive2://localhost:10000> end_user_id string,

2: jdbc:hive2://localhost:10000> city_id string

2: jdbc:hive2://localhost:10000> )

2: jdbc:hive2://localhost:10000> row format delimited fields terminated by '\t'

2: jdbc:hive2://localhost:10000> stored as orc ;

No rows affected (0.209 seconds)

No rows affected (0.273 seconds)

2: jdbc:hive2://localhost:10000>

(2) Load data into the table

2: jdbc:hive2://localhost:10000> insert into table log_orc select * from log_text ;

Checking in the HDFS file system shows that the data has been loaded.

(3) Check the data size after the insert

2: jdbc:hive2://localhost:10000> dfs -du -h /user/hive/warehouse/log_orc/ ;

+-----------------------------------------------+--+

| DFS Output |

+-----------------------------------------------+--+

| 2.8 M /user/hive/warehouse/log_orc/000000_0 |

+-----------------------------------------------+--+

1 row selected (0.04 seconds)

2: jdbc:hive2://localhost:10000>

As this shows, the TEXTFILE table and the ORC table take up very different amounts of storage:

TEXTFILE format: 18.1 M

ORC format: 2.8 M

9.2 Parquet format

(1) Create a table stored as Parquet


2: jdbc:hive2://localhost:10000> create table log_parquet(

2: jdbc:hive2://localhost:10000> track_time string,

2: jdbc:hive2://localhost:10000> url string,

2: jdbc:hive2://localhost:10000> session_id string,

2: jdbc:hive2://localhost:10000> referer string,

2: jdbc:hive2://localhost:10000> ip string,

2: jdbc:hive2://localhost:10000> end_user_id string,

2: jdbc:hive2://localhost:10000> city_id string

2: jdbc:hive2://localhost:10000> )

2: jdbc:hive2://localhost:10000> row format delimited fields terminated by '\t'

2: jdbc:hive2://localhost:10000> stored as parquet ;

(2) Load data into the table

2: jdbc:hive2://localhost:10000> insert into table log_parquet select * from log_text ;

Checking in the HDFS file system shows that the data has been loaded.

(3) Check the size of the table data

2: jdbc:hive2://localhost:10000> dfs -du -h /user/hive/warehouse/log_parquet/ ;

+----------------------------------------------------+--+

| DFS Output |

+----------------------------------------------------+--+

| 13.1 M /user/hive/warehouse/log_parquet/000000_0 |

+----------------------------------------------------+--+

1 row selected (0.071 seconds)

2: jdbc:hive2://localhost:10000>
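The three sizes measured so far can be compared as ratios against TEXTFILE. The numbers below are copied from the dfs -du -h output above; because -h rounds to one decimal place, the ratios are approximate.

```python
# Table sizes reported by `dfs -du -h` in this experiment, in MB.
SIZES_MB = {"TEXTFILE": 18.1, "ORC": 2.8, "PARQUET": 13.1}


def ratios_vs(sizes: dict, baseline: str = "TEXTFILE") -> dict:
    """How many times smaller each format is than the baseline."""
    return {fmt: sizes[baseline] / size for fmt, size in sizes.items()}


for fmt, ratio in ratios_vs(SIZES_MB).items():
    print(f"{fmt:9} {SIZES_MB[fmt]:5.1f} MB  ratio vs TEXTFILE: {ratio:.2f}")
```

ORC comes out roughly 6.5 times smaller than the text table and Parquet roughly 1.4 times smaller.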

(4) Compare the row counts and query times of the tables

log_parquet table:

2: jdbc:hive2://localhost:10000> select count(*) from log_parquet;

+---------+--+

| _c0 |

+---------+--+

| 100000 |

+---------+--+

1 row selected (46.892 seconds)

2: jdbc:hive2://localhost:10000>

100,000 rows; the query took 46.9 s.

log_orc table:

2: jdbc:hive2://localhost:10000> select count(*) from log_orc;

+---------+--+

| _c0 |

+---------+--+

| 100000 |

+---------+--+

1 row selected (39.835 seconds)

100,000 rows; the query took 39.8 s.

log_text table:

0: jdbc:hive2://localhost:10000> select count(*) from log_text;

+---------+--+

| _c0 |

+---------+--+

| 100000 |

+---------+--+

1 row selected (38.502 seconds)

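100,000 rows; the query took 38.5 s.

Among these formats, ORC is the recommended choice for tuning: it takes by far the least storage (2.8 M, versus 18.1 M for TEXTFILE and 13.1 M for Parquet), while on a table this small the count(*) times differ by at most about 8 s.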
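The same kind of arithmetic applied to the three count(*) timings shows how close they are. The values are copied from the beeline output above; with only one run per table, the differences are indicative at best.

```python
# count(*) wall-clock times from the beeline output above, in seconds.
TIMES_S = {"PARQUET": 46.892, "ORC": 39.835, "TEXTFILE": 38.502}


def gaps_from_fastest(times: dict) -> tuple:
    """Return the fastest format and each format's extra seconds over it."""
    fastest = min(times, key=times.get)
    return fastest, {fmt: t - times[fastest] for fmt, t in times.items()}


fastest, gaps = gaps_from_fastest(TIMES_S)
print("fastest:", fastest)
for fmt, gap in gaps.items():
    print(f"{fmt:9} +{gap:.1f} s")
```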

9.3 Combining ORC storage with compression (loading from the TEXTFILE table)

1. Create an uncompressed ORC table

(1) Create the table

0: jdbc:hive2://localhost:10000> create table log_orc_none(

0: jdbc:hive2://localhost:10000> track_time string,

0: jdbc:hive2://localhost:10000> url string,

0: jdbc:hive2://localhost:10000> session_id string,

0: jdbc:hive2://localhost:10000> referer string,

0: jdbc:hive2://localhost:10000> ip string,

0: jdbc:hive2://localhost:10000> end_user_id string,

0: jdbc:hive2://localhost:10000> city_id string

0: jdbc:hive2://localhost:10000> )

0: jdbc:hive2://localhost:10000> row format delimited fields terminated by '\t'

0: jdbc:hive2://localhost:10000> stored as orc tblproperties ("orc.compress"="NONE");

(2) Insert the data

hive (default)> insert into table log_orc_none select * from log_text ;

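(3) Check the data size after the insert (the INSERT was run twice here, so there are two files of 7.7 M each)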

0: jdbc:hive2://localhost:10000> dfs -du -h /user/hive/warehouse/log_orc_none/ ;

+-----------------------------------------------------------+--+

| DFS Output |

+-----------------------------------------------------------+--+

| 7.7 M /user/hive/warehouse/log_orc_none/000000_0 |

| 7.7 M /user/hive/warehouse/log_orc_none/000000_0_copy_1 |

+-----------------------------------------------------------+--+

2 rows selected (0.055 seconds)

0: jdbc:hive2://localhost:10000>

2. Create a Snappy-compressed ORC table

(1) Create the table

0: jdbc:hive2://localhost:10000> create table log_orc_snappy(

0: jdbc:hive2://localhost:10000> track_time string,

0: jdbc:hive2://localhost:10000> url string,

0: jdbc:hive2://localhost:10000> session_id string,

0: jdbc:hive2://localhost:10000> referer string,

0: jdbc:hive2://localhost:10000> ip string,

0: jdbc:hive2://localhost:10000> end_user_id string,

0: jdbc:hive2://localhost:10000> city_id string

0: jdbc:hive2://localhost:10000> )

0: jdbc:hive2://localhost:10000> row format delimited fields terminated by '\t'

0: jdbc:hive2://localhost:10000> stored as orc tblproperties ("orc.compress"="SNAPPY");

No rows affected (0.257 seconds)

0: jdbc:hive2://localhost:10000>

(2) Insert the data

0: jdbc:hive2://localhost:10000> insert into table log_orc_snappy select * from log_text ;

(3) Check the data size after the insert

3. The ORC table created with default settings (log_orc above) has this size after loading:

2.8 M /user/hive/warehouse/log_orc/000000_0

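This is even smaller than the Snappy-compressed table, because ORC files use ZLIB compression by default, and ZLIB compresses more tightly than Snappy.

4. Summary of storage formats and compression

In real projects, Hive tables are usually stored as ORC or Parquet and compressed with Snappy or LZO.

5. Testing storage and compression

Official documentation: https://cwiki.apache.org/confluence/display/Hive/LanguageManual+ORC

Compression options for ORC storage: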