1. Hadoop cluster configuration. The following files need to be configured:

hadoop-env.sh

core-site.xml

hdfs-site.xml

mapred-site.xml.template (first copy mapred-site.xml.template to a new file named mapred-site.xml)

yarn-site.xml

Steps:

(1) Go to the hadoop-2.7.2/etc/hadoop directory and edit hadoop-env.sh.

hadoop-env.sh configuration:

[root@hadoop105 hadoop]# vim hadoop-env.sh

# The java implementation to use.

export JAVA_HOME=/usr/local/java/module/jdk1.8
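A wrong JAVA_HOME is a common reason for the Hadoop daemons failing to start, so it can help to verify the path before saving hadoop-env.sh. The following is a minimal sketch of such a check; the function name check_java_home is hypothetical and not part of Hadoop, and the path is simply the one used above.

```shell
#!/bin/sh
# Hypothetical helper (not part of Hadoop): verify that a JAVA_HOME
# candidate contains an executable bin/java.
check_java_home() {
  # $1 = candidate JAVA_HOME directory
  if [ -x "$1/bin/java" ]; then
    echo "OK: $1/bin/java is executable"
  else
    echo "ERROR: $1/bin/java not found or not executable" >&2
    return 1
  fi
}

# Path taken from the hadoop-env.sh setting above; adjust to the local install.
check_java_home "/usr/local/java/module/jdk1.8" || true
```

If the check fails, fix the path in hadoop-env.sh before going on to the next step.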

(2) Go to the hadoop-2.7.2/etc/hadoop directory and edit core-site.xml.

core-site.xml configuration:

[root@hadoop105 hadoop]# vim core-site.xml

<configuration>

<property>

<name>fs.defaultFS</name>

<value>hdfs://hadoop105:9000</value>

</property>

<property>

<name>hadoop.tmp.dir</name>

<value>/usr/local/hadoop/module/hadoop-2.7.2/tmp</value>

</property>

</configuration>

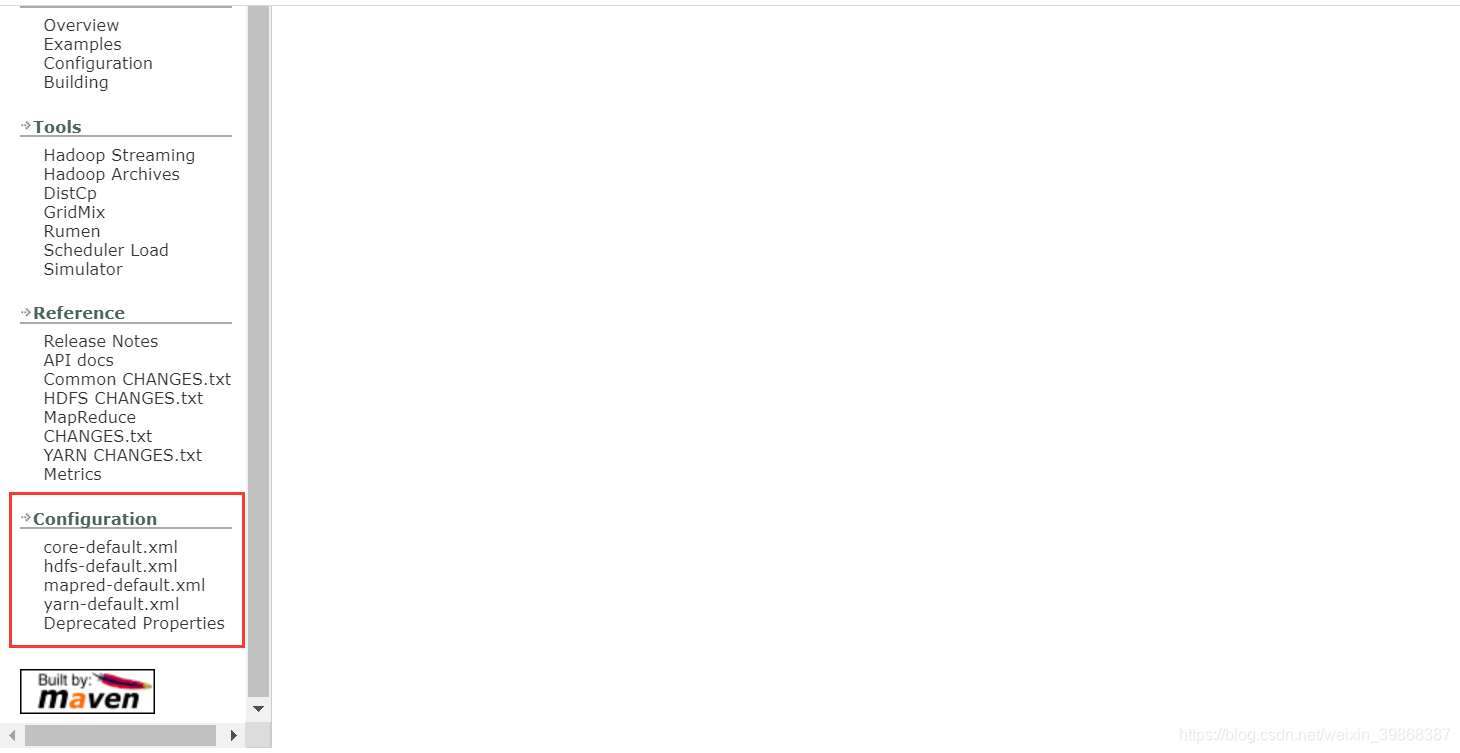

For configuration details, see the official documentation: http://hadoop.apache.org/docs/r2.7.2/
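To double-check what actually ended up in a site file, a property value can be read back from it. The sketch below assumes the one-tag-per-line layout used in core-site.xml above; get_prop is a hypothetical helper, not a Hadoop tool, and the temporary file only stands in for the real core-site.xml. On a node where Hadoop is already on the PATH, hdfs getconf -confKey fs.defaultFS is the more robust way to ask the same question.

```shell
#!/bin/sh
# Hypothetical helper: print the <value> that follows a given <name>
# in a Hadoop *-site.xml file (assumes <name> appears before <value>).
get_prop() {
  # $1 = path to the XML file, $2 = property name
  awk -v want="$2" '
    /<name>/  { n = $0; sub(/.*<name>/, "", n);  sub(/<\/name>.*/, "", n) }
    /<value>/ { v = $0; sub(/.*<value>/, "", v); sub(/<\/value>.*/, "", v)
                if (n == want) { print v; exit } }
  ' "$1"
}

# Demo on a temporary copy of the configuration shown above.
conf="$(mktemp)"
cat > "$conf" <<'EOF'
<configuration>
<property>
<name>fs.defaultFS</name>
<value>hdfs://hadoop105:9000</value>
</property>
<property>
<name>hadoop.tmp.dir</name>
<value>/usr/local/hadoop/module/hadoop-2.7.2/tmp</value>
</property>
</configuration>
EOF

get_prop "$conf" fs.defaultFS
get_prop "$conf" hadoop.tmp.dir
rm -f "$conf"
```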

(3) Go to the hadoop-2.7.2/etc/hadoop directory and edit hdfs-site.xml.

hdfs-site.xml configuration:

[root@hadoop105 hadoop]# vim hdfs-site.xml

<configuration>

<property>

<name>dfs.replication</name>

<value>2</value>

</property>

<!-- Host and port of the Hadoop SecondaryNameNode HTTP server -->

<property>

<name>dfs.namenode.secondary.http-address</name>

<value>hadoop105:50090</value>

</property>

</configuration>


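The value 2 is the replication factor: each block is stored as 2 copies so that the data survives a machine failure. The default is 3.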
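The replication factor also determines how much raw disk the cluster needs: roughly the logical data size multiplied by dfs.replication. A small illustrative calculation follows; the 100 GB figure is made up for the example and raw_usage_gb is a hypothetical helper.

```shell
#!/bin/sh
# Illustrative arithmetic only: raw disk usage is roughly
# logical data size multiplied by the replication factor.
raw_usage_gb() {
  # $1 = logical data size in GB, $2 = dfs.replication
  echo $(( $1 * $2 ))
}

echo "replication=2: $(raw_usage_gb 100 2) GB raw"
echo "replication=3: $(raw_usage_gb 100 3) GB raw"
```

With the setting used here, 100 GB of files takes about 200 GB across the DataNodes, compared with about 300 GB at the default of 3.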

(4) Go to the hadoop-2.7.2/etc/hadoop directory, copy mapred-site.xml.template to a new file named mapred-site.xml, and edit the copy.

mapred-site.xml configuration:

[root@hadoop105 hadoop]# cp mapred-site.xml.template mapred-site.xml

[root@hadoop105 hadoop]# vim mapred-site.xml

<configuration>

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

</configuration>

(5) Go to the hadoop-2.7.2/etc/hadoop directory and edit yarn-site.xml.

[root@hadoop105 hadoop]# vim yarn-site.xml

<configuration>

<!-- Site specific YARN configuration properties -->

<property>

<name>yarn.resourcemanager.hostname</name>

<value>hadoop105</value>

</property>

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

</configuration>

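The fully distributed Hadoop configuration is now complete.

The next step is to distribute it to the other machines.

Steps:

(1) Copy the configured Hadoop directory from the first machine (hadoop105) to the other machines (the second machine, hadoop106, and the third machine, hadoop107) by running the following commands: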

[root@hadoop105 module]# scp -r hadoop-2.7.2/ hadoop106:/usr/local/hadoop/module/

[root@hadoop105 module]# scp -r hadoop-2.7.2/ hadoop107:/usr/local/hadoop/module/
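With more than a couple of workers, the two scp commands above are easier to keep consistent as a loop. The sketch below is one way to write it; distribute is a hypothetical helper, and it runs in dry-run mode here (it only prints the commands) so that nothing is copied by accident.

```shell
#!/bin/sh
# Source directory (relative to /usr/local/hadoop/module) and destination,
# both taken from the scp commands above.
HADOOP_DIR="hadoop-2.7.2/"
DEST="/usr/local/hadoop/module/"

# Hypothetical helper: copy the Hadoop directory to each target host.
distribute() {
  # $1 = "echo" for a dry run, or "" to really run scp
  # remaining arguments = target hostnames
  run="$1"; shift
  for host in "$@"; do
    $run scp -r "$HADOOP_DIR" "${host}:${DEST}"
  done
}

# Dry run: prints one scp command per host.
distribute echo hadoop106 hadoop107
```

Calling distribute "" hadoop106 hadoop107 from /usr/local/hadoop/module would perform the real copy.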

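Distribution complete!

Next, the NameNode needs to be formatted.

Steps:

Format (in Hadoop 2.x, hadoop namenode -format still works but is deprecated in favor of hdfs namenode -format):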

[root@hadoop105 module]# hadoop namenode -format

Check that formatting succeeded by looking for this line in the output:

20/01/14 13:17:30 INFO common.Storage: Storage directory /usr/local/hadoop/module/hadoop-2.7.2/tmp/dfs/name has been successfully formatted.
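The format command prints a lot of output, so the success line is easy to miss. A minimal sketch of an automated check follows; format_succeeded is a hypothetical helper, and the sample text is the log line quoted above.

```shell
#!/bin/sh
# Hypothetical helper: read command output on stdin and succeed only if
# the NameNode "successfully formatted" marker is present.
format_succeeded() {
  grep -q "has been successfully formatted"
}

# Demo with the log line shown above.
sample='20/01/14 13:17:30 INFO common.Storage: Storage directory /usr/local/hadoop/module/hadoop-2.7.2/tmp/dfs/name has been successfully formatted.'

if printf '%s\n' "$sample" | format_succeeded; then
  echo "format OK"
else
  echo "format FAILED"
fi

# On a real node the output could be captured first, for example:
#   hadoop namenode -format > format.log 2>&1
#   format_succeeded < format.log && echo "format OK"
```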

Start the NameNode

[root@hadoop105 sbin]# ls

distribute-exclude.sh hdfs-config.sh refresh-namenodes.sh start-balancer.sh start-yarn.cmd stop-balancer.sh stop-yarn.cmd

hadoop-daemon.sh httpfs.sh slaves.sh start-dfs.cmd start-yarn.sh stop-dfs.cmd stop-yarn.sh

hadoop-daemons.sh kms.sh start-all.cmd start-dfs.sh stop-all.cmd stop-dfs.sh yarn-daemon.sh

hdfs-config.cmd mr-jobhistory-daemon.sh start-all.sh start-secure-dns.sh stop-all.sh stop-secure-dns.sh yarn-daemons.sh

[root@hadoop105 sbin]# hadoop-daemon.sh start namenode

starting namenode, logging to /usr/local/hadoop/module/hadoop-2.7.2/logs/hadoop-root-namenode-hadoop105.out

Check that the NameNode process is running

[root@hadoop105 sbin]# jps

11056 NameNode

11123 Jps

[root@hadoop105 sbin]#
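The same jps check can be scripted, which is handy once several daemons run on each node. This is a sketch under the assumption that jps prints "PID ClassName" per line, as in the output above; has_daemon is a hypothetical helper.

```shell
#!/bin/sh
# Hypothetical helper: read jps output on stdin and succeed if a daemon
# with exactly the given name is listed. Matching the whole second field
# keeps "NameNode" from matching "SecondaryNameNode".
has_daemon() {
  # $1 = daemon name as printed by jps, e.g. NameNode
  awk -v name="$1" '$2 == name { found = 1 } END { exit !found }'
}

# Demo with the jps output shown above.
sample_jps='11056 NameNode
11123 Jps'

for daemon in NameNode DataNode; do
  if printf '%s\n' "$sample_jps" | has_daemon "$daemon"; then
    echo "$daemon: running"
  else
    echo "$daemon: not running"
  fi
done

# On a real node: jps | has_daemon NameNode
```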

Before accessing the web UI, stop the firewall:

[root@hadoop105 sbin]# systemctl stop firewalld.service

[root@hadoop105 sbin]#

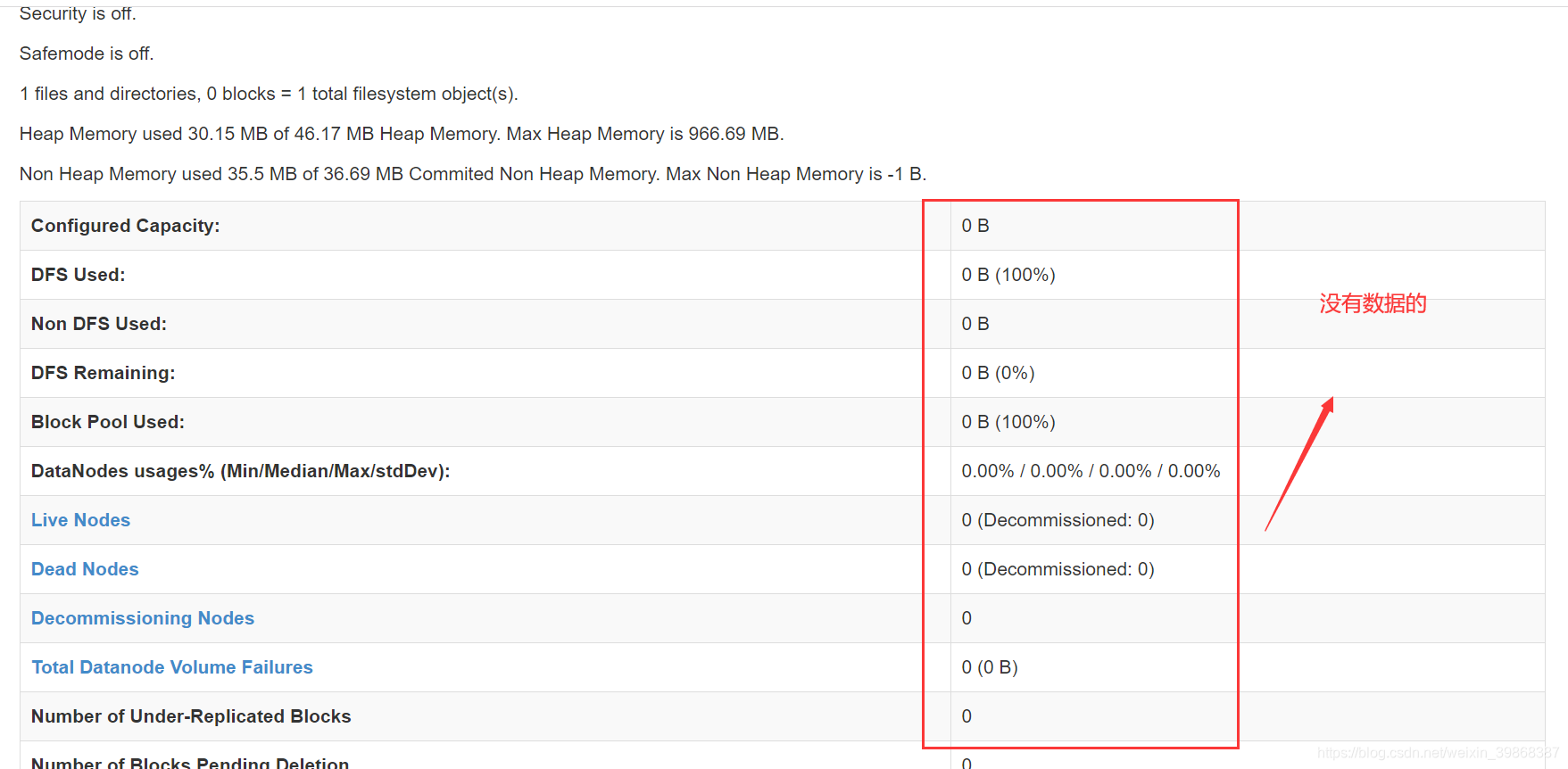

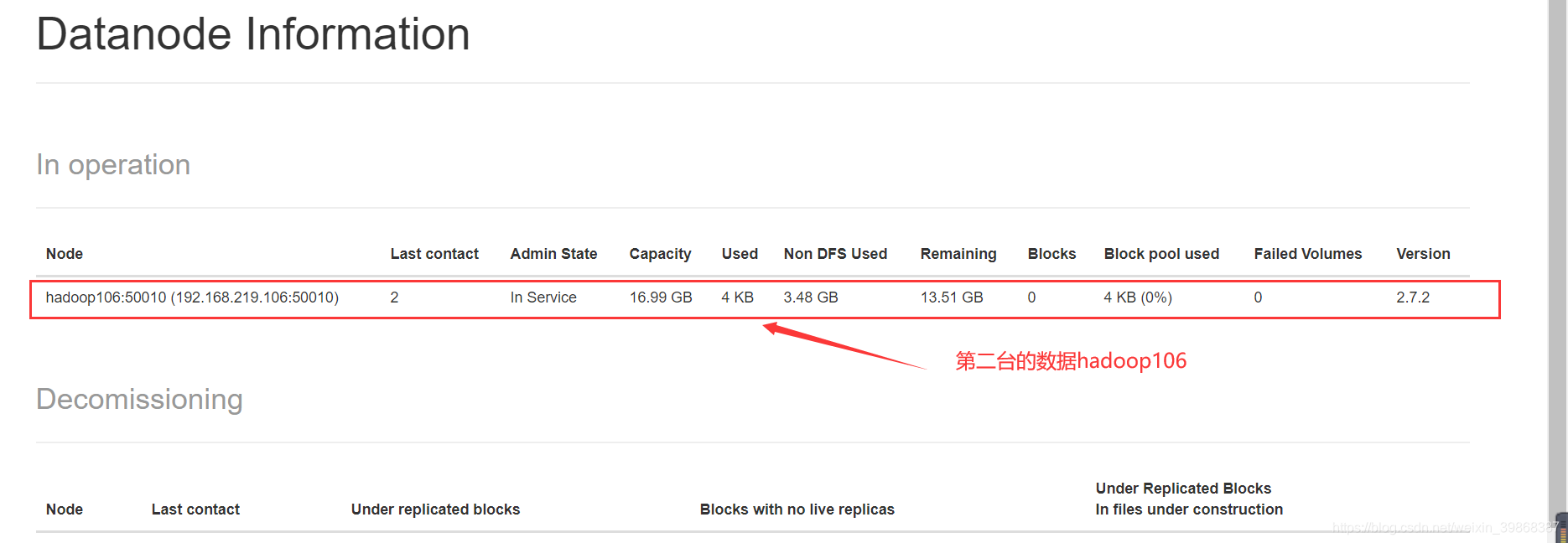

Start the DataNode on the second machine, hadoop106

[root@hadoop106 sbin]# source /etc/profile

[root@hadoop106 sbin]# hadoop-daemon.sh start datanode

starting datanode, logging to /usr/local/hadoop/module/hadoop-2.7.2/logs/hadoop-root-datanode-hadoop106.out

[root@hadoop106 sbin]#

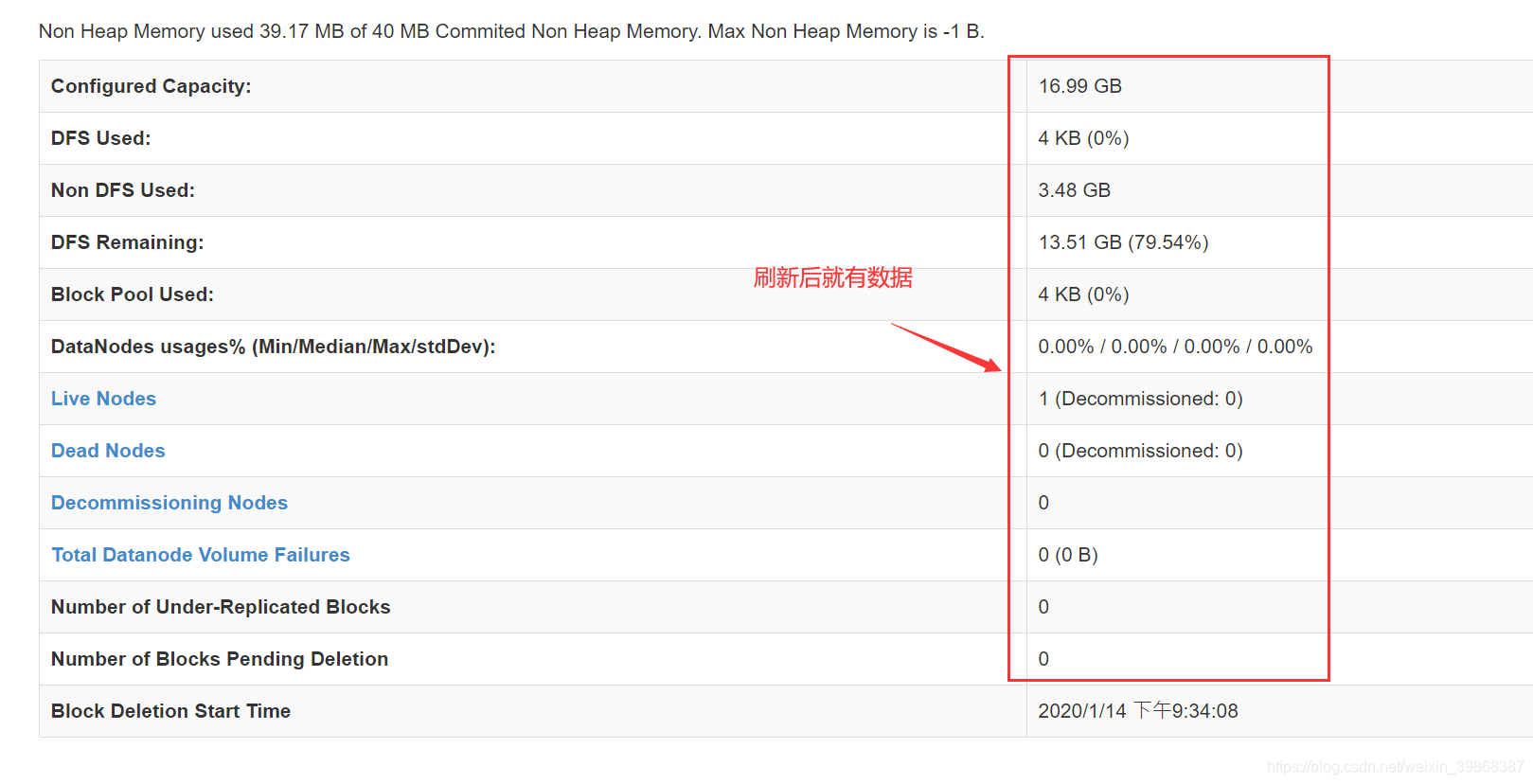

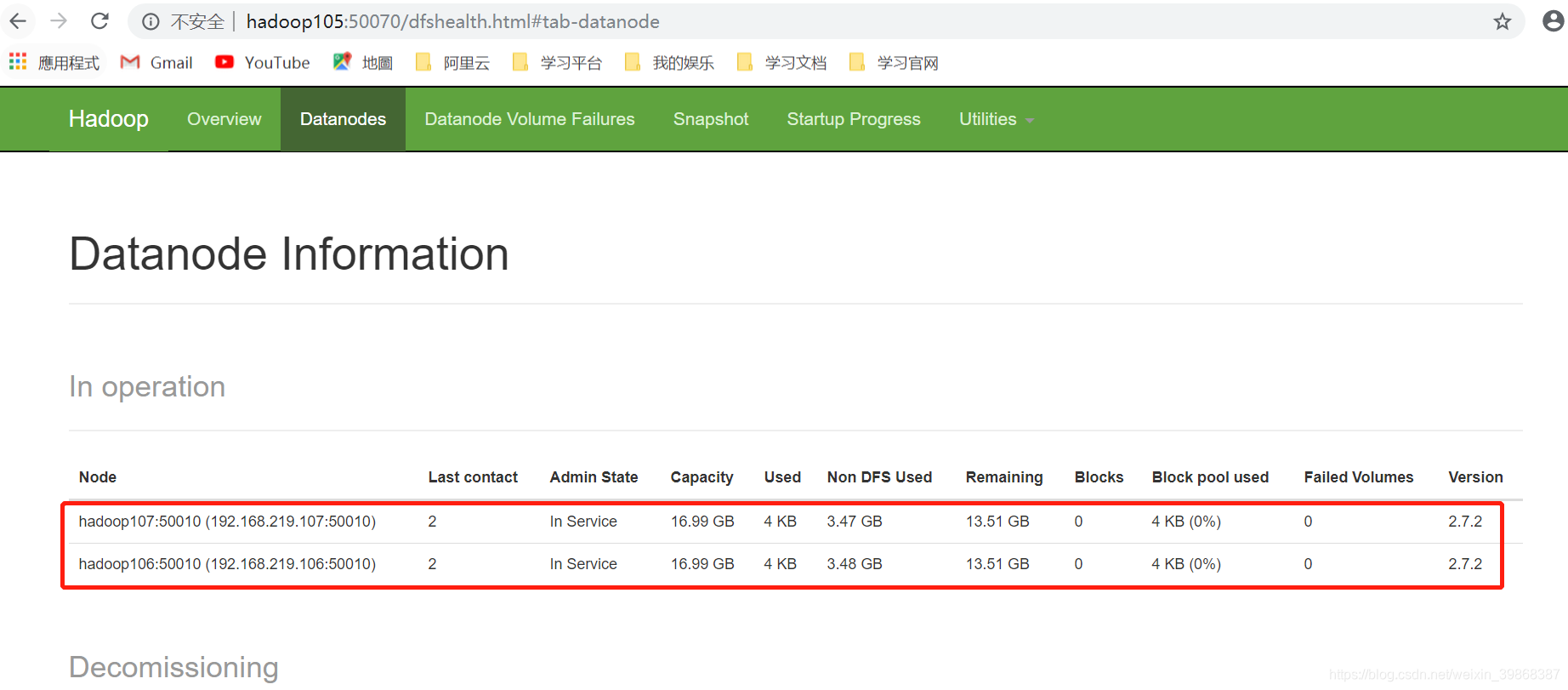

After refreshing the page, the new DataNode's data appears.

Next, start the DataNode on the third machine

[root@hadoop107 sbin]# hadoop-daemon.sh start datanode

starting datanode, logging to /usr/local/hadoop/module/hadoop-2.7.2/logs/hadoop-root-datanode-hadoop107.out

Refresh the page again.

Next, the slaves file also needs to be configured, in the hadoop-2.7.2/etc/hadoop directory:

[root@hadoop105 hadoop]# pwd

/usr/local/hadoop/module/hadoop-2.7.2/etc/hadoop

[root@hadoop105 hadoop]# vim slaves

hadoop105

hadoop106

hadoop107
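The start-dfs.sh and start-yarn.sh scripts read this file and start the worker daemons on each listed host over SSH, so it should contain exactly one hostname per line. A small sketch for generating it follows; write_slaves is a hypothetical helper, and the demo writes to a temporary file rather than to the real slaves file.

```shell
#!/bin/sh
# Hypothetical helper: write one worker hostname per line to a file.
write_slaves() {
  # $1 = output file; remaining arguments = worker hostnames
  out="$1"; shift
  printf '%s\n' "$@" > "$out"
}

# Demo: generate the three-node list used above into a temporary file.
tmp_slaves="$(mktemp)"
write_slaves "$tmp_slaves" hadoop105 hadoop106 hadoop107
cat "$tmp_slaves"
rm -f "$tmp_slaves"
```

On the real node the target would be /usr/local/hadoop/module/hadoop-2.7.2/etc/hadoop/slaves.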

Command to start HDFS on hadoop105, hadoop106, and hadoop107:

[root@hadoop105 hadoop]# start-dfs.sh

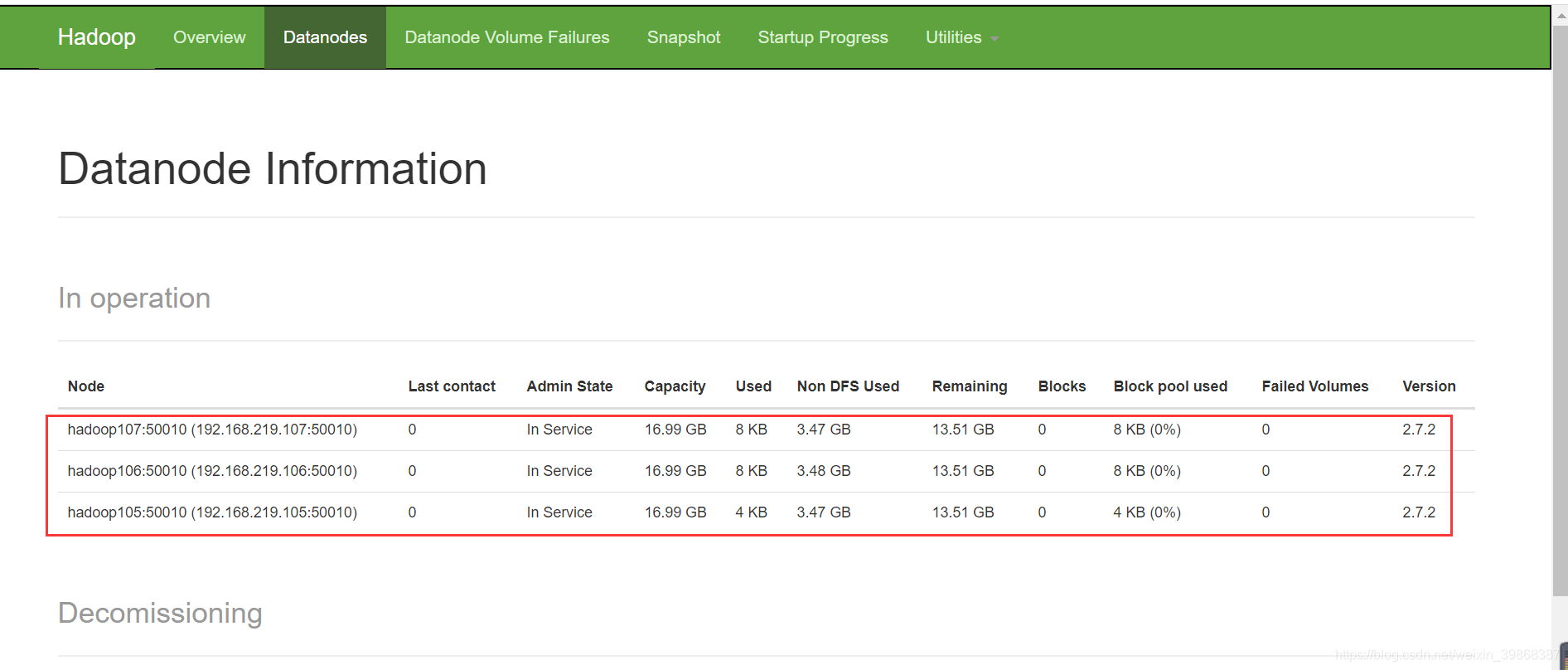

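After refreshing the page.

To access port 8088, yarn-site.xml also needs additional configuration.

Steps:

(1) Go to the hadoop-2.7.2/etc/hadoop directory and edit the yarn-site.xml configuration file.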

[root@hadoop105 hadoop]# vim yarn-site.xml

<configuration>

<!-- Site specific YARN configuration properties -->

<!-- Hostname of the YARN ResourceManager -->

<property>

<name>yarn.resourcemanager.hostname</name>

<value>hadoop105</value>

</property>

<!-- How reducers fetch map output (the shuffle service) -->

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

<property>

<name>yarn.resourcemanager.address</name>

<value>hadoop105:8032</value>

</property>

<property>

<name>yarn.resourcemanager.scheduler.address</name>

<value>hadoop105:8030</value>

</property>

<property>

<name>yarn.resourcemanager.resource-tracker.address</name>

<value>hadoop105:8031</value>

</property>

<property>

<name>yarn.resourcemanager.admin.address</name>

<value>hadoop105:8033</value>

</property>

<property>

<name>yarn.resourcemanager.webapp.address</name>

<value>hadoop105:8088</value>

</property>

</configuration>
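The five address properties all follow the same pattern: the ResourceManager hostname plus a fixed port. As far as I can tell from yarn-default.xml for Hadoop 2.7.x, these are also the default values derived from yarn.resourcemanager.hostname, so spelling them out mainly makes the binding explicit. The sketch below generates the same blocks from a single hostname; emit_property and emit_rm_addresses are hypothetical helpers.

```shell
#!/bin/sh
# Hypothetical helper: print one <property> block.
emit_property() {
  # $1 = property name, $2 = property value
  printf '<property>\n<name>%s</name>\n<value>%s</value>\n</property>\n' "$1" "$2"
}

# Hypothetical helper: print the five ResourceManager address properties
# for a given host, using the ports from the configuration above.
emit_rm_addresses() {
  # $1 = ResourceManager hostname
  rm_host="$1"
  while read -r suffix port; do
    emit_property "yarn.resourcemanager.${suffix}" "${rm_host}:${port}"
  done <<EOF
address 8032
scheduler.address 8030
resource-tracker.address 8031
admin.address 8033
webapp.address 8088
EOF
}

emit_rm_addresses hadoop105
```

The output can be pasted between the configuration tags of yarn-site.xml; changing the ResourceManager host then means changing one argument instead of five values.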

Stop the YARN services

[root@hadoop105 hadoop-2.7.2]# stop-yarn.sh

Restart the YARN services

[root@hadoop105 hadoop-2.7.2]# start-yarn.sh

starting yarn daemons

starting resourcemanager, logging to /usr/local/hadoop/module/hadoop-2.7.2/logs/yarn-root-resourcemanager-hadoop105.out

hadoop107: starting nodemanager, logging to /usr/local/hadoop/module/hadoop-2.7.2/logs/yarn-root-nodemanager-hadoop107.out

hadoop106: starting nodemanager, logging to /usr/local/hadoop/module/hadoop-2.7.2/logs/yarn-root-nodemanager-hadoop106.out

hadoop105: starting nodemanager, logging to /usr/local/hadoop/module/hadoop-2.7.2/logs/yarn-root-nodemanager-hadoop105.out

Check the listening ports and the processes that own them

[root@hadoop105 hadoop-2.7.2]# netstat -tpnl | grep java

tcp 0 0 192.168.219.105:9000 0.0.0.0:* LISTEN 11056/java

tcp 0 0 192.168.219.105:50090 0.0.0.0:* LISTEN 11537/java

tcp 0 0 127.0.0.1:41485 0.0.0.0:* LISTEN 11385/java

tcp 0 0 0.0.0.0:50070 0.0.0.0:* LISTEN 11056/java

tcp 0 0 0.0.0.0:50010 0.0.0.0:* LISTEN 11385/java

tcp 0 0 0.0.0.0:50075 0.0.0.0:* LISTEN 11385/java

tcp 0 0 0.0.0.0:50020 0.0.0.0:* LISTEN 11385/java

tcp6 0 0 :::8040 :::* LISTEN 12612/java

tcp6 0 0 :::8042 :::* LISTEN 12612/java

tcp6 0 0 192.168.219.105:8088 :::* LISTEN 12510/java

tcp6 0 0 :::13562 :::* LISTEN 12612/java

tcp6 0 0 192.168.219.105:8030 :::* LISTEN 12510/java

tcp6 0 0 192.168.219.105:8031 :::* LISTEN 12510/java

tcp6 0 0 192.168.219.105:8032 :::* LISTEN 12510/java

tcp6 0 0 192.168.219.105:8033 :::* LISTEN 12510/java

tcp6 0 0 :::44898 :::* LISTEN 12612/java
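Rather than scanning the list by eye, the ports of interest can be checked one by one. A minimal sketch follows, assuming the netstat -tpnl column layout shown above (local address in the fourth column, state in the sixth); port_listening is a hypothetical helper, the sample lines are copied from the output above, and 12345 is an arbitrary port used to show the negative case.

```shell
#!/bin/sh
# Hypothetical helper: read netstat -tpnl output on stdin and succeed if
# some socket is in LISTEN state on the given port. The port is taken from
# the end of the local-address column, so 8088 does not match 18088.
port_listening() {
  # $1 = TCP port number
  awk -v port="$1" '
    $6 == "LISTEN" { n = split($4, a, ":"); if (a[n] == port) found = 1 }
    END { exit !found }'
}

# Demo with a few lines from the output above.
sample_netstat='tcp 0 0 192.168.219.105:9000 0.0.0.0:* LISTEN 11056/java
tcp 0 0 0.0.0.0:50070 0.0.0.0:* LISTEN 11056/java
tcp6 0 0 192.168.219.105:8088 :::* LISTEN 12510/java
tcp6 0 0 :::8042 :::* LISTEN 12612/java'

for port in 9000 50070 8088 8042 12345; do
  if printf '%s\n' "$sample_netstat" | port_listening "$port"; then
    echo "port $port: listening"
  else
    echo "port $port: NOT listening"
  fi
done

# On a real node: netstat -tpnl | port_listening 8088
```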

Stop the firewall

systemctl stop firewalld.service

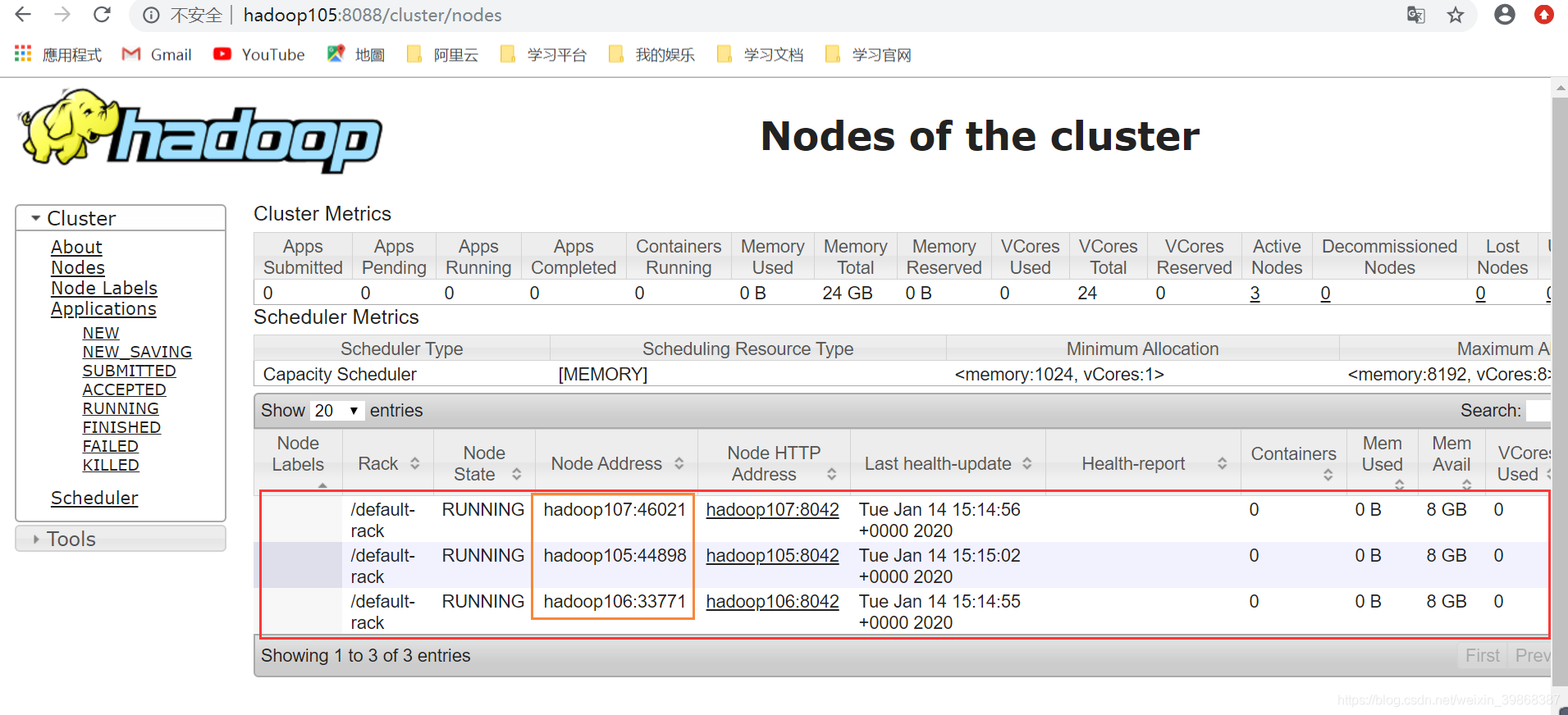

Access: http://hadoop105:8088

The fully distributed Hadoop cluster is up and running!