启动Spark-shell:

[root@node1 ~]# spark-shell Setting default log level to "WARN". To adjust logging level use sc.setLogLevel(newLevel). Welcome to ____ __ / __/__ ___ _____/ /__ _\ \/ _ \/ _ `/ __/ '_/ /___/ .__/\_,_/_/ /_/\_\ version 1.6.0 /_/ Using Scala version 2.10.5 (Java HotSpot(TM) 64-Bit Server VM, Java 1.8.0_131) Type in expressions to have them evaluated. Type :help for more information. Spark context available as sc (master = yarn-client, app id = application_1554951897984_0111). SQL context available as sqlContext. scala> sc res0: org.apache.spark.SparkContext = org.apache.spark.SparkContext@272485a6 scala> sqlContext res1: org.apache.spark.sql.SQLContext = org.apache.spark.sql.hive.HiveContext@11c95035

上下文已经包含 sc 和 sqlContext:

Spark context available as sc (master = yarn-client, app id = application_1554951897984_0111).

SQL context available as sqlContext.

本地创建people07041119.json

{"name":"zhangsan","job number":"101","age":33,"gender":"male","deptno":1,"sal":18000}

{"name":"lisi","job number":"102","age":30,"gender":"male","deptno":2,"sal":20000}

{"name":"wangwu","job number":"103","age":35,"gender":"female","deptno":3,"sal":50000}

{"name":"zhaoliu","job number":"104","age":31,"gender":"male","deptno":1,"sal":28000}

{"name":"tianqi","job number":"105","age":36,"gender":"female","deptno":3,"sal":90000}

本地创建dept.json

{"name":"development","deptno":1}

{"name":"personnel","deptno":2}

{"name":"testing","deptno":3}

将本地文件上传到HDFS上:

bash-4.2$ hadoop dfs -put /home/**/data/people07041119.json /user/** bash-4.2$ hadoop dfs -put /home/**/data/dept.json /user/**

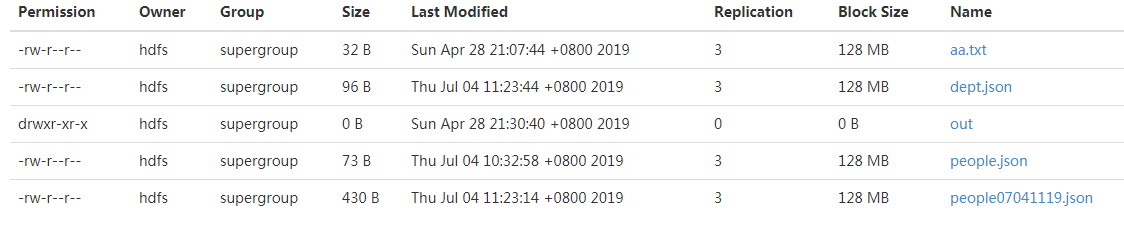

结果如下:

执行Scala脚本,加载文件:

scala> val people=sqlContext.jsonFile("/user/**/people07041119.json") warning: there were 1 deprecation warning(s); re-run with -deprecation for details people: org.apache.spark.sql.DataFrame = [age: bigint, deptno: bigint, gender: string, job number: string, name: string, sal: bigint] scala> val dept=sqlContext.jsonFile("/user/**/dept.json") warning: there were 1 deprecation warning(s); re-run with -deprecation for details people: org.apache.spark.sql.DataFrame = [deptno: bigint, name: string]

执行Scala脚本,查看文件内容:

scala> people.show +---+------+------+----------+--------+-----+ |age|deptno|gender|job number| name| sal| +---+------+------+----------+--------+-----+ | 33| 1| male| 101|zhangsan|18000| | 30| 2| male| 102| lisi|20000| | 35| 3|female| 103| wangwu|50000| | 31| 1| male| 104| zhaoliu|28000| | 36| 3|female| 105| tianqi|90000| +---+------+------+----------+--------+-----+

显示前三条记录:

scala> people.show(3) +---+------+------+----------+--------+-----+ |age|deptno|gender|job number| name| sal| +---+------+------+----------+--------+-----+ | 33| 1| male| 101|zhangsan|18000| | 30| 2| male| 102| lisi|20000| | 35| 3|female| 103| wangwu|50000| +---+------+------+----------+--------+-----+ only showing top 3 rows

查看列信息:

scala> people.columns

res5: Array[String] = Array(age, deptno, gender, job number, name, sal)

添加过滤条件:

scala> people.filter("gender='male'").count res6: Long = 3

参考: