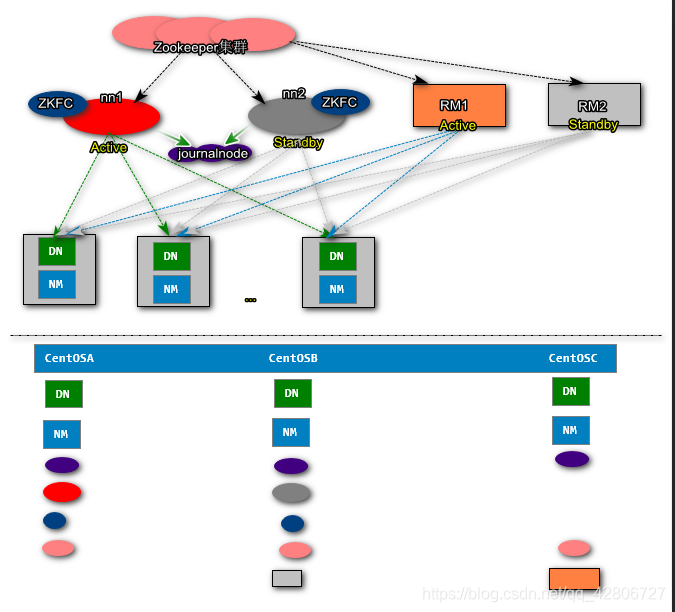

HDFS|YARN HA

Environment preparation

- CentOS-6.5 64 bit

- jdk-8u171-linux-x64.rpm

- hadoop-2.6.0_x64.tar.gz

- zookeeper-3.4.6.tar.gz

Install the CentOS hosts (physical nodes)

| CentOSA | CentOSB | CentOSC |
|---|---|---|
| 192.168.29.129 | 192.168.29.130 | 192.168.29.131 |

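Basic configuration

In the shell prompts below, CentOSX means the command is run on every node (CentOSA, CentOSB and CentOSC); a specific host name means that node only.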

- Hostname-to-IP mapping

[root@CentOSX ~]# clear

[root@CentOSX ~]# vi /etc/hosts

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

192.168.29.129 CentOSA

192.168.29.130 CentOSB

192.168.29.131 CentOSC
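To make the same edit from a script instead of vi, here is a minimal sketch that appends a mapping only if the hostname is not already listed, so rerunning it does not create duplicates. `add_host_entry` is a hypothetical helper, not a system command; the file is a parameter so it can be tried on a copy before touching /etc/hosts.

```shell
#!/usr/bin/env bash
# add_host_entry FILE IP NAME  (hypothetical helper)
# Appends "IP NAME" to FILE unless NAME already appears as a hostname
# (any field after the address) on a non-comment line.
add_host_entry() {
  local file=$1 ip=$2 name=$3
  if awk -v n="$name" '
      $1 !~ /^#/ { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
      END { exit !found }' "$file"; then
    return 0    # already mapped: leave the file untouched
  fi
  printf '%s %s\n' "$ip" "$name" >> "$file"
}

# Usage, as root on every node:
#   add_host_entry /etc/hosts 192.168.29.129 CentOSA
#   add_host_entry /etc/hosts 192.168.29.130 CentOSB
#   add_host_entry /etc/hosts 192.168.29.131 CentOSC
```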

- Disable the firewall

[root@CentOSX ~]# service iptables stop

iptables: Setting chains to policy ACCEPT: filter [ OK ]

iptables: Flushing firewall rules: [ OK ]

iptables: Unloading modules: [ OK ]

[root@CentOSX ~]# chkconfig iptables off

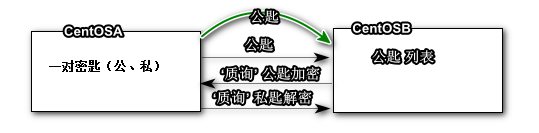

- Passwordless SSH login

[root@CentOSX ~]# ssh-keygen -t rsa

[root@CentOSX ~]# ssh-copy-id CentOSA

[root@CentOSX ~]# ssh-copy-id CentOSB

[root@CentOSX ~]# ssh-copy-id CentOSC
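Before moving on, it helps to confirm that every node can log in to every other one without a password, since start-dfs.sh and the sshfence fencing method configured later both rely on it. The sketch assumes the host keys were already accepted while running ssh-copy-id; with BatchMode=yes, ssh fails instead of prompting, so any host that still wants a password shows up as FAIL. `check_ssh` is a hypothetical helper, and the SSH variable exists only so a stub can replace ssh when testing.

```shell
#!/usr/bin/env bash
# check_ssh HOST...  (hypothetical helper)
# Tries a non-interactive login to each host and prints OK/FAIL per host.
# Returns non-zero if any host failed.
check_ssh() {
  local h rc=0
  for h in "$@"; do
    # ${SSH:-ssh} is left unquoted on purpose so a stub command can be used.
    if ${SSH:-ssh} -o BatchMode=yes -o ConnectTimeout=5 "$h" true; then
      echo "OK   $h"
    else
      echo "FAIL $h"
      rc=1
    fi
  done
  return $rc
}

# Usage, on each node after ssh-copy-id:
#   check_ssh CentOSA CentOSB CentOSC
```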

- Synchronize the clocks of all physical nodes (run the same commands on every node; CentOSA is shown)

[root@CentOSA ~]# date -s '2018-09-16 11:28:00'

Sun Sep 16 11:28:00 CST 2018

[root@CentOSA ~]# clock -w

[root@CentOSA ~]# date

Sun Sep 16 11:28:13 CST 2018
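Setting the date by hand on each node leaves the clocks a few seconds apart at best. A rough way to see the spread, run from any node once passwordless SSH works, is to read `date +%s` on each host and report the largest difference. This is a sketch with a hypothetical `clock_skew` helper; because the hosts are queried one after another, the result also includes the ssh round-trip time and is only approximate.

```shell
#!/usr/bin/env bash
# clock_skew HOST...  (hypothetical helper)
# Prints max(epoch seconds) - min(epoch seconds) across the given hosts.
# Sequential ssh calls add their own latency to the measured spread.
clock_skew() {
  local h t min= max=
  for h in "$@"; do
    t=$(${SSH:-ssh} -o BatchMode=yes "$h" date +%s) || return 1
    if [ -z "$min" ] || [ "$t" -lt "$min" ]; then min=$t; fi
    if [ -z "$max" ] || [ "$t" -gt "$max" ]; then max=$t; fi
  done
  echo $((max - min))
}

# Usage:
#   clock_skew CentOSA CentOSB CentOSC
```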

Install the JDK and configure the JAVA_HOME environment variable

[root@CentOSX ~]# rpm -ivh jdk-8u171-linux-x64.rpm

[root@CentOSX ~]# vi .bashrc

JAVA_HOME=/usr/java/latest

CLASSPATH=.

PATH=$PATH:$JAVA_HOME/bin

export JAVA_HOME

export CLASSPATH

export PATH

[root@CentOSX ~]# source .bashrc

Install and start ZooKeeper

[root@CentOSX ~]# tar -zxf zookeeper-3.4.6.tar.gz -C /usr/

[root@CentOSX ~]# vi /usr/zookeeper-3.4.6/conf/zoo.cfg

tickTime=2000

dataDir=/root/zkdata

clientPort=2181

initLimit=5

syncLimit=2

server.1=CentOSA:2887:3887

server.2=CentOSB:2887:3887

server.3=CentOSC:2887:3887

[root@CentOSX ~]# mkdir /root/zkdata

[root@CentOSA ~]# echo 1 > /root/zkdata/myid

[root@CentOSB ~]# echo 2 > /root/zkdata/myid

[root@CentOSC ~]# echo 3 > /root/zkdata/myid
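Each node's myid must equal the N of the server.N line in zoo.cfg that names that node. A sketch that derives the number from zoo.cfg instead of typing it per host; `zk_myid` is a hypothetical helper, and it assumes short hostnames without dots (as in this guide) that match the output of `hostname` on each node.

```shell
#!/usr/bin/env bash
# zk_myid CFG HOST  (hypothetical helper)
# Prints N from the "server.N=HOST:port:port" line in CFG; fails if HOST is
# not listed. Splitting on '.', '=' and ':' assumes HOST contains no dots.
zk_myid() {
  awk -F'[.=:]' -v h="$2" '
    $1 == "server" && $3 == h { print $2; found = 1; exit }
    END { exit !found }' "$1"
}

# Usage, on each node:
#   id=$(zk_myid /usr/zookeeper-3.4.6/conf/zoo.cfg "$(hostname)") &&
#     echo "$id" > /root/zkdata/myid
```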

[root@CentOSX zookeeper-3.4.6]# ./bin/zkServer.sh start zoo.cfg

JMX enabled by default

Using config: /usr/zookeeper-3.4.6/bin/../conf/zoo.cfg

Starting zookeeper ... STARTED

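[root@CentOSX zookeeper-3.4.6]# ./bin/zkServer.sh status zoo.cfg

On a healthy three-node ensemble, one node reports Mode: leader and the other two report Mode: follower.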

Hadoop installation and configuration

[root@CentOSX ~]# tar -zxf hadoop-2.6.0_x64.tar.gz -C /usr/

[root@CentOSX ~]# vi .bashrc

HADOOP_HOME=/usr/hadoop-2.6.0

JAVA_HOME=/usr/java/latest

CLASSPATH=.

PATH=$PATH:$JAVA_HOME/bin:$HADOOP_HOME/bin:$HADOOP_HOME/sbin

export JAVA_HOME

export CLASSPATH

export PATH

export HADOOP_HOME

[root@CentOSX ~]# source .bashrc

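- core-site.xml (/usr/hadoop-2.6.0/etc/hadoop/core-site.xml; the properties below, like those in the other site files, go inside the file's configuration element)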

<property>

<name>fs.defaultFS</name>

<value>hdfs://mycluster</value>

</property>

<property>

<name>hadoop.tmp.dir</name>

<value>/usr/hadoop-2.6.0/hadoop-${user.name}</value>

</property>

<property>

<name>fs.trash.interval</name>

<value>30</value>

</property>

<property>

<name>net.topology.script.file.name</name>

<value>/usr/hadoop-2.6.0/etc/hadoop/rack.sh</value>

</property>

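Create the rack-awareness script. Given one or more IP addresses, it looks each one up in topology.data and prints the rack (physical location) of that machine, or /default-rack if the address is not listed.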

[root@CentOSX ~]# vi /usr/hadoop-2.6.0/etc/hadoop/rack.sh

#!/bin/bash

while [ $# -gt 0 ] ; do

nodeArg=$1

exec</usr/hadoop-2.6.0/etc/hadoop/topology.data

result=""

while read line ; do

ar=( $line )

if [ "${ar[0]}" = "$nodeArg" ] ; then

result="${ar[1]}"

fi

done

shift

if [ -z "$result" ] ; then

echo -n "/default-rack "

else

echo -n "$result "

fi

done

[root@CentOSX ~]# chmod u+x /usr/hadoop-2.6.0/etc/hadoop/rack.sh

[root@CentOSX ~]# vi /usr/hadoop-2.6.0/etc/hadoop/topology.data

192.168.29.129 /rack1

192.168.29.130 /rack1

192.168.29.131 /rack2

[root@CentOSX ~]# /usr/hadoop-2.6.0/etc/hadoop/rack.sh 192.168.29.129

/rack1
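Hadoop does not necessarily call the script once per host: it can pass several addresses in one invocation (up to net.topology.script.number.args, 100 by default) and splits the output on whitespace, which is why every rack name is printed followed by a space. The sketch below reimplements the same lookup as a function over a data file passed as a parameter, so the batched behaviour can be tried without Hadoop; `rack_lookup` is a hypothetical name, not part of Hadoop.

```shell
#!/usr/bin/env bash
# rack_lookup DATAFILE ADDR...  (hypothetical helper)
# Same logic as rack.sh: for each ADDR, print its rack from DATAFILE
# ("ADDR RACK" per line) or /default-rack, each followed by one space.
# As in rack.sh, the last matching line wins.
rack_lookup() {
  local data=$1
  shift
  local addr rack
  for addr in "$@"; do
    rack=$(awk -v a="$addr" '$1 == a { r = $2 } END { print r }' "$data")
    printf '%s ' "${rack:-/default-rack}"
  done
}

# Usage:
#   rack_lookup /usr/hadoop-2.6.0/etc/hadoop/topology.data 192.168.29.129 192.168.29.131
```

The output contains exactly one whitespace-separated token per address, which is what Hadoop expects back from the script.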

- hdfs-site.xml

<property>

<name>dfs.replication</name>

<value>3</value>

</property>

<property>

<name>dfs.ha.automatic-failover.enabled</name>

<value>true</value>

</property>

<property>

<name>ha.zookeeper.quorum</name>

<value>CentOSA:2181,CentOSB:2181,CentOSC:2181</value>

</property>

<property>

<name>dfs.nameservices</name>

<value>mycluster</value>

</property>

<property>

<name>dfs.ha.namenodes.mycluster</name>

<value>nn1,nn2</value>

</property>

<property>

<name>dfs.namenode.rpc-address.mycluster.nn1</name>

<value>CentOSA:9000</value>

</property>

<property>

<name>dfs.namenode.rpc-address.mycluster.nn2</name>

<value>CentOSB:9000</value>

</property>

<property>

<name>dfs.namenode.shared.edits.dir</name>

<value>qjournal://CentOSA:8485;CentOSB:8485;CentOSC:8485/mycluster</value>

</property>

<property>

<name>dfs.client.failover.proxy.provider.mycluster</name>

<value>org.apache.hadoop.hdfs.server.namenode.ha.ConfiguredFailoverProxyProvider</value>

</property>

<property>

<name>dfs.ha.fencing.methods</name>

<value>sshfence</value>

</property>

<property>

<name>dfs.ha.fencing.ssh.private-key-files</name>

<value>/root/.ssh/id_rsa</value>

</property>
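The logical name mycluster has to agree in three places: the authority of fs.defaultFS in core-site.xml, the dfs.nameservices value, and the suffix of the dfs.ha.namenodes.* key. On a node with Hadoop on the PATH, `hdfs getconf -confKey <key>` prints the effective value of a key. The sketch below does a comparable check straight from the two files; `get_prop` and `check_nameservice` are hypothetical helpers, not an XML parser, and they assume that each name and value element sits on its own line as in this guide.

```shell
#!/usr/bin/env bash
# get_prop FILE KEY  (hypothetical helper)
# Prints the <value> that follows <name>KEY</name> in a Hadoop site file.
# Assumes one <name> or <value> element per line.
get_prop() {
  awk -v key="$2" '
    /<name>/  { gsub(/.*<name>|<\/name>.*/, ""); name = $0; next }
    /<value>/ { if (name == key) { gsub(/.*<value>|<\/value>.*/, ""); print; exit } }
  ' "$1"
}

# check_nameservice CORE_SITE HDFS_SITE  (hypothetical helper)
# Fails unless fs.defaultFS is hdfs://NS, NS is listed in dfs.nameservices,
# and dfs.ha.namenodes.NS is set.
check_nameservice() {
  local fs ns
  fs=$(get_prop "$1" fs.defaultFS)
  ns=${fs#hdfs://}
  case ",$(get_prop "$2" dfs.nameservices)," in
    *",$ns,"*) ;;
    *) echo "fs.defaultFS=$fs does not match dfs.nameservices" >&2; return 1 ;;
  esac
  if [ -z "$(get_prop "$2" "dfs.ha.namenodes.$ns")" ]; then
    echo "dfs.ha.namenodes.$ns is not set" >&2
    return 1
  fi
  echo "OK: nameservice $ns"
}

# Usage:
#   cd /usr/hadoop-2.6.0/etc/hadoop && check_nameservice core-site.xml hdfs-site.xml
```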

- slaves

[root@CentOSX ~]# vi /usr/hadoop-2.6.0/etc/hadoop/slaves

CentOSA

CentOSB

CentOSC

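Starting HDFS (first start; the -format, -bootstrapStandby and -formatZK steps are run only once, when the cluster is first set up)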

[root@CentOSX ~]# hadoop-daemon.sh start journalnode # wait about 10 seconds before the next step

[root@CentOSA ~]# hdfs namenode -format

[root@CentOSA ~]# hadoop-daemon.sh start namenode

[root@CentOSB ~]# hdfs namenode -bootstrapStandby # copies the freshly formatted metadata from the NameNode on CentOSA

[root@CentOSB ~]# hadoop-daemon.sh start namenode

[root@CentOSA|B ~]# hdfs zkfc -formatZK # run once, on either CentOSA or CentOSB, to register the NameNode HA information in ZooKeeper

[root@CentOSA ~]# hadoop-daemon.sh start zkfc # ZKFC, the failover watchdog

[root@CentOSB ~]# hadoop-daemon.sh start zkfc # ZKFC, the failover watchdog

[root@CentOSX ~]# hadoop-daemon.sh start datanode
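The order above matters: the JournalNodes must be up before the format, the standby can only bootstrap once the first NameNode is running, and the ZKFCs refuse to start until -formatZK has created their znode. A hedged sketch that drives the same first-start sequence from one machine over the passwordless SSH set up earlier; by default it only prints the plan (DRY_RUN=1). `step` and `bootstrap_hdfs_ha` are hypothetical helpers, and the node roles are this guide's.

```shell
#!/usr/bin/env bash
# Hypothetical helpers: the first-time HDFS HA start-up, in the order above.
# DRY_RUN=1 (default) only prints "HOST: COMMAND"; DRY_RUN=0 runs each
# command over ssh after sourcing ~/.bashrc so the Hadoop scripts are on PATH.
NODES="CentOSA CentOSB CentOSC"   # JournalNode and DataNode hosts
NN1=CentOSA                       # NameNode that is formatted
NN2=CentOSB                       # NameNode that bootstraps as standby

step() {  # step HOST COMMAND...
  local host=$1
  shift
  if [ "${DRY_RUN:-1}" = 1 ]; then
    echo "$host: $*"
  else
    ssh "$host" "source ~/.bashrc && $*"
  fi
}

bootstrap_hdfs_ha() {
  local h
  for h in $NODES; do
    step "$h" hadoop-daemon.sh start journalnode || return 1
  done
  [ "${DRY_RUN:-1}" = 1 ] || sleep 10     # give the JournalNodes time to start
  step "$NN1" hdfs namenode -format           || return 1
  step "$NN1" hadoop-daemon.sh start namenode || return 1
  step "$NN2" hdfs namenode -bootstrapStandby || return 1
  step "$NN2" hadoop-daemon.sh start namenode || return 1
  step "$NN1" hdfs zkfc -formatZK             || return 1
  step "$NN1" hadoop-daemon.sh start zkfc     || return 1
  step "$NN2" hadoop-daemon.sh start zkfc     || return 1
  for h in $NODES; do
    step "$h" hadoop-daemon.sh start datanode || return 1
  done
}

# Usage:
#   bootstrap_hdfs_ha              # print the plan only
#   DRY_RUN=0 bootstrap_hdfs_ha    # run it (first start only: it formats HDFS)
```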

Check the rack topology

[root@CentOSA ~]# hdfs dfsadmin -printTopology

Rack: /rack1

192.168.29.129:50010 (CentOSA)

192.168.29.130:50010 (CentOSB)

Rack: /rack2

192.168.29.131:50010 (CentOSC)

Starting and stopping the cluster

[root@CentOSA ~]# start|stop-dfs.sh # can be run on any node

If a NameNode fails to start during a restart because the JournalNodes were slow to initialize, run the following on the node whose NameNode failed:

hadoop-daemon.sh start namenode

Building the YARN cluster

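- Edit mapred-site.xml (Hadoop 2.6.0 ships only mapred-site.xml.template, so copy it to mapred-site.xml in /usr/hadoop-2.6.0/etc/hadoop first)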

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

- Edit yarn-site.xml

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

<property>

<name>yarn.resourcemanager.ha.enabled</name>

<value>true</value>

</property>

<property>

<name>yarn.resourcemanager.cluster-id</name>

<value>cluster1</value>

</property>

<property>

<name>yarn.resourcemanager.ha.rm-ids</name>

<value>rm1,rm2</value>

</property>

<property>

<name>yarn.resourcemanager.hostname.rm1</name>

<value>CentOSB</value>

</property>

<property>

<name>yarn.resourcemanager.hostname.rm2</name>

<value>CentOSC</value>

</property>

<property>

<name>yarn.resourcemanager.zk-address</name>

<value>CentOSA:2181,CentOSB:2181,CentOSC:2181</value>

</property>

- Start YARN

[root@CentOSB ~]# yarn-daemon.sh start resourcemanager

[root@CentOSC ~]# yarn-daemon.sh start resourcemanager

[root@CentOSX ~]# yarn-daemon.sh start nodemanager

Check the ResourceManager HA state

[root@CentOSA ~]# yarn rmadmin -getServiceState rm1

active

[root@CentOSA ~]# yarn rmadmin -getServiceState rm2

standby
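The same state query can be wrapped to find whichever instance is currently active, which is handy after a failover test; `hdfs haadmin -getServiceState nn1` is the HDFS counterpart of the yarn rmadmin call above. A sketch with a hypothetical `active_of` helper that takes the admin command as its first argument:

```shell
#!/usr/bin/env bash
# active_of ADMIN_CMD ID...  (hypothetical helper)
# Runs "ADMIN_CMD -getServiceState ID" for each ID and prints the first one
# that reports "active". Returns non-zero if none does (e.g. all down).
active_of() {
  local admin=$1 id
  shift
  for id in "$@"; do
    # $admin is left unquoted so "yarn rmadmin" splits into command + subcommand.
    if [ "$($admin -getServiceState "$id" 2>/dev/null)" = active ]; then
      echo "$id"
      return 0
    fi
  done
  return 1
}

# Usage:
#   active_of "yarn rmadmin" rm1 rm2
#   active_of "hdfs haadmin" nn1 nn2
```

For the states shown above, `active_of "yarn rmadmin" rm1 rm2` prints rm1.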