Author: jiangzz Phone: 15652034180 WeChat: jiangzz_wx WeChat official account: jiangzz_wy

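Environment Setup

Hadoop Environment

- Set the CentOS process and open-file limits (applied at the next login session, for example after a reboot)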

[root@CentOS ~]# vi /etc/security/limits.conf

* soft nofile 204800

* hard nofile 204800

* soft nproc 204800

* hard nproc 204800

To tune Linux performance you may need to raise these maximum values.
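After logging in again, the limits that are actually in effect can be checked with the shell built-in `ulimit`. The following is a minimal sketch; the function name `show_limits` is illustrative and not part of the original setup, and the `-u` option is assumed to be supported by the login shell (it is in bash).

```shell
# show_limits is a hypothetical helper name used only for this example.
# It prints the soft and hard limits that apply to the current shell session.
show_limits() {
  # -S selects the soft limit, -H the hard limit; -n is open files, -u is processes.
  echo "nofile soft=$(ulimit -Sn) hard=$(ulimit -Hn)"
  # Some shells do not implement -u, so fall back to a placeholder.
  echo "nproc soft=$(ulimit -Su 2>/dev/null || echo unsupported) hard=$(ulimit -Hu 2>/dev/null || echo unsupported)"
}

show_limits
```

If the limits.conf entries have been applied to the session, each value should read 204800.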

- Configure the hostname (takes effect after a reboot)

[root@CentOS ~]# vi /etc/sysconfig/network

NETWORKING=yes

HOSTNAME=CentOS

[root@CentOS ~]# reboot

- Map the IP address to the hostname

[root@CentOS ~]# vi /etc/hosts

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

192.168.40.128 CentOS

- Firewall service

# Stop the service temporarily

[root@CentOS ~]# service iptables stop

iptables: Setting chains to policy ACCEPT: filter [ OK ]

iptables: Flushing firewall rules: [ OK ]

iptables: Unloading modules: [ OK ]

[root@CentOS ~]# service iptables status

iptables: Firewall is not running.

# Disable automatic start on boot

[root@CentOS ~]# chkconfig iptables off

[root@CentOS ~]# chkconfig --list | grep iptables

iptables 0:off 1:off 2:off 3:off 4:off 5:off 6:off

- Install JDK 1.8+

[root@CentOS ~]# rpm -ivh jdk-8u171-linux-x64.rpm

[root@CentOS ~]# ls -l /usr/java/

total 4

lrwxrwxrwx. 1 root root 16 Mar 26 00:56 default -> /usr/java/latest

drwxr-xr-x. 9 root root 4096 Mar 26 00:56 jdk1.8.0_171-amd64

lrwxrwxrwx. 1 root root 28 Mar 26 00:56 latest -> /usr/java/jdk1.8.0_171-amd64

[root@CentOS ~]# vi .bashrc

JAVA_HOME=/usr/java/latest

PATH=$PATH:$JAVA_HOME/bin

CLASSPATH=.

export JAVA_HOME

export PATH

export CLASSPATH

[root@CentOS ~]# source ~/.bashrc

- Configure passwordless SSH login

[root@CentOS ~]# ssh-keygen -t rsa

Generating public/private rsa key pair.

Enter file in which to save the key (/root/.ssh/id_rsa):

Created directory '/root/.ssh'.

Enter passphrase (empty for no passphrase):

Enter same passphrase again:

Your identification has been saved in /root/.ssh/id_rsa.

Your public key has been saved in /root/.ssh/id_rsa.pub.

The key fingerprint is:

4b:29:93:1c:7f:06:93:67:fc:c5:ed:27:9b:83:26:c0 root@CentOS

The key's randomart image is:

+--[ RSA 2048]----+

| |

| o . . |

| . + + o .|

| . = * . . . |

| = E o . . o|

| + = . +.|

| . . o + |

| o . |

| |

+-----------------+

[root@CentOS ~]# ssh-copy-id CentOS

The authenticity of host 'centos (192.168.40.128)' can't be established.

RSA key fingerprint is 3f:86:41:46:f2:05:33:31:5d:b6:11:45:9c:64:12:8e.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added 'centos,192.168.40.128' (RSA) to the list of known hosts.

root@centos's password:

Now try logging into the machine, with "ssh 'CentOS'", and check in:

.ssh/authorized_keys

to make sure we haven't added extra keys that you weren't expecting.

[root@CentOS ~]# ssh root@CentOS

Last login: Tue Mar 26 01:03:52 2019 from 192.168.40.1

[root@CentOS ~]# exit

logout

Connection to CentOS closed.

- Configure HDFS and YARN

Extract hadoop-2.9.2.tar.gz into /usr, then edit the [core|hdfs|yarn|mapred]-site.xml configuration files.

[root@CentOS ~]# vi /usr/hadoop-2.9.2/etc/hadoop/core-site.xml

<!-- NameNode access endpoint -->

<property>

<name>fs.defaultFS</name>

<value>hdfs://CentOS:9000</value>

</property>

<!-- Base working directory for HDFS -->

<property>

<name>hadoop.tmp.dir</name>

<value>/usr/hadoop-2.9.2/hadoop-${user.name}</value>

</property>

[root@CentOS ~]# vi /usr/hadoop-2.9.2/etc/hadoop/hdfs-site.xml

<!-- Block replication factor -->

<property>

<name>dfs.replication</name>

<value>1</value>

</property>

<!-- Host on which the Secondary NameNode runs -->

<property>

<name>dfs.namenode.secondary.http-address</name>

<value>CentOS:50090</value>

</property>

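<!-- Maximum number of concurrent file operations on a DataNode (deprecated name; Hadoop 2.x maps it to dfs.datanode.max.transfer.threads) -->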

<property>

<name>dfs.datanode.max.xcievers</name>

<value>4096</value>

</property>

<!-- Number of DataNode server threads, which bounds its parallel request handling -->

<property>

<name>dfs.datanode.handler.count</name>

<value>6</value>

</property>

[root@CentOS ~]# vi /usr/hadoop-2.9.2/etc/hadoop/yarn-site.xml

<!-- Auxiliary service that implements the shuffle, the core step of the MapReduce framework -->

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

<!-- Host on which the ResourceManager runs -->

<property>

<name>yarn.resourcemanager.hostname</name>

<value>CentOS</value>

</property>

<!-- Disable the physical memory check -->

<property>

<name>yarn.nodemanager.pmem-check-enabled</name>

<value>false</value>

</property>

<!-- Disable the virtual memory check -->

<property>

<name>yarn.nodemanager.vmem-check-enabled</name>

<value>false</value>

</property>

[root@CentOS ~]# vi /usr/hadoop-2.9.2/etc/hadoop/mapred-site.xml

<!-- Resource manager implementation used by the MapReduce framework -->

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>
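The `<property>` fragments in this step are shown without their enclosing element; in each `*-site.xml` file they belong inside a single `<configuration>` root element. As a sketch, the following writes a complete core-site.xml using the two properties from this section. The `CONF_DIR` variable and its temporary-directory default are assumptions made so that the example does not overwrite a real installation; on the machine described here the target would be /usr/hadoop-2.9.2/etc/hadoop.

```shell
# CONF_DIR is a hypothetical variable for this example; it defaults to a
# scratch directory so that an existing configuration is not overwritten.
CONF_DIR="${CONF_DIR:-$(mktemp -d)}"

# The quoted 'EOF' keeps ${user.name} literal, so Hadoop (not the shell) expands it.
cat > "$CONF_DIR/core-site.xml" <<'EOF'
<?xml version="1.0" encoding="UTF-8"?>
<configuration>
  <!-- NameNode access endpoint -->
  <property>
    <name>fs.defaultFS</name>
    <value>hdfs://CentOS:9000</value>
  </property>
  <!-- Base working directory for HDFS -->
  <property>
    <name>hadoop.tmp.dir</name>
    <value>/usr/hadoop-2.9.2/hadoop-${user.name}</value>
  </property>
</configuration>
EOF

echo "wrote $CONF_DIR/core-site.xml"
```

The other three files follow the same layout, each with its own set of `<property>` elements.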

- Configure the Hadoop environment variables

[root@CentOS ~]# vi .bashrc

HADOOP_HOME=/usr/hadoop-2.9.2

JAVA_HOME=/usr/java/latest

CLASSPATH=.

PATH=$PATH:$JAVA_HOME/bin:$HADOOP_HOME/bin:$HADOOP_HOME/sbin

export JAVA_HOME

export CLASSPATH

export PATH

export M2_HOME

export MAVEN_OPTS

export HADOOP_HOME

[root@CentOS ~]# source .bashrc

- Start the Hadoop services

[root@CentOS ~]# hdfs namenode -format # creates the fsimage file needed for initialization

[root@CentOS ~]# start-dfs.sh

[root@CentOS ~]# start-yarn.sh
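Once both scripts have finished, the JDK tool `jps` should list the five daemons that a single-node HDFS and YARN setup starts. The helper below is a minimal sketch of that check; `check_daemons` is a hypothetical name introduced for this example, and it works on the text printed by `jps` rather than calling Hadoop itself.

```shell
# check_daemons is a hypothetical helper: it takes the text printed by `jps`
# and reports every expected Hadoop daemon that is not listed in it.
check_daemons() {
  jps_output="$1"
  missing=0
  for daemon in NameNode DataNode SecondaryNameNode ResourceManager NodeManager; do
    # -w matches whole words, so "NameNode" does not match "SecondaryNameNode".
    if ! printf '%s\n' "$jps_output" | grep -qw "$daemon"; then
      echo "missing: $daemon"
      missing=1
    fi
  done
  return "$missing"
}

# Run the check only where the jps tool is available.
if command -v jps >/dev/null 2>&1; then
  if check_daemons "$(jps)"; then
    echo "all Hadoop daemons are running"
  else
    echo "some Hadoop daemons are not running"
  fi
fi
```

If a daemon is reported as missing, its log file under the logs directory of the Hadoop installation is usually the first place to look.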

Spark Environment

Download spark-2.4.1-bin-without-hadoop.tgz, extract it into /usr, rename the Spark directory to spark-2.4.1, and then edit the spark-env.sh and spark-defaults.conf files.

- Configure the Spark service

[root@CentOS ~]# vi /usr/spark-2.4.1/conf/spark-env.sh

# Options read in YARN client/cluster mode

# - SPARK_CONF_DIR, Alternate conf dir. (Default: ${SPARK_HOME}/conf)

# - HADOOP_CONF_DIR, to point Spark towards Hadoop configuration files

# - YARN_CONF_DIR, to point Spark towards YARN configuration files when you use YARN

# - SPARK_EXECUTOR_CORES, Number of cores for the executors (Default: 1).

# - SPARK_EXECUTOR_MEMORY, Memory per Executor (e.g. 1000M, 2G) (Default: 1G)

# - SPARK_DRIVER_MEMORY, Memory for Driver (e.g. 1000M, 2G) (Default: 1G)

HADOOP_CONF_DIR=/usr/hadoop-2.9.2/etc/hadoop

YARN_CONF_DIR=/usr/hadoop-2.9.2/etc/hadoop

SPARK_EXECUTOR_CORES=2

SPARK_EXECUTOR_MEMORY=1G

SPARK_DRIVER_MEMORY=1G

LD_LIBRARY_PATH=/usr/hadoop-2.9.2/lib/native

export HADOOP_CONF_DIR

export YARN_CONF_DIR

export SPARK_EXECUTOR_CORES

export SPARK_DRIVER_MEMORY

export SPARK_EXECUTOR_MEMORY

export LD_LIBRARY_PATH

export SPARK_DIST_CLASSPATH=$(hadoop classpath):$SPARK_DIST_CLASSPATH

export SPARK_HISTORY_OPTS="-Dspark.history.fs.logDirectory=hdfs:///spark-logs"

[root@CentOS ~]# vi /usr/spark-2.4.1/conf/spark-defaults.conf

spark.eventLog.enabled=true

spark.eventLog.dir=hdfs:///spark-logs

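Create the spark-logs directory on HDFS. Spark applications write their event logs there, and the Spark history server reads them from that location.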
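One way to create that directory is with the HDFS shell, once HDFS is running. This is a minimal sketch: the `HDFS_CMD` variable and the `create_spark_log_dir` function are hypothetical names added for the example (the variable allows a dry run with `echo`); the path /spark-logs is the one referenced by `spark.eventLog.dir` and `spark.history.fs.logDirectory` in this section.

```shell
# HDFS_CMD is a hypothetical override used only in this example; it defaults
# to the real hdfs client and can be set to "echo" for a dry run.
HDFS_CMD="${HDFS_CMD:-hdfs}"

# Create the event-log directory (hdfs:///spark-logs); -p makes the call
# succeed even if the directory already exists.
create_spark_log_dir() {
  "$HDFS_CMD" dfs -mkdir -p /spark-logs
}

# Only touch HDFS when the client is actually installed.
if command -v "$HDFS_CMD" >/dev/null 2>&1; then
  create_spark_log_dir || echo "could not create /spark-logs (is HDFS running?)"
fi
```

Running `hdfs dfs -ls /` afterwards should show the new directory.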

- Start the Spark history server

[root@CentOS spark-2.4.1]# ./sbin/start-history-server.sh

Client mode

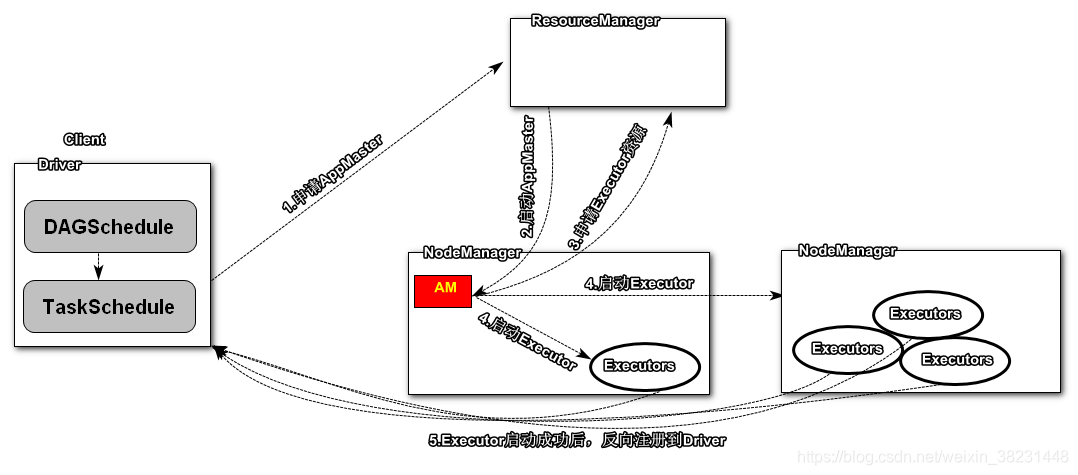

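The Driver is the process that runs the main function of the application submitted by the user. In client mode it runs on the submitting machine, where it first computes the stages of the job and then schedules tasks through the TaskScheduler. Before any task is scheduled, the Driver asks the ResourceManager for resources to start the AM (ExecutorLauncher). The AM then requests resources from the ResourceManager according to the num-executors parameter supplied by the Driver and starts the Executor (CoarseGrainedExecutorBackend) processes. Once an Executor process is up, it registers back with the Driver, and the Driver's TaskScheduler schedules and dispatches tasks to it. The number of tasks each Executor can run concurrently is set by the executor-cores startup parameter, which defaults to 1 if not specified.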
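Because executor-cores sets how many tasks one Executor runs at the same time and num-executors sets how many Executors are requested, their product gives an upper bound on the number of tasks running concurrently. The sketch below assumes Spark's default of one CPU core per task (`spark.task.cpus=1`) unless a third argument is given; `max_concurrent_tasks` is a hypothetical helper name, and the real number can be lower if YARN grants fewer Executors than requested.

```shell
# max_concurrent_tasks is a hypothetical helper for this example.
# $1 = --num-executors, $2 = --executor-cores, $3 = spark.task.cpus (optional, default 1)
max_concurrent_tasks() {
  num_executors="$1"
  executor_cores="$2"
  task_cpus="${3:-1}"
  # Each Executor runs executor_cores / task_cpus tasks at a time (integer division).
  echo $(( num_executors * (executor_cores / task_cpus) ))
}

max_concurrent_tasks 2 4   # prints 8
```

With `--num-executors 2 --executor-cores 4`, at most 8 tasks run at once.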

For example, to start a shell in client mode:

[root@CentOS bin]# ./spark-shell --master yarn --deploy-mode client --executor-cores 4 --num-executors 2

Setting default log level to "WARN".

To adjust logging level use sc.setLogLevel(newLevel). For SparkR, use setLogLevel(newLevel).

19/04/17 01:46:04 WARN yarn.Client: Neither spark.yarn.jars nor spark.yarn.archive is set, falling back to uploading libraries under SPARK_HOME.

Spark context Web UI available at http://CentOS:4040

Spark context available as 'sc' (master = yarn, app id = application_1555383933869_0004).

Spark session available as 'spark'.

Welcome to

      ____              __
     / __/__  ___ _____/ /__
    _\ \/ _ \/ _ `/ __/  '_/
   /___/ .__/\_,_/_/ /_/\_\   version 2.4.1
      /_/

Using Scala version 2.11.12 (Java HotSpot(TM) 64-Bit Server VM, Java 1.8.0_171)

Type in expressions to have them evaluated.

Type :help for more information.

scala>

Note: the ApplicationMaster launches Executors and requests resources, but it does not schedule jobs.

Cluster mode

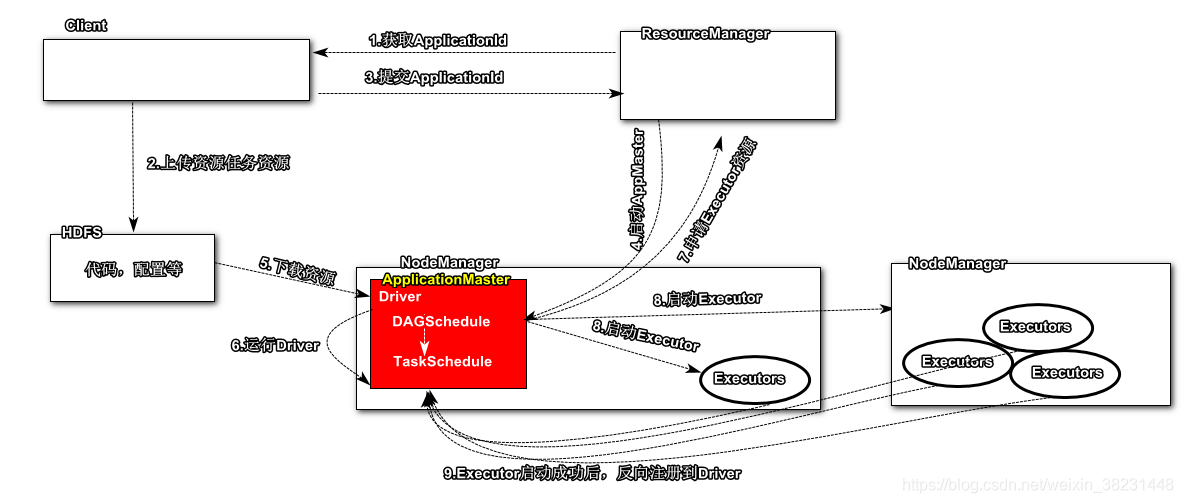

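Unlike client mode, the Driver program runs inside the ApplicationMaster. The client only connects to the ResourceManager to obtain an application ID, uploads the resources and code needed for the computation, and submits the application. The ResourceManager then starts an ApplicationMaster that is responsible for running the whole application.

For example, to submit a job in cluster mode: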

[root@CentOS bin]# ./spark-submit \
  --master yarn \
  --deploy-mode cluster \
  --driver-memory 1g \
  --num-executors 3 \
  --executor-cores 2 \
  --class org.apache.spark.examples.SparkPi \
  ../examples/jars/spark-examples_2.11-2.4.1.jar 100

Note that in this mode the ApplicationMaster both launches the Executors and schedules the job's tasks, because the Driver runs inside the ApplicationMaster.

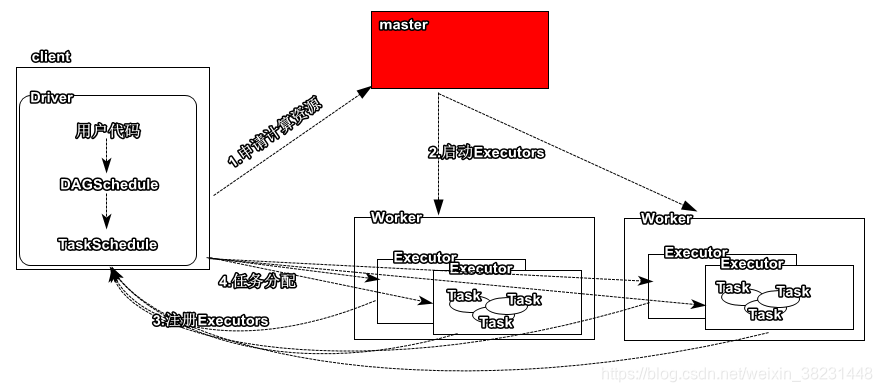

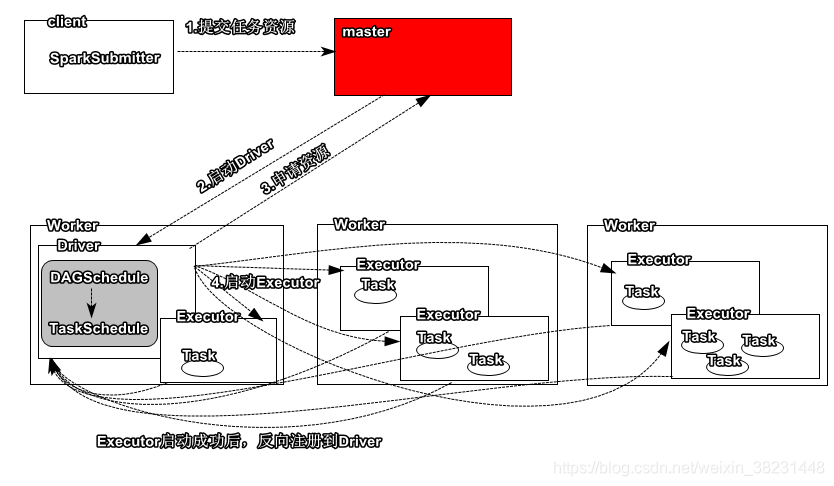

Spark Standalone

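A Spark cluster uses a simple Master-Slave architecture in which the Master manages all Workers. This pattern is common, so we only take a brief look at the basic startup flow of a Spark Standalone cluster.

- Install and configure Spark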

[root@CentOS ~]# tar -zxf spark-2.4.1-bin-without-hadoop.tgz -C /usr/

[root@CentOS ~]# mv /usr/spark-2.4.1-bin-without-hadoop /usr/spark-2.4.1

[root@CentOS ~]# vi /root/.bashrc

SPARK_HOME=/usr/spark-2.4.1

HADOOP_HOME=/usr/hadoop-2.9.2

JAVA_HOME=/usr/java/latest

PATH=$PATH:$JAVA_HOME/bin:$HADOOP_HOME/bin:$HADOOP_HOME/sbin:$SPARK_HOME/bin

CLASSPATH=.

export JAVA_HOME

export PATH

export CLASSPATH

export HADOOP_HOME

export SPARK_HOME

[root@CentOS ~]# source .bashrc

[root@CentOS ~]# cd /usr/spark-2.4.1/

[root@CentOS spark-2.4.1]# mv conf/spark-env.sh.template conf/spark-env.sh

[root@CentOS spark-2.4.1]# mv conf/slaves.template conf/slaves

[root@CentOS spark-2.4.1]# mv conf/spark-defaults.conf.template conf/spark-defaults.conf

[root@CentOS spark-2.4.1]# vi conf/slaves

CentOS

[root@CentOS spark-2.4.1]# vi conf/spark-env.sh

SPARK_MASTER_HOST=CentOS

SPARK_MASTER_PORT=7077

SPARK_WORKER_CORES=4

SPARK_WORKER_MEMORY=4g

export SPARK_MASTER_HOST

export SPARK_MASTER_PORT

export SPARK_WORKER_CORES

export SPARK_WORKER_MEMORY

export LD_LIBRARY_PATH=/usr/hadoop-2.9.2/lib/native

export SPARK_DIST_CLASSPATH=$(hadoop classpath)

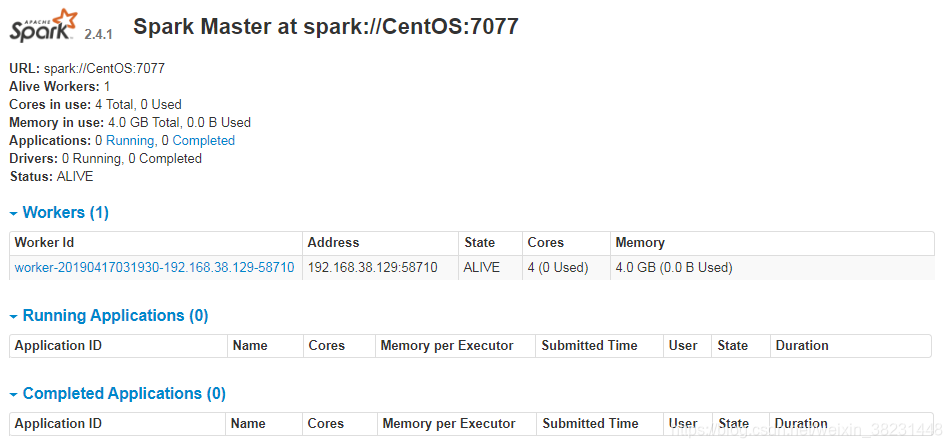

Start Spark

[root@CentOS spark-2.4.1]# ./sbin/start-all.sh

starting org.apache.spark.deploy.master.Master, logging to /usr/spark-2.4.1/logs/spark-root-org.apache.spark.deploy.master.Master-1-CentOS.out

CentOS: starting org.apache.spark.deploy.worker.Worker, logging to /usr/spark-2.4.1/logs/spark-root-org.apache.spark.deploy.worker.Worker-1-CentOS.out

After a successful start, the Master web UI is available at http://centos:8080/

Client mode

[root@CentOS spark-2.4.1]# ./bin/spark-shell --master spark://CentOS:7077 --deploy-mode client --executor-cores 2 --total-executor-cores 6

Setting default log level to "WARN".

To adjust logging level use sc.setLogLevel(newLevel). For SparkR, use setLogLevel(newLevel).

Spark context Web UI available at http://CentOS:4040

Spark context available as 'sc' (master = spark://CentOS:7077, app id = app-20190417052530-0005).

Spark session available as 'spark'.

Welcome to

      ____              __
     / __/__  ___ _____/ /__
    _\ \/ _ \/ _ `/ __/  '_/
   /___/ .__/\_,_/_/ /_/\_\   version 2.4.1
      /_/

Using Scala version 2.11.12 (Java HotSpot(TM) 64-Bit Server VM, Java 1.8.0_171)

Type in expressions to have them evaluated.

Type :help for more information.

scala>

Cluster mode

[root@CentOS spark-2.4.1]# ./bin/spark-submit \
  --master spark://CentOS:7077 \
  --deploy-mode cluster \
  --total-executor-cores 6 --executor-cores 2 \
  --class org.apache.spark.examples.SparkPi \
  examples/jars/spark-examples_2.11-2.4.1.jar 100
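In standalone mode the number of Executors is not given directly; it follows from `--total-executor-cores`, `--executor-cores`, and the cores the Workers offer. The sketch below estimates it for the single-Worker setup configured in this section (SPARK_WORKER_CORES=4). `standalone_executor_count` is a hypothetical helper name, and the estimate assumes that no other application holds cores on the Worker and that Worker memory is not the limiting factor.

```shell
# standalone_executor_count is a hypothetical helper for this example.
# $1 = --total-executor-cores, $2 = --executor-cores, $3 = SPARK_WORKER_CORES of the single Worker
standalone_executor_count() {
  total_cores="$1"
  executor_cores="$2"
  worker_cores="$3"
  # The application cannot use more cores than the Worker offers.
  if [ "$total_cores" -lt "$worker_cores" ]; then
    usable="$total_cores"
  else
    usable="$worker_cores"
  fi
  # Executors are launched only in whole units of executor_cores.
  echo $(( usable / executor_cores ))
}

standalone_executor_count 6 2 4   # prints 2
```

So although 6 cores are requested, a Worker that offers 4 cores can host only 2 Executors of 2 cores each; raising SPARK_WORKER_CORES or adding Workers would be needed to reach 3.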
