Copyright notice: This is an original article by the blogger and may not be reproduced without the blogger's permission. https://blog.csdn.net/qq_29350001/article/details/77941902

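I happened to need the DM368 development board again recently, so I am summarizing the encode/decode project I did on it earlier. I had not touched it for over a year and could no longer make sense of my old notes. Fortunately, after sorting things out I got an image again. Looking back at it, though, the result is pretty rough, so treat it only as a reference.

The project encodes video with the encode demo, streams it over RTSP, and plays it in real time in VLC. The sensor is an MT9P031.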

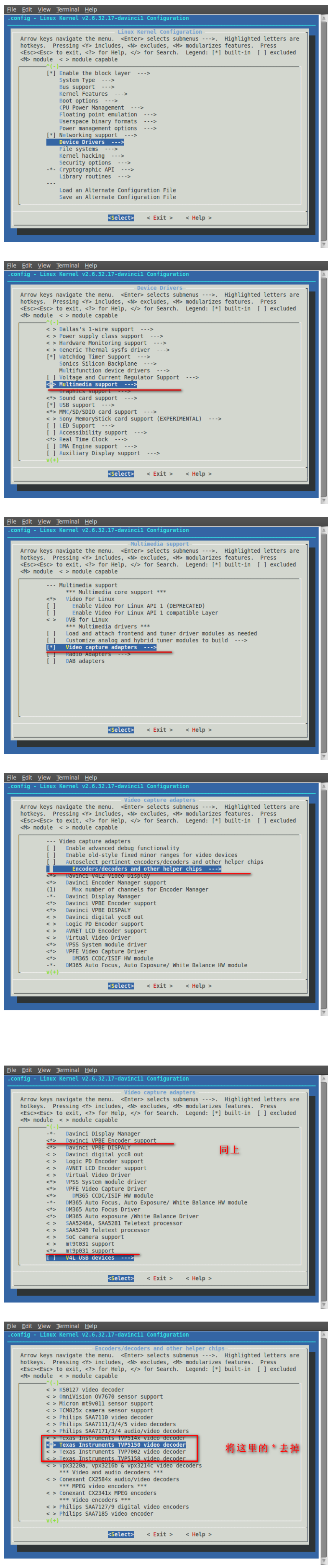

1. Kernel configuration

Enable MT9P031 support in the kernel:

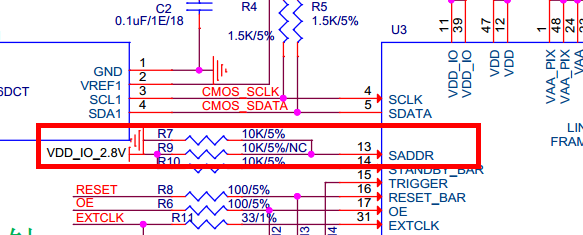

2. Troubleshooting a hardware design error

The boot log reported an error: the MT9P031 chip could not be detected.

mt9p031 1-005d: No MT9P031 chip detected, register read ffffff87

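vpfe-capture vpfe-capture: v4l2 sub device mt9p031 register fails

Probing with an oscilloscope showed that the I2C clock was present, and the data-line waveform showed the write address 0xBA going out, but no ACK came back. A retry after a short wait also went unacknowledged. So the core board was sending the device address to the MT9P031; the sensor simply was not answering. Checking the MT9P031 daughter board showed that pin 13 must be high for the sensor to respond to device ID 0xBA, but our hardware design had tied it low. That was the fault.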
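The kernel log names the sensor 1-005d (bus 1, 7-bit address 0x5d), while the oscilloscope shows 0xBA on the wire. These are the same address: the byte on the bus is the 7-bit address shifted left by one, with the read/write flag in bit 0. A minimal sketch of that relationship, where the helper name i2c_bus_byte is made up for illustration:

```c
#include <stdint.h>

/*
 * Convert a 7-bit I2C address into the address byte seen on the bus.
 * Bit 0 is the R/W flag: 0 for a write, 1 for a read.
 * (i2c_bus_byte is an illustrative helper, not part of any driver.)
 */
static uint8_t i2c_bus_byte(uint8_t addr7, int is_read)
{
    return (uint8_t)((addr7 << 1) | (is_read ? 1 : 0));
}
```

Calling i2c_bus_byte(0x5d, 0) gives 0xBA, which matches the write address seen on the scope, and i2c_bus_byte(0x5d, 1) gives 0xBB for the corresponding read.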

The correct kernel boot messages:

mt9p031 1-005d: Detected a MT9P031 chip ID 1801

mt9p031 1-005d: mt9p031 1-005d decoder driver registered !!

vpfe-capture vpfe-capture: v4l2 sub device mt9p031 registered

vpfe_register_ccdc_device: DM365 ISIF

DM365 ISIF is registered with vpfe.

3. Testing the encode demo

The DVSDK folder dvsdk-demos_4_02_00_01 contains the encode/decode demos.

The files encode.txt, decode.txt and encodedecode.txt describe how to use them. Only encode.txt is reproduced here.

/*

* encode.txt

*

* This readme file explains the options and describes how to use 'encode demo'

* on DM365 platform.

*

* Copyright (C) 2011 Texas Instruments Incorporated - http://www.ti.com/

*

*

* Redistribution and use in source and binary forms, with or without

* modification, are permitted provided that the following conditions

* are met:

*

* Redistributions of source code must retain the above copyright

* notice, this list of conditions and the following disclaimer.

*

* Redistributions in binary form must reproduce the above copyright

* notice, this list of conditions and the following disclaimer in the

* documentation and/or other materials provided with the

* distribution.

*

* Neither the name of Texas Instruments Incorporated nor the names of

* its contributors may be used to endorse or promote products derived

* from this software without specific prior written permission.

*

* THIS SOFTWARE IS PROVIDED BY THE COPYRIGHT HOLDERS AND CONTRIBUTORS

* "AS IS" AND ANY EXPRESS OR IMPLIED WARRANTIES, INCLUDING, BUT NOT

* LIMITED TO, THE IMPLIED WARRANTIES OF MERCHANTABILITY AND FITNESS FOR

* A PARTICULAR PURPOSE ARE DISCLAIMED. IN NO EVENT SHALL THE COPYRIGHT

* OWNER OR CONTRIBUTORS BE LIABLE FOR ANY DIRECT, INDIRECT, INCIDENTAL,

* SPECIAL, EXEMPLARY, OR CONSEQUENTIAL DAMAGES (INCLUDING, BUT NOT

* LIMITED TO, PROCUREMENT OF SUBSTITUTE GOODS OR SERVICES; LOSS OF USE,

* DATA, OR PROFITS; OR BUSINESS INTERRUPTION) HOWEVER CAUSED AND ON ANY

* THEORY OF LIABILITY, WHETHER IN CONTRACT, STRICT LIABILITY, OR TORT

* (INCLUDING NEGLIGENCE OR OTHERWISE) ARISING IN ANY WAY OUT OF THE USE

* OF THIS SOFTWARE, EVEN IF ADVISED OF THE POSSIBILITY OF SUCH DAMAGE.

*

*/

NAME

encode - encode video and/or audio or speech files

SYNOPSIS

encode [options...]

DESCRIPTION

This demo uses the Codec Engine to encode data from peripheral device

drivers to files. Video, audio and speech files are supported. The files

created will be encoded elementary streams of video/audio/speech.

You must supply at least one file for the demo to run.

The DM365MM and CMEM kernel modules need to be inserted for this demo

to run. Use the script 'loadmodule-rc' in the DVSDK to make sure both

kernel modules are loaded with adequate parameters.

OPTIONS

-v <video file>, --videofile <video file>

Encodes video data to the given file. The file will be created if

it doesn't exist, and truncated if it does exist. The demo

detects which type of video file is supplied using the file

extension. Supported video algorithm is MPEG4 SP, H.264 MP, MPEG2

(.mpeg4 or .m4v extension, .264, .m2v).

-s <speech file>, --speechfile <speech file>

Encodes speech data to the given file. The file will be created

if it doesn't exist, and truncated if it does exist. The demo

detects which type of speech file is supplied using the file

extension. The only supported speech algorithm as of now is

G.711 (.g711 extension).

-a <audio file>, --audiofile <audio file>

Encodes audio data to the given file. The file will be created

if it doesn't exist, and truncated if it does exist. The demo

detects which type of audio file is supplied using the file

extension. The only supported speech algorithm as of now is

AAC (.aac extension).

-y <1-5>, --display_standard <1-5>

Sets the resolution of the display. If the captured resolution

is larger than the display it will be center clamped, and if it

is smaller the image will be centered.

1 D1 @ 30 fps (NTSC)

2 D1 @ 25 fps (PAL)

3 720P @ 60 fps [Default]

5 1080I @ 30 fps - for DM368

-r <resolution>, --resolution <resolution>

The resolution of video to encode in the format 'width'x'height'.

Default is the size of the video standard (720x480 for NTSC,

720x576 for PAL, 1280x720 for 720P).

-b <bit rate>, --videobitrate <bit rate>

This option sets the bit rate with which the video will be

encoded. Use a negative value for variable bit rate. Default is

variable bit rate.

-p <bit rate>, --soundbitrate <bit rate>

This option sets the bit rate with which the audio will be

encoded. Use a negative value for variable bit rate. Default is

96000.

-u <sample rate>, --samplerate <sample rate>

This option sets the sample rate with which the video will be

encoded. Default is 44100 Hz.

-w, --preview_disable

Disable preview of captured video frames.

-f, --write_disable

Disable recording of encoded file. This helps to validate

performance without file I/O.

-I, --video_input

Video input source to use.

1 Composite

2 S-video

3 Component

4 Imager/Camera - for DM368

When not specified, the video input is chosen based on the display

video standard selected. NTSC/PAL use Composite, 720P uses

Component, and 1080I uses the Imager/Camera.

-l, --linein

This option makes the input device for sound recording be the

'line in' as opposed to the 'mic in' default.

-k, --keyboard

Enables the keyboard input mode which lets the user input

commands using the keyboard in addition to the QT-based OSD

interface. At the prompt type 'help' for a list of available

commands.

-t <seconds>, --time <seconds>

The number of seconds to run the demo. Defaults to infinite time.

-o, --osd

Enables the On Screen Display for configuration and data

visualization using a QT-based UI. If this option is not passed,

the data will be output to stdout instead.

-h, --help

This will print the usage of the demo.

EXAMPLE USAGE

First execute this script to load kernel modules required:

./loadmodules.sh

General usage:

./encode -h

H264 HP video encode only @720p resolution with OSD:

./encode -v test.264 -y 3 -o

H264 HP video encode from s-video and G.711 speech encode:

./encode -v test.264 -s test.g711 -I 2

MPEG4 SP video encode only in CIF NTSC resolution with OSD:

./encode -v test.mpeg4 -r 352x240 -o

MPEG4 SP video encode at 1Mbps with keyboard interface on D1 PAL display:

./encode -v test.mpeg4 -b 1000000 -k -y 2

COPYRIGHT

Copyright (c) Texas Instruments Inc 2011

Use of this software is controlled by the terms and conditions found in

the license agreement under which this software has been supplied or

provided.

KNOWN ISSUES

VERSION

4.02

CHANGELOG

from 4.01:

Modified data flow to eliminate frame copies to maximize

performance.

Modified thread priorities to enhance performance.

from 4.0:

Added support of 1080I display video standard for DM368

Added support of Camera input for DM368

Removed s-video (-x) option.

Added -I option to select video input.

Added options to disable preview and recording of encoded output.

from 3.10:

Replaced old remote-driven interface with QT-based interface. This

can be turned on using the '-o' flag.

Added audio thread to allow audio encode.

Added options for audio support ('-a', '-p', '-u').

Added MPEG2 support.

SEE ALSO

For documentation and release notes on the individual components see

the html files in the host installation directory.

root@dm368-evm:/# ./encode_demo -v t.264 -I 4 -y 3 -b 1000 -w

Encode demo started.

###### output_store ######

###### davinci_get_cur_encoder ######

###### vpbe_encoder_initialize ######

###### output is COMPONENT,outindex is 1 ######

###### vpbe_encoder_setoutput ######

###### dm365 = 1 ######

###### mode_info->std is 1 ######

###### mode is 480P-60 ######

###### 22VPBE Encoder initialized ######

###### vpbe_encoder_setoutput ######

###### dm365 = 1 ######

###### mode_info->std is 1 ######

###### mode is 480P-60 ######

###### davinci_enc_set_output : next davinci_enc_set_mode_platform ######

###### davinci_enc_set_mode_platform : next davinci_enc_priv_setmode ######

###### output_show ######

###### davinci_enc_get_output ######

###### davinci_get_cur_encoder ######

###### mode_store ######

###### davinci_enc_get_mode ######

###### davinci_get_cur_encoder ######

###### davinci_enc_set_mode ######

###### davinci_get_cur_encoder ######

###### dm365 = 1 ######

###### mode_info->std is 1 ######

###### mode is 576P-50 ######

###### davinci_enc_set_mode : next davinci_enc_set_mode ######

###### davinci_enc_set_mode_platform : next davinci_enc_priv_setmode ######

###### mode_show ######

###### davinci_enc_get_mode ######

###### davinci_get_cur_encoder ######

###### davinci_enc_get_mode ######

###### davinci_get_cur_encoder ######

###### davinci_enc_get_mode ######

###### davinci_get_cur_encoder ######

davinci_resizer davinci_resizer.2: RSZ_G_CONFIG:0:1:124

davinci_previewer davinci_previewer.2: ipipe_set_preview_config

davinci_previewer davinci_previewer.2: ipipe_set_preview_config

vpfe-capture vpfe-capture: IPIPE Chained

vpfe-capture vpfe-capture: Resizer present

dm365evm_enable_pca9543a

dm365evm_enable_pca9543a, status = -121

EVM: switch to HD imager video input

######vpfe_dev->current_subdev->is_camera.

-----Exposure time = 2f2############

v4l2_device_call_until_err sdinfo->grp_id : 4.

-----Exposure time = 2f2

vpfe-capture vpfe-capture: width = 1280, height = 720, bpp = 1

vpfe-capture vpfe-capture: adjusted width = 1280, height = 720, bpp = 1, bytesperline = 1280, sizeimage = 1382400

vpfe-capture vpfe-capture: width = 1280, height = 720, bpp = 1

vpfe-capture vpfe-capture: adjusted width = 1280, height = 720, bpp = 1, bytesperline = 1280, sizeimage = 1382400

ARM Load: 28% Video fps: 31 fps Video bit rate: 42 kbps Sound bit rate: 0 kbps Time: 08:00:01 Demo: Encode Display: 720P 60Hz Video Codec: H.264 HP Resolution: 1280x720 Sound Codec: N/A Sampling Freq: N/A

ARM Load: 10% Video fps: 30 fps Video bit rate: 32 kbps Sound bit rate: 0 kbps Time: 08:00:02 Demo: Encode Display: 720P 60Hz Video Codec: H.264 HP Resolution: 1280x720 Sound Codec: N/A Sampling Freq: N/A

ARM Load: 61% Video fps: 31 fps Video bit rate: 28 kbps Sound bit rate: 0 kbps Time: 08:00:03 Demo: Encode Display: 720P 60Hz Video Codec: H.264 HP Resolution: 1280x720 Sound Codec: N/A Sampling Freq: N/A

ARM Load: 9% Video fps: 30 fps Video bit rate: 27 kbps Sound bit rate: 0 kbps Time: 08:00:04 Demo: Encode Display: 720P 60Hz Video Codec: H.264 HP Resolution: 1280x720 Sound Codec: N/A Sampling Freq: N/A

ARM Load: 62% Video fps: 30 fps Video bit rate: 35 kbps Sound bit rate: 0 kbps Time: 08:00:06 Demo: Encode Display: 720P 60Hz Video Codec: H.264 HP Resolution: 1280x720 Sound Codec: N/A Sampling Freq: N/A

ARM Load: 10% Video fps: 30 fps Video bit rate: 37 kbps Sound bit rate: 0 kbps Time: 08:00:07 Demo: Encode Display: 720P 60Hz Video Codec: H.264 HP Resolution: 1280x720 Sound Codec: N/A Sampling Freq: N/A

ARM Load: 45% Video fps: 30 fps Video bit rate: 28 kbps Sound bit rate: 0 kbps Time: 08:00:08 Demo: Encode Display: 720P 60Hz Video Codec: H.264 HP Resolution: 1280x720 Sound Codec: N/A Sampling Freq: N/A

ARM Load: 29% Video fps: 31 fps Video bit rate: 27 kbps Sound bit rate: 0 kbps Time: 08:00:09 Demo: Encode Display: 720P 60Hz Video Codec: H.264 HP Resolution: 1280x720 Sound Codec: N/A Sampling Freq: N/A

ARM Load: 23% Video fps: 29 fps Video bit rate: 29 kbps Sound bit rate: 0 kbps Time: 08:00:10 Demo: Encode Display: 720P 60Hz Video Codec: H.264 HP Resolution: 1280x720 Sound Codec: N/A Sampling Freq: N/A

ARM Load: 51% Video fps: 31 fps Video bit rate: 43 kbps Sound bit rate: 0 kbps Time: 08:00:12 Demo: Encode Display: 720P 60Hz Video Codec: H.264 HP Resolution: 1280x720 Sound Codec: N/A Sampling Freq: N/A

4. Setting up the RTSP server

The main change is in writer.c. The following part of the original source is where one encoded frame can be obtained:

if (fwrite(Buffer_getUserPtr(hOutBuf),

Buffer_getNumBytesUsed(hOutBuf), 1, outFile) != 1) {

ERR("Error writing the encoded data to video file\n");

cleanup(THREAD_FAILURE);

}
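Each buffer that reaches this point is treated by the modified writer as an H.264 byte stream in which every NAL unit is preceded by the 4-byte start code 00 00 00 01, and the low 5 bits of the following byte give the NAL unit type (7 for SPS, 8 for PPS). A bounds-checked sketch of that scan is below; the helper names find_start_code and nal_type_at are made up for illustration and do not appear in the demo.

```c
#include <stddef.h>

/* Return the offset of the first 00 00 00 01 start code in buf, or -1. */
static long find_start_code(const unsigned char *buf, size_t len)
{
    size_t i;

    for (i = 0; i + 4 <= len; i++) {
        if (buf[i] == 0 && buf[i + 1] == 0 &&
            buf[i + 2] == 0 && buf[i + 3] == 1)
            return (long)i;
    }
    return -1;
}

/*
 * Return the NAL unit type (low 5 bits of the byte after the start code
 * at offset off), or -1 if that byte lies outside the buffer.
 */
static int nal_type_at(const unsigned char *buf, size_t len, long off)
{
    if (off < 0 || (size_t)off + 4 >= len)
        return -1;
    return buf[off + 4] & 0x1f;
}
```

Checking the remaining length before each comparison keeps the scan from reading past the end of a buffer that happens to contain no start code.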

It has been so long that I have forgotten the details of the protocol, so here is the code.
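As orientation for the listing that follows: once it has seen an SPS and a PPS, it builds the SDP a=fmtp line that tells the RTSP client how to decode the stream. The profile-level-id is the three bytes that follow the SPS NAL header, printed as six hex digits, and the parameter sets are carried as base64 text. The sketch below illustrates just that formatting step. It assumes sps_nal points at the NAL header byte and that the base64 strings are already encoded; the helper names are made up for illustration.

```c
#include <stdio.h>
#include <stddef.h>

/* Bytes needed for the base64 text of n input bytes, including the NUL. */
static size_t base64_buf_size(size_t n)
{
    return 4 * ((n + 2) / 3) + 1;
}

/* profile_idc, constraint flags and level_idc follow the NAL header byte. */
static unsigned int profile_level_id(const unsigned char *sps_nal)
{
    return ((unsigned int)sps_nal[1] << 16) |
           ((unsigned int)sps_nal[2] << 8) |
            (unsigned int)sps_nal[3];
}

/* Format the fmtp line; returns 0 on success, -1 if out is too small. */
static int format_fmtp(char *out, size_t out_len, int payload_type,
                       unsigned int plid,
                       const char *sps_b64, const char *pps_b64)
{
    int n = snprintf(out, out_len,
                     "a=fmtp:%d packetization-mode=1;"
                     "profile-level-id=%06X;sprop-parameter-sets=%s,%s",
                     payload_type, plid, sps_b64, pps_b64);

    return (n >= 0 && (size_t)n < out_len) ? 0 : -1;
}
```

Sizing the base64 buffers with base64_buf_size and formatting with snprintf avoids the overflow that fixed-size arrays and sprintf would allow if the encoder ever emitted unusually long parameter sets.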

/*

* writer.c

*

* This source file has the implementations for the writer thread

* functions implemented for 'DVSDK encode demo' on DM365 platform

*

* Copyright (C) 2010 Texas Instruments Incorporated - http://www.ti.com/

*

*

* Redistribution and use in source and binary forms, with or without

* modification, are permitted provided that the following conditions

* are met:

*

* Redistributions of source code must retain the above copyright

* notice, this list of conditions and the following disclaimer.

*

* Redistributions in binary form must reproduce the above copyright

* notice, this list of conditions and the following disclaimer in the

* documentation and/or other materials provided with the

* distribution.

*

* Neither the name of Texas Instruments Incorporated nor the names of

* its contributors may be used to endorse or promote products derived

* from this software without specific prior written permission.

*

* THIS SOFTWARE IS PROVIDED BY THE COPYRIGHT HOLDERS AND CONTRIBUTORS

* "AS IS" AND ANY EXPRESS OR IMPLIED WARRANTIES, INCLUDING, BUT NOT

* LIMITED TO, THE IMPLIED WARRANTIES OF MERCHANTABILITY AND FITNESS FOR

* A PARTICULAR PURPOSE ARE DISCLAIMED. IN NO EVENT SHALL THE COPYRIGHT

* OWNER OR CONTRIBUTORS BE LIABLE FOR ANY DIRECT, INDIRECT, INCIDENTAL,

* SPECIAL, EXEMPLARY, OR CONSEQUENTIAL DAMAGES (INCLUDING, BUT NOT

* LIMITED TO, PROCUREMENT OF SUBSTITUTE GOODS OR SERVICES; LOSS OF USE,

* DATA, OR PROFITS; OR BUSINESS INTERRUPTION) HOWEVER CAUSED AND ON ANY

* THEORY OF LIABILITY, WHETHER IN CONTRACT, STRICT LIABILITY, OR TORT

* (INCLUDING NEGLIGENCE OR OTHERWISE) ARISING IN ANY WAY OUT OF THE USE

* OF THIS SOFTWARE, EVEN IF ADVISED OF THE POSSIBILITY OF SUCH DAMAGE.

*/

//#include <stdio.h>

#include <xdc/std.h>

#include <ti/sdo/dmai/Fifo.h>

#include <ti/sdo/dmai/BufTab.h>

#include <ti/sdo/dmai/BufferGfx.h>

#include <ti/sdo/dmai/Rendezvous.h>

#include "writer.h"

#include "../demo.h"

/* Number of buffers in writer pipe */

#define NUM_WRITER_BUFS 10

#include <unistd.h>

#include <stdlib.h>

#include <stdio.h>

#include <string.h>

#include <signal.h> /* signal() and SIGINT, used in writerThrFxn */

#ifdef WIN32

#include <conio.h>

#include <windows.h>

#else

#include <pthread.h>

#endif

#include "LiveServiceRTSPInvoke.h"

unsigned long dwDeliverHandle;

unsigned long dwDeliverOne;

int g_nVideoPayloadType = 96;

#define __TEST_SEED_H264_DATA__

typedef struct

{

unsigned long ulFifoMediaHandle;

int nDeliverIsOk;

int nGetIDR;

int nServiceRun;

char szVideoFmtp[256];

int nExpired;

#ifdef __TEST_SEED_H264_DATA__

/*************************************************/

/*testing*/

int nSeedTerminal;

#ifndef WIN32

pthread_t hThread;

#else

HANDLE hThread;

#endif

/************************************************/

#endif

}DATA_CONTEXT;

DATA_CONTEXT dataContext; // shared data context

int OnVideoExpiredCallback( void* lpData, unsigned long dwSize, int nExpired, unsigned long dwContext )

{

DATA_CONTEXT * lpContext = (DATA_CONTEXT*)dwContext;

if( !nExpired )

{

unsigned char * lpH264Slice = (unsigned char *)lpData;

if( *(lpH264Slice+0) == 0 &&

*(lpH264Slice+1) == 0 &&

*(lpH264Slice+2) == 0 &&

*(lpH264Slice+3) == 1 )

{

if( 0x07 == ((*(lpH264Slice+4))&0x1f) )

lpContext->nExpired = 0; /* discard data until the next IDR */

}

}

else

lpContext->nExpired = 1;

return lpContext->nExpired;

}

static const char cb64[]="ABCDEFGHIJKLMNOPQRSTUVWXYZabcdefghijklmnopqrstuvwxyz0123456789+/";

void live_base64encode(char *out,const unsigned char* in,int len)

{

for(;len >= 3; len -= 3)

{

*out ++ = cb64[ in[0]>>2];

*out ++ = cb64[ ((in[0]&0x03)<<4)|(in[1]>>4) ];

*out ++ = cb64[ ((in[1]&0x0f) <<2)|((in[2] & 0xc0)>>6) ];

*out ++ = cb64[ in[2]&0x3f];

in+=3;

}

if(len > 0)

{

unsigned char fragment;

*out ++ = cb64[ in[0]>>2];

fragment = (in[0] &0x03)<<4 ;

if(len > 1)

fragment |= in[1] >>4 ;

*out ++ = cb64[ fragment ];

*out ++ = (len <2) ? '=' : cb64[ ((in[1]&0x0f)<<2)];

*out ++ = '=';

}

*out = '\0';

}

/*---live_base64encode---*/

// RTSP video send callback

int ON_VIDEO_DELIVER_RTSP_SEND( void* lpStreamData, unsigned long * lpdwSize, unsigned long dwContext )

{

DATA_CONTEXT * lpDataContext = (DATA_CONTEXT*)dwContext;

unsigned char* lpDataBuf = NULL;

unsigned long dwSize = 0;

unsigned long dwTick = 0;

if( NULL == lpStreamData || NULL == lpdwSize )

{

/* THIS CHANNEL NOW TO DISABLE*/

lpDataContext->nDeliverIsOk = 0;

return 0;

}

*lpdwSize = 0;

lpDataContext->nDeliverIsOk = 1;

if( LiveDeliverRTSP_FifoMediaPopOne( lpDataContext->ulFifoMediaHandle, &lpDataBuf, &dwSize, &dwTick ) )

{

*lpdwSize = dwSize;

memcpy( lpStreamData, lpDataBuf, dwSize );

LiveDeliverRTSP_FifoMediaFreeOne( lpDataContext->ulFifoMediaHandle, lpDataBuf, dwSize );

}

#if 0 //cjk

else

{

*lpdwSize = 0;

}

#endif

return 0;

}

// Called when an H.264 frame is ready

void OnH264FrameDataOK( DATA_CONTEXT * lpDataContext, unsigned char * lpDataBuf, unsigned long ulSize )

{

if( lpDataContext && lpDataBuf && ulSize )

{

if( !lpDataContext->nGetIDR )

{

unsigned char * lpSPS = NULL;

unsigned char * lpSPS_E = NULL;

unsigned char * lpPPS = NULL;

unsigned char * lpPPS_E = NULL;

unsigned int fNalType = 0;

if( ( 0 == *(lpDataBuf+0) ) &&

( 0 == *(lpDataBuf+1) ) &&

( 0 == *(lpDataBuf+2) ) &&

( 1 == *(lpDataBuf+3) ) )

{

fNalType = (0x01f&(*(lpDataBuf+4)));

}

if( 7 == fNalType ) /*SPS*/

{

unsigned char * pNextNal = lpDataBuf+4;

unsigned char * pEnd = lpDataBuf+ulSize;

lpSPS = lpDataBuf;

while( pNextNal < pEnd )

{

if( ( 0 == *(pNextNal+0) ) &&

( 0 == *(pNextNal+1) ) &&

( 0 == *(pNextNal+2) ) &&

( 1 == *(pNextNal+3) ) )

{

if( !lpSPS_E )

lpSPS_E = pNextNal;

fNalType = (0x01f&(*(pNextNal+4)));

if( 8 == fNalType ) /*PPS*/

{

if( !lpPPS )

lpPPS = pNextNal;

}

else

{

if( lpPPS && !lpPPS_E )

lpPPS_E = pNextNal;

}

if( lpPPS_E )

break;

}

pNextNal++;

}

if( lpSPS && lpSPS_E && lpPPS && lpPPS_E )

{

char szSPS[128];

char szPPS[32];

unsigned int profile_level_id = 0x420029;

unsigned char spsWEB[3];

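/* sprop-parameter-sets carries whole NAL units, so start at the NAL header byte (offset 4, just past the start code) */

live_base64encode( szSPS, lpSPS+4, lpSPS_E-lpSPS-4 );

live_base64encode( szPPS, lpPPS+4, lpPPS_E-lpPPS-4 );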

// sprintf( szSPS, "%s", "Z00AKZpmA8ARPyzUBAQFAAADA+gAAMNQBA==" );

// sprintf( szPPS, "%s", "aO48gA==" );

spsWEB[0] = *(lpSPS+5);

spsWEB[1] = *(lpSPS+6);

spsWEB[2] = *(lpSPS+7);

profile_level_id = (spsWEB[0]<<16) | (spsWEB[1]<<8) | spsWEB[2];

sprintf( lpDataContext->szVideoFmtp,

"a=fmtp:%d packetization-mode=1;profile-level-id=%06X;sprop-parameter-sets=%s,%s",

g_nVideoPayloadType,

profile_level_id,

szSPS,

szPPS );

lpDataContext->nGetIDR = 1;

}

}

}

// Push the frame into the FIFO as a new entry

LiveDeliverRTSP_FifoMediaNewOne( lpDataContext->ulFifoMediaHandle, lpDataBuf, ulSize );

}

}

void iRelease (int signo)

{

if( dwDeliverOne )

{

LiveDeliverRTSP_StopOne( dwDeliverHandle, dwDeliverOne );

LiveDeliverRTSP_ReleaseOne( dwDeliverHandle, &dwDeliverOne );

}

LiveDeliverRTSP_Exit( &dwDeliverHandle );

if( dataContext.ulFifoMediaHandle )

{

LiveDeliverRTSP_FifoMediaRelease( dataContext.ulFifoMediaHandle );

dataContext.ulFifoMediaHandle = 0;

}

printf ("iRelease ok!!!!!!!!!!!\n");

//exit (0);

}

/******************************************************************************

* writerThrFxn

******************************************************************************/

Void *writerThrFxn(Void *arg)

{

signal (SIGINT, iRelease); //cjk

sleep (2);

WriterEnv *envp = (WriterEnv *) arg;

Void *status = THREAD_SUCCESS;

FILE *outFile = NULL;

Buffer_Attrs bAttrs = Buffer_Attrs_DEFAULT;

BufTab_Handle hBufTab = NULL;

Buffer_Handle hOutBuf;

Int fifoRet;

Int bufIdx;

// Create the media FIFO; the creation function is a bit different

dataContext.ulFifoMediaHandle = LiveDeliverRTSP_FifoMediaCreate( 1000, OnVideoExpiredCallback, (unsigned long)&dataContext );

dataContext.nDeliverIsOk = 0; //isok

dataContext.nGetIDR = 0; //IDR

dataContext.nExpired = 0;

memset( dataContext.szVideoFmtp, 0, sizeof(dataContext.szVideoFmtp) );

dwDeliverHandle = LiveDeliverRTSP_Init( 8765 );

dwDeliverOne = 0;

/* Open the output video file */

outFile = fopen(envp->videoFile, "w");

if (outFile == NULL) {

ERR("Failed to open %s for writing\n", envp->videoFile);

cleanup(THREAD_FAILURE);

}

/*

* Create a table of buffers for communicating buffers to

* and from the video thread.

*/

hBufTab = BufTab_create(NUM_WRITER_BUFS, envp->outBufSize, &bAttrs);

if (hBufTab == NULL) {

ERR("Failed to allocate contiguous buffers\n");

cleanup(THREAD_FAILURE);

}

/* Send all buffers to the video thread to be filled with encoded data */

for (bufIdx = 0; bufIdx < NUM_WRITER_BUFS; bufIdx++) {

if (Fifo_put(envp->hOutFifo, BufTab_getBuf(hBufTab, bufIdx)) < 0) {

ERR("Failed to send buffer to display thread\n");

cleanup(THREAD_FAILURE);

}

}

/* Signal that initialization is done and wait for other threads */

Rendezvous_meet(envp->hRendezvousInit);

int iRTSPCreateFlag = 0;

dataContext.nGetIDR = 0;

while (TRUE) {

if(0 == iRTSPCreateFlag)

{

if( dataContext.nGetIDR )

{

int nVideoBPS = 8*1024*1024;

dwDeliverOne = LiveDeliverRTSP_CreateOne( dwDeliverHandle, "pvm",

ON_VIDEO_DELIVER_RTSP_SEND, (unsigned long)&dataContext,

NULL, 0 );

LiveDeliverRTSP_SetupOne( dwDeliverHandle, dwDeliverOne, 1, g_nVideoPayloadType, "H264", 90000, dataContext.szVideoFmtp, nVideoBPS );

LiveDeliverRTSP_PlayOne( dwDeliverHandle, dwDeliverOne );

dataContext.nDeliverIsOk = 1;

dataContext.nServiceRun = 1;

iRTSPCreateFlag = 1;

//sleep (10);

//writerEnv.nDeliverIsOk = 0;

//writerEnv.nGetIDR = 0;

}

}

/* Get an encoded buffer from the video thread */

fifoRet = Fifo_get(envp->hInFifo, &hOutBuf);

if (fifoRet < 0) {

ERR("Failed to get buffer from video thread\n");

cleanup(THREAD_FAILURE);

}

/* Did the video thread flush the fifo? */

if (fifoRet == Dmai_EFLUSH) {

cleanup(THREAD_SUCCESS);

}

if (!envp->writeDisabled) {

/* Store the encoded frame to disk */

if (Buffer_getNumBytesUsed(hOutBuf)) {

#if 0

if (fwrite(Buffer_getUserPtr(hOutBuf),

Buffer_getNumBytesUsed(hOutBuf), 1, outFile) != 1) {

ERR("Error writing the encoded data to video file\n");

cleanup(THREAD_FAILURE);

#endif

unsigned char * lpReadBuf = (unsigned char *)Buffer_getUserPtr(hOutBuf);

unsigned long lpDateSize = (unsigned long)Buffer_getNumBytesUsed(hOutBuf);

unsigned char * lpFrameBuf = NULL;

unsigned char nFrameHeadFlag = 0;

/* Skip ahead to the first start code without reading past the buffer */

while(!nFrameHeadFlag && lpDateSize >= 4)

{

if((*lpReadBuf == 0 ) &&

(*(lpReadBuf+1) == 0 ) &&

(*(lpReadBuf+2) == 0 ) &&

(*(lpReadBuf+3) == 1 )

)

{

nFrameHeadFlag = 1;

lpFrameBuf = lpReadBuf;

}

else

{

lpDateSize -= 1;

lpReadBuf++;

}

}

/* Queue the frame once, and only if a start code was found */

if( nFrameHeadFlag )

{

OnH264FrameDataOK( &dataContext, lpFrameBuf, lpDateSize );

usleep( 10000 );

}

}

else {

printf("Warning, writer received 0 byte encoded frame\n");

}

}

/* Return buffer to capture thread */

if (Fifo_put(envp->hOutFifo, hOutBuf) < 0) {

ERR("Failed to send buffer to display thread\n");

cleanup(THREAD_FAILURE);

}

}

cleanup:

/* Make sure the other threads aren't waiting for us */

Rendezvous_force(envp->hRendezvousInit);

Pause_off(envp->hPauseProcess);

Fifo_flush(envp->hOutFifo);

/* Meet up with other threads before cleaning up */

Rendezvous_meet(envp->hRendezvousCleanup);

/* Clean up the thread before exiting */

if (outFile) {

fclose(outFile);

}

if (hBufTab) {

BufTab_delete(hBufTab);

}

return status;

}

5. Project download

Download: encode and play in real time

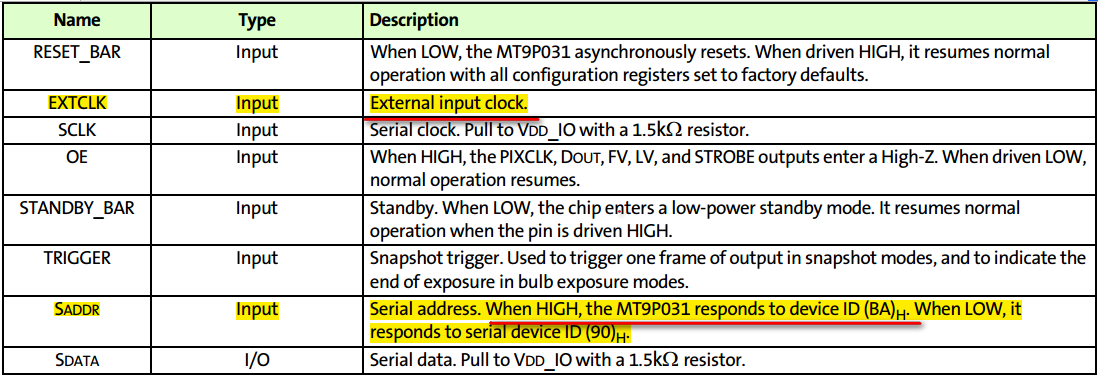

6. Testing

Run on the development board:

./encode -v d.264 -I 4 -y 3 -w

Enter the network URL in VLC:

rtsp://192.168.2.xxx:8765/pvm

Click Play.
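The URL is made up of values from the modified writer.c: 8765 is the port passed to LiveDeliverRTSP_Init, and pvm is the stream name passed to LiveDeliverRTSP_CreateOne. The host part is the board's IP address. A small sketch of how the pieces fit together, where make_rtsp_url is a made-up helper and the address in the usage note is a placeholder:

```c
#include <stdio.h>
#include <stddef.h>

/*
 * Build "rtsp://<host>:<port>/<stream>" into out.
 * Returns 0 on success, -1 if the result does not fit in out_len bytes.
 */
static int make_rtsp_url(char *out, size_t out_len, const char *host,
                         unsigned int port, const char *stream)
{
    int n = snprintf(out, out_len, "rtsp://%s:%u/%s", host, port, stream);

    return (n >= 0 && (size_t)n < out_len) ? 0 : -1;
}
```

For a board at the hypothetical address 192.168.2.10, make_rtsp_url(url, sizeof url, "192.168.2.10", 8765, "pvm") fills url with rtsp://192.168.2.10:8765/pvm. If the port or stream name in writer.c is changed, the URL entered in VLC has to change with it.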

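7. Problems

As I said, this project is rough and has plenty of problems.

Problem 1: The network URL has to be entered in VLC at the same moment encode is started. Connecting while encode is already running does not work.

Problem 2: The image has no color, because the sensor has no filter fitted.

Problem 3: The video has a slight delay.

Problem 4: Running encode several times causes a segmentation fault.

Problem 5 (hardware): The CPU clock is not running at 432 MHz.

Problem 6 (hardware): The sensor can only be focused manually.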

vpfe-capture vpfe-capture: width = 1280, height = 720, bpp = 1

vpfe-capture vpfe-capture: adjusted width = 1280, height = 720, bpp = 1, bytesperline = 1280, sizeimage = 1382400

vpfe-capture vpfe-capture: width = 1280, height = 720, bpp = 1

vpfe-capture vpfe-capture: adjusted width = 1280, height = 720, bpp = 1, bytesperline = 1280, sizeimage = 1382400

### EnableLiveOne - 1 ###

### EnableLiveOne - 2 ###

### EnableLiveOne - 3 ###

### EnableLiveOne - 5 ###

### EnableLiveOne - 6 ###

### EnableLiveOne - 7 ###

### EnableLiveOne - 8 ###

### EnableLiveOne - 9 ###

### m_lpLiveRTSPServerCore is null ###

Segmentation fault