A Brief Introduction to the GMS Algorithm

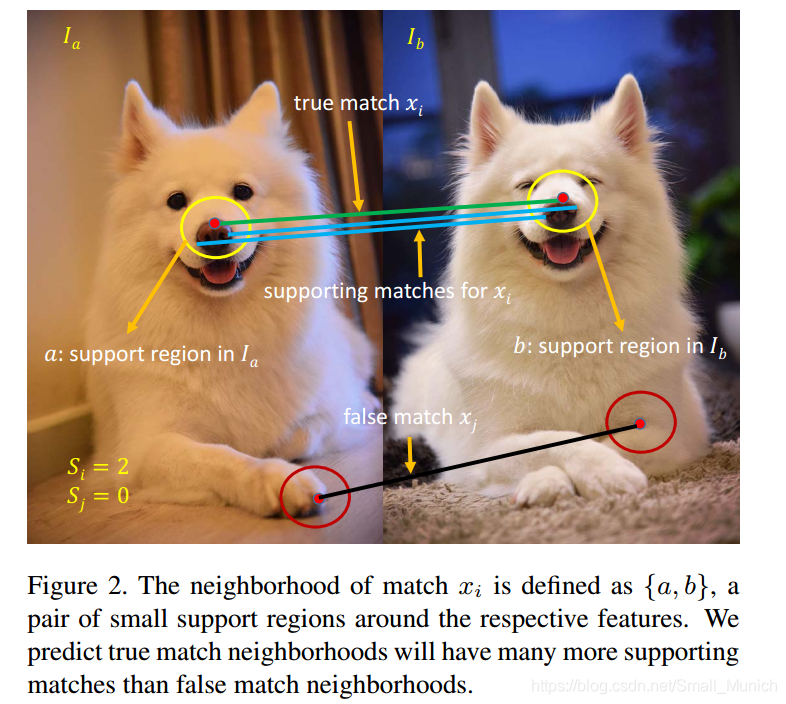

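Classical feature matching pipelines (SIFT, SURF, ORB, and so on) face a trade-off: the robust algorithms are slow, and the fast algorithms are less robust. The core problem in local feature matching is how to exploit neighborhood consistency. Sparse features do not define a neighborhood well, so methods that enforce neighborhood consistency tend to be computationally expensive. Grid-based Motion Statistics (GMS) uses grid partitioning and motion statistics to reject false matches quickly, which improves the stability of matching. The core idea of GMS is that, because motion is smooth, the neighborhood of a correctly matched feature point should contain more supporting matches than the neighborhood of a falsely matched one. The main steps of the GMS algorithm are as follows: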

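1 Detect feature points in both images and compute their descriptors;

2 Match the descriptors with a brute-force (BF) matcher;

3 Divide each image into G grid cells;

4 For each BF-matched feature point, compare the number n of supporting matches in its neighborhood with a threshold to decide whether the point is correctly matched.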
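Steps 3 and 4 can be sketched without OpenCV. The code below is a deliberately simplified illustration, not the OpenCV or reference implementation: it counts support inside a single cell pair rather than a 3 × 3 neighborhood, and it ignores rotation, scale, and shifted grids. The names `Match`, `cellIndex`, and `filterByGridSupport` are hypothetical, and the threshold form `alpha * sqrt(n)` is taken from the GMS paper as an assumption.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <map>
#include <utility>
#include <vector>

// A tentative match between a point in the left image and a point in the
// right image. Coordinates are normalized to [0, 1], so image sizes are not
// needed. (Hypothetical type, for illustration only.)
struct Match {
    double xL, yL;  // position in the left image
    double xR, yR;  // position in the right image
};

// Map a normalized coordinate pair to a cell index in a grid x grid layout.
int cellIndex(double x, double y, int grid) {
    assert(grid > 0);
    int cx = static_cast<int>(x * grid);
    int cy = static_cast<int>(y * grid);
    // Clamp so that coordinates on the image border stay inside the grid.
    if (cx < 0) cx = 0;
    if (cy < 0) cy = 0;
    if (cx >= grid) cx = grid - 1;
    if (cy >= grid) cy = grid - 1;
    return cy * grid + cx;
}

// Simplified grid-based filter. Returns one flag per input match: true when
// the number of matches sharing the same (left cell, right cell) pair exceeds
// alpha * sqrt(number of matches that start in that left cell).
std::vector<bool> filterByGridSupport(const std::vector<Match>& matches,
                                      int grid, double alpha) {
    std::vector<int> featuresPerCell(static_cast<std::size_t>(grid) * grid, 0);
    std::map<std::pair<int, int>, int> support;  // cell pair -> match count
    std::vector<std::pair<int, int>> cellPair(matches.size());

    // Pass 1: assign every match to a cell pair and accumulate the counts.
    for (std::size_t i = 0; i < matches.size(); ++i) {
        const Match& m = matches[i];
        const int cl = cellIndex(m.xL, m.yL, grid);
        const int cr = cellIndex(m.xR, m.yR, grid);
        cellPair[i] = std::make_pair(cl, cr);
        ++featuresPerCell[cl];
        ++support[cellPair[i]];
    }

    // Pass 2: keep a match only if its cell pair has enough support.
    std::vector<bool> keep(matches.size(), false);
    for (std::size_t i = 0; i < matches.size(); ++i) {
        const int cl = cellPair[i].first;
        const double threshold =
            alpha * std::sqrt(static_cast<double>(featuresPerCell[cl]));
        keep[i] = support[cellPair[i]] > threshold;
    }
    return keep;
}
```

Because matches are binned into cells once, the support count for every match comes from a table lookup instead of a per-match neighborhood search, which is where the speed of the grid approach comes from. Since this sketch only counts support inside one cell pair, it needs a much smaller `alpha` than a version that sums over neighboring cells.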

OpenCV GMS Code

#include <iostream>

#include <opencv2/core.hpp>

#include <opencv2/videoio.hpp>

#include <opencv2/highgui.hpp>

#include <opencv2/imgproc.hpp>

#include <opencv2/features2d.hpp>

#include <opencv2/flann.hpp>

#include <opencv2/xfeatures2d.hpp>

using namespace cv;

using namespace cv::xfeatures2d;

////////////////////////////////////////////////////

// This program demonstrates the GMS matching strategy.

int main(int argc, char* argv[])

{

const char* keys =

"{ h help | | print help message }"

"{ l left | | specify left (reference) image }"

"{ r right | | specify right (query) image }"

"{ camera | 0 | specify the camera device number }"

"{ nfeatures | 10000 | specify the maximum number of ORB features }"

"{ fastThreshold | 20 | specify the FAST threshold }"

"{ drawSimple | true | do not draw not matched keypoints }"

"{ withRotation | false | take rotation into account }"

"{ withScale | false | take scale into account }";

CommandLineParser cmd(argc, argv, keys);

//if (cmd.has("help"))

//{

// std::cout << "Usage: gms_matcher [options]" << std::endl;

// std::cout << "Available options:" << std::endl;

// cmd.printMessage();

// return EXIT_SUCCESS;

//}

Ptr<Feature2D> orb = ORB::create(1000); // cmd.get<int>("nfeatures")

orb.dynamicCast<cv::ORB>()->setFastThreshold(20); // cmd.get<int>("fastThreshold")

Ptr<DescriptorMatcher> matcher = DescriptorMatcher::create("BruteForce-Hamming");

// Image Pair to Match Using GMS Method

String imgL_path = "..//GMS_Matcher//images//01.jpg"; // cmd.get<String>("left")

String imgR_path = "..//GMS_Matcher//images//02.jpg"; // cmd.get<String>("right")

bool withRotation = true;// cmd.get<bool>("withRotation")

bool withScale = true; // cmd.get<bool>("withScale")

bool drawSimple = true; // cmd.get<bool>("drawSimple")

if (!imgL_path.empty() && !imgR_path.empty())

{

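Mat imgL = imread(imgL_path);

Mat imgR = imread(imgR_path);

// imread returns an empty Mat when a file cannot be read.

if (imgL.empty() || imgR.empty())

{

std::cerr << "Could not read the input images." << std::endl;

return EXIT_FAILURE;

}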

std::vector<KeyPoint> kpRef, kpCur;

Mat descRef, descCur;

orb->detectAndCompute(imgL, noArray(), kpRef, descRef);

orb->detectAndCompute(imgR, noArray(), kpCur, descCur);

std::vector<DMatch> matchesAll, matchesGMS;

matcher->match(descCur, descRef, matchesAll);

matchGMS(imgR.size(), imgL.size(), kpCur, kpRef, matchesAll, matchesGMS, withRotation, withScale);

std::cout << "matchesGMS: " << matchesGMS.size() << std::endl;

Mat frameMatches;

if (drawSimple)

drawMatches(imgR, kpCur, imgL, kpRef, matchesGMS, frameMatches, Scalar::all(-1), Scalar::all(-1),

std::vector<char>(), DrawMatchesFlags::NOT_DRAW_SINGLE_POINTS);

else

drawMatches(imgR, kpCur, imgL, kpRef, matchesGMS, frameMatches);

imshow("Matches GMS", frameMatches);

waitKey(0);

}

else // Video Match Real-Time

{

std::vector<KeyPoint> kpRef;

Mat descRef;

VideoCapture capture(cmd.get<int>("camera"));

//Camera warm-up

for (int i = 0; i < 10; i++)

{

Mat frame;

capture >> frame;

}

Mat frameRef;

for (;;)

{

Mat frame;

capture >> frame;

if (frameRef.empty())

{

frame.copyTo(frameRef);

orb->detectAndCompute(frameRef, noArray(), kpRef, descRef);

}

TickMeter tm;

tm.start();

std::vector<KeyPoint> kp;

Mat desc;

orb->detectAndCompute(frame, noArray(), kp, desc);

tm.stop();

double t_orb = tm.getTimeMilli();

tm.reset();

tm.start();

std::vector<DMatch> matchesAll, matchesGMS;

matcher->match(desc, descRef, matchesAll);

tm.stop();

double t_match = tm.getTimeMilli();

// Restart the timer so that only matchGMS is measured below.

tm.reset();

tm.start();

matchGMS(frame.size(), frameRef.size(), kp, kpRef, matchesAll, matchesGMS, cmd.get<bool>("withRotation"), cmd.get<bool>("withScale"));

tm.stop();

Mat frameMatches;

if (cmd.get<bool>("drawSimple"))

drawMatches(frame, kp, frameRef, kpRef, matchesGMS, frameMatches, Scalar::all(-1), Scalar::all(-1),

std::vector<char>(), DrawMatchesFlags::NOT_DRAW_SINGLE_POINTS);

else

drawMatches(frame, kp, frameRef, kpRef, matchesGMS, frameMatches);

String label = format("ORB: %.2f ms", t_orb);

putText(frameMatches, label, Point(20, 20), FONT_HERSHEY_SIMPLEX, 0.5, Scalar(0, 0, 255));

label = format("Matching: %.2f ms", t_match);

putText(frameMatches, label, Point(20, 40), FONT_HERSHEY_SIMPLEX, 0.5, Scalar(0, 0, 255));

label = format("GMS matching: %.2f ms", tm.getTimeMilli());

putText(frameMatches, label, Point(20, 60), FONT_HERSHEY_SIMPLEX, 0.5, Scalar(0, 0, 255));

putText(frameMatches, "Press r to reinitialize the reference image.", Point(frameMatches.cols - 380, 20), FONT_HERSHEY_SIMPLEX, 0.5, Scalar(0, 0, 255));

putText(frameMatches, "Press esc to quit.", Point(frameMatches.cols - 180, 40), FONT_HERSHEY_SIMPLEX, 0.5, Scalar(0, 0, 255));

imshow("Matches GMS", frameMatches);

int c = waitKey(30);

if (c == 27)

break;

else if (c == 'r')

{

frame.copyTo(frameRef);

orb->detectAndCompute(frameRef, noArray(), kpRef, descRef);

}

}

}

system("pause"); // Windows-only: keeps the console window open; remove on other platforms

return EXIT_SUCCESS;

}

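If OpenCV and opencv_contrib are set up correctly, this code can be run directly. When imgL_path and imgR_path are both non-empty, the program matches the image pair; otherwise it opens the camera and matches live frames against a reference frame. As written, both paths are hardcoded, so the image-pair branch is the one that runs. The relevant parameters can be adjusted by hand to tune the number of matches and the performance.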
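One interaction worth keeping in mind when tuning is the one between the number of features and the acceptance threshold. The following is a rough worked example under three assumptions that this post does not state: the threshold has the form $\tau = \alpha\sqrt{n}$ given in the GMS paper, with $\alpha = 6$ (the default `thresholdFactor` of `matchGMS`); the grid is 20 × 20, as in the reference implementation; and the $N$ features are spread evenly over the $G$ cells.

$$
n = \frac{N}{G}, \qquad \tau = \alpha\sqrt{n}
$$

$$
N = 1000:\quad n = \frac{1000}{400} = 2.5,\quad \tau = 6\sqrt{2.5} \approx 9.5
$$

$$
N = 10000:\quad n = \frac{10000}{400} = 25,\quad \tau = 6\sqrt{25} = 30
$$

If support is summed over a 3 × 3 block of cells, the best case is roughly $9n$ supporting matches: about 22.5 against a threshold of 9.5 for 1000 features, and about 225 against 30 for 10000. Under these assumptions the margin is much thinner with `ORB::create(1000)` than with the `nfeatures` default of 10000 listed in `keys`, so raising the feature count is probably the first thing to try when too few matches survive.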

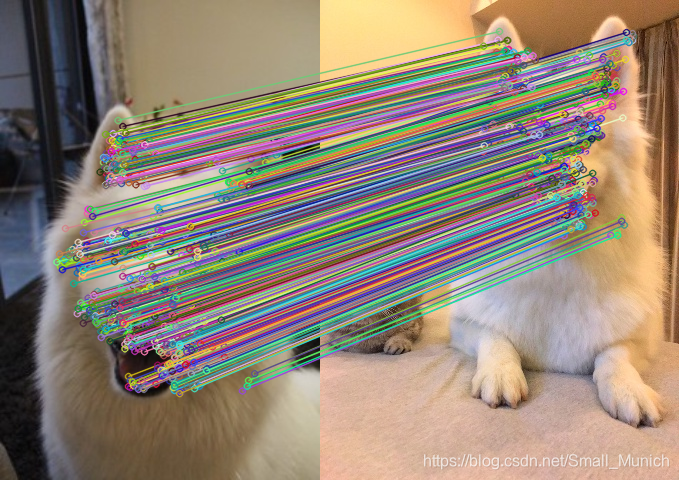

A few experimental results are shown here.

Summary

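Compared with classical feature matching algorithms, GMS does better than SIFT, SURF, KAZE, and similar algorithms in both robustness and real-time performance. However, GMS has relatively weak rotation invariance. In addition, the grid partitioning of the image, the neighborhood-support threshold, and the size of the small neighborhood around each matched feature point all have to be chosen with care. I plan to read the GMS paper closely and write a more detailed analysis later.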

If you spot any mistakes, corrections are welcome!

References

https://github.com/JiawangBian/GMS-Feature-Matcher

https://github.com/opencv/opencv_contrib/pull/1532

https://zhuanlan.zhihu.com/p/53374827