Copyright notice: this is an original article by the blogger and may not be reproduced without the blogger's permission. https://blog.csdn.net/mabozi08/article/details/79371306

mnist_inference.py

import tensorflow as tf

# Parameters that define the structure of the neural network
INPUT_NODE = 784
OUTPUT_NODE = 10
LAYER1_NODE = 500

def get_weight_variable(shape, regularizer):
    weights = tf.get_variable("weights", shape, initializer=tf.truncated_normal_initializer(stddev=0.1))
    # When a regularizer is given, add its loss for these weights to the 'losses' collection
    if regularizer is not None:
        tf.add_to_collection('losses', regularizer(weights))
    return weights

# Forward pass of the neural network
def inference(input_tensor, regularizer):
    # Declare the variables of the first layer and compute its forward pass
    with tf.variable_scope('layer1'):
        weights = get_weight_variable([INPUT_NODE, LAYER1_NODE], regularizer)
        biases = tf.get_variable("biases", [LAYER1_NODE], initializer=tf.constant_initializer(0.0))
        layer1 = tf.nn.relu(tf.matmul(input_tensor, weights) + biases)

    # Likewise, declare the variables of the second layer and compute its forward pass
    with tf.variable_scope('layer2'):
        weights = get_weight_variable([LAYER1_NODE, OUTPUT_NODE], regularizer)
        biases = tf.get_variable("biases", [OUTPUT_NODE], initializer=tf.constant_initializer(0.0))
        layer2 = tf.matmul(layer1, weights) + biases

    return layer2

mnist_train_tensorboard.py

import tensorflow as tf

from tensorflow.examples.tutorials.mnist import input_data

import mnist_inference

import os

BATCH_SIZE = 100

LEARNING_RATE_BASE = 0.8

LEARNING_RATE_DECAY = 0.99

REGULARAZTION_RATE = 0.0001

TRAINING_STEPS = 3000

MOVING_AVERAGE_DEACY = 0.99

MODEL_SAVE_PATH = r"E:\test\to-model"

MODEL_NAME = "model.ckpt"

def train(mnist):

with tf.name_scope('input'):

x = tf.placeholder(tf.float32, [None, mnist_inference.INPUT_NODE], name='x-input')

y_ = tf.placeholder(tf.float32, [None, mnist_inference.OUTPUT_NODE], name='y-cinput')

regularizer = tf.contrib.layers.l2_regularizer(REGULARAZTION_RATE)

#直接使用mnist_inference.py中定义的前向传播过程

y = mnist_inference.inference(x, regularizer)

global_step = tf.Variable(0, trainable=False)

with tf.name_scope("moving_average"):

variable_averages = tf.train.ExponentialMovingAverage(MOVING_AVERAGE_DEACY, global_step)

variable_averages_op = variable_averages.apply(tf.trainable_variables())

with tf.name_scope("loss_function"):

cross_entropy = tf.nn.sparse_softmax_cross_entropy_with_logits(logits=y, labels=tf.argmax(y_, 1))

cross_entropy_mean = tf.reduce_mean(cross_entropy)

loss = cross_entropy_mean + tf.add_n(tf.get_collection('losses'))

with tf.name_scope("train_step"):

learning_rate = tf.train.exponential_decay(LEARNING_RATE_BASE, global_step, mnist.train.num_examples / BATCH_SIZE, LEARNING_RATE_DECAY, staircase=True)

train_step = tf.train.GradientDescentOptimizer(learning_rate).minimize(loss, global_step=global_step)

with tf.control_dependencies([train_step, variable_averages_op]):

train_op = tf.no_op(name='train')

saver = tf.train.Saver()

with tf.Session() as sess:

# tf.initialize_all_variables().run()

init = tf.global_variables_initializer()

sess.run(init)

for i in range(TRAINING_STEPS):

xs, ys = mnist.train.next_batch(BATCH_SIZE)

_, loss_value, step = sess.run([train_op, loss, global_step], feed_dict={x: xs, y_: ys})

#每1000轮保存一次模型

if i % 1000 == 0:

#输出当前训练的情况,输出模型在当前训练batch上的损失函数大小

print("After %d training step(s), loss on training "

"batch is %g." % (step, loss_value))

#保存当前模型

saver.save(sess, os.path.join(MODEL_SAVE_PATH, MODEL_NAME), global_step=global_step)

writer = tf.summary.FileWriter(r"E:\test", tf.get_default_graph())

writer.close()

def main(argv=None):

mnist = input_data.read_data_sets(r"E:\data\data_mnist", one_hot=True)

train(mnist)

if __name__ == '__main__':

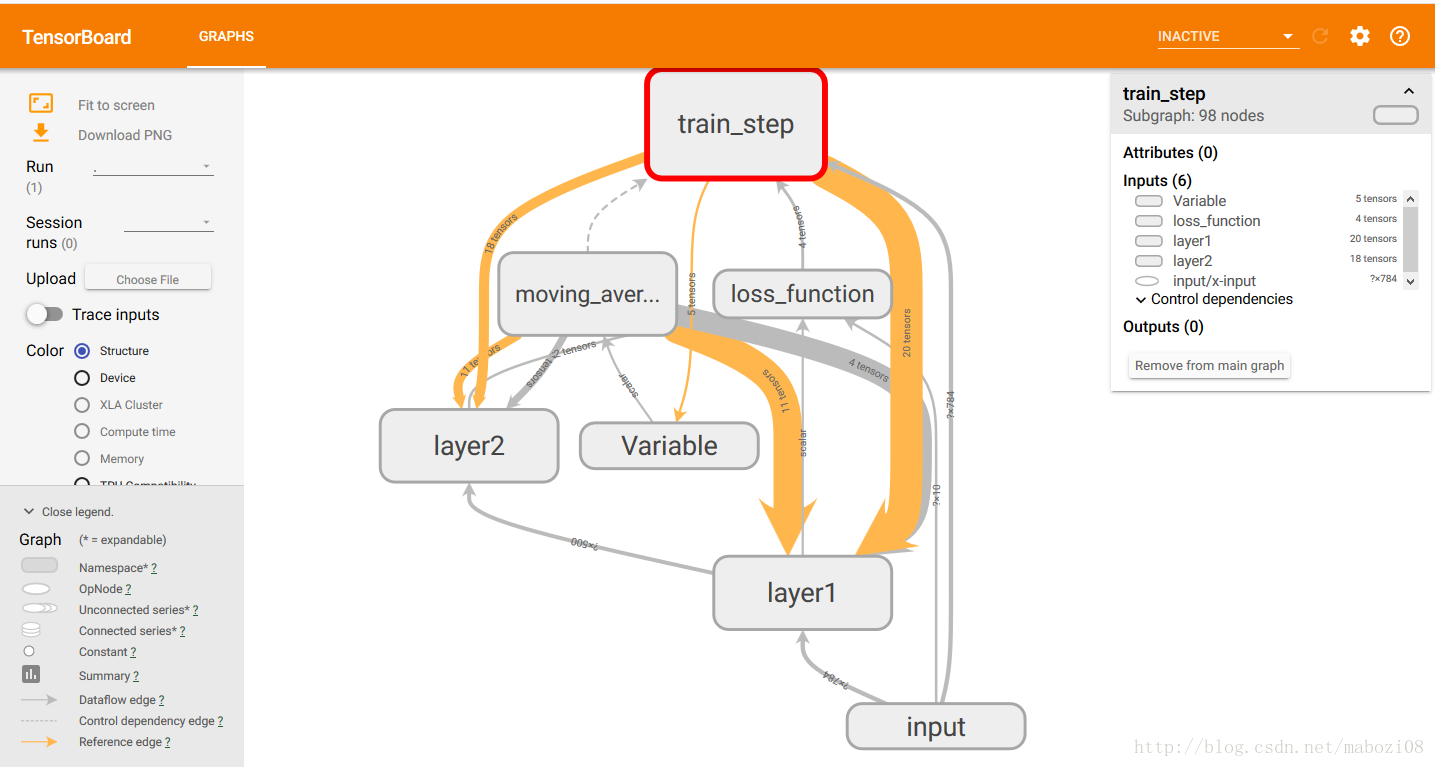

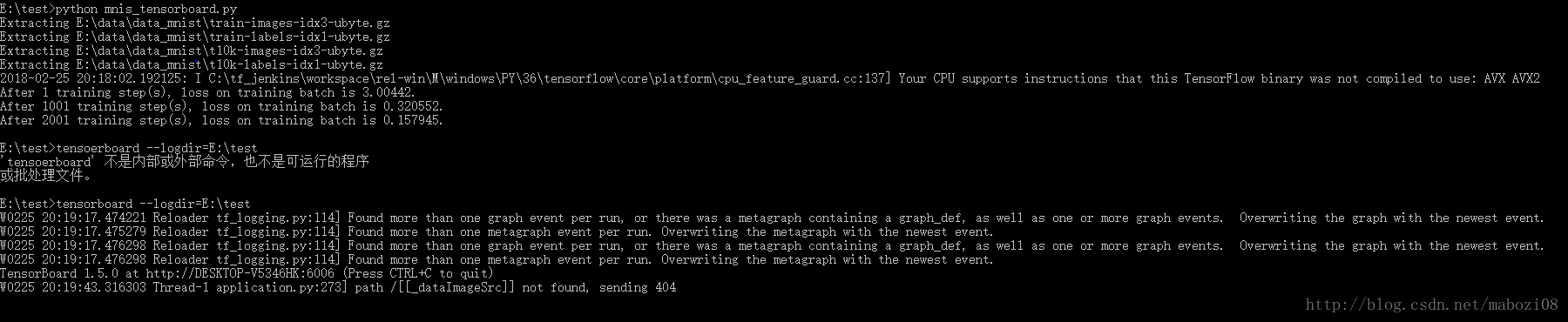

tf.app.run()在cmd中运行后,输入tensorboard –logdir=E:\test

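Open a browser and go to http://DESKTOP-V5346HK:6006 (the host name is that of the author's machine; on another machine, use the address that TensorBoard prints when it starts).

The page then shows the visualization of the computation graph.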
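Apart from the graph itself, the train_step scope in the training script hides a schedule that is easy to overlook: tf.train.exponential_decay with staircase=True. As a rough illustration only, the plain-Python sketch below reproduces the documented formula, base * decay ** floor(global_step / decay_steps), without needing TensorFlow. The function name staircase_learning_rate is hypothetical, and the figure of 55000 training examples is an assumption (the default split of the tutorial MNIST loader), which with a batch size of 100 gives 550 steps per decay interval.

```python
import math

# Values copied from the training script
LEARNING_RATE_BASE = 0.8
LEARNING_RATE_DECAY = 0.99
BATCH_SIZE = 100

# Assumption: mnist.train.num_examples is 55000 with the default validation split
NUM_TRAIN_EXAMPLES = 55000


def staircase_learning_rate(global_step,
                            base=LEARNING_RATE_BASE,
                            decay=LEARNING_RATE_DECAY,
                            decay_steps=NUM_TRAIN_EXAMPLES / BATCH_SIZE):
    """Hypothetical helper mirroring exponential decay with staircase=True."""
    # With staircase=True the exponent is truncated to an integer, so the
    # rate stays constant within each interval of decay_steps steps.
    exponent = math.floor(global_step / decay_steps)
    return base * decay ** exponent


if __name__ == "__main__":
    # Print the rate at a few steps around the first decay boundary
    for step in (0, 549, 550, 2999):
        print(step, staircase_learning_rate(step))
```

Under these assumptions the rate changes only once every 550 steps, so over the 3000 training steps of the script it is decayed five times in total.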