#!/usr/bin/env python

# -*- coding: utf-8 -*-

# @Author : Woolei

# @File : book136_singleprocess.py

import requests

import time

import os

from bs4 import BeautifulSoup

headers = {

'User-Agent':

'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/63.0.3239.84 Safari/537.36'

}

# 获取小说章节内容,并写入文本

def getChapterContent(each_chapter_dict):

content_html = requests.get(each_chapter_dict['chapter_url'], headers=headers).text

soup = BeautifulSoup(content_html, 'lxml')

content_tag = soup.find('p', {'id': 'content'})

p_tag = content_tag.find_all('p')

print('正在保存的章节 --> ' + each_chapter_dict['name'])

for each in p_tag:

paragraph = each.get_text().strip()

with open(each_chapter_dict['name'] + r'.txt', 'a', encoding='utf8') as f:

f.write(' ' + paragraph + '\n\n')

f.close()

# 获取小说各个章节的名字和url

def getChapterInfo(novel_url):

chapter_html = requests.get(novel_url, headers=headers).text

soup = BeautifulSoup(chapter_html, 'lxml')

chapter_list = soup.find_all('li')

chapter_all_dict = {}

for each in chapter_list:

import re

chapter_each = {}

chapter_each['name'] = each.find('a').get_text() # 获取章节名字

chapter_each['chapter_url'] = each.find('a')['href'] # 获取章节url

chapter_num = int(re.findall('\d+', each.get_text())[0]) # 提取章节序号

chapter_all_dict[chapter_num] = chapter_each # 记录到所有的章节的字典中保存

return chapter_all_dict

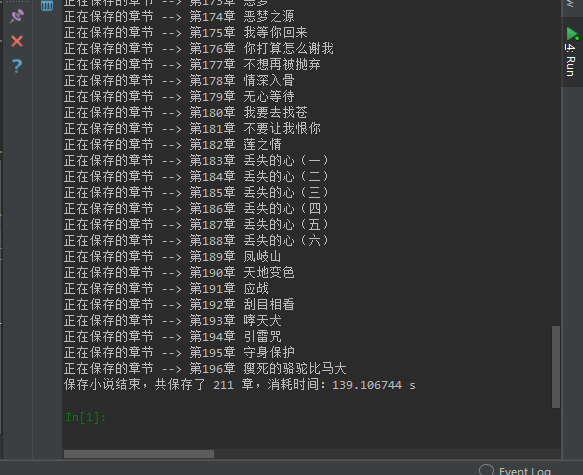

if __name__ == '__main__':

start = time.clock() # 记录程序运行起始时间

novel_url = 'https://www.136book.com/sanshengsanshimenglitaohua/' # 这里以三生三世十里桃花为例

novel_info = getChapterInfo(novel_url) # 获取小说章节记录信息

dir_name = '保存的小说路径'

if not os.path.exists(dir_name):

os.mkdir(dir_name)

os.chdir(dir_name) # 切换到保存小说的目录

for each in novel_info:

getChapterContent(novel_info[each])

# time.sleep(1)

end = time.clock() # 记录程序结束的时间

print('保存小说结束,共保存了 %d 章,消耗时间:%f s' % (len(novel_info), (end - start)))