Architecture approach:

Step 1: Configure Flume

Create a configuration file named flume-kafka.conf with the following contents:

# define

a1.sources = r1

a1.sinks = k1

a1.channels = c1

# source

a1.sources.r1.type = exec

a1.sources.r1.command = tail -F /opt/module/datas/flume.log

a1.sources.r1.shell = /bin/bash -c

# sink

a1.sinks.k1.type = org.apache.flume.sink.kafka.KafkaSink

# Kafka cluster addresses and the target topic

a1.sinks.k1.kafka.bootstrap.servers = hadoop201:9092,hadoop202:9092,hadoop203:9092

a1.sinks.k1.kafka.topic = first

a1.sinks.k1.kafka.flumeBatchSize = 20

a1.sinks.k1.kafka.producer.acks = 1

a1.sinks.k1.kafka.producer.linger.ms = 1

# channel

a1.channels.c1.type = memory

a1.channels.c1.capacity = 1000

a1.channels.c1.transactionCapacity = 100

# bind

a1.sources.r1.channels = c1

a1.sinks.k1.channel = c1
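Before starting the agent, it can help to confirm that the bindings and Kafka settings actually made it into the file. The following is a minimal sketch, not part of Flume itself: `check_flume_conf` is a hypothetical helper name, and the list of keys is simply the subset of the configuration above that the agent cannot work without.

```shell
#!/usr/bin/env bash
# Hypothetical helper: report which of the keys used in this walkthrough
# are missing from a Flume properties file. Returns 0 if all are present.
check_flume_conf() {
  local conf="$1" missing=0 key
  for key in \
    'a1.sources.r1.channels' \
    'a1.sinks.k1.channel' \
    'a1.sinks.k1.kafka.bootstrap.servers' \
    'a1.sinks.k1.kafka.topic'; do
    # Turn each "." into "[.]" so grep matches the dots literally.
    if ! grep -q "^[[:space:]]*${key//./[.]}[[:space:]]*=" "$conf"; then
      echo "missing: $key" >&2
      missing=1
    fi
  done
  return "$missing"
}
```

Calling `check_flume_conf job/flume-kafka.conf` prints one `missing:` line per absent key and exits non-zero if any is absent.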

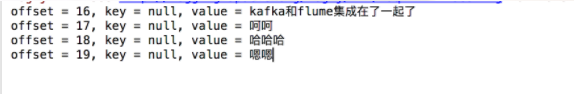

Start the consumer in IDEA

Start Flume
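If no IDEA consumer project is at hand, Kafka's bundled console consumer can stand in for it. This is a sketch under two assumptions: Kafka's bin directory is on the PATH, and a broker is reachable at hadoop201:9092. `consume_topic` is a hypothetical wrapper name; the topic defaults to `first`, matching the sink configuration.

```shell
#!/usr/bin/env bash
# Hypothetical wrapper around Kafka's console consumer.
# Assumes Kafka's bin directory is on PATH and a broker listens on hadoop201:9092.
consume_topic() {
  local topic="${1:-first}" broker="${2:-hadoop201:9092}"
  # --from-beginning also replays messages sent before the consumer started.
  kafka-console-consumer.sh --bootstrap-server "$broker" --topic "$topic" --from-beginning
}
```

Running `consume_topic` in one terminal keeps it attached to the topic, so each line Flume forwards shows up as it arrives.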

flume-ng agent -c conf -f job/flume-kafka.conf -n a1

Write data to /opt/module/datas/flume.log

Data written:
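One simple way to produce test events is to append lines to the file that the exec source is tailing. The sketch below assumes the log path from the configuration; `append_lines` and the `LOG_FILE` override are hypothetical names introduced for illustration.

```shell
#!/usr/bin/env bash
# Path tailed by the exec source; set LOG_FILE to try this against another file.
LOG_FILE="${LOG_FILE:-/opt/module/datas/flume.log}"

# Hypothetical helper: append each argument as its own line, so that
# tail -F hands them to Flume as separate events.
append_lines() {
  local line
  for line in "$@"; do
    printf '%s\n' "$line" >> "$LOG_FILE"
  done
}
```

For example, `append_lines "line one" "line two"` adds two lines to the file, and each should reach the topic as a separate message.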

kafka和flume集成在了一起了

呵呵

哈哈哈

嗯嗯

Data received by the Kafka consumer: