Copyright notice: learning by standing on the shoulders of giants. https://blog.csdn.net/zgcr654321/article/details/84678163

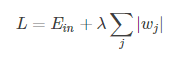

L1 regularization formula:

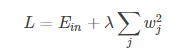

L2 regularization formula:
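For reference, the penalty terms computed by the two TensorFlow functions discussed in this post can be written as follows. This is a sketch inferred from the worked values in the example code, assuming $\lambda$ is the regularization coefficient and $w_i$ runs over every entry of the weight tensor; the notation in the images may differ.

$$
R_{L1}(w) = \lambda \sum_i |w_i|, \qquad R_{L2}(w) = \frac{\lambda}{2} \sum_i w_i^2
$$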

tf.contrib.layers.l1_regularizer() and tf.contrib.layers.l2_regularizer() are the TensorFlow APIs for L1 and L2 regularization. Note that tf.contrib exists only in TensorFlow 1.x.

Their basic usage is as follows:

import tensorflow as tf

import os

os.environ['TF_CPP_MIN_LOG_LEVEL'] = '2'

os.environ["CUDA_VISIBLE_DEVICES"] = "0"

w = tf.constant([[1., -2.], [-3., 4.]])

# w is the weight tensor to be regularized; 0.5 is the regularization coefficient λ

l1_regular = tf.contrib.layers.l1_regularizer(0.5)(w)

l2_regular = tf.contrib.layers.l2_regularizer(0.5)(w)

with tf.Session() as sess:

    sess.run(tf.global_variables_initializer())

    l1_result, l2_result = sess.run([l1_regular, l2_regular])

    # L1 value: (|1| + |-2| + |-3| + |4|) * 0.5 = 5

    print(l1_result)

    # L2 value: ((1² + (-2)² + (-3)² + 4²) / 2) * 0.5 = 7.5

    # The L2 regularizer divides the sum of squares by 2 by default; the 0.5 factor after it is the λ we set

    print(l2_result)

The output is as follows:

5.0

7.5
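To double-check these two numbers without TensorFlow, the arithmetic from the code comments can be reproduced in plain Python. This is only a sketch: `l1_penalty` and `l2_penalty` are hypothetical helper names, not TensorFlow functions, and they assume the weights are given as a nested list.

```python
def l1_penalty(weights, scale):
    """Return scale * (sum of absolute values of all entries)."""
    return scale * sum(abs(x) for row in weights for x in row)


def l2_penalty(weights, scale):
    """Return scale * (sum of squares of all entries / 2)."""
    return scale * sum(x * x for row in weights for x in row) / 2.0


# Same weights and coefficient as in the TensorFlow example
w = [[1.0, -2.0], [-3.0, 4.0]]

print(l1_penalty(w, 0.5))  # 5.0
print(l2_penalty(w, 0.5))  # 7.5
```

The values match the TensorFlow output, which confirms that the L2 version halves the sum of squares before applying the coefficient.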

In a TensorFlow model, to add a regularization term to the loss function, do the following:

loss = tf.reduce_mean(tf.square(y-y_pred) + tf.contrib.layers.l2_regularizer(0.5)(w))

That is, the regularization term is added to each sample's loss value, and then the mean is taken.
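Because the regularization term is the same scalar for every sample, adding it inside the mean gives the same result as computing the mean squared error first and adding the term once. The plain-Python sketch below illustrates this with made-up values for `y` and `y_pred`; the helper names are hypothetical and only mirror the arithmetic of the TensorFlow expression.

```python
def l2_penalty(weights, scale):
    """Return scale * (sum of squares of all entries / 2)."""
    return scale * sum(x * x for row in weights for x in row) / 2.0


def loss_penalty_inside_mean(y, y_pred, weights, scale):
    """Add the penalty to each sample's squared error, then average."""
    penalty = l2_penalty(weights, scale)
    per_sample = [(a - b) ** 2 + penalty for a, b in zip(y, y_pred)]
    return sum(per_sample) / len(per_sample)


def loss_penalty_outside_mean(y, y_pred, weights, scale):
    """Average the squared errors first, then add the penalty once."""
    mse = sum((a - b) ** 2 for a, b in zip(y, y_pred)) / len(y)
    return mse + l2_penalty(weights, scale)


# Illustrative data (assumed, not from the original post)
y = [1.0, 2.0, 3.0]
y_pred = [1.5, 1.5, 2.0]
w = [[1.0, -2.0], [-3.0, 4.0]]

print(loss_penalty_inside_mean(y, y_pred, w, 0.5))   # 8.0
print(loss_penalty_outside_mean(y, y_pred, w, 0.5))  # 8.0
```

Here the mean squared error is 0.5 and the L2 term is 7.5, so both forms give 8.0.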