import matplotlib.pyplot as plt
import pandas as pd
import string
import codecs
import os
import jieba
from sklearn.feature_extraction.text import CountVectorizer
from wordcloud import WordCloud
from sklearn import naive_bayes as bayes

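from sklearn.model_selection import train_test_split

join(): joins a sequence of strings. The elements of a string, tuple, or list are concatenated with the given separator to produce a new string.

os.path.join(): combines several path components and returns the result.

If any argument is an absolute path (on POSIX, one beginning with "/"), joining restarts from the last such argument and every argument before it is discarded.

That rule takes priority. An argument beginning with "./" is a relative path, so it is simply appended to the components before it and nothing is discarded.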
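A small sketch of how the two join functions behave. It uses `posixpath.join`, the POSIX flavour of `os.path.join`, so the results do not depend on the operating system; the path components are made up for illustration.

```python
import posixpath

# str.join: the separator string glues the elements of an iterable together.
words = ["spam", "filter", "demo"]
joined = " ".join(words)

# Plain relative components are joined with "/".
relative = posixpath.join("data", "mail", "stopwords.txt")

# An absolute component discards everything before it.
restart = posixpath.join("data", "/mail", "stopwords.txt")

# A "./" component is relative, so it is appended like any other.
dotted = posixpath.join("data", "./mail", "stopwords.txt")

print(joined)    # spam filter demo
print(relative)  # data/mail/stopwords.txt
print(restart)   # /mail/stopwords.txt
print(dotted)    # data/./mail/stopwords.txt
```

On Windows, `os.path.join` uses backslashes as the separator and also treats drive letters specially, so the exact strings differ there.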

file_path = "D:\\chrome download\\test-master"

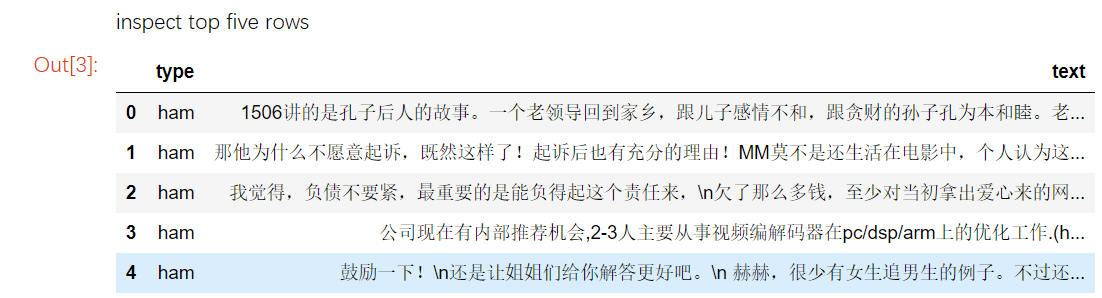

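emailframe = pd.read_excel(os.path.join(file_path, "chinesespam.xlsx"), sheet_name=0)
print("inspect top five rows")  # inspect the first 5 rows
emailframe.head(5)

Inspecting the data shows 50 spam emails and 100 non-spam emails.

Load the stop words

# splitlines() handles both "\r\n" and "\n" line endings
stopwords = codecs.open(os.path.join(file_path, 'stopwords.txt'), 'r', 'UTF-8').read().splitlines()

Segment the text with jieba and filter out stop words, empty strings, punctuation, and so on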

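processed_texts = []
for text in emailframe["text"]:
    words = []
    seg_list = jieba.cut(text)  # segment the email into words
    for seg in seg_list:
        # keep alphabetic tokens (drops punctuation, digits, and blanks) that are not stop words
        if seg.isalpha() and seg not in stopwords:
            words.append(seg)
    sentence = " ".join(words)  # re-join the kept words with spaces
    processed_texts.append(sentence)
emailframe["text"] = processed_texts

Inspect the filtered result

emailframe.head()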
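To see what the filter keeps, here is a minimal sketch that applies the same test to a hand-segmented token list. The tokens stand in for the output of `jieba.cut`, and the helper name `filter_tokens` and the tiny stop-word set are assumptions made for this example.

```python
def filter_tokens(tokens, stopwords):
    """Keep alphabetic tokens that are not stop words and join them with spaces."""
    kept = [tok for tok in tokens if tok.isalpha() and tok not in stopwords]
    return " ".join(kept)


# Hand-written tokens standing in for jieba's segmentation of one email.
tokens = ["我", "的", "邮箱", ",", "123", " ", "免费", "!"]
stopwords = {"我", "的"}

cleaned = filter_tokens(tokens, stopwords)
print(cleaned)  # 邮箱 免费
```

`str.isalpha()` returns True for Chinese characters, so it removes punctuation, digits, and whitespace without discarding Chinese words. Holding the stop words in a set rather than a list also makes each membership test faster.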

Vectorization

def transformTextToSparseMatrix(texts):
    vectorizer = CountVectorizer(binary=False)
    vectorizer.fit(texts)
    vocabulary = vectorizer.vocabulary_
    print("There are ", len(vocabulary), " word features")
    vector = vectorizer.transform(texts)
    result = pd.DataFrame(vector.toarray())
    # vocabulary_ maps each word to its column index; collect both
    keys = []
    values = []
    for key, value in vectorizer.vocabulary_.items():
        keys.append(key)
        values.append(value)
    df = pd.DataFrame(data={"key": keys, "values": values})
    # order the words by column index so they line up with the matrix columns
    colnames = df.sort_values("values")["key"].values
    result.columns = colnames
    return result

Matrix

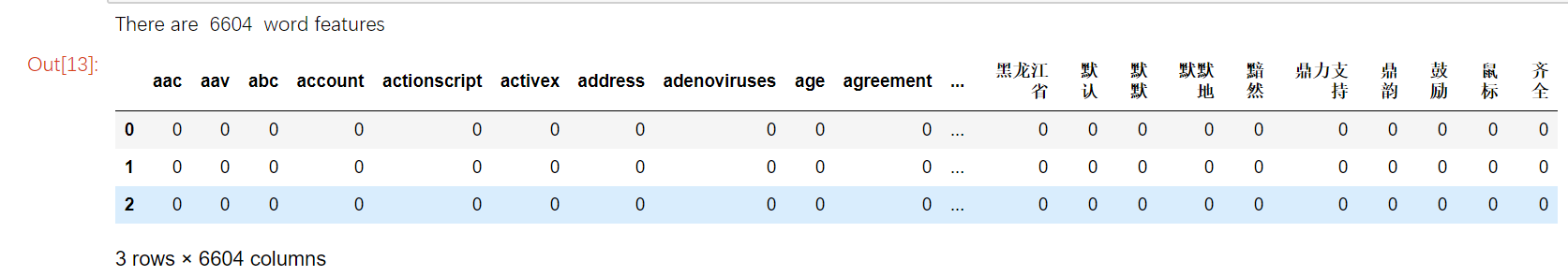

textmatrix = transformTextToSparseMatrix(emailframe["text"])
textmatrix.head(3)

Running this shows that the dataset contains 5982 distinct words, that is, 5982 features. That is too many dimensions, so the next step filters them.
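To make the word-count matrix concrete, the following is a rough pure-Python sketch of the same idea on three toy texts. It is not how `CountVectorizer` is implemented: the helper `count_matrix` is hypothetical and simply splits on spaces, whereas `CountVectorizer` with default settings also ignores single-character tokens.

```python
from collections import Counter


def count_matrix(texts):
    """Build a word-count matrix from space-separated texts."""
    tokenized = [text.split() for text in texts]
    # A sorted vocabulary gives every word a stable column index.
    vocabulary = sorted({word for tokens in tokenized for word in tokens})
    rows = []
    for tokens in tokenized:
        counts = Counter(tokens)
        # One row per text, one column per vocabulary word.
        rows.append([counts[word] for word in vocabulary])
    return vocabulary, rows


# Toy texts that are already segmented and joined with spaces.
texts = ["免费 发票 免费", "会议 通知", "发票 通知"]
vocabulary, rows = count_matrix(texts)

for text, row in zip(texts, rows):
    print(text, dict(zip(vocabulary, row)))
```

Each distinct word becomes one column, which is why a real corpus quickly reaches thousands of features.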

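Keep only the frequently occurring words

# total count of every word across all emails
features = pd.DataFrame(textmatrix.apply(sum, axis=0))
# keep the words whose total count is greater than 5
extractedfeatures = [features.index[i] for i in range(features.shape[0]) if features.iloc[i,0] > 5]
textmatrix = textmatrix[extractedfeatures]
print("There are ", textmatrix.shape[1], "word features")

This keeps the 789 words whose total count is greater than 5. The data is then split into a test set and a training set at a ratio of 0.2:0.8.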
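Before the split, the frequency filter can be illustrated on a toy table. This is a minimal sketch with made-up counts; the helper `keep_frequent_columns` is hypothetical and stores each column as a plain list instead of a DataFrame column.

```python
def keep_frequent_columns(columns, min_total=5):
    """Return only the columns whose counts sum to more than min_total."""
    return {name: counts for name, counts in columns.items() if sum(counts) > min_total}


# Each key is a word, each list holds its count in three emails.
columns = {
    "免费": [3, 0, 4],  # total 7, kept
    "会议": [1, 1, 0],  # total 2, dropped
    "发票": [2, 2, 2],  # total 6, kept
}

filtered = keep_frequent_columns(columns)
print(list(filtered))  # ['免费', '发票']
```

With pandas, the same selection can be written as a boolean column mask, for example `textmatrix.loc[:, textmatrix.sum(axis=0) > 5]`.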

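train, test, trainlabel, testlabel = train_test_split(textmatrix, emailframe["type"], test_size=0.2)

Train the model with naive Bayes

# binarize is a numeric threshold: True acts as 1, so a feature counts as present only when its word count exceeds 1
clf = bayes.BernoulliNB(alpha=1, binarize=True)
model = clf.fit(train, trainlabel)

Score the model

model.score(test, testlabel)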
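For readers curious about what the classifier computes, here is a small pure-Python sketch of Bernoulli naive Bayes with additive smoothing. It is an illustration under simplifying assumptions, not scikit-learn's implementation: the inputs are already 0/1 features, and the names `train_bernoulli_nb`, `predict`, and `accuracy` are invented for this example.

```python
import math
from collections import defaultdict


def train_bernoulli_nb(rows, labels, alpha=1.0):
    """Estimate class priors and per-feature presence probabilities."""
    n_features = len(rows[0])
    class_count = defaultdict(int)
    feature_count = defaultdict(lambda: [0] * n_features)
    for row, label in zip(rows, labels):
        class_count[label] += 1
        for j, value in enumerate(row):
            if value:
                feature_count[label][j] += 1
    model = {}
    for label, count in class_count.items():
        # Smoothing: add alpha to the "present" count and 2 * alpha to the class total.
        probs = [(feature_count[label][j] + alpha) / (count + 2 * alpha)
                 for j in range(n_features)]
        model[label] = (math.log(count / len(rows)), probs)
    return model


def predict(model, row):
    """Return the label with the highest log posterior for one 0/1 row."""
    best_label, best_score = None, -math.inf
    for label, (log_prior, probs) in model.items():
        score = log_prior
        for value, p in zip(row, probs):
            # Absent features contribute too, through log(1 - p).
            score += math.log(p if value else 1.0 - p)
        if score > best_score:
            best_label, best_score = label, score
    return best_label


def accuracy(model, rows, labels):
    """Fraction of rows classified correctly (mean accuracy)."""
    hits = sum(predict(model, row) == label for row, label in zip(rows, labels))
    return hits / len(rows)


# Toy data: column 0 is a "spammy" word, column 1 a "normal" word.
rows = [[1, 0], [1, 1], [0, 1], [0, 1]]
labels = ["spam", "spam", "ham", "ham"]
model = train_bernoulli_nb(rows, labels, alpha=1.0)
print(predict(model, [1, 0]), accuracy(model, rows, labels))
```

The `alpha` term keeps every probability strictly between 0 and 1, so a word never seen in one class cannot zero out that class on its own. `model.score` in scikit-learn likewise reports mean accuracy on the data it is given.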