1. Virtual machine environment:

JDK: jdk1.8.0_171 (64-bit)

Scala: scala-2.12.6

Spark: spark-2.3.1-bin-hadoop2.7

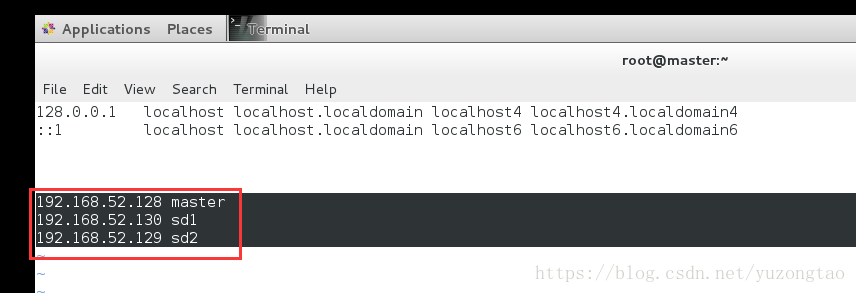

2. Cluster network environment:

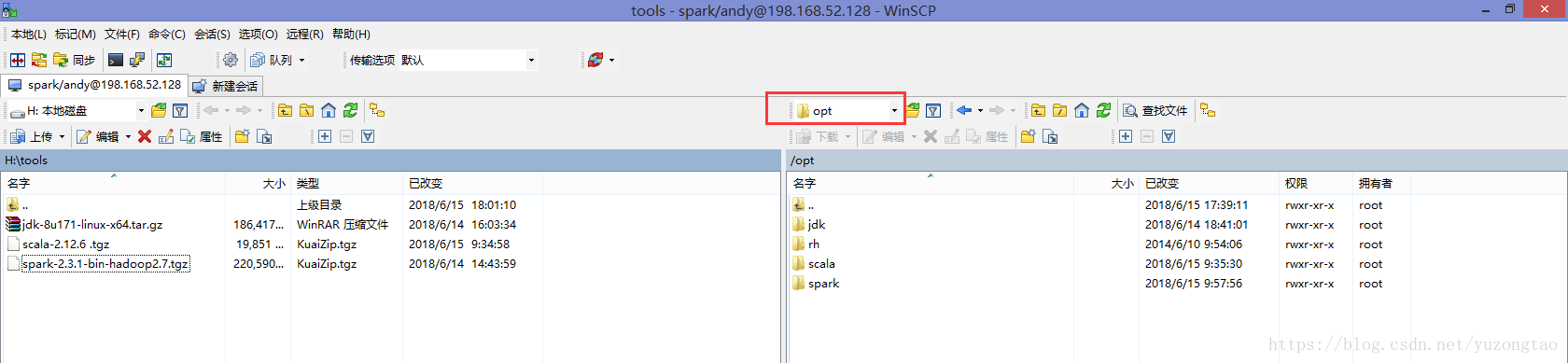

Use WinSCP to upload the JDK, Scala, and Spark installation packages to the corresponding folders created under /opt on the master host.

1) Install the JDK

Extract the package to /usr/local:

[root@master conf]# tar -xvf jdk-8u171-linux-x64.tar.gz -C /usr/local/

Edit the configuration file:

[root@master conf]# vi /etc/profile

#jdk

export JAVA_HOME=/usr/local/jdk1.8.0_171

export CLASSPATH=.:$JAVA_HOME/jre/lib/rt.jar:$JAVA_HOME/lib/dt.jar:$JAVA_HOME/lib/tools.jar

export PATH=$PATH:$JAVA_HOME/bin

2) Install Scala

Extract the Scala package to /usr/local:

[root@master conf]# tar -xvf scala-2.12.6.tgz -C /usr/local/

Edit the configuration file:

[root@master conf]# vi /etc/profile

#scala

export SCALA_HOME=/usr/local/scala-2.12.6

export PATH=$PATH:$SCALA_HOME/bin

3) Install Spark

Extract the package to /usr/local:

[root@master conf]# tar -xvf /opt/spark/spark-2.3.1-bin-hadoop2.7.tgz -C /usr/local

Edit the configuration file:

[root@master conf]# vi /etc/profile

#spark

export SPARK_HOME=/usr/local/spark-2.3.1-bin-hadoop2.7

export PATH=$PATH:$SPARK_HOME/bin

export SPARK_EXAMPLES_JAR=$SPARK_HOME/examples/jars/spark-examples_2.11-2.3.1.jar

Reload the profile so the new variables take effect in the current shell:

[root@master conf]# source /etc/profile

Configure Spark:

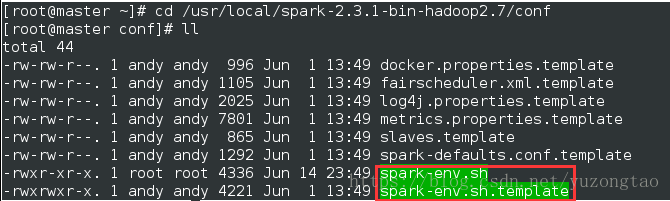

01. Edit spark-env.sh as follows:

Copy the template spark-env.sh.template to spark-env.sh:

[root@master conf]# cp spark-env.sh.template spark-env.sh

Open the file and append the following settings at the end:

[root@master conf]# vim spark-env.sh

SPARK_MASTER_IP=192.168.52.128

export JAVA_HOME=/usr/local/jdk1.8.0_171

export SCALA_HOME=/usr/local/scala-2.12.6

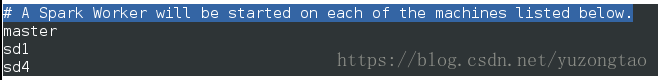
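In Spark 2.x, spark-env.sh.template documents SPARK_MASTER_HOST for choosing the address the standalone Master binds to; SPARK_MASTER_IP is the older name, which Spark reports as deprecated. A minimal sketch of the same settings using the newer variable, assuming the paths used above; SPARK_MASTER_PORT is optional and only makes the default port 7077 explicit:

```shell
# Appended to $SPARK_HOME/conf/spark-env.sh (sketch; same values as above)
export JAVA_HOME=/usr/local/jdk1.8.0_171
export SCALA_HOME=/usr/local/scala-2.12.6
# Address the standalone Master binds to and advertises to Workers
export SPARK_MASTER_HOST=192.168.52.128
# Default standalone Master port, stated explicitly
export SPARK_MASTER_PORT=7077
```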

02. Edit the slaves configuration file:

[root@master conf]# cp slaves.template slaves

[root@master conf]# vim slaves
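The copied slaves.template lists only localhost. For the two workers used in this guide, sd1 and sd2, a sketch that writes the file from the conf directory (where the prompt above is). Whether master should also run a Worker is not stated here, so it is left out:

```shell
#!/usr/bin/env bash
# Sketch: overwrite conf/slaves with one worker hostname per line.
# start-all.sh starts a Worker over SSH on every host listed in this file.
cat > slaves <<'EOF'
sd1
sd2
EOF
```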

03. Copy the files from master to sd1 and sd2:

[root@master conf]# scp -r /usr/local/spark-2.3.1-bin-hadoop2.7/conf root@sd1:/usr/local/spark-2.3.1-bin-hadoop2.7/

[root@master conf]# scp -r /usr/local/spark-2.3.1-bin-hadoop2.7/conf root@sd2:/usr/local/spark-2.3.1-bin-hadoop2.7/

Copying directories with scp:

(1) Copy a local directory to a remote host:

scp -r <directory> <user>@<host IP or hostname>:<remote path>

(2) Copy a remote directory back to the local host:

scp -r <user>@<host IP or hostname>:<directory> <local path>
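The two scp commands above generalize to a loop over the worker names. A minimal sketch, assuming Spark is already extracted at the same path on every worker (only conf is copied) and that sd1 and sd2 resolve from master; distribute_conf is a hypothetical helper name:

```shell
#!/usr/bin/env bash
# Sketch: push master's Spark conf directory to each worker given as an argument.
# Assumes Spark already lives at SPARK_DIR on every worker.
SPARK_DIR=/usr/local/spark-2.3.1-bin-hadoop2.7

# distribute_conf HOST...
distribute_conf() {
  local host
  for host in "$@"; do
    echo "Copying conf to ${host}..."
    # Same command as above, stopping at the first host that fails
    scp -r "${SPARK_DIR}/conf" "root@${host}:${SPARK_DIR}/" || return 1
  done
}
```

Usage on master: `distribute_conf sd1 sd2`.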

[root@master conf]# scp -r /usr/local/spark-2.3.1-bin-hadoop2.7/conf root@sd1:/usr/local/spark-2.3.1-bin-hadoop2.7/

spark-defaults.conf.template 100% 1292 1.3KB/s 00:00

slaves.template 100% 865 0.8KB/s 00:00

metrics.properties.template 100% 7801 7.6KB/s 00:00

spark-env.sh.template 100% 4221 4.1KB/s 00:00

fairscheduler.xml.template 100% 1105 1.1KB/s 00:00

log4j.properties.template 100% 2025 2.0KB/s 00:00

docker.properties.template 100% 996 1.0KB/s 00:00

spark-env.sh 100% 4221 4.1KB/s 00:00

slaves 100% 871 0.9KB/s 00:00

.slaves.swp

3. Passwordless SSH login:

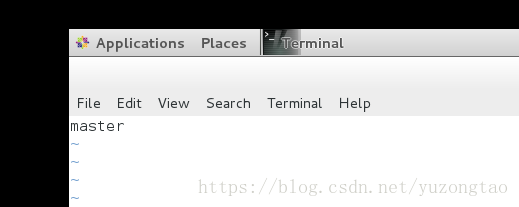

1) Change the hostname on each node:

[root@master ~]# vim /etc/hostname

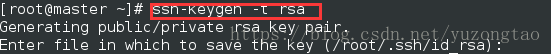
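The names master, sd1 and sd2 used by scp and ssh in this guide must resolve on every node. On CentOS 7, `hostnamectl set-hostname master` (sd1 or sd2 on the workers) also updates /etc/hostname without opening an editor. A sketch of a hypothetical helper that appends the name-to-address mappings to a hosts file; only master's address 192.168.52.128 appears in this guide, so the worker addresses are passed in as arguments:

```shell
#!/usr/bin/env bash
# Sketch: append cluster name/address pairs to a hosts file.
# sd1 and sd2 addresses are parameters because they are not given in the text.
add_cluster_hosts() {
  local hosts_file=$1 sd1_ip=$2 sd2_ip=$3
  if [ -z "$sd1_ip" ] || [ -z "$sd2_ip" ]; then
    echo "usage: add_cluster_hosts FILE SD1_IP SD2_IP" >&2
    return 1
  fi
  # printf reuses the format for each address/name pair
  printf '%s\t%s\n' \
    192.168.52.128 master \
    "$sd1_ip" sd1 \
    "$sd2_ip" sd2 >> "$hosts_file"
}
```

Run as root on each node, for example `add_cluster_hosts /etc/hosts <sd1-ip> <sd2-ip>`, substituting the addresses from the network diagram.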

2) Generate a key pair: run ssh-keygen -t rsa and press Enter at every prompt.

[root@master ~]# ssh-keygen -t rsa

Generating public/private rsa key pair. # generating the RSA key pair

Enter file in which to save the key (/root/.ssh/id_rsa): # where to store the keys; press Enter to accept the default

Enter passphrase (empty for no passphrase): # press Enter to leave it empty; a passphrase would be asked for on every login

Enter same passphrase again: # press Enter again to confirm

Your identification has been saved in /root/.ssh/id_rsa. # the private key is stored under /root/.ssh/

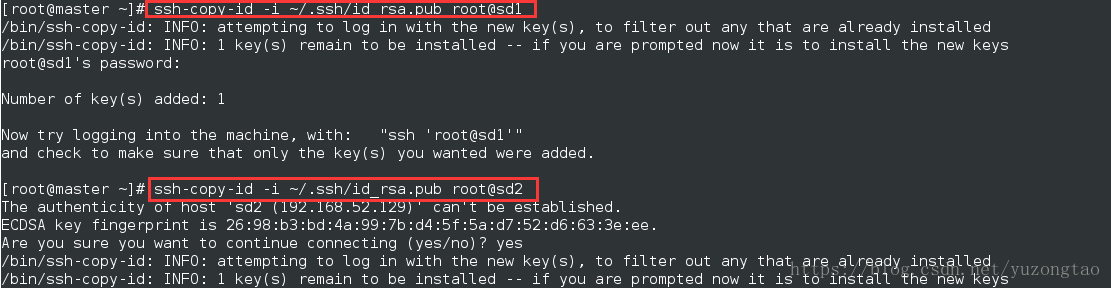

Your public key has been saved in /root/.ssh/id_rsa.pub.

3) Copy the id_rsa.pub file from the Spark master node to the other two nodes:

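[root@master ~]# scp ~/.ssh/id_rsa.pub root@sd1:~/.ssh/

[root@master ~]# scp ~/.ssh/id_rsa.pub root@sd2:~/.ssh/

Copying the file alone does not enable passwordless login. On sd1, and likewise on sd2, append the key to the authorized keys:

[root@sd1 ~]# cat ~/.ssh/id_rsa.pub >> ~/.ssh/authorized_keys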
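ssh-copy-id, which ships with OpenSSH, performs the copy-and-append step in one command and creates ~/.ssh on the remote side if it is missing. A sketch that does this for each worker and then checks the key login, assuming the key pair generated above and root access; setup_passwordless_ssh is a hypothetical helper name:

```shell
#!/usr/bin/env bash
# Sketch: install master's public key on each worker and verify key-based login.
setup_passwordless_ssh() {
  local host
  for host in "$@"; do
    # Appends the key to root@HOST:~/.ssh/authorized_keys (asks for the password once)
    ssh-copy-id -i ~/.ssh/id_rsa.pub "root@${host}" || return 1
    # BatchMode makes ssh fail instead of prompting, so this succeeds only if key login works
    ssh -o BatchMode=yes "root@${host}" hostname || return 1
  done
}
```

Usage on master: `setup_passwordless_ssh sd1 sd2`.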

4. Disable the firewall on CentOS 7:

# Check the firewall state

firewall-cmd --state

# Stop the firewall

systemctl stop firewalld.service

# Keep firewalld from starting at boot

systemctl disable firewalld.service
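These commands only affect the host they are run on. A minimal sketch for applying them to the workers from master, assuming the passwordless root SSH from section 3 is in place; disable_firewall_on is a hypothetical helper name:

```shell
#!/usr/bin/env bash
# Sketch: stop and disable firewalld on each host given as an argument.
disable_firewall_on() {
  local host
  for host in "$@"; do
    # Same two systemctl commands as above, run remotely
    ssh "root@${host}" 'systemctl stop firewalld.service && systemctl disable firewalld.service' || return 1
    # Prints "not running" once firewalld is stopped (non-zero exit is expected then)
    ssh "root@${host}" 'firewall-cmd --state' || true
  done
}
```

Usage: run the commands above locally on master, then `disable_firewall_on sd1 sd2`.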

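If the firewall is left running, connections from sd1 and sd2 to ports on master are blocked even though the hosts can ping each other, and the Workers will fail to connect to the Master when Hadoop or Spark is started.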
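Once the firewall is off, the cluster can be started from master and checked with the examples jar configured in /etc/profile. A sketch, assuming SPARK_HOME and SPARK_EXAMPLES_JAR are set as above (run `source /etc/profile` first) and that the Master is reachable at MASTER_URL; the default spark://master:7077 is an assumption, so replace it with the exact spark:// URL shown on the Master web UI (port 8080). start_and_check is a hypothetical helper name:

```shell
#!/usr/bin/env bash
# Sketch: start the standalone cluster from master and submit the bundled SparkPi job.
# MASTER_URL is an assumption; 7077 is the standalone default port.
MASTER_URL="${MASTER_URL:-spark://master:7077}"

start_and_check() {
  # Starts a Master on this host and, over SSH, a Worker on every host in conf/slaves
  "${SPARK_HOME}/sbin/start-all.sh" || return 1
  # Lists local JVMs; a "Master" entry should appear on master ("Worker" on sd1/sd2)
  jps
  # Runs the SparkPi example with 10 slices against the cluster
  spark-submit --class org.apache.spark.examples.SparkPi \
    --master "${MASTER_URL}" \
    "${SPARK_EXAMPLES_JAR}" 10
}
```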