Implementing a four-layer neural network

import numpy as np

# Define the activation function

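def sigmoid(x, deriv=False):
    # With deriv=True, x is expected to already be a sigmoid output s,
    # so the derivative s*(1-s) is computed directly from it.
    if deriv:
        return x * (1 - x)
    return 1 / (1 + np.exp(-x))

# Build the samples: 7 rows, 6 binary features each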

X = np.array([
    [1, 0, 1, 0, 1, 1],
    [1, 1, 1, 0, 1, 1],
    [1, 0, 1, 0, 0, 1],
    [1, 0, 0, 0, 0, 1],
    [1, 1, 1, 1, 1, 1],
    [0, 0, 1, 0, 1, 0],
    [0, 0, 1, 1, 1, 1]
])

# Build the labels

y = np.array([
    [1],
    [0],
    [0],
    [1],
    [1],
    [1],
    [0]
])

# Set a random seed

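np.random.seed(1)

# Randomly initialize the weights (Gaussian initialization or uniform 0-1 initialization are both options).
# Here each weight is drawn uniformly from [0, 2).
w0 = 2 * np.random.random((6, 5))
w1 = 2 * np.random.random((5, 7))
w2 = 2 * np.random.random((7, 1))

# Build the network; this runs 60000 iterations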

for i in range(60000):
    # Forward pass
    L0 = X
    L1 = sigmoid(L0.dot(w0))
    L2 = sigmoid(L1.dot(w1))
    L3 = sigmoid(L2.dot(w2))

    # Compute the error
    L3_error = L3 - y

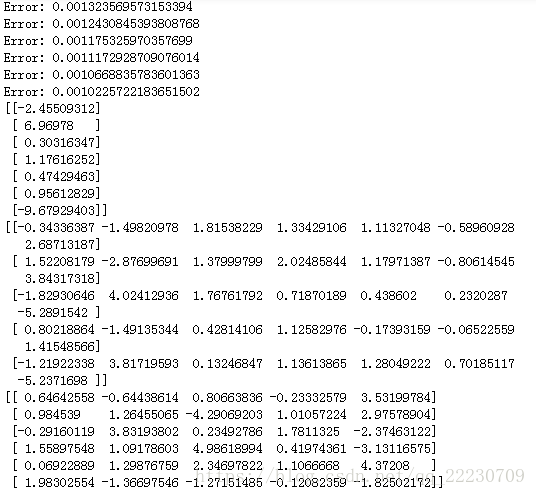

    if i % 10000 == 0:  # print the error every 10000 iterations
        print("Error: " + str(np.mean(np.abs(L3_error))))

    # Backpropagation
    L3_delta = L3_error * sigmoid(L3, deriv=True)
    L2_error = L3_delta.dot(w2.T)
    L2_delta = L2_error * sigmoid(L2, deriv=True)
    L1_error = L2_delta.dot(w1.T)
    L1_delta = L1_error * sigmoid(L1, deriv=True)
    # L0 is the raw input, so the two values below are not used by any weight update
    L0_error = L1_delta.dot(w0.T)
    L0_delta = L0_error * sigmoid(L0, deriv=True)

    # Update the weights (gradient step with an implicit learning rate of 1)
    w2 -= L2.T.dot(L3_delta)
    w1 -= L1.T.dot(L2_delta)
    w0 -= L0.T.dot(L1_delta)
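One way to read the three updates above is as plain gradient descent with step size 1 on half the summed squared error, `0.5 * sum((L3 - y)**2)`. The post does not name a loss, so that choice is an assumption. The sketch below checks this reading numerically: it recomputes the same deltas and compares them against central finite differences. The helper names (`forward`, `loss`, `backprop_grads`, `numeric_grad`) and the random test data are hypothetical, not part of the original code.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def forward(X, w0, w1, w2):
    # Same three-matrix forward pass as in the listing
    L1 = sigmoid(X.dot(w0))
    L2 = sigmoid(L1.dot(w1))
    L3 = sigmoid(L2.dot(w2))
    return L1, L2, L3

def loss(X, y, w0, w1, w2):
    # Assumed loss: half the summed squared error (not stated in the post)
    _, _, L3 = forward(X, w0, w1, w2)
    return 0.5 * np.sum((L3 - y) ** 2)

def backprop_grads(X, y, w0, w1, w2):
    # Same deltas as the listing, with s*(1-s) written out explicitly
    L1, L2, L3 = forward(X, w0, w1, w2)
    L3_delta = (L3 - y) * L3 * (1 - L3)
    L2_delta = L3_delta.dot(w2.T) * L2 * (1 - L2)
    L1_delta = L2_delta.dot(w1.T) * L1 * (1 - L1)
    return X.T.dot(L1_delta), L1.T.dot(L2_delta), L2.T.dot(L3_delta)

def numeric_grad(f, w, eps=1e-6):
    # Central finite differences; w is perturbed in place and restored
    g = np.zeros_like(w)
    for idx in np.ndindex(*w.shape):
        old = w[idx]
        w[idx] = old + eps
        plus = f()
        w[idx] = old - eps
        minus = f()
        w[idx] = old
        g[idx] = (plus - minus) / (2 * eps)
    return g

# Random stand-in data with the same shapes as the listing (7x6 inputs, 7x1 labels)
rng = np.random.RandomState(0)
X = rng.randint(0, 2, size=(7, 6)).astype(float)
y = rng.randint(0, 2, size=(7, 1)).astype(float)
w0 = rng.uniform(-1, 1, (6, 5))
w1 = rng.uniform(-1, 1, (5, 7))
w2 = rng.uniform(-1, 1, (7, 1))

g0, g1, g2 = backprop_grads(X, y, w0, w1, w2)
f = lambda: loss(X, y, w0, w1, w2)
max_diff = max(
    np.max(np.abs(g0 - numeric_grad(f, w0))),
    np.max(np.abs(g1 - numeric_grad(f, w1))),
    np.max(np.abs(g2 - numeric_grad(f, w2))),
)
print("largest analytic vs. numeric difference:", max_diff)
```

If the printed difference is tiny, the deltas match the gradient of that assumed loss, and `w -= gradient` is a descent step with learning rate 1. Multiplying each update by a smaller factor would be the usual way to introduce an explicit learning rate.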

# Print the trained weights
print(w2)
print(w1)
print(w0)

Result: