    import csv
    import re
    import threading
    from queue import Empty, Queue

    import requests
    from lxml import etree


    class Producer(threading.Thread):
        """Fetches listing pages and queues (title, content, href) tuples."""

        headers = {'User-Agent': 'Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/68.0.3440.75 Safari/537.36'}

        def __init__(self, page_queue, poem_queue, *args, **kwargs):
            super().__init__(*args, **kwargs)
            self.page_queue = page_queue
            self.poem_queue = poem_queue

        def run(self):
            while True:
                # get_nowait() avoids the race between empty() and get()
                # when several producers drain the same queue.
                try:
                    url = self.page_queue.get_nowait()
                except Empty:
                    break
                try:
                    self.parse_html(url)
                except requests.RequestException as exc:
                    # One failed page should not stop this producer.
                    print('skipping %s: %s' % (url, exc))

        def parse_html(self, url):
            response = requests.get(url, headers=self.headers, timeout=10)
            response.raise_for_status()
            html_element = etree.HTML(response.text)
            titles = html_element.xpath('//div[@class="cont"]//b/text()')
            contents = html_element.xpath('//div[@class="contson"]')
            hrefs = html_element.xpath('//div[@class="cont"]/p[1]/a/@href')
            for index, content in enumerate(contents):
                title = titles[index]
                # Serialize the node, then strip tags, newlines and
                # full-width indentation to leave plain text.
                content = etree.tostring(content, encoding='utf-8').decode('utf-8')
                content = re.sub(r'<.*?>|\n', '', content)
                content = re.sub(r'\u3000\u3000', '', content)
                content = content.strip()
                href = hrefs[index]
                self.poem_queue.put((title, content, href))


    class Consumer(threading.Thread):
        """Writes queued poems to the shared CSV writer."""

        def __init__(self, poem_queue, writer, gLock, *args, **kwargs):
            super().__init__(*args, **kwargs)
            self.writer = writer
            self.poem_queue = poem_queue
            self.lock = gLock

        def run(self):
            while True:
                try:
                    row = self.poem_queue.get(timeout=20)
                except Empty:
                    # Nothing arrived for 20 seconds: assume producers are done.
                    break
                # Serialize writes so rows from different threads do not interleave.
                with self.lock:
                    self.writer.writerow(row)


    def main():
        page_queue = Queue(100)
        poem_queue = Queue(500)
        gLock = threading.Lock()

        for x in range(1, 100):
            url = 'https://www.gushiwen.org/shiwen/default.aspx?page=%d&type=0&id=0' % x
            page_queue.put(url)

        # Mode 'a' appends, so the header row is written again on every run.
        with open('poem.csv', 'a', newline='', encoding='utf-8') as fp:
            writer = csv.writer(fp)
            writer.writerow(('title', 'content', 'href'))

            threads = [Producer(page_queue, poem_queue) for _ in range(5)]
            threads += [Consumer(poem_queue, writer, gLock) for _ in range(5)]
            for t in threads:
                t.start()
            # Wait for every thread so the file closes only after the last write.
            for t in threads:
                t.join()


    if __name__ == '__main__':
        main()

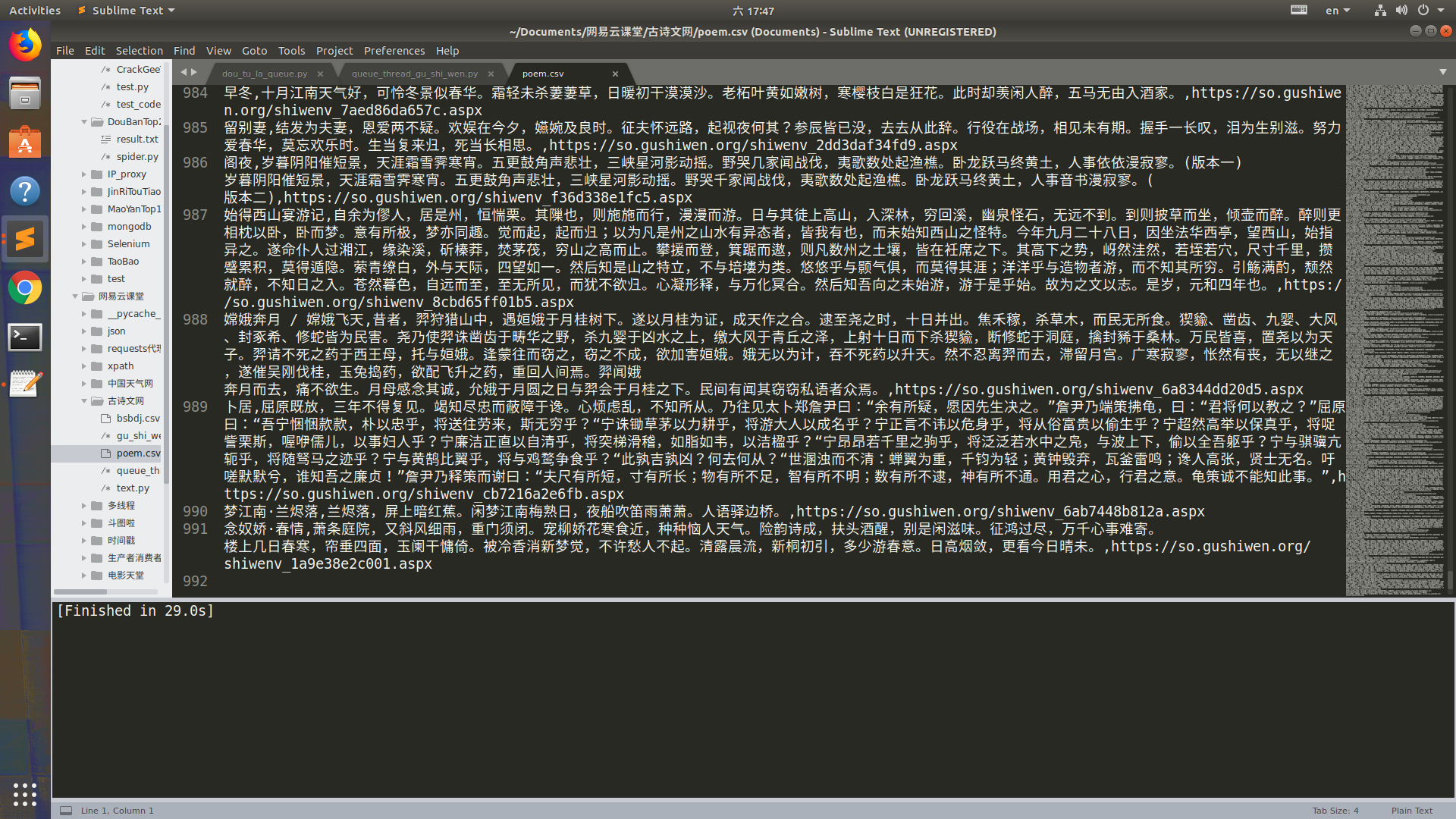

Run result
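
The consumers in the script decide that the work is finished only after `poem_queue.get(timeout=20)` has waited 20 seconds without receiving anything. One possible alternative, sketched here without any network access, is to push a sentinel value for each consumer once all producers have finished. The names `SENTINEL`, `produce`, `consume`, and `run_pipeline` are hypothetical and not part of the original script, and the fake rows merely stand in for what `parse_html` would queue.

```python
import threading
from queue import Queue

SENTINEL = None  # hypothetical marker meaning "no more items"


def produce(pages, out_queue):
    """Stand-in for Producer.parse_html: emit one fake row per page."""
    for page in pages:
        out_queue.put((f"title-{page}", f"content-{page}", f"/href/{page}"))


def consume(in_queue, rows, lock):
    """Collect rows until the sentinel arrives, then stop immediately."""
    while True:
        item = in_queue.get()
        if item is SENTINEL:
            break
        with lock:
            rows.append(item)


def run_pipeline(page_count=10, producers=2, consumers=3):
    queue = Queue()
    rows, lock = [], threading.Lock()
    pages = list(range(1, page_count + 1))
    # Split the pages round-robin across the producers.
    producer_threads = [
        threading.Thread(target=produce, args=(pages[i::producers], queue))
        for i in range(producers)
    ]
    consumer_threads = [
        threading.Thread(target=consume, args=(queue, rows, lock))
        for _ in range(consumers)
    ]
    for t in producer_threads + consumer_threads:
        t.start()
    for t in producer_threads:
        t.join()
    # Every real item is queued by now; send one sentinel per consumer.
    for _ in consumer_threads:
        queue.put(SENTINEL)
    for t in consumer_threads:
        t.join()
    return rows


if __name__ == "__main__":
    print(len(run_pipeline()))
```

With this arrangement the consumers exit as soon as the queue has been drained instead of idling for the timeout, at the cost of the main thread having to join the producers first.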