I. Overview of model files

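A TensorFlow model mainly consists of the network design (the graph) and the values of the trained network parameters, so it is saved as two kinds of files: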

a) meta graph: stores the TensorFlow graph, i.e. all variables, operations, collections, and so on. This file has the extension .meta.

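b) checkpoint: a binary file that holds the values of all weights, biases, and other saved variables. This file has the extension .ckpt. However, TensorFlow changed this in version 0.11: instead of a single .ckpt file, there are now two files:

mymodel.data-00000-of-00001: contains the values of the trained variables

mymodel.index: the index that maps each saved variable to its location in the data file(s)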

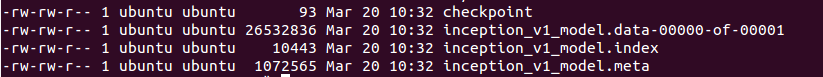

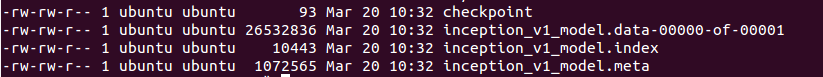

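So from version 0.11 on, a saved model consists of 4 files (the fourth, a small text file named checkpoint, records the most recent checkpoint files).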

Before version 0.11 there were three files:

checkpoint

inception_v1.meta

inception_v1.ckpt
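To see the four files for yourself, here is a minimal sketch. It assumes TensorFlow 1.15 or 2.x, where the 1.x API used throughout this post is available as tensorflow.compat.v1, and it writes into a throwaway directory created with tempfile (not part of the original example):

```python
import os
import tempfile

import tensorflow.compat.v1 as tf

tf.disable_v2_behavior()  # TF 1.x graph/Session semantics

ckpt_dir = tempfile.mkdtemp()  # illustrative output directory

tf.reset_default_graph()
w1 = tf.Variable(tf.random_normal(shape=[2]), name="w1")
w2 = tf.Variable(tf.random_normal(shape=[5]), name="w2")
saver = tf.train.Saver()

with tf.Session() as sess:
    sess.run(tf.global_variables_initializer())
    saver.save(sess, os.path.join(ckpt_dir, "my_test_model"))

# List what the Saver wrote to disk.
files = sorted(os.listdir(ckpt_dir))
print(files)
```

The listing should contain the three my_test_model.* files (.data-00000-of-00001, .index, .meta) plus the checkpoint file.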

II. Saving a model (save)

1. In TensorFlow 1.x, variable values exist only inside a session (tf.Session), so the model has to be saved from within a session:

import tensorflow as tf

w1 = tf.Variable(tf.random_normal(shape=[2]), name='w1')

w2 = tf.Variable(tf.random_normal(shape=[5]), name='w2')

saver = tf.train.Saver()

sess = tf.Session()

sess.run(tf.global_variables_initializer())

saver.save(sess, 'my_test_model')

# This saves the following files in TensorFlow >= 0.11:

# my_test_model.data-00000-of-00001

# my_test_model.index

# my_test_model.meta

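# checkpoint

2. Save with a global step, e.g. once every 1000 iterations. The global_step argument only appends the step number to the file names; calling save every 1000 steps is up to your training loop.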

saver.save(sess, 'my_test_model',global_step=1000)

The resulting files:

my_test_model-1000.index

my_test_model-1000.meta

my_test_model-1000.data-00000-of-00001

checkpoint
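A sketch of the save-every-1000-steps pattern, under the same tensorflow.compat.v1 assumption; the loop body is a hypothetical stand-in for real training steps:

```python
import glob
import os
import tempfile

import tensorflow.compat.v1 as tf

tf.disable_v2_behavior()  # TF 1.x graph/Session semantics

ckpt_dir = tempfile.mkdtemp()
prefix = os.path.join(ckpt_dir, "my_test_model")

tf.reset_default_graph()
w1 = tf.Variable(tf.random_normal(shape=[2]), name="w1")
saver = tf.train.Saver()

with tf.Session() as sess:
    sess.run(tf.global_variables_initializer())
    for step in range(1, 3001):
        # ... one training step would run here (hypothetical) ...
        if step % 1000 == 0:
            # global_step only appends "-<step>" to the file names
            saver.save(sess, prefix, global_step=step)

# The "checkpoint" file tracks the newest prefix.
latest = tf.train.latest_checkpoint(ckpt_dir)
index_files = sorted(os.path.basename(p) for p in glob.glob(prefix + "-*.index"))
print(os.path.basename(latest))
print(index_files)
```

tf.train.latest_checkpoint reads the checkpoint file, which is why restoring code later in this post can simply pass a directory such as './'.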

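3. The .meta file does not change as long as the graph stays the same, so if you do not want to write it on every save (i.e. keep only one .meta file), pass write_meta_graph=False: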

saver.save(sess, 'my-model', global_step=step, write_meta_graph=False)

4. If you want to keep only the 4 most recent models and, in addition, keep one model for every 2 hours of training, use max_to_keep and keep_checkpoint_every_n_hours:

# Keep at most the 4 most recent checkpoints, plus one checkpoint per 2 hours of training that is never deleted.

saver = tf.train.Saver(max_to_keep=4, keep_checkpoint_every_n_hours=2)

5. To save only selected variables, pass them to tf.train.Saver as a list (or a dict mapping names to variables):

import tensorflow as tf

w1 = tf.Variable(tf.random_normal(shape=[2]), name='w1')

w2 = tf.Variable(tf.random_normal(shape=[5]), name='w2')

saver = tf.train.Saver([w1,w2])

sess = tf.Session()

sess.run(tf.global_variables_initializer())

saver.save(sess, 'my_test_model',global_step=1000)
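Items 3, 4, and 5 are often combined in one training loop. The sketch below (same tensorflow.compat.v1 assumption; the my-model prefix and the six-step loop are illustrative) saves only w1, keeps at most 4 checkpoints, and writes the meta graph once with Saver.export_meta_graph instead of on every save:

```python
import glob
import os
import tempfile

import tensorflow.compat.v1 as tf

tf.disable_v2_behavior()  # TF 1.x graph/Session semantics

ckpt_dir = tempfile.mkdtemp()
prefix = os.path.join(ckpt_dir, "my-model")

tf.reset_default_graph()
w1 = tf.Variable(tf.random_normal(shape=[2]), name="w1")
w2 = tf.Variable(tf.random_normal(shape=[5]), name="w2")

# Item 5: only w1 is passed in, so w2 is not written to the checkpoints.
# Item 4: keep the 4 newest checkpoints; keep_checkpoint_every_n_hours=2 would
# additionally spare one checkpoint per 2 hours (never reached in this short run).
saver = tf.train.Saver([w1], max_to_keep=4, keep_checkpoint_every_n_hours=2)

with tf.Session() as sess:
    sess.run(tf.global_variables_initializer())
    # Item 3: the graph does not change, so write the meta graph once ...
    saver.export_meta_graph(prefix + ".meta")
    for step in range(1, 7):
        # ... and skip it on every checkpoint.
        saver.save(sess, prefix, global_step=step, write_meta_graph=False)

index_files = sorted(os.path.basename(p) for p in glob.glob(prefix + "-*.index"))
meta_files = sorted(os.path.basename(p) for p in glob.glob(os.path.join(ckpt_dir, "*.meta")))
saved_names = [name for name, _ in tf.train.list_variables(ckpt_dir)]
print(index_files, meta_files, saved_names)
```

Because the meta graph is exported under a name without a step suffix, pruning old checkpoints under max_to_keep does not touch it. tf.train.list_variables is used only to confirm that w2 was left out.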

III. Restoring a pre-trained model (restore)

1. Using a pre-trained model for fine-tuning

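a) Create the network. You can write it by hand, or rebuild it from the .meta file; in both cases, remember that the parameters must still be loaded separately: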

saver = tf.train.import_meta_graph('my_test_model-1000.meta')

b) Load the parameters:

with tf.Session() as sess:

    new_saver = tf.train.import_meta_graph('my_test_model-1000.meta')

    new_saver.restore(sess, tf.train.latest_checkpoint('./'))

2. When only some variables were saved (for example w1 and w2), restore them the same way and fetch them by tensor name:

with tf.Session() as sess:

    saver = tf.train.import_meta_graph('my_test_model-1000.meta')

    saver.restore(sess, tf.train.latest_checkpoint('./'))

    print(sess.run('w1:0'))

##Model has been restored. Above statement will print the saved value of w1.
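A self-contained round trip for this section, again assuming tensorflow.compat.v1 and a temporary directory: it saves w1 and w2, then restores them in both ways described in item 1a (rebuilding the graph from the .meta file, and rebuilding it by hand with the same variable names):

```python
import os
import tempfile

import numpy as np
import tensorflow.compat.v1 as tf

tf.disable_v2_behavior()  # TF 1.x graph/Session semantics

ckpt_dir = tempfile.mkdtemp()
prefix = os.path.join(ckpt_dir, "my_test_model")

# Save w1 and w2 at global_step=1000 (as in section II, item 5).
tf.reset_default_graph()
w1 = tf.Variable(tf.random_normal(shape=[2]), name="w1")
w2 = tf.Variable(tf.random_normal(shape=[5]), name="w2")
with tf.Session() as sess:
    sess.run(tf.global_variables_initializer())
    saved_w1 = sess.run(w1)
    tf.train.Saver([w1, w2]).save(sess, prefix, global_step=1000)

# Rebuild the graph from the .meta file, then load the values.
tf.reset_default_graph()
with tf.Session() as sess:
    saver = tf.train.import_meta_graph(prefix + "-1000.meta")
    saver.restore(sess, tf.train.latest_checkpoint(ckpt_dir))
    from_meta = sess.run("w1:0")

# Rebuild the graph by hand; variable names must match the checkpoint.
tf.reset_default_graph()
w1 = tf.Variable(tf.zeros(shape=[2]), name="w1")
w2 = tf.Variable(tf.zeros(shape=[5]), name="w2")
with tf.Session() as sess:
    # No initializer needed: restore assigns every variable the Saver covers.
    tf.train.Saver().restore(sess, tf.train.latest_checkpoint(ckpt_dir))
    by_hand = sess.run(w1)

print(np.allclose(from_meta, saved_w1), np.allclose(by_hand, saved_w1))
```

The hand-built graph starts from zeros, so matching values show that restore, not initialization, supplied them.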

IV. Restoring any pre-trained model and using it for prediction, fine-tuning, or further training

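1. When working with TensorFlow, you define a graph that is fed training data and some hyperparameters, such as the learning rate and the global step. The standard practice is to feed all training data and hyperparameters through placeholders. Let's build a small network with placeholders and save it. Note that the values fed into placeholders are not saved along with the network.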

import tensorflow as tf

#Prepare to feed input, i.e. feed_dict and placeholders

w1 = tf.placeholder("float", name="w1")

w2 = tf.placeholder("float", name="w2")

b1= tf.Variable(2.0,name="bias")

feed_dict ={w1:4,w2:8}

#Define a test operation that we will restore

w3 = tf.add(w1,w2)

w4 = tf.multiply(w3,b1,name="op_to_restore")

sess = tf.Session()

sess.run(tf.global_variables_initializer())

#Create a saver object which will save all the variables

saver = tf.train.Saver()

#Run the operation by feeding input

print(sess.run(w4,feed_dict))

# Prints 24.0, i.e. (w1 + w2) * b1 = (4 + 8) * 2

#Now, save the graph

saver.save(sess, 'my_test_model',global_step=1000)

2. References to these saved operations and placeholders can be obtained with graph.get_tensor_by_name():

graph = tf.get_default_graph()

# How to access saved variables/tensors/placeholders

w1 = graph.get_tensor_by_name("w1:0")

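# How to access a saved operation: "op_to_restore:0" is the first output tensor of the op
# (graph.get_operation_by_name("op_to_restore") would return the operation itself)

op_to_restore = graph.get_tensor_by_name("op_to_restore:0")

3. If you only want to run the same network on different data, simply feed the new data through feed_dict: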

import tensorflow as tf

sess=tf.Session()

#First let's load meta graph and restore weights

saver = tf.train.import_meta_graph('my_test_model-1000.meta')

saver.restore(sess,tf.train.latest_checkpoint('./'))

# Now, let's access and create placeholders variables and

# create feed-dict to feed new data

graph = tf.get_default_graph()

w1 = graph.get_tensor_by_name("w1:0")

w2 = graph.get_tensor_by_name("w2:0")

feed_dict ={w1:13.0,w2:17.0}

#Now, access the op that you want to run.

op_to_restore = graph.get_tensor_by_name("op_to_restore:0")

print(sess.run(op_to_restore,feed_dict))

#This will print 60 which is calculated

# using the new values of w1 and w2 and the saved value of b1: (13 + 17) * 2

4. If you want to add more operations to the graph:

import tensorflow as tf

sess=tf.Session()

#First let's load meta graph and restore weights

saver = tf.train.import_meta_graph('my_test_model-1000.meta')

saver.restore(sess,tf.train.latest_checkpoint('./'))

# Now, let's access and create placeholders variables and

# create feed-dict to feed new data

graph = tf.get_default_graph()

w1 = graph.get_tensor_by_name("w1:0")

w2 = graph.get_tensor_by_name("w2:0")

feed_dict ={w1:13.0,w2:17.0}

#Now, access the op that you want to run.

op_to_restore = graph.get_tensor_by_name("op_to_restore:0")

#Add more to the current graph

add_on_op = tf.multiply(op_to_restore,2)

print(sess.run(add_on_op,feed_dict))

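# This will print 120.0

5. To reuse part of an old graph for fine-tuning, access the appropriate operation with graph.get_tensor_by_name() and build the new part of the graph on top of it. Here is a real example: we load a pre-trained VGG network from its meta graph and change the number of outputs in the last layer to 2, so that it can be fine-tuned on new data.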

......

......

saver = tf.train.import_meta_graph('vgg.meta')

# Access the graph

graph = tf.get_default_graph()

## Prepare the feed_dict for feeding data for fine-tuning

#Access the appropriate output for fine-tuning

fc7= graph.get_tensor_by_name('fc7:0')

#use this if you only want to change gradients of the last layer

fc7 = tf.stop_gradient(fc7) # It's an identity function

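fc7_shape= fc7.get_shape().as_list()

# Flatten to [batch, channels]; assumes fc7 is either [batch, C] or [batch, 1, 1, C]

fc7 = tf.reshape(fc7, [-1, fc7_shape[-1]])

num_outputs=2

weights = tf.Variable(tf.truncated_normal([fc7_shape[-1], num_outputs], stddev=0.05))

biases = tf.Variable(tf.constant(0.05, shape=[num_outputs]))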

output = tf.matmul(fc7, weights) + biases

pred = tf.nn.softmax(output)

# Now, run this with fine-tuning data in sess.run()

Adapted from: http://cv-tricks.com/tensorflow-tutorial/save-restore-tensorflow-models-quick-complete-tutorial/
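The placeholder workflow of section IV and the fine-tuning pattern of item 5 can be checked end to end with the sketch below. It assumes tensorflow.compat.v1; since vgg.meta is not available here, a tiny hypothetical graph (x, w_old, and a tensor named fc7) stands in for the pretrained VGG layers:

```python
import os
import tempfile

import numpy as np
import tensorflow.compat.v1 as tf

tf.disable_v2_behavior()  # TF 1.x graph/Session semantics

ckpt_dir = tempfile.mkdtemp()
prefix = os.path.join(ckpt_dir, "my_test_model")

# Item 1: a small graph fed through placeholders; only the variable "bias" is saved.
tf.reset_default_graph()
w1 = tf.placeholder(tf.float32, name="w1")
w2 = tf.placeholder(tf.float32, name="w2")
b1 = tf.Variable(2.0, name="bias")
w4 = tf.multiply(tf.add(w1, w2), b1, name="op_to_restore")
with tf.Session() as sess:
    sess.run(tf.global_variables_initializer())
    first = sess.run(w4, {w1: 4.0, w2: 8.0})            # (4 + 8) * 2
    tf.train.Saver().save(sess, prefix, global_step=1000)
saved_names = [name for name, _ in tf.train.list_variables(ckpt_dir)]

# Items 2-4: restore into a fresh graph, feed new data, then extend the graph.
tf.reset_default_graph()
with tf.Session() as sess:
    saver = tf.train.import_meta_graph(prefix + "-1000.meta")
    saver.restore(sess, tf.train.latest_checkpoint(ckpt_dir))
    graph = tf.get_default_graph()
    feed = {graph.get_tensor_by_name("w1:0"): 13.0,
            graph.get_tensor_by_name("w2:0"): 17.0}
    op_to_restore = graph.get_tensor_by_name("op_to_restore:0")
    restored = sess.run(op_to_restore, feed)             # (13 + 17) * 2
    add_on_op = tf.multiply(op_to_restore, 2.0)          # new op added after restoring
    extended = sess.run(add_on_op, feed)

# Item 5: freeze a stand-in "fc7" and put a new 2-way layer on top.
tf.reset_default_graph()
x = tf.placeholder(tf.float32, shape=[None, 3], name="x")   # hypothetical input
w_old = tf.Variable(tf.ones([3, 4]), name="w_old")          # hypothetical "pretrained" weights
tf.matmul(x, w_old, name="fc7")                             # stand-in for VGG's fc7
fc7 = tf.get_default_graph().get_tensor_by_name("fc7:0")
fc7 = tf.stop_gradient(fc7)
fc7_shape = fc7.get_shape().as_list()                       # [None, 4]
num_outputs = 2
weights = tf.Variable(tf.truncated_normal([fc7_shape[-1], num_outputs], stddev=0.05))
biases = tf.Variable(tf.constant(0.05, shape=[num_outputs]))
output = tf.matmul(fc7, weights) + biases
pred = tf.nn.softmax(output)
grads = tf.gradients(tf.reduce_sum(output), [w_old, weights])
with tf.Session() as sess:
    sess.run(tf.global_variables_initializer())
    probs = sess.run(pred, {x: np.array([[1.0, 2.0, 3.0]], dtype=np.float32)})

print(first, restored, extended)
print(grads[0] is None, probs.shape)
```

The gradient with respect to w_old comes back as None, which shows that tf.stop_gradient keeps training of the new layer from updating the layers below fc7.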