预训练和微调代码

数据集:CIFAR10

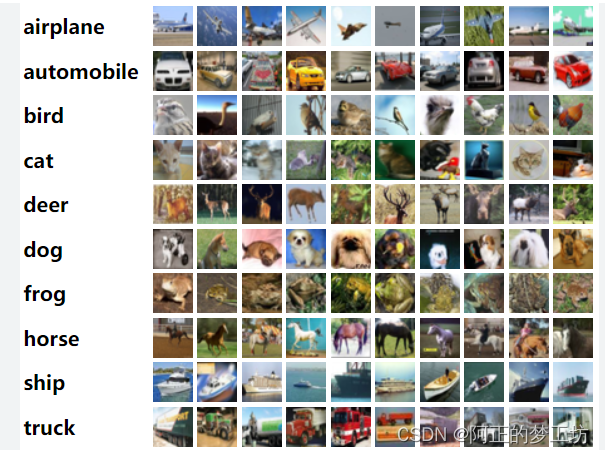

CIFAR-10数据集由10类32x32的彩色图片组成,一共包含60000张图片,每一类包含6000图片。其中50000张图片作为训练集,10000张图片作为测试集。数据集介绍来自:CIFAR10

图片来源:https://paperswithcode.com/dataset/cifar-10

预训练模型: vgg16

代码

# Imports

import torch

import torchvision

import torch.nn as nn # All neural network modules, nn.Linear, nn.Conv2d, BatchNorm, Loss functions

import torch.optim as optim # For all Optimization algorithms, SGD, Adam, etc.

import torch.nn.functional as F # All functions that don't have any parameters

from torch.utils.data import (

DataLoader,

) # Gives easier dataset managment and creates mini batches

import torchvision.datasets as datasets # Has standard datasets we can import in a nice way

import torchvision.transforms as transforms # Transformations we can perform on our dataset

from tqdm import tqdm

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

# Hyperparameters

num_classes = 10

learning_rate = 1e-3

batch_size = 1024

num_epochs = 2

class Identity(nn.Module):

def __init__(self):

super(Identity, self).__init__()

def forward(self, x):

return x

# Load pretrain model & modify it

model = torchvision.models.vgg16(weights='DEFAULT')

# # If you want to do finetuning then set requires_grad = False

# # Remove these two lines if you want to train entire model,

# # and only want to load the pretrain weights.

# for param in model.parameters():

# param.requires_grad = False

for param in model.parameters():

param.requires_grad = False

model.avgpool = Identity() # 站位层,使得该层啥事不做

model.classifier = nn.Sequential(nn.Linear(512, 100),

nn.ReLU(),

nn.Linear(100, 10)) # 修改原模型的后几层

model.to(device)

# Load Data

train_dataset = datasets.CIFAR10(

root="dataset/",

train=True,

transform=transforms.ToTensor(),

download=True)

train_loader = DataLoader(dataset=train_dataset, batch_size=batch_size, shuffle=True)

# Loss and optimizer

criterion = nn.CrossEntropyLoss()

optimizer = optim.Adam(model.parameters(), lr=learning_rate)

# Train Network

for epoch in range(num_epochs):

losses = []

for batch_idx, (data, targets) in enumerate(tqdm(train_loader)):

# Get data to cuda if possible

data = data.to(device=device)

targets = targets.to(device=device)

# forward

scores = model(data)

loss = criterion(scores, targets)

losses.append(loss.item())

# backward

optimizer.zero_grad()

loss.backward()

# gradient descent or adam step

optimizer.step()

print(f"Cost at epoch {

epoch} is {

sum(losses)/len(losses):.5f}")

# Check accuracy on training & test to see how good our model

def check_accuracy(loader, model):

if loader.dataset.train:

print("Checking accuracy on training data")

else:

print("Checking accuracy on test data")

num_correct = 0

num_samples = 0

model.eval()

with torch.no_grad():

for x, y in loader:

x = x.to(device=device)

y = y.to(device=device)

scores = model(x)

_, predictions = scores.max(1)

num_correct += (predictions == y).sum()

num_samples += predictions.size(0)

print(

f"Got {

num_correct} / {

num_samples} with accuracy {

float(num_correct)/float(num_samples)*100:.2f}%"

)

model.train()

check_accuracy(train_loader, model)

测试结果

Checking accuracy on training data

Got 29449 / 50000 with accuracy 58.90%

可以看到本次预训练模型的导入,测试结果并不理想。但并不妨碍我们对Pytorch预训练和微调的学习。

参考来源

【1】 https://www.youtube.com/watch?v=qaDe0qQZ5AQ&list=PLhhyoLH6IjfxeoooqP9rhU3HJIAVAJ3Vz&index=8

【2】https://github.com/aladdinpersson/Machine-Learning-Collection/blob/master/ML/Pytorch/Basics/pytorch_pretrain_finetune.py