cat > flink-conf.yaml << EOF
jobmanager.rpc.address: boshi-146
jobmanager.rpc.port: 6123
jobmanager.bind-host: 0.0.0.0
jobmanager.memory.process.size: 1600m
taskmanager.bind-host: 0.0.0.0
taskmanager.host: 0.0.0.0
taskmanager.memory.process.size: 1728m
taskmanager.numberOfTaskSlots: 1
parallelism.default: 1
jobmanager.execution.failover-strategy: region
rest.address: boshi-146
rest.bind-address: 0.0.0.0
classloader.check-leaked-classloader: false
EOF

cat > workers << EOF
boshi-107
boshi-124
boshi-131
boshi-139
EOF

cat > masters << EOF
boshi-146:8081
EOF

ansible cluster -m file -a "path=/data/app state=directory"

ansible cluster -m copy -a "src=/data/app/flink-1.17.1 dest=/data/app/ owner=root group=root mode=0755"

taskmanager.host: hostname
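The `taskmanager.host` line above presumably means that, after the copy, each worker should replace the `0.0.0.0` value with its own hostname. A minimal sketch of that edit follows; the helper name `set_taskmanager_host` is hypothetical, and GNU `sed -i` is assumed.

```shell
# Hypothetical helper: set taskmanager.host in a Flink config file.
# Usage: set_taskmanager_host <conf-file> <hostname>
set_taskmanager_host() {
  local conf="$1" host="$2"
  if grep -q '^taskmanager\.host[[:space:]]*:' "$conf"; then
    # Rewrite the existing entry in place (GNU sed assumed).
    sed -i "s|^taskmanager\.host[[:space:]]*:.*|taskmanager.host: ${host}|" "$conf"
  else
    # No entry yet: append one.
    echo "taskmanager.host: ${host}" >> "$conf"
  fi
}
```

On a worker it could be called as `set_taskmanager_host /data/app/flink-1.17.1/conf/flink-conf.yaml "$(hostname)"`, where the `conf/` path is an assumption based on the standard Flink layout.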

bin/start-cluster.sh

http://boshi-146:8081
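After starting the cluster, the web UI may take a few seconds to come up. The sketch below polls a URL until it answers; the function name `wait_for_http` and its default retry values are illustrative assumptions, and `curl` is assumed to be installed.

```shell
# Hypothetical helper: poll a URL until it responds successfully.
# Usage: wait_for_http <url> [attempts] [delay-seconds]
wait_for_http() {
  local url="$1" attempts="${2:-30}" delay="${3:-2}" i=0
  while [ "$i" -lt "$attempts" ]; do
    # -f makes curl fail on HTTP errors; the response body is discarded.
    if curl -sf -o /dev/null --max-time 5 "$url"; then
      return 0
    fi
    i=$((i + 1))
    sleep "$delay"
  done
  return 1
}
```

For example, `wait_for_http http://boshi-146:8081 && echo "Flink UI is up"`.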

dfs.permissions=false

export HADOOP_CLASSPATH=`hadoop classpath`

/data/app/flink-1.17.1/bin/yarn-session.sh -d
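The YARN session depends on `HADOOP_CLASSPATH` being exported in the same shell. A small guard, sketched here with a hypothetical function name `check_hadoop_classpath`, can make a missing export fail early instead of surfacing later as class-loading errors.

```shell
# Hypothetical guard: succeed only when HADOOP_CLASSPATH is set and non-empty.
check_hadoop_classpath() {
  if [ -z "${HADOOP_CLASSPATH:-}" ]; then
    echo "HADOOP_CLASSPATH is empty; export it from 'hadoop classpath' first" >&2
    return 1
  fi
}
```

Usage: `check_hadoop_classpath && /data/app/flink-1.17.1/bin/yarn-session.sh -d`.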

CREATE DATABASE dlink;

create user 'dlink'@'%' IDENTIFIED WITH mysql_native_password by 'Dlink*2023';

grant ALL PRIVILEGES ON dlink.* to 'dlink'@'%';

flush privileges;

mysql -udlink -pDlink*2023

use dlink;

source /data/app/dlink/sql/dinky.sql;

cp /data/app/flink-1.17.1/lib/* /data/app/dlink/plugins/flink1.17/

cp flink-shaded-hadoop-3-uber-3.1.1.7.2.9.0-173-9.0.jar /data/app/dlink/plugins/

sudo -u hdfs hdfs dfs -mkdir -p /dlink/jar/

sudo -u hdfs hdfs dfs -put /data/app/dlink/jar/dlink-app-1.17-0.7.3-jar-with-dependencies.jar /dlink/jar

sudo -u hdfs hadoop fs -mkdir /dlink/flink-dist-17

sudo -u hdfs hadoop fs -put /data/app/flink-1.17.1/lib /dlink/flink-dist-17

sudo -u hdfs hadoop fs -put /data/app/flink-1.17.1/plugins /dlink/flink-dist-17

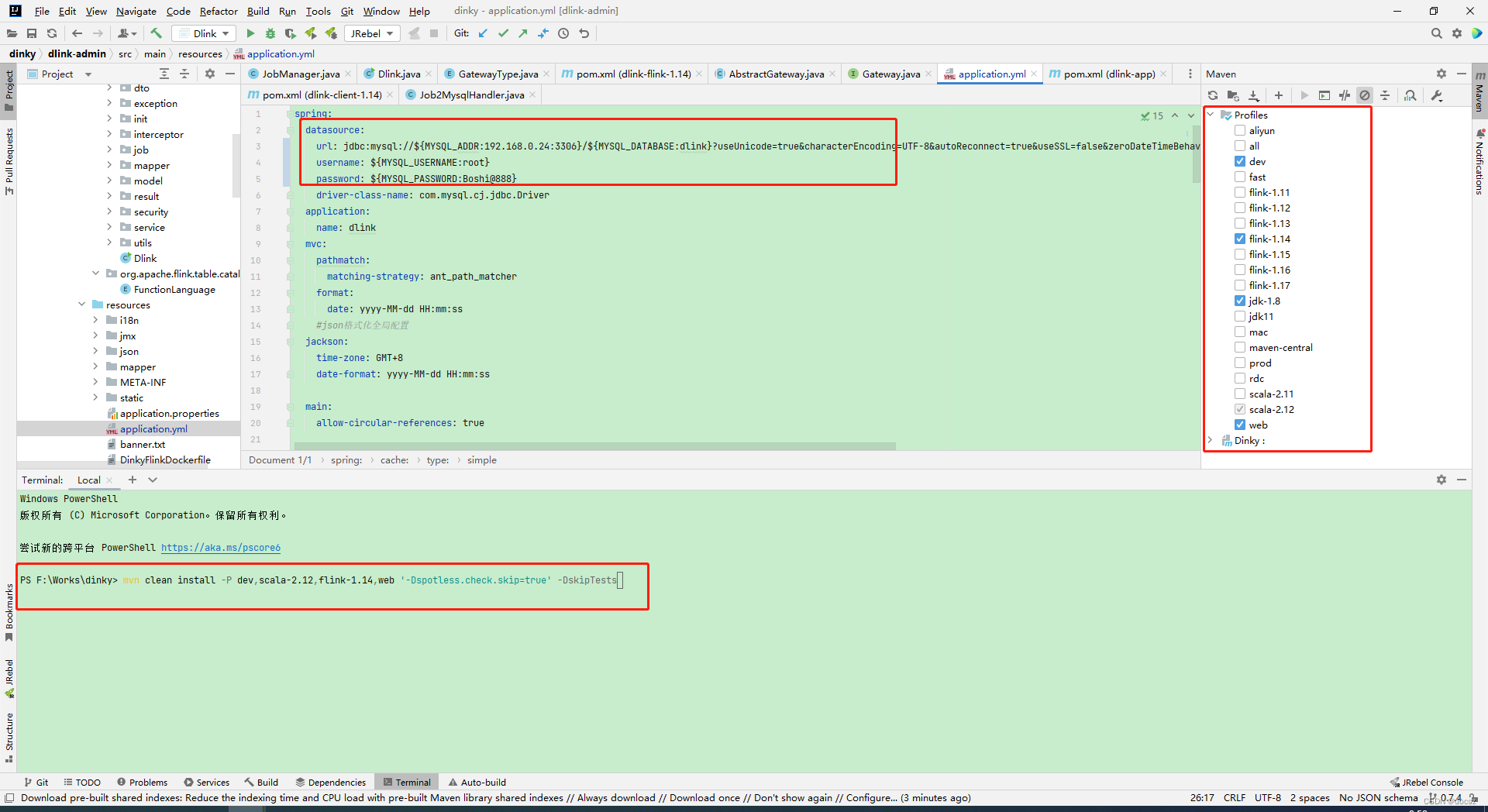

vi ./config/application.yml

spring:
  datasource:
    url: jdbc:mysql://${MYSQL_ADDR:boshi-146:3306}/${MYSQL_DATABASE:dinky}?useUnicode=true&characterEncoding=UTF-8&autoReconnect=true&useSSL=false&zeroDateTimeBehavior=convertToNull&serverTimezone=Asia/Shanghai&allowPublicKeyRetrieval=true
    username: ${MYSQL_USERNAME:dinky}
    password: ${MYSQL_PASSWORD:dinky}
    driver-class-name: com.mysql.cj.jdbc.Driver
  application:
    name: dinky
  mvc:
    pathmatch:
      matching-strategy: ant_path_matcher
    format:
      date: yyyy-MM-dd HH:mm:ss
  jackson:
    time-zone: GMT+8
    date-format: yyyy-MM-dd HH:mm:ss
  main:
    allow-circular-references: true
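The `${NAME:default}` placeholders in the datasource settings are resolved by Spring from the environment, falling back to the value after the colon. The SQL earlier in these notes creates a `dlink` database and a `dlink` user, while the defaults shown here are `dinky`. One way to reconcile the two without editing the file, assuming the start script inherits the calling shell's environment, is to export the variables before starting:

```shell
# Values taken from the CREATE DATABASE / CREATE USER statements earlier in these notes.
export MYSQL_ADDR='boshi-146:3306'
export MYSQL_DATABASE='dlink'
export MYSQL_USERNAME='dlink'
# Single quotes keep the shell from glob-expanding the '*' in the password.
export MYSQL_PASSWORD='Dlink*2023'
```

Alternatively, the defaults in `application.yml` can be changed directly to the same values.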

cd /data/app/dlink

sh auto.sh start 1.17

http://boshi-146:8888

admin/admin

http://www.dlink.top/docs/next/developer_guide/local_debug

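First, set up the environment by following the steps on the official site.

npm 7.19.0

node.js 14.17.0

jdk 1.8

maven 3.6.0+

Lombok plugin installed in IDEA

mysql 5.7+

The versions must match exactly; otherwise you will run into many pitfalls.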
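The version list above can be checked with a small script before building. The sketch below shows only the comparison helper for the "+" entries (maven, mysql); the function name `version_ge` is hypothetical and GNU `sort -V` is assumed.

```shell
# Hypothetical helper: succeed when version $1 is greater than or equal to version $2.
# Relies on GNU sort -V (version sort); the smaller version sorts first.
version_ge() {
  [ "$(printf '%s\n%s\n' "$1" "$2" | sort -V | head -n 1)" = "$2" ]
}
```

For the entries that must match exactly (npm, node.js), a plain string comparison is enough, for example `[ "$(node --version)" = "v14.17.0" ]`. For a minimum version, something like `version_ge "$installed_maven" 3.6.0` works, where `installed_maven` is a placeholder for however the installed version is obtained.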

<dependency>
    <groupId>com.starrocks</groupId>
    <artifactId>flink-connector-starrocks</artifactId>
    <version>1.2.7_flink-1.13_${scala.binary.version}</version>
    <exclusions>
        <exclusion>
            <groupId>com.github.jsqlparser</groupId>
            <artifactId>jsqlparser</artifactId>
        </exclusion>
    </exclusions>
</dependency>

<mysql-connector-java.version>8.0.33</mysql-connector-java.version>

mvn clean install -P dev,scala-2.12,flink-1.14,web '-Dspotless.check.skip=true' -DskipTests