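Torchsummary is a widely used library for visualizing the structure of deep learning networks: installation page

Torch-summary is an enhanced version of torchsummary: library introduction and installation page.

Installing Torch-summary rather than Torchsummary is recommended. It keeps the functions of the original library and also fixes many of its bugs.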

Common problems with Torchsummary

Problem 1: viewing a network structure with torchsummary raises AttributeError: 'list' object has no attribute 'size'

Solution:

pip uninstall torchsummary  # remove the original torchsummary package

pip install torch-summary==1.4.4  # install the enhanced torch-summary package
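Both distributions are imported under the same name, `torchsummary`, so it can be unclear which one is actually installed. The following is a minimal sketch for checking this, using only the standard library (`importlib.metadata`, Python 3.8+). The helper name `installed_version` is hypothetical and chosen for illustration.

```python
from importlib import metadata


def installed_version(dist_name):
    """Return the installed version of a distribution, or None if it is absent."""
    try:
        return metadata.version(dist_name)
    except metadata.PackageNotFoundError:
        return None


# Look up both distribution names; after the commands above, the expectation
# is that only "torch-summary" reports a version.
versions = {
    name: installed_version(name)
    for name in ("torchsummary", "torch-summary")
}

for name, version in versions.items():
    print(f"{name}: {version if version is not None else 'not installed'}")
```

If both names report a version, uninstalling both and reinstalling only torch-summary is probably the safest way to avoid mixing files from the two packages.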

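Example:

import torch
from torchvision import models
from torchsummary import summary  # import the function, not the module

# Use the GPU when one is available, otherwise fall back to the CPU
device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')
vgg = models.vgg16().to(device)
# Print the layer-by-layer summary for a 3x224x224 input
summary(vgg, (3, 224, 224))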

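Problem 2: torchsummary raises TypeError: 'module' object is not callable

This error occurs when the package is imported as a module (import torchsummary as summary) and the module itself is then called as summary(...).

Solution: call the summary function inside the module.

import torch
from torchvision import models
import torchsummary as summary  # here "summary" is the module

device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')
vgg = models.vgg16().to(device)
# Call the function summary inside the module summary
summary.summary(vgg, (3, 224, 224))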
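The cause of Problem 2 can be reproduced without PyTorch. The sketch below builds a stand-in module with `types.ModuleType`. The names `fake_summary` and its `summary` function are hypothetical placeholders, not part of torchsummary. Calling the module object fails, while calling the function stored inside it works.

```python
import types

# A stand-in for the torchsummary module (hypothetical, for illustration only)
fake_summary = types.ModuleType("fake_summary")


def summary(model_name):
    """Placeholder for the real summary function."""
    return f"summary of {model_name}"


fake_summary.summary = summary

message = None
try:
    # Mirrors `import torchsummary as summary; summary(vgg, ...)`
    fake_summary("vgg16")
except TypeError as exc:
    message = str(exc)

# Mirrors `summary.summary(vgg, ...)`
result = fake_summary.summary("vgg16")

print(message)
print(result)
```

Importing the function directly with `from torchsummary import summary` avoids the problem, because the name `summary` then refers to the function rather than the module.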

Common usage of Torch-summary

# Basic usage

from torchsummary import summary

summary(model, input_size=(channels, H, W))

# Multiple inputs, printing the feature-map size of each layer

from torchsummary import summary

summary(model, first_input, second_input)

# Customizing what is printed

import torch
import torch.nn as nn
from torchsummary import summary  # the import name is torchsummary, even for the torch-summary package

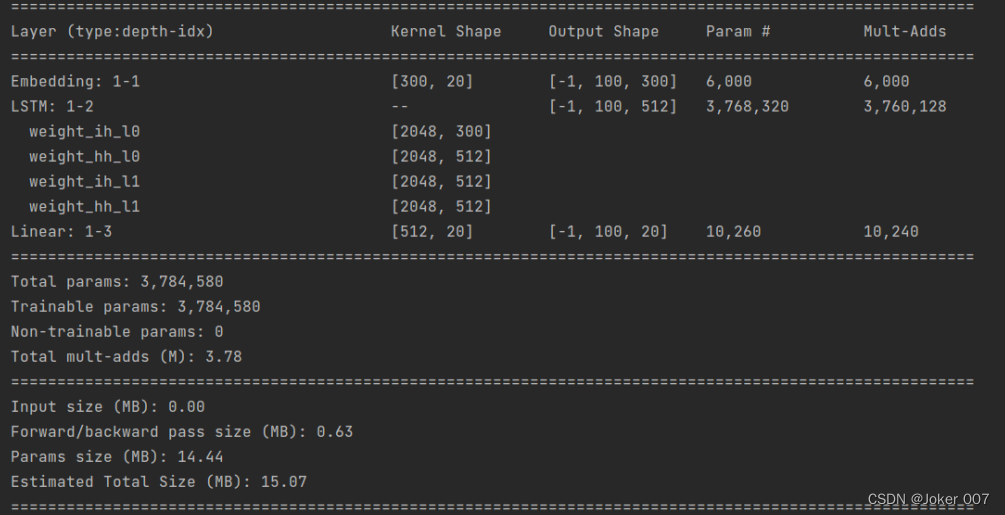

class LSTMNet(nn.Module):
    """ Batch-first LSTM model. """

    def __init__(self, vocab_size=20, embed_dim=300, hidden_dim=512, num_layers=2):
        super().__init__()
        self.hidden_dim = hidden_dim
        self.embedding = nn.Embedding(vocab_size, embed_dim)
        self.encoder = nn.LSTM(embed_dim, hidden_dim, num_layers=num_layers, batch_first=True)
        self.decoder = nn.Linear(hidden_dim, vocab_size)

    def forward(self, x):
        embed = self.embedding(x)
        out, hidden = self.encoder(embed)
        out = self.decoder(out)
        out = out.view(-1, out.size(2))
        return out, hidden

summary(
    LSTMNet(),
    (100,),
    dtypes=[torch.long],
    branching=False,
    verbose=2,
    col_width=16,
    col_names=["kernel_size", "output_size", "num_params", "mult_adds"],
)
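As a cross-check on the `num_params` column requested above, the parameter counts of `LSTMNet` can be worked out by hand. The sketch below uses the default sizes from the class definition and assumes PyTorch's standard parameter layout: an `nn.LSTM` layer has four gates, each with input-to-hidden and hidden-to-hidden weights plus two bias vectors. The helper names are hypothetical.

```python
def embedding_params(vocab_size, embed_dim):
    # One embed_dim-sized vector per vocabulary entry
    return vocab_size * embed_dim


def lstm_params(input_dim, hidden_dim, num_layers):
    total = 0
    layer_input = input_dim
    for _ in range(num_layers):
        # 4 gates, each with weights over [input, hidden] and two bias vectors
        total += 4 * hidden_dim * (layer_input + hidden_dim) + 2 * 4 * hidden_dim
        layer_input = hidden_dim  # deeper layers consume the previous hidden state
    return total


def linear_params(in_features, out_features):
    # Weight matrix plus bias vector
    return in_features * out_features + out_features


# Default sizes from LSTMNet.__init__
vocab_size, embed_dim, hidden_dim, num_layers = 20, 300, 512, 2

counts = {
    "embedding": embedding_params(vocab_size, embed_dim),
    "encoder": lstm_params(embed_dim, hidden_dim, num_layers),
    "decoder": linear_params(hidden_dim, vocab_size),
}
total_params = sum(counts.values())
```

With the default arguments this gives 6,000 parameters for the embedding, 3,768,320 for the two-layer LSTM, and 10,260 for the linear decoder, for a total of 3,784,580.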

Output printed by the summary call: