Remember to create a MNIST_data folder and a model folder inside the current project.

In a terminal, cd to the project directory.
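If you would rather create the two folders from Python than by hand, a minimal sketch could look like the following. The helper name `ensure_project_dirs` and its `project_root` parameter are made up for illustration; only the folder names come from this post.

```python
import os

def ensure_project_dirs(project_root="."):
    """Create the MNIST_data and model folders under project_root if missing.

    Hypothetical helper; the folder names are the ones used in this post.
    """
    created = []
    for name in ("MNIST_data", "model"):
        path = os.path.join(project_root, name)
        # exist_ok=True makes the call safe to repeat on later runs
        os.makedirs(path, exist_ok=True)
        created.append(path)
    return created
```

Calling `ensure_project_dirs()` from the project directory creates both folders and does nothing harmful if they already exist.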

train.py:

import tensorflow as tf

#Import the data

from tensorflow.examples.tutorials.mnist import input_data

mnist = input_data.read_data_sets('MNIST_data', one_hot=True)

#Suppress the SSE acceleration warnings

import os

os.environ['TF_CPP_MIN_LOG_LEVEL']='2'

#x holds the training images, y_ holds the training image labels

x = tf.placeholder(tf.float32, shape=[None, 784])

y_ = tf.placeholder(tf.float32, shape=[None, 10])

#Weight and bias initialization (W and b are not used by the CNN below)

W = tf.Variable(tf.zeros([784,10]))

b = tf.Variable(tf.zeros([10]))

#Initialize weights with a small amount of noise to break symmetry and avoid zero gradients, and to avoid neurons whose output is always 0 (dead neurons)

def weight_variable(shape):
    initial = tf.truncated_normal(shape, stddev=0.1)
    return tf.Variable(initial)

def bias_variable(shape):
    initial = tf.constant(0.1, shape=shape)
    return tf.Variable(initial)

def conv2d(x, W):
    return tf.nn.conv2d(x, W, strides=[1, 1, 1, 1], padding='SAME')

def max_pool_2x2(x):
    return tf.nn.max_pool(x, ksize=[1, 2, 2, 1],
                          strides=[1, 2, 2, 1], padding='SAME')

#First convolutional layer: 32 kernels, each attending to a different feature

W_conv1 = weight_variable([5, 5, 1, 32])

b_conv1 = bias_variable([32])

x_image = tf.reshape(x, [-1,28,28,1])#reshape each image from a 784-dimensional vector back to a 28x28 single-channel image; -1 lets TensorFlow infer the batch size

h_conv1 = tf.nn.relu(conv2d(x_image, W_conv1) + b_conv1)

h_pool1 = max_pool_2x2(h_conv1)

#Second convolutional layer

W_conv2 = weight_variable([5, 5, 32, 64])

b_conv2 = bias_variable([64])

h_conv2 = tf.nn.relu(conv2d(h_pool1, W_conv2) + b_conv2)

h_pool2 = max_pool_2x2(h_conv2)

#Fully connected layer

W_fc1 = weight_variable([7 * 7 * 64, 1024])

b_fc1 = bias_variable([1024])

h_pool2_flat = tf.reshape(h_pool2, [-1, 7*7*64])

h_fc1 = tf.nn.relu(tf.matmul(h_pool2_flat, W_fc1) + b_fc1)

#Use dropout: keep_prob is 0.5 during training and 1 during testing; it is the probability that each neuron is kept active

keep_prob = tf.placeholder(tf.float32)

h_fc1_drop = tf.nn.dropout(h_fc1, keep_prob)

#Map the 1024-dimensional vector to 10 dimensions, one per class

W_fc2 = weight_variable([1024, 10])

b_fc2 = bias_variable([10])

y_conv = tf.matmul(h_fc1_drop, W_fc2) + b_fc2

#Cross-entropy loss

cross_entropy = tf.reduce_mean(
    tf.nn.softmax_cross_entropy_with_logits(labels=y_, logits=y_conv))

#Define train_step

train_step = tf.train.AdamOptimizer(1e-4).minimize(cross_entropy)

#Define the accuracy measure

correct_prediction = tf.equal(tf.argmax(y_conv,1), tf.argmax(y_,1))

accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))

#Saver used to store the trained model

saver = tf.train.Saver()

#Create the Session and initialize the variables

sess = tf.InteractiveSession()

sess.run(tf.global_variables_initializer())

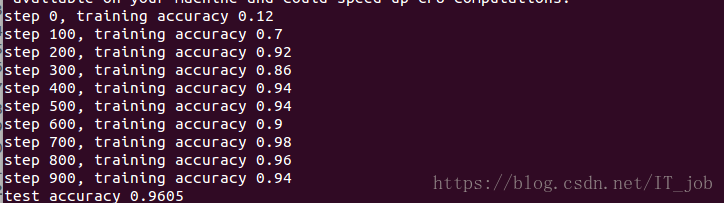

#The standard run is 20000 steps; to save time, train for only 1000 steps here

for i in range(1000):
    batch = mnist.train.next_batch(50)
    if i % 100 == 0:  #every 100 steps, print the accuracy on the current training batch
        train_accuracy = accuracy.eval(feed_dict={
            x: batch[0], y_: batch[1], keep_prob: 1.0})
        print("step %d, training accuracy %g" % (i, train_accuracy))
    train_step.run(feed_dict={x: batch[0], y_: batch[1], keep_prob: 0.5})

#Save the trained model once training finishes; the path points into the model folder

saver.save(sess, '/home/xy/highschool_myOwn614/model/model.ckpt')

#Print the accuracy on the test set

print("test accuracy %g" % accuracy.eval(feed_dict={
    x: mnist.test.images, y_: mnist.test.labels, keep_prob: 1.0}))
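The `7 * 7 * 64` in the fully connected layer can look like a magic number. With `padding='SAME'`, the spatial output size of a convolution or pooling op is `ceil(input_size / stride)`, so the 28x28 input shrinks only at the two stride-2 pooling layers. The sketch below traces the shapes in plain Python; the function names are made up for illustration and are not TensorFlow APIs.

```python
import math

def same_padding_output(size, stride):
    """Spatial output size of a conv/pool op that uses padding='SAME'."""
    return math.ceil(size / stride)

def trace_shapes(input_size=28):
    """Follow a square image through conv1, pool1, conv2, pool2 of train.py."""
    layers = [
        ("conv1", 1, 32),  # stride-1 convolution, 32 output channels
        ("pool1", 2, 32),  # 2x2 max pooling with stride 2
        ("conv2", 1, 64),  # stride-1 convolution, 64 output channels
        ("pool2", 2, 64),  # 2x2 max pooling with stride 2
    ]
    size = input_size
    shapes = []
    for name, stride, channels in layers:
        size = same_padding_output(size, stride)
        shapes.append((name, size, size, channels))
    return shapes

shapes = trace_shapes()
for name, height, width, channels in shapes:
    print(name, (height, width, channels))

_, height, width, channels = shapes[-1]
flat = height * width * channels
print("flattened size:", flat)  # 7 * 7 * 64 = 3136
```

The last line matches the first dimension of `W_fc1`, which is why `h_pool2` is reshaped to `[-1, 7*7*64]`.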

Training results

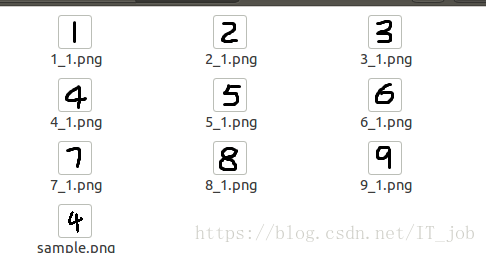
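The two numbers that drive training are the cross-entropy loss and the accuracy. As a rough illustration of what `softmax_cross_entropy_with_logits` and the `argmax` comparison compute, here is a pure-Python sketch on a tiny made-up batch with three classes. The function names and the example logits are assumptions for illustration, not TensorFlow code.

```python
import math

def softmax_cross_entropy(logits, one_hot):
    """Cross-entropy between softmax(logits) and a one-hot label.

    Uses the log-sum-exp trick so that large logits do not overflow.
    """
    m = max(logits)
    log_sum_exp = m + math.log(sum(math.exp(v - m) for v in logits))
    # -sum(t * log(softmax)) rewritten as sum(t * (log_sum_exp - logit))
    return sum(t * (log_sum_exp - v) for v, t in zip(logits, one_hot))

def argmax(values):
    """Index of the largest value."""
    return max(range(len(values)), key=lambda i: values[i])

def batch_metrics(batch_logits, batch_labels):
    """Mean loss and accuracy over a batch, mirroring the two reduce_mean calls."""
    pairs = list(zip(batch_logits, batch_labels))
    losses = [softmax_cross_entropy(l, y) for l, y in pairs]
    correct = [argmax(l) == argmax(y) for l, y in pairs]
    return sum(losses) / len(losses), sum(correct) / len(correct)

# Made-up logits for two samples and three classes
example_logits = [[2.0, 0.0, 0.0], [0.0, 0.0, 3.0]]
example_labels = [[1, 0, 0], [0, 1, 0]]
mean_loss, acc = batch_metrics(example_logits, example_labels)
print("loss:", mean_loss)
print("accuracy:", acc)  # 0.5: only the first sample is classified correctly
```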

Contents of the test_number folder:

test.py:

from PIL import Image

import tensorflow as tf

#Suppress the SSE acceleration warnings

import os

os.environ['TF_CPP_MIN_LOG_LEVEL']='2'

#Text-to-speech for announcing the prediction

import pyttsx3

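def imageprepare():
    #Path to your own image; it must already be 28x28 pixels to match the 784-value input
    file_name = '/home/xy/highschool_myOwn614/test_number/1_1.png'
    #To convert jpg files to png, run 'mogrify -format png *.jpg' in a terminal
    im = Image.open(file_name).convert('L')  #convert to 8-bit grayscale
    tv = list(im.getdata())  #pixel values in the range 0-255
    #Normalize pixels to [0, 1], where 0 is pure white and 1 is pure black.
    #Our digit is black on a white background, but MNIST digits are white on black, so invert.
    tva = [(255 - x) * 1.0 / 255.0 for x in tv]
    return tva

result = imageprepare()

#See train.py for a detailed explanation of the network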

x = tf.placeholder(tf.float32, [None, 784])

W = tf.Variable(tf.zeros([784, 10]))

b = tf.Variable(tf.zeros([10]))

def weight_variable(shape):
    initial = tf.truncated_normal(shape, stddev=0.1)
    return tf.Variable(initial)

def bias_variable(shape):
    initial = tf.constant(0.1, shape=shape)
    return tf.Variable(initial)

def conv2d(x, W):
    return tf.nn.conv2d(x, W, strides=[1, 1, 1, 1], padding='SAME')

def max_pool_2x2(x):
    return tf.nn.max_pool(x, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1], padding='SAME')

W_conv1 = weight_variable([5, 5, 1, 32])

b_conv1 = bias_variable([32])

x_image = tf.reshape(x, [-1,28,28,1])

h_conv1 = tf.nn.relu(conv2d(x_image, W_conv1) + b_conv1)

h_pool1 = max_pool_2x2(h_conv1)

W_conv2 = weight_variable([5, 5, 32, 64])

b_conv2 = bias_variable([64])

h_conv2 = tf.nn.relu(conv2d(h_pool1, W_conv2) + b_conv2)

h_pool2 = max_pool_2x2(h_conv2)

W_fc1 = weight_variable([7 * 7 * 64, 1024])

b_fc1 = bias_variable([1024])

h_pool2_flat = tf.reshape(h_pool2, [-1, 7*7*64])

h_fc1 = tf.nn.relu(tf.matmul(h_pool2_flat, W_fc1) + b_fc1)

keep_prob = tf.placeholder(tf.float32)

h_fc1_drop = tf.nn.dropout(h_fc1, keep_prob)

W_fc2 = weight_variable([1024, 10])

b_fc2 = bias_variable([10])

y_conv=tf.nn.softmax(tf.matmul(h_fc1_drop, W_fc2) + b_fc2)

init_op = tf.global_variables_initializer()

saver = tf.train.Saver()

with tf.Session() as sess:
    sess.run(init_op)
    #Load the model parameters saved earlier
    saver.restore(sess, "/home/xy/highschool_myOwn614/model/model.ckpt")
    prediction = tf.argmax(y_conv, 1)
    predint = prediction.eval(feed_dict={x: [result], keep_prob: 1.0}, session=sess)

#Write the prediction to predictNumber.txt

with open('/home/xy/highschool_myOwn614/predictNumber.txt', 'w') as fi_xu:
    fi_xu.write(str(predint[0]))

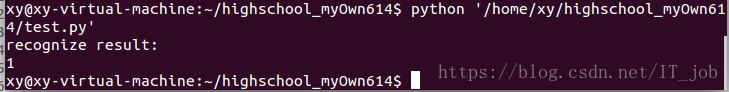
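test.py only keeps the `argmax` of the softmax output, but the softmax values themselves can serve as a rough confidence score for each digit. The sketch below shows the idea in plain Python on made-up logits; `softmax` and `top_k` are illustrative helpers, not TensorFlow functions. In the real script, the ten probabilities could presumably be obtained by evaluating `y_conv` with the same `feed_dict` that is used for `prediction`.

```python
import math

def softmax(logits):
    """Turn raw scores into probabilities that sum to 1 (numerically stable)."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]

def top_k(probs, k=3):
    """Return the k most likely (digit, probability) pairs, best first."""
    ranked = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    return [(i, probs[i]) for i in ranked[:k]]

# Made-up scores for the digits 0-9, for illustration only
example_logits = [0.1, 2.5, -1.0, 0.3, 0.0, -0.5, 0.2, 1.1, -2.0, 0.4]
probs = softmax(example_logits)
for digit, p in top_k(probs):
    print("digit %d: probability %.3f" % (digit, p))
```

Because softmax preserves the ordering of the scores, the most likely digit here is the same one that `argmax` would pick from the raw logits.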

print('recognize result:')

print(predint[0])

#Announce the result by voice

engine = pyttsx3.init()

engine.say("hello, your predicted number is " + str(predint[0]))

engine.runAndWait()
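One thing to watch in `imageprepare`: it assumes the input image is already 28x28, because the network expects exactly 784 values. An image of any other size fails later with a shape mismatch when it is fed to `x`. A small sketch of the same inversion step with an explicit size check is shown below; `to_mnist_vector` is a hypothetical helper, not part of the original script.

```python
def to_mnist_vector(pixels, width, height):
    """Invert and normalize 8-bit grayscale pixels for the MNIST-trained network.

    pixels: flat list of values in 0-255 (black digit on a white background).
    Returns 784 floats in [0, 1], where 1.0 corresponds to a black input pixel.
    Hypothetical helper written for illustration.
    """
    if (width, height) != (28, 28):
        raise ValueError("expected a 28x28 image, got %dx%d" % (width, height))
    if len(pixels) != width * height:
        raise ValueError("expected %d pixels, got %d" % (width * height, len(pixels)))
    # White (255) becomes 0.0 and black (0) becomes 1.0, matching MNIST's polarity
    return [(255 - p) / 255.0 for p in pixels]

# A blank white 28x28 image maps to an all-zero vector
blank = to_mnist_vector([255] * 784, 28, 28)
print(len(blank), min(blank), max(blank))
```

With Pillow, a larger image could be scaled down first with `im.resize((28, 28))` before calling `getdata()`, although how well the result is recognized depends on how closely the drawing resembles the centered MNIST digits.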

Result