Spark Streaming Overview

What Is Spark Streaming

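Real-time computing: millisecond-level latency

Offline computing: latency of days, hours, or minutes

Stream processing: each record is processed as soon as it arrives

Batch processing: records are accumulated and processed one batch at a time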

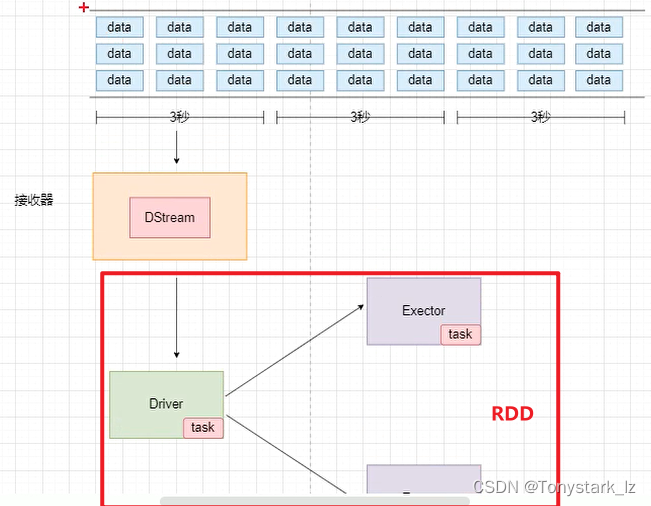

Spark Streaming sits between real-time and offline processing: it is near-real-time and works in micro-batches.
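The micro-batch idea can be illustrated without Spark at all. The sketch below is plain Java, not the Spark API: it assumes each event carries a timestamp in milliseconds and assigns it to a fixed-length batch, which is roughly what a 3-second batch interval does to an incoming stream. The class and method names (`MicroBatchSketch`, `toBatches`) are made up for this illustration, and real Spark Streaming batches by arrival time and also produces empty batches, which this sketch omits.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Plain-Java illustration of micro-batching; no Spark API is used here.
class MicroBatchSketch {

    // Groups event timestamps (ms) into fixed-length batches, keyed by batch start time.
    static Map<Long, List<Long>> toBatches(List<Long> eventTimesMs, long batchIntervalMs) {
        Map<Long, List<Long>> batches = new TreeMap<>();
        for (long t : eventTimesMs) {
            long batchStart = (t / batchIntervalMs) * batchIntervalMs;
            batches.computeIfAbsent(batchStart, k -> new ArrayList<>()).add(t);
        }
        return batches;
    }

    public static void main(String[] args) {
        List<Long> events = Arrays.asList(100L, 2900L, 3000L, 4500L, 9100L);
        // prints {0=[100, 2900], 3000=[3000, 4500], 9000=[9100]}
        System.out.println(toBatches(events, 3000L));
    }
}
```

Each batch is then processed as a unit, so the end-to-end latency is at least one batch interval: shorter than an offline job, longer than record-at-a-time processing.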

Spark Streaming Architecture

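Spark Core => RDD

Spark SQL => DataFrame, Dataset

Spark Streaming => DStream (discretized stream)

A DStream is the sequence of data received over time. Internally, the data of each batch interval is held as one RDD, so a DStream is a time-ordered sequence of RDDs.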
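One way to picture a DStream is as a list of batches, where a transformation is simply applied to every batch in turn. The following is a plain-Java model under that assumption, not Spark code: a "stream" is a `List<List<String>>`, and `mapBatches` runs the same word count on each batch independently. All names here are hypothetical.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Plain-Java model of a discretized stream; it does not call Spark.
class DStreamModelSketch {

    // Counts words within a single batch only; nothing is carried over between batches.
    static Map<String, Integer> countBatch(List<String> lines) {
        Map<String, Integer> counts = new TreeMap<>();
        for (String line : lines) {
            for (String word : line.trim().split("\\s+")) {
                if (!word.isEmpty()) {
                    counts.merge(word, 1, Integer::sum);
                }
            }
        }
        return counts;
    }

    // Applies the same per-batch computation to every batch, in time order.
    static List<Map<String, Integer>> mapBatches(List<List<String>> stream) {
        List<Map<String, Integer>> results = new ArrayList<>();
        for (List<String> batch : stream) {
            results.add(countBatch(batch));
        }
        return results;
    }

    public static void main(String[] args) {
        List<List<String>> stream = Arrays.asList(
                Arrays.asList("a b", "a"),   // batch 1
                Arrays.asList("b"));         // batch 2
        // prints [{a=2, b=1}, {b=1}]
        System.out.println(mapBatches(stream));
    }
}
```

Because each batch is counted on its own, the result for batch 2 knows nothing about batch 1. This is the behavior of a stateless transformation.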

Hands-On Practice

Reading data from Kafka

Add the dependencies:

<dependencies>
    <dependency>
        <groupId>org.apache.spark</groupId>
        <artifactId>spark-streaming_2.12</artifactId>
        <version>3.3.0</version>
    </dependency>
    <dependency>
        <groupId>org.apache.spark</groupId>
        <artifactId>spark-core_2.12</artifactId>
        <version>3.3.0</version>
    </dependency>
    <dependency>
        <groupId>org.apache.spark</groupId>
        <artifactId>spark-streaming-kafka-0-10_2.12</artifactId>
        <version>3.3.0</version>
    </dependency>
</dependencies>

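import org.apache.kafka.clients.consumer.ConsumerConfig;
import org.apache.kafka.clients.consumer.ConsumerRecord;
import org.apache.kafka.common.serialization.StringDeserializer;
import org.apache.spark.SparkConf;
import org.apache.spark.streaming.Duration;
import org.apache.spark.streaming.api.java.JavaInputDStream;
import org.apache.spark.streaming.api.java.JavaStreamingContext;
import org.apache.spark.streaming.kafka010.ConsumerStrategies;
import org.apache.spark.streaming.kafka010.KafkaUtils;
import org.apache.spark.streaming.kafka010.LocationStrategies;

import java.util.ArrayList;
import java.util.HashMap;

public class Test01_kafka {
    public static void main(String[] args) throws InterruptedException {
        // 1. Configure Spark
        SparkConf conf = new SparkConf().setAppName("SparkSQL").setMaster("local[2]");

        // 2. Create the JavaStreamingContext;
        //    the second argument is the batch interval (3000 ms)
        JavaStreamingContext javaStreamingContext = new JavaStreamingContext(conf, Duration.apply(3000));

        // 3. Configure the Kafka topics and consumer parameters
        ArrayList<String> topics = new ArrayList<>();
        topics.add("atguigu");

        HashMap<String, Object> kafkaParams = new HashMap<>();
        kafkaParams.put(ConsumerConfig.BOOTSTRAP_SERVERS_CONFIG, "hadoop102:9092,hadoop103:9092");
        kafkaParams.put(ConsumerConfig.KEY_DESERIALIZER_CLASS_CONFIG, StringDeserializer.class.getName());
        kafkaParams.put(ConsumerConfig.VALUE_DESERIALIZER_CLASS_CONFIG, StringDeserializer.class.getName());
        kafkaParams.put(ConsumerConfig.GROUP_ID_CONFIG, "sparkStreaming001");

        // 4. Pull data from Kafka with the direct API
        JavaInputDStream<ConsumerRecord<String, String>> directStream = KafkaUtils.createDirectStream(
                javaStreamingContext,                              // streaming context
                LocationStrategies.PreferBrokers(),                // prefer executors on the same hosts as the Kafka brokers
                ConsumerStrategies.Subscribe(topics, kafkaParams)  // topics and consumer parameters
        );

        // 5. Print the value of each record
        directStream.map(ConsumerRecord::value).print();

        // 6. Start the streaming computation
        javaStreamingContext.start();

        // 7. Block the main thread until the streaming job terminates
        javaStreamingContext.awaitTermination();
    }
}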

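Spark Streaming 3.3.0 uses log4j2 for logging. A log4j2.properties file such as the following, placed on the classpath (for example under src/main/resources), limits console output to errors: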

# Set everything to be logged to the console
rootLogger.level = ERROR
rootLogger.appenderRef.stdout.ref = console

# In the pattern layout configuration below, we specify an explicit `%ex` conversion
# pattern for logging Throwables. If this was omitted, then (by default) Log4J would
# implicitly add an `%xEx` conversion pattern which logs stacktraces with additional
# class packaging information. That extra information can sometimes add a substantial
# performance overhead, so we disable it in our default logging config.
# For more information, see SPARK-39361.
appender.console.type = Console
appender.console.name = console
appender.console.target = SYSTEM_ERR
appender.console.layout.type = PatternLayout
appender.console.layout.pattern = %d{yy/MM/dd HH:mm:ss} %p %c{1}: %m%n%ex

# Set the default spark-shell/spark-sql log level to WARN. When running the
# spark-shell/spark-sql, the log level for these classes is used to overwrite
# the root logger's log level, so that the user can have different defaults
# for the shell and regular Spark apps.
logger.repl.name = org.apache.spark.repl.Main
logger.repl.level = warn

logger.thriftserver.name = org.apache.spark.sql.hive.thriftserver.SparkSQLCLIDriver
logger.thriftserver.level = warn

# Settings to quiet third party logs that are too verbose
logger.jetty1.name = org.sparkproject.jetty
logger.jetty1.level = warn
logger.jetty2.name = org.sparkproject.jetty.util.component.AbstractLifeCycle
logger.jetty2.level = error
logger.replexprTyper.name = org.apache.spark.repl.SparkIMain$exprTyper
logger.replexprTyper.level = info
logger.replSparkILoopInterpreter.name = org.apache.spark.repl.SparkILoop$SparkILoopInterpreter
logger.replSparkILoopInterpreter.level = info
logger.parquet1.name = org.apache.parquet
logger.parquet1.level = error
logger.parquet2.name = parquet
logger.parquet2.level = error

# SPARK-9183: Settings to avoid annoying messages when looking up nonexistent UDFs in SparkSQL with Hive support
logger.RetryingHMSHandler.name = org.apache.hadoop.hive.metastore.RetryingHMSHandler
logger.RetryingHMSHandler.level = fatal
logger.FunctionRegistry.name = org.apache.hadoop.hive.ql.exec.FunctionRegistry
logger.FunctionRegistry.level = error

# For deploying Spark ThriftServer
# SPARK-34128: Suppress undesirable TTransportException warnings involved in THRIFT-4805
appender.console.filter.1.type = RegexFilter
appender.console.filter.1.regex = .*Thrift error occurred during processing of message.*
appender.console.filter.1.onMatch = deny
appender.console.filter.1.onMismatch = neutral

WordCount example

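import org.apache.kafka.clients.consumer.ConsumerConfig;
import org.apache.kafka.clients.consumer.ConsumerRecord;
import org.apache.kafka.common.serialization.StringDeserializer;
import org.apache.spark.SparkConf;
import org.apache.spark.api.java.Optional;
import org.apache.spark.api.java.function.Function2;
import org.apache.spark.streaming.Duration;
import org.apache.spark.streaming.api.java.JavaInputDStream;
import org.apache.spark.streaming.api.java.JavaStreamingContext;
import org.apache.spark.streaming.kafka010.ConsumerStrategies;
import org.apache.spark.streaming.kafka010.KafkaUtils;
import org.apache.spark.streaming.kafka010.LocationStrategies;
import scala.Tuple2;

import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;

public class Test02_WordCount {
    public static void main(String[] args) throws InterruptedException {
        SparkConf conf = new SparkConf().setAppName("SparkStream").setMaster("local[2]");
        JavaStreamingContext jssc = new JavaStreamingContext(conf, Duration.apply(3000));
        jssc.checkpoint("ck"); // set the checkpoint directory (required by updateStateByKey)

        ArrayList<String> topics = new ArrayList<>();
        topics.add("atguigu");

        HashMap<String, Object> kafkaParams = new HashMap<>();
        kafkaParams.put(ConsumerConfig.BOOTSTRAP_SERVERS_CONFIG, "hadoop102:9092");
        kafkaParams.put(ConsumerConfig.KEY_DESERIALIZER_CLASS_CONFIG, StringDeserializer.class.getName());
        kafkaParams.put(ConsumerConfig.VALUE_DESERIALIZER_CLASS_CONFIG, StringDeserializer.class.getName());
        kafkaParams.put(ConsumerConfig.GROUP_ID_CONFIG, "sparkStreaming001");

        JavaInputDStream<ConsumerRecord<String, String>> directStream = KafkaUtils.createDirectStream(
                jssc,
                LocationStrategies.PreferBrokers(),
                ConsumerStrategies.Subscribe(topics, kafkaParams)
        );

        directStream
                // Split each record into (word, 1) pairs; null or empty records yield nothing
                .flatMapToPair(consumerRecord -> {
                    ArrayList<Tuple2<String, Integer>> list = new ArrayList<>();
                    if (consumerRecord.value() == null || "".equals(consumerRecord.value())) {
                        return list.iterator();
                    }
                    String[] words = consumerRecord.value().split("\\s+");
                    for (String word : words) {
                        list.add(new Tuple2<>(word, 1));
                    }
                    return list.iterator();
                })
                // .reduceByKey((sum, elem) -> sum + elem) // stateless transformation
                .updateStateByKey( // stateful transformation
                        new Function2<List<Integer>, Optional<Integer>, Optional<Integer>>() {
                            @Override
                            public Optional<Integer> call(List<Integer> integers, Optional<Integer> state) throws Exception {
                                // Start from the previous count (0 if the key is new) and add this batch's values
                                Integer newState = state.orElse(0);
                                for (Integer integer : integers) {
                                    newState += integer;
                                }
                                return Optional.of(newState);
                            }
                        })
                .print();

        jssc.start();
        jssc.awaitTermination();
    }
}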
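To make the difference between the stateless and the stateful word count concrete, here is a plain-Java simulation of what the update function does from batch to batch. It is a sketch of the semantics only, not Spark code: `UpdateStateSketch`, `update`, and `applyBatch` are hypothetical names, and state is kept in an ordinary map rather than in a checkpointed RDD.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Plain-Java simulation of a running count carried across batches; no Spark API is used.
class UpdateStateSketch {

    // Same shape as the update function above: this batch's values for one key plus its previous state.
    static int update(List<Integer> newValues, Integer previous) {
        int state = (previous == null) ? 0 : previous;
        for (int v : newValues) {
            state += v;
        }
        return state;
    }

    // Runs the update for every key that already has state or appears in the new batch.
    static Map<String, Integer> applyBatch(Map<String, Integer> state, List<String> words) {
        Map<String, List<Integer>> grouped = new TreeMap<>();
        for (String key : state.keySet()) {
            grouped.put(key, new ArrayList<>()); // keys without new data still get updated
        }
        for (String word : words) {
            grouped.computeIfAbsent(word, k -> new ArrayList<>()).add(1);
        }
        Map<String, Integer> next = new TreeMap<>();
        for (Map.Entry<String, List<Integer>> e : grouped.entrySet()) {
            next.put(e.getKey(), update(e.getValue(), state.get(e.getKey())));
        }
        return next;
    }

    public static void main(String[] args) {
        Map<String, Integer> state = new TreeMap<>();
        state = applyBatch(state, Arrays.asList("a", "b", "a"));
        System.out.println(state); // prints {a=2, b=1}
        state = applyBatch(state, Arrays.asList("b"));
        System.out.println(state); // prints {a=2, b=2}
    }
}
```

With `reduceByKey` alone, the second batch would print only `b` with a count of 1; carrying the state forward is what keeps `a=2` in the output.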

WordCount example: restoring state from a checkpoint

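import org.apache.kafka.clients.consumer.ConsumerConfig;
import org.apache.kafka.clients.consumer.ConsumerRecord;
import org.apache.kafka.common.serialization.StringDeserializer;
import org.apache.spark.SparkConf;
import org.apache.spark.api.java.JavaSparkContext;
import org.apache.spark.api.java.Optional;
import org.apache.spark.api.java.function.Function0;
import org.apache.spark.streaming.Duration;
import org.apache.spark.streaming.api.java.JavaInputDStream;
import org.apache.spark.streaming.api.java.JavaStreamingContext;
import org.apache.spark.streaming.kafka010.ConsumerStrategies;
import org.apache.spark.streaming.kafka010.KafkaUtils;
import org.apache.spark.streaming.kafka010.LocationStrategies;
import scala.Tuple2;

import java.util.ArrayList;
import java.util.HashMap;

public class Test03_State_CheckPoint {
    public static void main(String[] args) throws InterruptedException {
        // Restore the context from the checkpoint directory "ck" if it exists;
        // otherwise build a new one with the factory function below
        JavaStreamingContext jssc = JavaStreamingContext.getOrCreate("ck", new Function0<JavaStreamingContext>() {
            @Override
            public JavaStreamingContext call() throws Exception {
                // Create the JavaStreamingContext
                SparkConf conf = new SparkConf().setAppName("SparkStreaming").setMaster("local[2]");
                JavaSparkContext javaSparkContext = new JavaSparkContext(conf);
                JavaStreamingContext jssc = new JavaStreamingContext(javaSparkContext, Duration.apply(3000));
                // The checkpoint directory must be set inside the factory; without it
                // nothing is written to "ck" and updateStateByKey fails at startup
                jssc.checkpoint("ck");

                // Core business logic
                ArrayList<String> topics = new ArrayList<>();
                topics.add("atguigu");

                HashMap<String, Object> kafkaParams = new HashMap<>();
                kafkaParams.put(ConsumerConfig.BOOTSTRAP_SERVERS_CONFIG, "hadoop102:9092");
                kafkaParams.put(ConsumerConfig.KEY_DESERIALIZER_CLASS_CONFIG, StringDeserializer.class.getName());
                kafkaParams.put(ConsumerConfig.VALUE_DESERIALIZER_CLASS_CONFIG, StringDeserializer.class.getName());
                kafkaParams.put(ConsumerConfig.GROUP_ID_CONFIG, "sparkStreaming001");

                JavaInputDStream<ConsumerRecord<String, String>> directStream = KafkaUtils.createDirectStream(
                        jssc,
                        LocationStrategies.PreferBrokers(),
                        ConsumerStrategies.Subscribe(topics, kafkaParams)
                );

                directStream
                        .flatMapToPair(consumerRecord -> {
                            ArrayList<Tuple2<String, Integer>> list = new ArrayList<>();
                            if (consumerRecord.value() == null || "".equals(consumerRecord.value())) {
                                return list.iterator();
                            }
                            String[] words = consumerRecord.value().split("\\s+");
                            for (String word : words) {
                                list.add(new Tuple2<>(word, 1));
                            }
                            return list.iterator();
                        })
                        .updateStateByKey((list, state) -> {
                            // Previous count (0 if the key is new) plus this batch's values
                            Integer newState = (Integer) state.orElse(0);
                            for (Integer integer : list) {
                                newState += integer;
                            }
                            return Optional.of(newState);
                        })
                        .print();

                // Return the JavaStreamingContext
                return jssc;
            }
        });

        jssc.start();
        jssc.awaitTermination();
    }
}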
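The control flow of `getOrCreate` can be summarized as "restore if a checkpoint exists, otherwise call the factory". The sketch below mimics only that decision in plain Java, under strong simplifying assumptions: the "checkpoint" is a single text file, the "context" is a string, and the file is written immediately, whereas Spark writes checkpoints periodically while the job runs. `GetOrCreateSketch` and its methods are hypothetical names.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.function.Supplier;

// Plain-Java sketch of the restore-or-create decision; it is not Spark's implementation.
class GetOrCreateSketch {

    // Returns the saved value if the checkpoint file exists; otherwise calls the factory and saves its result.
    static String getOrCreate(Path checkpoint, Supplier<String> factory) {
        try {
            if (Files.exists(checkpoint)) {
                return new String(Files.readAllBytes(checkpoint), StandardCharsets.UTF_8);
            }
            String created = factory.get();
            Files.write(checkpoint, created.getBytes(StandardCharsets.UTF_8));
            return created;
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    // Creates a path for a checkpoint file inside a fresh temporary directory.
    static Path newTempCheckpoint() {
        try {
            return Files.createTempDirectory("ck-sketch").resolve("state.txt");
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        Path ck = newTempCheckpoint();
        System.out.println(getOrCreate(ck, () -> "fresh"));   // first run: the factory is called
        System.out.println(getOrCreate(ck, () -> "ignored")); // second run: restored, factory skipped
    }
}
```

Both calls print `fresh`. The practical consequence carries over to Spark: once a checkpoint exists, the factory function is not invoked, so changes made to the processing logic inside it take no effect until the checkpoint directory is cleared.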

Stateful transformation operator: window

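import org.apache.kafka.clients.consumer.ConsumerConfig;
import org.apache.kafka.clients.consumer.ConsumerRecord;
import org.apache.kafka.common.serialization.StringDeserializer;
import org.apache.spark.SparkConf;
import org.apache.spark.streaming.Duration;
import org.apache.spark.streaming.api.java.JavaInputDStream;
import org.apache.spark.streaming.api.java.JavaStreamingContext;
import org.apache.spark.streaming.kafka010.ConsumerStrategies;
import org.apache.spark.streaming.kafka010.KafkaUtils;
import org.apache.spark.streaming.kafka010.LocationStrategies;
import scala.Tuple2;

import java.util.ArrayList;
import java.util.HashMap;

public class Test04_window {
    public static void main(String[] args) throws InterruptedException {
        SparkConf conf = new SparkConf().setAppName("SparkStream").setMaster("local[2]");
        JavaStreamingContext jssc = new JavaStreamingContext(conf, Duration.apply(3000));
        jssc.checkpoint("ck");

        ArrayList<String> topics = new ArrayList<>();
        topics.add("atguigu");

        HashMap<String, Object> kafkaParams = new HashMap<>();
        kafkaParams.put(ConsumerConfig.BOOTSTRAP_SERVERS_CONFIG, "hadoop102:9092");
        kafkaParams.put(ConsumerConfig.KEY_DESERIALIZER_CLASS_CONFIG, StringDeserializer.class.getName());
        kafkaParams.put(ConsumerConfig.VALUE_DESERIALIZER_CLASS_CONFIG, StringDeserializer.class.getName());
        kafkaParams.put(ConsumerConfig.GROUP_ID_CONFIG, "sparkStreaming001");

        JavaInputDStream<ConsumerRecord<String, String>> directStream = KafkaUtils.createDirectStream(
                jssc,
                LocationStrategies.PreferBrokers(),
                ConsumerStrategies.Subscribe(topics, kafkaParams)
        );

        directStream
                .flatMapToPair(consumerRecord -> {
                    ArrayList<Tuple2<String, Integer>> list = new ArrayList<>();
                    if (consumerRecord.value() == null || "".equals(consumerRecord.value())) {
                        return list.iterator();
                    }
                    String[] words = consumerRecord.value().split("\\s+");
                    for (String word : words) {
                        list.add(new Tuple2<>(word, 1));
                    }
                    return list.iterator();
                })
                // Arguments: window length, then slide interval.
                // Both must be integer multiples of the batch interval (3000 ms here).
                .window(Duration.apply(6000), Duration.apply(3000))
                .reduceByKey((sum, elem) -> sum + elem) // stateless transformation, applied to each window
                .print();

        jssc.start();
        jssc.awaitTermination();
    }
}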
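With a 3000 ms batch interval, a 6000 ms window and a 3000 ms slide mean that every result covers the two most recent batches and a new result is produced after every batch. The plain-Java sketch below models that arithmetic and the multiple-of-batch-interval rule; it does not call Spark, and `WindowSketch`, `toBatchCount`, and `windowedCounts` are names invented for this illustration.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Plain-Java model of sliding windows over batches; it does not call Spark.
class WindowSketch {

    // Converts a duration to a number of batches, rejecting values that are not multiples of the batch interval.
    static int toBatchCount(long durationMs, long batchIntervalMs) {
        if (durationMs <= 0 || durationMs % batchIntervalMs != 0) {
            throw new IllegalArgumentException(
                    durationMs + " ms is not a positive multiple of " + batchIntervalMs + " ms");
        }
        return (int) (durationMs / batchIntervalMs);
    }

    // Word counts per window; each window ends at a batch and looks back windowBatches batches.
    static List<Map<String, Integer>> windowedCounts(
            List<List<String>> batches, int windowBatches, int slideBatches) {
        List<Map<String, Integer>> results = new ArrayList<>();
        for (int end = slideBatches - 1; end < batches.size(); end += slideBatches) {
            int start = Math.max(0, end - windowBatches + 1); // early windows are only partially filled
            Map<String, Integer> counts = new TreeMap<>();
            for (int i = start; i <= end; i++) {
                for (String word : batches.get(i)) {
                    counts.merge(word, 1, Integer::sum);
                }
            }
            results.add(counts);
        }
        return results;
    }

    public static void main(String[] args) {
        int window = toBatchCount(6000, 3000); // 2 batches per window
        int slide = toBatchCount(3000, 3000);  // one new window per batch
        List<List<String>> batches = Arrays.asList(
                Arrays.asList("a"), Arrays.asList("a", "b"), Arrays.asList("c"));
        // prints [{a=1}, {a=2, b=1}, {a=1, b=1, c=1}]
        System.out.println(windowedCounts(batches, window, slide));
    }
}
```

Note that the word `a` from the first batch is counted in the first two windows and then drops out of the third. Unlike the running total kept by `updateStateByKey`, a window forgets data once it slides past.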

Stateful transformation operator reduceByKeyAndWindow, with graceful shutdown

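import org.apache.kafka.clients.consumer.ConsumerConfig;
import org.apache.kafka.clients.consumer.ConsumerRecord;
import org.apache.kafka.common.serialization.StringDeserializer;
import org.apache.spark.SparkConf;
import org.apache.spark.api.java.function.Function2;
import org.apache.spark.streaming.Duration;
import org.apache.spark.streaming.api.java.JavaInputDStream;
import org.apache.spark.streaming.api.java.JavaStreamingContext;
import org.apache.spark.streaming.kafka010.ConsumerStrategies;
import org.apache.spark.streaming.kafka010.KafkaUtils;
import org.apache.spark.streaming.kafka010.LocationStrategies;
import scala.Tuple2;

import java.io.File;
import java.util.ArrayList;
import java.util.HashMap;

public class Test05_reduceByKeyAndWindow {
    public static void main(String[] args) throws InterruptedException {
        SparkConf conf = new SparkConf().setAppName("SparkStream").setMaster("local[2]");
        JavaStreamingContext jssc = new JavaStreamingContext(conf, Duration.apply(3000));
        jssc.checkpoint("ck");

        ArrayList<String> topics = new ArrayList<>();
        topics.add("atguigu");

        HashMap<String, Object> kafkaParams = new HashMap<>();
        kafkaParams.put(ConsumerConfig.BOOTSTRAP_SERVERS_CONFIG, "hadoop102:9092");
        kafkaParams.put(ConsumerConfig.KEY_DESERIALIZER_CLASS_CONFIG, StringDeserializer.class.getName());
        kafkaParams.put(ConsumerConfig.VALUE_DESERIALIZER_CLASS_CONFIG, StringDeserializer.class.getName());
        kafkaParams.put(ConsumerConfig.GROUP_ID_CONFIG, "sparkStreaming001");

        JavaInputDStream<ConsumerRecord<String, String>> directStream = KafkaUtils.createDirectStream(
                jssc,
                LocationStrategies.PreferBrokers(),
                ConsumerStrategies.Subscribe(topics, kafkaParams)
        );

        directStream
                .flatMapToPair(consumerRecord -> {
                    ArrayList<Tuple2<String, Integer>> list = new ArrayList<>();
                    if (consumerRecord.value() == null || "".equals(consumerRecord.value())) {
                        return list.iterator();
                    }
                    String[] words = consumerRecord.value().split("\\s+");
                    for (String word : words) {
                        list.add(new Tuple2<>(word, 1));
                    }
                    return list.iterator();
                })
                .reduceByKeyAndWindow(
                        // Function 1: add the values entering the window
                        new Function2<Integer, Integer, Integer>() {
                            @Override
                            public Integer call(Integer sum, Integer elem) throws Exception {
                                return sum + elem;
                            }
                        },
                        // Function 2 (optional, disabled here): subtract the values leaving the window.
                        // With it, a key stays in the output even after its count drops to 0.
                        // new Function2<Integer, Integer, Integer>() {
                        //     @Override
                        //     public Integer call(Integer sum, Integer before) throws Exception {
                        //         return sum - before;
                        //     }
                        // },
                        // Window length
                        Duration.apply(6000),
                        // Slide interval
                        Duration.apply(3000)
                )
                // .reduceByKey((sum, elem) -> sum + elem) // stateless transformation
                .print();

        // Watcher thread: polls every 500 ms for a marker file named "stopStreaming"
        new Thread(new Runnable() {
            @Override
            public void run() {
                while (true) {
                    try {
                        Thread.sleep(500);
                        File file = new File("stopStreaming");
                        if (file.exists()) {
                            // Graceful shutdown: finish the batches already received, then stop
                            // (first argument: also stop the SparkContext; second: stop gracefully)
                            jssc.stop(true, true);
                            System.exit(0);
                        }
                    } catch (InterruptedException e) {
                        throw new RuntimeException(e);
                    }
                }
            }
        }).start();

        jssc.start();
        jssc.awaitTermination();
    }
}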
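The commented-out second function above is the inverse of the reduce function. Its purpose is to let each window be derived from the previous one (add the batch that enters, subtract the batch that leaves) instead of recomputing every window from scratch. The following plain-Java sketch shows that arithmetic for word counts; it is an illustration under that assumption, not Spark's implementation, and `IncrementalWindowSketch` and `slide` are hypothetical names.

```java
import java.util.Arrays;
import java.util.Collections;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Plain-Java sketch of incremental window updates; no Spark API is used.
class IncrementalWindowSketch {

    // next window = previous window + entering batch - leaving batch
    static Map<String, Integer> slide(
            Map<String, Integer> previous, List<String> entering, List<String> leaving) {
        Map<String, Integer> next = new TreeMap<>(previous);
        for (String word : entering) {
            next.merge(word, 1, Integer::sum);  // reduce function: sum + elem
        }
        for (String word : leaving) {
            next.merge(word, -1, Integer::sum); // inverse function: sum - before
        }
        return next;
    }

    public static void main(String[] args) {
        // Window over batches ["a"] and ["a", "b"]
        Map<String, Integer> window = new TreeMap<>();
        window.put("a", 2);
        window.put("b", 1);

        // Batch ["c"] enters, batch ["a"] leaves
        window = slide(window, Arrays.asList("c"), Arrays.asList("a"));
        System.out.println(window); // prints {a=1, b=1, c=1}

        // An empty batch enters, batch ["a", "b"] leaves
        window = slide(window, Collections.emptyList(), Arrays.asList("a", "b"));
        System.out.println(window); // prints {a=0, b=0, c=1}
    }
}
```

The second result shows the side effect noted in the comment on function 2: keys whose count has fallen to 0 remain in the output. In Spark, this incremental form also requires a checkpoint directory, which the example already sets with `jssc.checkpoint("ck")`.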