原文地址: https://blog.csdn.net/njpjsoftdev/article/details/52956508

我们在生产环境中使用Druid也遇到了很多问题,通过阅读官网文档、源码以及社区提问解决或部分解决了很多问题,现将遇到的问题、解决方案以及调优经验总结如下:

问题一:Hadoop batch ingestion失败,日志错误为“No buckets?…“

解决方案:这个问题当初困扰了我们大概一周的时间,对于大部分刚接触Druid人来说基本都会遇到时区问题。

其实问题很简单,主要在于集群工作时区与导入数据时区不一致。由于Druid是时间序列数据库,所以对时间非常敏感。Druid底层采用绝对毫秒数存储时间,如果不指定时区,默认输出为零时区时间,即ISO8601中yyyy-MM-ddThh:mm:ss.SSSZ。我们生产环境中采用东八区,也就是Asia/Hong Kong时区,所以需要将集群所有UTC时间调整为UTC+08:00;同时导入的数据的timestamp列格式必须为:yyyy-MM-ddThh:mm:ss.SSS+08:00

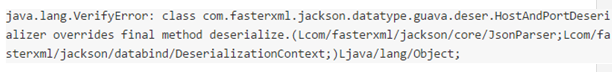

问题二:Druid与Hadoop在Jackson上出现版本冲突,日志错误信息:

解决方案:当前版本的Druid是用Hadoop-2.3.0版本进行编译的,针对上述出现的问题,要么更换Hadoop版本为2.3.0(我们生产环境就是这么做的),要么按照该文章中的方法解决:

https://github.com/druid-io/druid/blob/master/docs/content/operations/other-hadoop.md

问题三:Segment的建议大小在300-700MB之间,我们目前是一个小时聚合一次,每小时的原始数据大概在50G左右,生成的Segments数据在10G左右,Segments过大影响查询性能,该如何处理?

解决方案:

目前有两种途径:

- 减小SegmentGranularity

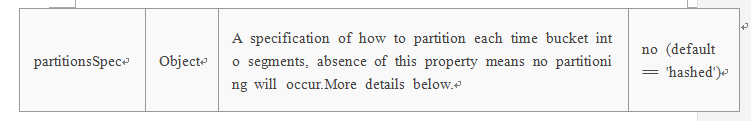

按小时粒度聚合,可以考虑减少到分钟级别,比如20分钟。 - 在TunningConfig中增加partitionsSpec,官方解释如下:

问题四:索引任务在handoff失败后很长时间内无法释放,同时一直waiting for handoff,错误日志信息如下:

2016-05-26 22:05:25,805 ERROR i.d.s.r.p.CoordinatorBasedSegmentHandoffNotifier [coordinator_handoff_scheduled_0] Exception while checking handoff for dataSource[hm_flowinfo_analysis] Segment[SegmentDescriptor{interval=2016-05-26T21:00:00.000+0800/2016-05-26T22:00:00.000+0800, version=’2016-05-26T21:00:00.000+0800’, partitionNumber=0}], Will try again after [60000]secs

解决方案:

这个问题在Druid使用中非常常见,在社区提问的相似问题也很多,问题原因主要集中在Historical Node没有内存去加载Segment。排查此类问题的方法总结如下:

-

首先引用开发者对该问题的解答

I guess due to the network storage the segment is not being pushed to deep storage at all.

Do you see a segment metadata entry in DB for above segment ?

If no, then check for any exception in the task logs or overlord logs related to segment publishing.

If the metadata entry is present in the db, make sure you have enough free space available on the historical nodes to load the segments and there are no exceptions in coordinator/historical while loading the segment. -

以及对handoff阶段工作流的简单解释

The coordinator is the process that detects the new segment built by the indexing task and signals the historical nodes to load the segment. The indexing task will only complete once it gets notification that a historical has picked up the segment so it knows it can stop serving it. The coordinator logs should help determine whether or not the coordinator noticed the new segment, if it tried to signal a historical to load it but failed, if there were rules preventing it from loading, etc. Historical logs would show you if a historical received the load order but failed for some reason (e.g. out of memory). -

所以,综合上述两方面,此问题的根本原因总结如下

Indexing task如果长时间没有释放,是因为没有收到Historical Nodes加载成功后的返回信息。CoordinatorBasedSegmentHandoffNotifier类主要负责注册等待Handoff的Segments以及检查待Handoff的Segments的状态。

注册等待Handoff的Segments主要使用内部的ConcurrentMap保存Segments的相关信息;

检查待Handoff的Segments的状态主要通过:

(1)首先通过Apache curator向Zookeeper上查询Coordinator实例;

(2)CoordinatorClient内部封装了一个HttpClient,向存活的Coordinator实例发送HttpGet请求,获取当前集群中所有Segments的load信息List;

(3)对比内部缓存的ConcurrentMap与List,成功Handoff则删除信息,失败则会打印出上述log,同时Coordinator会每隔一分钟去元信息库中同步已发布的Segments,所以Handoff失败也会每隔一分钟去重试。

最终解决方案总结如下

-

检查元信息库是否已注册此Segment的信息,如果没有,那么检查task logs(Middle Manager)或者Overlord关于此Segment发布时的log,是否有异常抛出;

-

如果元信息库中已注册该Segment,那么检查Coordinator是否已检测到此Segments已生成,如果Coordinator试图通知Historical Nodes去加载但是失败了,检查是否有rules 阻止加载此Segments等;以及检查在Coordinator中通知load此Segment的Historical Nodes在加载该Segment的过程中是否出现了异常。

问题五:在0.9.1.1版本中新引入的Kafka Indexing Service,如果设置了过短的Kafka Retention时间,同时Druid消费速度又小于Retention速率,那么会出现offset过期,即还未来得及消费的数据已经被Kafka删除了,在Peon日志会一直出现offsetOutofRangeException,并且后续的所有任务全部失败。

解决方案:

这个问题出现的情况比较极端,由于我们使用场景数据量大,同时集群带宽资源不足,所有Kafka Retention时间设置为2小时,不过Kafka提供auto.offset.reset这个策略已应对offset过期的问题,但是在spec文件中配置了{“auto.offset.reset” : “latest” },表示如果offset过期则自动rewind到最新的offset,通过跟踪Overlord日志以及Peon日志发现,Overlord日志中该配置项已生效,但是在Peon日志中该配置项被设置为“None”,“None”表示offset过期不作任何处理,只抛出异常,即offsetOutofRangeException。在Peon中不生效是因为在代码中写死了该项,主要是为了满足Exactly-once Semantics,不过开发者在写死的同时,并未考虑到如何解决这种死循环的问题。

对于该问题,开发者给出的回答如下:

Because of your 2 hour data retention, I guess you’re hitting a case where the Druid Kafka indexing tasks are trying to read offsets that have already been deleted. This causes problems with the exactly-once transaction handling scheme, which requires that all offsets be read in order, without skipping any. The Github issue https://github.com/druid-io/druid/issues/3195 is about making this better – basically you would have an option to reset the Kafka indexing to latest (this would involve resetting the ingestion metadata Druid stores for the datasource).

In the meantime, maybe it’s possible to make this happen less often by either extending your Kafka retention, or by setting your Druid taskDuration lower than the default of 1 hour.

所以,对于此问题,目前并没有彻底的解决方案,不过以下方案可以部分或暂时性解决:

-

尽量增大Kafka Retention时间,我们设置2小时确实过于极端

-

减少taskDuration;

-

在上述两个都无法彻底解决问题的情况下,可以清空元信息库中druid_dataSource表,这张表中记录了所有消费的Kafka topic对应的partition以及offset信息,同时重启MiddleManager节点。

不过,在0.9.2-milestone版本中,该特性应该会进一步改进,以下引入自Github :

The Kafka indexing service can get into a stuck state where it is trying to read the Kafka offset following the last one recorded in the dataSource metadata table but can’t read it because Kafka’s retention period has elapsed and that message is no longer available. We probably don’t want to automatically jump to a valid Kafka offset since we would have missed events and would no longer have ingested each message exactly once, so currently we start throwing exceptions and the user needs to acknowledge what happened by removing the last committed offset from the dataSource table.

It would be nice to include an API that will help them by either removing the dataSource table entry or setting it to a valid Kafka offset, but would still require a manual action/acknowledgment by the user.