Author: 紫气东来

Source: https://zhuanlan.zhihu.com/p/632369186

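1. Overview

Prompt engineering, also known as in-context prompting, refers to methods for interacting with an LLM to steer its behavior toward a desired result without updating the model weights. In prompt engineering, the task description is embedded in the input: for example, rather than implicitly passing the model certain parameters, the task is given directly in the form of a question. In the related setting of "prompt-based learning", one or more tasks are converted into a prompt-based dataset that is then used to train a language model.

Prompt engineering is about more than designing and developing prompts. It covers a range of skills and techniques for interacting with and building on large language models, and it plays an important role in interfacing with LLMs and in understanding their capabilities. Users can apply prompt engineering to improve the safety of LLMs, or to extend them, for example by augmenting them with domain knowledge and external tools.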

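A prompt can contain any of the following elements:

Instruction: the specific task or instruction you want the model to perform.

Context: external information or additional context that steers the model toward a better response.

Input data: the content or question supplied by the user.

Output indicator: the type or format of the output.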
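
As a minimal sketch of how these four elements fit together, the snippet below joins whichever elements are present into a single prompt string. The `PromptParts` class, the `build_prompt` helper, and the sentiment example are illustrative assumptions, not something defined in the original article.

```python
from dataclasses import dataclass


@dataclass
class PromptParts:
    """The four optional prompt elements (hypothetical field names)."""
    instruction: str = ""
    context: str = ""
    input_data: str = ""
    output_indicator: str = ""


def build_prompt(parts: PromptParts) -> str:
    """Join the non-empty elements into one prompt, one block per element."""
    blocks = [
        parts.instruction,
        parts.context,
        parts.input_data,
        parts.output_indicator,
    ]
    return "\n\n".join(block.strip() for block in blocks if block.strip())


# Illustrative example: no context is supplied, so that block is skipped.
prompt = build_prompt(PromptParts(
    instruction="Classify the text as neutral, negative, or positive.",
    input_data="Text: I think the food was okay.",
    output_indicator="Sentiment:",
))
print(prompt)
```

Not every prompt needs all four elements, which is why empty fields are simply dropped.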

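The following are general tips for designing prompts:

Start simple: prompt design is an iterative process that takes a lot of experimentation to get the best results. Start with a simple prompt and keep adding elements and context as you aim for better results.

Instruction: you can design effective prompts for a variety of simple tasks by using commands that tell the model what to do, such as "Write", "Classify", "Summarize", "Translate", or "Order".

Specificity: the more specific and detailed the prompt is about the instruction and the task, the better the results. Providing examples in the prompt is particularly effective for getting output in a specific format.

Avoid imprecision: the analogy with effective communication applies here. The more direct the message, the more effectively it gets across.

To do or not to do: another common tip is to avoid saying what not to do, and to say what to do instead.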

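2. Prompting Techniques

By now it is clear that improving prompts helps get better results across different tasks. That is the whole idea behind prompt engineering. In this section we cover more advanced prompt engineering techniques that let us accomplish more complex and interesting tasks. All test cases were obtained with text-davinci-003.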

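2.1 Zero-shot and Few-shot

Zero-shot and few-shot prompting are the most basic prompting techniques. LLMs that have been trained on large amounts of data and then instruction-tuned can perform tasks zero-shot, that is, by feeding the task text directly to the model and asking for an answer.

For example, a Zero-shot input:

Output:

Few-shot learning provides a set of high-quality demonstrations on the target task, each consisting of an input and the desired output. Having first seen good examples, the model can better understand the human intent and the criteria for the kind of answer that is wanted. As a result, few-shot learning usually performs better than zero-shot learning. However, it comes at the cost of more token consumption, and it may hit the context length limit when the input and output texts are long.

For example, a Few-shot input:

Output: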

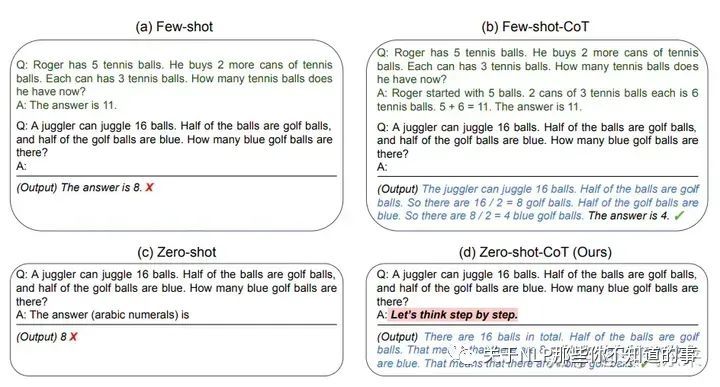
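
To make the difference between the two styles concrete, here is a small sketch that builds both kinds of prompt with the same helper. The function name `build_few_shot_prompt`, the `Text:`/`Sentiment:` labels, and the demonstrations are illustrative assumptions rather than the article's own test cases.

```python
def build_few_shot_prompt(demos, query, input_label="Text", output_label="Sentiment"):
    """Format (input, output) demonstrations followed by the unanswered query.

    With an empty `demos` list the result is a plain zero-shot prompt.
    """
    lines = []
    for demo_input, demo_output in demos:
        lines.append(f"{input_label}: {demo_input}")
        lines.append(f"{output_label}: {demo_output}")
        lines.append("")  # blank line between demonstrations
    lines.append(f"{input_label}: {query}")
    lines.append(f"{output_label}:")
    return "\n".join(lines)


# Hypothetical demonstrations for a sentiment task.
demos = [("This is awesome!", "Positive"), ("This is bad!", "Negative")]

zero_shot = build_few_shot_prompt([], "What a horrible show!")
few_shot = build_few_shot_prompt(demos, "What a horrible show!")

print(zero_shot)
print("---")
print(few_shot)
```

The few-shot prompt is strictly longer than the zero-shot one, which is the extra token cost mentioned above.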

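2.2 Chain-of-Thought (CoT) Prompting

CoT prompting generates a sequence of short sentences that describe the reasoning step by step, known as reasoning chains or rationales, and eventually lead to the final answer. The benefit of CoT is more pronounced for complex reasoning tasks, and it is more evident when using large models (e.g., with more than 50B parameters).

For example, a Few-shot CoT input:

Output:

For example, a Zero-shot CoT input:

Output: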
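
The two CoT variants differ only in how the prompt is assembled, which the sketch below tries to show. The helper names and the `Q:`/`A:` layout are assumptions made for illustration. The trigger phrase "Let's think step by step." is the one used in "Large Language Models are Zero-Shot Reasoners", and the demonstration is the tennis-ball example commonly used to illustrate few-shot CoT.

```python
COT_TRIGGER = "Let's think step by step."


def zero_shot_cot_prompt(question):
    """Zero-shot CoT: no demonstrations, just a trigger phrase after 'A:'."""
    return f"Q: {question}\nA: {COT_TRIGGER}"


def few_shot_cot_prompt(demos, question):
    """Few-shot CoT: each demo is (question, rationale, answer).

    The rationale is written out before the answer so that the model
    imitates the step-by-step style for the new question.
    """
    blocks = [
        f"Q: {q}\nA: {rationale} The answer is {answer}."
        for q, rationale, answer in demos
    ]
    blocks.append(f"Q: {question}\nA:")
    return "\n\n".join(blocks)


demos = [(
    "Roger has 5 tennis balls. He buys 2 more cans of tennis balls. "
    "Each can has 3 tennis balls. How many tennis balls does he have now?",
    "Roger started with 5 balls. 2 cans of 3 tennis balls each is 6 tennis balls. 5 + 6 = 11.",
    "11",
)]
question = "The cafeteria had 23 apples. They used 20 and bought 6 more. How many apples do they have?"

print(zero_shot_cot_prompt(question))
print("---")
print(few_shot_cot_prompt(demos, question))
```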

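2.3 Instruction Prompting

Instructed LMs (e.g., InstructGPT) fine-tune a pretrained model on high-quality data so that the LM better understands user intent and follows instructions. RLHF is a commonly used method. The benefit of instruction-style fine-tuning is that it makes the model more aligned with human intent and greatly reduces the cost of communication.

When interacting with instruction models, we should describe the task requirements in detail, being as specific and precise as possible, and avoid saying "don't do X" in favor of stating exactly what to do. For example, an input aimed at a specific audience:

Output:

In-context instruction learning combines few-shot learning with instruction prompting. The prompt includes several demonstrations across different tasks, each consisting of an instruction, a task input, and an output. Note that the original experiments covered only classification tasks, and the instruction prompt contains all label options.

For example, an In-context instruction learning input:

Output: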
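
A rough sketch of how such a prompt could be assembled is shown below. The `Definition:`/`Input:`/`Output:` labels, the `build_icil_prompt` helper, and the two demonstration tasks are assumptions chosen for illustration. Each instruction lists all of its label options, as described above.

```python
def build_icil_prompt(demos, instruction, task_input):
    """Concatenate cross-task demonstrations, then the target task.

    demos: list of dicts with 'instruction', 'input' and 'output' keys.
    The final block leaves 'Output:' empty for the model to complete.
    """
    def render(instr, inp, out=""):
        return f"Definition: {instr}\nInput: {inp}\nOutput: {out}".rstrip()

    blocks = [render(d["instruction"], d["input"], d["output"]) for d in demos]
    blocks.append(render(instruction, task_input))
    return "\n\n".join(blocks)


# Hypothetical demonstrations drawn from two different classification tasks.
demos = [
    {
        "instruction": "Decide whether the review is 'positive' or 'negative'.",
        "input": "The battery lasts all day and the screen is gorgeous.",
        "output": "positive",
    },
    {
        "instruction": "Decide whether the question is about 'sports' or 'science'.",
        "input": "How many players are on a football team?",
        "output": "sports",
    },
]

prompt = build_icil_prompt(
    demos,
    instruction="Decide whether the email is 'spam' or 'not spam'.",
    task_input="You have won a free cruise. Click here to claim it.",
)
print(prompt)
```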

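2.4 Self-Consistency Sampling

Self-consistency aims "to replace the naive greedy decoding used in chain-of-thought prompting". The idea is to sample multiple diverse reasoning paths through few-shot CoT and use the generations to select the most consistent answer. This helps boost the performance of CoT prompting on tasks involving arithmetic and commonsense reasoning.

Try the following arithmetic reasoning problem:

When I was 6 my sister was half my age. Now I’m 70 how old is my sister?

The result is as follows:

The result is wrong. Next we try the problem with self-consistency, using the following input:

Output 1:

Output 2:

Output 3: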
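
The aggregation step of self-consistency is a majority vote over the final answers of the sampled reasoning paths. The sketch below assumes each completion ends with a sentence of the form "The answer is N." The three completions are hard-coded, hypothetical stand-ins for samples drawn from a model at a non-zero temperature, not the outputs recorded in the article.

```python
import re
from collections import Counter


def extract_answer(completion):
    """Pull the number following 'The answer is', or None if absent."""
    match = re.search(r"The answer is\s+(-?\d+(?:\.\d+)?)", completion)
    return match.group(1) if match else None


def self_consistent_answer(completions):
    """Majority vote over the answers extracted from sampled completions."""
    answers = [a for a in map(extract_answer, completions) if a is not None]
    if not answers:
        return None
    return Counter(answers).most_common(1)[0][0]


# Hypothetical sampled reasoning paths for the sister-age question.
sampled = [
    "When I was 6 my sister was half my age, so she was 3. "
    "Now I am 70, so she is 70 - 3 = 67. The answer is 67.",
    "When the narrator was 6, his sister was 3. Now that the narrator "
    "is 70, his sister is 70 - 3 = 67. The answer is 67.",
    "When I was 6 my sister was half my age, so now she is 70 / 2 = 35. "
    "The answer is 35.",
]

print(self_consistent_answer(sampled))
```

Two of the three paths agree on 67 (the sister is always 3 years younger), so the vote overrides the single path that halves 70.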

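2.5 Automatic Prompt Engineer (APE)

APE is a method that searches over a pool of model-generated instruction candidates and then filters the candidate set according to a chosen score function, ultimately choosing the candidate with the highest score. The process can be summarized in 3 stages:

Prompt the LLM to generate instruction candidates based on a small set of demonstrations in the form of input-output pairs. E.g.: {{Given desired input-output pairs}}\n\nThe instruction is ;

Score each candidate instruction with the chosen score function and keep the highest-scoring candidates;

Use an iterative Monte Carlo search to improve the best candidates by prompting for semantically similar variants (e.g., Generate a variation of the following instruction while keeping the semantic meaning.\n\nInput: ...\n\nOutput:...).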
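
The scoring and filtering part of APE can be sketched as follows, assuming execution accuracy as the score function (the fraction of demonstrations for which the model's output under a candidate instruction matches the expected output). `toy_model` is a hypothetical stand-in for a real LLM call that only reacts to two keywords, and the candidate instructions are hard-coded instead of being generated by a model. The Monte Carlo resampling of variants is not implemented here.

```python
def toy_model(instruction, x):
    """Hypothetical stand-in for an LLM call; it only reacts to two keywords."""
    text = instruction.lower()
    if "uppercase" in text:
        return x.upper()
    if "reverse" in text:
        return x[::-1]
    return x


def execution_accuracy(instruction, pairs, model):
    """Fraction of (input, output) pairs the model reproduces exactly."""
    hits = sum(model(instruction, x) == y for x, y in pairs)
    return hits / len(pairs)


def select_best(candidates, pairs, model, top_k=1):
    """Rank candidate instructions by score and keep the top_k."""
    ranked = sorted(
        candidates,
        key=lambda c: execution_accuracy(c, pairs, model),
        reverse=True,
    )
    return ranked[:top_k]


# Illustrative demonstrations and candidate pool.
pairs = [("cat", "CAT"), ("dog", "DOG")]
candidates = [
    "Repeat the input.",
    "Reverse the input.",
    "Write the input in uppercase.",
]

best = select_best(candidates, pairs, toy_model)
print(best)
```

With a real model the score would typically be estimated on a subset of the training pairs, since every evaluation costs an LLM call.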

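To construct CoT prompts automatically, Shum et al. (2023) suggest an augment-prune-select procedure with the following 3 steps:

Augment: generate multiple pseudo chains of thought for a given question using Few-shot or Zero-shot CoT prompts;

Prune: prune the pseudo chains based on whether the generated answers match the ground truth;

Select: apply a variance-reduced policy gradient strategy to learn the probability distribution over the selected examples, treating the probability distribution over examples as the policy and the validation set accuracy as the reward.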
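
The prune step is the easiest of the three to show in code. The sketch below assumes the augment step has already produced pseudo chains as (rationale, answer) pairs per question. The `build_pool` helper and the sample data are hypothetical, and the policy-gradient select step is not covered.

```python
def build_pool(pseudo_chains, gold_answers):
    """Keep only the pseudo chains whose answer matches the ground truth.

    pseudo_chains: dict mapping question -> list of (rationale, answer)
    gold_answers:  dict mapping question -> ground-truth answer string
    """
    pool = []
    for question, chains in pseudo_chains.items():
        gold = gold_answers[question].strip()
        for rationale, answer in chains:
            if answer.strip() == gold:
                pool.append({
                    "question": question,
                    "rationale": rationale,
                    "answer": gold,
                })
    return pool


# Hypothetical output of the augment step for one question.
pseudo_chains = {
    "What is 3 + 4 * 2?": [
        ("4 * 2 = 8, and 3 + 8 = 11.", "11"),
        ("3 + 4 = 7, and 7 * 2 = 14.", "14"),
    ],
}
gold_answers = {"What is 3 + 4 * 2?": "11"}

pool = build_pool(pseudo_chains, gold_answers)
print(pool)
```

Matching on the final answer does not guarantee that the rationale itself is sound, so the surviving chains are still only candidates for the select step.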

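Zhang et al. (2023) instead use clustering techniques to sample questions and then generate chains. They observed that LLMs tend to make certain types of mistakes. Errors of one type can be similar in the embedding space and thus get grouped together. By sampling only one or a few examples from frequent-error clusters, we can prevent too many wrong demonstrations of one error type and collect a diverse set of examples.

Question clustering: embed the questions and cluster them with k-means.

Demonstration selection: select a set of representative questions from each cluster, i.e., one demonstration per cluster. The samples in each cluster are sorted by distance to the cluster centroid, and those closest to the centroid are selected first.

Rationale generation: use Zero-shot CoT to generate reasoning chains for the selected questions and construct a Few-shot prompt to run inference.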
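
The clustering and selection steps can be sketched in plain Python as below. This is a toy version under stated assumptions: the 2-D vectors stand in for real sentence embeddings, the tiny `kmeans` uses the first k points as initial centroids so that the run is deterministic, and the questions are made up. A real pipeline would use an embedding model and a library k-means implementation.

```python
import math


def assign(points, centroids):
    """Index of the nearest centroid for every point."""
    return [
        min(range(len(centroids)), key=lambda c: math.dist(p, centroids[c]))
        for p in points
    ]


def kmeans(points, k, iterations=20):
    """Tiny k-means with a deterministic start (first k points as centroids)."""
    centroids = [list(p) for p in points[:k]]
    for _ in range(iterations):
        labels = assign(points, centroids)
        for c in range(k):
            members = [p for p, a in zip(points, labels) if a == c]
            if members:  # keep the old centroid if a cluster empties out
                centroids[c] = [sum(dim) / len(members) for dim in zip(*members)]
    return assign(points, centroids), centroids


def pick_demonstrations(questions, embeddings, k):
    """Pick, for each cluster, the question closest to the centroid."""
    labels, centroids = kmeans(embeddings, k)
    chosen = []
    for c in range(k):
        members = [i for i, a in enumerate(labels) if a == c]
        if members:
            best = min(members, key=lambda i: math.dist(embeddings[i], centroids[c]))
            chosen.append(questions[best])
    return chosen


# Made-up questions with toy 2-D "embeddings" forming two clear groups.
questions = [
    "What is 12 + 7?",
    "What day comes after Monday?",
    "What is 9 * 8?",
    "How many days are in a leap year?",
    "What is 15 - 6?",
    "Which month follows March?",
]
embeddings = [
    [0.0, 0.0], [5.0, 5.0],
    [1.0, 0.0], [6.0, 5.0],
    [0.4, 0.1], [5.4, 5.1],
]

print(pick_demonstrations(questions, embeddings, k=2))
```

The selected questions would then be passed through Zero-shot CoT to obtain their rationales, as in the third step.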

3. Further Resources

3.1 Tools

OpenAI Cookbook has many in-depth examples for how to utilize LLM efficiently.

LangChain, a library for combining language models with other components to build applications.

Prompt Engineering Guide repo contains a pretty comprehensive collection of education materials on prompt engineering.

learnprompting.org

PromptPerfect

Semantic Kernel

3.2 Datasets

Anthropic's Red Team dataset (paper)

Awesome ChatGPT Prompts

DiffusionDB

Midjourney Prompts

P3 - Public Pool of Prompts

PartiPrompts

Real Toxicity Prompts

Stable Diffusion Dataset

WritingPrompts

3.3 Related Papers

Surveys

Natural Language Reasoning, A Survey (March 2023)

Augmented Language Models: a Survey (Feb 2023)

A Survey for In-context Learning (Dec 2022)

Towards Reasoning in Large Language Models: A Survey (Dec 2022)

Reasoning with Language Model Prompting: A Survey (Dec 2022)

Emergent Abilities of Large Language Models (Jun 2022)

A Taxonomy of Prompt Modifiers for Text-To-Image Generation (Apr 2022)

Pre-train, Prompt, and Predict: A Systematic Survey of Prompting Methods in Natural Language Processing (Jul 2021)

Methods

Self-Refine: Iterative Refinement with Self-Feedback (Mar 2023)

kNN Prompting: Beyond-Context Learning with Calibration-Free Nearest Neighbor Inference (Mar 2023)

Visual-Language Prompt Tuning with Knowledge-guided Context Optimization (Mar 2023)

Fairness-guided Few-shot Prompting for Large Language Models (Mar 2023)

Context-faithful Prompting for Large Language Models (Mar 2023)

Is Prompt All You Need? No. A Comprehensive and Broader View of Instruction Learning (Mar 2023)

UPRISE: Universal Prompt Retrieval for Improving Zero-Shot Evaluation (Mar 2023)

Model-tuning Via Prompts Makes NLP Models Adversarially Robust (Mar 2023)

Structure Pretraining and Prompt Tuning for Knowledge Graph Transfer (March 2023)

CoTEVer: Chain of Thought Prompting Annotation Toolkit for Explanation Verification (March 2023)

Larger language models do in-context learning differently (March 2023)

OpenICL: An Open-Source Framework for In-context Learning (March 2023)

Dynamic Prompting: A Unified Framework for Prompt Tuning (March 2023)

Multitask Prompt Tuning Enables Parameter-Efficient Transfer Learning (March 2023)

Effectiveness of Data Augmentation for Prefix Tuning with Limited Data (March 2023)

Mixture of Soft Prompts for Controllable Data Generation (March 2023)

Prompt, Generate, then Cache: Cascade of Foundation Models makes Strong Few-shot Learners (March 2023)

How Robust is GPT-3.5 to Predecessors? A Comprehensive Study on Language Understanding Tasks (March 2023)

Can ChatGPT Understand Too? A Comparative Study on ChatGPT and Fine-tuned BERT (Feb 2023)

EvoPrompting: Language Models for Code-Level Neural Architecture Search (Feb 2023)

In-Context Instruction Learning (Feb 2023)

Chain of Hindsight Aligns Language Models with Feedback (Feb 2023)

Language Is Not All You Need: Aligning Perception with Language Models (Feb 2023)

Automatic Prompt Augmentation and Selection with Chain-of-Thought from Labeled Data (Feb 2023)

Active Prompting with Chain-of-Thought for Large Language Models (Feb 2023)

More than you've asked for: A Comprehensive Analysis of Novel Prompt Injection Threats to Application-Integrated Large Language Models (Feb 2023)

A Prompt Pattern Catalog to Enhance Prompt Engineering with ChatGPT (Feb 2023)

Guiding Large Language Models via Directional Stimulus Prompting (Feb 2023)

How Does In-Context Learning Help Prompt Tuning? (Feb 2023)

Scalable Prompt Generation for Semi-supervised Learning with Language Models (Feb 2023)

Bounding the Capabilities of Large Language Models in Open Text Generation with Prompt Constraints (Feb 2023)

À-la-carte Prompt Tuning (APT): Combining Distinct Data Via Composable Prompting (Feb 2023)

GraphPrompt: Unifying Pre-Training and Downstream Tasks for Graph Neural Networks (Feb 2023)

The Capacity for Moral Self-Correction in Large Language Models (Feb 2023)

SwitchPrompt: Learning Domain-Specific Gated Soft Prompts for Classification in Low-Resource Domains (Feb 2023)

Evaluating the Robustness of Discrete Prompts (Feb 2023)

Compositional Exemplars for In-context Learning (Feb 2023)

Hard Prompts Made Easy: Gradient-Based Discrete Optimization for Prompt Tuning and Discovery (Feb 2023)

Multimodal Chain-of-Thought Reasoning in Language Models (Feb 2023)

Large Language Models Can Be Easily Distracted by Irrelevant Context (Feb 2023)

Synthetic Prompting: Generating Chain-of-Thought Demonstrations for Large Language Models (Feb 2023)

Progressive Prompts: Continual Learning for Language Models (Jan 2023)

Batch Prompting: Efficient Inference with LLM APIs (Jan 2023)

Demonstrate-Search-Predict: Composing retrieval and language models for knowledge-intensive NLP (Dec 2022)

On Second Thought, Let's Not Think Step by Step! Bias and Toxicity in Zero-Shot Reasoning (Dec 2022)

Constitutional AI: Harmlessness from AI Feedback (Dec 2022)

Successive Prompting for Decomposing Complex Questions (Dec 2022)

Large Language Models are reasoners with Self-Verification (Dec 2022)

Discovering Language Model Behaviors with Model-Written Evaluations (Dec 2022)

Structured Prompting: Scaling In-Context Learning to 1,000 Examples (Dec 2022)

PAL: Program-aided Language Models (Nov 2022)

Large Language Models Are Human-Level Prompt Engineers (Nov 2022)

Ignore Previous Prompt: Attack Techniques For Language Models (Nov 2022)

Machine Generated Text: A Comprehensive Survey of Threat Models and Detection Methods (Nov 2022)

Teaching Algorithmic Reasoning via In-context Learning (Nov 2022)

Enhancing Self-Consistency and Performance of Pre-Trained Language Models through Natural Language Inference (Nov 2022)

Ask Me Anything: A simple strategy for prompting language models (Oct 2022)

Recitation-Augmented Language Models (Oct 2022)

ReAct: Synergizing Reasoning and Acting in Language Models (Oct 2022)

Prompting GPT-3 To Be Reliable (Oct 2022)

Decomposed Prompting: A Modular Approach for Solving Complex Tasks (Oct 2022)

Language Models Are Greedy Reasoners: A Systematic Formal Analysis of Chain-of-Thought (Oct 2022)

Evaluating the Susceptibility of Pre-Trained Language Models via Handcrafted Adversarial Examples (Sep 2022)

Dynamic Prompt Learning via Policy Gradient for Semi-structured Mathematical Reasoning (Sep 2022)

Promptagator: Few-shot Dense Retrieval From 8 Examples (Sep 2022)

Atlas: Few-shot Learning with Retrieval Augmented Language Models (Nov 2022)

DocPrompting: Generating Code by Retrieving the Docs (July 2022)

On the Advance of Making Language Models Better Reasoners (June 2022)

Large Language Models are Zero-Shot Reasoners (May 2022)

Maieutic Prompting: Logically Consistent Reasoning with Recursive Explanations (May 2022)

MRKL Systems: A modular, neuro-symbolic architecture that combines large language models, external knowledge sources and discrete reasoning (May 2022)

PPT: Pre-trained Prompt Tuning for Few-shot Learning (May 2022)

Toxicity Detection with Generative Prompt-based Inference (May 2022)

Learning to Transfer Prompts for Text Generation (May 2022)

The Unreliability of Explanations in Few-shot Prompting for Textual Reasoning (May 2022)

A Taxonomy of Prompt Modifiers for Text-To-Image Generation (Apr 2022)

PromptChainer: Chaining Large Language Model Prompts through Visual Programming (Mar 2022)

Self-Consistency Improves Chain of Thought Reasoning in Language Models (March 2022)

Training language models to follow instructions with human feedback

Rethinking the Role of Demonstrations: What Makes In-Context Learning Work? (Feb 2022)

Chain of Thought Prompting Elicits Reasoning in Large Language Models (Jan 2022)

Show Your Work: Scratchpads for Intermediate Computation with Language Models (Nov 2021)

AI Chains: Transparent and Controllable Human-AI Interaction by Chaining Large Language Model Prompts (Oct 2021)

Generated Knowledge Prompting for Commonsense Reasoning (Oct 2021)

Multitask Prompted Training Enables Zero-Shot Task Generalization (Oct 2021)

Reframing Instructional Prompts to GPTk's Language (Sep 2021)

Design Guidelines for Prompt Engineering Text-to-Image Generative Models (Sep 2021)

Making Pre-trained Language Models Better Few-shot Learners (Aug 2021)

Fantastically Ordered Prompts and Where to Find Them: Overcoming Few-Shot Prompt Order Sensitivity (April 2021)

BERTese: Learning to Speak to BERT (April 2021)

The Power of Scale for Parameter-Efficient Prompt Tuning (April 2021)

Prompt Programming for Large Language Models: Beyond the Few-Shot Paradigm (Feb 2021)

Calibrate Before Use: Improving Few-Shot Performance of Language Models (Feb 2021)

Prefix-Tuning: Optimizing Continuous Prompts for Generation (Jan 2021)

Learning to Generate Task-Specific Adapters from Task Description (Jan 2021)

Making Pre-trained Language Models Better Few-shot Learners (Dec 2020)

Learning from Task Descriptions (Nov 2020)

AutoPrompt: Eliciting Knowledge from Language Models with Automatically Generated Prompts (Oct 2020)

Language Models are Few-Shot Learners (May 2020)

How Can We Know What Language Models Know? (July 2020)

Scaling Laws for Neural Language Models (Jan 2020)

Applications

PaLM 2 Technical Report (May 2023)

BloombergGPT: A Large Language Model for Finance (March 2023)

Medical Intervention Duration Estimation Using Language-enhanced Transformer Encoder with Medical Prompts (March 2023)

Soft-prompt tuning to predict lung cancer using primary care free-text Dutch medical notes (March 2023)

TaskMatrix.AI: Completing Tasks by Connecting Foundation Models with Millions of APIs (March 2023)

Larger Probes Tell a Different Story: Extending Psycholinguistic Datasets Via In-Context Learning (March 2023)

Linguistically Informed ChatGPT Prompts to Enhance Japanese-Chinese Machine Translation: A Case Study on Attributive Clauses (March 2023)

Knowledge-augmented Frame Semantic Parsing with Hybrid Prompt-tuning (March 2023)

Debiasing Scores and Prompts of 2D Diffusion for Robust Text-to-3D Generation (March 2023)

Zero-shot Model Diagnosis (March 2023)

Prompting Large Language Models to Generate Code-Mixed Texts: The Case of South East Asian Languages (March 2023)

SPeC: A Soft Prompt-Based Calibration on Mitigating Performance Variability in Clinical Notes Summarization (March 2023)

Large Language Models and Simple, Stupid Bugs (March 2023)

Can Generative Pre-trained Transformers (GPT) Pass Assessments in Higher Education Programming Courses? (Mar 2023)

SelfCheckGPT: Zero-Resource Black-Box Hallucination Detection for Generative Large Language Models (Mar 2023)

ICL-D3IE: In-Context Learning with Diverse Demonstrations Updating for Document Information Extraction (March 2023)

MathPrompter: Mathematical Reasoning using Large Language Models (March 2023)

Prompt-Based Learning for Thread Structure Prediction in Cybersecurity Forums (March 2023)

Choice Over Control: How Users Write with Large Language Models using Diegetic and Non-Diegetic Prompting (March 2023)

Prompting Large Language Models with Answer Heuristics for Knowledge-based Visual Question Answering (March 2023)

Soft Prompt Guided Joint Learning for Cross-Domain Sentiment Analysis (March 2023)

SpeechPrompt v2: Prompt Tuning for Speech Classification Tasks (March 2023)

Goal Driven Discovery of Distributional Differences via Language Descriptions (Feb 2023)

Navigating the Grey Area: Expressions of Overconfidence and Uncertainty in Language Models (Feb 2023)

TabGenie: A Toolkit for Table-to-Text Generation (Feb 2023)

SGL-PT: A Strong Graph Learner with Graph Prompt Tuning (Feb 2023)

Few-Shot Table-to-Text Generation with Prompt-based Adapter (Feb 2023)

Language Models Are Few-shot Learners for Prognostic Prediction (Feb 2023)

STA: Self-controlled Text Augmentation for Improving Text Classifications (Feb 2023)

Check Your Facts and Try Again: Improving Large Language Models with External Knowledge and Automated Feedback (Feb 2023)

How Generative AI models such as ChatGPT can be (Mis)Used in SPC Practice, Education, and Research? An Exploratory Study (Feb 2023)

Grimm in Wonderland: Prompt Engineering with Midjourney to Illustrate Fairytales (Feb 2023)

LabelPrompt: Effective Prompt-based Learning for Relation Classification (Feb 2023)

Language Model Crossover: Variation through Few-Shot Prompting (Feb 2023)

Prompt Tuning of Deep Neural Networks for Speaker-adaptive Visual Speech Recognition (Feb 2023)

The Capacity for Moral Self-Correction in Large Language Models (Feb 2023)

Prompting for Multimodal Hateful Meme Classification (Feb 2023)

PLACES: Prompting Language Models for Social Conversation Synthesis (Feb 2023)

Commonsense-Aware Prompting for Controllable Empathetic Dialogue Generation (Feb 2023)

Crawling the Internal Knowledge-Base of Language Models (Jan 2023)

Legal Prompt Engineering for Multilingual Legal Judgement Prediction (Dec 2022)

Investigating Prompt Engineering in Diffusion Models (Nov 2022)

Learn to Explain: Multimodal Reasoning via Thought Chains for Science Question Answering (Sep 2022)

Conversing with Copilot: Exploring Prompt Engineering for Solving CS1 Problems Using Natural Language (Oct 2022)

Piloting Copilot and Codex: Hot Temperature, Cold Prompts, or Black Magic? (Oct 2022)

Plot Writing From Scratch Pre-Trained Language Models (July 2022)

Survey of Hallucination in Natural Language Generation (Feb 2022)

Paper Collections

Chain-of-Thought Papers

Papers with Code

Prompt Papers

References

[1] Prompt Engineering, https://lilianweng.github.io/posts/2023-03-15-prompt-engineering/#chain-of-thought-cot

[2] Prompt Engineering Guide, https://www.promptingguide.ai/zh

[3] OpenAI Playground, https://platform.openai.com/playground

[4] Chain-of-Thought Prompting Elicits Reasoning in Large Language Models, arXiv:2201.11903

[5] Large Language Models Are Human-Level Prompt Engineers, arXiv:2211.01910

[6] Automatic Prompt Augmentation and Selection with Chain-of-Thought from Labeled Data
