
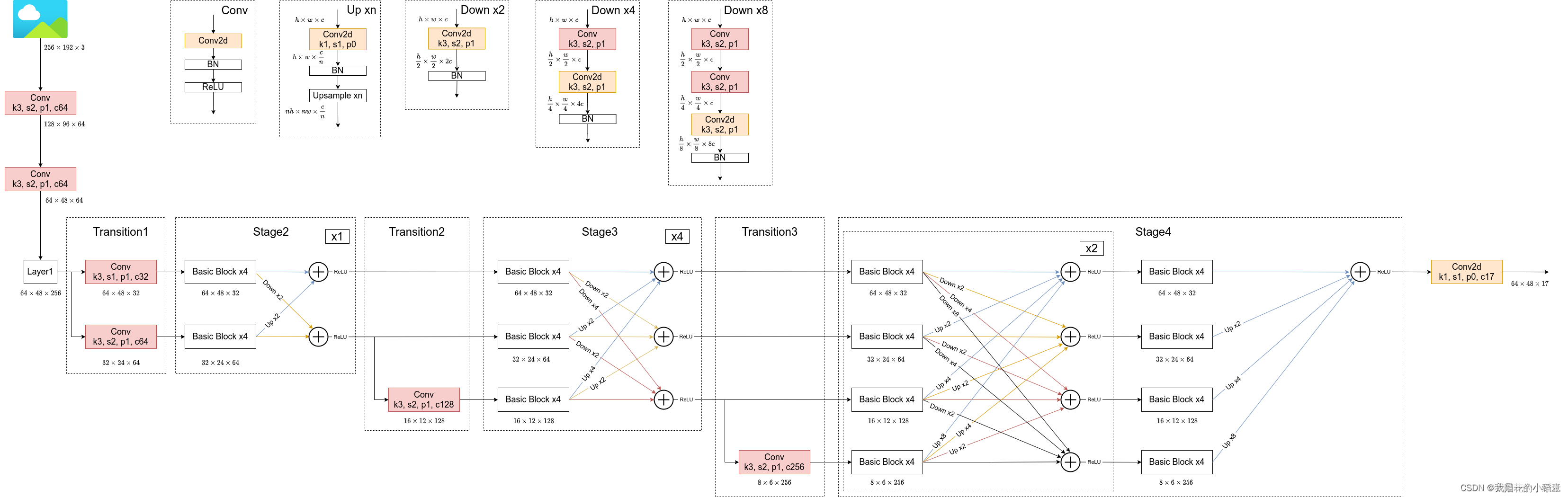

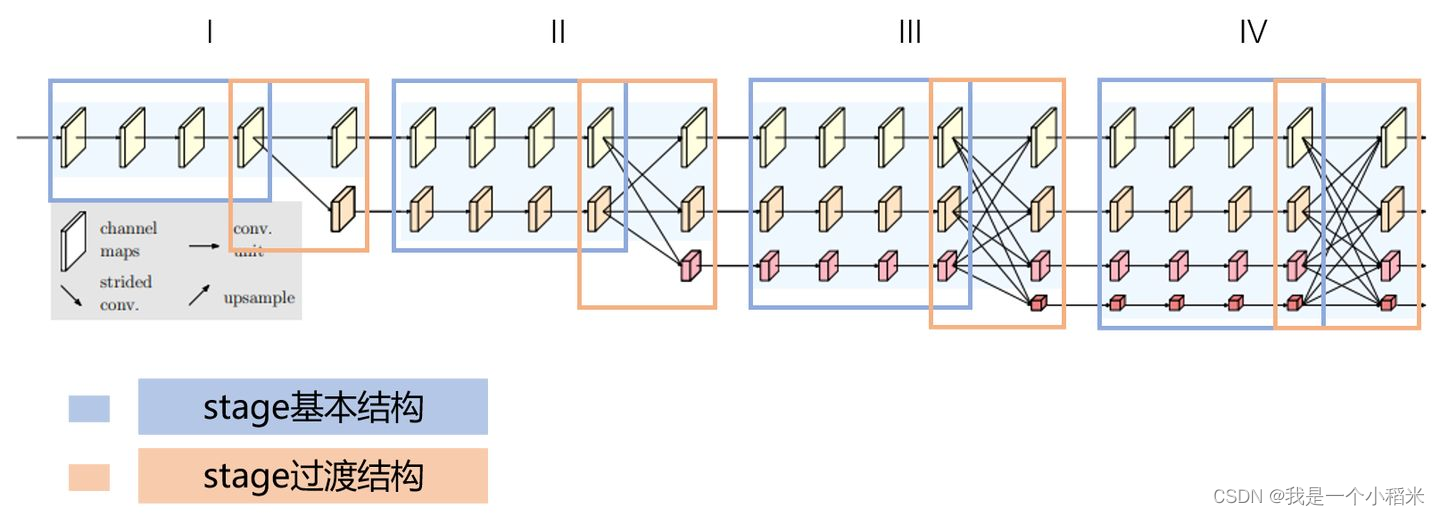

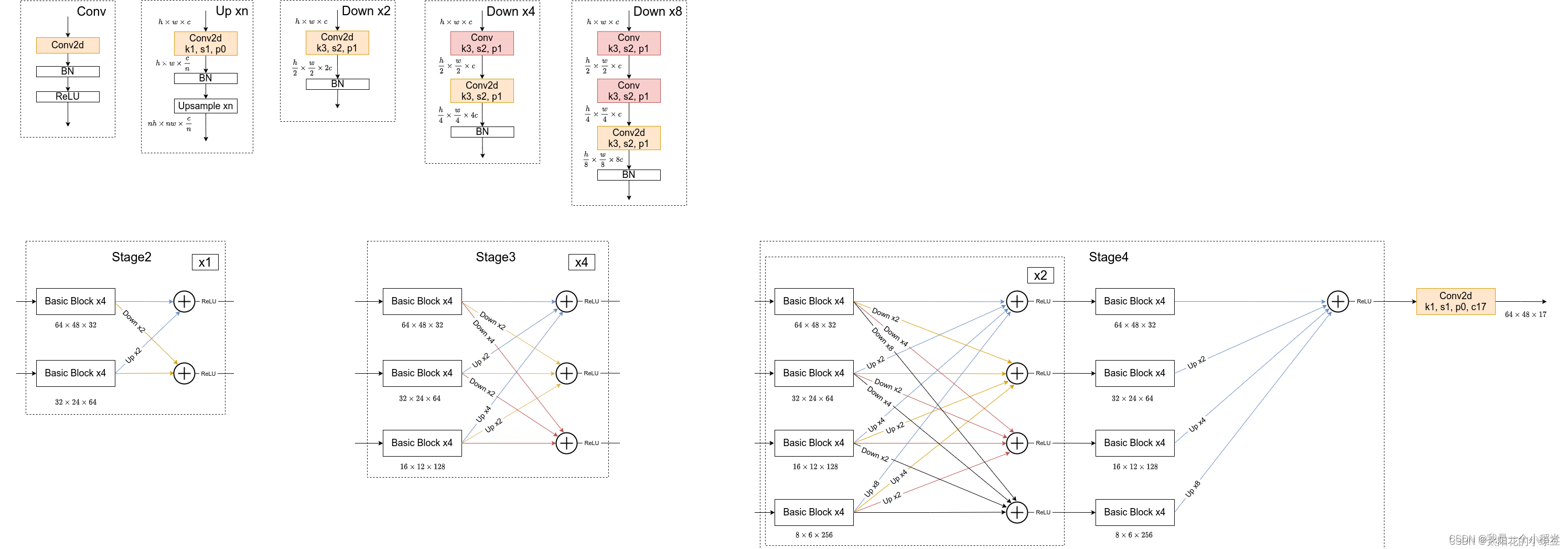

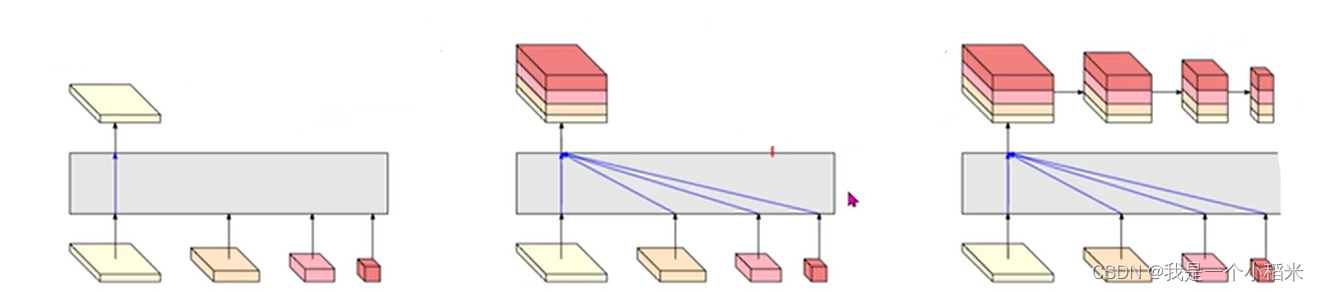

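Figure 1:

Figure 2: (the network implemented in this article follows Figure 2; Figure 1 serves as a reference for the channel counts)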

import paddle
from paddle import nn
from paddle.nn import initializer
from paddle.nn import functional as F
import numpy as np

Parameters

# Momentum parameter for BatchNorm2D, used during normalization
BN_MOMENTUM = 0.2
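
To make the meaning of this value concrete: Paddle's `BatchNorm2D` documentation gives the running-statistics update as `moving_mean = moving_mean * momentum + batch_mean * (1 - momentum)`, so a small momentum puts most of the weight on the current batch. The sketch below replays that update in plain Python. The helper name `update_running_mean` and the batch values are made up for illustration and are not part of Paddle.

```python
def update_running_mean(running_mean, batch_mean, momentum):
    """One running-mean update step, following the formula in Paddle's BatchNorm docs."""
    return running_mean * momentum + batch_mean * (1.0 - momentum)


BN_MOMENTUM = 0.2

# Hypothetical batch means, only to show how quickly the running value follows them.
running = 0.0
for batch_mean in [10.0, 10.0, 10.0]:
    running = update_running_mean(running, batch_mean, BN_MOMENTUM)
    print(round(running, 4))
```

With a momentum of 0.2, each step keeps only 20% of the previous running value, so the running mean tracks recent batches closely.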

Places where no operation is needed

# Placeholder
# f(x) = x
class PlaceHolder(nn.Layer):
    def __init__(self):
        super(PlaceHolder, self).__init__()

    def forward(self, inputs):
        return inputs

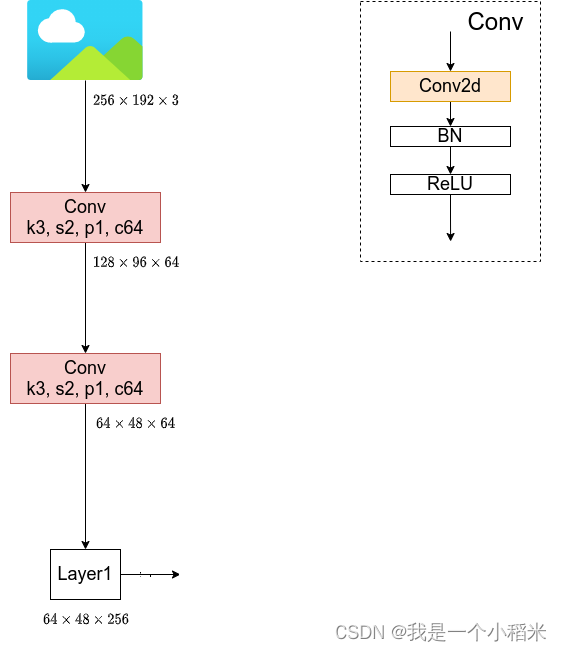

1. Input layer

The corresponding structure is shown in the figure:

A general-purpose 3×3 convolution block (convolution, BN, ReLU)

class HRNetConv3_3(nn.Layer):
    def __init__(self, inchannels, outchannels, stride=1, padding=0):
        super(HRNetConv3_3, self).__init__()
        self.conv = nn.Conv2D(inchannels, outchannels, kernel_size=3, stride=stride, padding=padding)
        # BN's input channel count equals the convolution's output channel count
        self.bn = nn.BatchNorm2D(outchannels, momentum=BN_MOMENTUM)
        self.relu = nn.ReLU()

    # Forward pass
    def forward(self, inputs):
        x = self.conv(inputs)
        x = self.bn(x)
        x = self.relu(x)
        return x

Convolutions applied to the input image

class HRNetStem(nn.Layer):
    def __init__(self, inchannels, outchannels):
        super(HRNetStem, self).__init__()
        # Each convolution block halves the resolution (width and height) of the image.
        # Shape comments are written as (H, W, C).
        self.conv1 = HRNetConv3_3(inchannels, outchannels, stride=2, padding=1)   # (128, 96, 64)
        self.conv2 = HRNetConv3_3(outchannels, outchannels, stride=2, padding=1)  # (64, 48, 64)

    def forward(self, inputs):
        # input: (256, 192, 3)
        x = self.conv1(inputs)  # (128, 96, 64)
        x = self.conv2(x)       # (64, 48, 64)
        return x
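
The shape comments in the stem follow from the usual convolution output-size formula, `out = floor((in + 2 * padding - kernel) / stride) + 1`. As a minimal sketch (plain Python, no Paddle; the helper names `conv_out_size` and `stem_shapes` are illustrative and not part of the original code), the two stride-2 convolutions can be checked like this:

```python
def conv_out_size(size, kernel_size, stride, padding):
    """Spatial output size of a convolution with dilation 1, using floor division."""
    return (size + 2 * padding - kernel_size) // stride + 1


def stem_shapes(height, width, out_channels=64):
    """Apply the stem's two 3x3, stride-2, padding-1 convolutions to (height, width)."""
    shapes = []
    for _ in range(2):
        height = conv_out_size(height, kernel_size=3, stride=2, padding=1)
        width = conv_out_size(width, kernel_size=3, stride=2, padding=1)
        shapes.append((height, width, out_channels))
    return shapes


print(stem_shapes(256, 192))  # [(128, 96, 64), (64, 48, 64)]
```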

Network input layer

class HRNetInput(nn.Layer):
    def __init__(self, inchannels, outchannels, stage1_inchannels):
        super(HRNetInput, self).__init__()
        self.stem = HRNetStem(inchannels, outchannels)
        # 1x1 convolution that maps the stem output to the channel count expected by stage 1
        self.in_change_conv = nn.Conv2D(outchannels, stage1_inchannels, kernel_size=1, stride=1, bias_attr=False)
        self.in_change_bn = nn.BatchNorm2D(stage1_inchannels, momentum=BN_MOMENTUM)
        self.relu = nn.ReLU()

    def forward(self, inputs):
        x = self.stem(inputs)
        x = self.in_change_conv(x)
        x = self.in_change_bn(x)
        x = self.relu(x)
        return x

2. Stage layer implementation

Plain block: two convolutions

class NormalBlock(nn.Layer):
    def __init__(self, inchannels, outchannels):
        super(NormalBlock, self).__init__()
        self.conv1 = HRNetConv3_3(inchannels=inchannels, outchannels=outchannels, stride=1, padding=1)
        self.conv2 = HRNetConv3_3(inchannels=outchannels, outchannels=outchannels, stride=1, padding=1)

    def forward(self, inputs):
        x = self.conv1(inputs)
        x = self.conv2(x)
        return x

Residual block

class ResidualBlock(nn.Layer):
    def __init__(self, inchannels, outchannels):
        super(ResidualBlock, self).__init__()
        self.conv1 = HRNetConv3_3(inchannels=inchannels, outchannels=outchannels, stride=1, padding=1)
        self.conv2 = nn.Conv2D(outchannels, outchannels, kernel_size=3, stride=1, padding=1)
        self.bn2 = nn.BatchNorm2D(outchannels, momentum=BN_MOMENTUM)
        self.relu = nn.ReLU()

    def forward(self, inputs):
        residual = inputs
        x = self.conv1(inputs)
        x = self.conv2(x)
        x = self.bn2(x)
        # Residual connection: element-wise addition, so inchannels must equal outchannels
        x = x + residual
        x = self.relu(x)
        return x

Structure of branch_layer:

Structure of tostage_layers:

The input is a list of branches; after processing, a list with the same number of branches is returned.

class HRNetStage(nn.Layer):
    def __init__(self, stage_channels, block):
        super(HRNetStage, self).__init__()
        # Channel count of each parallel branch in this stage
        self.stage_channels = stage_channels
        # Number of parallel branches
        self.stage_branch_num = len(stage_channels)
        self.block = block
        # Number of blocks chained in series within each branch
        self.block_num = 4
        self.stage_layers = self.create_stage_layers()

    # The input is a list of branches; each branch goes through its own layers
    def forward(self, inputs):
        outs = []
        for i in range(len(inputs)):
            x = inputs[i]
            out = self.stage_layers[i](x)
            outs.append(out)
        return outs

    def create_stage_layers(self):
        # Holds the parallel structure (one entry per branch)
        tostage_layers = []
        # For each branch, build its chain of blocks
        for i in range(self.stage_branch_num):
            # Holds the serial structure of this branch
            branch_layer = []
            for j in range(self.block_num):
                # Blocks within a stage change neither the channel count nor the resolution
                branch_layer.append(self.block(self.stage_channels[i], self.stage_channels[i]))
            # Wrap the blocks in nn.Sequential instead of keeping a plain Python list
            branch_layer = nn.Sequential(*branch_layer)
            tostage_layers.append(branch_layer)
        # The collection of branch layers for the current stage
        return nn.LayerList(tostage_layers)
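
The nesting of `branch_layer` inside `tostage_layers` can be pictured with plain Python lists. This is only a sketch of the structure, with each block represented by its `(in_channels, out_channels)` pair; the helper name `stage_plan` is hypothetical and does not appear in the original code.

```python
def stage_plan(stage_channels, block_num=4):
    """Return, per branch, the (in_channels, out_channels) pair of each chained block."""
    plan = []
    for channels in stage_channels:
        # One branch: block_num blocks in series, all keeping the same channel count.
        branch = [(channels, channels) for _ in range(block_num)]
        plan.append(branch)
    return plan


# Outer list = parallel branches, inner list = blocks chained within one branch.
for index, branch in enumerate(stage_plan([32, 64])):
    print(index, branch)
```

The outer list plays the role of the `nn.LayerList` and each inner list the role of one `nn.Sequential`.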

3. Trans layer implementation

class HRNetTrans(nn.Layer):
    def __init__(self, old_branch_channels, new_branch_channels):
        super(HRNetTrans, self).__init__()
        # e.g. [32, 64]
        self.old_branch_channels = old_branch_channels
        # e.g. [32, 64, 128]
        self.new_branch_channels = new_branch_channels
        # e.g. 2
        self.old_branch_num = len(old_branch_channels)
        # e.g. 3
        self.new_branch_num = len(new_branch_channels)
        # Build the layers that add one new branch and fuse across resolutions
        self.trans_layers = self.create_new_branch_trans_layers()

    # Forward pass
    def forward(self, inputs):
        outs = []
        for i in range(self.old_branch_num):
            x = inputs[i]
            out = []
            # Map old branch i to every new branch j
            for j in range(self.new_branch_num):
                y = self.trans_layers[i][j](x)
                out.append(y)
            if len(outs) == 0:
                outs = out
            else:
                # Sum the contributions of all old branches, one new branch at a time.
                # The add is out-of-place so tensors passed through PlaceHolder are left untouched.
                for j in range(self.new_branch_num):
                    outs[j] = outs[j] + out[j]
        return outs

    def create_new_branch_trans_layers(self):
        # Holds the transition layers of all branches
        totrans_layers = []
        # Iterate over the old branches
        for i in range(self.old_branch_num):
            # Holds the layers from old branch i to each new branch
            branch_trans = []
            # Iterate over the new branches
            for j in range(self.new_branch_num):
                layer = []
                inchannels = self.old_branch_channels[i]
                # If the old and new branch ids are the same, no operation is applied
                if i == j:
                    layer.append(PlaceHolder())
                # If i is greater than j, upsample; the difference i - j is the number of upsampling steps
                elif j < i:
                    for k in range(i - j):
                        # 1x1 convolution to change the channel count
                        layer.append(nn.Conv2D(in_channels=inchannels, out_channels=self.new_branch_channels[j], kernel_size=1, bias_attr=False))
                        # BN
                        layer.append(nn.BatchNorm2D(self.new_branch_channels[j], momentum=BN_MOMENTUM))
                        layer.append(nn.ReLU())
                        # Upsample by a factor of 2
                        layer.append(nn.Upsample(scale_factor=2.))
                        inchannels = self.new_branch_channels[j]
                # If i is less than j, downsample; the difference j - i is the number of downsampling steps
                else:
                    for k in range(j - i):
                        # 1x1 convolution to change the channel count
                        layer.append(nn.Conv2D(in_channels=inchannels, out_channels=self.new_branch_channels[j], kernel_size=1, bias_attr=False))
                        layer.append(nn.BatchNorm2D(self.new_branch_channels[j], momentum=BN_MOMENTUM))
                        layer.append(nn.ReLU())
                        # 3x3 convolution with stride 2 for downsampling
                        layer.append(nn.Conv2D(in_channels=self.new_branch_channels[j], out_channels=self.new_branch_channels[j], kernel_size=3, stride=2, padding=1, bias_attr=False))
                        # BN
                        layer.append(nn.BatchNorm2D(self.new_branch_channels[j], momentum=BN_MOMENTUM))
                        layer.append(nn.ReLU())
                        inchannels = self.new_branch_channels[j]
                layer = nn.Sequential(*layer)
                branch_trans.append(layer)
            branch_trans = nn.LayerList(branch_trans)
            totrans_layers.append(branch_trans)
        # The return value is a LayerList of LayerLists, indexed as [old branch][new branch]
        return nn.LayerList(totrans_layers)
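
The branching rule in `create_new_branch_trans_layers` can be summarized without building any layers: identity when `i == j`, `i - j` upsampling steps when `j < i`, and `j - i` downsampling steps otherwise. The following is a rough sketch of that rule only. The function names `trans_ops` and `trans_table` and the operation labels are illustrative, not Paddle identifiers.

```python
def trans_ops(i, j):
    """Name the operations applied when old branch i feeds new branch j."""
    if i == j:
        return ["identity"]
    if j < i:
        # A higher branch index means lower resolution, so moving to a lower index upsamples.
        return ["conv1x1", "bn", "relu", "upsample_x2"] * (i - j)
    # Moving to a higher index downsamples with a stride-2 3x3 convolution per step.
    return ["conv1x1", "bn", "relu", "conv3x3_s2", "bn", "relu"] * (j - i)


def trans_table(old_branch_num, new_branch_num):
    """Map every (old branch, new branch) pair to its list of operations."""
    return {
        (i, j): trans_ops(i, j)
        for i in range(old_branch_num)
        for j in range(new_branch_num)
    }


# Transition from 2 branches to 3 branches, as in HRNetTrans([32, 64], [32, 64, 128]).
for pair, ops in trans_table(2, 3).items():
    print(pair, ops)
```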

4. Fuse layer implementation

Three fusion modes

# Shapes of the four branches entering the fusion layer for a (1, 3, 256, 192) input:
# [1, 32, 64, 48]
# [1, 64, 32, 24]
# [1, 128, 16, 12]
# [1, 256, 8, 6]
Fusion_mode = ['keep', 'fuse', 'multi']

class HRNetFusion(nn.Layer):
    def __init__(self, stage4_channels, mode='keep'):
        super(HRNetFusion, self).__init__()
        self.stage4_channels = stage4_channels
        self.mode = mode
        # Build the fusion layers according to the mode
        self.fuse_layer = self.create_fuse_layers()

    def forward(self, inputs):
        x1, x2, x3, x4 = inputs
        outs = []
        if self.mode == Fusion_mode[0]:
            # 'keep': return only the highest-resolution branch
            out = self.fuse_layer(x1)
            outs.append(out)
        elif self.mode == Fusion_mode[1]:
            # 'fuse': bring every branch to the first branch's resolution and sum them
            out = self.fuse_layer[0](x1)
            out = out + self.fuse_layer[1](x2)
            out = out + self.fuse_layer[2](x3)
            out = out + self.fuse_layer[3](x4)
            outs.append(out)
        elif self.mode == Fusion_mode[2]:
            # 'multi': fuse as above, then downsample repeatedly to get multi-scale outputs
            out1 = self.fuse_layer[0][0](x1)
            out1 = out1 + self.fuse_layer[0][1](x2)
            out1 = out1 + self.fuse_layer[0][2](x3)
            out1 = out1 + self.fuse_layer[0][3](x4)
            outs.append(out1)
            out2 = self.fuse_layer[1](out1)
            outs.append(out2)
            out3 = self.fuse_layer[2](out2)
            outs.append(out3)
            out4 = self.fuse_layer[3](out3)
            outs.append(out4)
        return outs

    def create_fuse_layers(self):
        layer = None
        if self.mode == 'keep':
            layer = self.create_keep_fusion_layers()
        elif self.mode == 'fuse':
            layer = self.create_fuse_fusion_layers()
        elif self.mode == 'multi':
            layer = self.create_multi_fusion_layers()
        return layer

    # First fusion mode: output the first branch directly
    def create_keep_fusion_layers(self):
        self.outchannels = self.stage4_channels[0]
        return PlaceHolder()

    # Second fusion mode: aggregated output
    def create_fuse_fusion_layers(self):
        layers = []
        # Convert every branch to the channel count of the last branch
        outchannels = self.stage4_channels[3]
        for i in range(len(self.stage4_channels)):
            inchannel = self.stage4_channels[i]
            layer = []
            # The last branch already has outchannels channels, so it needs no 1x1 convolution
            if i != len(self.stage4_channels) - 1:
                layer.append(nn.Conv2D(in_channels=inchannel, out_channels=outchannels, kernel_size=1, bias_attr=False))
                layer.append(nn.BatchNorm2D(outchannels, momentum=BN_MOMENTUM))
                layer.append(nn.ReLU())
            # Branch i is upsampled i times so its resolution matches the first branch
            for j in range(i):
                layer.append(nn.Upsample(scale_factor=2.))
            layer = nn.Sequential(*layer)
            layers.append(layer)
        self.outchannels = outchannels
        return nn.LayerList(layers)

    # Third fusion mode: aggregated multi-scale output
    def create_multi_fusion_layers(self):
        multi_fuse_layers = []
        layers = []
        # Convert every branch to the channel count of the last branch
        outchannels = self.stage4_channels[3]
        for i in range(len(self.stage4_channels)):
            inchannel = self.stage4_channels[i]
            layer = []
            # The last branch already has outchannels channels, so it needs no 1x1 convolution
            if i != len(self.stage4_channels) - 1:
                layer.append(nn.Conv2D(in_channels=inchannel, out_channels=outchannels, kernel_size=1, bias_attr=False))
                layer.append(nn.BatchNorm2D(outchannels, momentum=BN_MOMENTUM))
                layer.append(nn.ReLU())
            # Branch i is upsampled i times so its resolution matches the first branch
            for j in range(i):
                layer.append(nn.Upsample(scale_factor=2.))
            layer = nn.Sequential(*layer)
            layers.append(layer)
        # Entry 0: the per-branch layers whose outputs are summed into the fused map
        multi_fuse_layers.append(nn.LayerList(layers))
        # Entries 1 to 3: downsampling layers, each halving the resolution of the previous output
        for _ in range(len(self.stage4_channels) - 1):
            multi_fuse_layers.append(
                nn.Sequential(
                    nn.Conv2D(outchannels, outchannels, kernel_size=3, stride=2, padding=1, bias_attr=False),
                    nn.BatchNorm2D(outchannels, momentum=BN_MOMENTUM),
                    nn.ReLU()
                )
            )
        self.outchannels = outchannels
        return nn.LayerList(multi_fuse_layers)
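
To compare the three modes at a glance, the output shapes can be predicted from the branch shapes alone. The sketch below mirrors the logic of `HRNetFusion` in plain Python under the assumption that each branch is half the resolution of the previous one. The names `FUSION_MODES`, `halve`, and `fusion_output_shapes` are made up for this illustration.

```python
FUSION_MODES = ("keep", "fuse", "multi")


def halve(size):
    """Output size of a 3x3 convolution with stride 2 and padding 1."""
    return (size + 2 * 1 - 3) // 2 + 1


def fusion_output_shapes(branch_shapes, mode):
    """Predict output shapes from (channels, height, width) tuples, highest resolution first."""
    if mode not in FUSION_MODES:
        raise ValueError(f"unknown fusion mode: {mode}")
    first_channels, height, width = branch_shapes[0]
    if mode == "keep":
        # Only the first (highest-resolution) branch is returned, unchanged.
        return [(first_channels, height, width)]
    # Branch i is upsampled i times by a factor of 2, so it must line up with the first branch.
    for i, (_, h, w) in enumerate(branch_shapes):
        if (h * 2 ** i, w * 2 ** i) != (height, width):
            raise ValueError(f"branch {i} does not align with the first branch")
    # All branches are converted to the channel count of the last branch, then summed.
    out_channels = branch_shapes[-1][0]
    fused = (out_channels, height, width)
    if mode == "fuse":
        return [fused]
    # 'multi': the fused map is followed by successive stride-2 downsamplings.
    shapes = [fused]
    for _ in range(len(branch_shapes) - 1):
        _, h, w = shapes[-1]
        shapes.append((out_channels, halve(h), halve(w)))
    return shapes


branches = [(32, 64, 48), (64, 32, 24), (128, 16, 12), (256, 8, 6)]
for mode in FUSION_MODES:
    print(mode, fusion_output_shapes(branches, mode))
```

In this sketch `keep` yields one 32-channel map, `fuse` one 256-channel map at the highest resolution, and `multi` four 256-channel maps at successively halved resolutions.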

5. Checking the network's input and output

class TestNet(nn.Layer):
    def __init__(self):
        super(TestNet, self).__init__()
        self.input = HRNetInput(3, outchannels=64, stage1_inchannels=32)
        self.stage1 = HRNetStage([32], NormalBlock)
        self.trans1 = HRNetTrans([32], [32, 64])
        self.stage2 = HRNetStage([32, 64], NormalBlock)
        self.trans2 = HRNetTrans([32, 64], [32, 64, 128])
        self.stage3 = HRNetStage([32, 64, 128], NormalBlock)
        self.trans3 = HRNetTrans([32, 64, 128], [32, 64, 128, 256])
        self.stage4 = HRNetStage([32, 64, 128, 256], NormalBlock)
        self.fuse_layer = HRNetFusion([32, 64, 128, 256], 'multi')

    def forward(self, inputs):
        x = self.input(inputs)
        # Stages and transitions operate on a list of branches
        x = [x]
        x = self.stage1(x)
        x = self.trans1(x)
        x = self.stage2(x)
        x = self.trans2(x)
        x = self.stage3(x)
        x = self.trans3(x)
        x = self.stage4(x)
        x = self.fuse_layer(x)
        return x

if __name__ == '__main__':
    model = TestNet()
    # Random image batch in NCHW layout: (batch, channels, height, width)
    data = np.random.randint(0, 256, (1, 3, 256, 192)).astype(np.float32)
    data = paddle.to_tensor(data)
    y = model(data)
    for i in range(len(y)):
        print(y[i].shape)
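
As a cross-check that needs no Paddle installation, the shapes of the four branches before fusion can be derived by hand: the stem halves the resolution twice, and each additional branch halves it once more. The sketch below does that arithmetic. `halve` and `branch_shapes` are hypothetical helpers, not part of the network code.

```python
def halve(size):
    """Output size of a 3x3 convolution with stride 2 and padding 1."""
    return (size + 2 * 1 - 3) // 2 + 1


def branch_shapes(height, width, branch_channels, batch=1):
    """Shapes [N, C, H, W] of the parallel branches for a given input resolution."""
    # The stem applies two stride-2 convolutions before the first branch.
    height, width = halve(halve(height)), halve(halve(width))
    shapes = []
    for channels in branch_channels:
        shapes.append([batch, channels, height, width])
        # Each new branch runs at half the resolution of the previous one.
        height, width = halve(height), halve(width)
    return shapes


for shape in branch_shapes(256, 192, [32, 64, 128, 256]):
    print(shape)
```

For a 256×192 input this reproduces the four branch shapes listed in the comments of the fusion section.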

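Summary:

- For classification tasks, use the last feature map.

- For tasks that are more sensitive to spatial position, use the high-resolution feature map.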