Canal GitHub: https://github.com/alibaba/canal#readme

canal is a real-time change-capture tool. It builds on MySQL master-slave replication: the slave reads the master's binary log, and the log is then parsed.

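How canal works

canal emulates the MySQL slave protocol: it presents itself as a MySQL slave and sends a dump request to the MySQL master.

The MySQL master receives the dump request and starts pushing the binary log to the slave (that is, canal).

canal parses the binary log objects (originally a byte stream).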
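To make "originally a byte stream" concrete, here is a minimal sketch that decodes the fixed 19-byte header at the start of each MySQL binlog event (v4 format, little-endian). It is not canal's parser. The class name `BinlogHeaderSketch` and its field names are made up for illustration.

```java
import java.nio.ByteBuffer;
import java.nio.ByteOrder;

/** Illustrative only: decodes the 19-byte common header of a MySQL v4 binlog event. */
class BinlogHeaderSketch {

    static final int HEADER_LENGTH = 19;

    final long timestamp;    // seconds since the Unix epoch
    final int eventType;     // one-byte event type code
    final long serverId;     // server-id of the MySQL instance that wrote the event
    final long eventLength;  // header plus body, in bytes
    final long nextPosition; // offset of the next event in the binlog file
    final int flags;

    BinlogHeaderSketch(long timestamp, int eventType, long serverId,
                       long eventLength, long nextPosition, int flags) {
        this.timestamp = timestamp;
        this.eventType = eventType;
        this.serverId = serverId;
        this.eventLength = eventLength;
        this.nextPosition = nextPosition;
        this.flags = flags;
    }

    /** Reads the header fields in order; all integers are unsigned little-endian. */
    static BinlogHeaderSketch parse(byte[] raw) {
        if (raw.length < HEADER_LENGTH) {
            throw new IllegalArgumentException("need at least " + HEADER_LENGTH + " bytes");
        }
        ByteBuffer buf = ByteBuffer.wrap(raw).order(ByteOrder.LITTLE_ENDIAN);
        long timestamp = buf.getInt() & 0xFFFFFFFFL;
        int eventType = buf.get() & 0xFF;
        long serverId = buf.getInt() & 0xFFFFFFFFL;
        long eventLength = buf.getInt() & 0xFFFFFFFFL;
        long nextPosition = buf.getInt() & 0xFFFFFFFFL;
        int flags = buf.getShort() & 0xFFFF;
        return new BinlogHeaderSketch(timestamp, eventType, serverId,
                eventLength, nextPosition, flags);
    }
}
```

A real client such as canal goes on to decode the event body (table map, row images) according to the event type. This sketch stops at the header.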

Official setup guide: https://github.com/alibaba/canal/wiki/QuickStart

1. Enable the binlog in MySQL

Configure on the MySQL host (these options belong in the [mysqld] section)

linux>vi /etc/my.cnf

server-id=1

log-bin=mysql-bin

binlog_format=row

binlog-do-db=testdb # only log changes for this database
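A quick way to double-check these settings before restarting is to parse the option file and look for the three options canal depends on. The sketch below is an assumption-laden helper, not part of canal or MySQL. The class `BinlogConfigCheck` and its method names are hypothetical, and it only understands simple `key=value` lines.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

/** Hypothetical helper: checks my.cnf text for the binlog options used in this guide. */
class BinlogConfigCheck {

    /** Parses key=value lines; skips blanks, [section] headers and # or ; comments. */
    static Map<String, String> parse(String text) {
        Map<String, String> options = new HashMap<>();
        for (String rawLine : text.split("\\R")) {
            String line = rawLine;
            int hash = line.indexOf('#');
            if (hash >= 0) {
                line = line.substring(0, hash); // drop trailing comment
            }
            line = line.trim();
            if (line.isEmpty() || line.startsWith("[") || line.startsWith(";")) {
                continue;
            }
            int eq = line.indexOf('=');
            String key = (eq < 0 ? line : line.substring(0, eq)).trim();
            String value = eq < 0 ? "" : line.substring(eq + 1).trim();
            // MySQL accepts '-' and '_' interchangeably in option names
            options.put(key.replace('-', '_'), value);
        }
        return options;
    }

    /** Returns a human-readable list of missing or wrong settings (empty when all is well). */
    static List<String> problems(Map<String, String> options) {
        List<String> problems = new ArrayList<>();
        if (!options.containsKey("server_id")) {
            problems.add("server-id is not set");
        }
        if (!options.containsKey("log_bin")) {
            problems.add("log-bin is not set");
        }
        if (!"row".equalsIgnoreCase(options.get("binlog_format"))) {
            problems.add("binlog_format must be row");
        }
        return problems;
    }
}
```

Calling `BinlogConfigCheck.problems(BinlogConfigCheck.parse(text))` on the contents of /etc/my.cnf returns an empty list when all three options are present.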

2. Restart the MySQL service

linux>systemctl restart mysqld

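3. Check that the binlog is active (files such as mysql-bin.000001 and mysql-bin.index should appear in the data directory):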

linux>ls /var/lib/mysql

4. Extract the canal archive (the target directory canal must already exist)

linux>tar -zxvf canal-* -C canal

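5. Database setup

Log in to MySQL and create the canal account. The GRANT ... IDENTIFIED BY form below works on MySQL 5.7 and earlier; MySQL 8.0 requires a separate CREATE USER first.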

mysql>GRANT SELECT, REPLICATION SLAVE, REPLICATION CLIENT ON *.* TO 'canal'@'%' IDENTIFIED BY 'canal';

mysql>flush privileges;

6. Configuration files

1. Edit canal.properties

linux>vi conf/canal.properties

canal.instance.parser.parallelThreadSize = 1

2. Edit instance.properties (slaveId must differ from the server-id of every MySQL instance)

linux>vi conf/example/instance.properties

canal.instance.mysql.slaveId=21

canal.instance.master.address=192.168.58.203:3306
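The two instance settings can be sanity-checked with plain `java.util.Properties`. This is only a sketch: `InstanceConfigCheck` and its methods are hypothetical names, and the check simply encodes the rule that `canal.instance.mysql.slaveId` must not collide with the MySQL `server-id` (1 in the my.cnf above).

```java
import java.io.IOException;
import java.io.StringReader;
import java.io.UncheckedIOException;
import java.net.InetSocketAddress;
import java.util.Properties;

/** Hypothetical helper: reads the two instance.properties keys set in this guide. */
class InstanceConfigCheck {

    /** Loads properties from text (for a file, use a FileReader instead of a StringReader). */
    static Properties load(String text) {
        Properties props = new Properties();
        try {
            props.load(new StringReader(text));
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return props;
    }

    /** Splits canal.instance.master.address into host and port without resolving DNS. */
    static InetSocketAddress masterAddress(Properties props) {
        String address = props.getProperty("canal.instance.master.address", "").trim();
        int colon = address.lastIndexOf(':');
        if (colon <= 0) {
            throw new IllegalArgumentException("expected host:port, got '" + address + "'");
        }
        String host = address.substring(0, colon);
        int port = Integer.parseInt(address.substring(colon + 1));
        return InetSocketAddress.createUnresolved(host, port);
    }

    /** True when canal's slaveId differs from the given MySQL server-id. */
    static boolean slaveIdIsDistinct(Properties props, long mysqlServerId) {
        String raw = props.getProperty("canal.instance.mysql.slaveId");
        if (raw == null) {
            throw new IllegalArgumentException("canal.instance.mysql.slaveId is not set");
        }
        return Long.parseLong(raw.trim()) != mysqlServerId;
    }
}
```

With the values above, `slaveIdIsDistinct(props, 1)` is true because 21 differs from the master's `server-id=1`.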

7. Start the service and check the process

linux>bin/startup.sh

linux>jps

xxxx CanalLauncher

View the log

linux>cat /opt/install/canal/logs/canal/canal.log

Client in IDEA

pom.xml

<dependency>

<groupId>com.alibaba.otter</groupId>

<artifactId>canal.client</artifactId>

<version>1.1.2</version>

</dependency>

<dependency>

<groupId>org.apache.kafka</groupId>

<artifactId>kafka-clients</artifactId>

<version>2.4.1</version>

</dependency>

import java.net.InetSocketAddress;

import java.util.List;

import com.alibaba.otter.canal.client.CanalConnectors;

import com.alibaba.otter.canal.client.CanalConnector;

import com.alibaba.otter.canal.common.utils.AddressUtils;

import com.alibaba.otter.canal.protocol.CanalEntry;

import com.alibaba.otter.canal.protocol.Message;

import com.alibaba.otter.canal.protocol.CanalEntry.Column;

import com.alibaba.otter.canal.protocol.CanalEntry.Entry;

import com.alibaba.otter.canal.protocol.CanalEntry.EntryType;

import com.alibaba.otter.canal.protocol.CanalEntry.EventType;

import com.alibaba.otter.canal.protocol.CanalEntry.RowChange;

import com.alibaba.otter.canal.protocol.CanalEntry.RowData;

public class CanalClientDemo {

public static void main(String[] args) {

// create the connection (host and port of the canal server)

CanalConnector connector = CanalConnectors.newSingleConnector(new InetSocketAddress("192.168.58.203",

11111), "example", "", "");

int batchSize = 1000;

int emptyCount = 0;

try {

// connect, then subscribe to all tables in the testdb database

connector.connect();

connector.subscribe("testdb.*");

connector.rollback();

int totalEmptyCount = 120;

while (emptyCount < totalEmptyCount) {

Message message = connector.getWithoutAck(batchSize); // fetch up to batchSize entries without acknowledging them

long batchId = message.getId();

int size = message.getEntries().size();

if (batchId == -1 || size == 0) {

emptyCount++;

System.out.println("empty count : " + emptyCount);

try {

Thread.sleep(1000);

} catch (InterruptedException e) {

// interrupted while idle; ignore and poll again

}

} else {

emptyCount = 0;

// System.out.printf("message[batchId=%s,size=%s] \n", batchId, size);

printEntry(message.getEntries());

}

connector.ack(batchId); // acknowledge the batch

// connector.rollback(batchId); // on processing failure, roll back the batch

}

System.out.println("empty too many times, exit");

} finally {

connector.disconnect();

}

}

private static void printEntry(List<CanalEntry.Entry> entrys) {

for (Entry entry : entrys) {

if (entry.getEntryType() == EntryType.TRANSACTIONBEGIN || entry.getEntryType() == EntryType.TRANSACTIONEND) {

continue;

}

RowChange rowChage = null;

try {

rowChage = RowChange.parseFrom(entry.getStoreValue());

} catch (Exception e) {

throw new RuntimeException("ERROR ## parser of eromanga-event has an error , data:" + entry.toString(),

e);

}

EventType eventType = rowChage.getEventType();

System.out.println(String.format("================> binlog[%s:%s] , name[%s,%s] , eventType : %s",

entry.getHeader().getLogfileName(), entry.getHeader().getLogfileOffset(),

entry.getHeader().getSchemaName(), entry.getHeader().getTableName(),

eventType));

for (RowData rowData : rowChage.getRowDatasList()) {

if (eventType == EventType.DELETE) {

printColumn(rowData.getBeforeColumnsList());

} else if (eventType == EventType.INSERT) {

printColumn(rowData.getAfterColumnsList());

} else {

System.out.println("-------> before");

printColumn(rowData.getBeforeColumnsList());

System.out.println("-------> after");

printColumn(rowData.getAfterColumnsList());

}

}

}

}

private static void printColumn(List<Column> columns) {

for (Column column : columns) {

System.out.println(column.getName() + " : " + column.getValue() + " update=" + column.getUpdated());

}

}

}
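The demo acknowledges every batch and leaves the rollback call commented out. One possible way to wire the two together is sketched below: acknowledge only after the handler succeeds, roll back otherwise. To keep the sketch runnable without a canal server, it uses a hypothetical `BatchSource` interface that mirrors the three `CanalConnector` calls used above (`getWithoutAck`, `ack`, `rollback`). `Batch` and `pollOnce` are likewise illustrative names.

```java
import java.util.List;
import java.util.function.Consumer;

/** Sketch of ack-on-success, rollback-on-failure around a single poll. */
class AckOrRollbackSketch {

    /** Hypothetical stand-in for the CanalConnector methods used by the demo. */
    interface BatchSource<T> {
        Batch<T> getWithoutAck(int batchSize);
        void ack(long batchId);
        void rollback(long batchId);
    }

    /** Minimal batch holder: an id (-1 means "no data") plus its entries. */
    static final class Batch<T> {
        final long id;
        final List<T> entries;

        Batch(long id, List<T> entries) {
            this.id = id;
            this.entries = entries;
        }
    }

    /**
     * Polls once. Returns false for an empty batch, true after a successful ack.
     * If the handler throws, the batch is rolled back and the exception is rethrown.
     */
    static <T> boolean pollOnce(BatchSource<T> source, int batchSize,
                                Consumer<List<T>> handler) {
        Batch<T> batch = source.getWithoutAck(batchSize);
        if (batch.id == -1 || batch.entries.isEmpty()) {
            return false; // nothing to process or confirm
        }
        try {
            handler.accept(batch.entries);
            source.ack(batch.id); // confirm only after processing succeeded
            return true;
        } catch (RuntimeException e) {
            source.rollback(batch.id); // the server will redeliver this batch
            throw e;
        }
    }
}
```

In the demo, the handler would be `printEntry`, and a thin adapter around `CanalConnector` would play the role of `BatchSource`.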

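Note: this canal setup runs only on Java 8. If the canal process CanalLauncher does not start, check the local Java environment.

If CanalClientDemo reports that the connection was refused, check that the host passed to new InetSocketAddress(...) in CanalConnectors.newSingleConnector is the machine running canal (192.168.58.203 in this setup).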

Official Canal Kafka setup guide: github.com/alibaba/canal/wiki/Canal-Kafka-RocketMQ-QuickStart

Syncing data to Kafka with canal

# Extract a fresh copy of canal on nodefour and configure it there

1. Edit canal.properties

linux>vi canal.properties

canal.serverMode = kafka

canal.instance.parser.parallel = false

canal.mq.servers = 192.168.58.201:9092,192.168.58.202:9092,192.168.58.203:9092

2. Edit instance.properties

linux>vi instance.properties

canal.instance.mysql.slaveId=21

canal.instance.master.address=192.168.58.203:3306

canal.mq.topic=example

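3. Creating the topic beforehand is optional (Kafka creates it on first write when auto.create.topics.enable is on)

Start a consumer on Kafka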

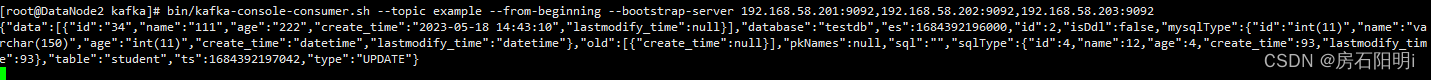

bin/kafka-console-consumer.sh --topic example --from-beginning --bootstrap-server 192.168.58.201:9092,192.168.58.202:9092,192.168.58.203:9092
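For a Java consumer instead of the console tool, the same options map onto standard Kafka client configuration keys. The sketch below only builds the `Properties` object, so it runs without a broker. The class name `ConsumerConfigSketch` and the group id are hypothetical, and the String deserializers assume canal publishes flat JSON messages (`canal.mq.flatMessage = true`).

```java
import java.util.Properties;

/** Sketch: consumer settings equivalent to the console command above. */
class ConsumerConfigSketch {

    static Properties consumerProperties(String bootstrapServers, String groupId) {
        Properties props = new Properties();
        // same broker list as --bootstrap-server
        props.setProperty("bootstrap.servers", bootstrapServers);
        // assumption: any group id not used before; it is not part of the original setup
        props.setProperty("group.id", groupId);
        // for a group without committed offsets this behaves like --from-beginning
        props.setProperty("auto.offset.reset", "earliest");
        // assumption: messages are JSON text, so keys and values are read as Strings
        props.setProperty("key.deserializer",
                "org.apache.kafka.common.serialization.StringDeserializer");
        props.setProperty("value.deserializer",
                "org.apache.kafka.common.serialization.StringDeserializer");
        return props;
    }
}
```

With the kafka-clients dependency from the pom.xml above, these properties can be passed to `new KafkaConsumer<String, String>(props)`, followed by `subscribe(Collections.singletonList("example"))` and a `poll` loop.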

Start canal

linux>bin/startup.sh

Result: