Add a loss function and an optimizer to form a relatively complete network

Loss function

loss = nn.CrossEntropyLoss()  # cross-entropy loss function
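To make the loss less of a black box, here is a minimal pure-Python sketch of what a cross-entropy loss computes from raw scores (logits) and integer class labels: a log-softmax followed by the negative log-probability of the correct class, averaged over the batch. The function name `cross_entropy` and the sample numbers are made up for illustration; this is not PyTorch's implementation, only the same formula with default mean reduction.

```python
import math


def cross_entropy(logits, targets):
    """Mean cross-entropy over a batch of raw scores and integer class labels."""
    total = 0.0
    for scores, label in zip(logits, targets):
        # Numerically stable log-sum-exp: subtract the largest score first.
        m = max(scores)
        log_sum_exp = m + math.log(sum(math.exp(s - m) for s in scores))
        # Negative log-probability assigned to the correct class.
        total += log_sum_exp - scores[label]
    return total / len(targets)


# Hypothetical batch: 2 samples, 3 classes.
logits = [[2.0, 0.5, 0.1], [0.2, 0.2, 0.2]]
targets = [0, 2]
batch_loss = cross_entropy(logits, targets)
print(batch_loss)
```

A sample whose scores are all equal contributes log(number of classes), and the loss shrinks as the score of the correct class rises above the others.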

Optimizer

optim = torch.optim.SGD(tudui.parameters(), lr=0.01)  # stochastic gradient descent; tudui is the model instance (named MyNet in the full code below)
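The update rule behind plain SGD (no momentum, no weight decay) is simply `p <- p - lr * grad` for every parameter. The sketch below applies it in pure Python to a hypothetical one-parameter problem; `sgd_step` and the quadratic objective are illustrative assumptions, and only the learning rate 0.01 is taken from the line above.

```python
def sgd_step(params, grads, lr):
    """One plain SGD update: p <- p - lr * grad, applied to every parameter."""
    return [p - lr * g for p, g in zip(params, grads)]


# Hypothetical problem: minimize f(w) = (w - 3)^2, whose gradient is 2 * (w - 3).
w = [0.0]
for _ in range(1000):
    grad = [2.0 * (w[0] - 3.0)]
    w = sgd_step(w, grad, lr=0.01)

print(w[0])  # approaches the minimizer w = 3
```

With a small learning rate each step moves the parameter only a short distance against the gradient, which is why many iterations are needed.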

Code:

import torchvision
from torch.nn import Conv2d, MaxPool2d, Flatten, Linear, Sequential
from torch.utils.data import DataLoader
import torch.nn as nn
import torch

# Convert each PIL image to a tensor
dataset_transform = torchvision.transforms.Compose([
    torchvision.transforms.ToTensor()
])

# CIFAR10 test split; without download=True the data must already exist under root
test_data = torchvision.datasets.CIFAR10(root="./test10_dataset", train=False, transform=dataset_transform)
test_loader = DataLoader(dataset=test_data, batch_size=128)

class MyNet(nn.Module):
    def __init__(self):
        super(MyNet, self).__init__()
        self.model1 = Sequential(
            Conv2d(in_channels=3, out_channels=32, kernel_size=5, padding=2),
            MaxPool2d(2),
            Conv2d(32, 32, 5, padding=2),
            MaxPool2d(2),
            Conv2d(32, 64, 5, padding=2),
            MaxPool2d(2),
            Flatten(),
            # 64 channels * 4 * 4 = 1024 features after three 2x2 poolings of a 32x32 image
            Linear(1024, 64),
            Linear(64, 10),
        )

    def forward(self, x):
        x = self.model1(x)
        return x

# Cross-entropy loss function
loss = nn.CrossEntropyLoss()

# Note: the instance reuses (and shadows) the class name MyNet
MyNet = MyNet()

# Optimizer
optim = torch.optim.SGD(MyNet.parameters(), lr=0.01)

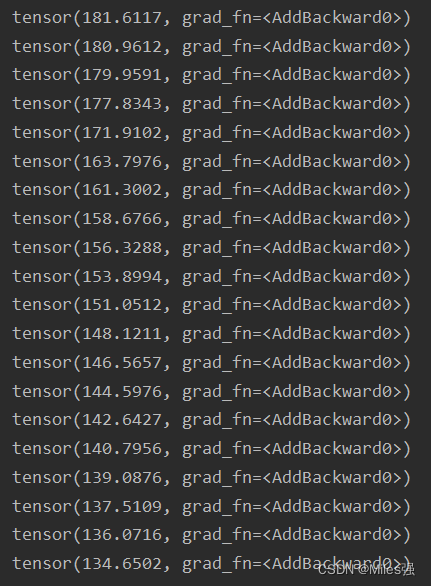

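for epoch in range(20):
    running_loss = 0.0
    for data in test_loader:
        imgs, target = data
        output = MyNet(imgs)
        result_loss = loss(output, target)
        # Reset the gradient of every trainable parameter to zero
        optim.zero_grad()
        # Backpropagate to compute fresh gradients
        result_loss.backward()
        # Update the parameters
        optim.step()
        # .item() yields a plain float, so the running sum does not keep each batch's graph alive
        running_loss = running_loss + result_loss.item()
    print(running_loss)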

Train for 20 epochs and print the loss summed over all batches for each epoch.
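The loop calls `optim.zero_grad()` before every `backward()` because PyTorch adds new gradients to whatever is already stored on each parameter rather than overwriting it. The pure-Python sketch below mimics that accumulation; the `Param` class and the helper functions are hypothetical stand-ins for illustration, not PyTorch APIs.

```python
class Param:
    """Hypothetical stand-in for a trainable parameter with a stored gradient."""

    def __init__(self, value):
        self.value = value
        self.grad = 0.0


def backward(param, target):
    # Gradient of (value - target)^2, ADDED to the stored gradient (accumulation).
    param.grad += 2.0 * (param.value - target)


def zero_grad(param):
    # Clear the stored gradient before the next backward pass.
    param.grad = 0.0


p = Param(1.0)
backward(p, 0.0)
backward(p, 0.0)   # without zeroing, the second pass stacks on the first
stale = p.grad

zero_grad(p)
backward(p, 0.0)
fresh = p.grad

print(stale, fresh)
```

Skipping the reset would make each update step use the sum of the gradients from all previous batches, not just the current one.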