深度学习(19)——informer 详解

文章目录

抱歉了,家人们,这main我写了很多注释解释每个参数,可是,服务器上粘贴过来全变成问号,欺负我英语不好,没用英文写注释???将就看吧,不理解的评论或者私信吧,或者等我那天心情好的时候更新吧。后面我都在本地注释,争取不出现这种情况。

注:这篇文章只讲解核心代码,util中的或者是一些不重要的部分没写

需要数据或者打包代码的私信或者

等我哪天整理放git后更新链接

一、使用场景

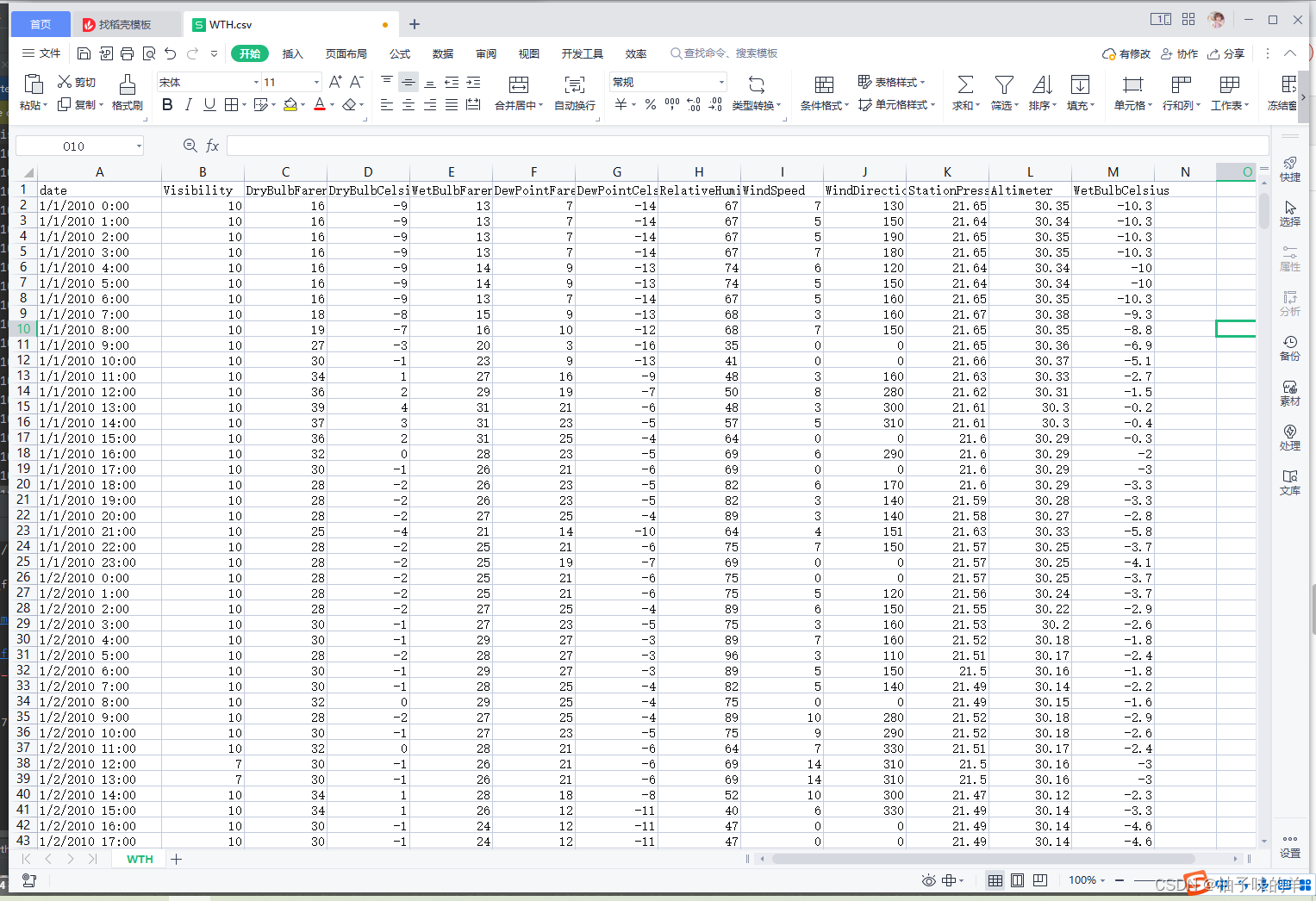

时间序列预测都可以,一般用在长时间序列预测。看一下数据格式:天气数据:逐个小时,3w+条

二、入口

main.py

# -- coding: utf-8 --

import argparse

import os

import torch

from exp.exp_informer import Exp_Informer

parser = argparse.ArgumentParser(description='[Informer] Long Sequences Forecasting')

parser.add_argument('--model', type=str, default='informer',

help='model of experiment, options: [informer, informerstack, informerlight(TBD)]') # model可以选择informer或者informerstack

parser.add_argument('--data', type=str, default='WTH', help='data') # ????demo????????????????

parser.add_argument('--root_path', type=str, default='./data/', help='root path of the data file') # ???????Excel?????

parser.add_argument('--data_path', type=str, default='WTH.csv', help='data file') # ??????

parser.add_argument('--features', type=str, default='MS',

help='forecasting task, options:[M, S, MS]; M:multivariate predict multivariate S:univariate predict univariate, MS:multivariate predict univariate') # ??????????????????????????????

parser.add_argument('--target', type=str, default='OT', help='target feature in S or MS task')

parser.add_argument('--freq', type=str, default='h',

help='freq for time features encoding, options:[s:secondly, t:minutely, h:hourly, d:daily, b:business days, w:weekly, m:monthly], you can also use more detailed freq like 15min or 3h') # ????

parser.add_argument('--checkpoints', type=str, default='./checkpoints/', help='location of model checkpoints') # ??

parser.add_argument('--seq_len', type=int, default=96, help='input sequence length of Informer encoder') #??????

parser.add_argument('--label_len', type=int, default=48, help='start token length of Informer decoder')# ???????????

parser.add_argument('--pred_len', type=int, default=24, help='prediction sequence length')# ??????

# Informer decoder input: concat[start token series(label_len), zero padding series(pred_len)]

parser.add_argument('--enc_in', type=int, default=7, help='encoder input size') # encoder ????

parser.add_argument('--dec_in', type=int, default=7, help='decoder input size') # decoder ????

parser.add_argument('--c_out', type=int, default=7, help='output size') # ????

parser.add_argument('--d_model', type=int, default=512, help='dimension of model') # ??

parser.add_argument('--n_heads', type=int, default=8, help='num of heads') # ????

parser.add_argument('--e_layers', type=int, default=2, help='num of encoder layers') # encoder???

parser.add_argument('--d_layers', type=int, default=1, help='num of decoder layers') # decoder???

parser.add_argument('--s_layers', type=str, default='3,2,1', help='num of stack encoder layers') # encoder ??????model???informerstack???????

parser.add_argument('--d_ff', type=int, default=2048, help='dimension of fcn') # ???????

parser.add_argument('--factor', type=int, default=5, help='probsparse attn factor') # ??????????????????

parser.add_argument('--padding', type=int, default=0, help='padding type')

parser.add_argument('--distil', action='store_false',

help='whether to use distilling in encoder, using this argument means not using distilling',

default=True) # ?????distill?

parser.add_argument('--dropout', type=float, default=0.05, help='dropout')

parser.add_argument('--attn', type=str, default='prob',

help='attention used in encoder, options:[prob, full]') # ??????prob-attention

parser.add_argument('--embed', type=str, default='timeF',

help='time features encoding, options:[timeF, fixed, learned]')

parser.add_argument('--activation', type=str, default='gelu', help='activation') # ????

parser.add_argument('--output_attention', action='store_true', help='whether to output attention in encoder') # ?encoder????attention

parser.add_argument('--do_predict', action='store_true', help='whether to predict unseen future data') # ??????

parser.add_argument('--mix', action='store_false', help='use mix attention in generative decoder', default=True)# ?decoder????mix attention

parser.add_argument('--cols', type=str, nargs='+', help='certain cols from the data files as the input features') # ???????????

parser.add_argument('--num_workers', type=int, default=0, help='data loader num workers') # dataloader ???cpu??

parser.add_argument('--itr', type=int, default=2, help='experiments times')

parser.add_argument('--train_epochs', type=int, default=4, help='train epochs') # ???

parser.add_argument('--batch_size', type=int, default=16, help='batch size of train input data')

parser.add_argument('--patience', type=int, default=3, help='early stopping patience') # ??

parser.add_argument('--learning_rate', type=float, default=0.0001, help='optimizer learning rate') # ??????????

parser.add_argument('--des', type=str, default='test', help='exp description')

parser.add_argument('--loss', type=str, default='mse', help='loss function') # loss????MSE????MAE

parser.add_argument('--lradj', type=str, default='type1', help='adjust learning rate') # ???????

parser.add_argument('--use_amp', action='store_true', help='use automatic mixed precision training', default=False)

parser.add_argument('--inverse', action='store_true', help='inverse output data', default=False)

parser.add_argument('--use_gpu', type=bool, default=True, help='use gpu')

parser.add_argument('--gpu', type=int, default=1, help='gpu')

parser.add_argument('--use_multi_gpu', action='store_true', help='use multiple gpus', default=False)

parser.add_argument('--devices', type=str, default='0,1', help='device ids of multile gpus')

args = parser.parse_args() # ??????

args.use_gpu = True if torch.cuda.is_available() and args.use_gpu else False

if args.use_gpu and args.use_multi_gpu:

args.devices = args.devices.replace(' ', '')

device_ids = args.devices.split(',')

args.device_ids = [int(id_) for id_ in device_ids]

args.gpu = args.device_ids[0]

data_parser = {

'ETTh1': {

'data': 'ETTh1.csv', 'T': 'OT', 'M': [7, 7, 7], 'S': [1, 1, 1], 'MS': [7, 7, 1]},

'ETTh2': {

'data': 'ETTh2.csv', 'T': 'OT', 'M': [7, 7, 7], 'S': [1, 1, 1], 'MS': [7, 7, 1]},

'ETTm1': {

'data': 'ETTm1.csv', 'T': 'OT', 'M': [7, 7, 7], 'S': [1, 1, 1], 'MS': [7, 7, 1]},

'ETTm2': {

'data': 'ETTm2.csv', 'T': 'OT', 'M': [7, 7, 7], 'S': [1, 1, 1], 'MS': [7, 7, 1]},

'WTH': {

'data': 'WTH.csv', 'T': 'WetBulbCelsius', 'M': [12, 12, 12], 'S': [1, 1, 1], 'MS': [12, 12, 1]},

'ECL': {

'data': 'ECL.csv', 'T': 'MT_320', 'M': [321, 321, 321], 'S': [1, 1, 1], 'MS': [321, 321, 1]},

'Solar': {

'data': 'solar_AL.csv', 'T': 'POWER_136', 'M': [137, 137, 137], 'S': [1, 1, 1], 'MS': [137, 137, 1]},

}

if args.data in data_parser.keys():

data_info = data_parser[args.data]

args.data_path = data_info['data']

args.target = data_info['T'] # target??

args.enc_in, args.dec_in, args.c_out = data_info[args.features]

args.s_layers = [int(s_l) for s_l in args.s_layers.replace(' ', '').split(',')]

args.detail_freq = args.freq

args.freq = args.freq[-1:]

#print('Args in experiment:')

#print(args)

Exp = Exp_Informer

for ii in range(args.itr):

# setting record of experiments

setting = '{}_{}_ft{}_sl{}_ll{}_pl{}_dm{}_nh{}_el{}_dl{}_df{}_at{}_fc{}_eb{}_dt{}_mx{}_{}_{}'.format(args.model,

args.data,

args.features,

args.seq_len,

args.label_len,

args.pred_len,

args.d_model,# 512

args.n_heads,

args.e_layers,

args.d_layers,

args.d_ff,

args.attn, # prob attention

args.factor,

args.embed,

args.distil,

args.mix,

args.des, ii)

exp = Exp(args) # set experiments

print('>>>>>>>start training : {}>>>>>>>>>>>>>>>>>>>>>>>>>>'.format(setting))

exp.train(setting)

print('>>>>>>>testing : {}<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<'.format(setting))

exp.test(setting)

if args.do_predict:

print('>>>>>>>predicting : {}<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<<'.format(setting))

exp.predict(setting, True)

torch.cuda.empty_cache()

三、dataloader

dataloader.py

import os

import numpy as np

import pandas as pd

import torch

from torch.utils.data import Dataset, DataLoader

# from sklearn.preprocessing import StandardScaler

from utils.tools import StandardScaler

from utils.timefeatures import time_features

import warnings

warnings.filterwarnings('ignore')

class Dataset_Custom(Dataset):

def __init__(self, root_path, flag='train', size=None,

features='S', data_path='ETTh1.csv',

target='OT', scale=True, inverse=False, timeenc=0, freq='h', cols=None):

# size [seq_len, label_len, pred_len]

# info

if size == None:

self.seq_len = 24*4*4

self.label_len = 24*4

self.pred_len = 24*4

else:

self.seq_len = size[0]

self.label_len = size[1]

self.pred_len = size[2]

# init

assert flag in ['train', 'test', 'val']

type_map = {

'train':0, 'val':1, 'test':2}

self.set_type = type_map[flag]

self.features = features

self.target = target

self.scale = scale

self.inverse = inverse

self.timeenc = timeenc

self.freq = freq

self.cols=cols

self.root_path = root_path

self.data_path = data_path

self.__read_data__()

def __read_data__(self):

self.scaler = StandardScaler() # 针对特征(一列数据)进行归一化处理,均值为0,方差为1

df_raw = pd.read_csv(os.path.join(self.root_path,

self.data_path))

'''

df_raw.columns: ['date', ...(other features), target feature]

'''

# cols = list(df_raw.columns);

if self.cols:

cols=self.cols.copy()

cols.remove(self.target)

else:

cols = list(df_raw.columns); cols.remove(self.target); cols.remove('date')

df_raw = df_raw[['date']+cols+[self.target]]# 将数据处理为[date,特征,target]格式

# 训练集验证集split

num_train = int(len(df_raw)*0.7)

num_test = int(len(df_raw)*0.2)

num_vali = len(df_raw) - num_train - num_test

border1s = [0, num_train-self.seq_len, len(df_raw)-num_test-self.seq_len] # 训练集,验证集,测试集的起始点

border2s = [num_train, num_train+num_vali, len(df_raw)]# 训练集,验证集,测试集终点

border1 = border1s[self.set_type] # 不同的阶段选择不同的数据起点,0,1,2分别对应训练,验证,测试

border2 = border2s[self.set_type]

if self.features=='M' or self.features=='MS':

cols_data = df_raw.columns[1:]

df_data = df_raw[cols_data]

elif self.features=='S':

df_data = df_raw[[self.target]]

if self.scale: # 是否标准化

train_data = df_data[border1s[0]:border2s[0]] # 虽然写的是train_data ,但是会根据不同的阶段变换

self.scaler.fit(train_data.values)

data = self.scaler.transform(df_data.values)

else:

data = df_data.values

df_stamp = df_raw[['date']][border1:border2]

df_stamp['date'] = pd.to_datetime(df_stamp.date) #检查数据中的date格式是否一致

data_stamp = time_features(df_stamp, timeenc=self.timeenc, freq=self.freq)

self.data_x = data[border1:border2]

if self.inverse:

self.data_y = df_data.values[border1:border2]

else:

self.data_y = data[border1:border2]

self.data_stamp = data_stamp

def __getitem__(self, index):

s_begin = index #输入序列起始位置

s_end = s_begin + self.seq_len #输入序列终止位置

r_begin = s_end - self.label_len

r_end = r_begin + self.label_len + self.pred_len

seq_x = self.data_x[s_begin:s_end]

if self.inverse:

seq_y = np.concatenate([self.data_x[r_begin:r_begin+self.label_len], self.data_y[r_begin+self.label_len:r_end]], 0)

else:

seq_y = self.data_y[r_begin:r_end]

seq_x_mark = self.data_stamp[s_begin:s_end] # 时间戳

seq_y_mark = self.data_stamp[r_begin:r_end]

return seq_x, seq_y, seq_x_mark, seq_y_mark # 返回输入序列,输出序列,输入时间戳,输出时间戳

def __len__(self):

return len(self.data_x) - self.seq_len- self.pred_len + 1

def inverse_transform(self, data):

return self.scaler.inverse_transform(data)

class Dataset_Pred(Dataset):

def __init__(self, root_path, flag='pred', size=None,

features='S', data_path='ETTh1.csv',

target='OT', scale=True, inverse=False, timeenc=0, freq='15min', cols=None):

# size [seq_len, label_len, pred_len]

# info

if size == None:

self.seq_len = 24*4*4

self.label_len = 24*4

self.pred_len = 24*4

else:

self.seq_len = size[0]

self.label_len = size[1]

self.pred_len = size[2]

# init

assert flag in ['pred']

self.features = features

self.target = target

self.scale = scale

self.inverse = inverse

self.timeenc = timeenc

self.freq = freq

self.cols=cols

self.root_path = root_path

self.data_path = data_path

self.__read_data__()

def __read_data__(self):

self.scaler = StandardScaler()

df_raw = pd.read_csv(os.path.join(self.root_path,

self.data_path))

'''

df_raw.columns: ['date', ...(other features), target feature]

'''

if self.cols:

cols=self.cols.copy()

cols.remove(self.target)

else:

cols = list(df_raw.columns); cols.remove(self.target); cols.remove('date')

df_raw = df_raw[['date']+cols+[self.target]]

border1 = len(df_raw)-self.seq_len

border2 = len(df_raw)

if self.features=='M' or self.features=='MS':

cols_data = df_raw.columns[1:]

df_data = df_raw[cols_data]

elif self.features=='S':

df_data = df_raw[[self.target]]

if self.scale:

self.scaler.fit(df_data.values)

data = self.scaler.transform(df_data.values)

else:

data = df_data.values

tmp_stamp = df_raw[['date']][border1:border2]

tmp_stamp['date'] = pd.to_datetime(tmp_stamp.date)

pred_dates = pd.date_range(tmp_stamp.date.values[-1], periods=self.pred_len+1, freq=self.freq)

df_stamp = pd.DataFrame(columns = ['date'])

df_stamp.date = list(tmp_stamp.date.values) + list(pred_dates[1:])

data_stamp = time_features(df_stamp, timeenc=self.timeenc, freq=self.freq[-1:])

self.data_x = data[border1:border2]

if self.inverse:

self.data_y = df_data.values[border1:border2]

else:

self.data_y = data[border1:border2]

self.data_stamp = data_stamp

def __getitem__(self, index):

s_begin = index

s_end = s_begin + self.seq_len

r_begin = s_end - self.label_len

r_end = r_begin + self.label_len + self.pred_len

seq_x = self.data_x[s_begin:s_end]

if self.inverse:

seq_y = self.data_x[r_begin:r_begin+self.label_len]

else:

seq_y = self.data_y[r_begin:r_begin+self.label_len]

seq_x_mark = self.data_stamp[s_begin:s_end]

seq_y_mark = self.data_stamp[r_begin:r_end]

return seq_x, seq_y, seq_x_mark, seq_y_mark

def __len__(self):

return len(self.data_x) - self.seq_len + 1

def inverse_transform(self, data):

return self.scaler.inverse_transform(data)

四、model

informer.py

import torch

import torch.nn as nn

import torch.nn.functional as F

from utils.masking import TriangularCausalMask, ProbMask

from models.encoder import Encoder, EncoderLayer, ConvLayer, EncoderStack

from models.decoder import Decoder, DecoderLayer

from models.attn import FullAttention, ProbAttention, AttentionLayer

from models.embed import DataEmbedding

class Informer(nn.Module):

def __init__(self, enc_in, dec_in, c_out, seq_len, label_len, out_len,

factor=5, d_model=512, n_heads=8, e_layers=3, d_layers=2, d_ff=512,

dropout=0.0, attn='prob', embed='fixed', freq='h', activation='gelu',

output_attention=False, distil=True, mix=True,

device=torch.device('cuda:0')):

super(Informer, self).__init__()

self.pred_len = out_len

self.attn = attn

self.output_attention = output_attention

# Encoding

self.enc_embedding = DataEmbedding(enc_in, d_model, embed, freq, dropout)

self.dec_embedding = DataEmbedding(dec_in, d_model, embed, freq, dropout)

# Attention

Attn = ProbAttention if attn == 'prob' else FullAttention

# Encoder

self.encoder = Encoder(

[

EncoderLayer(

AttentionLayer(Attn(False, factor, attention_dropout=dropout, output_attention=output_attention),

d_model, n_heads, mix=False),

d_model,

d_ff,

dropout=dropout,

activation=activation

) for l in range(e_layers)

],

[

ConvLayer(

d_model

) for l in range(e_layers - 1)

] if distil else None,

norm_layer=torch.nn.LayerNorm(d_model)

)

# Decoder

self.decoder = Decoder(

[

DecoderLayer(

AttentionLayer(Attn(True, factor, attention_dropout=dropout, output_attention=False),

d_model, n_heads, mix=mix),

AttentionLayer(FullAttention(False, factor, attention_dropout=dropout, output_attention=False),

d_model, n_heads, mix=False),

d_model,

d_ff,

dropout=dropout,

activation=activation,

)

for l in range(d_layers)

],

norm_layer=torch.nn.LayerNorm(d_model)

)

# self.end_conv1 = nn.Conv1d(in_channels=label_len+out_len, out_channels=out_len, kernel_size=1, bias=True)

# self.end_conv2 = nn.Conv1d(in_channels=d_model, out_channels=c_out, kernel_size=1, bias=True)

self.projection = nn.Linear(d_model, c_out, bias=True)

def forward(self, x_enc, x_mark_enc, x_dec, x_mark_dec,

enc_self_mask=None, dec_self_mask=None, dec_enc_mask=None):

# print(x_enc.shape)

# print(x_mark_enc.shape)

enc_out = self.enc_embedding(x_enc, x_mark_enc) # 将特征与data做embedding(包括三部分)

enc_out, attns = self.encoder(enc_out, attn_mask=enc_self_mask)

# print(x_dec.shape)

# print(x_mark_dec.shape)

dec_out = self.dec_embedding(x_dec, x_mark_dec)

dec_out = self.decoder(dec_out, enc_out, x_mask=dec_self_mask, cross_mask=dec_enc_mask)

# print(dec_out.shape)

dec_out = self.projection(dec_out)

# print(dec_out.shape)

# dec_out = self.end_conv1(dec_out)

# dec_out = self.end_conv2(dec_out.transpose(2,1)).transpose(1,2)

if self.output_attention:

return dec_out[:, -self.pred_len:, :], attns

else:

return dec_out[:, -self.pred_len:, :] # [B, L, D]

(1)Embedding

embed.py

在进入encoder和decoder前需要先对输入进行embedding,其中涉及到的是value的token_embedding,位置的position_embedding和长时间的time_feature_embedding

- 【TokenEmbedding】将序列长度转化为可进入模型的维度(本例中为512)

- 【PositionalEmbedding】可以理解为选取sin和cos函数中位置与这个序列对应,给一个在正弦或者余弦上对应的位置信息(512)

- 【TimeFeatureEmbedding】将现在的时间间隔转为和上面相同的维度(512)

import torch

import torch.nn as nn

import torch.nn.functional as F

import math

class PositionalEmbedding(nn.Module):

def __init__(self, d_model, max_len=5000):

super(PositionalEmbedding, self).__init__()

# Compute the positional encodings once in log space.

pe = torch.zeros(max_len, d_model).float()

pe.require_grad = False

position = torch.arange(0, max_len).float().unsqueeze(1)

div_term = (torch.arange(0, d_model, 2).float() * -(math.log(10000.0) / d_model)).exp()

pe[:, 0::2] = torch.sin(position * div_term)

pe[:, 1::2] = torch.cos(position * div_term)

pe = pe.unsqueeze(0)

self.register_buffer('pe', pe) #一共5000个位置(1,5000,512)

def forward(self, x):

return self.pe[:, :x.size(1)] # 序列长度的位置(seq_len)(1,seq_len,512)

class TokenEmbedding(nn.Module):

def __init__(self, c_in, d_model):

super(TokenEmbedding, self).__init__()

padding = 1 if torch.__version__>='1.5.0' else 2

self.tokenConv = nn.Conv1d(in_channels=c_in, out_channels=d_model,

kernel_size=3, padding=padding, padding_mode='circular')

for m in self.modules():

if isinstance(m, nn.Conv1d):

nn.init.kaiming_normal_(m.weight,mode='fan_in',nonlinearity='leaky_relu')

def forward(self, x):

# print(x.shape)

x = self.tokenConv(x.permute(0, 2, 1)).transpose(1,2)# (B,seq_len,feature_len)——>(B,seq_len,512)

# print(x.shape)

return x

class FixedEmbedding(nn.Module):

def __init__(self, c_in, d_model):

super(FixedEmbedding, self).__init__()

w = torch.zeros(c_in, d_model).float()

w.require_grad = False

position = torch.arange(0, c_in).float().unsqueeze(1)

div_term = (torch.arange(0, d_model, 2).float() * -(math.log(10000.0) / d_model)).exp()

w[:, 0::2] = torch.sin(position * div_term)

w[:, 1::2] = torch.cos(position * div_term)

self.emb = nn.Embedding(c_in, d_model)

self.emb.weight = nn.Parameter(w, requires_grad=False)

def forward(self, x):

return self.emb(x).detach()

class TemporalEmbedding(nn.Module):

def __init__(self, d_model, embed_type='fixed', freq='h'):

super(TemporalEmbedding, self).__init__()

minute_size = 4; hour_size = 24

weekday_size = 7; day_size = 32; month_size = 13

Embed = FixedEmbedding if embed_type=='fixed' else nn.Embedding

if freq=='t':

self.minute_embed = Embed(minute_size, d_model)

self.hour_embed = Embed(hour_size, d_model)

self.weekday_embed = Embed(weekday_size, d_model)

self.day_embed = Embed(day_size, d_model)

self.month_embed = Embed(month_size, d_model)

def forward(self, x):

# print(x.shape)

x = x.long()

minute_x = self.minute_embed(x[:,:,4]) if hasattr(self, 'minute_embed') else 0.

# print(minute_x.shape)

hour_x = self.hour_embed(x[:,:,3])

# print(hour_x.shape)

weekday_x = self.weekday_embed(x[:,:,2])

# print(weekday_x.shape)

day_x = self.day_embed(x[:,:,1])

# print(day_x.shape)

month_x = self.month_embed(x[:,:,0])

# print(month_x.shape)

return hour_x + weekday_x + day_x + month_x + minute_x

class TimeFeatureEmbedding(nn.Module):

def __init__(self, d_model, embed_type='timeF', freq='h'):

super(TimeFeatureEmbedding, self).__init__()

freq_map = {

'h':4, 't':5, 's':6, 'm':1, 'a':1, 'w':2, 'd':3, 'b':3}

d_inp = freq_map[freq]

self.embed = nn.Linear(d_inp, d_model) # 4维映射d_model维度

def forward(self, x):

return self.embed(x)

class DataEmbedding(nn.Module):

def __init__(self, c_in, d_model, embed_type='fixed', freq='h', dropout=0.1):

super(DataEmbedding, self).__init__()

self.value_embedding = TokenEmbedding(c_in=c_in, d_model=d_model)

self.position_embedding = PositionalEmbedding(d_model=d_model)

self.temporal_embedding = TemporalEmbedding(d_model=d_model, embed_type=embed_type, freq=freq) if embed_type!='timeF' else TimeFeatureEmbedding(d_model=d_model, embed_type=embed_type, freq=freq)

self.dropout = nn.Dropout(p=dropout)

def forward(self, x, x_mark):

x = self.value_embedding(x) + self.position_embedding(x) + self.temporal_embedding(x_mark) # x表示输入值,mark表示对应的时间,将值,位置和时间embedding后相加作为最后的x

return self.dropout(x)

(2)Encoder

encoder.py

Encoder主要由两个attention和多个卷积层构成,其中attention部分【后面介绍】又由ProbAttention和卷积层组成

import torch

import torch.nn as nn

import torch.nn.functional as F

class ConvLayer(nn.Module):

def __init__(self, c_in):

super(ConvLayer, self).__init__()

padding = 1 if torch.__version__>='1.5.0' else 2

self.downConv = nn.Conv1d(in_channels=c_in,

out_channels=c_in,

kernel_size=3,

padding=padding,

padding_mode='circular')

self.norm = nn.BatchNorm1d(c_in)

self.activation = nn.ELU()

self.maxPool = nn.MaxPool1d(kernel_size=3, stride=2, padding=1)

def forward(self, x):

x = self.downConv(x.permute(0, 2, 1))

x = self.norm(x)

x = self.activation(x)

x = self.maxPool(x)

x = x.transpose(1,2)

return x

class EncoderLayer(nn.Module):

def __init__(self, attention, d_model, d_ff=None, dropout=0.1, activation="relu"):

super(EncoderLayer, self).__init__()

d_ff = d_ff or 4*d_model

self.attention = attention

self.conv1 = nn.Conv1d(in_channels=d_model, out_channels=d_ff, kernel_size=1) # input:512,output:2048

self.conv2 = nn.Conv1d(in_channels=d_ff, out_channels=d_model, kernel_size=1)

self.norm1 = nn.LayerNorm(d_model)

self.norm2 = nn.LayerNorm(d_model)

self.dropout = nn.Dropout(dropout)

self.activation = F.relu if activation == "relu" else F.gelu

def forward(self, x, attn_mask=None):

# x [B, L, D]

# x = x + self.dropout(self.attention(

# x, x, x,

# attn_mask = attn_mask

# ))

new_x, attn = self.attention(

x, x, x,

attn_mask = attn_mask

)

x = x + self.dropout(new_x) # 残差连接 B,seq_len,d_model

y = x = self.norm1(x)

y = self.dropout(self.activation(self.conv1(y.transpose(-1,1))))

y = self.dropout(self.conv2(y).transpose(-1,1))

return self.norm2(x+y), attn

class Encoder(nn.Module):

def __init__(self, attn_layers, conv_layers=None, norm_layer=None):

super(Encoder, self).__init__()

self.attn_layers = nn.ModuleList(attn_layers)

self.conv_layers = nn.ModuleList(conv_layers) if conv_layers is not None else None

self.norm = norm_layer

def forward(self, x, attn_mask=None):

# x [B, L, D]

attns = []

if self.conv_layers is not None:

for attn_layer, conv_layer in zip(self.attn_layers, self.conv_layers):

x, attn = attn_layer(x, attn_mask=attn_mask) # 针对embedding的input做prob_attention

x = conv_layer(x) # (b,sqe_len,dimension)——>(b,sqe_len/2,dimension)

attns.append(attn)

x, attn = self.attn_layers[-1](x, attn_mask=attn_mask)

attns.append(attn)

else:

for attn_layer in self.attn_layers:

x, attn = attn_layer(x, attn_mask=attn_mask)

attns.append(attn)

if self.norm is not None:

x = self.norm(x)

return x, attns

(3)Decoder

decoder.py

decoder 也由两个attention组成,一个使用ProbAttention求decoder_input的自注意力,另一个使用FullAttention求decoder_input和encoder_output之间的cross attention.

import torch

import torch.nn as nn

import torch.nn.functional as F

class DecoderLayer(nn.Module):

def __init__(self, self_attention, cross_attention, d_model, d_ff=None,

dropout=0.1, activation="relu"):

super(DecoderLayer, self).__init__()

d_ff = d_ff or 4*d_model

self.self_attention = self_attention # x本身的注意力机制

self.cross_attention = cross_attention # x和y之间的注意力机制

self.conv1 = nn.Conv1d(in_channels=d_model, out_channels=d_ff, kernel_size=1)

self.conv2 = nn.Conv1d(in_channels=d_ff, out_channels=d_model, kernel_size=1)

self.norm1 = nn.LayerNorm(d_model)

self.norm2 = nn.LayerNorm(d_model)

self.norm3 = nn.LayerNorm(d_model)

self.dropout = nn.Dropout(dropout)

self.activation = F.relu if activation == "relu" else F.gelu

def forward(self, x, cross, x_mask=None, cross_mask=None): # cross是encoder的output

x = x + self.dropout(self.self_attention(

x, x, x,

attn_mask=x_mask

)[0])

x = self.norm1(x)

x = x + self.dropout(self.cross_attention(

x, cross, cross, #q,k,v

attn_mask=cross_mask

)[0])

y = x = self.norm2(x)

y = self.dropout(self.activation(self.conv1(y.transpose(-1,1))))

y = self.dropout(self.conv2(y).transpose(-1,1))

return self.norm3(x+y)

class Decoder(nn.Module):

def __init__(self, layers, norm_layer=None):

super(Decoder, self).__init__()

self.layers = nn.ModuleList(layers)

self.norm = norm_layer

def forward(self, x, cross, x_mask=None, cross_mask=None):

for layer in self.layers:

x = layer(x, cross, x_mask=x_mask, cross_mask=cross_mask)

if self.norm is not None:

x = self.norm(x)

return x

五、Attention

attention.py

import torch

import torch.nn as nn

import torch.nn.functional as F

import numpy as np

from math import sqrt

from utils.masking import TriangularCausalMask, ProbMask

class FullAttention(nn.Module):

def __init__(self, mask_flag=True, factor=5, scale=None, attention_dropout=0.1, output_attention=False):

super(FullAttention, self).__init__()

self.scale = scale

self.mask_flag = mask_flag

self.output_attention = output_attention

self.dropout = nn.Dropout(attention_dropout)

def forward(self, queries, keys, values, attn_mask):

B, L, H, E = queries.shape

_, S, _, D = values.shape

scale = self.scale or 1./sqrt(E)

scores = torch.einsum("blhe,bshe->bhls", queries, keys) #query:decoder_input 与 encoder_outputb,h,seq_len,start_len

# print(scores.shape)

if self.mask_flag:

if attn_mask is None:

attn_mask = TriangularCausalMask(B, L, device=queries.device)

scores.masked_fill_(attn_mask.mask, -np.inf)

A = self.dropout(torch.softmax(scale * scores, dim=-1)) #取scale

V = torch.einsum("bhls,bshd->blhd", A, values)

# print(V.shape)

if self.output_attention:

return (V.contiguous(), A)

else:

return (V.contiguous(), None)

class ProbAttention(nn.Module):

def __init__(self, mask_flag=True, factor=5, scale=None, attention_dropout=0.1, output_attention=False):

super(ProbAttention, self).__init__()

self.factor = factor

self.scale = scale

self.mask_flag = mask_flag

self.output_attention = output_attention

self.dropout = nn.Dropout(attention_dropout)

def _prob_QK(self, Q, K, sample_k, n_top): # n_top: c*ln(L_q)

# Q [B, H, L, D]

B, H, L_K, E = K.shape

_, _, L_Q, _ = Q.shape

# calculate the sampled Q_K

K_expand = K.unsqueeze(-3).expand(B, H, L_Q, L_K, E)#先增加一个维度,相当于复制,再扩充

# print(K_expand.shape)

index_sample = torch.randint(L_K, (L_Q, sample_k)) # real U = U_part(factor*ln(L_k))*L_q 构建96*25的随机数

K_sample = K_expand[:, :, torch.arange(L_Q).unsqueeze(1), index_sample, :]# 随机取出的25个k值

# print(K_sample.shape)

Q_K_sample = torch.matmul(Q.unsqueeze(-2), K_sample.transpose(-2, -1)).squeeze()#96个Q和25个K之间的关系

# print(Q_K_sample.shape)

# find the Top_k query with sparisty measurement

M = Q_K_sample.max(-1)[0] - torch.div(Q_K_sample.sum(-1), L_K)#96个Q中每一个选跟其他K关系最大的值 再计算与均匀分布的差异

# print(Q_K_sample.max(-1)[0].shape)

# print(M.shape)

M_top = M.topk(n_top, sorted=False)[1]#对96个Q的评分中选出25个 返回值1表示要得到索引

# print(M_top.shape)

# use the reduced Q to calculate Q_K

Q_reduce = Q[torch.arange(B)[:, None, None],

torch.arange(H)[None, :, None],

M_top, :] # factor*ln(L_q) 取出来Q的特征

# print(Q_reduce.shape)

Q_K = torch.matmul(Q_reduce, K.transpose(-2, -1)) # factor*ln(L_q)*L_k 25个Q和全部K之间的关系

# print(Q_K.shape)

return Q_K, M_top

def _get_initial_context(self, V, L_Q):

B, H, L_V, D = V.shape

if not self.mask_flag:

# V_sum = V.sum(dim=-2)

V_sum = V.mean(dim=-2)

# print(V_sum.shape)

contex = V_sum.unsqueeze(-2).expand(B, H, L_Q, V_sum.shape[-1]).clone()#先把96个V都用均值来替换

# print(contex.shape)

else: # use mask

assert(L_Q == L_V) # requires that L_Q == L_V, i.e. for self-attention only

contex = V.cumsum(dim=-2) #累加

# print(contex.shape)

return contex

def _update_context(self, context_in, V, scores, index, L_Q, attn_mask): # context:初始值,一般初始是每个序列的均值,v为value,score表示top的分数,index表示top的index,l_q是序列长度

B, H, L_V, D = V.shape

if self.mask_flag:

attn_mask = ProbMask(B, H, L_Q, index, scores, device=V.device)

scores.masked_fill_(attn_mask.mask, -np.inf) # mask中TRUE的部分填充为inf

# print(scores.shape)

attn = torch.softmax(scores, dim=-1) # nn.Softmax(dim=-1)(scores)

# print(attn.shape)

context_in[torch.arange(B)[:, None, None],

torch.arange(H)[None, :, None],

index, :] = torch.matmul(attn, V).type_as(context_in)#对25个有Q的更新V,其余的没变还是均值

# print(context_in.shape)

if self.output_attention:

attns = (torch.ones([B, H, L_V, L_V])/L_V).type_as(attn).to(attn.device)

attns[torch.arange(B)[:, None, None], torch.arange(H)[None, :, None], index, :] = attn

# print(attns.shape)

return (context_in, attns)

else:

return (context_in, None)

def forward(self, queries, keys, values, attn_mask):

'''

注意力改编

:param queries:B*seq_len*head*(d_model/head)

:param keys: B*seq_len*head*(d_model/head)

:param values: B*seq_len*head*(d_model/head)

:param attn_mask:

:return: 返回attention矩阵

'''

B, L_Q, H, D = queries.shape

_, L_K, _, _ = keys.shape

queries = queries.transpose(2,1)# (32,72,8,64)——>(32,8,72,64)

keys = keys.transpose(2,1)

values = values.transpose(2,1)

U_part = self.factor * np.ceil(np.log(L_K)).astype('int').item() # c*ln(L_k) Key里要选的个数

u = self.factor * np.ceil(np.log(L_Q)).astype('int').item() # c*ln(L_q)

U_part = U_part if U_part<L_K else L_K

u = u if u<L_Q else L_Q

scores_top, index = self._prob_QK(queries, keys, sample_k=U_part, n_top=u) # 得到分数最高的u个,返回u个的index

# add scale factor

scale = self.scale or 1./sqrt(D)

if scale is not None:

scores_top = scores_top * scale

# get the context,刚开始将所有的value赋值为每个序列的均值(48个和的均值)

context = self._get_initial_context(values, L_Q)

# update the context with selected top_k queries

context, attn = self._update_context(context, values, scores_top, index, L_Q, attn_mask)# 根据score和top-index更新value值

return context.transpose(2,1).contiguous(), attn

class AttentionLayer(nn.Module):

def __init__(self, attention, d_model, n_heads,

d_keys=None, d_values=None, mix=False):

super(AttentionLayer, self).__init__()

d_keys = d_keys or (d_model//n_heads)

d_values = d_values or (d_model//n_heads)

self.inner_attention = attention

self.query_projection = nn.Linear(d_model, d_keys * n_heads)

self.key_projection = nn.Linear(d_model, d_keys * n_heads)

self.value_projection = nn.Linear(d_model, d_values * n_heads)

self.out_projection = nn.Linear(d_values * n_heads, d_model)

self.n_heads = n_heads

self.mix = mix

def forward(self, queries, keys, values, attn_mask):

B, L, _ = queries.shape

_, S, _ = keys.shape

H = self.n_heads

queries = self.query_projection(queries).view(B, L, H, -1) # (32,72,512)——>(32,72,8,64)

keys = self.key_projection(keys).view(B, S, H, -1)# (32,72,512)——>(32,72,8,64)

values = self.value_projection(values).view(B, S, H, -1)# (32,72,512)——>(32,72,8,64)

out, attn = self.inner_attention(

queries,

keys,

values,

attn_mask

)

if self.mix:

out = out.transpose(2,1).contiguous()

out = out.view(B, L, -1) # B,seq_len,d_model

return self.out_projection(out), attn

六、人肉笔记

- 模型的一个理念:要达到长时间序列的需求,前期一定是由长时间序列作为训练这是肯定的,在已经有大量的前时间段的数据情形下,在预测未来时间的情形是,将之前已知的部分数据做为先验知识,一同放进预测的数据中,将真正要预测的数据进行mask,如要预测未来连续24个时间点的值,那作者会在24个之前先concat 48个这时间以前的数据(可以理解为让模型更好的使用已知数据,有肉套白狼一定比空手套白狼效率更高)

- 这个模型的创作者非常友善的地方就是在dataloader的部分是custom的,所以大家可以不用再自己写dataloader了,当然用不了还是要自己写的 。我必然是要手写的,因为我的数据不规律,frequency是不规律的,此外,我的数据也和他的完全不同!!这里的dataloader每次返回值是x(feature),y(result),x_stamp,y_stamp

- 模型训练的核心是process_one_batch这个函数,他的主要功能就是得到decoder的input(将先验的seq和要预测的seq拼接)decoder_input

- model_forward (x,x_stamp,decoder_input,decoder_stamp)

-

Embedding(Token,Position,TimeFeature)

-

encoder_forward

-

encoderLayer_forward

- AttentionLayer_forward

变多头- probAttention_forward

- AttentionLayer_forward

-

-

encoder 经过一个probAttention后会pooling一次序列维度从dim减低为一半,之后再经过一个probAttention

-

decoder 与encoder相似,但是有不同,差异在:

- 在initial context的时候,encoder的初始值是取平均值,decoder是累加的

- 在更新context的时候,需要先初始化probmask,encoder不需要生成mask

- decoder中的两个attention,一个是probAttention另一个是fullattention

希望对大家有所启发,我明天要接着搬我自己的砖了