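Training a YOLOv5 Safety-Helmet Detector and Deploying It on Android

I. Requirements

Environment used in this tutorial: the Ultralytics version of YOLOv5 (the source is the repository cloned in the commands below), with: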

PyTorch:1.8.0

Cuda:10.2

Python:3.8

Official requirement: Python>=3.6.0 is required with all requirements.txt installed including PyTorch>=1.7

git clone https://github.com/ultralytics/yolov5

cd yolov5

pip install -r requirements.txt

II. Prepare your own dataset (VOC format)

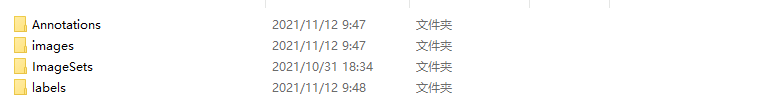

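1. Create a helmet_data folder in the yolov5 directory (choose a name that fits your project) with the structure below, and put the images and the XML files annotated with labelImg into the matching folders.

helmet_data

Annotations # XML annotation file for each image

images # the images

ImageSets/Main # test.txt, train.txt, trainval.txt and val.txt are generated here later; they list the image names of the test, training, training+validation and validation sets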
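The layout above can also be created from a script instead of by hand. The following is only a minimal sketch: the function name `make_dataset_dirs` is made up for illustration, and it assumes the three folders listed above are all that is needed at this stage.

```python
from pathlib import Path


def make_dataset_dirs(root):
    """Create the dataset sub-folders described above and return their paths."""
    root = Path(root)
    # Folder names taken from the structure listed in this tutorial
    subdirs = ["Annotations", "images", "ImageSets/Main"]
    created = []
    for sub in subdirs:
        path = root / sub
        path.mkdir(parents=True, exist_ok=True)  # no error if it already exists
        created.append(path)
    return created
```

Calling `make_dataset_dirs("helmet_data")` from inside the yolov5 directory would give the structure used in the rest of this tutorial.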

For example:

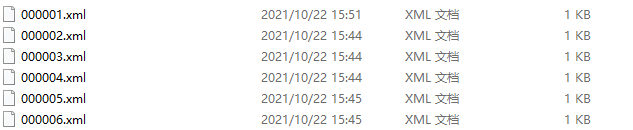

The Annotations folder holds the XML files (annotated with labelImg), whose content looks like this:
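The original screenshot of an annotation file is not reproduced here, so the following is a hedged sketch of what a labelImg-style VOC file typically contains and how to read it. `SAMPLE_XML` and `read_boxes` are illustrative names, and the file name and coordinates are invented.

```python
import xml.etree.ElementTree as ET

# Hypothetical annotation, reduced to the fields the later scripts rely on
SAMPLE_XML = """<annotation>
    <filename>000001.jpg</filename>
    <size><width>640</width><height>480</height><depth>3</depth></size>
    <object>
        <name>helmet</name>
        <difficult>0</difficult>
        <bndbox><xmin>100</xmin><ymin>120</ymin><xmax>200</xmax><ymax>220</ymax></bndbox>
    </object>
</annotation>"""


def read_boxes(xml_text):
    """Return ((width, height), [(name, xmin, ymin, xmax, ymax), ...])."""
    root = ET.fromstring(xml_text)
    size = root.find("size")
    width = int(size.find("width").text)
    height = int(size.find("height").text)
    boxes = []
    for obj in root.iter("object"):
        bb = obj.find("bndbox")
        boxes.append((
            obj.find("name").text,
            int(bb.find("xmin").text), int(bb.find("ymin").text),
            int(bb.find("xmax").text), int(bb.find("ymax").text),
        ))
    return (width, height), boxes
```

Each `object` element describes one labeled box; the image size is needed later to normalize the coordinates.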

The images folder holds the jpg images:

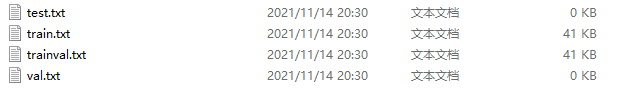

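The ImageSets folder has a Main subfolder that stores the split into training, validation and test sets. The split is generated by a script: create split_train_val.py in helmet_data with the following code: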

    # coding:utf-8
    import os
    import random
    import argparse

    parser = argparse.ArgumentParser()
    # Path of the XML files; adjust for your data (they usually live in Annotations)
    parser.add_argument('--xml_path', default='Annotations', type=str, help='input xml label path')
    # Where to write the split lists; use ImageSets/Main under your dataset
    parser.add_argument('--txt_path', default='ImageSets/Main', type=str, help='output txt label path')
    opt = parser.parse_args()

    trainval_percent = 1.0  # share of all samples used for train+val; the rest goes to test
    train_percent = 0.9     # share of train+val used for training
    xmlfilepath = opt.xml_path
    txtsavepath = opt.txt_path
    total_xml = os.listdir(xmlfilepath)
    if not os.path.exists(txtsavepath):
        os.makedirs(txtsavepath)

    num = len(total_xml)
    list_index = range(num)
    tv = int(num * trainval_percent)
    tr = int(tv * train_percent)
    trainval = random.sample(list_index, tv)
    train = random.sample(trainval, tr)

    file_trainval = open(txtsavepath + '/trainval.txt', 'w')
    file_test = open(txtsavepath + '/test.txt', 'w')
    file_train = open(txtsavepath + '/train.txt', 'w')
    file_val = open(txtsavepath + '/val.txt', 'w')

    for i in list_index:
        name = total_xml[i][:-4] + '\n'  # file name without the ".xml" extension
        if i in trainval:
            file_trainval.write(name)
            if i in train:
                file_train.write(name)
            else:
                file_val.write(name)
        else:
            file_test.write(name)

    file_trainval.close()
    file_train.close()
    file_val.close()
    file_test.close()
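To see what the two ratios in the script do, the arithmetic can be isolated in a small helper. This is just a sketch; `split_sizes` is not part of the tutorial's code, and the sample counts are made up.

```python
def split_sizes(num, trainval_percent=1.0, train_percent=0.9):
    """Number of samples that end up in train, val and test."""
    tv = int(num * trainval_percent)  # size of train+val
    tr = int(tv * train_percent)      # size of train
    return {"train": tr, "val": tv - tr, "test": num - tv}


print(split_sizes(1000))                        # defaults from the script
print(split_sizes(1000, trainval_percent=0.8))  # leaves samples for a test set
```

With the default `trainval_percent = 1.0`, test.txt stays empty; lower that value if you want a held-out test set.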

After running it, the following four txt files are generated under ImageSets\Main:

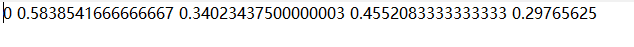

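2. Next, prepare the labels by converting the dataset to the yolo_txt format: the bounding-box information of each XML annotation is extracted into a txt file, one txt file per image. Each line contains class, x_center, y_center, width and height, with the coordinates normalized by the image width and height. The format is as follows: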
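As a worked example of that format, here is a minimal sketch of the normalization for a single box. The function names and the numbers are illustrative; note that the voc_label.py script in this tutorial additionally subtracts 1 pixel from the box centre, which this sketch leaves out.

```python
def voc_to_yolo(img_w, img_h, xmin, ymin, xmax, ymax):
    """Convert a pixel box (VOC corners) to normalized YOLO centre/size."""
    x_center = (xmin + xmax) / 2.0 / img_w
    y_center = (ymin + ymax) / 2.0 / img_h
    width = (xmax - xmin) / img_w
    height = (ymax - ymin) / img_h
    return x_center, y_center, width, height


def to_label_line(class_id, box):
    """Format one line of a YOLO label file."""
    return " ".join([str(class_id)] + [f"{v:.6f}" for v in box])


# A 100x100 pixel box in a 640x480 image, class 0 (helmet)
print(to_label_line(0, voc_to_yolo(640, 480, 100, 120, 200, 220)))
# → 0 0.234375 0.354167 0.156250 0.208333
```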

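Create voc_label.py in the yolov5 folder. It generates the labels for the training, validation and test sets and writes the image paths of each set into a txt file. The code is: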

    # -*- coding: utf-8 -*-
    import xml.etree.ElementTree as ET
    import os

    sets = ['train', 'val', 'test']
    classes = ["helmet"]  # change to your own classes
    abs_path = os.getcwd()
    print(abs_path)


    def convert(size, box):
        # size = (image width, image height); box = (xmin, xmax, ymin, ymax) in pixels
        dw = 1. / (size[0])
        dh = 1. / (size[1])
        x = (box[0] + box[1]) / 2.0 - 1  # box centre; the -1 offset is kept from the original script
        y = (box[2] + box[3]) / 2.0 - 1
        w = box[1] - box[0]
        h = box[3] - box[2]
        x = x * dw
        w = w * dw
        y = y * dh
        h = h * dh
        return x, y, w, h


    def convert_annotation(image_id):
        # Path of the Annotations folder (change to your own)
        in_file = open('E:\\yolov5-master\\yolov5-master\\helmet_data\\Annotations\\%s.xml' % (image_id), encoding='UTF-8')
        # Path of the labels folder (change to your own)
        out_file = open('E:\\yolov5-master\\yolov5-master\\helmet_data\\labels\\%s.txt' % (image_id), 'w')
        tree = ET.parse(in_file)
        root = tree.getroot()
        size = root.find('size')
        w = int(size.find('width').text)
        h = int(size.find('height').text)
        for obj in root.iter('object'):
            difficult = obj.find('difficult').text
            cls = obj.find('name').text
            if cls not in classes or int(difficult) == 1:
                continue
            cls_id = classes.index(cls)
            xmlbox = obj.find('bndbox')
            b = (float(xmlbox.find('xmin').text), float(xmlbox.find('xmax').text), float(xmlbox.find('ymin').text),
                 float(xmlbox.find('ymax').text))
            b1, b2, b3, b4 = b
            # Clamp boxes that extend past the image border
            if b2 > w:
                b2 = w
            if b4 > h:
                b4 = h
            b = (b1, b2, b3, b4)
            bb = convert((w, h), b)
            out_file.write(str(cls_id) + " " + " ".join([str(a) for a in bb]) + '\n')
        # Close both files so that every label is flushed to disk
        in_file.close()
        out_file.close()


    for image_set in sets:
        if not os.path.exists('E:\\yolov5-master\\yolov5-master\\helmet_data\\labels\\'):
            os.makedirs('E:\\yolov5-master\\yolov5-master\\helmet_data\\labels\\')
        # Path of ImageSets/Main (change to your own)
        image_ids = open('E:\\yolov5-master\\yolov5-master\\helmet_data\\ImageSets\\Main\\%s.txt' % (image_set)).read().strip().split()
        list_file = open('helmet_data\\%s.txt' % (image_set), 'w')
        for image_id in image_ids:
            list_file.write(abs_path + '\\helmet_data\\images\\%s.jpg\n' % (image_id))
            convert_annotation(image_id)
        list_file.close()

After running it, the label files are generated in labels. These are the label files in yolo_txt format. In addition, the path files for train, test and val are generated in helmet_data, as shown below:

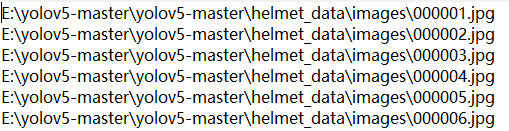

These three files store the absolute paths of the images:
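Only image paths are listed in these files; the label files are found by convention. To my understanding, the YOLOv5 data loader of this period derives each label path from the image path by swapping the last `images` folder for `labels` and the extension for `.txt`, which is why labels sits next to images in helmet_data. The sketch below mirrors that rule; `img_to_label_path` and its `sep` parameter are illustrative, not YOLOv5's own API.

```python
def img_to_label_path(img_path, sep="/"):
    """Map .../images/name.jpg to .../labels/name.txt (last 'images' folder only)."""
    sa = sep + "images" + sep
    sb = sep + "labels" + sep
    swapped = sb.join(img_path.rsplit(sa, 1))  # replace the last /images/ component
    return swapped.rsplit(".", 1)[0] + ".txt"  # replace the file extension


print(img_to_label_path("/data/helmet_data/images/0001.jpg"))
print(img_to_label_path("E:\\yolov5\\helmet_data\\images\\0001.jpg", sep="\\"))
```

If training later reports that no labels were found, checking a few paths with a helper like this is a quick first step.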

3. Configuration file

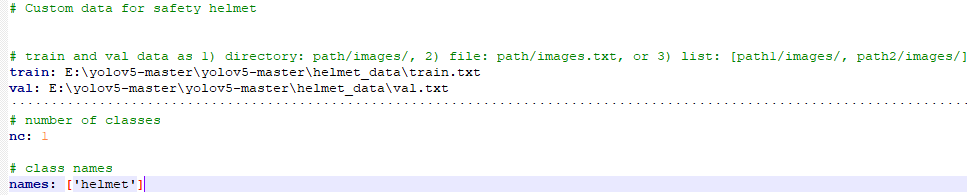

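In the data folder of the yolov5 directory, create helmet.yaml (choose the name to fit your project). It points to the split files for the training and validation sets (train.txt and val.txt, both generated by voc_label.py) and then sets the number of classes and the list of class names. The content of helmet.yaml is as follows:

⚠️: there must be a space between ***train:*** and the path

⚠️: there must be a space between ***val:*** and the path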

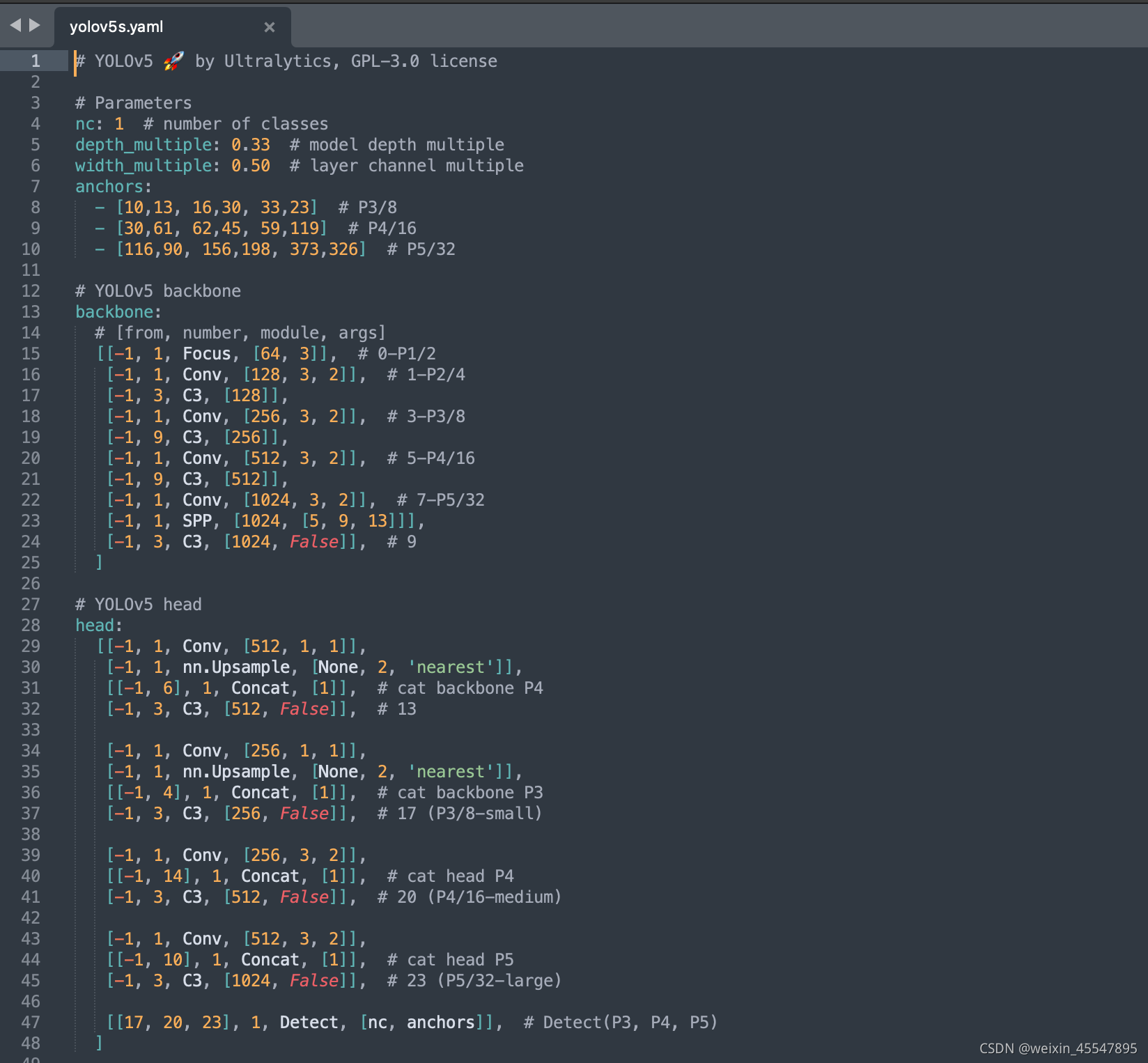
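For readers who cannot see the screenshot, here is a hedged sketch that builds such a file as text. It assumes the four keys `train`, `val`, `nc` and `names` that YOLOv5 dataset files of this period use; the helper name `build_data_yaml` and the relative paths are illustrative.

```python
def build_data_yaml(train_txt, val_txt, names):
    """Return the text of a minimal YOLOv5 dataset config."""
    lines = [
        f"train: {train_txt}",  # note the space after the colon
        f"val: {val_txt}",
        f"nc: {len(names)}",    # number of classes
        "names: [" + ", ".join(f"'{n}'" for n in names) + "]",
    ]
    return "\n".join(lines) + "\n"


print(build_data_yaml("helmet_data/train.txt", "helmet_data/val.txt", ["helmet"]))
```

Deriving `nc` from the length of `names` keeps the two values from drifting apart when classes are added.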

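4. Choose a model

The models folder in the yolov5 directory holds the model configuration files. The official repository provides s, m, l and x versions in increasing size. Because this model will be deployed on Android, the smallest one, yolov5s.yaml, is used. Only the nc parameter needs to change: set it to the number of classes, which is 1 here because there is a single class.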
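Editing nc by hand is easy, but if you script your experiments the change can be made on the file's text. This is a small sketch that assumes the config contains a top-level line of the form `nc: 80`; `set_num_classes` is an illustrative helper.

```python
import re


def set_num_classes(yaml_text, nc):
    """Replace the value of the first top-level 'nc:' line, keeping any trailing comment."""
    new_text, count = re.subn(r"(?m)^nc:\s*\d+", f"nc: {nc}", yaml_text, count=1)
    if count == 0:
        raise ValueError("no 'nc:' line found")
    return new_text


sample = "# parameters\nnc: 80  # number of classes\ndepth_multiple: 0.33\n"
print(set_num_classes(sample, 1))
```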

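III. Model training

1. The YOLOv5 source provides four pretrained models, stored in the weights folder. Downloading the weights may fail because of network problems; in that case, look for a copy of the pretrained models on Baidu Netdisk.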

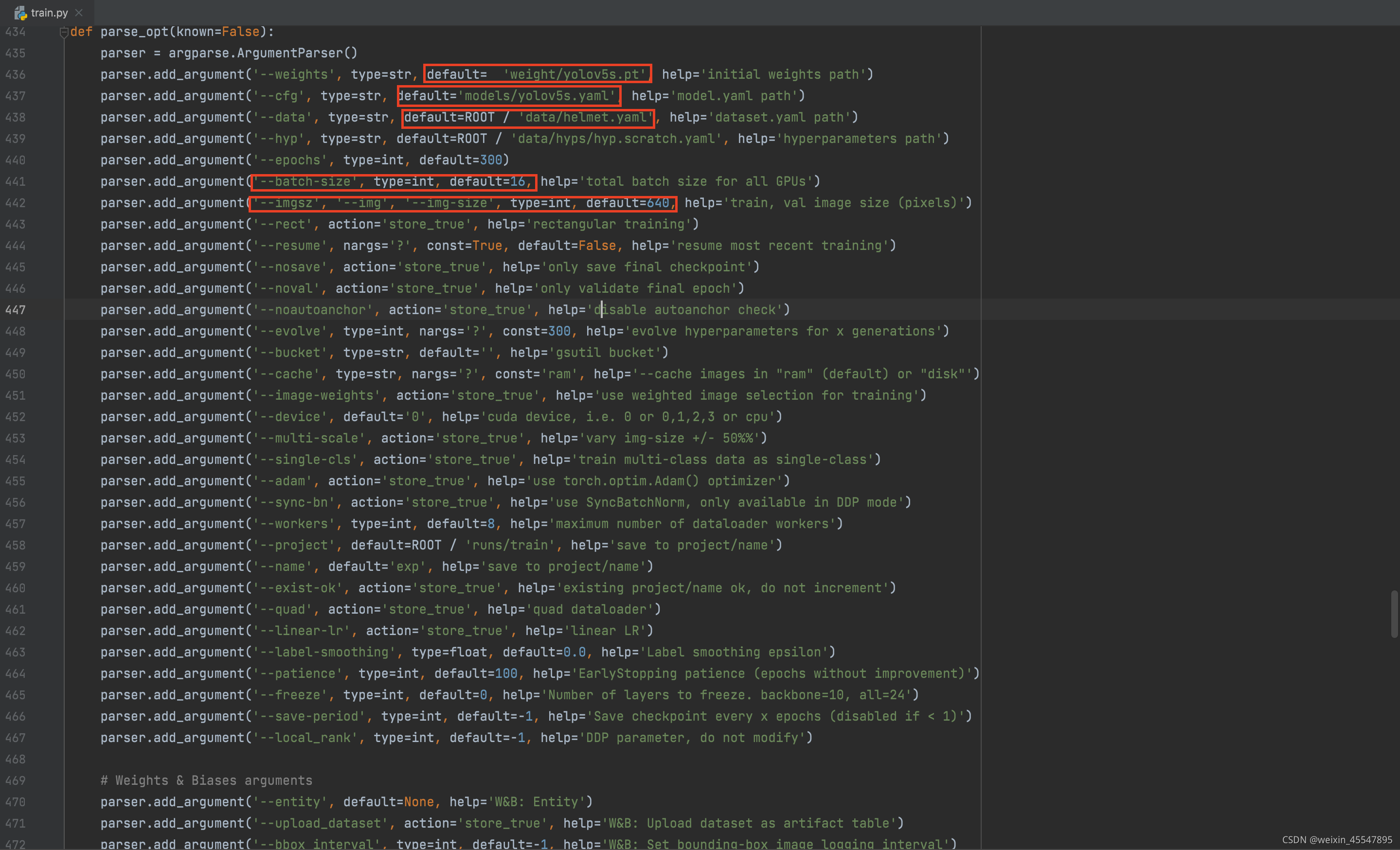

2. Training

Make the following changes in train.py:

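Parameter explanations:

epochs: how many times the whole dataset is iterated over during training; lower it on a weak GPU

batch-size: number of images per batch (per weight update); lower it on a weak GPU

cfg: configuration file that stores the model structure

data: file that describes the training and test data

img-size: width and height of the input images; lower it on a weak GPU

rect: use rectangular training

resume: continue training from the most recently saved model; defaults to false, i.e. train from scratch

nosave: only save the final checkpoint

notest: only test the final epoch

cache: cache images to speed up training

weights: path of the weights file

name: name under which the results are saved

device: cuda device, e.g. 0 or 0,1,2,3 or cpu

adam: use the Adam optimizer

multi-scale: multi-scale training, image size +/- 50%

single-cls: train the dataset as a single class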
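To get a feeling for how epochs and batch-size interact, the number of batches processed in a run can be estimated as below. This is only a back-of-the-envelope sketch: `batches_per_run` is an illustrative helper, and the 9000 training images are an assumed figure, not a number from this tutorial.

```python
import math


def batches_per_run(num_images, batch_size, epochs):
    """Return (batches per epoch, batches over the whole run)."""
    per_epoch = math.ceil(num_images / batch_size)  # the last batch may be smaller
    return per_epoch, per_epoch * epochs


print(batches_per_run(9000, 16, 300))
```

Halving the batch size doubles the number of batches per epoch, so a smaller batch saves GPU memory but does not by itself shorten training.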

Run the training command:

python train.py --img 640 --batch 16 --epoch 300 --data data/helmet.yaml --cfg models/yolov5s.yaml --weights weights/yolov5s.pt --device 0

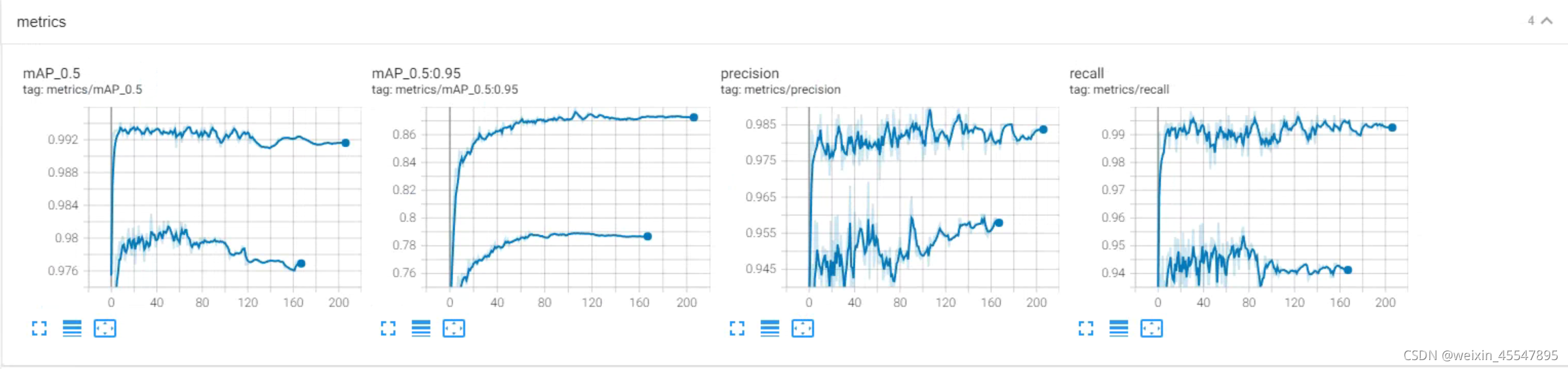

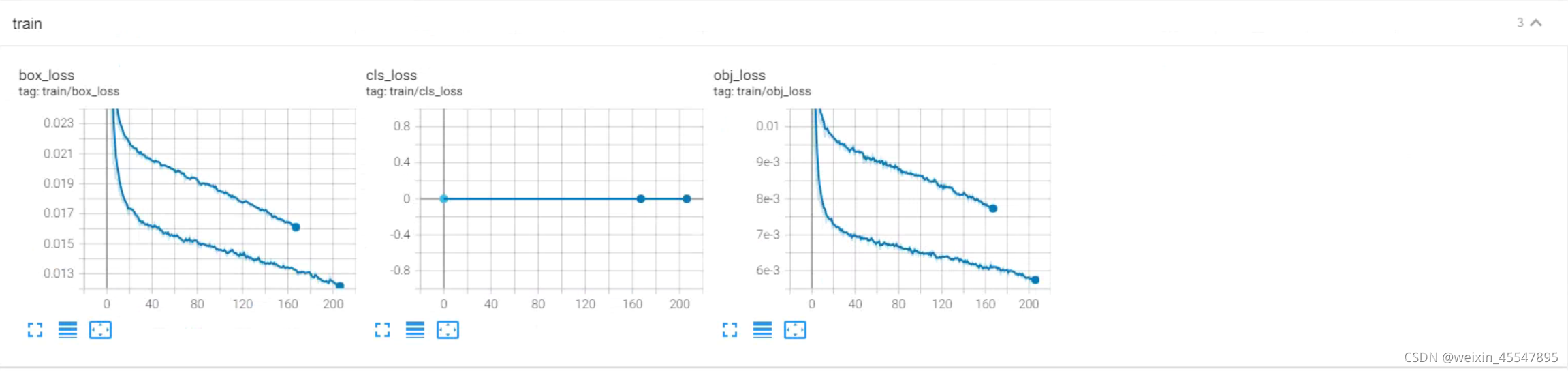

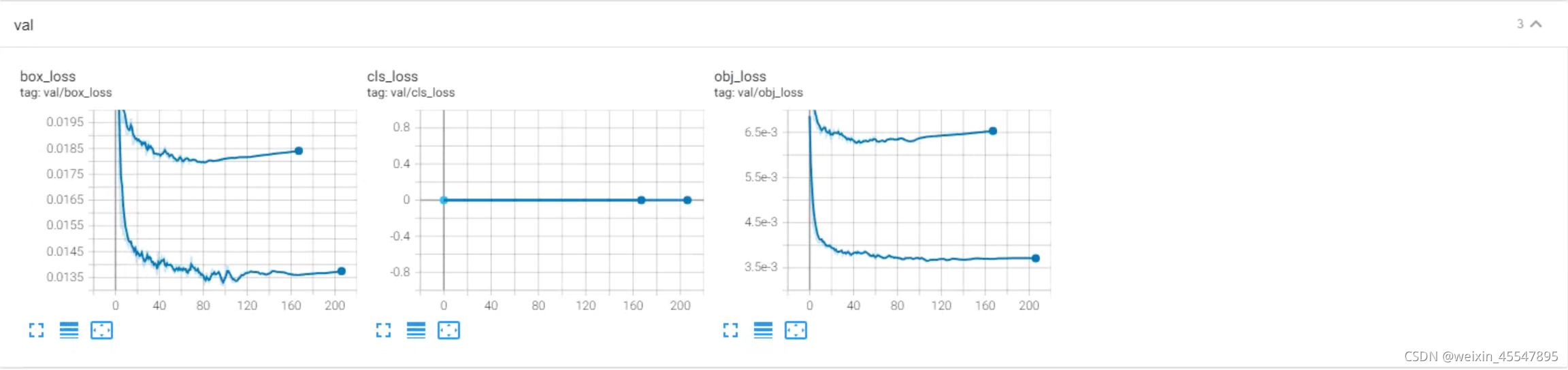

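3. Visualizing the training process

TensorBoard can visualize the training process. Once training starts, a runs folder containing the training logs is created in the yolov5 directory. View it with: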

tensorboard --logdir=runs

At this point, model training is complete.

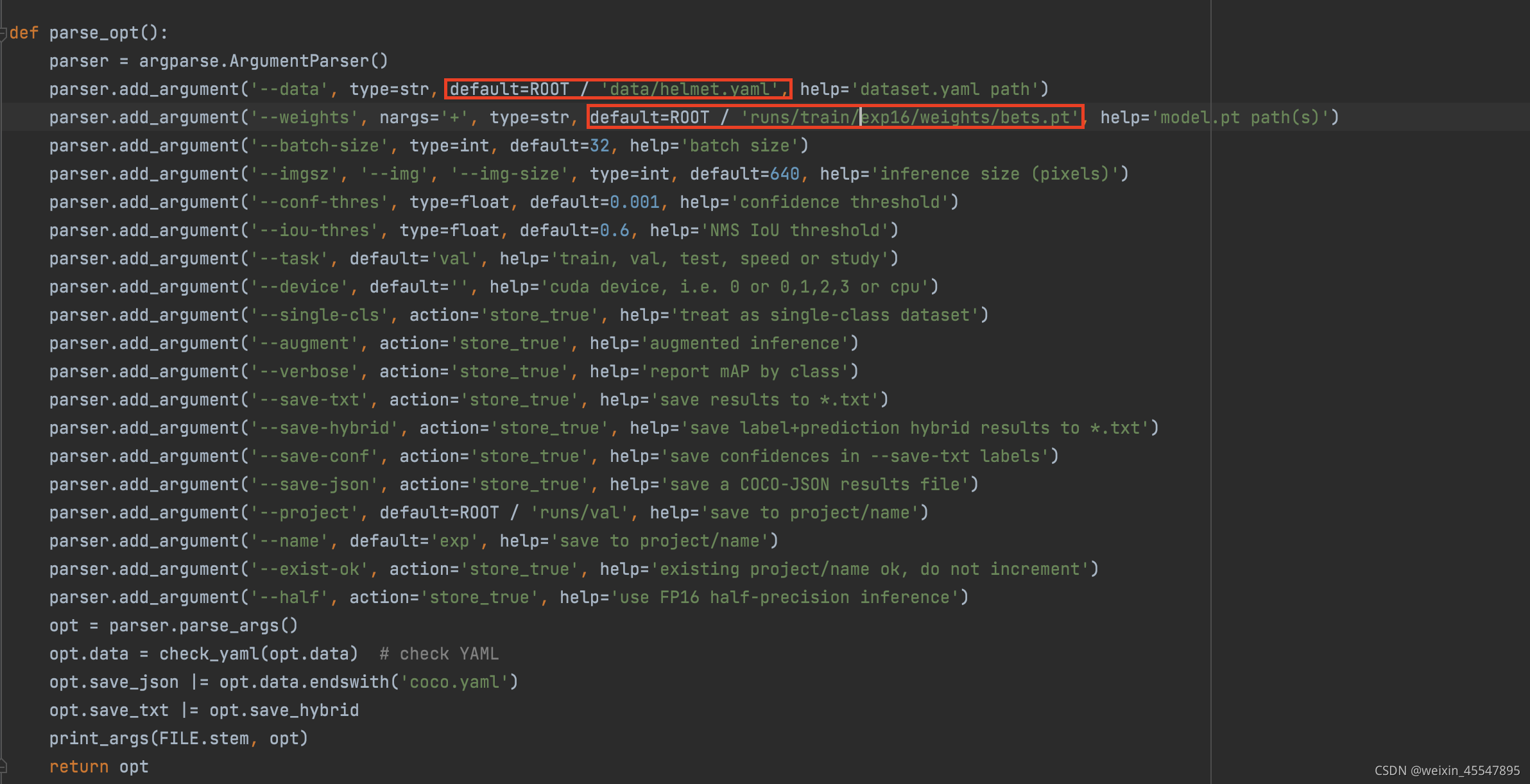

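IV. Model evaluation

Evaluating a model means measuring its performance on a labeled test or validation set; the most common metric in object detection is mAP. Specify the dataset configuration file in val.py.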
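mAP is built on matching predicted boxes to ground-truth boxes by their intersection over union (IoU); for mAP@0.5, a prediction counts as correct when its IoU with a ground-truth box of the same class is at least 0.5. The following sketch shows only that IoU step, for boxes given as (x0, y0, x1, y1); it is an illustration, not the code val.py runs.

```python
def iou(box_a, box_b):
    """Intersection over union of two boxes given as (x0, y0, x1, y1)."""
    ax0, ay0, ax1, ay1 = box_a
    bx0, by0, bx1, by1 = box_b
    inter_w = max(0.0, min(ax1, bx1) - max(ax0, bx0))
    inter_h = max(0.0, min(ay1, by1) - max(ay0, by0))
    inter = inter_w * inter_h
    union = (ax1 - ax0) * (ay1 - ay0) + (bx1 - bx0) * (by1 - by0) - inter
    return inter / union if union > 0 else 0.0


# Two 10x10 boxes overlapping by half: intersection 50, union 150
print(iou((0, 0, 10, 10), (5, 0, 15, 10)))
```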

Evaluate the model with the following command:

python val.py --data data/helmet.yaml --weights runs/train/exp16/weights/best.pt --augment

The evaluation results are as follows:

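V. Model inference

Run the model on unlabeled data. In detect.py, specify the path of the test images and of the model: for weights, use the trained model you are most satisfied with; for source, give the path of a folder that contains all the test images.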

python detect.py --weights runs/train/exp16/weights/best.pt --source data/images/ --device 0

When inference finishes, the images are written out with their bounding boxes drawn.

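VI. Model export

The helmet detector is to be deployed on Android, where ncnn performs inference. The model therefore has to be converted to ONNX first and then to the model format ncnn needs.

python export.py --weights runs/train/exp16/weights/best.pt --include onnx --img 640 --batch 1

The export must be run in train mode (in the YOLOv5 version used here, export.py offers a --train option for this); otherwise MemoryData layers appear in the converted model.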

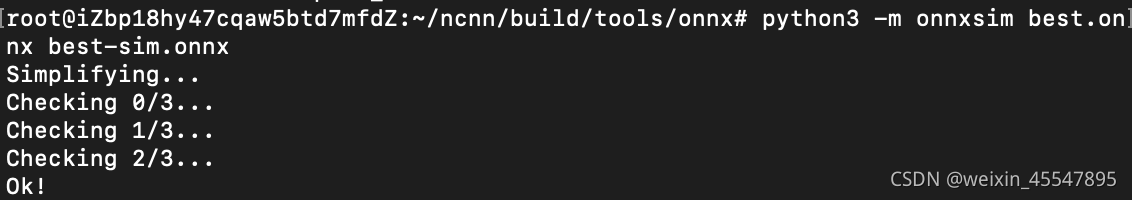

After the export, simplify the model with onnxsim:

python -m onnxsim best.onnx best-sim.onnx

Output like this means the simplification succeeded.

VII. Model conversion

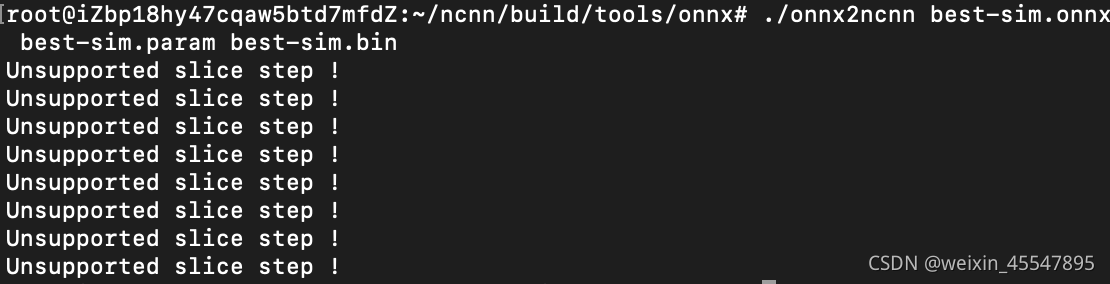

Convert the model with onnx2ncnn:

./onnx2ncnn best-sim.onnx best-sim.param best-sim.bin

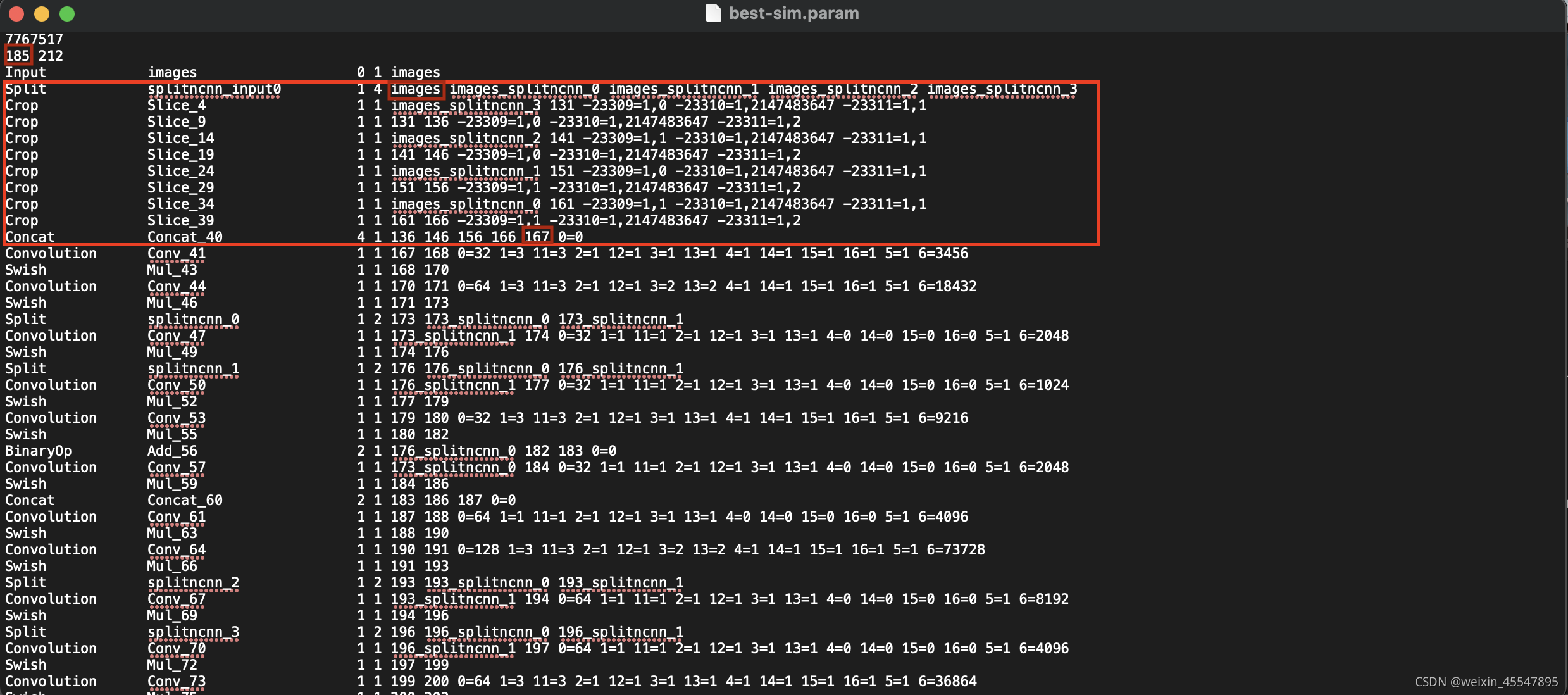

During the conversion to an ncnn model, many "Unsupported slice step" messages are printed. They come from converting the Focus module.

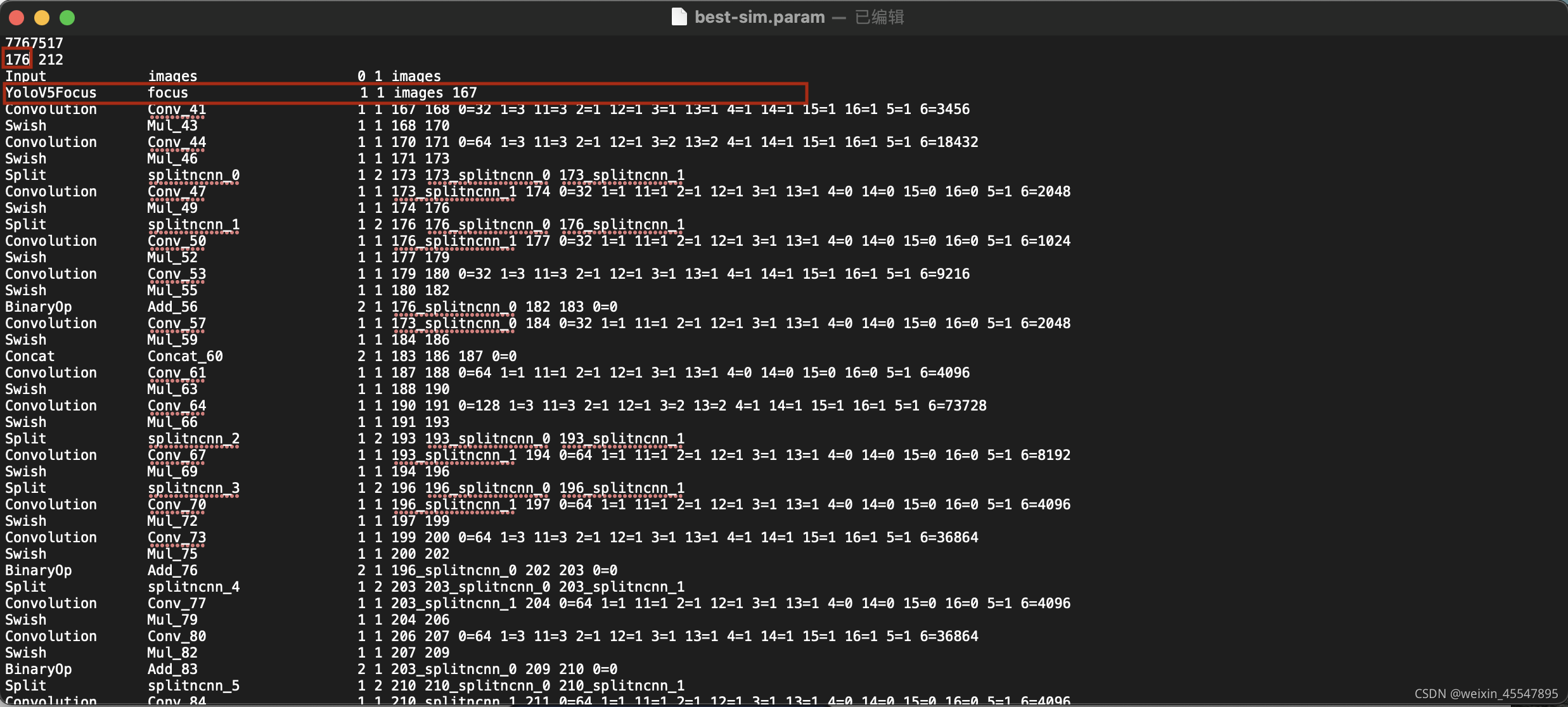

Open the param file and replace those layers with the custom YoloV5Focus layer.

After the modification:
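Replacing several layers with one changes the number of layers, and to my understanding the layer count on the second line of the param file (`layer_count blob_count`, right after the magic number 7767517) then has to be updated to match, while the blob count only needs to be large enough. The sketch below recounts the layers in a param text. `recount_layers` is an illustrative helper, and the layer names and blob names in the sample are made up.

```python
def recount_layers(param_text):
    """Rewrite the layer count in the header of an ncnn param text."""
    lines = param_text.strip().splitlines()
    magic, header = lines[0], lines[1]
    layers = [line for line in lines[2:] if line.strip()]  # one layer per line
    _, blob_count = header.split()                         # blob count is kept as-is
    return "\n".join([magic, f"{len(layers)} {blob_count}"] + layers) + "\n"


# Hypothetical param text whose header still holds the old layer count (5)
sample = (
    "7767517\n"
    "5 6\n"
    "Input images 0 1 images\n"
    "YoloV5Focus focus 1 1 images 207\n"
    "Convolution conv1 1 1 207 208\n"
)
print(recount_layers(sample))
```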

After the replacement, run the model through ncnnoptimize to reduce its size:

./ncnnoptimize best-sim.param best-sim.bin best-opt.param best-opt.bin

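Model conversion is now complete and the model can be deployed on Android. The Android code below loads the files as helmet-opt.param and helmet-opt.bin, so rename them accordingly.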

八.安卓端部署

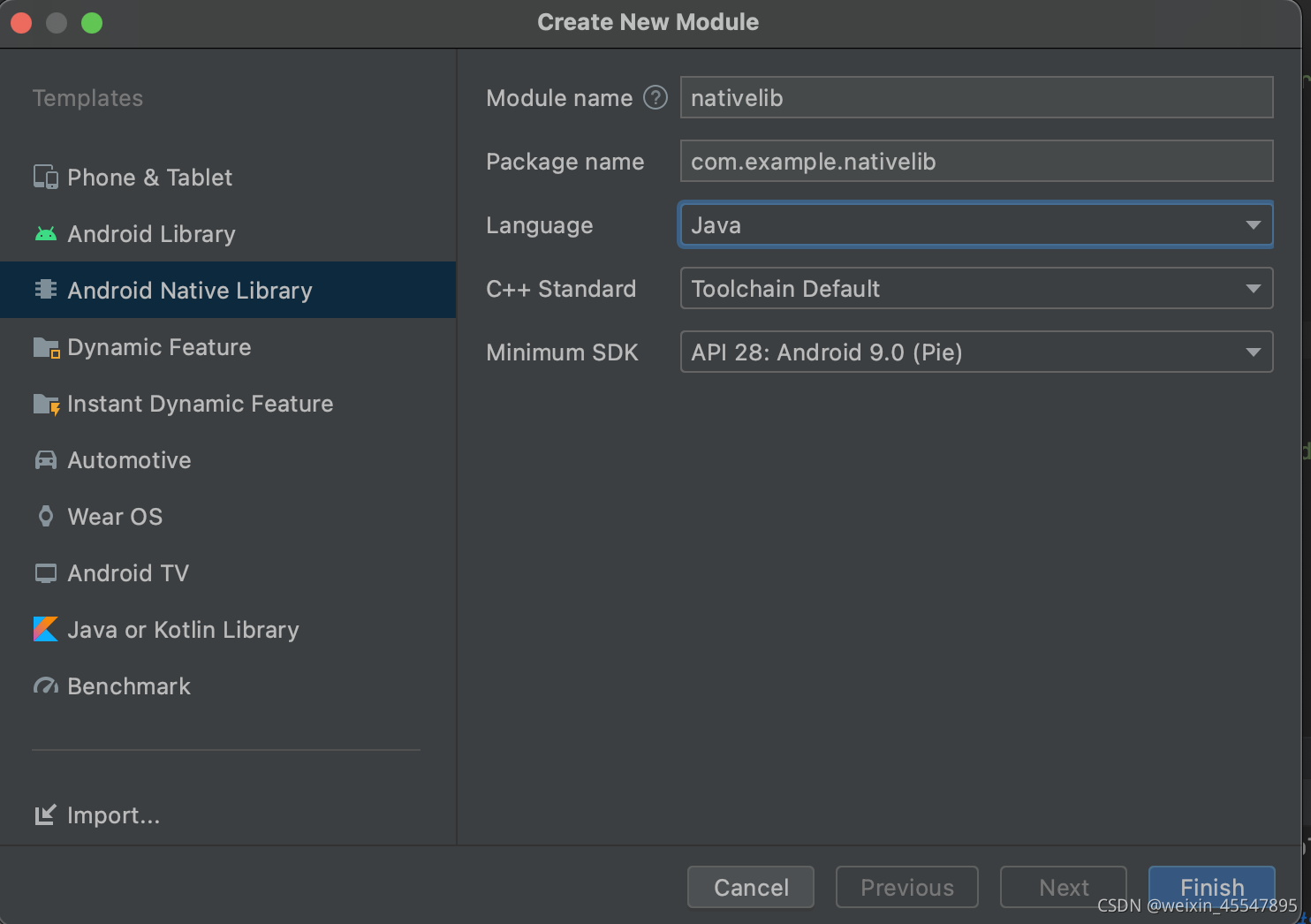

新建一个Android Native Library

根据ncnn部署的方式将ncnn部署好

以下代码为对应的java接口代码

    package com.luo.face; // must match the Java_com_luo_face_... function names in the C++ code

    import android.content.res.AssetManager;
    import android.graphics.Bitmap;

    public class YoloV5Ncnn
    {
        // volatile is required for double-checked locking to be safe
        private static volatile YoloV5Ncnn instance;

        private YoloV5Ncnn(){
            System.out.println("YoloV5Ncnn has loaded");
        }

        public static YoloV5Ncnn getInstance(){
            if(instance==null){
                synchronized (YoloV5Ncnn.class){
                    if(instance==null){
                        instance=new YoloV5Ncnn();
                    }
                }
            }
            return instance;
        }

        public native boolean Init(AssetManager mgr);

        // One detection: top-left corner, size, class label and confidence
        public class Obj
        {
            public float x;
            public float y;
            public float w;
            public float h;
            public String label;
            public float prob;
        }

        public native Obj[] Detect(Bitmap bitmap, boolean use_gpu);

        static {
            System.loadLibrary("native-lib");
        }
    }

The corresponding C++ code is:

    //
    // Created by 45467 on 2021/10/12.
    //
    #include <android/asset_manager_jni.h>
    #include <android/bitmap.h>
    #include <android/log.h>
    #include <jni.h>

    #include <algorithm> // std::min, std::max, std::swap
    #include <cfloat>    // FLT_MAX
    #include <cmath>     // exp, pow
    #include <string>
    #include <vector>

    // ncnn
    #include "layer.h"
    #include "net.h"
    #include "benchmark.h"

    static ncnn::UnlockedPoolAllocator g_blob_pool_allocator;
    static ncnn::PoolAllocator g_workspace_pool_allocator;
    static ncnn::Net yolov5;

    class YoloV5Focus : public ncnn::Layer
    {
    public:
        YoloV5Focus()
        {
            one_blob_only = true;
        }

        virtual int forward(const ncnn::Mat& bottom_blob, ncnn::Mat& top_blob, const ncnn::Option& opt) const
        {
            int w = bottom_blob.w;
            int h = bottom_blob.h;
            int channels = bottom_blob.c;

            int outw = w / 2;
            int outh = h / 2;
            int outc = channels * 4;

            top_blob.create(outw, outh, outc, 4u, 1, opt.blob_allocator);
            if (top_blob.empty())
                return -100;

            #pragma omp parallel for num_threads(opt.num_threads)
            for (int p = 0; p < outc; p++)
            {
                const float* ptr = bottom_blob.channel(p % channels).row((p / channels) % 2) + ((p / channels) / 2);
                float* outptr = top_blob.channel(p);

                for (int i = 0; i < outh; i++)
                {
                    for (int j = 0; j < outw; j++)
                    {
                        *outptr = *ptr;

                        outptr += 1;
                        ptr += 2;
                    }

                    ptr += w;
                }
            }

            return 0;
        }
    };

    DEFINE_LAYER_CREATOR(YoloV5Focus)

    struct Object
    {
        float x;
        float y;
        float w;
        float h;
        int label;
        float prob;
    };

    static inline float intersection_area(const Object& a, const Object& b)
    {
        if (a.x > b.x + b.w || a.x + a.w < b.x || a.y > b.y + b.h || a.y + a.h < b.y)
        {
            // no intersection
            return 0.f;
        }

        float inter_width = std::min(a.x + a.w, b.x + b.w) - std::max(a.x, b.x);
        float inter_height = std::min(a.y + a.h, b.y + b.h) - std::max(a.y, b.y);

        return inter_width * inter_height;
    }

    static void qsort_descent_inplace(std::vector<Object>& faceobjects, int left, int right)
    {
        int i = left;
        int j = right;
        float p = faceobjects[(left + right) / 2].prob;

        while (i <= j)
        {
            while (faceobjects[i].prob > p)
                i++;

            while (faceobjects[j].prob < p)
                j--;

            if (i <= j)
            {
                // swap
                std::swap(faceobjects[i], faceobjects[j]);

                i++;
                j--;
            }
        }

        #pragma omp parallel sections
        {
            #pragma omp section
            {
                if (left < j) qsort_descent_inplace(faceobjects, left, j);
            }
            #pragma omp section
            {
                if (i < right) qsort_descent_inplace(faceobjects, i, right);
            }
        }
    }

    static void qsort_descent_inplace(std::vector<Object>& faceobjects)
    {
        if (faceobjects.empty())
            return;

        qsort_descent_inplace(faceobjects, 0, faceobjects.size() - 1);
    }

    static void nms_sorted_bboxes(const std::vector<Object>& faceobjects, std::vector<int>& picked, float nms_threshold)
    {
        picked.clear();

        const int n = faceobjects.size();

        std::vector<float> areas(n);
        for (int i = 0; i < n; i++)
        {
            areas[i] = faceobjects[i].w * faceobjects[i].h;
        }

        for (int i = 0; i < n; i++)
        {
            const Object& a = faceobjects[i];

            int keep = 1;
            for (int j = 0; j < (int)picked.size(); j++)
            {
                const Object& b = faceobjects[picked[j]];

                // intersection over union
                float inter_area = intersection_area(a, b);
                float union_area = areas[i] + areas[picked[j]] - inter_area;
                // float IoU = inter_area / union_area
                if (inter_area / union_area > nms_threshold)
                    keep = 0;
            }

            if (keep)
                picked.push_back(i);
        }
    }

    static inline float sigmoid(float x)
    {
        return static_cast<float>(1.f / (1.f + exp(-x)));
    }

    static void generate_proposals(const ncnn::Mat& anchors, int stride, const ncnn::Mat& in_pad, const ncnn::Mat& feat_blob, float prob_threshold, std::vector<Object>& objects)
    {
        const int num_grid = feat_blob.h;

        int num_grid_x;
        int num_grid_y;
        if (in_pad.w > in_pad.h)
        {
            num_grid_x = in_pad.w / stride;
            num_grid_y = num_grid / num_grid_x;
        }
        else
        {
            num_grid_y = in_pad.h / stride;
            num_grid_x = num_grid / num_grid_y;
        }

        const int num_class = feat_blob.w - 5;

        const int num_anchors = anchors.w / 2;

        for (int q = 0; q < num_anchors; q++)
        {
            const float anchor_w = anchors[q * 2];
            const float anchor_h = anchors[q * 2 + 1];

            const ncnn::Mat feat = feat_blob.channel(q);

            for (int i = 0; i < num_grid_y; i++)
            {
                for (int j = 0; j < num_grid_x; j++)
                {
                    const float* featptr = feat.row(i * num_grid_x + j);

                    // find class index with max class score
                    int class_index = 0;
                    float class_score = -FLT_MAX;
                    for (int k = 0; k < num_class; k++)
                    {
                        float score = featptr[5 + k];
                        if (score > class_score)
                        {
                            class_index = k;
                            class_score = score;
                        }
                    }

                    float box_score = featptr[4];

                    float confidence = sigmoid(box_score) * sigmoid(class_score);

                    if (confidence >= prob_threshold)
                    {
                        // yolov5/models/yolo.py Detect forward
                        // y = x[i].sigmoid()
                        // y[..., 0:2] = (y[..., 0:2] * 2. - 0.5 + self.grid[i].to(x[i].device)) * self.stride[i] # xy
                        // y[..., 2:4] = (y[..., 2:4] * 2) ** 2 * self.anchor_grid[i] # wh

                        float dx = sigmoid(featptr[0]);
                        float dy = sigmoid(featptr[1]);
                        float dw = sigmoid(featptr[2]);
                        float dh = sigmoid(featptr[3]);

                        float pb_cx = (dx * 2.f - 0.5f + j) * stride;
                        float pb_cy = (dy * 2.f - 0.5f + i) * stride;

                        float pb_w = pow(dw * 2.f, 2) * anchor_w;
                        float pb_h = pow(dh * 2.f, 2) * anchor_h;

                        float x0 = pb_cx - pb_w * 0.5f;
                        float y0 = pb_cy - pb_h * 0.5f;
                        float x1 = pb_cx + pb_w * 0.5f;
                        float y1 = pb_cy + pb_h * 0.5f;

                        Object obj;
                        obj.x = x0;
                        obj.y = y0;
                        obj.w = x1 - x0;
                        obj.h = y1 - y0;
                        obj.label = class_index;
                        obj.prob = confidence;

                        objects.push_back(obj);
                    }
                }
            }
        }
    }

    extern "C" {

    // FIXME DeleteGlobalRef is missing for objCls
    static jclass objCls = NULL;
    static jmethodID constructortorId;
    static jfieldID xId;
    static jfieldID yId;
    static jfieldID wId;
    static jfieldID hId;
    static jfieldID labelId;
    static jfieldID probId;

    JNIEXPORT jint JNI_OnLoad(JavaVM* vm, void* reserved)
    {
        __android_log_print(ANDROID_LOG_DEBUG, "YoloV5Ncnn", "JNI_OnLoad");

        ncnn::create_gpu_instance();

        return JNI_VERSION_1_4;
    }

    JNIEXPORT void JNI_OnUnload(JavaVM* vm, void* reserved)
    {
        __android_log_print(ANDROID_LOG_DEBUG, "YoloV5Ncnn", "JNI_OnUnload");

        ncnn::destroy_gpu_instance();
    }

    // public native boolean Init(AssetManager mgr);
    JNIEXPORT jboolean JNICALL
    Java_com_luo_face_YoloV5Ncnn_Init(JNIEnv *env, jobject thiz, jobject assetManager) {
        ncnn::Option opt;
        opt.lightmode = true;
        opt.num_threads = 4;
        opt.blob_allocator = &g_blob_pool_allocator;
        opt.workspace_allocator = &g_workspace_pool_allocator;
        opt.use_packing_layout = true;

        // use vulkan compute
        if (ncnn::get_gpu_count() != 0)
            opt.use_vulkan_compute = true;

        AAssetManager* mgr = AAssetManager_fromJava(env, assetManager);

        yolov5.opt = opt;

        yolov5.register_custom_layer("YoloV5Focus", YoloV5Focus_layer_creator);

        // init param
        {
            int ret = yolov5.load_param(mgr, "helmet-opt.param");
            if (ret != 0)
            {
                __android_log_print(ANDROID_LOG_DEBUG, "YoloV5Ncnn", "load_param failed");
                return JNI_FALSE;
            }
        }

        // init bin
        {
            int ret = yolov5.load_model(mgr, "helmet-opt.bin");
            if (ret != 0)
            {
                __android_log_print(ANDROID_LOG_DEBUG, "YoloV5Ncnn", "load_model failed");
                return JNI_FALSE;
            }
        }

        // init jni glue
        jclass localObjCls = env->FindClass("com/luo/face/YoloV5Ncnn$Obj");
        objCls = reinterpret_cast<jclass>(env->NewGlobalRef(localObjCls));

        constructortorId = env->GetMethodID(objCls, "<init>", "(Lcom/luo/face/YoloV5Ncnn;)V");

        xId = env->GetFieldID(objCls, "x", "F");
        yId = env->GetFieldID(objCls, "y", "F");
        wId = env->GetFieldID(objCls, "w", "F");
        hId = env->GetFieldID(objCls, "h", "F");
        labelId = env->GetFieldID(objCls, "label", "Ljava/lang/String;");
        probId = env->GetFieldID(objCls, "prob", "F");

        return JNI_TRUE;
    }

    JNIEXPORT jobjectArray JNICALL
    Java_com_luo_face_YoloV5Ncnn_Detect(JNIEnv *env, jobject thiz, jobject bitmap, jboolean use_gpu) {
        // TODO: implement Detect()
        if (use_gpu == JNI_TRUE && ncnn::get_gpu_count() == 0)
        {
            return NULL;
            //return env->NewStringUTF("no vulkan capable gpu");
        }

        double start_time = ncnn::get_current_time();

        AndroidBitmapInfo info;
        AndroidBitmap_getInfo(env, bitmap, &info);
        const int width = info.width;
        const int height = info.height;
        if (info.format != ANDROID_BITMAP_FORMAT_RGBA_8888)
            return NULL;

        // ncnn from bitmap
        const int target_size = 640;

        // letterbox pad to multiple of 32
        int w = width;
        int h = height;
        float scale = 1.f;
        if (w > h)
        {
            scale = (float)target_size / w;
            w = target_size;
            h = h * scale;
        }
        else
        {
            scale = (float)target_size / h;
            h = target_size;
            w = w * scale;
        }

        ncnn::Mat in = ncnn::Mat::from_android_bitmap_resize(env, bitmap, ncnn::Mat::PIXEL_RGB, w, h);

        // pad to target_size rectangle
        // yolov5/utils/datasets.py letterbox
        int wpad = (w + 31) / 32 * 32 - w;
        int hpad = (h + 31) / 32 * 32 - h;
        ncnn::Mat in_pad;
        ncnn::copy_make_border(in, in_pad, hpad / 2, hpad - hpad / 2, wpad / 2, wpad - wpad / 2, ncnn::BORDER_CONSTANT, 114.f);

        // yolov5
        std::vector<Object> objects;
        {
            const float prob_threshold = 0.25f;
            const float nms_threshold = 0.45f;

            const float norm_vals[3] = {1 / 255.f, 1 / 255.f, 1 / 255.f};
            in_pad.substract_mean_normalize(0, norm_vals);

            ncnn::Extractor ex = yolov5.create_extractor();

            ex.set_vulkan_compute(use_gpu);

            ex.input("images", in_pad);

            std::vector<Object> proposals;

            // anchor setting from yolov5/models/yolov5s.yaml

            // stride 8
            {
                ncnn::Mat out;
                ex.extract("output", out);

                ncnn::Mat anchors(6);
                anchors[0] = 10.f;
                anchors[1] = 13.f;
                anchors[2] = 16.f;
                anchors[3] = 30.f;
                anchors[4] = 33.f;
                anchors[5] = 23.f;

                std::vector<Object> objects8;
                generate_proposals(anchors, 8, in_pad, out, prob_threshold, objects8);

                proposals.insert(proposals.end(), objects8.begin(), objects8.end());
            }

            // stride 16
            {
                ncnn::Mat out;
                ex.extract("427", out);

                ncnn::Mat anchors(6);
                anchors[0] = 30.f;
                anchors[1] = 61.f;
                anchors[2] = 62.f;
                anchors[3] = 45.f;
                anchors[4] = 59.f;
                anchors[5] = 119.f;

                std::vector<Object> objects16;
                generate_proposals(anchors, 16, in_pad, out, prob_threshold, objects16);

                proposals.insert(proposals.end(), objects16.begin(), objects16.end());
            }

            // stride 32
            {
                ncnn::Mat out;
                ex.extract("452", out);

                ncnn::Mat anchors(6);
                anchors[0] = 116.f;
                anchors[1] = 90.f;
                anchors[2] = 156.f;
                anchors[3] = 198.f;
                anchors[4] = 373.f;
                anchors[5] = 326.f;

                std::vector<Object> objects32;
                generate_proposals(anchors, 32, in_pad, out, prob_threshold, objects32);

                proposals.insert(proposals.end(), objects32.begin(), objects32.end());
            }

            // sort all proposals by score from highest to lowest
            qsort_descent_inplace(proposals);

            // apply nms with nms_threshold
            std::vector<int> picked;
            nms_sorted_bboxes(proposals, picked, nms_threshold);

            int count = picked.size();

            objects.resize(count);
            for (int i = 0; i < count; i++)
            {
                objects[i] = proposals[picked[i]];

                // adjust offset to original unpadded
                float x0 = (objects[i].x - (wpad / 2)) / scale;
                float y0 = (objects[i].y - (hpad / 2)) / scale;
                float x1 = (objects[i].x + objects[i].w - (wpad / 2)) / scale;
                float y1 = (objects[i].y + objects[i].h - (hpad / 2)) / scale;

                // clip
                x0 = std::max(std::min(x0, (float)(width - 1)), 0.f);
                y0 = std::max(std::min(y0, (float)(height - 1)), 0.f);
                x1 = std::max(std::min(x1, (float)(width - 1)), 0.f);
                y1 = std::max(std::min(y1, (float)(height - 1)), 0.f);

                objects[i].x = x0;
                objects[i].y = y0;
                objects[i].w = x1 - x0;
                objects[i].h = y1 - y0;
            }
        }

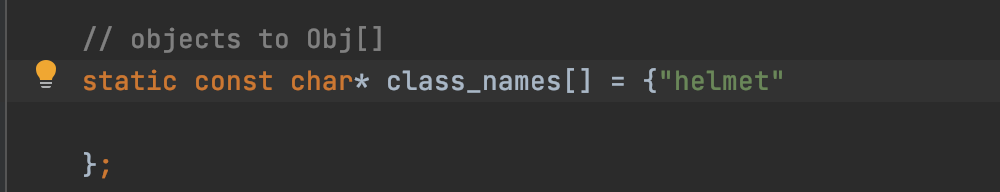

        // objects to Obj[]
        static const char* class_names[] = {"helmet"
        };

        jobjectArray jObjArray = env->NewObjectArray(objects.size(), objCls, NULL);

        for (size_t i=0; i<objects.size(); i++)
        {
            jobject jObj = env->NewObject(objCls, constructortorId, thiz);

            env->SetFloatField(jObj, xId, objects[i].x);
            env->SetFloatField(jObj, yId, objects[i].y);
            env->SetFloatField(jObj, wId, objects[i].w);
            env->SetFloatField(jObj, hId, objects[i].h);
            env->SetObjectField(jObj, labelId, env->NewStringUTF(class_names[objects[i].label]));
            env->SetFloatField(jObj, probId, objects[i].prob);

            env->SetObjectArrayElement(jObjArray, i, jObj);
        }

        double elasped = ncnn::get_current_time() - start_time;
        __android_log_print(ANDROID_LOG_DEBUG, "YoloV5Ncnn", "%.2fms detect", elasped);

        return jObjArray;
    }

    }
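The box decoding inside generate_proposals is easier to follow in isolation. The sketch below restates the formulas from the comments in that function (taken there from yolov5/models/yolo.py) in Python for a single grid cell and anchor. `decode_box` is an illustrative name, and the inputs in the example are made up.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def decode_box(raw, grid_x, grid_y, stride, anchor_w, anchor_h):
    """Turn raw (tx, ty, tw, th) outputs into (x0, y0, w, h) in padded-input pixels."""
    dx, dy, dw, dh = (sigmoid(v) for v in raw[:4])
    cx = (dx * 2.0 - 0.5 + grid_x) * stride  # box centre x
    cy = (dy * 2.0 - 0.5 + grid_y) * stride  # box centre y
    w = (dw * 2.0) ** 2 * anchor_w           # width scaled from the anchor
    h = (dh * 2.0) ** 2 * anchor_h           # height scaled from the anchor
    return cx - w / 2, cy - h / 2, w, h


# Raw outputs of 0 give sigmoid = 0.5: the box sits at the cell centre with the anchor's size
print(decode_box([0.0, 0.0, 0.0, 0.0], grid_x=3, grid_y=2, stride=8, anchor_w=10, anchor_h=13))
```

Because the sigmoid output lies between 0 and 1, the centre can move at most from -0.5 to 1.5 cells relative to its grid cell, and the size at most 4 times the anchor.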

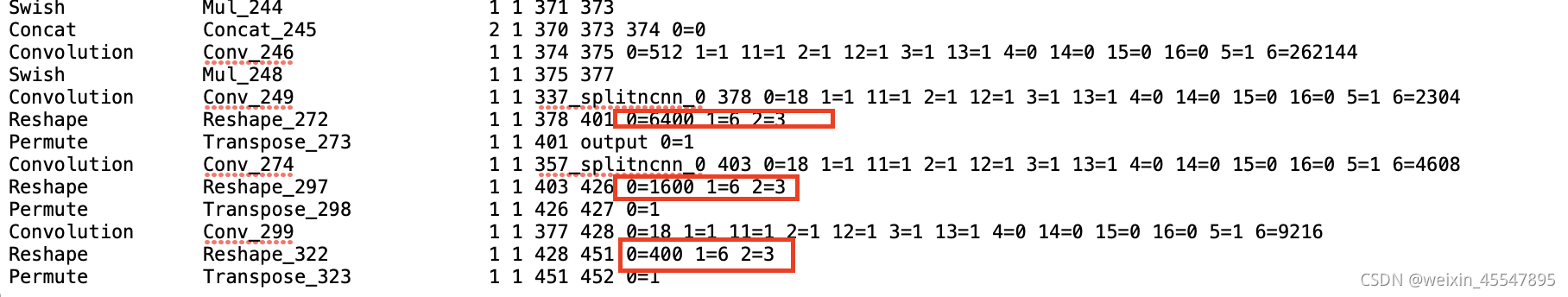

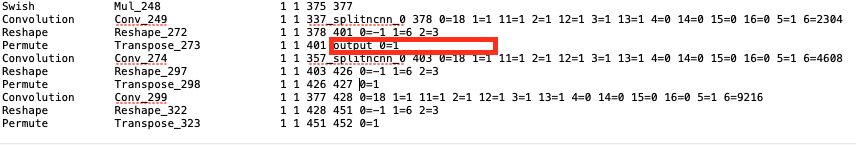

此时我们需要修改下param文件中的输出

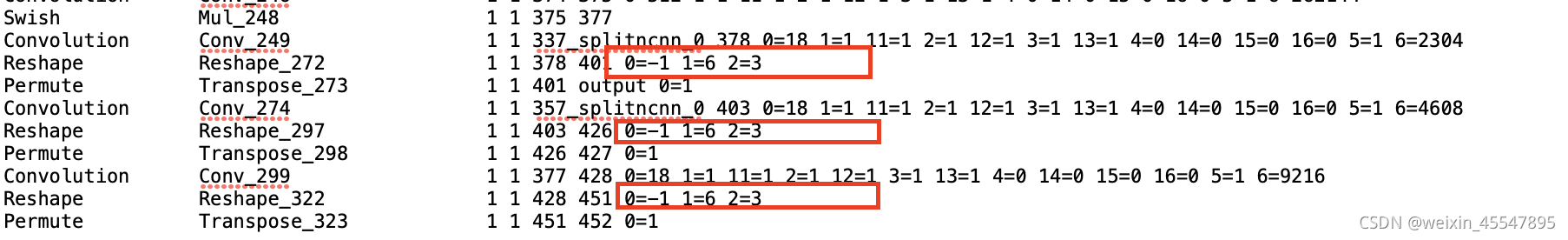

修改为:

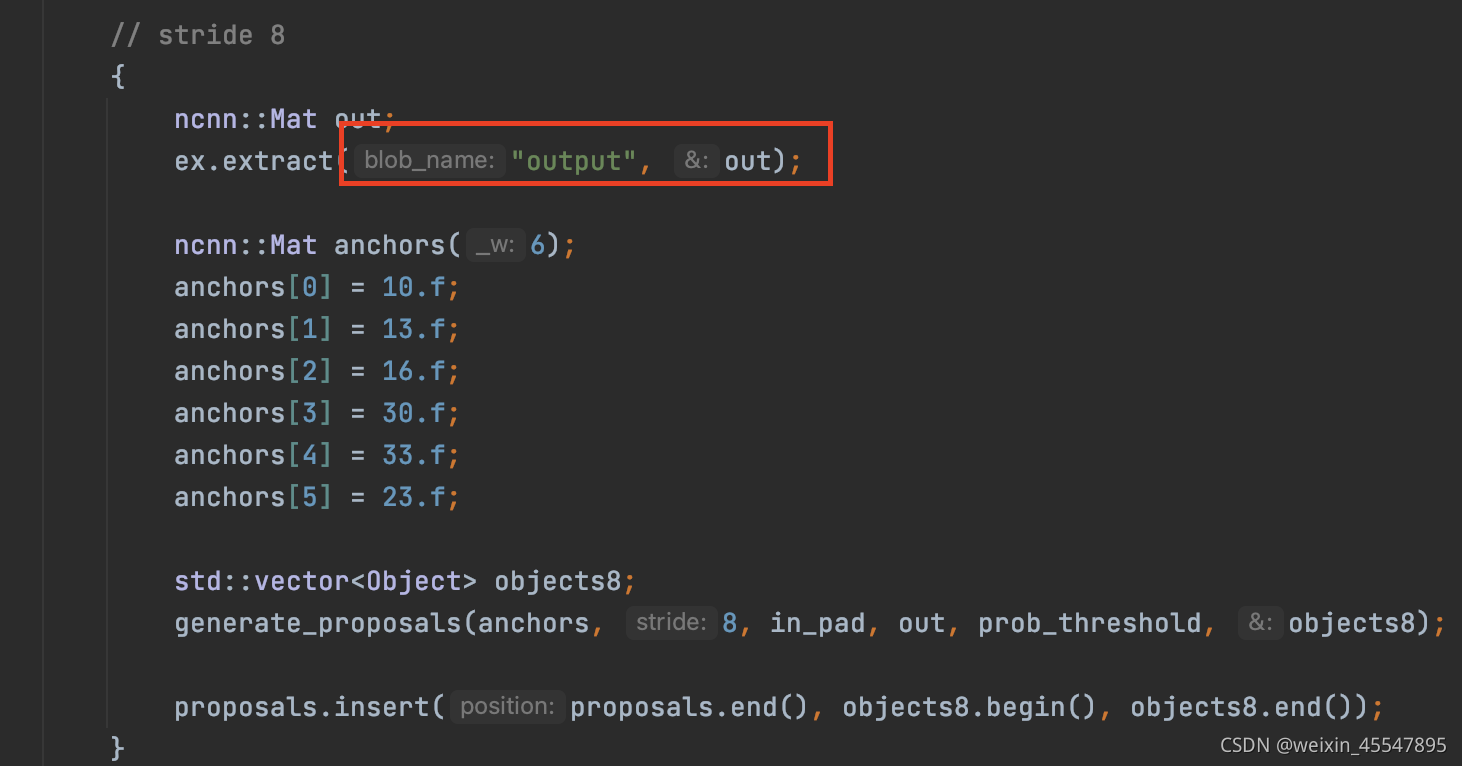

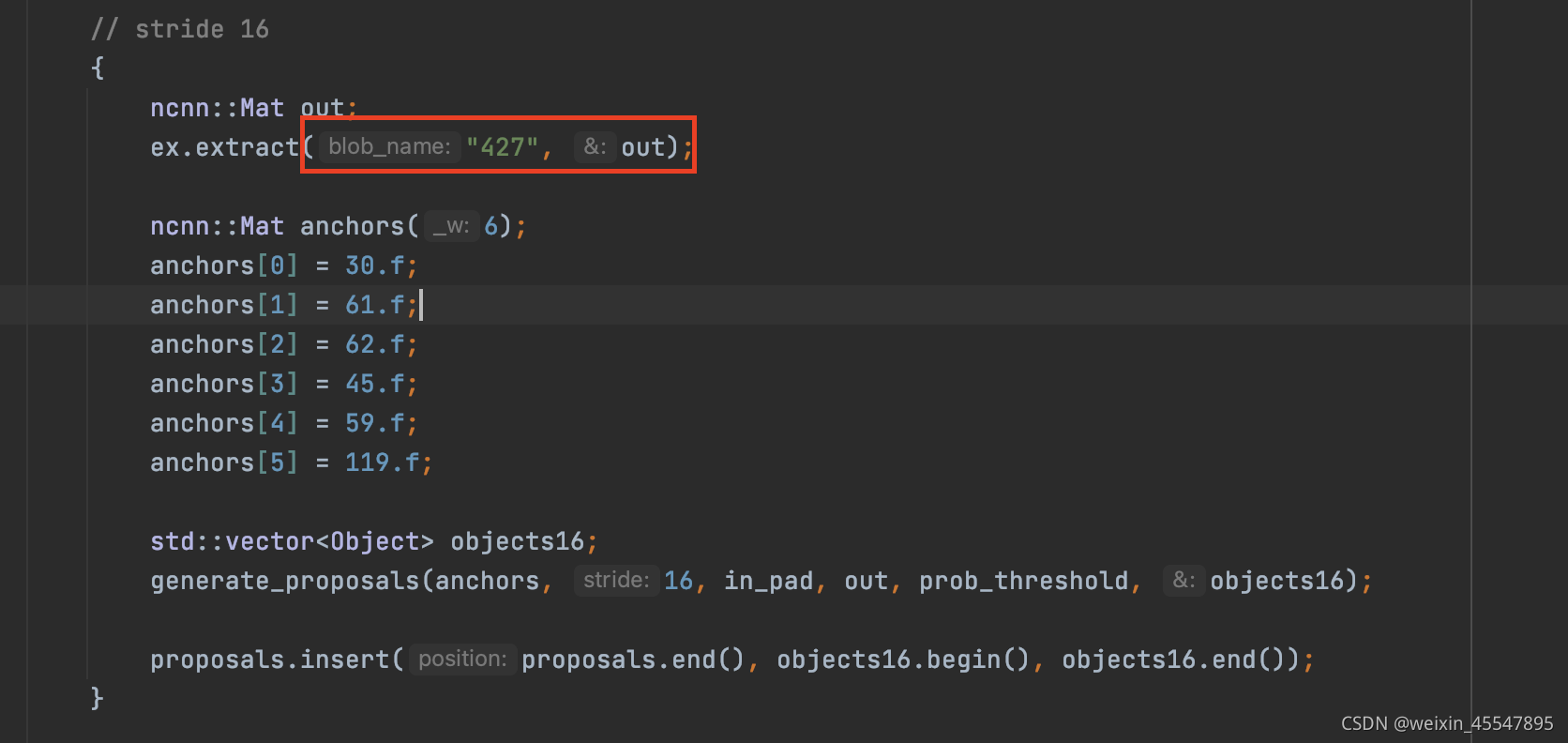

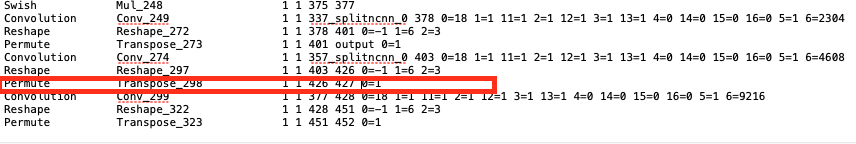

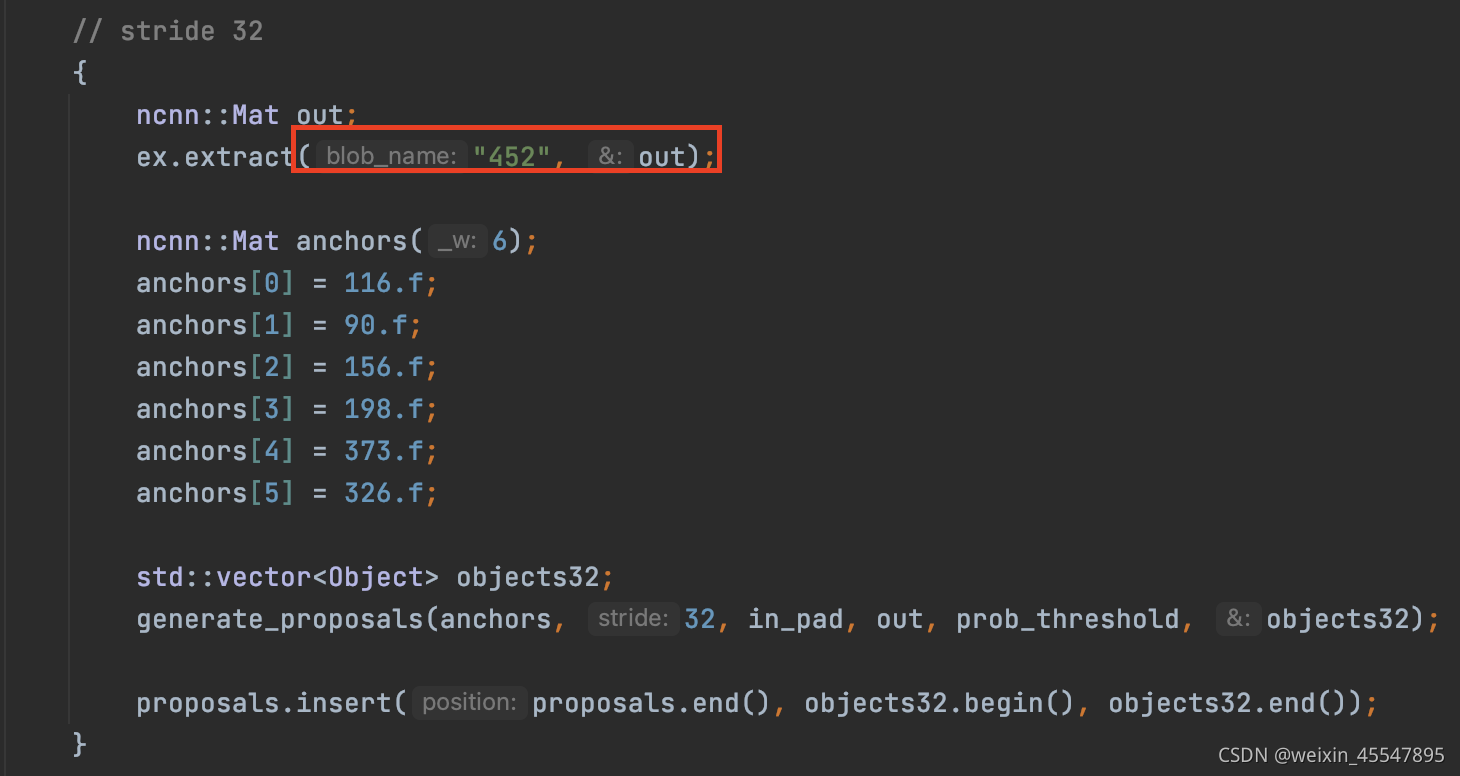

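The corresponding code must be changed to match: the blob names passed to ex.extract() for the three output layers (strides 8, 16 and 32) have to be the output names in your own param file.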
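If you are unsure which blob names to pass to ex.extract(), they can be read from the param file: the network outputs are the blobs that some layer produces and no layer consumes. The sketch below assumes the usual ncnn layer line layout (`type name input_count output_count inputs... outputs... params`). `find_output_blobs` is an illustrative helper, and the sample text is a made-up miniature network.

```python
def find_output_blobs(param_text):
    """Return blobs that are produced by a layer but never used as an input."""
    produced, consumed = [], set()
    for line in param_text.strip().splitlines()[2:]:  # skip magic number and counts
        parts = line.split()
        if len(parts) < 4:
            continue
        n_in, n_out = int(parts[2]), int(parts[3])
        consumed.update(parts[4:4 + n_in])
        produced.extend(parts[4 + n_in:4 + n_in + n_out])
    return [blob for blob in produced if blob not in consumed]


# Hypothetical param text with two output branches
sample = """7767517
5 6
Input images 0 1 images
Convolution conv0 1 1 images 100 0=8 1=3
Split split0 1 2 100 100_a 100_b
Permute p8 1 1 100_a output 0=1
Permute p16 1 1 100_b 427 0=1
"""
print(find_output_blobs(sample))
```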

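The class names also have to be changed (replace them with your own class names as needed).

Here there is only one class, helmet.

Finally, read out the confidence of the helmet detection.

With that, the helmet detector has been deployed on Android.

Thanks to classmates 章某煜 and 潘某怡 for helping to build the dataset.

The construction-site safety-helmet dataset now has more than ten thousand images; leave a comment if you need it.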