Note: the OpenStack Placement component must be deployed before the OpenStack Nova component.

【Deploying the OpenStack Placement component】

1. Create the database and the database user

[root@ct ~]# mysql -uroot -p

MariaDB [(none)]> CREATE DATABASE placement;

MariaDB [(none)]> GRANT ALL PRIVILEGES ON placement.* TO 'placement'@'localhost' IDENTIFIED BY 'PLACEMENT_DBPASS';

MariaDB [(none)]> GRANT ALL PRIVILEGES ON placement.* TO 'placement'@'%' IDENTIFIED BY 'PLACEMENT_DBPASS';

MariaDB [(none)]> flush privileges;

MariaDB [(none)]> exit;
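The statements above can also be generated from variables so that the database name and password are written in one place. This is a minimal sketch, assuming a hypothetical helper named gen_db_sql (it is not part of OpenStack or MariaDB); it only prints SQL, which you would review and then pipe into mysql -uroot -p yourself.

```shell
#!/bin/sh
# gen_db_sql DB USER PASS: print the CREATE/GRANT statements for one database.
# Hypothetical helper for illustration; it prints SQL and executes nothing.
gen_db_sql() {
    db=$1; user=$2; pass=$3
    echo "CREATE DATABASE IF NOT EXISTS ${db};"
    # One grant for local connections and one for any remote host
    for host in localhost %; do
        echo "GRANT ALL PRIVILEGES ON ${db}.* TO '${user}'@'${host}' IDENTIFIED BY '${pass}';"
    done
    echo "FLUSH PRIVILEGES;"
}

# Example: print the statements for the placement database
gen_db_sql placement placement PLACEMENT_DBPASS
```

The same helper would cover the nova, nova_api and nova_cell0 databases later on by changing the arguments.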

2. Create the Placement service user and API endpoints

● Create the placement user

[root@ct ~]# openstack user create --domain default --password PLACEMENT_PASS placement

● Grant the placement user the admin role on the service project

[root@ct ~]# openstack role add --project service --user placement admin

● Create a service named placement with the service type placement

[root@ct ~]# openstack service create --name placement --description "Placement API" placement

● Register the API endpoints for the placement service; the registration is stored in MySQL

[root@ct ~]# openstack endpoint create --region RegionOne placement public http://ct:8778

[root@ct ~]# openstack endpoint create --region RegionOne placement internal http://ct:8778

[root@ct ~]# openstack endpoint create --region RegionOne placement admin http://ct:8778
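The three endpoint commands differ only in the interface name, so they can be produced by a loop. A minimal sketch, assuming a hypothetical helper named endpoint_cmds; it prints the commands instead of running them, so nothing is registered until you execute the output on the controller.

```shell
#!/bin/sh
# endpoint_cmds SERVICE_TYPE URL: print one "openstack endpoint create"
# command per interface (public, internal, admin).
# Hypothetical helper for illustration; it prints and executes nothing.
endpoint_cmds() {
    svc=$1; url=$2
    for iface in public internal admin; do
        echo "openstack endpoint create --region RegionOne ${svc} ${iface} ${url}"
    done
}

# Example: the three placement endpoints used in this article
endpoint_cmds placement http://ct:8778
```

Printing first makes it easy to spot a wrong host name or port before anything is written to the Keystone catalog.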

● Install the placement service

[root@ct ~]# yum -y install openstack-placement-api

● Edit the placement configuration file

#Edit the configuration file; the commands below can be pasted in as-is

cp -a /etc/placement/placement.conf{,.bak}

grep -Ev '^$|#' /etc/placement/placement.conf.bak > /etc/placement/placement.conf

openstack-config --set /etc/placement/placement.conf placement_database connection mysql+pymysql://placement:PLACEMENT_DBPASS@ct/placement

openstack-config --set /etc/placement/placement.conf api auth_strategy keystone

openstack-config --set /etc/placement/placement.conf keystone_authtoken auth_url http://ct:5000/v3

openstack-config --set /etc/placement/placement.conf keystone_authtoken memcached_servers ct:11211

openstack-config --set /etc/placement/placement.conf keystone_authtoken auth_type password

openstack-config --set /etc/placement/placement.conf keystone_authtoken project_domain_name Default

openstack-config --set /etc/placement/placement.conf keystone_authtoken user_domain_name Default

openstack-config --set /etc/placement/placement.conf keystone_authtoken project_name service

openstack-config --set /etc/placement/placement.conf keystone_authtoken username placement

openstack-config --set /etc/placement/placement.conf keystone_authtoken password PLACEMENT_PASS

#View the placement configuration file

[root@ct ~]# cd /etc/placement/

[root@ct placement]# cat placement.conf

[DEFAULT]

[api]

auth_strategy = keystone

[cors]

[keystone_authtoken]

auth_url = http://ct:5000/v3 #Keystone address

memcached_servers = ct:11211 #session (token) data is cached in memcached

auth_type = password

project_domain_name = Default

user_domain_name = Default

project_name = service

username = placement

password = PLACEMENT_PASS

[oslo_policy]

[placement]

[placement_database]

connection = mysql+pymysql://placement:PLACEMENT_DBPASS@ct/placement

[profiler]
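To confirm that a single key landed in the right section without reading the whole file, a small awk-based reader can help. This is a sketch under stated assumptions: ini_get is a hypothetical helper, it only understands plain "key = value" lines, and the example runs against a throwaway file rather than the real /etc/placement/placement.conf.

```shell
#!/bin/sh
# ini_get FILE SECTION KEY: print the value of KEY inside [SECTION].
# Hypothetical helper for quick checks; handles "key = value" lines only.
ini_get() {
    awk -v section="$2" -v key="$3" '
        # A header line switches the "inside the wanted section" flag
        /^\[/ { in_section = ($0 == ("[" section "]")); next }
        in_section {
            split($0, kv, "=")
            k = kv[1]; gsub(/^[ \t]+|[ \t]+$/, "", k)
            if (k == key) {
                # Everything after the first "=" is the value
                v = substr($0, index($0, "=") + 1)
                gsub(/^[ \t]+|[ \t]+$/, "", v)
                print v
                exit
            }
        }
    ' "$1"
}

# Example against a throwaway copy of two sections from the listing above
tmp=$(mktemp)
printf '[api]\nauth_strategy = keystone\n[placement_database]\nconnection = mysql+pymysql://placement:PLACEMENT_DBPASS@ct/placement\n' > "$tmp"
ini_get "$tmp" api auth_strategy
ini_get "$tmp" placement_database connection
rm -f "$tmp"
```

On the controller you would pass /etc/placement/placement.conf as the first argument.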

● Populate the database

su -s /bin/sh -c "placement-manage db sync" placement

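● Edit the Apache configuration file 00-placement-api.conf (the virtual host configuration; created automatically when the placement service is installed)

#Virtual host configuration file

[root@ct ~]# cd /etc/httpd/conf.d

[root@ct conf.d]# vim 00-placement-api.conf #created automatically when placement is installed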

Listen 8778

<VirtualHost *:8778>

WSGIProcessGroup placement-api

WSGIApplicationGroup %{GLOBAL}

WSGIPassAuthorization On

WSGIDaemonProcess placement-api processes=3 threads=1 user=placement group=placement

WSGIScriptAlias / /usr/bin/placement-api

<IfVersion >= 2.4>

ErrorLogFormat "%M"

</IfVersion>

ErrorLog /var/log/placement/placement-api.log

#SSLEngine On

#SSLCertificateFile ...

#SSLCertificateKeyFile ...

</VirtualHost>

Alias /placement-api /usr/bin/placement-api

<Location /placement-api>

SetHandler wsgi-script

Options +ExecCGI

WSGIProcessGroup placement-api

WSGIApplicationGroup %{GLOBAL}

WSGIPassAuthorization On

</Location>

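#The packaged file has a bug: the block below must be added to allow access to the placement API, otherwise requests to it through Apache return 403. Append it to the end of the file.

<Directory /usr/bin>

<IfVersion >= 2.4>

Require all granted

</IfVersion>

#For Apache versions older than 2.4: allow Apache to serve from /usr/bin, otherwise /usr/bin/placement-api cannot be accessed

<IfVersion < 2.4>

Order allow,deny

Allow from all

</IfVersion>

</Directory>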

● Restart Apache

[root@ct conf.d]# systemctl restart httpd

● Test

① Test access with curl

[root@ct ~]# curl ct:8778

{

"versions": [{

"status": "CURRENT", "min_version": "1.0", "max_version": "1.36", "id": "v1.0", "links": [{

"href": "", "rel": "self"}]}]}

② Check that the port is listening (netstat or lsof)

[root@ct ~]# netstat -natp | grep 8778

tcp6 0 0 :::8778 :::* LISTEN 72994/httpd

③ Check the placement status

[root@ct ~]# placement-status upgrade check

+----------------------------------+

| Upgrade Check Results |

+----------------------------------+

| Check: Missing Root Provider IDs |

| Result: Success |

| Details: None |

+----------------------------------+

| Check: Incomplete Consumers |

| Result: Success |

| Details: None |

+----------------------------------+
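When this check is part of a deployment script, reading the table by eye is not practical. The sketch below assumes a hypothetical helper named all_checks_passed that reads the table text on standard input and succeeds only if every "Result:" row says Success; the sample input is the pair of result rows shown above.

```shell
#!/bin/sh
# all_checks_passed: read "upgrade check" table text on stdin; succeed only
# if at least one "Result:" row exists and every such row says Success.
# Hypothetical helper for illustration.
all_checks_passed() {
    awk '
        /Result:/ { total++; if ($0 !~ /Result: Success/) failed++ }
        END { if (total == 0 || failed > 0) exit 1 }
    '
}

# Example with the two checks from the output above
printf '| Check: Missing Root Provider IDs |\n| Result: Success |\n| Check: Incomplete Consumers |\n| Result: Success |\n' \
    | all_checks_passed && echo "all checks passed"
```

On the controller the input would come from the real command, for example: placement-status upgrade check | all_checks_passed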

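● Summary

Placement provides the placement-api WSGI script, which runs the service behind Apache, nginx, or any other WSGI-capable web server (nginx or Apache acts as the front end for the Python entry point).

Depending on the packaging solution used to deploy OpenStack, the WSGI script is located in /usr/bin or /usr/local/bin.

Placement was split out of nova into a separate component starting with the Stein (S) release. It tracks the available resources of each compute node and stores the resource inventory in MySQL. The nova scheduler calls the Placement service, which listens on port 8778.

Configuration files that need to be changed:

① placement.conf

What to change:

Keystone authentication (URL, HOST:PORT, domains, user name and password, and so on)

Database connection (location)

② 00-placement-api.conf

What to change:

Apache permissions and access control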

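【Deploying the OpenStack Nova component】

1. Where the nova components are deployed

【Controller node ct】

nova-api (the main nova service)

nova-scheduler (the nova scheduling service)

nova-conductor (the nova database service; provides database access)

nova-novncproxy (the nova VNC service; provides instance consoles)

【Compute nodes c1 and c2】

nova-compute (the nova compute service)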

2. Configure the Nova services on the controller node

● Create the nova databases and grant privileges

[root@ct ~]# mysql -uroot -p

MariaDB [(none)]> CREATE DATABASE nova_api;

MariaDB [(none)]> CREATE DATABASE nova;

MariaDB [(none)]> CREATE DATABASE nova_cell0;

MariaDB [(none)]> GRANT ALL PRIVILEGES ON nova_api.* TO 'nova'@'localhost' IDENTIFIED BY 'NOVA_DBPASS';

MariaDB [(none)]> GRANT ALL PRIVILEGES ON nova_api.* TO 'nova'@'%' IDENTIFIED BY 'NOVA_DBPASS';

MariaDB [(none)]> GRANT ALL PRIVILEGES ON nova.* TO 'nova'@'localhost' IDENTIFIED BY 'NOVA_DBPASS';

MariaDB [(none)]> GRANT ALL PRIVILEGES ON nova.* TO 'nova'@'%' IDENTIFIED BY 'NOVA_DBPASS';

MariaDB [(none)]> GRANT ALL PRIVILEGES ON nova_cell0.* TO 'nova'@'localhost' IDENTIFIED BY 'NOVA_DBPASS';

MariaDB [(none)]> GRANT ALL PRIVILEGES ON nova_cell0.* TO 'nova'@'%' IDENTIFIED BY 'NOVA_DBPASS';

MariaDB [(none)]> flush privileges;

MariaDB [(none)]> exit

● Create the Nova user and service

#Create the nova user

[root@ct ~]# openstack user create --domain default --password NOVA_PASS nova

#Add the nova user to the service project with the admin role

[root@ct ~]# openstack role add --project service --user nova admin

#Create the nova service

[root@ct ~]# openstack service create --name nova --description "OpenStack Compute" compute

#Register the endpoints for the nova service

[root@ct ~]# openstack endpoint create --region RegionOne compute public http://ct:8774/v2.1

[root@ct ~]# openstack endpoint create --region RegionOne compute internal http://ct:8774/v2.1

[root@ct ~]# openstack endpoint create --region RegionOne compute admin http://ct:8774/v2.1

● Install the nova components (nova-api, nova-conductor, nova-novncproxy, nova-scheduler)

[root@ct ~]# yum -y install openstack-nova-api openstack-nova-conductor openstack-nova-novncproxy openstack-nova-scheduler

● Edit the nova configuration file (nova.conf)

[root@ct ~]# cp -a /etc/nova/nova.conf{,.bak}

[root@ct ~]# grep -Ev '^$|#' /etc/nova/nova.conf.bak > /etc/nova/nova.conf
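Note that grep -Ev '^$|#' drops every line that contains # anywhere, not only comment lines. That works for the stock nova.conf, but a value containing # would be lost. The sketch below shows a stricter pattern that removes only blank lines and lines whose first non-blank character is #. The helper name strip_conf and the sample file contents are made up for illustration.

```shell
#!/bin/sh
# strip_conf FILE: print FILE without blank lines and full-line comments.
# Hypothetical helper for illustration.
strip_conf() {
    grep -Ev '^[[:space:]]*(#|$)' "$1"
}

# Throwaway sample: a comment, a blank line, a section header,
# a commented-out option, and a value that happens to contain "#"
sample=$(mktemp)
printf '# a comment\n\n[DEFAULT]\n#my_ip = <None>\npassword = pa#ss\n' > "$sample"

strip_conf "$sample"          # keeps [DEFAULT] and the password line
grep -Ev '^$|#' "$sample"     # the looser pattern keeps only [DEFAULT]
rm -f "$sample"
```

Either pattern gives the same result when no setting contains a # character, which is the case for the placeholder values used in this article.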

#Edit nova.conf

[root@ct ~]# openstack-config --set /etc/nova/nova.conf DEFAULT enabled_apis osapi_compute,metadata

[root@ct ~]# openstack-config --set /etc/nova/nova.conf DEFAULT my_ip 192.168.100.11 #change to the IP of ct (the internal IP, i.e. the VM network IP)

[root@ct ~]# openstack-config --set /etc/nova/nova.conf DEFAULT use_neutron true

[root@ct ~]# openstack-config --set /etc/nova/nova.conf DEFAULT firewall_driver nova.virt.firewall.NoopFirewallDriver

[root@ct ~]# openstack-config --set /etc/nova/nova.conf DEFAULT transport_url rabbit://openstack:RABBIT_PASS@ct

[root@ct ~]# openstack-config --set /etc/nova/nova.conf api_database connection mysql+pymysql://nova:NOVA_DBPASS@ct/nova_api

[root@ct ~]# openstack-config --set /etc/nova/nova.conf database connection mysql+pymysql://nova:NOVA_DBPASS@ct/nova

[root@ct ~]# openstack-config --set /etc/nova/nova.conf placement_database connection mysql+pymysql://placement:PLACEMENT_DBPASS@ct/placement

[root@ct ~]# openstack-config --set /etc/nova/nova.conf api auth_strategy keystone

[root@ct ~]# openstack-config --set /etc/nova/nova.conf keystone_authtoken auth_url http://ct:5000/v3

[root@ct ~]# openstack-config --set /etc/nova/nova.conf keystone_authtoken memcached_servers ct:11211

[root@ct ~]# openstack-config --set /etc/nova/nova.conf keystone_authtoken auth_type password

[root@ct ~]# openstack-config --set /etc/nova/nova.conf keystone_authtoken project_domain_name Default

[root@ct ~]# openstack-config --set /etc/nova/nova.conf keystone_authtoken user_domain_name Default

[root@ct ~]# openstack-config --set /etc/nova/nova.conf keystone_authtoken project_name service

[root@ct ~]# openstack-config --set /etc/nova/nova.conf keystone_authtoken username nova

[root@ct ~]# openstack-config --set /etc/nova/nova.conf keystone_authtoken password NOVA_PASS

[root@ct ~]# openstack-config --set /etc/nova/nova.conf vnc enabled true

[root@ct ~]# openstack-config --set /etc/nova/nova.conf vnc server_listen '$my_ip'

[root@ct ~]# openstack-config --set /etc/nova/nova.conf vnc server_proxyclient_address '$my_ip'

[root@ct ~]# openstack-config --set /etc/nova/nova.conf glance api_servers http://ct:9292

[root@ct ~]# openstack-config --set /etc/nova/nova.conf oslo_concurrency lock_path /var/lib/nova/tmp

[root@ct ~]# openstack-config --set /etc/nova/nova.conf placement region_name RegionOne

[root@ct ~]# openstack-config --set /etc/nova/nova.conf placement project_domain_name Default

[root@ct ~]# openstack-config --set /etc/nova/nova.conf placement project_name service

[root@ct ~]# openstack-config --set /etc/nova/nova.conf placement auth_type password

[root@ct ~]# openstack-config --set /etc/nova/nova.conf placement user_domain_name Default

[root@ct ~]# openstack-config --set /etc/nova/nova.conf placement auth_url http://ct:5000/v3

[root@ct ~]# openstack-config --set /etc/nova/nova.conf placement username placement

[root@ct ~]# openstack-config --set /etc/nova/nova.conf placement password PLACEMENT_PASS

#View nova.conf

[root@ct ~]# cat /etc/nova/nova.conf

[DEFAULT]

enabled_apis = osapi_compute,metadata #API types to enable

my_ip = 192.168.100.11 #local IP address

use_neutron = true #use neutron for networking, including IP address assignment

firewall_driver = nova.virt.firewall.NoopFirewallDriver

transport_url = rabbit://openstack:RABBIT_PASS@ct #RabbitMQ connection

[api]

auth_strategy = keystone #authenticate through keystone

[api_database]

connection = mysql+pymysql://nova:NOVA_DBPASS@ct/nova_api

[barbican]

[cache]

[cinder]

[compute]

[conductor]

[console]

[consoleauth]

[cors]

[database]

connection = mysql+pymysql://nova:NOVA_DBPASS@ct/nova

[devices]

[ephemeral_storage_encryption]

[filter_scheduler]

[glance]

api_servers = http://ct:9292

[guestfs]

[healthcheck]

[hyperv]

[ironic]

[key_manager]

[keystone]

[keystone_authtoken] #keystone authentication settings

auth_url = http://ct:5000/v3 #authenticate against this URL

memcached_servers = ct:11211 #memcached address:port

auth_type = password

project_domain_name = Default

user_domain_name = Default

project_name = service

username = nova

password = NOVA_PASS

[libvirt]

[metrics]

[mks]

[neutron]

[notifications]

[osapi_v21]

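[oslo_concurrency] #lock path

lock_path = /var/lib/nova/tmp #locks serialize operations during instance creation: a step that holds the lock must finish before the next one starts, so those steps run one at a time instead of in parallel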

[oslo_messaging_amqp]

[oslo_messaging_kafka]

[oslo_messaging_notifications]

[oslo_messaging_rabbit]

[oslo_middleware]

[oslo_policy]

[pci]

[placement]

region_name = RegionOne

project_domain_name = Default

project_name = service

auth_type = password

user_domain_name = Default

auth_url = http://ct:5000/v3

username = placement

password = PLACEMENT_PASS

[powervm]

[privsep]

[profiler]

[quota]

[rdp]

[remote_debug]

[scheduler]

[serial_console]

[service_user]

[spice]

[upgrade_levels]

[vault]

[vendordata_dynamic_auth]

[vmware]

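[vnc] #if this section is misconfigured, the instance console cannot be reached

enabled = true

server_listen = $my_ip #VNC listen address

server_proxyclient_address = $my_ip #proxy client address of the server, set to the local address on the management network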

[workarounds]

[wsgi]

[xenserver]

[xvp]

[zvm]

[placement_database]

connection = mysql+pymysql://placement:PLACEMENT_DBPASS@ct/placement

● Initialize the databases

Initialize the nova_api database

[root@ct ~]# su -s /bin/sh -c "nova-manage api_db sync" nova

● Register the cell0 database

Nova divides its resources into cells and assigns compute nodes to them; OpenStack uses cells to group compute nodes logically.

[root@ct ~]# su -s /bin/sh -c "nova-manage cell_v2 map_cell0" nova

#Create the cell1 cell

[root@ct ~]# su -s /bin/sh -c "nova-manage cell_v2 create_cell --name=cell1 --verbose" nova

#Initialize the nova database; /var/log/nova/nova-manage.log shows whether initialization succeeded

[root@ct ~]# su -s /bin/sh -c "nova-manage db sync" nova

#Verify that cell0 and cell1 are registered

[root@ct ~]# su -s /bin/sh -c "nova-manage cell_v2 list_cells" nova

● Start the Nova services

[root@ct ~]# systemctl enable openstack-nova-api.service openstack-nova-scheduler.service openstack-nova-conductor.service openstack-nova-novncproxy.service

[root@ct ~]# systemctl start openstack-nova-api.service openstack-nova-scheduler.service openstack-nova-conductor.service openstack-nova-novncproxy.service

● Check the nova service ports

[root@ct ~]# netstat -tnlup|egrep '8774|8775'

[root@ct ~]# curl http://ct:8774

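【Configure the Nova service on the compute nodes c1 and c2】

● Install the nova-compute component

yum -y install openstack-nova-compute

● Edit the configuration file

#Edit the Nova configuration file on the compute nodes (c1 and c2 use the same configuration; only the IP differs)

cp -a /etc/nova/nova.conf{,.bak}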

grep -Ev '^$|#' /etc/nova/nova.conf.bak > /etc/nova/nova.conf

openstack-config --set /etc/nova/nova.conf DEFAULT enabled_apis osapi_compute,metadata

openstack-config --set /etc/nova/nova.conf DEFAULT transport_url rabbit://openstack:RABBIT_PASS@ct

openstack-config --set /etc/nova/nova.conf DEFAULT my_ip 192.168.100.12 #change to the internal (VM network) IP of the node being configured

openstack-config --set /etc/nova/nova.conf DEFAULT use_neutron true

openstack-config --set /etc/nova/nova.conf DEFAULT firewall_driver nova.virt.firewall.NoopFirewallDriver

openstack-config --set /etc/nova/nova.conf api auth_strategy keystone

openstack-config --set /etc/nova/nova.conf keystone_authtoken auth_url http://ct:5000/v3

openstack-config --set /etc/nova/nova.conf keystone_authtoken memcached_servers ct:11211

openstack-config --set /etc/nova/nova.conf keystone_authtoken auth_type password

openstack-config --set /etc/nova/nova.conf keystone_authtoken project_domain_name Default

openstack-config --set /etc/nova/nova.conf keystone_authtoken user_domain_name Default

openstack-config --set /etc/nova/nova.conf keystone_authtoken project_name service

openstack-config --set /etc/nova/nova.conf keystone_authtoken username nova

openstack-config --set /etc/nova/nova.conf keystone_authtoken password NOVA_PASS

openstack-config --set /etc/nova/nova.conf vnc enabled true

openstack-config --set /etc/nova/nova.conf vnc server_listen 0.0.0.0

openstack-config --set /etc/nova/nova.conf vnc server_proxyclient_address '$my_ip'

openstack-config --set /etc/nova/nova.conf vnc novncproxy_base_url http://192.168.100.11:6080/vnc_auto.html

openstack-config --set /etc/nova/nova.conf glance api_servers http://ct:9292

openstack-config --set /etc/nova/nova.conf oslo_concurrency lock_path /var/lib/nova/tmp

openstack-config --set /etc/nova/nova.conf placement region_name RegionOne

openstack-config --set /etc/nova/nova.conf placement project_domain_name Default

openstack-config --set /etc/nova/nova.conf placement project_name service

openstack-config --set /etc/nova/nova.conf placement auth_type password

openstack-config --set /etc/nova/nova.conf placement user_domain_name Default

openstack-config --set /etc/nova/nova.conf placement auth_url http://ct:5000/v3

openstack-config --set /etc/nova/nova.conf placement username placement

openstack-config --set /etc/nova/nova.conf placement password PLACEMENT_PASS

openstack-config --set /etc/nova/nova.conf libvirt virt_type qemu
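The last command sets virt_type to qemu, which suits compute nodes without hardware virtualization support (for example nodes that are themselves virtual machines). A common way to decide is to look for the vmx (Intel) or svm (AMD) CPU flags and use kvm when they are present. The sketch below assumes a hypothetical helper named pick_virt_type; it only prints the command that would apply the detected value.

```shell
#!/bin/sh
# pick_virt_type [CPUINFO_FILE]: print "kvm" if the CPU flags include vmx
# or svm, otherwise "qemu". Defaults to /proc/cpuinfo.
# Hypothetical helper for illustration.
pick_virt_type() {
    cpuinfo=${1:-/proc/cpuinfo}
    if grep -Eq '(vmx|svm)' "$cpuinfo" 2>/dev/null; then
        echo kvm
    else
        echo qemu
    fi
}

# Example: print (not run) the command with the detected value
echo "openstack-config --set /etc/nova/nova.conf libvirt virt_type $(pick_virt_type)"
```

If the flags are missing, qemu is the safe choice; instances then run with software emulation and are noticeably slower.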

#The resulting configuration file:

[root@c1 ~]# cd /etc/nova/

[root@c1 nova]# cat nova.conf

[DEFAULT]

enabled_apis = osapi_compute,metadata

transport_url = rabbit://openstack:RABBIT_PASS@ct

my_ip = 192.168.100.12

use_neutron = true

firewall_driver = nova.virt.firewall.NoopFirewallDriver

[api]

auth_strategy = keystone

[api_database]

[barbican]

[cache]

[cinder]

[compute]

[conductor]

[console]

[consoleauth]

[cors]

[database]

[devices]

[ephemeral_storage_encryption]

[filter_scheduler]

[glance]

api_servers = http://ct:9292

[guestfs]

[healthcheck]

[hyperv]

[ironic]

[key_manager]

[keystone]

[keystone_authtoken]

auth_url = http://ct:5000/v3

memcached_servers = ct:11211

auth_type = password

project_domain_name = Default

user_domain_name = Default

project_name = service

username = nova

password = NOVA_PASS

[libvirt]

virt_type = qemu

[metrics]

[mks]

[neutron]

[notifications]

[osapi_v21]

[oslo_concurrency]

lock_path = /var/lib/nova/tmp

[oslo_messaging_amqp]

[oslo_messaging_kafka]

[oslo_messaging_notifications]

[oslo_messaging_rabbit]

[oslo_middleware]

[oslo_policy]

[pci]

[placement]

region_name = RegionOne

project_domain_name = Default

project_name = service

auth_type = password

user_domain_name = Default

auth_url = http://ct:5000/v3

username = placement

password = PLACEMENT_PASS

[powervm]

[privsep]

[profiler]

[quota]

[rdp]

[remote_debug]

[scheduler]

[serial_console]

[service_user]

[spice]

[upgrade_levels]

[vault]

[vendordata_dynamic_auth]

[vmware]

[vnc]

enabled = true

server_listen = 0.0.0.0

server_proxyclient_address = $my_ip

novncproxy_base_url = http://192.168.100.11:6080/vnc_auto.html #the IP address must be entered by hand here; otherwise the instance console cannot be reached from the dashboard after deployment

[workarounds]

[wsgi]

[xenserver]

[xvp]

[zvm]

● Start the services

systemctl enable libvirtd.service openstack-nova-compute.service

systemctl start libvirtd.service openstack-nova-compute.service

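【Compute node c2】Same as c1 (except for the IP address)

【Operations on the controller node】

● Check that the compute nodes have registered with the controller through the message queue; run this on the controller node (ct)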

[root@ct ~]# openstack compute service list --service nova-compute

● Scan for available compute nodes. Discovered nodes are mapped into a cell, after which instances can be created in that cell; in effect OpenStack groups compute nodes by assigning them to cells.

[root@ct ~]# su -s /bin/sh -c "nova-manage cell_v2 discover_hosts --verbose" nova

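● By default this scan has to be run on the controller every time a compute node is added. To avoid that, set a discovery interval in the controller's nova.conf:

[root@ct ~]# vim /etc/nova/nova.conf

[scheduler]

discover_hosts_in_cells_interval = 300 #scan every 300 seconds

[root@ct ~]# systemctl restart openstack-nova-api.service openstack-nova-scheduler.service

● Verify the compute services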

#Check that all nova services are healthy and that the compute services have registered

[root@ct ~]# openstack compute service list
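With several compute nodes the service table gets long, and a filter that prints only the services that are not up is easier to read. The sketch below assumes a hypothetical helper named down_services that reads "Binary Host State" rows on standard input; the sample rows are made up to show one failing node.

```shell
#!/bin/sh
# down_services: read "Binary Host State" rows on stdin and print the rows
# whose state is not "up". Exit status is non-zero if any were found.
# Hypothetical helper for illustration.
down_services() {
    awk '$3 != "up" { print; bad = 1 } END { exit bad }'
}

# Example with made-up rows: c2 is reported as down
printf 'nova-scheduler ct up\nnova-conductor ct up\nnova-compute c1 up\nnova-compute c2 down\n' \
    | down_services || echo "some services are down"
```

On the controller the rows can be produced with: openstack compute service list -f value -c Binary -c Host -c State | down_services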

#Check the API endpoints of each component

[root@ct ~]# openstack catalog list

#Check that the image list can be retrieved

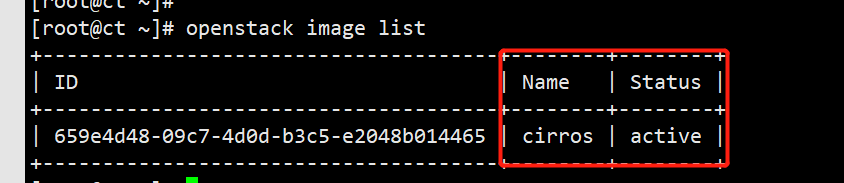

[root@ct ~]# openstack image list

#Check that the cells API and the placement API are working; if either one fails, instances cannot be created later

[root@ct ~]# nova-status upgrade check

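● Summary

Nova is split between the controller node and the compute nodes.

The core function of Nova is resource scheduling. Its configuration file therefore has to cover: where authentication lives (URL, endpoint), which services it calls, registration, and supporting settings. Almost every parameter in the file falls within that scope.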