wordcountReduce.java

```java
package MaperReduce;

import java.io.IOException;

import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Reducer;

// Reduce phase
/*
 * The four generic type parameters:
 * KEYIN:    type of K2
 * VALUEIN:  type of V2
 *
 * KEYOUT:   type of K3
 * VALUEOUT: type of V3
 */
public class wordcountReduce extends Reducer<Text, LongWritable, Text, LongWritable> {

    /*
     * Parameters:
     * key:     the new K2 (one distinct word after the shuffle)
     * values:  the new V2 (all the values emitted for that word)
     * context: the context object
     */
    @Override
    protected void reduce(Text key, Iterable<LongWritable> values,
            Reducer<Text, LongWritable, Text, LongWritable>.Context context) throws IOException, InterruptedException {
        long count = 0;
        // Iterate over the collection and add up the numbers to get V3
        for (LongWritable value : values) {
            count += value.get();
        }
        // Write K3 and V3 to the context
        context.write(key, new LongWritable(count));
    }
}
```
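To make the "new K2 / new V2" wording concrete, here is a plain-Java sketch (no Hadoop dependency; the class and method names are hypothetical) of what the reducer receives: the shuffle groups all (K2, V2) pairs by key, with keys in sorted order, and each group is then summed the same way `wordcountReduce.reduce()` does. It only mimics the data flow in memory; Hadoop's real shuffle partitions, spills and merge-sorts data across machines.

```java
import java.util.AbstractMap;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Plain-Java simulation (no Hadoop): the shuffle groups (K2, V2) pairs by key,
// then reduce sums each group like wordcountReduce.reduce().
class ShuffleReduceSketch {

    // Hypothetical helper that builds one (K2, V2) pair.
    static Map.Entry<String, Long> pair(String key, long value) {
        return new AbstractMap.SimpleEntry<String, Long>(key, value);
    }

    // Shuffle: collect every V2 for the same K2; TreeMap keeps keys sorted.
    static Map<String, List<Long>> shuffle(List<Map.Entry<String, Long>> mapOutput) {
        Map<String, List<Long>> grouped = new TreeMap<>();
        for (Map.Entry<String, Long> kv : mapOutput) {
            grouped.computeIfAbsent(kv.getKey(), k -> new ArrayList<>()).add(kv.getValue());
        }
        return grouped;
    }

    // Reduce: add up all values of one key to get V3.
    static long reduce(String key, Iterable<Long> values) {
        long count = 0;
        for (long value : values) {
            count += value;
        }
        return count;
    }

    public static void main(String[] args) {
        List<Map.Entry<String, Long>> mapOutput =
                Arrays.asList(pair("hello", 1), pair("world", 1), pair("hello", 1));
        for (Map.Entry<String, List<Long>> group : shuffle(mapOutput).entrySet()) {
            System.out.println(group.getKey() + "\t" + reduce(group.getKey(), group.getValue()));
        }
    }
}
```

With the sample pairs in `main`, the reducer sees `hello` with two values and `world` with one, and prints the counts 2 and 1 in sorted key order.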

wrodcountMaper.java

```java
package MaperReduce;

import java.io.IOException;

import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Mapper;

// Map phase
/*
 * The four generic type parameters:
 * KEYIN:    type of K1
 * VALUEIN:  type of V1
 *
 * KEYOUT:   type of K2
 * VALUEOUT: type of V2
 */
public class wrodcountMaper extends Mapper<LongWritable, Text, Text, LongWritable> {

    // The map method converts K1 and V1 into K2 and V2
    /*
     * Parameters:
     * key:     K1, the byte offset of the line within the file
     * value:   V1, the text of one line (containing the words to count)
     * context: the context object
     */
    @Override
    protected void map(LongWritable key, Text value, Mapper<LongWritable, Text, Text, LongWritable>.Context context)
            throws IOException, InterruptedException {
        Text text = new Text();
        LongWritable longWritable = new LongWritable();
        // 1. Split one line of text into words (comma-separated)
        String[] split = value.toString().split(",");
        // 2. Iterate over the array and assemble K2 and V2
        for (String word : split) {
            // Alternatively, create new objects each time: context.write(new Text(word), new LongWritable(1));
            // 3. Write K2 and V2 to the context
            text.set(word);
            longWritable.set(1);
            context.write(text, longWritable);
        }
    }
}
```
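The comments above describe K1 as the line offset and V1 as the line text. The following plain-Java sketch (hypothetical names, no Hadoop dependency) imitates that: it splits file content into records the way `TextInputFormat` presents them, with K1 as the byte offset where each line starts, and then applies the same comma-split logic as `wrodcountMaper.map()`. It assumes UTF-8 text with `\n` line endings; Hadoop's real line reader also copes with `\r\n`.

```java
import java.nio.charset.StandardCharsets;
import java.util.AbstractMap;
import java.util.ArrayList;
import java.util.List;
import java.util.Map;

// Plain-Java simulation (no Hadoop) of the map side of word count.
class MapSideSketch {

    // TextInputFormat-style records: (K1 = byte offset of line start, V1 = line).
    // Assumes "\n" line endings and UTF-8 encoding.
    static List<Map.Entry<Long, String>> readRecords(String content) {
        List<Map.Entry<Long, String>> records = new ArrayList<>();
        long offset = 0;
        for (String line : content.split("\n")) {
            records.add(new AbstractMap.SimpleEntry<Long, String>(offset, line));
            // Advance by the line's byte length plus one byte for the "\n".
            offset += line.getBytes(StandardCharsets.UTF_8).length + 1;
        }
        return records;
    }

    // Same logic as wrodcountMaper.map(): emit (K2, V2) = (word, 1) per field.
    static List<Map.Entry<String, Long>> map(long k1, String v1) {
        List<Map.Entry<String, Long>> out = new ArrayList<>();
        for (String word : v1.split(",")) {
            out.add(new AbstractMap.SimpleEntry<String, Long>(word, 1L));
        }
        return out;
    }

    public static void main(String[] args) {
        String file = "hello,world\nhello,hadoop";
        for (Map.Entry<Long, String> record : readRecords(file)) {
            System.out.println(record.getKey() + " -> " + map(record.getKey(), record.getValue()));
        }
    }
}
```

For the sample content, the two records get offsets 0 and 12, because `hello,world` is 11 bytes plus one byte for the newline.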

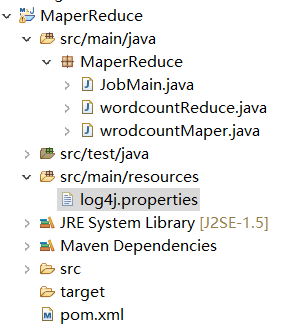

JobMain.java (the driver class)

```java
package MaperReduce;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.conf.Configured;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.lib.input.TextInputFormat;
import org.apache.hadoop.mapreduce.lib.output.TextOutputFormat;
import org.apache.hadoop.util.Tool;
import org.apache.hadoop.util.ToolRunner;

public class JobMain extends Configured implements Tool {

    // This method defines and runs one job
    @Override
    public int run(String[] args) throws Exception {
        // 1. Create a Job object
        Job job = Job.getInstance(super.getConf(), "wordcount");
        // Lets Hadoop locate the jar containing these classes when running on the cluster
        job.setJarByClass(JobMain.class);

        // 2. Configure the job (8 steps)
        // Step 1: specify how the input is read and where it is read from
        job.setInputFormatClass(TextInputFormat.class);
        TextInputFormat.addInputPath(job, new Path("hdfs://192.168.2.101:9000/wordcount"));

        // Step 2: specify how the Map phase processes data
        job.setMapperClass(wrodcountMaper.class);
        // Type of K2 from the map phase
        job.setMapOutputKeyClass(Text.class);
        // Type of V2 from the map phase
        job.setMapOutputValueClass(LongWritable.class);

        // Shuffle phase: steps 3 to 6 use the defaults

        // Step 7: specify how the Reduce phase processes data, and its output types
        job.setReducerClass(wordcountReduce.class);
        // Type of K3
        job.setOutputKeyClass(Text.class);
        // Type of V3
        job.setOutputValueClass(LongWritable.class);

        // Step 8: specify the output format
        job.setOutputFormatClass(TextOutputFormat.class);
        // Output path (must not exist before the job runs)
        TextOutputFormat.setOutputPath(job, new Path("hdfs://192.168.2.101:9000/wordcount_out"));

        // Wait for the job to finish
        boolean b1 = job.waitForCompletion(true);
        // Return 0 if b1 is true, otherwise 1
        return b1 ? 0 : 1;
    }

    public static void main(String[] args) throws Exception {
        Configuration configuration = new Configuration();
        // Launch the job
        int run = ToolRunner.run(configuration, new JobMain(), args);
        System.exit(run);
    }
}
```
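The input and output paths in the driver need the `hdfs://host:port/path` form. Hadoop's `Path` stores its location as a `java.net.URI`, and in URI syntax a host and port are only recognized after `//`. The small check below (class name hypothetical) uses `java.net.URI` alone to show the difference; it does not contact HDFS.

```java
import java.net.URI;

// Shows how java.net.URI splits the two spellings of the HDFS path.
class HdfsUriCheck {

    static String describe(String path) {
        URI uri = URI.create(path);
        return "scheme=" + uri.getScheme()
                + ", host=" + uri.getHost()
                + ", port=" + uri.getPort()
                + ", path=" + uri.getPath();
    }

    public static void main(String[] args) {
        // Without "//": parsed as an opaque URI, so no host, port or path.
        System.out.println(describe("hdfs:192.168.2.101:9000/wordcount"));
        // With "//": host 192.168.2.101, port 9000, path /wordcount.
        System.out.println(describe("hdfs://192.168.2.101:9000/wordcount"));
    }
}
```

In the first form Java reports no host, port `-1` and no path, so there is no NameNode address to connect to; the second form carries the host, port and path separately.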

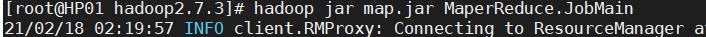

Run modes:

Cluster mode:

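1. The MapReduce program is submitted to the YARN cluster and distributed to many nodes for concurrent execution.

2. The input data and the output results should be on HDFS.

3. To submit to the cluster, package the program as a jar, upload it, and then launch it on the cluster with the `hadoop` command: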

```
hadoop jar hadoop_hdfs_operate-1.0-SNAPSHOT.jar MaperReduce.JobMain
```

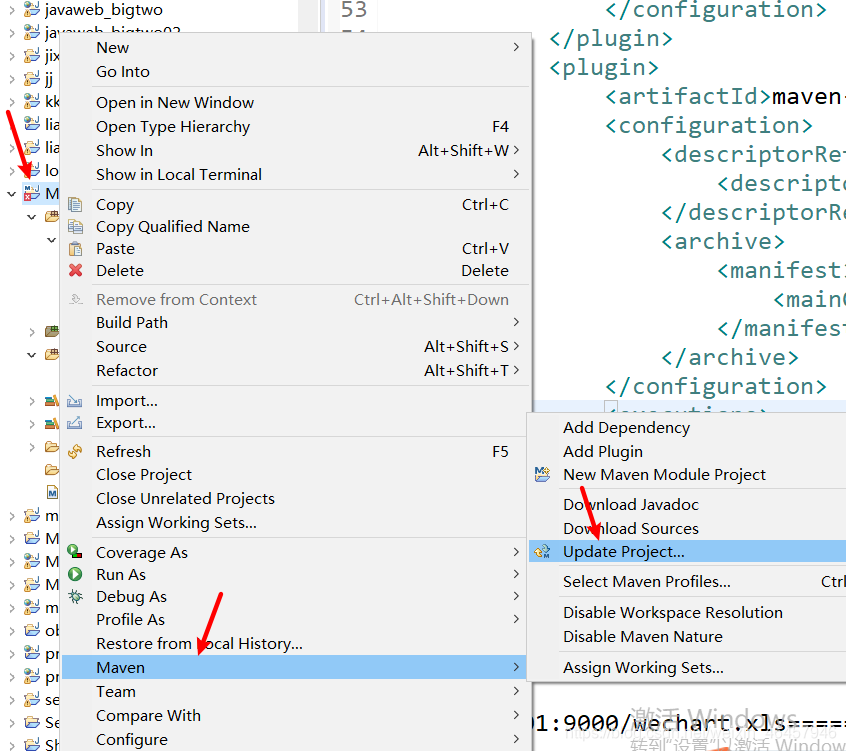

To build the jar with Maven, add the following packaging plugins to pom.xml:

<build>

<plugins>

<plugin>

<artifactId>maven-compiler-plugin</artifactId>

<version>2.3.2</version>

<configuration>

<source>1.8</source>

<target>1.8</target>

</configuration>

</plugin>

<plugin>

<artifactId>maven-assembly-plugin </artifactId>

<configuration>

<descriptorRefs>

<descriptorRef>jar-with-dependencies</descriptorRef>

</descriptorRefs>

<archive>

<manifest>

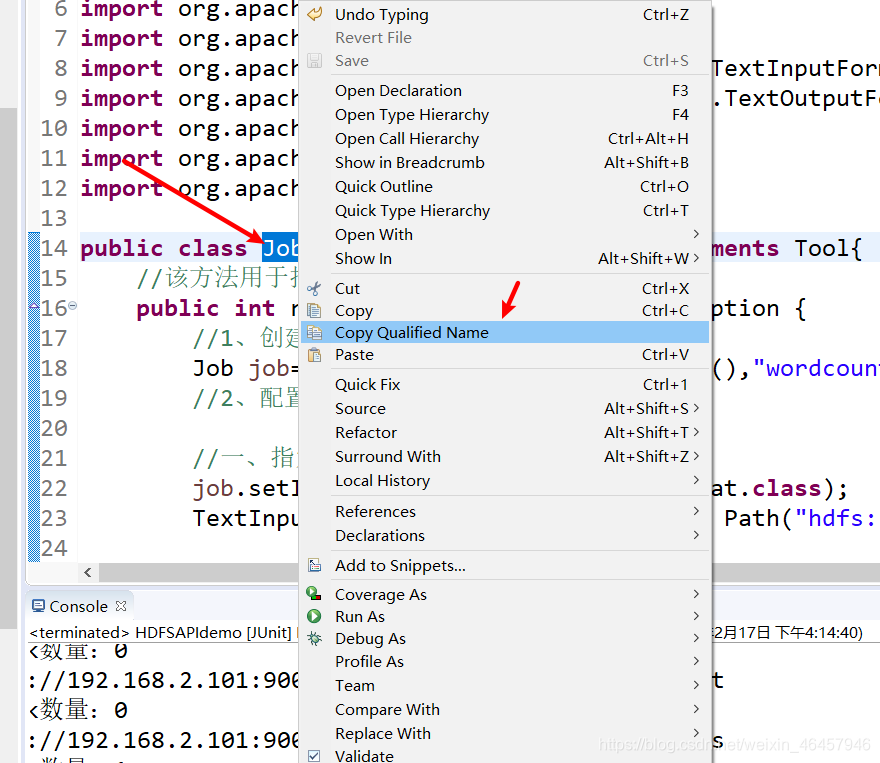

<mainClass>MaperReduce.JobMain</mainClass>

</manifest>

</archive>

</configuration>

<executions>

<execution>

<id>make-assembly</id>

<phase>package</phase>

<goals>

<goal>single</goal>

</goals>

</execution>

</executions>

</plugin>

</plugins>

</build>

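The part in the red box above is what you need to change: set it to your own main class path, i.e. the fully qualified name of your driver class.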
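After packaging, you can confirm that `<mainClass>` actually ended up in the jar. `maven-assembly-plugin` writes it to `META-INF/MANIFEST.MF` as the `Main-Class` attribute, and `hadoop jar` runs that class when the attribute is present, passing any further command-line arguments to the program. A minimal check using only `java.util.jar` (class name hypothetical):

```java
import java.io.IOException;
import java.util.jar.Attributes;
import java.util.jar.JarFile;
import java.util.jar.Manifest;

// Reads the Main-Class attribute from a jar's manifest.
class ManifestCheck {

    static String mainClassOf(String jarPath) throws IOException {
        try (JarFile jar = new JarFile(jarPath)) {
            Manifest manifest = jar.getManifest();
            if (manifest == null) {
                return null; // the jar has no META-INF/MANIFEST.MF
            }
            return manifest.getMainAttributes().getValue(Attributes.Name.MAIN_CLASS);
        }
    }

    public static void main(String[] args) throws IOException {
        // First argument: path to the built *-jar-with-dependencies.jar
        System.out.println(mainClassOf(args[0]));
    }
}
```

Run it as `java ManifestCheck path/to/your-jar-with-dependencies.jar`; for this project it should print `MaperReduce.JobMain`.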

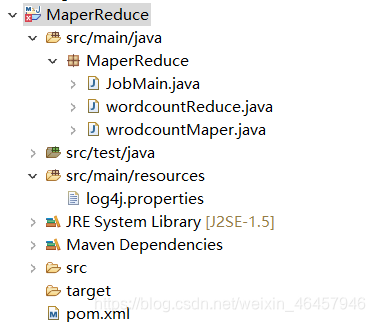

There is a red X here; don't worry, it goes away after a moment.

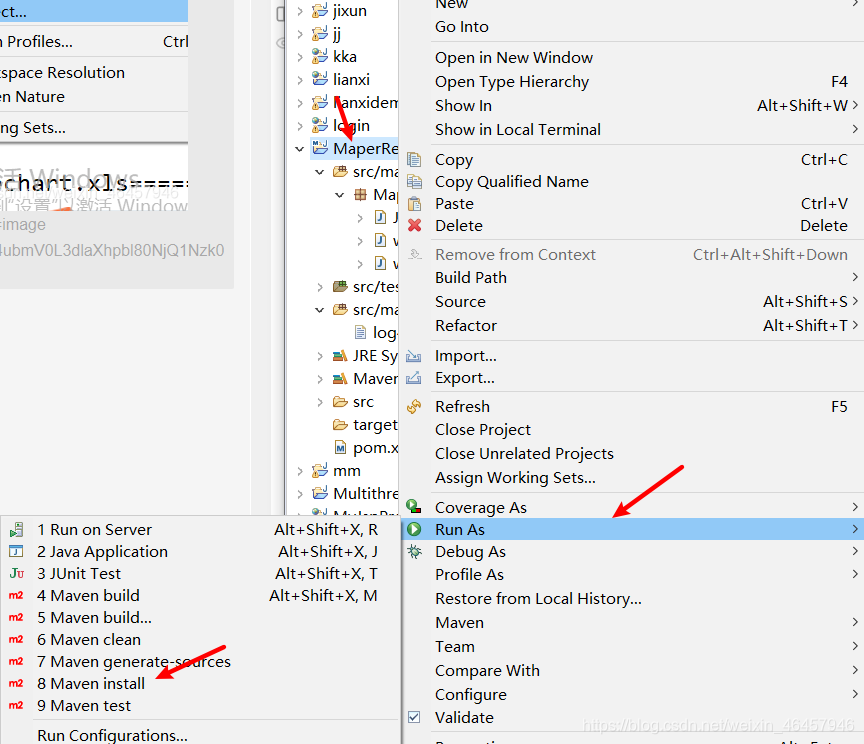

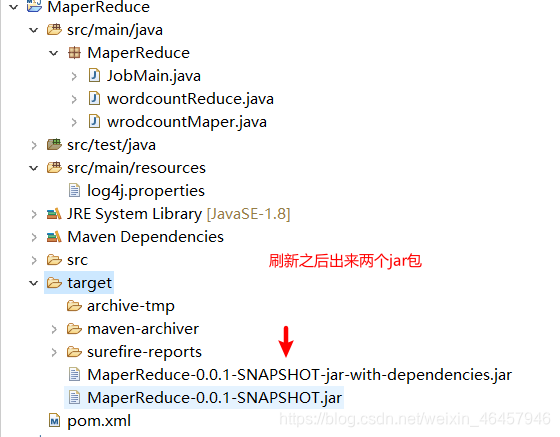

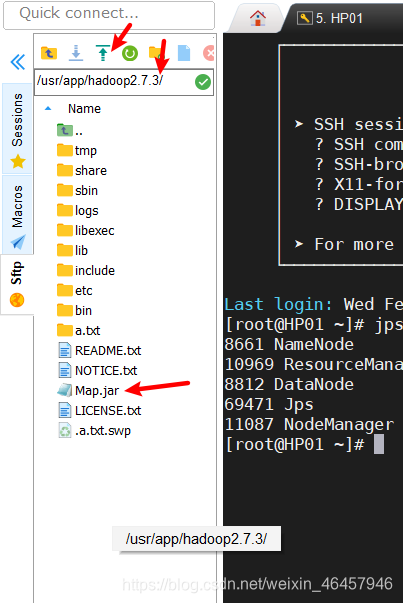

Packaging:

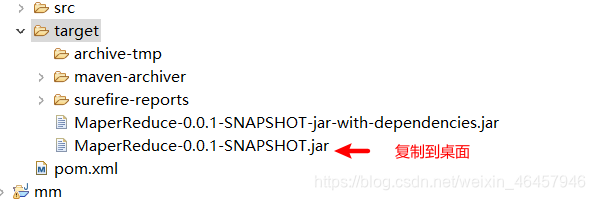

Rename the jar.

Choose a directory and upload the jar you just built to it.

Local mode:

1. The MapReduce program runs locally as a single process.

2. The input data and the output results are on the local file system.
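To picture what local mode means for input and output, here is a single-process sketch using only `java.nio.file` (names hypothetical; it is not Hadoop's local job runner and does not use the Mapper/Reducer classes). It reads a local text file, counts comma-separated words, and writes the result into an output directory as `part-r-00000`, one `word<TAB>count` line per key in sorted order, which is the layout `TextOutputFormat` produces with a single reducer. Like Hadoop's `FileOutputFormat`, it refuses to run if the output directory already exists.

```java
import java.io.IOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.FileAlreadyExistsException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.Map;
import java.util.TreeMap;

// Single-process word count over the local file system (plain java.nio, no Hadoop).
class LocalWordCountSketch {

    static Path run(Path input, Path outputDir) throws IOException {
        // Mirror Hadoop's rule: the output directory must not exist yet.
        if (Files.exists(outputDir)) {
            throw new FileAlreadyExistsException(outputDir.toString());
        }
        Map<String, Long> counts = new TreeMap<>(); // sorted keys, like reducer output
        for (String line : Files.readAllLines(input, StandardCharsets.UTF_8)) {
            for (String word : line.split(",")) {
                counts.merge(word, 1L, Long::sum);
            }
        }
        Files.createDirectories(outputDir);
        StringBuilder sb = new StringBuilder();
        for (Map.Entry<String, Long> e : counts.entrySet()) {
            // One line per key: key, a tab, then the value.
            sb.append(e.getKey()).append('\t').append(e.getValue()).append('\n');
        }
        Path part = outputDir.resolve("part-r-00000");
        Files.write(part, sb.toString().getBytes(StandardCharsets.UTF_8));
        return part;
    }

    public static void main(String[] args) throws IOException {
        // args[0]: local input file, args[1]: local output directory (must not exist)
        Path part = run(Paths.get(args[0]), Paths.get(args[1]));
        System.out.print(new String(Files.readAllBytes(part), StandardCharsets.UTF_8));
    }
}
```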